# Objective

- Shadow maps should only be sampled if the mesh is a shadow receiver AND shadow mapping is enabled for the light

## Solution

- Fix the logic in the shader

See #3165 and #3175

# Objective

- @superdump was having trouble with this loop in the GLTF loader.

## Solution

- Make it probably linear.

- Measured times:

- Old: 40s, new: 200ms

I think there's still room for improvement. For example, I think making the nodes be in `Arc`s could be a significant gain, since currently there's duplication all the way down the tree.

Co-authored-by: Carter Anderson <mcanders1@gmail.com>

# Objective

- Use `std140_size_static()` everywhere instead of manual sizes as the crevice rewrite appears to have fixed all the problems as it claimed to do.

I've tested `3d_scene_pipelined`, `bevymark_pipelined`, and `load_gltf_pipelined` and all three look fine.

# Objective

I need to queue my own textures up for font rendering(texture arrays) and I noticed a bunch of `ImageX`, like `ImageDataLayout`, were missing from the render resources exports.

## Solution

Add new exports to render resources.

# Objective

Allow shadow mapping to be enabled/disabled per-light.

## Solution

- NOTE: This PR is on top of https://github.com/bevyengine/bevy/pull/3072

- Add `shadows_enabled` boolean property to `PointLight` and `DirectionalLight` components.

- Do not update the frusta for the light if shadows are disabled.

- Do not check for visible entities for the light if shadows are disabled.

- Do not fetch shadows for lights with shadows disabled.

- I reworked a few types for clarity: `ViewLight` -> `ShadowView`, the bulk of `ViewLights` members -> `ViewShadowBindings`, the entities Vec in `ViewLights` -> `ViewLightEntities`, the uniform offset in `ViewLights` for `GpuLights` -> `ViewLightsUniformOffset`

Co-authored-by: Carter Anderson <mcanders1@gmail.com>

## Shader Imports

This adds "whole file" shader imports. These come in two flavors:

### Asset Path Imports

```rust

// /assets/shaders/custom.wgsl

#import "shaders/custom_material.wgsl"

[[stage(fragment)]]

fn fragment() -> [[location(0)]] vec4<f32> {

return get_color();

}

```

```rust

// /assets/shaders/custom_material.wgsl

[[block]]

struct CustomMaterial {

color: vec4<f32>;

};

[[group(1), binding(0)]]

var<uniform> material: CustomMaterial;

```

### Custom Path Imports

Enables defining custom import paths. These are intended to be used by crates to export shader functionality:

```rust

// bevy_pbr2/src/render/pbr.wgsl

#import bevy_pbr::mesh_view_bind_group

#import bevy_pbr::mesh_bind_group

[[block]]

struct StandardMaterial {

base_color: vec4<f32>;

emissive: vec4<f32>;

perceptual_roughness: f32;

metallic: f32;

reflectance: f32;

flags: u32;

};

/* rest of PBR fragment shader here */

```

```rust

impl Plugin for MeshRenderPlugin {

fn build(&self, app: &mut bevy_app::App) {

let mut shaders = app.world.get_resource_mut::<Assets<Shader>>().unwrap();

shaders.set_untracked(

MESH_BIND_GROUP_HANDLE,

Shader::from_wgsl(include_str!("mesh_bind_group.wgsl"))

.with_import_path("bevy_pbr::mesh_bind_group"),

);

shaders.set_untracked(

MESH_VIEW_BIND_GROUP_HANDLE,

Shader::from_wgsl(include_str!("mesh_view_bind_group.wgsl"))

.with_import_path("bevy_pbr::mesh_view_bind_group"),

);

```

By convention these should use rust-style module paths that start with the crate name. Ultimately we might enforce this convention.

Note that this feature implements _run time_ import resolution. Ultimately we should move the import logic into an asset preprocessor once Bevy gets support for that.

## Decouple Mesh Logic from PBR Logic via MeshRenderPlugin

This breaks out mesh rendering code from PBR material code, which improves the legibility of the code, decouples mesh logic from PBR logic, and opens the door for a future `MaterialPlugin<T: Material>` that handles all of the pipeline setup for arbitrary shader materials.

## Removed `RenderAsset<Shader>` in favor of extracting shaders into RenderPipelineCache

This simplifies the shader import implementation and removes the need to pass around `RenderAssets<Shader>`.

## RenderCommands are now fallible

This allows us to cleanly handle pipelines+shaders not being ready yet. We can abort a render command early in these cases, preventing bevy from trying to bind group / do draw calls for pipelines that couldn't be bound. This could also be used in the future for things like "components not existing on entities yet".

# Next Steps

* Investigate using Naga for "partial typed imports" (ex: `#import bevy_pbr::material::StandardMaterial`, which would import only the StandardMaterial struct)

* Implement `MaterialPlugin<T: Material>` for low-boilerplate custom material shaders

* Move shader import logic into the asset preprocessor once bevy gets support for that.

Fixes#3132

This is a squash-and-rebase of @Ku95's documentation of the new renderer onto the latest `pipelined-rendering` branch.

Original PR is #2884.

Co-authored-by: dataphract <dataphract@gmail.com>

Co-authored-by: Carter Anderson <mcanders1@gmail.com>

# Objective

Fix nested shader defs. For example, in:

```rust

#ifdef A

#ifdef B

some code here

#endif

#endif

```

...before this PR, if `A` *is not* defined, and `B` *is* defined, then `some code here` will be output.

## Solution

- Combine the logic of whether the parent and child scope guards are defined and use that as the resulting child scope guard boolean value

# Objective

Add depth prepass and support for opaque, alpha mask, and alpha blend modes for the 3D PBR target.

## Solution

NOTE: This is based on top of #2861 frustum culling. Just lining it up to keep @cart loaded with the review train. 🚂

There are a lot of important details here. Big thanks to @cwfitzgerald of wgpu, naga, and rend3 fame for explaining how to do it properly!

* An `AlphaMode` component is added that defines whether a material should be considered opaque, an alpha mask (with a cutoff value that defaults to 0.5, the same as glTF), or transparent and should be alpha blended

* Two depth prepasses are added:

* Opaque does a plain vertex stage

* Alpha mask does the vertex stage but also a fragment stage that samples the colour for the fragment and discards if its alpha value is below the cutoff value

* Both are sorted front to back, not that it matters for these passes. (Maybe there should be a way to skip sorting?)

* Three main passes are added:

* Opaque and alpha mask passes use a depth comparison function of Equal such that only the geometry that was closest is processed further, due to early-z testing

* The transparent pass uses the Greater depth comparison function so that only transparent objects that are closer than anything opaque are rendered

* The opaque fragment shading is as before except that alpha is explicitly set to 1.0

* Alpha mask fragment shading sets the alpha value to 1.0 if it is equal to or above the cutoff, as defined by glTF

* Opaque and alpha mask are sorted front to back (again not that it matters as we will skip anything that is not equal... maybe sorting is no longer needed here?)

* Transparent is sorted back to front. Transparent fragment shading uses the alpha blending over operator

Co-authored-by: Carter Anderson <mcanders1@gmail.com>

Adds new `EntityRenderCommand`, `EntityPhaseItem`, and `CachedPipelinePhaseItem` traits to make it possible to reuse RenderCommands across phases. This should be helpful for features like #3072 . It also makes the trait impls slightly less generic-ey in the common cases.

This also fixes the custom shader examples to account for the recent Frustum Culling and MSAA changes (the UX for these things will be improved later).

# Objective

- Update vendor crevice to have the latest update from crevice 0.8.0

- Using https://github.com/ElectronicRU/crevice/tree/arrays which has the changes to make arrays work

## Solution

- Also updated glam and hexasphere to only have one version of glam

- From the original PR, using crevice to write GLSL code containing arrays would probably not work but it's not something used by Bevy

# Objective

Implement frustum culling for much better performance on more complex scenes. With the Amazon Lumberyard Bistro scene, I was getting roughly 15fps without frustum culling and 60+fps with frustum culling on a MacBook Pro 16 with i9 9980HK 8c/16t CPU and Radeon Pro 5500M.

macOS does weird things with vsync so even though vsync was off, it really looked like sometimes other applications or the desktop window compositor were interfering, but the difference could be even more as I even saw up to 90+fps sometimes.

## Solution

- Until the https://github.com/bevyengine/rfcs/pull/12 RFC is completed, I wanted to implement at least some of the bounding volume functionality we needed to be able to unblock a bunch of rendering features and optimisations such as frustum culling, fitting the directional light orthographic projection to the relevant meshes in the view, clustered forward rendering, etc.

- I have added `Aabb`, `Frustum`, and `Sphere` types with only the necessary intersection tests for the algorithms used. I also added `CubemapFrusta` which contains a `[Frustum; 6]` and can be used by cube maps such as environment maps, and point light shadow maps.

- I did do a bit of benchmarking and optimisation on the intersection tests. I compared the [rafx parallel-comparison bitmask approach](c91bd5fcfd/rafx-visibility/src/geometry/frustum.rs (L64-L92)) with a naïve loop that has an early-out in case of a bounding volume being outside of any one of the `Frustum` planes and found them to be very similar, so I chose the simpler and more readable option. I also compared using Vec3 and Vec3A and it turned out that promoting Vec3s to Vec3A improved performance of the culling significantly due to Vec3A operations using SIMD optimisations where Vec3 uses plain scalar operations.

- When loading glTF models, the vertex attribute accessors generally store the minimum and maximum values, which allows for adding AABBs to meshes loaded from glTF for free.

- For meshes without an AABB (`PbrBundle` deliberately does not have an AABB by default), a system is executed that scans over the vertex positions to find the minimum and maximum values along each axis. This is used to construct the AABB.

- The `Frustum::intersects_obb` and `Sphere::insersects_obb` algorithm is from Foundations of Game Engine Development 2: Rendering by Eric Lengyel. There is no OBB type, yet, rather an AABB and the model matrix are passed in as arguments. This calculates a 'relative radius' of the AABB with respect to the plane normal (the plane normal in the Sphere case being something I came up with as the direction pointing from the centre of the sphere to the centre of the AABB) such that it can then do a sphere-sphere intersection test in practice.

- `RenderLayers` were copied over from the current renderer.

- `VisibleEntities` was copied over from the current renderer and a `CubemapVisibleEntities` was added to support `PointLight`s for now. `VisibleEntities` are added to views (cameras and lights) and contain a `Vec<Entity>` that is populated by culling/visibility systems that run in PostUpdate of the app world, and are iterated over in the render world for, for example, queuing up meshes to be drawn by lights for shadow maps and the main pass for cameras.

- `Visibility` and `ComputedVisibility` components were added. The `Visibility` component is user-facing so that, for example, the entity can be marked as not visible in an editor. `ComputedVisibility` on the other hand is the result of the culling/visibility systems and takes `Visibility` into account. So if an entity is marked as not being visible in its `Visibility` component, that will skip culling/visibility intersection tests and just mark the `ComputedVisibility` as false.

- The `ComputedVisibility` is used to decide which meshes to extract.

- I had to add a way to get the far plane from the `CameraProjection` in order to define an explicit far frustum plane for culling. This should perhaps be optional as it is not always desired and in that case, testing 5 planes instead of 6 is a performance win.

I think that's about all. I discussed some of the design with @cart on Discord already so hopefully it's not too far from being mergeable. It works well at least. 😄

# Objective

- Support tangent vertex attributes, and normal maps

- Support loading these from glTF models

## Solution

- Make two pipelines in both the shadow and pbr passes, one for without normal maps, one for with normal maps

- Select the correct pipeline to bind based on the presence of the normal map texture

- Share the vertex attribute layout between shadow and pbr passes

- Refactored pbr.wgsl to share a bunch of common code between the normal map and non-normal map entry points. I tried to do this in a way that will allow custom shader reuse.

Co-authored-by: Carter Anderson <mcanders1@gmail.com>

This implements the following:

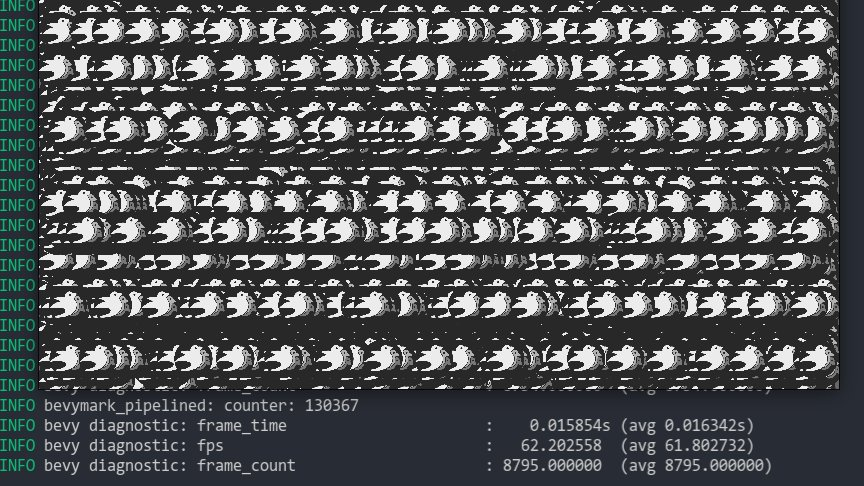

* **Sprite Batching**: Collects sprites in a vertex buffer to draw many sprites with a single draw call. Sprites are batched by their `Handle<Image>` within a specific z-level. When possible, sprites are opportunistically batched _across_ z-levels (when no sprites with a different texture exist between two sprites with the same texture on different z levels). With these changes, I can now get ~130,000 sprites at 60fps on the `bevymark_pipelined` example.

* **Sprite Color Tints**: The `Sprite` type now has a `color` field. Non-white color tints result in a specialized render pipeline that passes the color in as a vertex attribute. I chose to specialize this because passing vertex colors has a measurable price (without colors I get ~130,000 sprites on bevymark, with colors I get ~100,000 sprites). "Colored" sprites cannot be batched with "uncolored" sprites, but I think this is fine because the chance of a "colored" sprite needing to batch with other "colored" sprites is generally probably way higher than an "uncolored" sprite needing to batch with a "colored" sprite.

* **Sprite Flipping**: Sprites can be flipped on their x or y axis using `Sprite::flip_x` and `Sprite::flip_y`. This is also true for `TextureAtlasSprite`.

* **Simpler BufferVec/UniformVec/DynamicUniformVec Clearing**: improved the clearing interface by removing the need to know the size of the final buffer at the initial clear.

Note that this moves sprites away from entity-driven rendering and back to extracted lists. We _could_ use entities here, but it necessitates that an intermediate list is allocated / populated to collect and sort extracted sprites. This redundant copy, combined with the normal overhead of spawning extracted sprite entities, brings bevymark down to ~80,000 sprites at 60fps. I think making sprites a bit more fixed (by default) is worth it. I view this as acceptable because batching makes normal entity-driven rendering pretty useless anyway (and we would want to batch most custom materials too). We can still support custom shaders with custom bindings, we'll just need to define a specific interface for it.

Adds support for MSAA to the new renderer. This is done using the new [pipeline specialization](#3031) support to specialize on sample count. This is an alternative implementation to #2541 that cuts out the need for complicated render graph edge management by moving the relevant target information into View entities. This reuses @superdump's clever MSAA bitflag range code from #2541.

Note that wgpu currently only supports 1 or 4 samples due to those being the values supported by WebGPU. However they do plan on exposing ways to [enable/query for natively supported sample counts](https://github.com/gfx-rs/wgpu/issues/1832). When this happens we should integrate

# Objective

Make possible to use wgpu gles backend on in the browser (wasm32 + WebGL2).

## Solution

It is built on top of old @cart patch initializing windows before wgpu. Also:

- initializes wgpu with `Backends::GL` and proper `wgpu::Limits` on wasm32

- changes default texture format to `wgpu::TextureFormat::Rgba8UnormSrgb`

Co-authored-by: Mariusz Kryński <mrk@sed.pl>

# Objective

- Fixes#2919

- Initial pixel was hard coded and not dependent on texture format

- Replace #2920 as I noticed this needed to be done also on pipeline rendering branch

## Solution

- Replace the hard coded pixel with one using the texture pixel size

# Objective

while testing wgpu/WebGL on mobile GPU I've noticed bevy always forces vertex index format to 32bit (and ignores mesh settings).

## Solution

the solution is to pass proper vertex index format in GpuIndexInfo to render_pass

## New Features

This adds the following to the new renderer:

* **Shader Assets**

* Shaders are assets again! Users no longer need to call `include_str!` for their shaders

* Shader hot-reloading

* **Shader Defs / Shader Preprocessing**

* Shaders now support `# ifdef NAME`, `# ifndef NAME`, and `# endif` preprocessor directives

* **Bevy RenderPipelineDescriptor and RenderPipelineCache**

* Bevy now provides its own `RenderPipelineDescriptor` and the wgpu version is now exported as `RawRenderPipelineDescriptor`. This allows users to define pipelines with `Handle<Shader>` instead of needing to manually compile and reference `ShaderModules`, enables passing in shader defs to configure the shader preprocessor, makes hot reloading possible (because the descriptor can be owned and used to create new pipelines when a shader changes), and opens the doors to pipeline specialization.

* The `RenderPipelineCache` now handles compiling and re-compiling Bevy RenderPipelineDescriptors. It has internal PipelineLayout and ShaderModule caches. Users receive a `CachedPipelineId`, which can be used to look up the actual `&RenderPipeline` during rendering.

* **Pipeline Specialization**

* This enables defining per-entity-configurable pipelines that specialize on arbitrary custom keys. In practice this will involve specializing based on things like MSAA values, Shader Defs, Bind Group existence, and Vertex Layouts.

* Adds a `SpecializedPipeline` trait and `SpecializedPipelines<MyPipeline>` resource. This is a simple layer that generates Bevy RenderPipelineDescriptors based on a custom key defined for the pipeline.

* Specialized pipelines are also hot-reloadable.

* This was the result of experimentation with two different approaches:

1. **"generic immediate mode multi-key hash pipeline specialization"**

* breaks up the pipeline into multiple "identities" (the core pipeline definition, shader defs, mesh layout, bind group layout). each of these identities has its own key. looking up / compiling a specific version of a pipeline requires composing all of these keys together

* the benefit of this approach is that it works for all pipelines / the pipeline is fully identified by the keys. the multiple keys allow pre-hashing parts of the pipeline identity where possible (ex: pre compute the mesh identity for all meshes)

* the downside is that any per-entity data that informs the values of these keys could require expensive re-hashes. computing each key for each sprite tanked bevymark performance (sprites don't actually need this level of specialization yet ... but things like pbr and future sprite scenarios might).

* this is the approach rafx used last time i checked

2. **"custom key specialization"**

* Pipelines by default are not specialized

* Pipelines that need specialization implement a SpecializedPipeline trait with a custom key associated type

* This allows specialization keys to encode exactly the amount of information required (instead of needing to be a combined hash of the entire pipeline). Generally this should fit in a small number of bytes. Per-entity specialization barely registers anymore on things like bevymark. It also makes things like "shader defs" way cheaper to hash because we can use context specific bitflags instead of strings.

* Despite the extra trait, it actually generally makes pipeline definitions + lookups simpler: managing multiple keys (and making the appropriate calls to manage these keys) was way more complicated.

* I opted for custom key specialization. It performs better generally and in my opinion is better UX. Fortunately the way this is implemented also allows for custom caches as this all builds on a common abstraction: the RenderPipelineCache. The built in custom key trait is just a simple / pre-defined way to interact with the cache

## Callouts

* The SpecializedPipeline trait makes it easy to inherit pipeline configuration in custom pipelines. The changes to `custom_shader_pipelined` and the new `shader_defs_pipelined` example illustrate how much simpler it is to define custom pipelines based on the PbrPipeline.

* The shader preprocessor is currently pretty naive (it just uses regexes to process each line). Ultimately we might want to build a more custom parser for more performance + better error handling, but for now I'm happy to optimize for "easy to implement and understand".

## Next Steps

* Port compute pipelines to the new system

* Add more preprocessor directives (else, elif, import)

* More flexible vertex attribute specialization / enable cheaply specializing on specific mesh vertex layouts

# Objective

The current TODO comment is out of date

## Solution

I switched up the comment

Co-authored-by: William Batista <45850508+billyb2@users.noreply.github.com>

Upgrades both the old and new renderer to wgpu 0.11 (and naga 0.7). This builds on @zicklag's work here #2556.

Co-authored-by: Carter Anderson <mcanders1@gmail.com>

Using RenderQueue in BufferVec allows removal of the staging buffer entirely, as well as removal of the SpriteNode.

Co-authored-by: Carter Anderson <mcanders1@gmail.com>

# Objective

- removed unused RenderResourceId and SwapChainFrame (already unified with TextureView)

- added deref to BindGroup, this makes conversion to wgpu::BindGroup easier

## Solution

- cleans up the API

# Objective

Using fullscreen or trying to resize a window caused a panic. Fix that.

## Solution

- Don't wholesale overwrite the ExtractedWindows resource when extracting windows

- This could cause an accumulation of unused windows that are holding onto swap chain frames?

- Check the if width and/or height changed since the last frame

- If the size changed, recreate the swap chain

- Ensure dimensions are >= 1 to avoid panics due to any dimension being 0

This changes how render logic is composed to make it much more modular. Previously, all extraction logic was centralized for a given "type" of rendered thing. For example, we extracted meshes into a vector of ExtractedMesh, which contained the mesh and material asset handles, the transform, etc. We looked up bindings for "drawn things" using their index in the `Vec<ExtractedMesh>`. This worked fine for built in rendering, but made it hard to reuse logic for "custom" rendering. It also prevented us from reusing things like "extracted transforms" across contexts.

To make rendering more modular, I made a number of changes:

* Entities now drive rendering:

* We extract "render components" from "app components" and store them _on_ entities. No more centralized uber lists! We now have true "ECS-driven rendering"

* To make this perform well, I implemented #2673 in upstream Bevy for fast batch insertions into specific entities. This was merged into the `pipelined-rendering` branch here: #2815

* Reworked the `Draw` abstraction:

* Generic `PhaseItems`: each draw phase can define its own type of "rendered thing", which can define its own "sort key"

* Ported the 2d, 3d, and shadow phases to the new PhaseItem impl (currently Transparent2d, Transparent3d, and Shadow PhaseItems)

* `Draw` trait and and `DrawFunctions` are now generic on PhaseItem

* Modular / Ergonomic `DrawFunctions` via `RenderCommands`

* RenderCommand is a trait that runs an ECS query and produces one or more RenderPass calls. Types implementing this trait can be composed to create a final DrawFunction. For example the DrawPbr DrawFunction is created from the following DrawCommand tuple. Const generics are used to set specific bind group locations:

```rust

pub type DrawPbr = (

SetPbrPipeline,

SetMeshViewBindGroup<0>,

SetStandardMaterialBindGroup<1>,

SetTransformBindGroup<2>,

DrawMesh,

);

```

* The new `custom_shader_pipelined` example illustrates how the commands above can be reused to create a custom draw function:

```rust

type DrawCustom = (

SetCustomMaterialPipeline,

SetMeshViewBindGroup<0>,

SetTransformBindGroup<2>,

DrawMesh,

);

```

* ExtractComponentPlugin and UniformComponentPlugin:

* Simple, standardized ways to easily extract individual components and write them to GPU buffers

* Ported PBR and Sprite rendering to the new primitives above.

* Removed staging buffer from UniformVec in favor of direct Queue usage

* Makes UniformVec much easier to use and more ergonomic. Completely removes the need for custom render graph nodes in these contexts (see the PbrNode and view Node removals and the much simpler call patterns in the relevant Prepare systems).

* Added a many_cubes_pipelined example to benchmark baseline 3d rendering performance and ensure there were no major regressions during this port. Avoiding regressions was challenging given that the old approach of extracting into centralized vectors is basically the "optimal" approach. However thanks to a various ECS optimizations and render logic rephrasing, we pretty much break even on this benchmark!

* Lifetimeless SystemParams: this will be a bit divisive, but as we continue to embrace "trait driven systems" (ex: ExtractComponentPlugin, UniformComponentPlugin, DrawCommand), the ergonomics of `(Query<'static, 'static, (&'static A, &'static B, &'static)>, Res<'static, C>)` were getting very hard to bear. As a compromise, I added "static type aliases" for the relevant SystemParams. The previous example can now be expressed like this: `(SQuery<(Read<A>, Read<B>)>, SRes<C>)`. If anyone has better ideas / conflicting opinions, please let me know!

* RunSystem trait: a way to define Systems via a trait with a SystemParam associated type. This is used to implement the various plugins mentioned above. I also added SystemParamItem and QueryItem type aliases to make "trait stye" ecs interactions nicer on the eyes (and fingers).

* RenderAsset retrying: ensures that render assets are only created when they are "ready" and allows us to create bind groups directly inside render assets (which significantly simplified the StandardMaterial code). I think ultimately we should swap this out on "asset dependency" events to wait for dependencies to load, but this will require significant asset system changes.

* Updated some built in shaders to account for missing MeshUniform fields

Before using this image resulted in an `Error in Queue::write_texture: copy of 0..4 would end up overrunning the bounds of the Source buffer of size 0`

# Objective

Bevy should expose all wgpu types needed for building rendering pipelines.

Closes#2818

## Solution

Add wgpu's StencilOperation to bevy_render2::render_resource's export.

This updates the `pipelined-rendering` branch to use the latest `bevy_ecs` from `main`. This accomplishes a couple of goals:

1. prepares for upcoming `custom-shaders` branch changes, which were what drove many of the recent bevy_ecs changes on `main`

2. prepares for the soon-to-happen merge of `pipelined-rendering` into `main`. By including bevy_ecs changes now, we make that merge simpler / easier to review.

I split this up into 3 commits:

1. **add upstream bevy_ecs**: please don't bother reviewing this content. it has already received thorough review on `main` and is a literal copy/paste of the relevant folders (the old folders were deleted so the directories are literally exactly the same as `main`).

2. **support manual buffer application in stages**: this is used to enable the Extract step. we've already reviewed this once on the `pipelined-rendering` branch, but its worth looking at one more time in the new context of (1).

3. **support manual archetype updates in QueryState**: same situation as (2).

# Objective

Fixes a usability problem where the user is unable to use their reference to a ComputePipeline in their compute pass.

## Solution

Implements Deref, allowing the user to obtain the reference to the underlying wgpu::ComputePipeline

# Objective

Forward perspective projections have poor floating point precision distribution over the depth range. Reverse projections fair much better, and instead of having to have a far plane, with the reverse projection, using an infinite far plane is not a problem. The infinite reverse perspective projection has become the industry standard. The renderer rework is a great time to migrate to it.

## Solution

All perspective projections, including point lights, have been moved to using `glam::Mat4::perspective_infinite_reverse_rh()` and so have no far plane. As various depth textures are shared between orthographic and perspective projections, a quirk of this PR is that the near and far planes of the orthographic projection are swapped when the Mat4 is computed. This has no impact on 2D/3D orthographic projection usage, and provides consistency in shaders, texture clear values, etc. throughout the codebase.

## Known issues

For some reason, when looking along -Z, all geometry is black. The camera can be translated up/down / strafed left/right and geometry will still be black. Moving forward/backward or rotating the camera away from looking exactly along -Z causes everything to work as expected.

I have tried to debug this issue but both in macOS and Windows I get crashes when doing pixel debugging. If anyone could reproduce this and debug it I would be very grateful. Otherwise I will have to try to debug it further without pixel debugging, though the projections and such all looked fine to me.

# Objective

- Prevent the need to have a system that synchronizes sprite sizes with their images

## Solution

- Read the sprite size from the image asset when rendering the sprite

- Replace the `size` and `resize_mode` fields of `Sprite` with a `custom_size: Option<Vec2>` that will modify the sprite's rendered size to be different than the image size, but only if it is `Some(Vec2)`

# Objective

- Avoid unnecessary work in the vertex shader of the numerous shadow passes

- Have the natural order of bind groups in the pbr shader: view, material, mesh

## Solution

- Separate out the vertex stage of pbr.wgsl into depth.wgsl

- Remove the unnecessary calculation of uv and normal, as well as removing the unnecessary vertex inputs and outputs

- Use the depth.wgsl for shadow passes

- Reorder the bind groups in pbr.wgsl and PbrShaders to be 0 - view, 1 - material, 2 - mesh in decreasing order of rebind frequency

# Objective

Allow marking meshes as not casting / receiving shadows.

## Solution

- Added `NotShadowCaster` and `NotShadowReceiver` zero-sized type components.

- Extract these components into `bool`s in `ExtractedMesh`

- Only generate `DrawShadowMesh` `Drawable`s for meshes _without_ `NotShadowCaster`

- Add a `u32` bit `flags` member to `MeshUniform` with one flag indicating whether the mesh is a shadow receiver

- If a mesh does _not_ have the `NotShadowReceiver` component, then it is a shadow receiver, and so the bit in the `MeshUniform` is set, otherwise it is not set.

- Added an example illustrating the functionality.

NOTE: I wanted to have the default state of a mesh as being a shadow caster and shadow receiver, hence the `Not*` components. However, I am on the fence about this. I don't want to have a negative performance impact, nor have people wondering why their custom meshes don't have shadows because they forgot to add `ShadowCaster` and `ShadowReceiver` components, but I also really don't like the double negatives the `Not*` approach incurs. What do you think?

Co-authored-by: Carter Anderson <mcanders1@gmail.com>

# Objective

Add support for configurable shadow map sizes

## Solution

- Add `DirectionalLightShadowMap` and `PointLightShadowMap` resources, which just have size members, to the app world, and add `Extracted*` counterparts to the render world

- Use the configured sizes when rendering shadow maps

- Default sizes remain the same - 4096 for directional light shadow maps, 1024 for point light shadow maps (which are cube maps so 6 faces at 1024x1024 per light)

Makes some tweaks to the SubApp labeling introduced in #2695:

* Ergonomics improvements

* Removes unnecessary allocation when retrieving subapp label

* Removes the newly added "app macros" crate in favor of bevy_derive

* renamed RenderSubApp to RenderApp

@zicklag (for reference)

This is a rather simple but wide change, and it involves adding a new `bevy_app_macros` crate. Let me know if there is a better way to do any of this!

---

# Objective

- Allow adding and accessing sub-apps by using a label instead of an index

## Solution

- Migrate the bevy label implementation and derive code to the `bevy_utils` and `bevy_macro_utils` crates and then add a new `SubAppLabel` trait to the `bevy_app` crate that is used when adding or getting a sub-app from an app.

# Objective

A question was raised on Discord about the units of the `PointLight` `intensity` member.

After digging around in the bevy_pbr2 source code and [Google Filament documentation](https://google.github.io/filament/Filament.html#mjx-eqn-pointLightLuminousPower) I discovered that the intention by Filament was that the 'intensity' value for point lights would be in lumens. This makes a lot of sense as these are quite relatable units given basically all light bulbs I've seen sold over the past years are rated in lumens as people move away from thinking about how bright a bulb is relative to a non-halogen incandescent bulb.

However, it seems that the derivation of the conversion between luminous power (lumens, denoted `Φ` in the Filament formulae) and luminous intensity (lumens per steradian, `I` in the Filament formulae) was missed and I can see why as it is tucked right under equation 58 at the link above. As such, while the formula states that for a point light, `I = Φ / 4 π` we have been using `intensity` as if it were luminous intensity `I`.

Before this PR, the intensity field is luminous intensity in lumens per steradian. After this PR, the intensity field is luminous power in lumens, [as suggested by Filament](https://google.github.io/filament/Filament.html#table_lighttypesunits) (unfortunately the link jumps to the table's caption so scroll up to see the actual table).

I appreciate that it may be confusing to call this an intensity, but I think this is intended as more of a non-scientific, human-relatable general term with a bit of hand waving so that most light types can just have an intensity field and for most of them it works in the same way or at least with some relatable value. I'm inclined to think this is reasonable rather than throwing terms like luminous power, luminous intensity, blah at users.

## Solution

- Documented the `PointLight` `intensity` member as 'luminous power' in units of lumens.

- Added a table of examples relating from various types of household lighting to lumen values.

- Added in the mapping from luminous power to luminous intensity when premultiplying the intensity into the colour before it is made into a graphics uniform.

- Updated the documentation in `pbr.wgsl` to clarify the earlier confusion about the missing `/ 4 π`.

- Bumped the intensity of the point lights in `3d_scene_pipelined` to 1600 lumens.

Co-authored-by: Carter Anderson <mcanders1@gmail.com>

# Objective

The default perspective projection near plane being at 1 unit feels very far away if one considers units to directly map to real world units such as metres. Not being able to see anything that is closer than 1m is unnecessarily limiting. Using a default of 0.1 makes more sense as it is difficult to even focus on things closer than 10cm in the real world.

## Solution

- Changed the default perspective projection near plane to 0.1.

# Objective

- Allow the user to set the clear color when using the pipelined renderer

## Solution

- Add a `ClearColor` resource that can be added to the world to configure the clear color

## Remaining Issues

Currently the `ClearColor` resource is cloned from the app world to the render world every frame. There are two ways I can think of around this:

1. Figure out why `app_world.is_resource_changed::<ClearColor>()` always returns `true` in the `extract` step and fix it so that we are only updating the resource when it changes

2. Require the users to add the `ClearColor` resource to the render sub-app instead of the parent app. This is currently sub-optimal until we have labled sub-apps, and probably a helper funciton on `App` such as `app.with_sub_app(RenderApp, |app| { ... })`. Even if we had that, I think it would be more than we want the user to have to think about. They shouldn't have to know about the render sub-app I don't think.

I think the first option is the best, but I could really use some help figuring out the nuance of why `is_resource_changed` is always returning true in that context.