# Objective

I found that several words in the code and docs are incorrect. These should be fixed.

## Solution

- Fix several minor typos

Co-authored-by: Chris Ohk <utilforever@gmail.com>

# Objective

- Implement pipelined rendering

- Fixes #5082

- Fixes #4718

## User Facing Description

Bevy now implements pipelined rendering! Pipelined rendering allows the app logic and rendering logic to run on different threads, leading to large gains in performance.

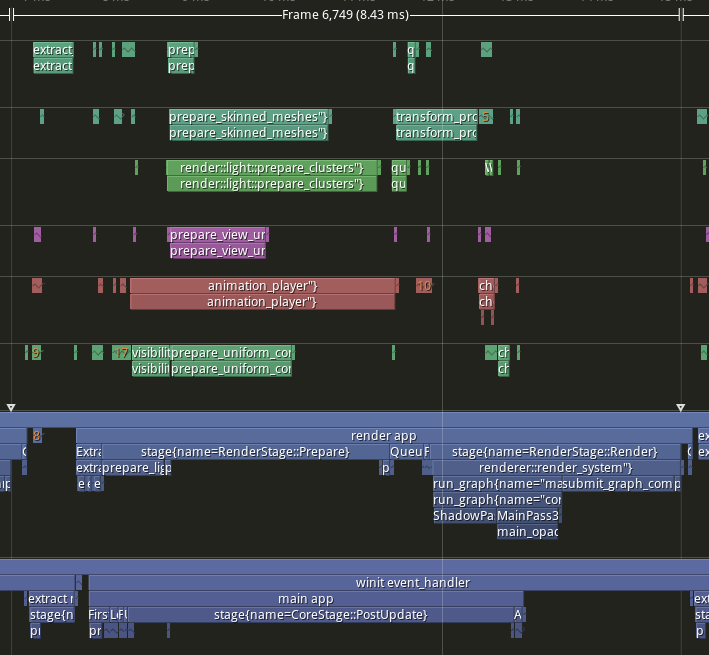

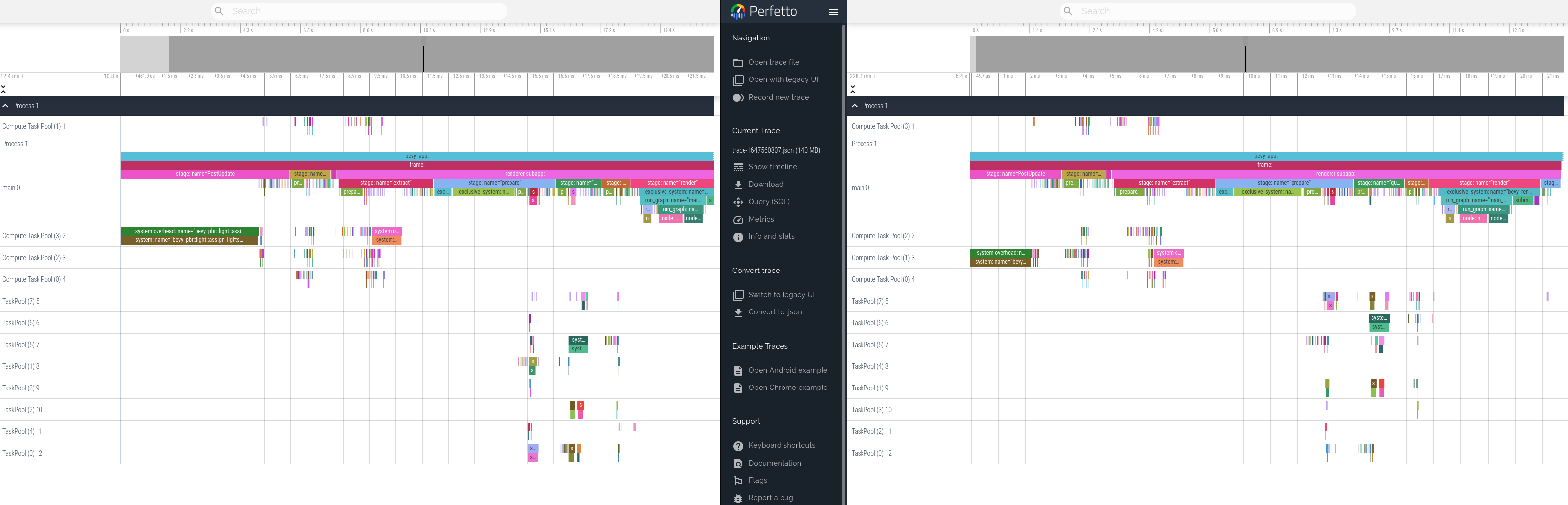

*tracy capture of many_foxes example*

To use pipelined rendering, you just need to add the `PipelinedRenderingPlugin`. If you're using `DefaultPlugins`, it will automatically be added for you on all platforms except wasm. Bevy does not currently support multithreading on wasm, which is needed for this feature to work. If you aren't using `DefaultPlugins`, you can add the plugin manually.

```rust
use bevy::prelude::*;
use bevy::render::pipelined_rendering::PipelinedRenderingPlugin;
use bevy::render::RenderPlugin;

fn main() {
    App::new()
        // whatever other plugins you need
        .add_plugin(RenderPlugin)
        // needs to be added after RenderPlugin
        .add_plugin(PipelinedRenderingPlugin)
        .run();
}
```

If for some reason pipelined rendering needs to be removed, you can disable the plugin the normal way.

```rust
use bevy::prelude::*;
use bevy::render::pipelined_rendering::PipelinedRenderingPlugin;

fn main() {
    App::new()
        // keep every default plugin except pipelined rendering
        .add_plugins(DefaultPlugins.build().disable::<PipelinedRenderingPlugin>())
        .run();
}
```

### A setup function was added to plugins

An optional plugin lifecycle function was added to the `Plugin` trait. This function is called after all plugins have been built, but before the app runner is called. This allows for some final setup to be done. In the case of pipelined rendering, the function removes the sub app from the main app and sends it to the render thread.

```rust

struct MyPlugin;

impl Plugin for MyPlugin {

fn build(&self, app: &mut App) {

}

// optional function

fn setup(&self, app: &mut App) {

// do some final setup before runner is called

}

}

```
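
The ordering described above (every `build` first, then every `setup`, then the runner) can be modelled without Bevy. The following is a minimal sketch using only the standard library; `MiniApp`, `MiniPlugin`, `Logging`, `Pipelining`, and `run_app` are hypothetical names invented for illustration and are not Bevy's types or its actual implementation.

```rust
/// Hypothetical stand-in for an app: it only records lifecycle events.
#[derive(Default)]
pub struct MiniApp {
    pub log: Vec<String>,
}

/// Hypothetical stand-in for a plugin trait with an optional `setup` hook.
pub trait MiniPlugin {
    fn name(&self) -> &'static str;

    /// Called once per plugin, in registration order.
    fn build(&self, app: &mut MiniApp) {
        app.log.push(format!("build {}", self.name()));
    }

    /// Optional hook: the default implementation does nothing.
    fn setup(&self, _app: &mut MiniApp) {}
}

pub struct Logging;

impl MiniPlugin for Logging {
    fn name(&self) -> &'static str {
        "logging"
    }
}

pub struct Pipelining;

impl MiniPlugin for Pipelining {
    fn name(&self) -> &'static str {
        "pipelining"
    }

    // Only this plugin overrides the optional hook.
    fn setup(&self, app: &mut MiniApp) {
        app.log.push(format!("setup {}", self.name()));
    }
}

/// Builds every plugin, then runs every `setup`, then "calls the runner".
pub fn run_app(plugins: &[Box<dyn MiniPlugin>]) -> Vec<String> {
    let mut app = MiniApp::default();
    for plugin in plugins {
        plugin.build(&mut app);
    }
    for plugin in plugins {
        plugin.setup(&mut app);
    }
    app.log.push("runner".to_string());
    app.log
}
```

Even when `Pipelining` is registered first, its `setup` entry is logged only after `Logging` has been built, which is the property pipelined rendering relies on.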

### A Stage for Frame Pacing

In the `RenderExtractApp` there is a stage labelled `BeforeIoAfterRenderStart` that systems can be added to. The specific use case for this stage is for a frame pacing system that can delay the start of main app processing in render bound apps to reduce input latency i.e. "frame pacing". This is not currently built into bevy, but exists as `bevy`

```text

|-------------------------------------------------------------------|

| | BeforeIoAfterRenderStart | winit events | main schedule |

| extract |---------------------------------------------------------|

| | extract commands | rendering schedule |

|-------------------------------------------------------------------|

```

### Small API additions

* `Schedule::remove_stage`

* `App::insert_sub_app`

* `App::remove_sub_app`

* `TaskPool::scope_with_executor`

## Problems and Solutions

### Moving render app to another thread

Most of the hard bits for this were done with the render redo. This PR just sends the render app back and forth through channels, which seems to work ok. I originally experimented with using a scope to run the render task. It was cuter, but that approach didn't allow render to start before i/o processing, so I switched to using channels. The coordination this requires adds a lot of complexity, but it's worth it: by running rendering during i/o processing, frame times should be much more consistent in render bound apps. See https://github.com/bevyengine/bevy/issues/4691.
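
The "send the render app back and forth through channels" idea can be sketched with standard library channels. This is a simplified model, not the code in this PR: `RenderApp` and `run_pipelined` are hypothetical names, "rendering" is reduced to recording a frame number, and the real coordination with i/o processing is omitted.

```rust
use std::sync::mpsc;
use std::thread;

/// Hypothetical stand-in for the render sub app.
#[derive(Debug)]
pub struct RenderApp {
    pub extracted_frame: u64,
    pub rendered: Vec<u64>,
}

/// Ping-pongs ownership of the render app between two threads.
pub fn run_pipelined(frames: u64) -> Vec<u64> {
    let (to_render, render_rx) = mpsc::channel::<RenderApp>();
    let (to_main, main_rx) = mpsc::channel::<RenderApp>();

    let render_thread = thread::spawn(move || {
        // The loop ends once the main thread drops its sender.
        for mut app in render_rx {
            // "Render" the frame that was extracted on the main thread.
            app.rendered.push(app.extracted_frame);
            if to_main.send(app).is_err() {
                break;
            }
        }
    });

    let mut app = RenderApp { extracted_frame: 0, rendered: Vec::new() };
    for frame in 1..=frames {
        // Extract: copy data from the main world into the render app.
        app.extracted_frame = frame;
        to_render.send(app).expect("render thread should be alive");
        // Main app work for the next frame would overlap with rendering here.
        app = main_rx.recv().expect("render thread should be alive");
    }

    drop(to_render);
    render_thread.join().expect("render thread panicked");
    app.rendered
}
```

Because the render app is moved through the channel rather than shared, only one thread can touch it at a time, so the sketch needs no locks.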

### Unsoundness with Sending World with NonSend resources

Dropping !Send things on threads other than the thread they were spawned on is considered unsound. The render world doesn't have any nonsend resources. So if we tell the users to "pretty please don't spawn nonsend resource on the render world", we can avoid this problem.

More seriously there is this https://github.com/bevyengine/bevy/pull/6534 pr, which patches the unsoundness by aborting the app if a nonsend resource is dropped on the wrong thread. ~~That PR should probably be merged before this one.~~ For a longer term solution we have this discussion going https://github.com/bevyengine/bevy/discussions/6552.

### NonSend Systems in render world

The render world doesn't have any `!Send` resources, but it does have a non-send system. While `Window` is `Send`, winit does have some APIs that can only be accessed on the main thread. `prepare_windows` in the render schedule thus needs to be scheduled on the main thread. Currently we run non-send systems on the thread that `TaskPool::scope` runs on. When we move render to another thread this no longer works.

To fix this, a new `scope_with_executor` method was added that takes an optional `ThreadExecutor` that can only be ticked on the thread it was initialized on. The render world then holds a `MainThreadExecutor` resource, which can be passed to the scope in the parallel executor, which uses it to spawn its non-send systems.
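
To illustrate what "can only be ticked on the thread it was initialized on" means, here is a minimal standard library sketch. It is an assumption-laden model rather than the `ThreadExecutor` in `bevy_tasks`: `ThreadBoundQueue`, `spawn`, and `tick_all` are hypothetical names, and it runs plain closures instead of futures.

```rust
use std::collections::VecDeque;
use std::sync::Mutex;
use std::thread::{self, ThreadId};

type Task = Box<dyn FnOnce() + Send>;

/// Hypothetical queue: any thread may add work, only the owner may run it.
pub struct ThreadBoundQueue {
    owner: ThreadId,
    tasks: Mutex<VecDeque<Task>>,
}

impl ThreadBoundQueue {
    /// Records the creating thread as the only thread allowed to tick.
    pub fn new() -> Self {
        ThreadBoundQueue {
            owner: thread::current().id(),
            tasks: Mutex::new(VecDeque::new()),
        }
    }

    /// Queues a task from any thread.
    pub fn spawn<F: FnOnce() + Send + 'static>(&self, task: F) {
        self.tasks.lock().unwrap().push_back(Box::new(task));
    }

    /// Runs all queued tasks and returns how many ran.
    /// Returns `None` without running anything when called off the owner thread.
    pub fn tick_all(&self) -> Option<usize> {
        if thread::current().id() != self.owner {
            return None;
        }
        let mut ran = 0;
        loop {
            // Pop in its own statement so the lock is released before the task runs.
            let next = self.tasks.lock().unwrap().pop_front();
            match next {
                Some(task) => {
                    task();
                    ran += 1;
                }
                None => return Some(ran),
            }
        }
    }
}
```

In this model a render-thread executor could queue a main-thread-only system with `spawn`, and the work would sit in the queue until the owning thread calls `tick_all`.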

### Scopes executors between render and main should not share tasks

The render world and the app world share the `ComputeTaskPool`. Because `scope` has executors for the `ComputeTaskPool`, a system from the main world could run on the render thread, or a render system could run on the main thread. This can cause performance problems because it can delay a stage from finishing. See https://github.com/bevyengine/bevy/pull/6503#issuecomment-1309791442 for more details.

To avoid this problem, `TaskPool::scope` has been changed to not tick the ComputeTaskPool when it's used by the parallel executor. In the future when we move closer to the 1 thread to 1 logical core model we may want to overprovide threads, because the render and main app threads don't do much when executing the schedule.

## Performance

My machine is Windows 11, AMD Ryzen 5600x, RX 6600

### Examples

#### This PR with pipelining vs Main

> Note that these were run on an older version of main and the performance profile has probably changed due to optimizations

Seeing a perf gain from 29% on many lights to 7% on many sprites.

| tracy frame time | percent (mean) | percent (median) | percent (sigma) | Diff (mean) | Diff (median) | Diff (sigma) | Main (mean) | Main (median) | Main (sigma) | PR (mean) | PR (median) | PR (sigma) |
| -- | -- | -- | -- | -- | -- | -- | -- | -- | -- | -- | -- | -- |
| many foxes | 27.01% | 27.34% | -47.09% | 1.58 | 1.55 | -1.78 | 5.85 | 5.67 | 3.78 | 4.27 | 4.12 | 5.56 |
| many lights | 29.35% | 29.94% | -10.84% | 3.02 | 3.03 | -0.57 | 10.29 | 10.12 | 5.26 | 7.27 | 7.09 | 5.83 |
| many animated sprites | 13.97% | 15.69% | 14.20% | 3.79 | 4.17 | 1.41 | 27.12 | 26.57 | 9.93 | 23.33 | 22.4 | 8.52 |
| 3d scene | 25.79% | 26.78% | 7.46% | 0.49 | 0.49 | 0.15 | 1.9 | 1.83 | 2.01 | 1.41 | 1.34 | 1.86 |
| many cubes | 11.97% | 11.28% | 14.51% | 1.93 | 1.78 | 1.31 | 16.13 | 15.78 | 9.03 | 14.2 | 14 | 7.72 |
| many sprites | 7.14% | 9.42% | -85.42% | 1.72 | 2.23 | -6.15 | 24.09 | 23.68 | 7.2 | 22.37 | 21.45 | 13.35 |

#### This PR with pipelining disabled vs Main

Mostly regressions here. I don't think this should be a problem, as users that are disabling pipelined rendering are probably running single threaded and not using the parallel executor. The regression is probably mostly due to the switch to use `async_executor::run` instead of `try_tick` and also having one less thread to run systems on. I'll do a writeup on why switching to `run` causes regressions, so we can try to eventually fix it. Using `try_tick` causes issues when pipelined rendering is enabled, as seen [here](https://github.com/bevyengine/bevy/pull/6503#issuecomment-1380803518).

| tracy frame time | percent (mean) | percent (median) | percent (sigma) | Diff (mean) | Diff (median) | Diff (sigma) | Main (mean) | Main (median) | Main (sigma) | PR no pipelining (mean) | PR no pipelining (median) | PR no pipelining (sigma) |
| -- | -- | -- | -- | -- | -- | -- | -- | -- | -- | -- | -- | -- |
| many foxes | -3.72% | -4.42% | -1.07% | -0.21 | -0.24 | -0.04 | 5.64 | 5.43 | 3.74 | 5.85 | 5.67 | 3.78 |
| many lights | 0.29% | -0.30% | 4.75% | 0.03 | -0.03 | 0.25 | 10.29 | 10.12 | 5.26 | 10.26 | 10.15 | 5.01 |
| many animated sprites | 0.22% | 1.81% | -2.72% | 0.06 | 0.48 | -0.27 | 27.12 | 26.57 | 9.93 | 27.06 | 26.09 | 10.2 |
| 3d scene | -15.79% | -14.75% | -31.34% | -0.3 | -0.27 | -0.63 | 1.9 | 1.83 | 2.01 | 2.2 | 2.1 | 2.64 |
| many cubes | -2.85% | -3.30% | 0.00% | -0.46 | -0.52 | 0 | 16.13 | 15.78 | 9.03 | 16.59 | 16.3 | 9.03 |
| many sprites | 2.49% | 2.41% | 0.69% | 0.6 | 0.57 | 0.05 | 24.09 | 23.68 | 7.2 | 23.49 | 23.11 | 7.15 |

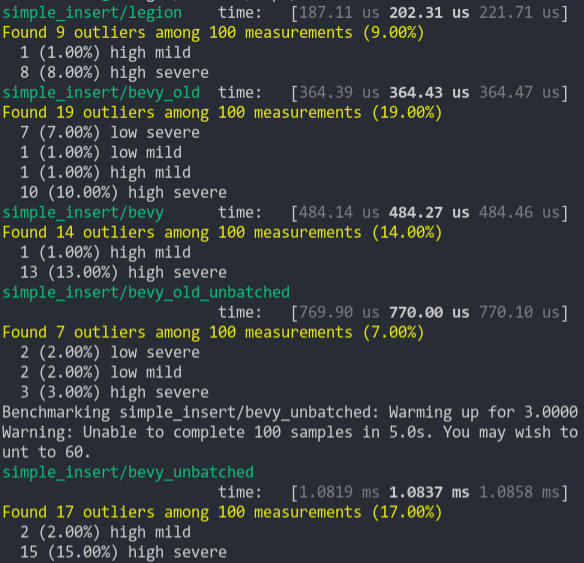

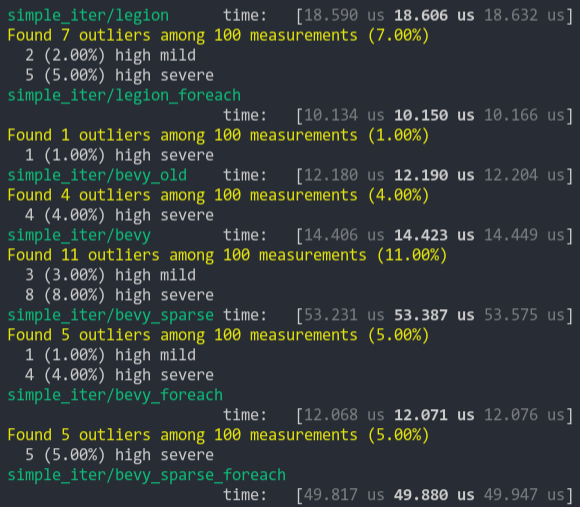

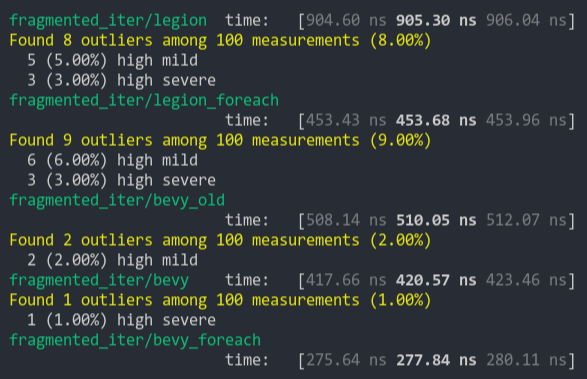

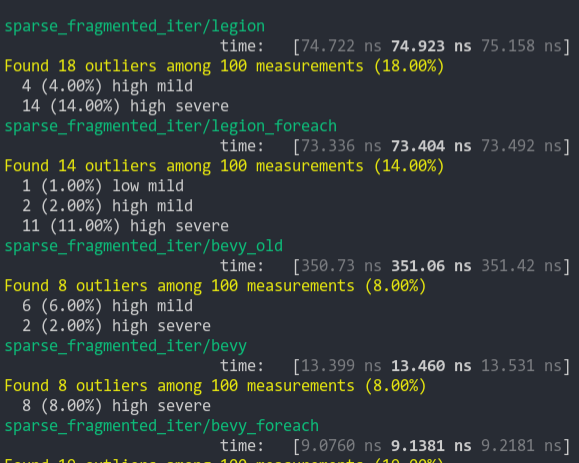

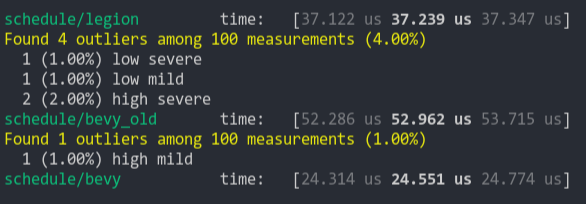

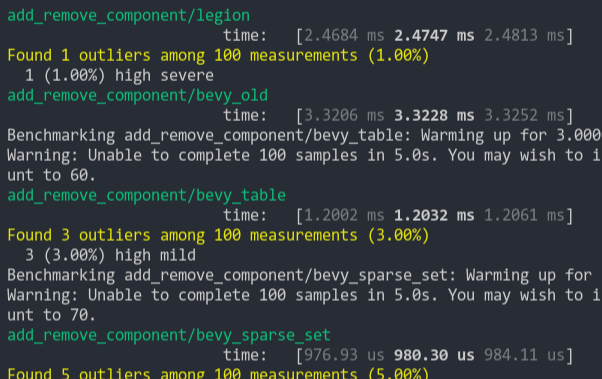

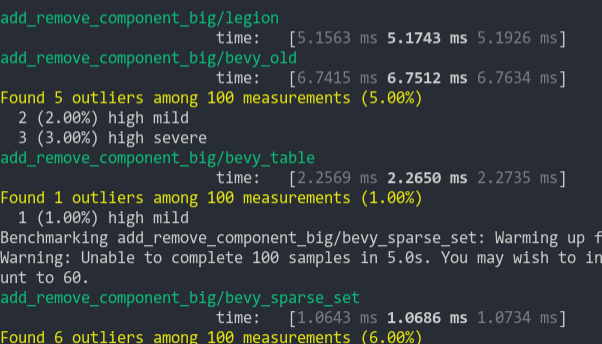

### Benchmarks

Mostly the same, except `empty_systems` has gotten a touch slower. The `maybe-pipelining+1` column has the compute task pool with an extra thread over default added. This is because pipelining loses one thread over main to execute systems on, since the main thread no longer runs normal systems.

<details>

<summary>Click Me</summary>

```text

group main maybe-pipelining+1

----- ------------------------- ------------------

busy_systems/01x_entities_03_systems 1.07 30.7±1.32µs ? ?/sec 1.00 28.6±1.35µs ? ?/sec

busy_systems/01x_entities_06_systems 1.10 52.1±1.10µs ? ?/sec 1.00 47.2±1.08µs ? ?/sec

busy_systems/01x_entities_09_systems 1.00 74.6±1.36µs ? ?/sec 1.00 75.0±1.93µs ? ?/sec

busy_systems/01x_entities_12_systems 1.03 100.6±6.68µs ? ?/sec 1.00 98.0±1.46µs ? ?/sec

busy_systems/01x_entities_15_systems 1.11 128.5±3.53µs ? ?/sec 1.00 115.5±1.02µs ? ?/sec

busy_systems/02x_entities_03_systems 1.16 50.4±2.56µs ? ?/sec 1.00 43.5±3.00µs ? ?/sec

busy_systems/02x_entities_06_systems 1.00 87.1±1.27µs ? ?/sec 1.05 91.5±7.15µs ? ?/sec

busy_systems/02x_entities_09_systems 1.04 139.9±6.37µs ? ?/sec 1.00 134.0±1.06µs ? ?/sec

busy_systems/02x_entities_12_systems 1.05 179.2±3.47µs ? ?/sec 1.00 170.1±3.17µs ? ?/sec

busy_systems/02x_entities_15_systems 1.01 219.6±3.75µs ? ?/sec 1.00 218.1±2.55µs ? ?/sec

busy_systems/03x_entities_03_systems 1.10 70.6±2.33µs ? ?/sec 1.00 64.3±0.69µs ? ?/sec

busy_systems/03x_entities_06_systems 1.02 130.2±3.11µs ? ?/sec 1.00 128.0±1.34µs ? ?/sec

busy_systems/03x_entities_09_systems 1.00 195.0±10.11µs ? ?/sec 1.00 194.8±1.41µs ? ?/sec

busy_systems/03x_entities_12_systems 1.01 261.7±4.05µs ? ?/sec 1.00 259.8±4.11µs ? ?/sec

busy_systems/03x_entities_15_systems 1.00 318.0±3.04µs ? ?/sec 1.06 338.3±20.25µs ? ?/sec

busy_systems/04x_entities_03_systems 1.00 82.9±0.63µs ? ?/sec 1.02 84.3±0.63µs ? ?/sec

busy_systems/04x_entities_06_systems 1.01 181.7±3.65µs ? ?/sec 1.00 179.8±1.76µs ? ?/sec

busy_systems/04x_entities_09_systems 1.04 265.0±4.68µs ? ?/sec 1.00 255.3±1.98µs ? ?/sec

busy_systems/04x_entities_12_systems 1.00 335.9±3.00µs ? ?/sec 1.05 352.6±15.84µs ? ?/sec

busy_systems/04x_entities_15_systems 1.00 418.6±10.26µs ? ?/sec 1.08 450.2±39.58µs ? ?/sec

busy_systems/05x_entities_03_systems 1.07 114.3±0.95µs ? ?/sec 1.00 106.9±1.52µs ? ?/sec

busy_systems/05x_entities_06_systems 1.08 229.8±2.90µs ? ?/sec 1.00 212.3±4.18µs ? ?/sec

busy_systems/05x_entities_09_systems 1.03 329.3±1.99µs ? ?/sec 1.00 319.2±2.43µs ? ?/sec

busy_systems/05x_entities_12_systems 1.06 454.7±6.77µs ? ?/sec 1.00 430.1±3.58µs ? ?/sec

busy_systems/05x_entities_15_systems 1.03 554.6±6.15µs ? ?/sec 1.00 538.4±23.87µs ? ?/sec

contrived/01x_entities_03_systems 1.00 14.0±0.15µs ? ?/sec 1.08 15.1±0.21µs ? ?/sec

contrived/01x_entities_06_systems 1.04 28.5±0.37µs ? ?/sec 1.00 27.4±0.44µs ? ?/sec

contrived/01x_entities_09_systems 1.00 41.5±4.38µs ? ?/sec 1.02 42.2±2.24µs ? ?/sec

contrived/01x_entities_12_systems 1.06 55.9±1.49µs ? ?/sec 1.00 52.6±1.36µs ? ?/sec

contrived/01x_entities_15_systems 1.02 68.0±2.00µs ? ?/sec 1.00 66.5±0.78µs ? ?/sec

contrived/02x_entities_03_systems 1.03 25.2±0.38µs ? ?/sec 1.00 24.6±0.52µs ? ?/sec

contrived/02x_entities_06_systems 1.00 46.3±0.49µs ? ?/sec 1.04 48.1±4.13µs ? ?/sec

contrived/02x_entities_09_systems 1.02 70.4±0.99µs ? ?/sec 1.00 68.8±1.04µs ? ?/sec

contrived/02x_entities_12_systems 1.06 96.8±1.49µs ? ?/sec 1.00 91.5±0.93µs ? ?/sec

contrived/02x_entities_15_systems 1.02 116.2±0.95µs ? ?/sec 1.00 114.2±1.42µs ? ?/sec

contrived/03x_entities_03_systems 1.00 33.2±0.38µs ? ?/sec 1.01 33.6±0.45µs ? ?/sec

contrived/03x_entities_06_systems 1.00 62.4±0.73µs ? ?/sec 1.01 63.3±1.05µs ? ?/sec

contrived/03x_entities_09_systems 1.02 96.4±0.85µs ? ?/sec 1.00 94.8±3.02µs ? ?/sec

contrived/03x_entities_12_systems 1.01 126.3±4.67µs ? ?/sec 1.00 125.6±2.27µs ? ?/sec

contrived/03x_entities_15_systems 1.03 160.2±9.37µs ? ?/sec 1.00 156.0±1.53µs ? ?/sec

contrived/04x_entities_03_systems 1.02 41.4±3.39µs ? ?/sec 1.00 40.5±0.52µs ? ?/sec

contrived/04x_entities_06_systems 1.00 78.9±1.61µs ? ?/sec 1.02 80.3±1.06µs ? ?/sec

contrived/04x_entities_09_systems 1.02 121.8±3.97µs ? ?/sec 1.00 119.2±1.46µs ? ?/sec

contrived/04x_entities_12_systems 1.00 157.8±1.48µs ? ?/sec 1.01 160.1±1.72µs ? ?/sec

contrived/04x_entities_15_systems 1.00 197.9±1.47µs ? ?/sec 1.08 214.2±34.61µs ? ?/sec

contrived/05x_entities_03_systems 1.00 49.1±0.33µs ? ?/sec 1.01 49.7±0.75µs ? ?/sec

contrived/05x_entities_06_systems 1.00 95.0±0.93µs ? ?/sec 1.00 94.6±0.94µs ? ?/sec

contrived/05x_entities_09_systems 1.01 143.2±1.68µs ? ?/sec 1.00 142.2±2.00µs ? ?/sec

contrived/05x_entities_12_systems 1.00 191.8±2.03µs ? ?/sec 1.01 192.7±7.88µs ? ?/sec

contrived/05x_entities_15_systems 1.02 239.7±3.71µs ? ?/sec 1.00 235.8±4.11µs ? ?/sec

empty_systems/000_systems 1.01 47.8±0.67ns ? ?/sec 1.00 47.5±2.02ns ? ?/sec

empty_systems/001_systems 1.00 1743.2±126.14ns ? ?/sec 1.01 1761.1±70.10ns ? ?/sec

empty_systems/002_systems 1.01 2.2±0.04µs ? ?/sec 1.00 2.2±0.02µs ? ?/sec

empty_systems/003_systems 1.02 2.7±0.09µs ? ?/sec 1.00 2.7±0.16µs ? ?/sec

empty_systems/004_systems 1.00 3.1±0.11µs ? ?/sec 1.00 3.1±0.24µs ? ?/sec

empty_systems/005_systems 1.00 3.5±0.05µs ? ?/sec 1.11 3.9±0.70µs ? ?/sec

empty_systems/010_systems 1.00 5.5±0.12µs ? ?/sec 1.03 5.7±0.17µs ? ?/sec

empty_systems/015_systems 1.00 7.9±0.19µs ? ?/sec 1.06 8.4±0.16µs ? ?/sec

empty_systems/020_systems 1.00 10.4±1.25µs ? ?/sec 1.02 10.6±0.18µs ? ?/sec

empty_systems/025_systems 1.00 12.4±0.39µs ? ?/sec 1.14 14.1±1.07µs ? ?/sec

empty_systems/030_systems 1.00 15.1±0.39µs ? ?/sec 1.05 15.8±0.62µs ? ?/sec

empty_systems/035_systems 1.00 16.9±0.47µs ? ?/sec 1.07 18.0±0.37µs ? ?/sec

empty_systems/040_systems 1.00 19.3±0.41µs ? ?/sec 1.05 20.3±0.39µs ? ?/sec

empty_systems/045_systems 1.00 22.4±1.67µs ? ?/sec 1.02 22.9±0.51µs ? ?/sec

empty_systems/050_systems 1.00 24.4±1.67µs ? ?/sec 1.01 24.7±0.40µs ? ?/sec

empty_systems/055_systems 1.05 28.6±5.27µs ? ?/sec 1.00 27.2±0.70µs ? ?/sec

empty_systems/060_systems 1.02 29.9±1.64µs ? ?/sec 1.00 29.3±0.66µs ? ?/sec

empty_systems/065_systems 1.02 32.7±3.15µs ? ?/sec 1.00 32.1±0.98µs ? ?/sec

empty_systems/070_systems 1.00 33.0±1.42µs ? ?/sec 1.03 34.1±1.44µs ? ?/sec

empty_systems/075_systems 1.00 34.8±0.89µs ? ?/sec 1.04 36.2±0.70µs ? ?/sec

empty_systems/080_systems 1.00 37.0±1.82µs ? ?/sec 1.05 38.7±1.37µs ? ?/sec

empty_systems/085_systems 1.00 38.7±0.76µs ? ?/sec 1.05 40.8±0.83µs ? ?/sec

empty_systems/090_systems 1.00 41.5±1.09µs ? ?/sec 1.04 43.2±0.82µs ? ?/sec

empty_systems/095_systems 1.00 43.6±1.10µs ? ?/sec 1.04 45.2±0.99µs ? ?/sec

empty_systems/100_systems 1.00 46.7±2.27µs ? ?/sec 1.03 48.1±1.25µs ? ?/sec

```

</details>

## Migration Guide

### App `runner` and SubApp `extract` functions are now required to be Send

This was changed to enable pipelined rendering. If this breaks your use case please report it as these new bounds might be able to be relaxed.

## ToDo

* [x] redo benchmarking

* [x] reinvestigate the perf of the try_tick -> run change for task pool scope

# Objective

Fixes #3310. Fixes #6282. Fixes #6278. Fixes #3666.

## Solution

Split out `!Send` resources into `NonSendResources`. Add an `origin_thread_id` to all `!Send` resources and check it when dropping `NonSendResourceData`; if there's a mismatch, panic. Moved all of the checks that `MainThreadValidator` would do into `NonSendResources` instead.

All `!Send` resources now individually track which thread they were inserted from. This is validated against for every access, mutation, and drop that could be done against the value.
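
The per-value thread tracking can be sketched with the standard library. This is an illustrative model only, not the `NonSendResources` code: `ThreadPinned` and its methods are hypothetical names, and the payload in the sketch is assumed to be `Send` so the wrapper can be moved across threads without `unsafe`.

```rust
use std::thread::{self, ThreadId};

/// Hypothetical wrapper that remembers which thread created it.
pub struct ThreadPinned<T> {
    value: T,
    origin: ThreadId,
}

impl<T> ThreadPinned<T> {
    pub fn new(value: T) -> Self {
        ThreadPinned {
            value,
            origin: thread::current().id(),
        }
    }

    /// Panics when the current thread is not the origin thread.
    fn validate(&self, action: &str) {
        if thread::current().id() != self.origin {
            panic!("attempted to {} a thread-pinned value off its origin thread", action);
        }
    }

    /// Read access, validated against the origin thread.
    pub fn get(&self) -> &T {
        self.validate("read");
        &self.value
    }

    /// Mutable access, validated against the origin thread.
    pub fn get_mut(&mut self) -> &mut T {
        self.validate("mutate");
        &mut self.value
    }
}

impl<T> Drop for ThreadPinned<T> {
    fn drop(&mut self) {
        // Skip the check while unwinding: a second panic would abort the process.
        if !thread::panicking() {
            self.validate("drop");
        }
    }
}
```

Access, mutation, and drop all go through the same `validate` check, mirroring the three operations listed above.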

A regression test using an altered version of the example from #3310 has been added.

This is a stopgap solution for the current status quo. A full solution may involve fully removing `!Send` resources/components from `World`, which will likely require a much more thorough design on how to handle the existing in-engine and ecosystem use cases.

This PR also introduces another breaking change:

```rust

use bevy_ecs::prelude::*;

#[derive(Resource)]

struct Resource(u32);

fn main() {

let mut world = World::new();

world.insert_resource(Resource(1));

world.insert_non_send_resource(Resource(2));

let res = world.get_resource_mut::<Resource>().unwrap();

assert_eq!(res.0, 2);

}

```

This code will run correctly on 0.9.1 but not with this PR, since NonSend resources and normal resources have become actual distinct concepts storage wise.

## Changelog

Changed: Fix soundness bug with `World: Send`. Dropping a `World` that contains a `!Send` resource on the wrong thread will now panic.

## Migration Guide

Normal resources and `NonSend` resources no longer share the same backing storage. If `R: Resource`, then `NonSend<R>` and `Res<R>` will return different instances from each other. If you are using both `Res<T>` and `NonSend<T>` (or their mutable variants) to fetch the same resources, it's strongly advised to use `Res<T>`.

# Objective

- This pulls out some of the changes to Plugin setup and sub apps from #6503 to make that PR easier to review.

- Separate the extract stage from running the sub app's schedule to allow for them to be run on separate threads in the future

- Fixes #6990

## Solution

- add a run method to `SubApp` that runs the schedule

- change the name of `sub_app_runner` to extract to make it clear that this function is only for extracting data between the main app and the sub app

- remove the extract stage from the sub app schedule so it can be run separately. This is done by adding a `setup` method to the `Plugin` trait that runs after all plugin build methods run. This is required to allow the extract stage to be removed from the schedule after all the plugins have added their systems to the stage. We will also need the setup method for pipelined rendering to set up the render thread. See e3267965e1/crates/bevy_render/src/pipelined_rendering.rs (L57-L98)

## Changelog

- Separate SubApp Extract stage from running the sub app schedule.

## Migration Guide

### SubApp `runner` has conceptually been changed to an `extract` function.

The `runner` is no longer in charge of running the sub app schedule. Its only concern is now moving data between the main world and the sub app. The `sub_app.app.schedule` is now run for you after the provided function is called.

```rust
// before
fn main() {
    let mut sub_app = App::empty();
    sub_app.add_stage(MyStage, SystemStage::parallel());
    App::new().add_sub_app(MySubApp, sub_app, move |main_world, sub_app| {
        extract(main_world, sub_app);
        sub_app.app.schedule.run();
    });
}

// after
fn main() {
    let mut sub_app = App::empty();
    sub_app.add_stage(MyStage, SystemStage::parallel());
    App::new().add_sub_app(MySubApp, sub_app, move |main_world, sub_app| {
        extract(main_world, sub_app);
        // schedule is automatically called for you after extract is run
    });
}
```

# Objective

A separate `tracing` span for running a system's commands is created, even if the system doesn't have commands. This is adding extra measuring overhead (see #4892) where it's not needed.

## Solution

Move the span into `ParallelCommandState` and `CommandQueue`'s `SystemParamState::apply`. To get the right metadata for the span, an additional `&SystemMeta` parameter was added to `SystemParamState::apply`.

---

## Changelog

Added: `SystemMeta::name`

Changed: Systems without `Commands` and `ParallelCommands` will no longer show a "system_commands" span when profiling.

Changed: `SystemParamState::apply` now takes a `&SystemMeta` parameter in addition to the provided `&mut World`.

# Objective

- fix new clippy lints before they get stable and break CI

## Solution

- run `clippy --fix` to auto-fix machine-applicable lints

- silence `clippy::should_implement_trait` for `fn HandleId::default<T: Asset>`

## Changes

- always prefer `format!("{inline}")` over `format!("{}", not_inline)`

- prefer `Box::default` (or `Box::<T>::default` if necessary) over `Box::new(T::default())`
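
The two rewrites listed above look like this in practice. The sketch below uses only the standard library; `Settings`, `describe`, `boxed_default`, and `boxed_default_explicit` are hypothetical names made up for the example, not code from this PR.

```rust
#[derive(Debug, Default, PartialEq)]
pub struct Settings {
    pub volume: u8,
}

pub fn describe(name: &str, count: usize) -> String {
    // Before: format!("{} has {} items", name, count)
    format!("{name} has {count} items")
}

pub fn boxed_default() -> Box<Settings> {
    // Before: Box::new(Settings::default())
    // The return type tells the compiler which `T` to default-construct.
    Box::default()
}

pub fn boxed_default_explicit() -> Box<Settings> {
    // The turbofish form is for places where the type cannot be inferred.
    Box::<Settings>::default()
}
```

Note that inline format arguments only accept plain identifiers, so expressions such as `self.name()` still need to be passed as positional arguments.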

# Objective

> System chaining is a confusing name: it implies the ability to construct non-linear graphs, and suggests a sense of system ordering that is only incidentally true. Instead, it actually works by passing data from one system to the next, much like the pipe operator.

> In the accepted [stageless RFC](https://github.com/bevyengine/rfcs/blob/main/rfcs/45-stageless.md), this concept is renamed to piping, and "system chaining" is used to construct groups of systems with ordering dependencies between them.

Fixes #6225.

## Changelog

System chaining has been renamed to system piping to improve clarity (and free up the name for new ordering APIs).

## Migration Guide

The `.chain(handler_system)` method on systems is now `.pipe(handler_system)`.

The `IntoChainSystem` trait is now `IntoPipeSystem`, and the `ChainSystem` struct is now `PipeSystem`.
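
For readers unfamiliar with the term, "piping" means feeding the output of one function into the input of the next. The sketch below models that with ordinary closures; it is a conceptual illustration, not how `PipeSystem` is implemented, and `pipe`, `halve`, and `report` are hypothetical names.

```rust
/// Builds a function that passes the output of `first` into `second`.
pub fn pipe<A, B, C>(first: impl Fn(A) -> B, second: impl Fn(B) -> C) -> impl Fn(A) -> C {
    move |input| second(first(input))
}

/// Plays the role of a system that can fail.
pub fn halve(n: i32) -> Result<i32, String> {
    if n % 2 == 0 {
        Ok(n / 2)
    } else {
        Err(format!("{n} is odd"))
    }
}

/// Plays the role of the handler system that consumes the result.
pub fn report(result: Result<i32, String>) -> String {
    match result {
        Ok(half) => format!("half is {half}"),
        Err(msg) => format!("error: {msg}"),
    }
}
```

Here `pipe(halve, report)` produces a single function, in the same way that `.pipe(handler_system)` produces a single system. No ordering relationship with any other system is implied, which is why the old name was misleading.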

# Objective

- Adding Debug implementations for App, Stage, Schedule, Query, QueryState.

- Fixes #1130.

## Solution

- Implemented std::fmt::Debug for a number of structures.

---

## Changelog

Also added Debug implementations for ParallelSystemExecutor, SingleThreadedExecutor, various RunCriteria structures, SystemContainer, and SystemDescriptor.

Opinions are sure to differ as to what information to provide in a Debug implementation. Best guess was taken for this initial version for these structures.

Co-authored-by: targrub <62773321+targrub@users.noreply.github.com>

# Background

Incremental implementation of #4299. The code is heavily borrowed from that PR.

# Objective

The execution order ambiguity checker often emits false positives, since bevy is not aware of invariants upheld by the user.

## Solution

Title

---

## Changelog

+ Added methods `SystemDescriptor::ignore_all_ambiguities` and `::ambiguous_with`. These allow you to silence warnings for specific system-order ambiguities.

## Migration Guide

***Note for maintainers**: This should replace the migration guide for #5916*

Ambiguity sets have been replaced with a simpler API.

```rust

// These systems technically conflict, but we don't care which order they run in.

fn jump_on_click(mouse: Res<Input<MouseButton>>, mut transforms: Query<&mut Transform>) { ... }

fn jump_on_spacebar(keys: Res<Input<KeyCode>>, mut transforms: Query<&mut Transform>) { ... }

//

// Before

#[derive(AmbiguitySetLabel)]

struct JumpSystems;

app

.add_system(jump_on_click.in_ambiguity_set(JumpSystems))

.add_system(jump_on_spacebar.in_ambiguity_set(JumpSystems));

//

// After

app

.add_system(jump_on_click.ambiguous_with(jump_on_spacebar))

.add_system(jump_on_spacebar);

```

# Objective

- Add ability to create nested spawns. This is needed for stageless. The current executor spawns tasks for each system early and runs the system by communicating through a channel. In stageless we want to spawn the task late, so that archetypes can be updated right before the task is run. The executor is run on a separate task, so this enables the scope to be passed to the spawned executor.

- Fixes #4301

## Solution

- Instantiate a single threaded executor on the scope and use that instead of the LocalExecutor. This allows the scope to be Send, but still able to spawn tasks onto the main thread the scope is run on. This works because, while systems can access nonsend data, the systems themselves are Send. Because of this change we lose the ability to spawn nonsend tasks on the scope, but I don't think this is being used anywhere. Users would still be able to use spawn_local on TaskPools.

- Steals the lifetime tricks that `std::thread::scope` uses to allow nested spawns, but disallow scope to be passed to tasks or threads not associated with the scope.

- Change the storage for the tasks to a `ConcurrentQueue`. This is to allow a &Scope to be passed for spawning instead of a &mut Scope. `ConcurrentQueue` was chosen because it was already in our dependency tree because `async_executor` depends on it.

- removed the optimizations for 0 and 1 spawned tasks. It did improve those cases, but made the cases of more than 1 task slower.
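
For reference, this is what nested spawning looks like with the standard library's `std::thread::scope`, whose lifetime design the list above says was borrowed. The sketch uses OS threads rather than async tasks, and `nested_spawn_count` is a hypothetical name for illustration.

```rust
use std::sync::atomic::{AtomicUsize, Ordering};
use std::thread;

/// Spawns a scoped thread that itself spawns another scoped thread.
pub fn nested_spawn_count() -> usize {
    let counter = AtomicUsize::new(0);
    // Shared references are `Copy`, so each `move` closure gets its own copy.
    let counter_ref = &counter;

    thread::scope(|scope| {
        scope.spawn(move || {
            counter_ref.fetch_add(1, Ordering::SeqCst);
            // `scope` is a shared reference, so it can be used from inside a spawned thread.
            scope.spawn(move || {
                counter_ref.fetch_add(1, Ordering::SeqCst);
            });
        });
    });

    // `thread::scope` returns only after every thread, nested ones included, has finished.
    counter.load(Ordering::SeqCst)
}
```

Spawning through a shared `&Scope` rather than a `&mut Scope` is what makes the nested call possible. The same reasoning is behind the switch to a `ConcurrentQueue` for task storage described above.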

---

## Changelog

Add ability to nest spawns

```rust
use bevy::tasks::TaskPool;

fn main() {
    let pool = TaskPool::new();
    pool.scope(|scope| {
        scope.spawn(async move {
            // calling scope.spawn from a spawned task was not possible before
            scope.spawn(async move {
                // do something
            });
        });
    });
}
```

## Migration Guide

If you were using explicit lifetimes and passing `Scope`, you'll need to specify two lifetimes now.

```rust

fn scoped_function<'scope>(scope: &mut Scope<'scope, ()>) {}

// should become

fn scoped_function<'scope>(scope: &Scope<'_, 'scope, ()>) {}

```

`scope.spawn_local` changed to `scope.spawn_on_scope`. This should cover cases where you needed to run tasks on the local thread, but does not cover spawning nonsend futures.

## TODO

* [x] think real hard about all the lifetimes

* [x] add doc about what 'env and 'scope mean.

* [x] manually check that the single threaded task pool still works

* [x] Get updated perf numbers

* [x] check and make sure all the transmutes are necessary

* [x] move commented out test into a compile fail test

* [x] look through the tests for scope on std and see if I should add any more tests

Co-authored-by: Michael Hsu <myhsu@benjaminelectric.com>

Co-authored-by: Carter Anderson <mcanders1@gmail.com>

# Objective

The [Stageless RFC](https://github.com/bevyengine/rfcs/pull/45) involves allowing exclusive systems to be referenced and ordered relative to parallel systems. We've agreed that unifying systems under `System` is the right move.

This is an alternative to #4166 (see rationale in the comments I left there). Note that this builds on the learnings established there (and borrows some patterns).

## Solution

This unifies parallel and exclusive systems under the shared `System` trait, removing the old `ExclusiveSystem` trait / impls. This is accomplished by adding a new `ExclusiveFunctionSystem` impl similar to `FunctionSystem`. It is backed by `ExclusiveSystemParam`, which is similar to `SystemParam`. There is a new flattened out SystemContainer api (which cuts out a lot of trait and type complexity).

This means you can remove all cases of `exclusive_system()`:

```rust
// before
app.add_system(some_system.exclusive_system());

// after
app.add_system(some_system);
```

I've also implemented `ExclusiveSystemParam` for `&mut QueryState` and `&mut SystemState`, which makes this possible in exclusive systems:

```rust

fn some_exclusive_system(

world: &mut World,

transforms: &mut QueryState<&Transform>,

state: &mut SystemState<(Res<Time>, Query<&Player>)>,

) {

for transform in transforms.iter(world) {

println!("{transform:?}");

}

let (time, players) = state.get(world);

for player in players.iter() {

println!("{player:?}");

}

}

```

Note that "exclusive function systems" assume `&mut World` is present (and the first param). I think this is a fair assumption, given that the presence of `&mut World` is what defines the need for an exclusive system.

I added some targeted SystemParam `static` constraints, which removed the need for this:

```rust

fn some_exclusive_system(state: &mut SystemState<(Res<'static, Time>, Query<&'static Player>)>) {}

```

## Related

- #2923

- #3001

- #3946

## Changelog

- `ExclusiveSystem` trait (and implementations) has been removed in favor of sharing the `System` trait.

- `ExclusiveFunctionSystem` and `ExclusiveSystemParam` were added, enabling flexible exclusive function systems

- `&mut SystemState` and `&mut QueryState` now implement `ExclusiveSystemParam`

- Exclusive and parallel System configuration is now done via a unified `SystemDescriptor`, `IntoSystemDescriptor`, and `SystemContainer` api.

## Migration Guide

Calling `.exclusive_system()` is no longer required (or supported) for converting exclusive system functions to exclusive systems:

```rust

// Old (0.8)

app.add_system(some_exclusive_system.exclusive_system());

// New (0.9)

app.add_system(some_exclusive_system);

```

Converting "normal" parallel systems to exclusive systems is done by calling the exclusive ordering apis:

```rust

// Old (0.8)

app.add_system(some_system.exclusive_system().at_end());

// New (0.9)

app.add_system(some_system.at_end());

```

Query state in exclusive systems can now be cached via ExclusiveSystemParams, which should be preferred for clarity and performance reasons:

```rust

// Old (0.8)

fn some_system(world: &mut World) {

let mut transforms = world.query::<&Transform>();

for transform in transforms.iter(world) {

}

}

// New (0.9)

fn some_system(world: &mut World, transforms: &mut QueryState<&Transform>) {

for transform in transforms.iter(world) {

}

}

```

# Objective

Now that we can consolidate Bundles and Components under a single insert (thanks to #2975 and #6039), almost 100% of world spawns now look like `world.spawn().insert((Some, Tuple, Here))`. Spawning an entity without any components is an extremely uncommon pattern, so it makes sense to give spawn the "first class" ergonomic api. This consolidated api should be made consistent across all spawn apis (such as World and Commands).

## Solution

All `spawn` apis (`World::spawn`, `Commands::spawn`, `ChildBuilder::spawn`, and `WorldChildBuilder::spawn`) now accept a bundle as input:

```rust
// before:
commands
    .spawn()
    .insert((A, B, C));

world
    .spawn()
    .insert((A, B, C));

// after
commands.spawn((A, B, C));
world.spawn((A, B, C));
```

All existing instances of `spawn_bundle` have been deprecated in favor of the new `spawn` api. A new `spawn_empty` has been added, replacing the old `spawn` api.

By allowing `world.spawn(some_bundle)` to replace `world.spawn().insert(some_bundle)`, this opened the door to removing the initial entity allocation in the "empty" archetype / table done in `spawn()` (and subsequent move to the actual archetype in `.insert(some_bundle)`).

This improves spawn performance by over 10%.

To take this measurement, I added a new `world_spawn` benchmark.

Unfortunately, optimizing `Commands::spawn` is slightly less trivial, as Commands expose the Entity id of spawned entities prior to actually spawning. Doing the optimization would (naively) require assurances that the `spawn(some_bundle)` command is applied before all other commands involving the entity (which would not necessarily be true, if memory serves). Optimizing `Commands::spawn` this way does feel possible, but it will require careful thought (and maybe some additional checks), which deserves its own PR. For now, it has the same performance characteristics of the current `Commands::spawn_bundle` on main.

**Note that 99% of this PR is simple renames and refactors. The only code that needs careful scrutiny is the new `World::spawn()` impl, which is relatively straightforward, but it has some new unsafe code (which re-uses the battle tested `BundleSpawner` code path).**

---

## Changelog

- All `spawn` apis (`World::spawn`, `Commands::spawn`, `ChildBuilder::spawn`, and `WorldChildBuilder::spawn`) now accept a bundle as input

- All instances of `spawn_bundle` have been deprecated in favor of the new `spawn` api

- World and Commands now have `spawn_empty()`, which is equivalent to the old `spawn()` behavior.

## Migration Guide

```rust

// Old (0.8):

commands

.spawn()

.insert_bundle((A, B, C));

// New (0.9)

commands.spawn((A, B, C));

// Old (0.8):

commands.spawn_bundle((A, B, C));

// New (0.9)

commands.spawn((A, B, C));

// Old (0.8):

let entity = commands.spawn().id();

// New (0.9)

let entity = commands.spawn_empty().id();

// Old (0.8)

let entity = world.spawn().id();

// New (0.9)

let entity = world.spawn_empty().id();

```

# Objective

- Add unit tests for ambiguity detection reporting.

- Incremental implementation of #4299.

## Solution

- Refactor ambiguity detection internals to make it testable. As a bonus, this should make it easier to extend in the future.

## Notes

* This code was copy-pasted from #4299 and modified. Credit goes to @alice-i-cecile and @afonsolage, though I'm not sure who wrote what at this point.

# Objective

Take advantage of the "impl Bundle for Component" changes in #2975 / add the follow up changes discussed there.

## Solution

- Change `insert` and `remove` to accept a Bundle instead of a Component (for both Commands and World)

- Deprecate `insert_bundle`, `remove_bundle`, and `remove_bundle_intersection`

- Add `remove_intersection`

---

## Changelog

- `insert` and `remove` now accept a Bundle instead of a Component (for both Commands and World)

- `insert_bundle` and `remove_bundle` are deprecated

## Migration Guide

Replace `insert_bundle` with `insert`:

```rust

// Old (0.8)

commands.spawn().insert_bundle(SomeBundle::default());

// New (0.9)

commands.spawn().insert(SomeBundle::default());

```

Replace `remove_bundle` with `remove`:

```rust

// Old (0.8)

commands.entity(some_entity).remove_bundle::<SomeBundle>();

// New (0.9)

commands.entity(some_entity).remove::<SomeBundle>();

```

Replace `remove_bundle_intersection` with `remove_intersection`:

```rust

// Old (0.8)

world.entity_mut(some_entity).remove_bundle_intersection::<SomeBundle>();

// New (0.9)

world.entity_mut(some_entity).remove_intersection::<SomeBundle>();

```

Consider consolidating as many operations as possible to improve ergonomics and cut down on archetype moves:

```rust

// Old (0.8)

commands.spawn()

.insert_bundle(SomeBundle::default())

.insert(SomeComponent);

// New (0.9) - Option 1

commands.spawn().insert((

SomeBundle::default(),

SomeComponent,

));

// New (0.9) - Option 2

commands.spawn_bundle((

SomeBundle::default(),

SomeComponent,

));

```

## Next Steps

Consider changing `spawn` to accept a bundle and deprecate `spawn_bundle`.

# Objective

While using the ParallelExecutor, systems do not actually start until `prepare_systems` completes. In stages where there are large numbers of "empty" systems with very little work to do, this delay adds significant overhead, which can add up over many stages.

## Solution

Immediately and synchronously signal the start of systems that can run without dependencies inside `prepare_systems` instead of waiting for the first executor iteration after `prepare_systems` completes. Any system that is dependent on them still cannot run until after `prepare_systems` completes, but there are a large number of unconstrained systems in the base engine where this is a general benefit in almost every case.

## Performance

This change was tested against `many_foxes` in the default configuration. As this change is sensitive to the overhead around scheduling systems, the spans for measuring system timing, system overhead, and system commands were all commented out for these measurements.

The median stage timings between `main` and this PR are as follows:

|stage|main|this PR|

|:--|:--|:--|

|First|75.54 us|61.61 us|

|LoadAssets|51.05 us|42.32 us|

|PreUpdate|54.6 us|55.56 us|

|Update|61.89 us|51.5 us|

|PostUpdate|7.27 ms|6.71 ms|

|AssetEvents|47.82 us|35.95 us|

|Last|39.19 us|37.71 us|

|reserve_and_flush|57.83 us|48.2 us|

|Extract|1.41 ms|1.28 ms|

|Prepare|554.49 us|502.53 us|

|Queue|216.29 us|207.51 us|

|Sort|67.03 us|60.99 us|

|Render|1.73 ms|1.58 ms|

|Cleanup|33.55 us|30.76 us|

|Clear Entities|18.56 us|17.05 us|

|**full frame**|**11.9 ms**|**10.91 ms**|

For the first few stages, the benefit is small but cumulative over each. For PostUpdate in particular, this allows `parent_update` to run while prepare_systems is running, which is required for the animation and transform propagation systems, which dominate the time spent in the stage, but also frontloads the contention as the other "empty" systems are also running while `parent_update` is running. For Render, where there is just a single large exclusive system, the benefit comes from not waiting on a spuriously scheduled task on the task pool to kick off the system: it's immediately scheduled to run.

# Objective

This code is very disjoint, and the `stage.rs` file that it's in is already very long.

All I've done is move the code and clean up the compiler errors that result.

Followup to #5916, split out from #4299.

# Objective

Ambiguity sets are used to ignore system order ambiguities between groups of systems. However, they are not very useful: they are clunky, poorly integrated, and generally hampered by the difficulty of using (or discovering) the ambiguity detector.

As a first step to the work in #4299, we're removing them.

## Migration Guide

Ambiguity sets have been removed.

# Objective

Rust 1.63 resolved [an issue](https://github.com/rust-lang/rust/issues/83701) that prevented you from combining explicit generic arguments with `impl Trait` arguments.

Now, we no longer need to use dynamic dispatch to work around this.

## Migration Guide

The methods `Schedule::get_stage` and `get_stage_mut` now accept `impl StageLabel` instead of `&dyn StageLabel`.

### Before

```rust

let stage = schedule.get_stage_mut::<SystemStage>(&MyLabel)?;

```

### After

```rust

let stage = schedule.get_stage_mut::<SystemStage>(MyLabel)?;

```
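As a rough illustration of the language feature involved, the sketch below mixes an explicit generic argument with an `impl Trait` argument. The `Label` trait and this `Schedule` type are hypothetical stand-ins, not Bevy's actual definitions; compiling the call shape `get_stage::<T>(label)` requires Rust 1.63 or newer.

```rust
use std::any::Any;
use std::collections::HashMap;

/// Hypothetical stand-in for a stage label trait.
trait Label {
    fn name(&self) -> &'static str;
}

struct Update;

impl Label for Update {
    fn name(&self) -> &'static str {
        "update"
    }
}

/// Hypothetical schedule that stores type-erased stages by label name.
#[derive(Default)]
struct Schedule {
    stages: HashMap<&'static str, Box<dyn Any>>,
}

impl Schedule {
    fn add_stage<T: Any>(&mut self, label: impl Label, stage: T) {
        self.stages.insert(label.name(), Box::new(stage));
    }

    /// `T` is named explicitly by the caller; the label type is inferred
    /// from the `impl Label` argument, so no `&dyn Label` is needed.
    fn get_stage<T: Any>(&self, label: impl Label) -> Option<&T> {
        self.stages.get(label.name())?.downcast_ref::<T>()
    }
}
```

A call such as `schedule.get_stage::<u32>(Update)` passes the label by value and avoids dynamic dispatch on the label.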

*This PR description is an edited copy of #5007, written by @alice-i-cecile.*

# Objective

Follow-up to https://github.com/bevyengine/bevy/pull/2254. The `Resource` trait currently has a blanket implementation for all types that meet its bounds.

While ergonomic, this results in several drawbacks:

* it is possible to make confusing, silent mistakes such as inserting a function pointer (Foo) rather than a value (Foo::Bar) as a resource

* it is challenging to discover if a type is intended to be used as a resource

* we cannot later add customization options (see the [RFC](https://github.com/bevyengine/rfcs/blob/main/rfcs/27-derive-component.md) for the equivalent choice for Component).

* dependencies can use the same Rust type as a resource in invisibly conflicting ways

* raw Rust types used as resources cannot preserve privacy appropriately, as anyone able to access that type can read and write to internal values

* we cannot capture a definitive list of possible resources to display to users in an editor
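The first drawback can be reproduced with plain Rust. In this minimal sketch, `insert_resource` is a hypothetical function whose bound imitates a blanket `Send + Sync + 'static` requirement; it is not Bevy's API. It returns the size of the stored type so the mistake becomes visible.

```rust
use std::mem::size_of;

/// Hypothetical stand-in for an API that accepts any `Send + Sync + 'static` value.
/// Returns the size in bytes of whatever was passed in.
fn insert_resource<T: Send + Sync + 'static>(_value: T) -> usize {
    size_of::<T>()
}

/// A tuple struct: `Volume` on its own names the constructor function,
/// while `Volume(0.5)` is an actual value.
struct Volume(f32);
```

Both `insert_resource(Volume)` and `insert_resource(Volume(0.5))` compile under this bound. The first stores a zero-sized function item rather than a value, which is the silent mistake a derive-based opt-in rules out.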

## Notes to reviewers

* Review this commit-by-commit; there's effectively no back-tracking and there's a lot of churn in some of these commits.

*ira: My commits are not as well organized :')*

* I've relaxed the bound on Local to Send + Sync + 'static: I don't think these concerns apply there, so this can keep things simple. Storing e.g. a u32 in a Local is fine, because there's a variable name attached explaining what it does.

* I think this is a bad place for the Resource trait to live, but I've left it in place to make reviewing easier. IMO that's best tackled with https://github.com/bevyengine/bevy/issues/4981.

## Changelog

`Resource` is no longer automatically implemented for all matching types. Instead, use the new `#[derive(Resource)]` macro.

## Migration Guide

Add `#[derive(Resource)]` to all types you are using as a resource.

If you are using a third party type as a resource, wrap it in a tuple struct to bypass orphan rules. Consider deriving `Deref` and `DerefMut` to improve ergonomics.

`ClearColor` no longer implements `Component`. Using `ClearColor` as a component in 0.8 did nothing.

Use the `ClearColorConfig` in the `Camera3d` and `Camera2d` components instead.

Co-authored-by: Alice <alice.i.cecile@gmail.com>

Co-authored-by: Alice Cecile <alice.i.cecile@gmail.com>

Co-authored-by: devil-ira <justthecooldude@gmail.com>

Co-authored-by: Carter Anderson <mcanders1@gmail.com>

# Objective

I noticed while working on #5366 that the documentation for label types wasn't working correctly. Having experimented with this for a few weeks, I believe that generating docs in macros is more effort than it's worth.

## Solution

Add more boilerplate, copy-paste and edit the docs across types. This also lets us add custom doctests for specific types. Also, we don't need `concat_idents` as a dependency anymore.

# Objective

- Closes #4954

- Reduce the complexity of the `{System, App, *}Label` APIs.

## Solution

For the sake of brevity I will only refer to `SystemLabel`, but everything applies to all of the other label types as well.

- Add `SystemLabelId`, a lightweight, `Copy` struct.

- Convert custom types into `SystemLabelId` using the trait `SystemLabel`.

## Changelog

- String literals implement `SystemLabel` for now, but this should be changed with #4409.

## Migration Guide

- Any previous use of `Box<dyn SystemLabel>` should be replaced with `SystemLabelId`.

- `AsSystemLabel` trait has been modified.

- No more output generics.

- Method `as_system_label` now returns `SystemLabelId`, removing an unnecessary level of indirection.

- If you *need* a label that is determined at runtime, you can use `Box::leak`. Not recommended.
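The general shape of a lightweight label id can be sketched as follows. `LabelId`, `Label`, and `Dynamic` are hypothetical names chosen for illustration; the real `SystemLabelId` may store different data. The `Dynamic` type shows the `Box::leak` escape hatch for runtime-determined labels, with the caveat that the leaked string is never freed.

```rust
use std::any::TypeId;

/// Small `Copy` identifier: the label's type plus a static name (assumed layout).
#[derive(Clone, Copy, Debug, PartialEq, Eq, Hash)]
struct LabelId {
    type_id: TypeId,
    name: &'static str,
}

/// Hypothetical trait converting custom types into a `LabelId`.
trait Label: 'static {
    fn as_str(&self) -> &'static str;

    fn as_label(&self) -> LabelId
    where
        Self: Sized,
    {
        LabelId {
            type_id: TypeId::of::<Self>(),
            name: self.as_str(),
        }
    }
}

struct Physics;

impl Label for Physics {
    fn as_str(&self) -> &'static str {
        "Physics"
    }
}

/// A label whose name is only known at runtime.
struct Dynamic(&'static str);

impl Dynamic {
    fn new(name: String) -> Self {
        // Leaks the allocation to obtain a `'static` string; never freed.
        Dynamic(Box::leak(name.into_boxed_str()))
    }
}

impl Label for Dynamic {
    fn as_str(&self) -> &'static str {
        self.0
    }
}
```

Because `LabelId` is `Copy`, it can be passed around and compared without boxing or cloning.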

## Questions for later

* Should we generate a `Debug` impl along with `#[derive(*Label)]`?

* Should we rename `as_str()`?

* Should we remove the extra derives (such as `Hash`) from builtin `*Label` types?

* Should we automatically derive types like `Clone, Copy, PartialEq, Eq`?

* More-ergonomic comparisons between `Label` and `LabelId`.

* Move `Dyn{Eq, Hash,Clone}` somewhere else.

* Some API to make interning dynamic labels easier.

* Optimize string representation

* Empty string for unit structs -- no debug info but faster comparisons

* Don't show enum types -- same tradeoffs as above.

Remove unnecessary calls to `iter()`/`iter_mut()`.

Mainly updates the use of queries in our code, docs, and examples.

```rust

// From

for _ in list.iter() {

for _ in list.iter_mut() {

// To

for _ in &list {

for _ in &mut list {

```

We already enable the pedantic lint [clippy::explicit_iter_loop](https://rust-lang.github.io/rust-clippy/stable/) inside of Bevy. However, this only warns for a few known types from the standard library.
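For a custom type to support the `for _ in &list` form, it needs `IntoIterator` implementations for its shared and mutable references. A minimal sketch with a hypothetical `Inventory` type:

```rust
/// Hypothetical collection type with explicit `iter`/`iter_mut` methods.
struct Inventory {
    items: Vec<u32>,
}

impl Inventory {
    fn iter(&self) -> std::slice::Iter<'_, u32> {
        self.items.iter()
    }

    fn iter_mut(&mut self) -> std::slice::IterMut<'_, u32> {
        self.items.iter_mut()
    }
}

/// Enables `for item in &inventory`.
impl<'a> IntoIterator for &'a Inventory {
    type Item = &'a u32;
    type IntoIter = std::slice::Iter<'a, u32>;

    fn into_iter(self) -> Self::IntoIter {
        self.iter()
    }
}

/// Enables `for item in &mut inventory`.
impl<'a> IntoIterator for &'a mut Inventory {
    type Item = &'a mut u32;
    type IntoIter = std::slice::IterMut<'a, u32>;

    fn into_iter(self) -> Self::IntoIter {
        self.iter_mut()
    }
}

/// Doubles every item, then returns the sum, using only the reference forms.
fn double_and_sum(inventory: &mut Inventory) -> u32 {
    for item in &mut *inventory {
        *item *= 2;
    }
    let mut total = 0;
    for item in &*inventory {
        total += *item;
    }
    total
}
```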

## Note for reviewers

As you can see the additions and deletions are exactly equal.

Maybe give it a quick skim to check I didn't sneak in a crypto miner, but you don't have to torture yourself by reading every line.

I already experienced enough pain making this PR :)

Co-authored-by: devil-ira <justthecooldude@gmail.com>

# Objective

- Currently, the `Extract` `RenderStage` is executed on the main world, with the render world available as a resource.

- However, when needing access to resources in the render world (e.g. to mutate them), the only way to do so was to get exclusive access to the whole `RenderWorld` resource.

- This meant that effectively only one extract which wrote to resources could run at a time.

- Previously, we didn't make writing to the world during `Extract` a non-happy path, even though we want to discourage that.

## Solution

- Move the extract stage to run on the render world.

- Add the main world as a `MainWorld` resource.

- Add an `Extract` `SystemParam` as a convenience to access a (read only) `SystemParam` in the main world during `Extract`.

## Future work

It should be possible to avoid needing to use `get_or_spawn` for the render commands, since now the `Commands`' `Entities` matches up with the world being executed on.

We need to determine how this interacts with https://github.com/bevyengine/bevy/pull/3519

It's theoretically possible to remove the need for the `value` method on `Extract`. However, that requires slightly changing the `SystemParam` interface, which would make it more complicated. That would probably mess up the `SystemState` api too.

## Todo

I still need to add doc comments to `Extract`.

---

## Changelog

### Changed

- The `Extract` `RenderStage` now runs on the render world (instead of the main world as before).

You must use the `Extract` `SystemParam` to access the main world during the extract phase.

Resources on the render world can now be accessed using `ResMut` during extract.

### Removed

- `Commands::spawn_and_forget`. Use `Commands::get_or_spawn(e).insert_bundle(bundle)` instead

## Migration Guide

The `Extract` `RenderStage` now runs on the render world (instead of the main world as before).

You must use the `Extract` `SystemParam` to access the main world during the extract phase. `Extract` takes a single type parameter, which is any system parameter (such as `Res`, `Query` etc.). It will extract this from the main world, and return the result of this extraction when `value` is called on it.

For example, if previously your extract system looked like:

```rust

fn extract_clouds(mut commands: Commands, clouds: Query<Entity, With<Cloud>>) {

for cloud in clouds.iter() {

commands.get_or_spawn(cloud).insert(Cloud);

}

}

```

the new version would be:

```rust

fn extract_clouds(mut commands: Commands, mut clouds: Extract<Query<Entity, With<Cloud>>>) {

for cloud in clouds.value().iter() {

commands.get_or_spawn(cloud).insert(Cloud);

}

}

```

The diff is:

```diff

--- a/src/clouds.rs
+++ b/src/clouds.rs
@@ -1,5 +1,5 @@
-fn extract_clouds(mut commands: Commands, clouds: Query<Entity, With<Cloud>>) {
-    for cloud in clouds.iter() {
+fn extract_clouds(mut commands: Commands, mut clouds: Extract<Query<Entity, With<Cloud>>>) {
+    for cloud in clouds.value().iter() {
         commands.get_or_spawn(cloud).insert(Cloud);
     }
 }

```

You can now also access resources from the render world using the normal system parameters during `Extract`:

```rust

fn extract_assets(mut render_assets: ResMut<MyAssets>, source_assets: Extract<Res<MyAssets>>) {

*render_assets = source_assets.clone();

}

```

Please note that all existing extract systems need to be updated to match this new style; even if they currently compile they will not run as expected. A warning will be emitted on a best-effort basis if this is not met.

Co-authored-by: Carter Anderson <mcanders1@gmail.com>

# Objective

`SAFETY` comments are meant to be placed before `unsafe` blocks and should contain the reasoning of why in this case the usage of unsafe is okay. This is useful when reading the code because it makes it clear which assumptions are required for safety, and makes it easier to spot possible unsoundness holes. It also forces the code writer to think of something to write and maybe look at the safety contracts of any called unsafe methods again to double-check their correct usage.

There's a clippy lint called `undocumented_unsafe_blocks` which warns when using an `unsafe` block without such a comment.

## Solution

- since clippy expects `SAFETY` instead of `SAFE`, rename those

- add `SAFETY` comments in more places

- for the last remaining 3 places, add an `#[allow()]` and `// TODO` since I wasn't comfortable enough with the code to justify their safety

- add `#![warn(clippy::undocumented_unsafe_blocks)]` to `bevy_ecs`
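As a small example of the convention, the sketch below documents a single `unsafe` block with a `SAFETY` comment. The function is hypothetical and unrelated to `bevy_ecs`; the lint is enabled here with an outer attribute on the function rather than crate-wide, purely to keep the snippet self-contained.

```rust
/// Returns the first element of `values`, or `None` if the slice is empty.
#[warn(clippy::undocumented_unsafe_blocks)]
fn first_unchecked(values: &[u32]) -> Option<u32> {
    if values.is_empty() {
        return None;
    }
    // SAFETY: `values` is non-empty (checked above), so index 0 is in bounds.
    let first = unsafe { *values.get_unchecked(0) };
    Some(first)
}
```

The comment states the assumption the block relies on, so a reviewer can check it against the safety contract of `get_unchecked`.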

### Note for reviewers

The first commit only renames `SAFE` to `SAFETY` so it doesn't need a thorough review.

cb042a416e..55cef2d6fa is the diff for all other changes.

### Safety comments where I'm not too familiar with the code

- 774012ece5/crates/bevy_ecs/src/entity/mod.rs (L540-L546)

- 774012ece5/crates/bevy_ecs/src/world/entity_ref.rs (L249-L252)

### Locations left undocumented with a `TODO` comment

- 5dde944a30/crates/bevy_ecs/src/schedule/executor_parallel.rs (L196-L199)

- 5dde944a30/crates/bevy_ecs/src/world/entity_ref.rs (L287-L289)

- 5dde944a30/crates/bevy_ecs/src/world/entity_ref.rs (L413-L415)

Co-authored-by: Jakob Hellermann <hellermann@sipgate.de>

# Objective

- Fixes #4271

## Solution

- Check for a pending transition in addition to a scheduled operation.

- I don't see a valid reason for updating the state unless both `scheduled` and `transition` are empty.

Right now, a direct reference to the target TaskPool is required to launch tasks on the pools, despite the three newtyped pools (AsyncComputeTaskPool, ComputeTaskPool, and IoTaskPool) effectively acting as global instances. The need to pass a TaskPool reference adds notable friction to spawning subtasks within existing tasks. Possible use cases for this may include chaining tasks within the same pool like spawning separate send/receive I/O tasks after waiting on a network connection to be established, or allowing cross-pool dependent tasks like starting dependent multi-frame computations following a long I/O load.

Other task execution runtimes provide static access to spawning tasks (i.e. `tokio::spawn`), which is notably easier to use than the reference passing required by `bevy_tasks` right now.

This PR does the following:

* Adds `*TaskPool::init`, which initializes a `OnceCell`-backed global with a provided TaskPool, failing if the pool has already been initialized.

* Adds `*TaskPool::get` which fetches the initialized global pool of the respective type or panics. This generally should not be an issue in normal Bevy use, as the pools are initialized before they are accessed.

* Updated default task pool initialization to either pull the global handles and save them as resources, or if they are already initialized, pull a cloned global handle as the resource.
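The `init`/`get` pattern described in the list above can be sketched with the standard library. This is only an approximation: it uses `std::sync::OnceLock` in place of the `OnceCell` mentioned in the PR, and `TaskPool` here is a hypothetical placeholder with a single field, not the `bevy_tasks` type. The exact signatures and error type are assumptions.

```rust
use std::sync::OnceLock;

/// Placeholder for a real task pool (hypothetical).
#[derive(Debug)]
struct TaskPool {
    threads: usize,
}

/// Newtyped pool acting as a global instance.
#[derive(Debug)]
struct ComputeTaskPool(TaskPool);

static COMPUTE_TASK_POOL: OnceLock<ComputeTaskPool> = OnceLock::new();

impl ComputeTaskPool {
    /// Installs `pool` as the global instance.
    /// Fails, handing the pool back, if one was already installed.
    fn init(pool: TaskPool) -> Result<&'static Self, TaskPool> {
        COMPUTE_TASK_POOL
            .set(ComputeTaskPool(pool))
            .map_err(|rejected| rejected.0)?;
        Ok(Self::get())
    }

    /// Returns the global instance, panicking if `init` was never called.
    fn get() -> &'static Self {
        COMPUTE_TASK_POOL
            .get()
            .expect("ComputeTaskPool has not been initialized yet")
    }
}
```

With a global like this, code running inside a task can call `ComputeTaskPool::get()` to reach the pool without having a reference passed in.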

This should make it notably easier to build more complex task hierarchies for dependent tasks. It should also make writing bevy-adjacent, but not strictly bevy-only plugin crates easier, as the global pools ensure it's all running on the same threads.

One alternative considered is keeping a thread-local reference to the pool for all threads in each pool to enable the same `tokio::spawn` interface. This would spawn tasks on the same pool that a task is currently running in. However, this can lead to footgun situations where long running blocking tasks run on `ComputeTaskPool`.

## Objective

- ~~Make absurdly long-lived changes stay detectable for even longer (without leveling up to `u64`).~~

- Give all changes a consistent maximum lifespan.

- Improve code clarity.

## Solution

- ~~Increase the frequency of `check_tick` scans to increase the oldest reliably-detectable change.~~

(Deferred until we can benchmark the cost of a scan.)

- Ignore changes older than the maximum reliably-detectable age.

- General refactoring—name the constants, use them everywhere, and update the docs.

- Update test cases to check for the specified behavior.

## Related

This PR addresses (at least partially) the concerns raised in:

- #3071

- #3082 (and associated PR #3084)

## Background

- #1471

Given the minimum interval between `check_ticks` scans, `N`, the oldest reliably-detectable change is `u32::MAX - (2 * N - 1)` (or `MAX_CHANGE_AGE`). Reducing `N` from ~530 million (current value) to something like ~2 million would extend the lifetime of changes by a billion.

| minimum `check_ticks` interval | oldest reliably-detectable change | usable % of `u32::MAX` |

| --- | --- | --- |

| `u32::MAX / 8` (536,870,911) | `(u32::MAX / 4) * 3` | 75.0% |

| `2_000_000` | `u32::MAX - 3_999_999` | 99.9% |

Similarly, changes are still allowed to be between `MAX_CHANGE_AGE`-old and `u32::MAX`-old in the interim between `check_tick` scans. While we prevent their age from overflowing, the test to detect changes still compares raw values. This makes failure ultimately unreliable, since when ancient changes stop being detected varies depending on when the next scan occurs.
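The arithmetic from the table, and one way to ignore changes older than the maximum age, can be sketched as below. The constant and function names are illustrative assumptions, and the clamping comparison is a sketch of the idea rather than the exact code in this PR.

```rust
/// Minimum interval between scans (`N`), taken from the first table row.
const CHECK_TICK_INTERVAL: u32 = u32::MAX / 8;

/// Oldest reliably-detectable change: `u32::MAX - (2 * N - 1)`.
const MAX_CHANGE_AGE: u32 = u32::MAX - (2 * CHECK_TICK_INTERVAL - 1);

/// Compares ages instead of raw tick values.
/// Ages are computed with wrapping subtraction so tick wraparound is handled,
/// and clamped so that anything older than `MAX_CHANGE_AGE` is ignored.
fn is_changed(changed_tick: u32, last_run_tick: u32, world_tick: u32) -> bool {
    let ticks_since_change = world_tick.wrapping_sub(changed_tick).min(MAX_CHANGE_AGE);
    let ticks_since_run = world_tick.wrapping_sub(last_run_tick).min(MAX_CHANGE_AGE);
    ticks_since_run > ticks_since_change
}
```

With `N = u32::MAX / 8`, `MAX_CHANGE_AGE` works out to 3,221,225,474, which is roughly 75% of `u32::MAX`, matching the first table row.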

## Open Question

Currently, systems and system states are incorrectly initialized with their `last_change_tick` set to `0`, which doesn't handle wraparound correctly.

For consistent behavior, they should either be initialized to the world's `last_change_tick` (and detect no changes) or to `MAX_CHANGE_AGE` behind the world's current `change_tick` (and detect everything as a change). I've currently gone with the latter since that was closer to the existing behavior.

## Follow-up Work

(Edited: entire section)

We haven't actually profiled how long a `check_ticks` scan takes on a "large" `World`, so we don't know if it's safe to increase their frequency. However, we are currently relying on play sessions not lasting long enough to trigger a scan and apps not having enough entities/archetypes for it to be "expensive" (our assumption). That isn't a real solution. (Either scanning never costs enough to impact frame times or we provide an option to use `u64` change ticks. Nobody will accept random hiccups.)

To further extend the lifetime of changes, we actually only need to increment the world tick if a system has `Fetch: !ReadOnlySystemParamFetch`. The behavior will be identical because all writes are sequenced, but I'm not sure how to implement that in a way that the compiler can optimize the branch out.

Also, since having no false positives depends on a `check_ticks` scan running at least every `2 * N - 1` ticks, a `last_check_tick` should also be stored in the `World` so that any lull in system execution (like a command flush) could trigger a scan if needed. To be completely robust, all the systems initialized on the world should be scanned, not just those in the current stage.

# Objective

- `RunOnce` was a manual `System` implementation.

- Adding run criteria to stages was yet to be systemyoten

## Solution

- Make it a normal function

- yeet

## Changelog

- Replaced `RunOnce` with `ShouldRun::once`

## Migration guide

The run criterion `RunOnce`, which would make the controlled systems run only once, has been replaced with a new run criterion function `ShouldRun::once`. Replace all instances of `RunOnce` with `ShouldRun::once`.

# Objective

Reduce from-scratch build time.

## Solution

Reduce the size of the critical path by removing dependencies between crates where not necessary. For `cargo check --no-default-features` this reduced build time from ~51s to ~45s. For some commits I am not completely sure whether the tradeoff between the build time reduction and the convenience lost is acceptable. If not, I can drop them.

# Objective

`AsSystemLabel` has been introduced on system descriptors to make ordering systems more convenient, but `SystemSet::before` and `SystemSet::after` still take `SystemLabels` directly:

    use bevy::ecs::system::AsSystemLabel;

    /*…*/ SystemSet::new().before(foo.as_system_label()) /*…*/

is currently necessary instead of

    /*…*/ SystemSet::new().before(foo) /*…*/

## Solution

Use `AsSystemLabel` for `SystemSet`

# Objective

- Make it possible to use `System`s outside of the scheduler/executor without having to define logic to track new archetypes and call `System::add_archetype()` for each.

## Solution

- Replace `System::add_archetype(&Archetype)` with `System::update_archetypes(&World)`, making systems responsible for tracking their own most recent archetype generation the way that `SystemState` already does.

This has minimal (or simplifying) effect on most of the code with the exception of `FunctionSystem`, which must now track the latest `ArchetypeGeneration` it saw instead of relying on the executor to do it.
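The self-tracking idea can be sketched with a toy model. `World` and `TrackingSystem` below are hypothetical, and the "generation" is simplified to the number of archetypes seen so far, which works in this model only because archetypes are appended and never removed.

```rust
/// Toy world: archetypes are only ever appended (assumption of this sketch).
struct World {
    archetypes: Vec<&'static str>,
}

/// Toy system that remembers how many archetypes it has already processed.
struct TrackingSystem {
    archetype_generation: usize,
    matched: Vec<&'static str>,
}

impl TrackingSystem {
    fn new() -> Self {
        Self {
            archetype_generation: 0,
            matched: Vec::new(),
        }
    }

    /// Processes only the archetypes added since the previous call.
    fn update_archetypes(&mut self, world: &World) {
        for &archetype in &world.archetypes[self.archetype_generation..] {
            self.matched.push(archetype);
        }
        self.archetype_generation = world.archetypes.len();
    }
}
```

Calling `update_archetypes` repeatedly is cheap when nothing changed, so a caller outside the scheduler can simply invoke it before each run.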

Co-authored-by: Carter Anderson <mcanders1@gmail.com>

Remove the 'chaining' api, as it's peculiar

~~Implement the label traits for `Box<dyn ThatTrait>` (n.b. I'm not confident about this change, but it was the quickest path to not regressing)~~

Remove the need for '`.system`' when using run criteria piping

This adds the concept of "default labels" for systems (currently scoped to "parallel systems", but this could just as easily be implemented for "exclusive systems"). Function systems now include their function's `SystemTypeIdLabel` by default.

This enables the following patterns:

```rust

// ordering two systems without manually defining labels

app

.add_system(update_velocity)

.add_system(movement.after(update_velocity))

// ordering sets of systems without manually defining labels

app

.add_system(foo)

.add_system_set(

SystemSet::new()

.after(foo)

.with_system(bar)

.with_system(baz)

)

```
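A default label can be derived from a function because every Rust function item has its own unique type. The following minimal sketch shows that property with plain `TypeId`; `system_type_id` is a hypothetical helper, not how `SystemTypeIdLabel` is actually constructed.

```rust
use std::any::TypeId;

/// Returns an identifier unique to the concrete type of `_system`.
/// For function items, that type is unique per function.
fn system_type_id<F: 'static>(_system: &F) -> TypeId {
    TypeId::of::<F>()
}

fn update_velocity() {}

fn movement() {}
```

The same function always yields the same id and different functions yield different ids, which is what lets `movement.after(update_velocity)` refer to a system without a manually defined label.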

Fixes: #4219

Related to: #4220

Credit to @aevyrie @alice-i-cecile @DJMcNab (and probably others) for proposing (and supporting) this idea about a year ago. I was a big dummy that both shut down this (very good) idea and then forgot I did that. Sorry. You all were right!

Tracing added support for "inline span entering", which cuts down on a lot of complexity:

```rust

let span = info_span!("my_span").entered();

```

This adapts our code to use this pattern where possible, and updates our docs to recommend it.

This produces equivalent tracing behavior. Here is a side by side profile of "before" and "after" these changes.

# Objective

- In the large majority of cases, users were calling `.unwrap()` immediately after `.get_resource`.

- Attempting to add more helpful error messages here resulted in endless manual boilerplate (see #3899 and the linked PRs).

## Solution

- Add an infallible variant named `.resource` and so on.

- Use these infallible variants over `.get_resource().unwrap()` across the code base.

## Notes

I did not provide equivalent methods on `WorldCell`, in favor of removing it entirely in #3939.

## Migration Guide

Infallible variants of `.get_resource` have been added that implicitly panic, rather than needing to be unwrapped.

Replace `world.get_resource::<Foo>().unwrap()` with `world.resource::<Foo>()`.
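The relationship between the fallible and infallible variants can be sketched with a toy resource map. This `World` is a hypothetical stand-in, and the panic message is illustrative rather than the one Bevy emits.

```rust
use std::any::{type_name, Any, TypeId};
use std::collections::HashMap;

/// Toy world storing one type-erased value per resource type (hypothetical).
#[derive(Default)]
struct World {
    resources: HashMap<TypeId, Box<dyn Any>>,
}

impl World {
    fn insert_resource<R: Any>(&mut self, value: R) {
        self.resources.insert(TypeId::of::<R>(), Box::new(value));
    }

    /// Fallible accessor: the caller decides how to handle a missing resource.
    fn get_resource<R: Any>(&self) -> Option<&R> {
        self.resources.get(&TypeId::of::<R>())?.downcast_ref::<R>()
    }

    /// Infallible accessor: panics with a message naming the missing type.
    fn resource<R: Any>(&self) -> &R {
        match self.get_resource::<R>() {
            Some(resource) => resource,
            None => panic!("Requested resource {} does not exist", type_name::<R>()),
        }
    }
}
```

Centralizing the panic in one method is what allows a more helpful error message than a bare `.unwrap()` at every call site.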

## Impact

- `.unwrap` search results before: 1084

- `.unwrap` search results after: 942

- internal `unwrap_or_else` calls added: 4

- trivial unwrap calls removed from tests and code: 146

- uses of the new `try_get_resource` API: 11

- percentage of the time the unwrapping API was used internally: 93%

# Objective

- Fix the ugliness of the `config` api.

- Supersedes #2440, #2463, #2491

## Solution

- Since #2398, capturing closure systems have worked.

- Use those instead where we needed config before

- Remove the rest of the config api.

- Related: #2777

What it says on the tin.

This has got more to do with making `clippy` slightly more *quiet* than it does with changing anything that might greatly impact readability or performance.

That said, deriving `Default` for a couple of structs is a nice easy win.

# Objective

- Fixes #3078

- Fixes #1397

## Solution

- Implement Commands::init_resource.

- Also implement for World, for consistency and to simplify internal structure.

- While we're here, clean up some of the docs for Command and World resource modification.

# Objective

It would be useful to be able to restart a state (such as if an operation fails and needs to be retried from `on_enter`). Currently, it seems the way to restart a state is to transition to a dummy state and then transition back.

## Solution

The solution is to add a `restart` method on `State<T>` that allows for transitioning to the already-active state.

## Context

Based on [this](https://discord.com/channels/691052431525675048/742884593551802431/920335041756815441) question from the Discord.

Closes #2385

Co-authored-by: Carter Anderson <mcanders1@gmail.com>

# Objective

Make it possible to use `&World` as a system parameter

## Solution

It seems like all the pieces were already in place, very simple impl

Co-authored-by: Carter Anderson <mcanders1@gmail.com>

#3457 adds the `doc_markdown` clippy lint, which checks doc comments to make sure code identifiers are escaped with backticks. This causes a lot of lint errors, so this is one of a number of PRs that will fix those lint errors one crate at a time.

This PR fixes lints in the `bevy_ecs` crate.

Fills in some gaps we had in our Bevy ECS tracing spans:

* Exclusive systems

* System Commands (for `apply_buffers = true` cases)

* System archetype updates

* Parallel system execution prep

# Objective

- New clippy lints with rust 1.57 are failing

## Solution

- Fixed clippy lints following suggestions

- I ignored clippy in the old renderer because there were many lints and it will be removed soon

# Objective

- Fixes #2904 (see for context)

## Solution

- Simply hoist span creation out of the threaded task

- Confirmed to solve the issue locally

Now all events have the full span parent tree up through `bevy_ecs::schedule::stage` all the way to `bevy_app::app::bevy_app` (and its parents in bevy-consumer code, if any).

# Objective

- Avoid usages of `format!` that ~immediately get passed to another `format!`. This avoids a temporary allocation and is just generally cleaner.

## Solution

- `bevy_derive::shader_defs` does a `format!("{}", val.to_string())`, which is better written as just `format!("{}", val)`

- `bevy_diagnostic::log_diagnostics_plugin` does a `format!("{:>}", format!(...))`, which is better written as `format!("{:>}", format_args!(...))`

- `bevy_ecs::schedule` does `tracing::info!(..., name = &*format!("{:?}", val))`, which is better written with the tracing shorthand `tracing::info!(..., name = ?val)`

- `bevy_reflect::reflect` does `f.write_str(&format!(...))`, which is better written as `write!(f, ...)` (this could also be written using `f.debug_tuple`, but I opted to maintain alt debug behavior)

- `bevy_reflect::serde::{ser, de}` do `serde::Error::custom(format!(...))`, which is better written as `Error::custom(format_args!(...))`, as `Error::custom` takes `impl Display` and just immediately calls `format!` again
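The same rewrites can be shown on a small standalone type. `Wrapper`, `describe`, and `nested` are hypothetical examples, not code from the crates listed above; each comment names the less direct form being replaced.

```rust
use std::fmt;

struct Wrapper(u32);

impl fmt::Display for Wrapper {
    fn fmt(&self, f: &mut fmt::Formatter<'_>) -> fmt::Result {
        // Instead of `f.write_str(&format!("Wrapper({})", self.0))`:
        // write straight into the formatter, no temporary `String`.
        write!(f, "Wrapper({})", self.0)
    }
}

fn describe(val: &Wrapper) -> String {
    // Instead of `format!("{}", val.to_string())`:
    // `val` already implements `Display`.
    format!("{}", val)
}

fn nested(name: &str, value: f64) -> String {
    // Instead of `format!("{:>}", format!("{}: {:.1}", name, value))`:
    // `format_args!` defers formatting without allocating an inner `String`.
    format!("{:>}", format_args!("{}: {:.1}", name, value))
}
```

One caveat worth keeping in mind: `format_args!` output does not honor an explicit width in the outer format string, so this swap is only equivalent when no width or padding is requested.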

This implements the most minimal variant of #1843 - a derive for marker trait. This is a prerequisite to more complicated features like statically defined storage type or opt-out component reflection.

In order to make component struct's purpose explicit and avoid misuse, it must be annotated with `#[derive(Component)]` (manual impl is discouraged for compatibility). Right now this is just a marker trait, but in the future it might be expanded. Making this change early allows us to make further changes later without breaking backward compatibility for derive macro users.

This already prevents a lot of issues, like using bundles in `insert` calls. Primitive types are no longer valid components as well. This can be easily worked around by adding newtype wrappers and deriving `Component` for them.

One funny example of prevented bad code (from our own tests) is when a newtype struct or enum variant is used. Previously, it was possible to write `insert(Newtype)` instead of `insert(Newtype(value))`. That code compiled, because function pointers (in this case newtype struct constructor) implement `Send + Sync + 'static`, so we allowed them to be used as components. This is no longer the case and such invalid code will trigger a compile error.
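How an opt-in marker trait rules this out can be sketched in plain Rust. The `Component` trait and `Entity` type below are hypothetical stand-ins with a manual `impl` where Bevy would use `#[derive(Component)]`.

```rust
use std::any::Any;

/// Hypothetical marker trait: types must opt in explicitly.
trait Component: Send + Sync + 'static {}

struct Health(u32);

// Stand-in for `#[derive(Component)]`.
impl Component for Health {}

/// Toy entity holding type-erased components.
#[derive(Default)]
struct Entity {
    components: Vec<Box<dyn Any>>,
}

impl Entity {
    /// Only accepts types that opted in via `Component`.
    fn insert<C: Component>(&mut self, component: C) -> &mut Self {
        self.components.push(Box::new(component));
        self
    }
}

// `entity.insert(Health)` would not compile here: the constructor
// function item `Health` does not implement `Component`.
```

Only `entity.insert(Health(10))` is accepted, so the missing-argument mistake is caught at compile time.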

Co-authored-by: = <=>

Co-authored-by: TheRawMeatball <therawmeatball@gmail.com>

Co-authored-by: Carter Anderson <mcanders1@gmail.com>

# Objective

Fixes these issues:

- `WorldId`s currently aren't necessarily unique

- I want to guarantee that they're unique to safeguard my librarified version of https://github.com/bevyengine/bevy/discussions/2805

- There probably hasn't been a collision yet, but they could technically collide

- `SystemId` isn't used for anything

- It's no longer used now that `Locals` are stored within the `System`.

- `bevy_ecs` depends on rand

## Solution

- Instead of randomly generating `WorldId`s, just use an incrementing atomic counter, panicking on overflow.

- Remove `SystemId`

- We do need to allow Locals for exclusive systems at some point, but exclusive systems couldn't access their own `SystemId` anyway.

- Now that these don't depend on rand, move it to a dev-dependency
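A rough sketch of the counter-based approach, assuming a `usize` counter (the Todo below leaves the width open). The names `NEXT_WORLD_ID` and `try_new` and the panic message are illustrative, not taken from the PR.

```rust
use std::sync::atomic::{AtomicUsize, Ordering};

// Process-wide counter; each new id takes the current value and bumps it.
static NEXT_WORLD_ID: AtomicUsize = AtomicUsize::new(0);

#[derive(Clone, Copy, Debug, PartialEq, Eq, Hash)]
struct WorldId(usize);

impl WorldId {
    /// Returns a fresh id, or `None` if the counter is exhausted.
    fn try_new() -> Option<Self> {
        NEXT_WORLD_ID
            // `checked_add` yields `None` on overflow, which aborts the update.
            .fetch_update(Ordering::Relaxed, Ordering::Relaxed, |id| id.checked_add(1))
            .map(WorldId)
            .ok()
    }

    /// Panics on overflow instead of silently reusing an id.
    fn new() -> Self {
        Self::try_new().expect("too many worlds created")
    }
}
```

Because the update is a single atomic read-modify-write, two threads can never observe the same previous value, so ids stay unique without any randomness.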

## Todo

Determine if `WorldId` should be `u32` based instead

## Objective

The upcoming Bevy Book makes many references to the API documentation of bevy.

Most references belong to the first two chapters of the Bevy Book:

- bevyengine/bevy-website#176

- bevyengine/bevy-website#182

This PR attempts to improve the documentation of `bevy_ecs` and `bevy_app` in order to help readers of the Book who want to delve deeper into technical details.

## Solution

- Add crate and module level documentation

- Document the most important items (basically those included in the preludes), with the following style, where applicable:

- **Summary.** Short description of the item.

- **Second paragraph.** Detailed description of the item, without going too far into the implementation.

- **Code example(s).**

- **Safety or panic notes.**

## Collaboration

Any kind of collaboration is welcome, especially corrections, wording, new ideas and guidelines on where the focus should be put.

---

### Related issues

- Fixes #2246

This updates the `pipelined-rendering` branch to use the latest `bevy_ecs` from `main`. This accomplishes a couple of goals:

1. prepares for upcoming `custom-shaders` branch changes, which were what drove many of the recent bevy_ecs changes on `main`

2. prepares for the soon-to-happen merge of `pipelined-rendering` into `main`. By including bevy_ecs changes now, we make that merge simpler / easier to review.

I split this up into 3 commits:

1. **add upstream bevy_ecs**: please don't bother reviewing this content. it has already received thorough review on `main` and is a literal copy/paste of the relevant folders (the old folders were deleted so the directories are literally exactly the same as `main`).

2. **support manual buffer application in stages**: this is used to enable the Extract step. we've already reviewed this once on the `pipelined-rendering` branch, but it's worth looking at one more time in the new context of (1).

3. **support manual archetype updates in QueryState**: same situation as (2).

This is a rather simple but wide change, and it involves adding a new `bevy_app_macros` crate. Let me know if there is a better way to do any of this!

---

# Objective

- Allow adding and accessing sub-apps by using a label instead of an index

## Solution

- Migrate the bevy label implementation and derive code to the `bevy_utils` and `bevy_macro_utils` crates and then add a new `SubAppLabel` trait to the `bevy_app` crate that is used when adding or getting a sub-app from an app.
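To illustrate label-based lookup versus index-based lookup, here is a deliberately simplified sketch. Everything in it is hypothetical: this `SubAppLabel` reduces a label to a static name, whereas the trait in the PR is derived and built on the shared label machinery, and `App`, `SubApp`, `add_sub_app` and `get_sub_app` are toy stand-ins.

```rust
use std::collections::HashMap;

// Hypothetical, simplified label trait: each label reduces to a static name.
trait SubAppLabel {
    fn name(&self) -> &'static str;
}

// A unit struct used as a label, so call sites read `RenderApp` instead of `0`.
struct RenderApp;
impl SubAppLabel for RenderApp {
    fn name(&self) -> &'static str {
        "RenderApp"
    }
}

#[derive(Default)]
struct SubApp {
    systems: Vec<&'static str>,
}

#[derive(Default)]
struct App {
    // Keyed by label name rather than by insertion index.
    sub_apps: HashMap<&'static str, SubApp>,
}

impl App {
    fn add_sub_app(&mut self, label: impl SubAppLabel, sub_app: SubApp) -> &mut Self {
        self.sub_apps.insert(label.name(), sub_app);
        self
    }

    fn get_sub_app(&self, label: impl SubAppLabel) -> Option<&SubApp> {
        self.sub_apps.get(label.name())
    }
}
```

The point of the design is that adding or reordering sub-apps no longer invalidates the handle used to look one up.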

# Objective

- Remove all the `.system()` possible.

- Check for remaining missing cases.

## Solution

- Remove all `.system()`, fix compile errors

- 32 calls to `.system()` remain, mostly internals, the few others should be removed after #2446

This is extracted out of eb8f973646476b4a4926ba644a77e2b3a5772159 and includes some additional changes to remove all references to AppBuilder and fix examples that still used App::build() instead of App::new(). In addition I didn't extract the sub app feature as it isn't ready yet.

You can use `git diff --diff-filter=M eb8f973646476b4a4926ba644a77e2b3a5772159` to find all differences in this PR. The `--diff-filter=M` option filters out all files added in the original commit but not in this commit.

Co-Authored-By: Carter Anderson <mcanders1@gmail.com>

# Objective

- Continue work of #2398 and friends.

- Make `.system()` optional in run criteria APIs.

## Solution

- Slight change to `RunCriteriaDescriptorCoercion` signature and implementors.

- Implement `IntoRunCriteria` for `IntoSystem` rather than `System`.

- Remove some usages of `.system()` with run criteria in tests of `stage.rs`, to verify the implementation.

# Objective

- Extend work done in #2398.

- Make `.system()` syntax optional when using system descriptor API.

## Solution

- Slight change to `ParallelSystemDescriptorCoercion` signature and implementors.

---

I haven't touched exclusive systems, because it looks like the only two other solutions are going back to doubling our system insertion methods, or starting to lean into stageless. The latter will invalidate the former, so I think exclusive systems should remain pariahs until stageless.

I can grep & nuke `.system()` throughout the codebase now, which might take a while, or we can do that in subsequent PR(s).

This can be your 6 months post-christmas present.

# Objective

- Make `.system` optional

- yeet

- It's ugly

- Alternative title: `.system` is dead; long live `.system`

- **yeet**

## Solution

- Use a higher ranked lifetime, and some trait magic.

N.B. This PR does not actually remove any `.system`s, except in a couple of examples. Once this is merged we can do that piecemeal across crates, and decide on syntax for labels.
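For readers wondering how a plain function can be accepted where a system is expected, here is a std-only sketch of one way to do it: a marker type parameter lets two blanket impls coexist, and a higher-ranked bound lets the function take its argument with any borrow lifetime. This is not the PR's code; `System`, `IntoSystem`, `FunctionSystem`, `Schedule` and the marker types are simplified stand-ins with a single `&mut Vec<String>` parameter.

```rust
// A system is anything that can be run against some shared state.
trait System {
    fn run(&mut self, log: &mut Vec<String>);
}

// Wrapper that turns a plain function or closure into a `System`.
struct FunctionSystem<F>(F);

impl<F> System for FunctionSystem<F>
where
    F: for<'a> FnMut(&'a mut Vec<String>), // higher-ranked: any borrow lifetime
{
    fn run(&mut self, log: &mut Vec<String>) {
        (self.0)(log)
    }
}

// The marker parameter keeps the two blanket impls below from overlapping.
trait IntoSystem<Marker> {
    type Sys: System + 'static;
    fn into_system(self) -> Self::Sys;
}

struct AlreadyASystem;
struct IsFunction;

// Anything that already is a system converts to itself.
impl<S: System + 'static> IntoSystem<AlreadyASystem> for S {
    type Sys = S;
    fn into_system(self) -> S {
        self
    }
}

// Plain functions are wrapped automatically.
impl<F> IntoSystem<IsFunction> for F
where
    F: for<'a> FnMut(&'a mut Vec<String>) + 'static,
{
    type Sys = FunctionSystem<F>;
    fn into_system(self) -> FunctionSystem<F> {
        FunctionSystem(self)
    }
}

#[derive(Default)]
struct Schedule {
    systems: Vec<Box<dyn System>>,
}

impl Schedule {
    // The marker `M` is inferred, so callers never have to name it.
    fn add_system<M>(&mut self, system: impl IntoSystem<M>) -> &mut Self {
        self.systems.push(Box::new(system.into_system()));
        self
    }

    fn run(&mut self) -> Vec<String> {
        let mut log = Vec::new();
        for system in &mut self.systems {
            system.run(&mut log);
        }
        log
    }
}

fn hello(log: &mut Vec<String>) {
    log.push("hello".to_string());
}

fn world(log: &mut Vec<String>) {
    log.push("world".to_string());
}
```

With this shape, `schedule.add_system(hello)` and `schedule.add_system(FunctionSystem(world))` are both accepted, which is the sense in which the explicit conversion becomes optional rather than removed.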

- simplified code around archetype generations a little bit, as the special case value is not actually needed

- removed unnecessary UnsafeCell around pointer value that is never updated through shared references

- fixed and added a test for correct drop behaviour when removing sparse components through remove_bundle command

During PR #2046 @cart suggested that the `(): ()` notation is less legible than `_input: ()`. The first notation still managed to slip in though. This PR applies the second writing.

`ParallelSystemContainer`'s `system` pointer was extracted from box, but it was never deallocated. This change adds missing drop implementation that cleans up that memory.
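The general pattern behind this kind of leak, sketched with hypothetical types (`Container`, `Noisy` and the `DROPS` counter are illustrative and not the actual `ParallelSystemContainer` code): once a pointer is taken out of a `Box`, nothing frees it unless a `Drop` impl rebuilds the `Box`.

```rust
use std::ptr::NonNull;
use std::sync::atomic::{AtomicUsize, Ordering};

// Counts how many `Noisy` values have been dropped.
static DROPS: AtomicUsize = AtomicUsize::new(0);

trait System {
    fn run(&mut self);
}

struct Noisy;
impl System for Noisy {
    fn run(&mut self) {}
}
impl Drop for Noisy {
    fn drop(&mut self) {
        DROPS.fetch_add(1, Ordering::SeqCst);
    }
}

// Hypothetical container that keeps a raw pointer extracted from a `Box`.
struct Container {
    system: NonNull<dyn System>,
}

impl Container {
    fn new(system: Box<dyn System>) -> Self {
        // `Box::into_raw` hands ownership to us; nothing frees it automatically any more.
        let raw = Box::into_raw(system);
        Container {
            system: NonNull::new(raw).expect("Box pointers are never null"),
        }
    }

    fn run(&mut self) {
        // SAFETY: the pointer came from a live `Box` and is uniquely owned by `self`.
        unsafe { self.system.as_mut().run() }
    }
}

impl Drop for Container {
    fn drop(&mut self) {
        // SAFETY: rebuilds the `Box` exactly once, so the value is dropped and freed.
        unsafe { drop(Box::from_raw(self.system.as_ptr())) }
    }
}

// Returns how many `Noisy` drops happened while a container was created and dropped.
fn drops_after_container_dropped() -> usize {
    let before = DROPS.load(Ordering::SeqCst);
    let mut container = Container::new(Box::new(Noisy));
    container.run();
    drop(container);
    DROPS.load(Ordering::SeqCst) - before
}
```

Without the `Drop` impl on `Container`, the helper would report zero drops and the allocation would leak.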

The first commit monomorphizes `add_system_inner` which I think was intended to be monomorphized anyway. The second commit moves the type argument of `GraphNode` to an associated type.

The documentation for `ShouldRun` doesn't completely explain what each of the variants you can return does. For instance, it isn't very clear that looping systems aren't executed again until after all the systems in a stage have had a chance to run.

This PR adds to the documentation for `ShouldRun`, and hopefully clarifies what is happening during a stage's execution when run criteria are checked and systems are being executed.
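A simplified model of that looping behaviour, as I understand it. The variant names follow `ShouldRun`; the executor below (`run_stage`, `Entry`, `example`) is a hypothetical stand-in that evaluates and runs systems in one pass, which the real stage does not necessarily do.

```rust
#[allow(dead_code)]
#[derive(Clone, Copy, Debug, PartialEq)]
enum ShouldRun {
    Yes,
    No,
    YesAndCheckAgain,
    NoAndCheckAgain,
}

struct Entry {
    name: &'static str,
    criteria: Box<dyn FnMut() -> ShouldRun>,
}

/// Runs one stage and returns the order in which systems executed.
fn run_stage(entries: &mut [Entry]) -> Vec<&'static str> {
    let mut log = Vec::new();
    // Indices of systems whose criteria still need to be evaluated.
    let mut pending: Vec<usize> = (0..entries.len()).collect();
    while !pending.is_empty() {
        let mut check_again = Vec::new();
        for &i in &pending {
            let result = (entries[i].criteria)();
            if matches!(result, ShouldRun::Yes | ShouldRun::YesAndCheckAgain) {
                log.push(entries[i].name);
            }
            // Looping systems are deferred to the next pass, after every
            // other pending system has had its turn in this pass.
            if matches!(result, ShouldRun::YesAndCheckAgain | ShouldRun::NoAndCheckAgain) {
                check_again.push(i);
            }
        }
        pending = check_again;
    }
    log
}

fn example() -> Vec<&'static str> {
    let mut remaining = 2;
    let mut entries = vec![
        Entry {
            name: "looping",
            // Asks to run twice, then stops.
            criteria: Box::new(move || {
                if remaining > 0 {
                    remaining -= 1;
                    ShouldRun::YesAndCheckAgain
                } else {
                    ShouldRun::No
                }
            }),
        },
        Entry {
            name: "once",
            criteria: Box::new(|| ShouldRun::Yes),
        },
    ];
    run_stage(&mut entries)
}
```

In `example`, the second run of `looping` happens only after `once` has run, which is the ordering the added documentation tries to make clear.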

Fixes #1753.

The problem was introduced while reworking the logic around stages' own criteria. Before #1675 they used to be stored and processed inline with the systems' criteria, and systems without criteria used that of their stage. After, criteria-less systems think they should run, always. This PR more or less restores previous behavior; a less kludgy solution can wait until after 0.5 - ideally, until stageless.

Co-authored-by: Carter Anderson <mcanders1@gmail.com>

This is intended to help protect users against #1671. It doesn't resolve the issue, but I think it's a good stop-gap solution for 0.5. A "full" fix would be very involved (and maybe not worth the added complexity).

In the current impl, `next` clears out the entire stack and replaces it with a new state. This PR moves this functionality into a `replace` method, and changes the behavior of `next` to only change the top state.
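The difference between the two operations, sketched on a bare stack. `StateStack` and `push` are hypothetical helpers, and transitions are applied immediately here, which the real `State` type may not do.

```rust
// Hypothetical state stack; `push` exists only to build a stack to operate on.
#[derive(Debug, PartialEq)]
struct StateStack<T> {
    stack: Vec<T>,
}

impl<T> StateStack<T> {
    fn new(initial: T) -> Self {
        Self { stack: vec![initial] }
    }

    fn push(&mut self, state: T) {
        self.stack.push(state);
    }

    /// New `next`: swap out only the top (active) state.
    fn next(&mut self, state: T) {
        // The stack is never empty by construction, so `last_mut` always succeeds.
        *self.stack.last_mut().expect("state stack is never empty") = state;
    }

    /// `replace`: the old `next` behavior, discarding the whole stack.
    fn replace(&mut self, state: T) {
        self.stack.clear();
        self.stack.push(state);
    }
}
```

Starting from `["menu", "game", "paused"]`, `next("inventory")` gives `["menu", "game", "inventory"]`, while `replace("credits")` gives `["credits"]`.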

Co-authored-by: Carter Anderson <mcanders1@gmail.com>

I'm opening this prematurely; consider this an RFC that predates RFCs and therefore not super-RFC-like.

This PR does two "big" things: decouple run criteria from system sets, reimagine system sets as weapons of mass system description.

### What it lets us do:

* Reuse run criteria within a stage.

* Pipe output of one run criteria as input to another.

* Assign labels, dependencies, run criteria, and ambiguity sets to many systems at the same time.

### Things already done:

* Decoupled run criteria from system sets.

* Mass system description superpowers to `SystemSet`.

* Implemented `RunCriteriaDescriptor`.

* Removed `VirtualSystemSet`.

* Centralized all run criteria of `SystemStage`.

* Extended system descriptors with per-system run criteria.

* `.before()` and `.after()` for run criteria.

* Explicit order between state driver and related run criteria. Fixes #1672.

* Opt-in run criteria deduplication; default behavior is to panic.

* Labels (not exposed) for state run criteria; state run criteria are deduplicated.

### API issues that need discussion:

* [`FixedTimestep::step(1.0).label("my label")`](eaccf857cd/crates/bevy_ecs/src/schedule/run_criteria.rs (L120-L122)) and [`FixedTimestep::step(1.0).with_label("my label")`](eaccf857cd/crates/bevy_core/src/time/fixed_timestep.rs (L86-L89)) are both valid but do very different things.

---

I will try to keep this post up to date as things change. Do check the diffs in the "edited" thingy from time to time.

Co-authored-by: Carter Anderson <mcanders1@gmail.com>

# Problem Definition

The current change tracking (via flags for both components and resources) fails to detect changes when the system checking for them is scheduled to run earlier in the frame than the system that makes them.

This issue is discussed at length in [#68](https://github.com/bevyengine/bevy/issues/68) and [#54](https://github.com/bevyengine/bevy/issues/54).
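A toy model of the failure mode, under the assumption that there is a single "mutated" flag per value and that it is cleared at the end of every frame. `Tracked`, `SystemKind` and `detections` are illustrative names only.

```rust
// Assumption for this sketch: one flag per value, cleared at the end of every frame.
struct Tracked {
    value: i32,
    mutated: bool,
}

#[derive(Clone, Copy)]
enum SystemKind {
    Detector,
    Mutator,
}

/// Runs `frames` frames in the given system order and returns how many
/// times the detector observed a change.
fn detections(order: [SystemKind; 2], frames: usize) -> usize {
    let mut data = Tracked { value: 0, mutated: false };
    let mut seen = 0;
    for _ in 0..frames {
        for system in &order {
            match system {
                SystemKind::Mutator => {
                    data.value += 1;
                    data.mutated = true;
                }
                SystemKind::Detector => {
                    if data.mutated {
                        seen += 1;
                    }
                }
            }
        }
        // End-of-frame clear: any change nobody looked at yet is forgotten.
        data.mutated = false;
    }
    seen
}
```

With the mutator first, every change is seen. With the detector first, the flag is always cleared before the detector's next run, so none of the changes are ever seen.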

This is very much a draft PR, and contributions are welcome and needed.

# Criteria

1. Each change is detected at least once, no matter the ordering.

2. Each change is detected at most once, no matter the ordering.

3. Changes should be detected the same frame that they are made.

4. Competitive ergonomics. Ideally does not require opting-in.

5. Low CPU overhead of computation.

6. Memory efficient. This must not increase over time, except where the number of entities / resources does.

7. Changes should not be lost for systems that don't run.

8. A frame needs to act as a pure function. Given the same set of entities / components it needs to produce the same end state without side-effects.

**Exact** change-tracking proposals satisfy criteria 1 and 2.

**Conservative** change-tracking proposals satisfy criteria 1 but not 2.

**Flaky** change-tracking proposals satisfy criteria 2 but not 1.

# Code Base Navigation

There are three types of flags:

- `Added`: A piece of data was added to an entity / `Resources`.

- `Mutated`: A piece of data was able to be modified, because its `DerefMut` was accessed

- `Changed`: The bitwise OR of `Added` and `Mutated`
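The relationship between the three flags can be sketched with plain bit masks. The bit values and the `has_any` helper are assumptions for illustration; only the fact that `Changed` combines `Added` and `Mutated` comes from the list above.

```rust
// Hypothetical bit layout; only the relationship between the flags matters.
const ADDED: u8 = 0b01;
const MUTATED: u8 = 0b10;
// `Changed` matches data that was either added or mutated.
const CHANGED: u8 = ADDED | MUTATED;

/// True if any bit of `mask` is set in `flags`.
fn has_any(flags: u8, mask: u8) -> bool {
    flags & mask != 0
}
```

A query for `Changed` is then `has_any(flags, CHANGED)`, which is true for freshly added data, mutated data, or both.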

The special behavior of `ChangedRes`, with respect to the scheduler is being removed in [#1313](https://github.com/bevyengine/bevy/pull/1313) and does not need to be reproduced.

`ChangedRes` and friends can be found in "bevy_ecs/core/resources/resource_query.rs".

The `Flags` trait for Components can be found in "bevy_ecs/core/query.rs".

`ComponentFlags` are stored in "bevy_ecs/core/archetypes.rs", defined on line 446.

# Proposals

**Proposal 5 was selected for implementation.**

## Proposal 0: No Change Detection