# Objective

- Use the prepass textures in webgl

## Solution

- Bind the prepass textures even when using webgl, but only if msaa is disabled

- Also did some refactors to centralize how textures are bound, similar to the EnvironmentMapLight PR

- ~~Also did some refactors of the example to make it work in webgl~~

- ~~To make the example work in webgl, I needed to use a sampler for the depth texture, the resulting code looks a bit weird, but it's simple enough and I think it's worth it to show how it works when using webgl~~

# Objective

Allow for creating pipelines that use push constants. To be able to use push constants. Fixes#4825

As of right now, trying to call `RenderPass::set_push_constants` will trigger the following error:

```

thread 'main' panicked at 'wgpu error: Validation Error

Caused by:

In a RenderPass

note: encoder = `<CommandBuffer-(0, 59, Vulkan)>`

In a set_push_constant command

provided push constant is for stage(s) VERTEX | FRAGMENT | VERTEX_FRAGMENT, however the pipeline layout has no push constant range for the stage(s) VERTEX | FRAGMENT | VERTEX_FRAGMENT

```

## Solution

Add a field push_constant_ranges to` RenderPipelineDescriptor` and `ComputePipelineDescriptor`.

This PR supersedes #4908 which now contains merge conflicts due to significant changes to `bevy_render`.

Meanwhile, this PR also made the `layout` field of `RenderPipelineDescriptor` and `ComputePipelineDescriptor` non-optional. If the user do not need to specify the bind group layouts, they can simply supply an empty vector here. No need for it to be optional.

---

## Changelog

- Add a field push_constant_ranges to RenderPipelineDescriptor and ComputePipelineDescriptor

- Made the `layout` field of RenderPipelineDescriptor and ComputePipelineDescriptor non-optional.

## Migration Guide

- Add push_constant_ranges: Vec::new() to every `RenderPipelineDescriptor` and `ComputePipelineDescriptor`

- Unwrap the optional values on the `layout` field of `RenderPipelineDescriptor` and `ComputePipelineDescriptor`. If the descriptor has no layout, supply an empty vector.

Co-authored-by: Zhixing Zhang <me@neoto.xin>

# Objective

There was issue #191 requesting subdivisions on the shape::Plane.

I also could have used this recently. I then write the solution.

Fixes #191

## Solution

I changed the shape::Plane to include subdivisions field and the code to create the subdivisions. I don't know how people are counting subdivisions so as I put in the doc comments 0 subdivisions results in the original geometry of the Plane.

Greater then 0 results in the number of lines dividing the plane.

I didn't know if it would be better to create a new struct that implemented this feature, say SubdivisionPlane or change Plane. I decided on changing Plane as that was what the original issue was.

It would be trivial to alter this to use another struct instead of altering Plane.

The issues of migration, although small, would be eliminated if a new struct was implemented.

## Changelog

### Added

Added subdivisions field to shape::Plane

## Migration Guide

All the examples needed to be updated to initalize the subdivisions field.

Also there were two tests in tests/window that need to be updated.

A user would have to update all their uses of shape::Plane to initalize the subdivisions field.

# Objective

NOTE: This depends on #7267 and should not be merged until #7267 is merged. If you are reviewing this before that is merged, I highly recommend viewing the Base Sets commit instead of trying to find my changes amongst those from #7267.

"Default sets" as described by the [Stageless RFC](https://github.com/bevyengine/rfcs/pull/45) have some [unfortunate consequences](https://github.com/bevyengine/bevy/discussions/7365).

## Solution

This adds "base sets" as a variant of `SystemSet`:

A set is a "base set" if `SystemSet::is_base` returns `true`. Typically this will be opted-in to using the `SystemSet` derive:

```rust

#[derive(SystemSet, Clone, Hash, Debug, PartialEq, Eq)]

#[system_set(base)]

enum MyBaseSet {

A,

B,

}

```

**Base sets are exclusive**: a system can belong to at most one "base set". Adding a system to more than one will result in an error. When possible we fail immediately during system-config-time with a nice file + line number. For the more nested graph-ey cases, this will fail at the final schedule build.

**Base sets cannot belong to other sets**: this is where the word "base" comes from

Systems and Sets can only be added to base sets using `in_base_set`. Calling `in_set` with a base set will fail. As will calling `in_base_set` with a normal set.

```rust

app.add_system(foo.in_base_set(MyBaseSet::A))

// X must be a normal set ... base sets cannot be added to base sets

.configure_set(X.in_base_set(MyBaseSet::A))

```

Base sets can still be configured like normal sets:

```rust

app.add_system(MyBaseSet::B.after(MyBaseSet::Ap))

```

The primary use case for base sets is enabling a "default base set":

```rust

schedule.set_default_base_set(CoreSet::Update)

// this will belong to CoreSet::Update by default

.add_system(foo)

// this will override the default base set with PostUpdate

.add_system(bar.in_base_set(CoreSet::PostUpdate))

```

This allows us to build apis that work by default in the standard Bevy style. This is a rough analog to the "default stage" model, but it use the new "stageless sets" model instead, with all of the ordering flexibility (including exclusive systems) that it provides.

---

## Changelog

- Added "base sets" and ported CoreSet to use them.

## Migration Guide

TODO

Huge thanks to @maniwani, @devil-ira, @hymm, @cart, @superdump and @jakobhellermann for the help with this PR.

# Objective

- Followup #6587.

- Minimal integration for the Stageless Scheduling RFC: https://github.com/bevyengine/rfcs/pull/45

## Solution

- [x] Remove old scheduling module

- [x] Migrate new methods to no longer use extension methods

- [x] Fix compiler errors

- [x] Fix benchmarks

- [x] Fix examples

- [x] Fix docs

- [x] Fix tests

## Changelog

### Added

- a large number of methods on `App` to work with schedules ergonomically

- the `CoreSchedule` enum

- `App::add_extract_system` via the `RenderingAppExtension` trait extension method

- the private `prepare_view_uniforms` system now has a public system set for scheduling purposes, called `ViewSet::PrepareUniforms`

### Removed

- stages, and all code that mentions stages

- states have been dramatically simplified, and no longer use a stack

- `RunCriteriaLabel`

- `AsSystemLabel` trait

- `on_hierarchy_reports_enabled` run criteria (now just uses an ad hoc resource checking run condition)

- systems in `RenderSet/Stage::Extract` no longer warn when they do not read data from the main world

- `RunCriteriaLabel`

- `transform_propagate_system_set`: this was a nonstandard pattern that didn't actually provide enough control. The systems are already `pub`: the docs have been updated to ensure that the third-party usage is clear.

### Changed

- `System::default_labels` is now `System::default_system_sets`.

- `App::add_default_labels` is now `App::add_default_sets`

- `CoreStage` and `StartupStage` enums are now `CoreSet` and `StartupSet`

- `App::add_system_set` was renamed to `App::add_systems`

- The `StartupSchedule` label is now defined as part of the `CoreSchedules` enum

- `.label(SystemLabel)` is now referred to as `.in_set(SystemSet)`

- `SystemLabel` trait was replaced by `SystemSet`

- `SystemTypeIdLabel<T>` was replaced by `SystemSetType<T>`

- The `ReportHierarchyIssue` resource now has a public constructor (`new`), and implements `PartialEq`

- Fixed time steps now use a schedule (`CoreSchedule::FixedTimeStep`) rather than a run criteria.

- Adding rendering extraction systems now panics rather than silently failing if no subapp with the `RenderApp` label is found.

- the `calculate_bounds` system, with the `CalculateBounds` label, is now in `CoreSet::Update`, rather than in `CoreSet::PostUpdate` before commands are applied.

- `SceneSpawnerSystem` now runs under `CoreSet::Update`, rather than `CoreStage::PreUpdate.at_end()`.

- `bevy_pbr::add_clusters` is no longer an exclusive system

- the top level `bevy_ecs::schedule` module was replaced with `bevy_ecs::scheduling`

- `tick_global_task_pools_on_main_thread` is no longer run as an exclusive system. Instead, it has been replaced by `tick_global_task_pools`, which uses a `NonSend` resource to force running on the main thread.

## Migration Guide

- Calls to `.label(MyLabel)` should be replaced with `.in_set(MySet)`

- Stages have been removed. Replace these with system sets, and then add command flushes using the `apply_system_buffers` exclusive system where needed.

- The `CoreStage`, `StartupStage, `RenderStage` and `AssetStage` enums have been replaced with `CoreSet`, `StartupSet, `RenderSet` and `AssetSet`. The same scheduling guarantees have been preserved.

- Systems are no longer added to `CoreSet::Update` by default. Add systems manually if this behavior is needed, although you should consider adding your game logic systems to `CoreSchedule::FixedTimestep` instead for more reliable framerate-independent behavior.

- Similarly, startup systems are no longer part of `StartupSet::Startup` by default. In most cases, this won't matter to you.

- For example, `add_system_to_stage(CoreStage::PostUpdate, my_system)` should be replaced with

- `add_system(my_system.in_set(CoreSet::PostUpdate)`

- When testing systems or otherwise running them in a headless fashion, simply construct and run a schedule using `Schedule::new()` and `World::run_schedule` rather than constructing stages

- Run criteria have been renamed to run conditions. These can now be combined with each other and with states.

- Looping run criteria and state stacks have been removed. Use an exclusive system that runs a schedule if you need this level of control over system control flow.

- For app-level control flow over which schedules get run when (such as for rollback networking), create your own schedule and insert it under the `CoreSchedule::Outer` label.

- Fixed timesteps are now evaluated in a schedule, rather than controlled via run criteria. The `run_fixed_timestep` system runs this schedule between `CoreSet::First` and `CoreSet::PreUpdate` by default.

- Command flush points introduced by `AssetStage` have been removed. If you were relying on these, add them back manually.

- Adding extract systems is now typically done directly on the main app. Make sure the `RenderingAppExtension` trait is in scope, then call `app.add_extract_system(my_system)`.

- the `calculate_bounds` system, with the `CalculateBounds` label, is now in `CoreSet::Update`, rather than in `CoreSet::PostUpdate` before commands are applied. You may need to order your movement systems to occur before this system in order to avoid system order ambiguities in culling behavior.

- the `RenderLabel` `AppLabel` was renamed to `RenderApp` for clarity

- `App::add_state` now takes 0 arguments: the starting state is set based on the `Default` impl.

- Instead of creating `SystemSet` containers for systems that run in stages, simply use `.on_enter::<State::Variant>()` or its `on_exit` or `on_update` siblings.

- `SystemLabel` derives should be replaced with `SystemSet`. You will also need to add the `Debug`, `PartialEq`, `Eq`, and `Hash` traits to satisfy the new trait bounds.

- `with_run_criteria` has been renamed to `run_if`. Run criteria have been renamed to run conditions for clarity, and should now simply return a bool.

- States have been dramatically simplified: there is no longer a "state stack". To queue a transition to the next state, call `NextState::set`

## TODO

- [x] remove dead methods on App and World

- [x] add `App::add_system_to_schedule` and `App::add_systems_to_schedule`

- [x] avoid adding the default system set at inappropriate times

- [x] remove any accidental cycles in the default plugins schedule

- [x] migrate benchmarks

- [x] expose explicit labels for the built-in command flush points

- [x] migrate engine code

- [x] remove all mentions of stages from the docs

- [x] verify docs for States

- [x] fix uses of exclusive systems that use .end / .at_start / .before_commands

- [x] migrate RenderStage and AssetStage

- [x] migrate examples

- [x] ensure that transform propagation is exported in a sufficiently public way (the systems are already pub)

- [x] ensure that on_enter schedules are run at least once before the main app

- [x] re-enable opt-in to execution order ambiguities

- [x] revert change to `update_bounds` to ensure it runs in `PostUpdate`

- [x] test all examples

- [x] unbreak directional lights

- [x] unbreak shadows (see 3d_scene, 3d_shape, lighting, transparaency_3d examples)

- [x] game menu example shows loading screen and menu simultaneously

- [x] display settings menu is a blank screen

- [x] `without_winit` example panics

- [x] ensure all tests pass

- [x] SubApp doc test fails

- [x] runs_spawn_local tasks fails

- [x] [Fix panic_when_hierachy_cycle test hanging](https://github.com/alice-i-cecile/bevy/pull/120)

## Points of Difficulty and Controversy

**Reviewers, please give feedback on these and look closely**

1. Default sets, from the RFC, have been removed. These added a tremendous amount of implicit complexity and result in hard to debug scheduling errors. They're going to be tackled in the form of "base sets" by @cart in a followup.

2. The outer schedule controls which schedule is run when `App::update` is called.

3. I implemented `Label for `Box<dyn Label>` for our label types. This enables us to store schedule labels in concrete form, and then later run them. I ran into the same set of problems when working with one-shot systems. We've previously investigated this pattern in depth, and it does not appear to lead to extra indirection with nested boxes.

4. `SubApp::update` simply runs the default schedule once. This sucks, but this whole API is incomplete and this was the minimal changeset.

5. `time_system` and `tick_global_task_pools_on_main_thread` no longer use exclusive systems to attempt to force scheduling order

6. Implemetnation strategy for fixed timesteps

7. `AssetStage` was migrated to `AssetSet` without reintroducing command flush points. These did not appear to be used, and it's nice to remove these bottlenecks.

8. Migration of `bevy_render/lib.rs` and pipelined rendering. The logic here is unusually tricky, as we have complex scheduling requirements.

## Future Work (ideally before 0.10)

- Rename schedule_v3 module to schedule or scheduling

- Add a derive macro to states, and likely a `EnumIter` trait of some form

- Figure out what exactly to do with the "systems added should basically work by default" problem

- Improve ergonomics for working with fixed timesteps and states

- Polish FixedTime API to match Time

- Rebase and merge #7415

- Resolve all internal ambiguities (blocked on better tools, especially #7442)

- Add "base sets" to replace the removed default sets.

# Objective

Update Bevy to wgpu 0.15.

## Changelog

- Update to wgpu 0.15, wgpu-hal 0.15.1, and naga 0.11

- Users can now use the [DirectX Shader Compiler](https://github.com/microsoft/DirectXShaderCompiler) (DXC) on Windows with DX12 for faster shader compilation and ShaderModel 6.0+ support (requires `dxcompiler.dll` and `dxil.dll`, which are included in DXC downloads from [here](https://github.com/microsoft/DirectXShaderCompiler/releases/latest))

## Migration Guide

### WGSL Top-Level `let` is now `const`

All top level constants are now declared with `const`, catching up with the wgsl spec.

`let` is no longer allowed at the global scope, only within functions.

```diff

-let SOME_CONSTANT = 12.0;

+const SOME_CONSTANT = 12.0;

```

#### `TextureDescriptor` and `SurfaceConfiguration` now requires a `view_formats` field

The new `view_formats` field in the `TextureDescriptor` is used to specify a list of formats the texture can be re-interpreted to in a texture view. Currently only changing srgb-ness is allowed (ex. `Rgba8Unorm` <=> `Rgba8UnormSrgb`). You should set `view_formats` to `&[]` (empty) unless you have a specific reason not to.

#### The DirectX Shader Compiler (DXC) is now supported on DX12

DXC is now the default shader compiler when using the DX12 backend. DXC is Microsoft's replacement for their legacy FXC compiler, and is faster, less buggy, and allows for modern shader features to be used (ShaderModel 6.0+). DXC requires `dxcompiler.dll` and `dxil.dll` to be available, otherwise it will log a warning and fall back to FXC.

You can get `dxcompiler.dll` and `dxil.dll` by downloading the latest release from [Microsoft's DirectXShaderCompiler github repo](https://github.com/microsoft/DirectXShaderCompiler/releases/latest) and copying them into your project's root directory. These must be included when you distribute your Bevy game/app/etc if you plan on supporting the DX12 backend and are using DXC.

`WgpuSettings` now has a `dx12_shader_compiler` field which can be used to choose between either FXC or DXC (if you pass None for the paths for DXC, it will check for the .dlls in the working directory).

# Objective

Prevent things from breaking tomorrow when rust 1.67 is released.

## Solution

Fix a few `uninlined_format_args` lints in recently introduced code.

# Objective

Fixes#6952

## Solution

- Request WGPU capabilities `SAMPLED_TEXTURE_AND_STORAGE_BUFFER_ARRAY_NON_UNIFORM_INDEXING`, `SAMPLER_NON_UNIFORM_INDEXING` and `UNIFORM_BUFFER_AND_STORAGE_TEXTURE_ARRAY_NON_UNIFORM_INDEXING` when corresponding features are enabled.

- Add an example (`shaders/texture_binding_array`) illustrating (and testing) the use of non-uniform indexed textures and samplers.

## Changelog

- Added new capabilities for shader validation.

- Added example `shaders/texture_binding_array`.

# Objective

`RenderContext`, the core abstraction for running the render graph, currently only supports recording one `CommandBuffer` across the entire render graph. This means the entire buffer must be recorded sequentially, usually via the render graph itself. This prevents parallelization and forces users to only encode their commands in the render graph.

## Solution

Allow `RenderContext` to store a `Vec<CommandBuffer>` that it progressively appends to. By default, the context will not have a command encoder, but will create one as soon as either `begin_tracked_render_pass` or the `command_encoder` accesor is first called. `RenderContext::add_command_buffer` allows users to interrupt the current command encoder, flush it to the vec, append a user-provided `CommandBuffer` and reset the command encoder to start a new buffer. Users or the render graph will call `RenderContext::finish` to retrieve the series of buffers for submitting to the queue.

This allows users to encode their own `CommandBuffer`s outside of the render graph, potentially in different threads, and store them in components or resources.

Ideally, in the future, the core pipeline passes can run in `RenderStage::Render` systems and end up saving the completed command buffers to either `Commands` or a field in `RenderPhase`.

## Alternatives

The alternative is to use to use wgpu's `RenderBundle`s, which can achieve similar results; however it's not universally available (no OpenGL, WebGL, and DX11).

---

## Changelog

Added: `RenderContext::new`

Added: `RenderContext::add_command_buffer`

Added: `RenderContext::finish`

Changed: `RenderContext::render_device` is now private. Use the accessor `RenderContext::render_device()` instead.

Changed: `RenderContext::command_encoder` is now private. Use the accessor `RenderContext::command_encoder()` instead.

Changed: `RenderContext` now supports adding external `CommandBuffer`s for inclusion into the render graphs. These buffers can be encoded outside of the render graph (i.e. in a system).

## Migration Guide

`RenderContext`'s fields are now private. Use the accessors on `RenderContext` instead, and construct it with `RenderContext::new`.

# Objective

- The changes to the MeshPipeline done for the prepass broke the shader_instancing example. The issue is that the view_layout changes based on if MSAA is enabled or not, but the example hardcoded the view_layout.

## Solution

- Don't overwrite the bind_group_layout of the descriptor since the MeshPipeline already takes care of this in the specialize function.

Closes https://github.com/bevyengine/bevy/issues/7285

# Objective

Fixes#6931

Continues #6954 by squashing `Msaa` to a flat enum

Helps out #7215

# Solution

```

pub enum Msaa {

Off = 1,

#[default]

Sample4 = 4,

}

```

# Changelog

- Modified

- `Msaa` is now enum

- Defaults to 4 samples

- Uses `.samples()` method to get the sample number as `u32`

# Migration Guide

```

let multi = Msaa { samples: 4 }

// is now

let multi = Msaa::Sample4

multi.samples

// is now

multi.samples()

```

Co-authored-by: Sjael <jakeobrien44@gmail.com>

# Objective

- Add a configurable prepass

- A depth prepass is useful for various shader effects and to reduce overdraw. It can be expansive depending on the scene so it's important to be able to disable it if you don't need any effects that uses it or don't suffer from excessive overdraw.

- The goal is to eventually use it for things like TAA, Ambient Occlusion, SSR and various other techniques that can benefit from having a prepass.

## Solution

The prepass node is inserted before the main pass. It runs for each `Camera3d` with a prepass component (`DepthPrepass`, `NormalPrepass`). The presence of one of those components is used to determine which textures are generated in the prepass. When any prepass is enabled, the depth buffer generated will be used by the main pass to reduce overdraw.

The prepass runs for each `Material` created with the `MaterialPlugin::prepass_enabled` option set to `true`. You can overload the shader used by the prepass by using `Material::prepass_vertex_shader()` and/or `Material::prepass_fragment_shader()`. It will also use the `Material::specialize()` for more advanced use cases. It is enabled by default on all materials.

The prepass works on opaque materials and materials using an alpha mask. Transparent materials are ignored.

The `StandardMaterial` overloads the prepass fragment shader to support alpha mask and normal maps.

---

## Changelog

- Add a new `PrepassNode` that runs before the main pass

- Add a `PrepassPlugin` to extract/prepare/queue the necessary data

- Add a `DepthPrepass` and `NormalPrepass` component to control which textures will be created by the prepass and available in later passes.

- Add a new `prepass_enabled` flag to the `MaterialPlugin` that will control if a material uses the prepass or not.

- Add a new `prepass_enabled` flag to the `PbrPlugin` to control if the StandardMaterial uses the prepass. Currently defaults to false.

- Add `Material::prepass_vertex_shader()` and `Material::prepass_fragment_shader()` to control the prepass from the `Material`

## Notes

In bevy's sample 3d scene, the performance is actually worse when enabling the prepass, but on more complex scenes the performance is generally better. I would like more testing on this, but @DGriffin91 has reported a very noticeable improvements in some scenes.

The prepass is also used by @JMS55 for TAA and GTAO

discord thread: <https://discord.com/channels/691052431525675048/1011624228627419187>

This PR was built on top of the work of multiple people

Co-Authored-By: @superdump

Co-Authored-By: @robtfm

Co-Authored-By: @JMS55

Co-authored-by: Charles <IceSentry@users.noreply.github.com>

Co-authored-by: JMS55 <47158642+JMS55@users.noreply.github.com>

# Objective

Fix https://github.com/bevyengine/bevy/issues/4530

- Make it easier to open/close/modify windows by setting them up as `Entity`s with a `Window` component.

- Make multiple windows very simple to set up. (just add a `Window` component to an entity and it should open)

## Solution

- Move all properties of window descriptor to ~components~ a component.

- Replace `WindowId` with `Entity`.

- ~Use change detection for components to update backend rather than events/commands. (The `CursorMoved`/`WindowResized`/... events are kept for user convenience.~

Check each field individually to see what we need to update, events are still kept for user convenience.

---

## Changelog

- `WindowDescriptor` renamed to `Window`.

- Width/height consolidated into a `WindowResolution` component.

- Requesting maximization/minimization is done on the [`Window::state`] field.

- `WindowId` is now `Entity`.

## Migration Guide

- Replace `WindowDescriptor` with `Window`.

- Change `width` and `height` fields in a `WindowResolution`, either by doing

```rust

WindowResolution::new(width, height) // Explicitly

// or using From<_> for tuples for convenience

(1920., 1080.).into()

```

- Replace any `WindowCommand` code to just modify the `Window`'s fields directly and creating/closing windows is now by spawning/despawning an entity with a `Window` component like so:

```rust

let window = commands.spawn(Window { ... }).id(); // open window

commands.entity(window).despawn(); // close window

```

## Unresolved

- ~How do we tell when a window is minimized by a user?~

~Currently using the `Resize(0, 0)` as an indicator of minimization.~

No longer attempting to tell given how finnicky this was across platforms, now the user can only request that a window be maximized/minimized.

## Future work

- Move `exit_on_close` functionality out from windowing and into app(?)

- https://github.com/bevyengine/bevy/issues/5621

- https://github.com/bevyengine/bevy/issues/7099

- https://github.com/bevyengine/bevy/issues/7098

Co-authored-by: Carter Anderson <mcanders1@gmail.com>

# Objective

- Allow rendering queue systems to use a `Res<PipelineCache>` even for queueing up new rendering pipelines. This is part of unblocking parallel execution queue systems.

## Solution

- Make `PipelineCache` internally mutable w.r.t to queueing new pipelines. Pipelines are no longer immediately updated into the cache state, but rather queued into a Vec. The Vec of pending new pipelines is then later processed at the same time we actually create the queued pipelines on the GPU device.

---

## Changelog

`PipelineCache` no longer requires mutable access in order to queue render / compute pipelines.

## Migration Guide

* Most usages of `resource_mut::<PipelineCache>` and `ResMut<PipelineCache>` can be changed to `resource::<PipelineCache>` and `Res<PipelineCache>` as long as they don't use any methods requiring mutability - the only public method requiring it is `process_queue`.

# Objective

Speed up the render phase of rendering. Simplify the trait structure for render commands.

## Solution

- Merge `EntityPhaseItem` into `PhaseItem` (`EntityPhaseItem::entity` -> `PhaseItem::entity`)

- Merge `EntityRenderCommand` into `RenderCommand`.

- Add two associated types to `RenderCommand`: `RenderCommand::ViewWorldQuery` and `RenderCommand::WorldQuery`.

- Use the new associated types to construct two `QueryStates`s for `RenderCommandState`.

- Hoist any `SQuery<T>` fetches in `EntityRenderCommand`s into the aformentioned two queries. Batch fetch them all at once.

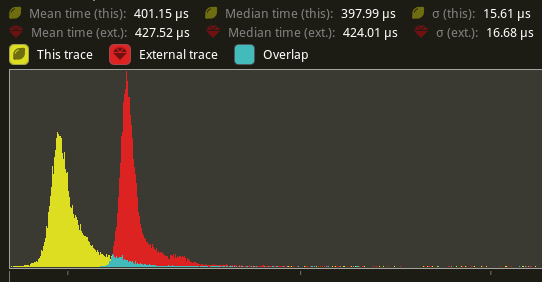

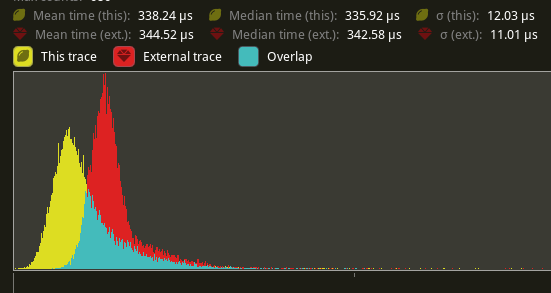

## Performance

`main_opaque_pass_3d` is slightly faster on `many_foxes` (427.52us -> 401.15us)

The shadow pass node is also slightly faster (344.52 -> 338.24us)

## Future Work

- Can we hoist the view level queries out of the core loop?

---

## Changelog

Added: `PhaseItem::entity`

Added: `RenderCommand::ViewWorldQuery` associated type.

Added: `RenderCommand::ItemorldQuery` associated type.

Added: `Draw<T>::prepare` optional trait function.

Removed: `EntityPhaseItem` trait

## Migration Guide

TODO

# Objective

The documentation for camera priority is very confusing at the moment, it requires a bit of "double negative" kind of thinking.

# Solution

Flipping the wording on the documentation to reflect more common usecases like having an overlay camera and also renaming it to "order", since priority implies that it will override the other camera rather than have both run.

Consolidation of all the feedback about #6271 as well as the addition of an "unconditionally visible" mode.

# Objective

The current implementation of the `Visibility` struct simply wraps a boolean.. which seems like an odd pattern when rust has such nice enums that allow for more expression using pattern-matching.

Additionally as it stands Bevy only has two settings for visibility of an entity:

- "unconditionally hidden" `Visibility { is_visible: false }`,

- "inherit visibility from parent" `Visibility { is_visible: true }`

where a root level entity set to "inherit" is visible.

Note that given the behaviour, the current naming of the inner field is a little deceptive or unclear.

Using an enum for `Visibility` opens the door for adding an extra behaviour mode. This PR adds a new "unconditionally visible" mode, which causes an entity to be visible even if its Parent entity is hidden. There should not really be any performance cost to the addition of this new mode.

--

The recently added `toggle` method is removed in this PR, as its semantics could be confusing with 3 variants.

## Solution

Change the Visibility component into

```rust

enum Visibility {

Hidden, // unconditionally hidden

Visible, // unconditionally visible

Inherited, // inherit visibility from parent

}

```

---

## Changelog

### Changed

`Visibility` is now an enum

## Migration Guide

- evaluation of the `visibility.is_visible` field should now check for `visibility == Visibility::Inherited`.

- setting the `visibility.is_visible` field should now directly set the value: `*visibility = Visibility::Inherited`.

- usage of `Visibility::VISIBLE` or `Visibility::INVISIBLE` should now use `Visibility::Inherited` or `Visibility::Hidden` respectively.

- `ComputedVisibility::INVISIBLE` and `SpatialBundle::VISIBLE_IDENTITY` have been renamed to `ComputedVisibility::HIDDEN` and `SpatialBundle::INHERITED_IDENTITY` respectively.

Co-authored-by: Carter Anderson <mcanders1@gmail.com>

# Objective

- Every usage of `DrawFunctionsInternals::get_id()` was followed by a `.unwrap()`. which just adds boilerplate.

## Solution

- Introduce a fallible version of `DrawFunctionsInternals::get_id()` and use it where possible.

- I also took the opportunity to improve the error message a little in the case where it fails.

---

## Changelog

- Added `DrawFunctionsInternals::id()`

# Objective

- shaders defs can now have a `bool` or `int` value

- `#if SHADER_DEF <operator> 3`

- ok if `SHADER_DEF` is defined, has the correct type and pass the comparison

- `==`, `!=`, `>=`, `>`, `<`, `<=` supported

- `#SHADER_DEF` or `#{SHADER_DEF}`

- will be replaced by the value in the shader code

---

## Migration Guide

- replace `shader_defs.push(String::from("NAME"));` by `shader_defs.push("NAME".into());`

- if you used shader def `NO_STORAGE_BUFFERS_SUPPORT`, check how `AVAILABLE_STORAGE_BUFFER_BINDINGS` is now used in Bevy default shaders

# Objective

`add_node_edge` and `add_slot_edge` are fallible methods, but are always used with `.unwrap()`.

`input_node` is often unwrapped as well.

This points to having an infallible behaviour as default, with an alternative fallible variant if needed.

Improves readability and ergonomics.

## Solution

- Change `add_node_edge` and `add_slot_edge` to panic on error.

- Change `input_node` to panic on `None`.

- Add `try_add_node_edge` and `try_add_slot_edge` in case fallible methods are needed.

- Add `get_input_node` to still be able to get an `Option`.

---

## Changelog

### Added

- `try_add_node_edge`

- `try_add_slot_edge`

- `get_input_node`

### Changed

- `add_node_edge` is now infallible (panics on error)

- `add_slot_edge` is now infallible (panics on error)

- `input_node` now panics on `None`

## Migration Guide

Remove `.unwrap()` from `add_node_edge` and `add_slot_edge`.

For cases where the error was handled, use `try_add_node_edge` and `try_add_slot_edge` instead.

Remove `.unwrap()` from `input_node`.

For cases where the option was handled, use `get_input_node` instead.

Co-authored-by: Torstein Grindvik <52322338+torsteingrindvik@users.noreply.github.com>

# Objective

Allow more use cases where the user may benefit from both `ExtractComponentPlugin` _and_ `UniformComponentPlugin`.

## Solution

Add an associated type to `ExtractComponent` in order to allow specifying the output component (or bundle).

Make `extract_component` return an `Option<_>` such that components can be extracted only when needed.

What problem does this solve?

`ExtractComponentPlugin` allows extracting components, but currently the output type is the same as the input.

This means that use cases such as having a settings struct which turns into a uniform is awkward.

For example we might have:

```rust

struct MyStruct {

enabled: bool,

val: f32

}

struct MyStructUniform {

val: f32

}

```

With the new approach, we can extract `MyStruct` only when it is enabled, and turn it into its related uniform.

This chains well with `UniformComponentPlugin`.

The user may then:

```rust

app.add_plugin(ExtractComponentPlugin::<MyStruct>::default());

app.add_plugin(UniformComponentPlugin::<MyStructUniform>::default());

```

This then saves the user a fair amount of boilerplate.

## Changelog

### Changed

- `ExtractComponent` can specify output type, and outputting is optional.

Co-authored-by: Torstein Grindvik <52322338+torsteingrindvik@users.noreply.github.com>

# Objective

- Using the instancing example as reference, I found it was breaking when enabling HDR on the camera. I found that this was because, unlike in internal code, this was not updating the specialization key with `view.hdr`.

## Solution

- Add the missing HDR bit.

# Objective

Replace `WorldQueryGats` trait with actual gats

## Solution

Replace `WorldQueryGats` trait with actual gats

---

## Changelog

- Replaced `WorldQueryGats` trait with actual gats

## Migration Guide

- Replace usage of `WorldQueryGats` assoc types with the actual gats on `WorldQuery` trait

# Objective

Fixes#5884#2879

Alternative to #2988#5885#2886

"Immutable" Plugin settings are currently represented as normal ECS resources, which are read as part of plugin init. This presents a number of problems:

1. If a user inserts the plugin settings resource after the plugin is initialized, it will be silently ignored (and use the defaults instead)

2. Users can modify the plugin settings resource after the plugin has been initialized. This creates a false sense of control over settings that can no longer be changed.

(1) and (2) are especially problematic and confusing for the `WindowDescriptor` resource, but this is a general problem.

## Solution

Immutable Plugin settings now live on each Plugin struct (ex: `WindowPlugin`). PluginGroups have been reworked to support overriding plugin values. This also removes the need for the `add_plugins_with` api, as the `add_plugins` api can use the builder pattern directly. Settings that can be used at runtime continue to be represented as ECS resources.

Plugins are now configured like this:

```rust

app.add_plugin(AssetPlugin {

watch_for_changes: true,

..default()

})

```

PluginGroups are now configured like this:

```rust

app.add_plugins(DefaultPlugins

.set(AssetPlugin {

watch_for_changes: true,

..default()

})

)

```

This is an alternative to #2988, which is similar. But I personally prefer this solution for a couple of reasons:

* ~~#2988 doesn't solve (1)~~ #2988 does solve (1) and will panic in that case. I was wrong!

* This PR directly ties plugin settings to Plugin types in a 1:1 relationship, rather than a loose "setup resource" <-> plugin coupling (where the setup resource is consumed by the first plugin that uses it).

* I'm not a huge fan of overloading the ECS resource concept and implementation for something that has very different use cases and constraints.

## Changelog

- PluginGroups can now be configured directly using the builder pattern. Individual plugin values can be overridden by using `plugin_group.set(SomePlugin {})`, which enables overriding default plugin values.

- `WindowDescriptor` plugin settings have been moved to `WindowPlugin` and `AssetServerSettings` have been moved to `AssetPlugin`

- `app.add_plugins_with` has been replaced by using `add_plugins` with the builder pattern.

## Migration Guide

The `WindowDescriptor` settings have been moved from a resource to `WindowPlugin::window`:

```rust

// Old (Bevy 0.8)

app

.insert_resource(WindowDescriptor {

width: 400.0,

..default()

})

.add_plugins(DefaultPlugins)

// New (Bevy 0.9)

app.add_plugins(DefaultPlugins.set(WindowPlugin {

window: WindowDescriptor {

width: 400.0,

..default()

},

..default()

}))

```

The `AssetServerSettings` resource has been removed in favor of direct `AssetPlugin` configuration:

```rust

// Old (Bevy 0.8)

app

.insert_resource(AssetServerSettings {

watch_for_changes: true,

..default()

})

.add_plugins(DefaultPlugins)

// New (Bevy 0.9)

app.add_plugins(DefaultPlugins.set(AssetPlugin {

watch_for_changes: true,

..default()

}))

```

`add_plugins_with` has been replaced by `add_plugins` in combination with the builder pattern:

```rust

// Old (Bevy 0.8)

app.add_plugins_with(DefaultPlugins, |group| group.disable::<AssetPlugin>());

// New (Bevy 0.9)

app.add_plugins(DefaultPlugins.build().disable::<AssetPlugin>());

```

# Objective

Now that we can consolidate Bundles and Components under a single insert (thanks to #2975 and #6039), almost 100% of world spawns now look like `world.spawn().insert((Some, Tuple, Here))`. Spawning an entity without any components is an extremely uncommon pattern, so it makes sense to give spawn the "first class" ergonomic api. This consolidated api should be made consistent across all spawn apis (such as World and Commands).

## Solution

All `spawn` apis (`World::spawn`, `Commands:;spawn`, `ChildBuilder::spawn`, and `WorldChildBuilder::spawn`) now accept a bundle as input:

```rust

// before:

commands

.spawn()

.insert((A, B, C));

world

.spawn()

.insert((A, B, C);

// after

commands.spawn((A, B, C));

world.spawn((A, B, C));

```

All existing instances of `spawn_bundle` have been deprecated in favor of the new `spawn` api. A new `spawn_empty` has been added, replacing the old `spawn` api.

By allowing `world.spawn(some_bundle)` to replace `world.spawn().insert(some_bundle)`, this opened the door to removing the initial entity allocation in the "empty" archetype / table done in `spawn()` (and subsequent move to the actual archetype in `.insert(some_bundle)`).

This improves spawn performance by over 10%:

To take this measurement, I added a new `world_spawn` benchmark.

Unfortunately, optimizing `Commands::spawn` is slightly less trivial, as Commands expose the Entity id of spawned entities prior to actually spawning. Doing the optimization would (naively) require assurances that the `spawn(some_bundle)` command is applied before all other commands involving the entity (which would not necessarily be true, if memory serves). Optimizing `Commands::spawn` this way does feel possible, but it will require careful thought (and maybe some additional checks), which deserves its own PR. For now, it has the same performance characteristics of the current `Commands::spawn_bundle` on main.

**Note that 99% of this PR is simple renames and refactors. The only code that needs careful scrutiny is the new `World::spawn()` impl, which is relatively straightforward, but it has some new unsafe code (which re-uses battle tested BundlerSpawner code path).**

---

## Changelog

- All `spawn` apis (`World::spawn`, `Commands:;spawn`, `ChildBuilder::spawn`, and `WorldChildBuilder::spawn`) now accept a bundle as input

- All instances of `spawn_bundle` have been deprecated in favor of the new `spawn` api

- World and Commands now have `spawn_empty()`, which is equivalent to the old `spawn()` behavior.

## Migration Guide

```rust

// Old (0.8):

commands

.spawn()

.insert_bundle((A, B, C));

// New (0.9)

commands.spawn((A, B, C));

// Old (0.8):

commands.spawn_bundle((A, B, C));

// New (0.9)

commands.spawn((A, B, C));

// Old (0.8):

let entity = commands.spawn().id();

// New (0.9)

let entity = commands.spawn_empty().id();

// Old (0.8)

let entity = world.spawn().id();

// New (0.9)

let entity = world.spawn_empty();

```

# Objective

Take advantage of the "impl Bundle for Component" changes in #2975 / add the follow up changes discussed there.

## Solution

- Change `insert` and `remove` to accept a Bundle instead of a Component (for both Commands and World)

- Deprecate `insert_bundle`, `remove_bundle`, and `remove_bundle_intersection`

- Add `remove_intersection`

---

## Changelog

- Change `insert` and `remove` now accept a Bundle instead of a Component (for both Commands and World)

- `insert_bundle` and `remove_bundle` are deprecated

## Migration Guide

Replace `insert_bundle` with `insert`:

```rust

// Old (0.8)

commands.spawn().insert_bundle(SomeBundle::default());

// New (0.9)

commands.spawn().insert(SomeBundle::default());

```

Replace `remove_bundle` with `remove`:

```rust

// Old (0.8)

commands.entity(some_entity).remove_bundle::<SomeBundle>();

// New (0.9)

commands.entity(some_entity).remove::<SomeBundle>();

```

Replace `remove_bundle_intersection` with `remove_intersection`:

```rust

// Old (0.8)

world.entity_mut(some_entity).remove_bundle_intersection::<SomeBundle>();

// New (0.9)

world.entity_mut(some_entity).remove_intersection::<SomeBundle>();

```

Consider consolidating as many operations as possible to improve ergonomics and cut down on archetype moves:

```rust

// Old (0.8)

commands.spawn()

.insert_bundle(SomeBundle::default())

.insert(SomeComponent);

// New (0.9) - Option 1

commands.spawn().insert((

SomeBundle::default(),

SomeComponent,

))

// New (0.9) - Option 2

commands.spawn_bundle((

SomeBundle::default(),

SomeComponent,

))

```

## Next Steps

Consider changing `spawn` to accept a bundle and deprecate `spawn_bundle`.

# Objective

`AssetServer::watch_for_changes()` is racy and redundant with `AssetServerSettings`.

Closes#5964.

## Changelog

* Remove `AssetServer::watch_for_changes()`

* Add `AssetServerSettings` to the prelude.

* Minor cleanup.

## Migration Guide

`AssetServer::watch_for_changes()` was removed.

Instead, use the `AssetServerSettings` resource.

```rust

app // AssetServerSettings must be inserted before adding the AssetPlugin or DefaultPlugins.

.insert_resource(AssetServerSettings {

watch_for_changes: true,

..default()

})

```

Co-authored-by: devil-ira <justthecooldude@gmail.com>

*This PR description is an edited copy of #5007, written by @alice-i-cecile.*

# Objective

Follow-up to https://github.com/bevyengine/bevy/pull/2254. The `Resource` trait currently has a blanket implementation for all types that meet its bounds.

While ergonomic, this results in several drawbacks:

* it is possible to make confusing, silent mistakes such as inserting a function pointer (Foo) rather than a value (Foo::Bar) as a resource

* it is challenging to discover if a type is intended to be used as a resource

* we cannot later add customization options (see the [RFC](https://github.com/bevyengine/rfcs/blob/main/rfcs/27-derive-component.md) for the equivalent choice for Component).

* dependencies can use the same Rust type as a resource in invisibly conflicting ways

* raw Rust types used as resources cannot preserve privacy appropriately, as anyone able to access that type can read and write to internal values

* we cannot capture a definitive list of possible resources to display to users in an editor

## Notes to reviewers

* Review this commit-by-commit; there's effectively no back-tracking and there's a lot of churn in some of these commits.

*ira: My commits are not as well organized :')*

* I've relaxed the bound on Local to Send + Sync + 'static: I don't think these concerns apply there, so this can keep things simple. Storing e.g. a u32 in a Local is fine, because there's a variable name attached explaining what it does.

* I think this is a bad place for the Resource trait to live, but I've left it in place to make reviewing easier. IMO that's best tackled with https://github.com/bevyengine/bevy/issues/4981.

## Changelog

`Resource` is no longer automatically implemented for all matching types. Instead, use the new `#[derive(Resource)]` macro.

## Migration Guide

Add `#[derive(Resource)]` to all types you are using as a resource.

If you are using a third party type as a resource, wrap it in a tuple struct to bypass orphan rules. Consider deriving `Deref` and `DerefMut` to improve ergonomics.

`ClearColor` no longer implements `Component`. Using `ClearColor` as a component in 0.8 did nothing.

Use the `ClearColorConfig` in the `Camera3d` and `Camera2d` components instead.

Co-authored-by: Alice <alice.i.cecile@gmail.com>

Co-authored-by: Alice Cecile <alice.i.cecile@gmail.com>

Co-authored-by: devil-ira <justthecooldude@gmail.com>

Co-authored-by: Carter Anderson <mcanders1@gmail.com>

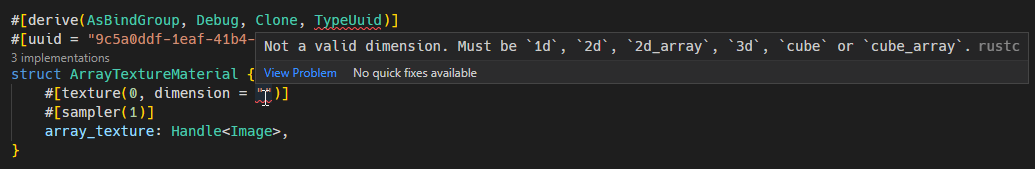

# Objective

- Provide better compile-time errors and diagnostics.

- Add more options to allow more textures types and sampler types.

- Update array_texture example to use upgraded AsBindGroup derive macro.

## Solution

Split out the parsing of the inner struct/field attributes (the inside part of a `#[foo(...)]` attribute) for better clarity

Parse the binding index for all inner attributes, as it is part of all attributes (`#[foo(0, ...)`), then allow each attribute implementer to parse the rest of the attribute metadata as needed. This should make it very trivial to extend/change if needed in the future.

Replaced invocations of `panic!` with the `syn::Error` type, providing fine-grained errors that retains span information. This provides much nicer compile-time errors, and even better IDE errors.

Updated the array_texture example to demonstrate the new changes.

## New AsBindGroup attribute options

### `#[texture(u32, ...)]`

Where `...` is an optional list of arguments.

| Arguments | Values | Default |

|-------------- |---------------------------------------------------------------- | ----------- |

| dimension = "..." | `"1d"`, `"2d"`, `"2d_array"`, `"3d"`, `"cube"`, `"cube_array"` | `"2d"` |

| sample_type = "..." | `"float"`, `"depth"`, `"s_int"` or `"u_int"` | `"float"` |

| filterable = ... | `true`, `false` | `true` |

| multisampled = ... | `true`, `false` | `false` |

| visibility(...) | `all`, `none`, or a list-combination of `vertex`, `fragment`, `compute` | `vertex`, `fragment` |

Example: `#[texture(0, dimension = "2d_array", visibility(vertex, fragment))]`

### `#[sampler(u32, ...)]`

Where `...` is an optional list of arguments.

| Arguments | Values | Default |

|----------- |--------------------------------------------------- | ----------- |

| sampler_type = "..." | `"filtering"`, `"non_filtering"`, `"comparison"`. | `"filtering"` |

| visibility(...) | `all`, `none`, or a list-combination of `vertex`, `fragment`, `compute` | `vertex`, `fragment` |

Example: `#[sampler(0, sampler_type = "filtering", visibility(vertex, fragment)]`

## Changelog

- Added more options to `#[texture(...)]` and `#[sampler(...)]` attributes, supporting more kinds of materials. See above for details.

- Upgraded IDE and compile-time error messages.

- Updated array_texture example using the new options.

Port changes made to Material in #5053 to Material2d as well.

This is more or less an exact copy of the implementation in bevy_pbr; I

simply pretended the API existed, then copied stuff over until it

started building and the shapes example was working again.

# Objective

The changes in #5053 makes it possible to add custom materials with a lot less boiler plate. However, the implementation isn't shared with Material 2d as it's a kind of fork of the bevy_pbr version. It should be possible to use AsBindGroup on the 2d version as well.

## Solution

This makes the same kind of changes in Material2d in bevy_sprite.

This makes the following work:

```rust

//! Draws a circular purple bevy in the middle of the screen using a custom shader

use bevy::{

prelude::*,

reflect::TypeUuid,

render::render_resource::{AsBindGroup, ShaderRef},

sprite::{Material2d, Material2dPlugin, MaterialMesh2dBundle},

};

fn main() {

App::new()

.add_plugins(DefaultPlugins)

.add_plugin(Material2dPlugin::<CustomMaterial>::default())

.add_startup_system(setup)

.run();

}

/// set up a simple 2D scene

fn setup(

mut commands: Commands,

mut meshes: ResMut<Assets<Mesh>>,

mut materials: ResMut<Assets<CustomMaterial>>,

asset_server: Res<AssetServer>,

) {

commands.spawn_bundle(MaterialMesh2dBundle {

mesh: meshes.add(shape::Circle::new(50.).into()).into(),

material: materials.add(CustomMaterial {

color: Color::PURPLE,

color_texture: Some(asset_server.load("branding/icon.png")),

}),

transform: Transform::from_translation(Vec3::new(-100., 0., 0.)),

..default()

});

commands.spawn_bundle(Camera2dBundle::default());

}

/// The Material2d trait is very configurable, but comes with sensible defaults for all methods.

/// You only need to implement functions for features that need non-default behavior. See the Material api docs for details!

impl Material2d for CustomMaterial {

fn fragment_shader() -> ShaderRef {

"shaders/custom_material.wgsl".into()

}

}

// This is the struct that will be passed to your shader

#[derive(AsBindGroup, TypeUuid, Debug, Clone)]

#[uuid = "f690fdae-d598-45ab-8225-97e2a3f056e0"]

pub struct CustomMaterial {

#[uniform(0)]

color: Color,

#[texture(1)]

#[sampler(2)]

color_texture: Option<Handle<Image>>,

}

```

Remove unnecessary calls to `iter()`/`iter_mut()`.

Mainly updates the use of queries in our code, docs, and examples.

```rust

// From

for _ in list.iter() {

for _ in list.iter_mut() {

// To

for _ in &list {

for _ in &mut list {

```

We already enable the pedantic lint [clippy::explicit_iter_loop](https://rust-lang.github.io/rust-clippy/stable/) inside of Bevy. However, this only warns for a few known types from the standard library.

## Note for reviewers

As you can see the additions and deletions are exactly equal.

Maybe give it a quick skim to check I didn't sneak in a crypto miner, but you don't have to torture yourself by reading every line.

I already experienced enough pain making this PR :)

Co-authored-by: devil-ira <justthecooldude@gmail.com>

# Objective

- Currently, the `Extract` `RenderStage` is executed on the main world, with the render world available as a resource.

- However, when needing access to resources in the render world (e.g. to mutate them), the only way to do so was to get exclusive access to the whole `RenderWorld` resource.

- This meant that effectively only one extract which wrote to resources could run at a time.

- We didn't previously make `Extract`ing writing to the world a non-happy path, even though we want to discourage that.

## Solution

- Move the extract stage to run on the render world.

- Add the main world as a `MainWorld` resource.

- Add an `Extract` `SystemParam` as a convenience to access a (read only) `SystemParam` in the main world during `Extract`.

## Future work

It should be possible to avoid needing to use `get_or_spawn` for the render commands, since now the `Commands`' `Entities` matches up with the world being executed on.

We need to determine how this interacts with https://github.com/bevyengine/bevy/pull/3519

It's theoretically possible to remove the need for the `value` method on `Extract`. However, that requires slightly changing the `SystemParam` interface, which would make it more complicated. That would probably mess up the `SystemState` api too.

## Todo

I still need to add doc comments to `Extract`.

---

## Changelog

### Changed

- The `Extract` `RenderStage` now runs on the render world (instead of the main world as before).

You must use the `Extract` `SystemParam` to access the main world during the extract phase.

Resources on the render world can now be accessed using `ResMut` during extract.

### Removed

- `Commands::spawn_and_forget`. Use `Commands::get_or_spawn(e).insert_bundle(bundle)` instead

## Migration Guide

The `Extract` `RenderStage` now runs on the render world (instead of the main world as before).

You must use the `Extract` `SystemParam` to access the main world during the extract phase. `Extract` takes a single type parameter, which is any system parameter (such as `Res`, `Query` etc.). It will extract this from the main world, and returns the result of this extraction when `value` is called on it.

For example, if previously your extract system looked like:

```rust

fn extract_clouds(mut commands: Commands, clouds: Query<Entity, With<Cloud>>) {

for cloud in clouds.iter() {

commands.get_or_spawn(cloud).insert(Cloud);

}

}

```

the new version would be:

```rust

fn extract_clouds(mut commands: Commands, mut clouds: Extract<Query<Entity, With<Cloud>>>) {

for cloud in clouds.value().iter() {

commands.get_or_spawn(cloud).insert(Cloud);

}

}

```

The diff is:

```diff

--- a/src/clouds.rs

+++ b/src/clouds.rs

@@ -1,5 +1,5 @@

-fn extract_clouds(mut commands: Commands, clouds: Query<Entity, With<Cloud>>) {

- for cloud in clouds.iter() {

+fn extract_clouds(mut commands: Commands, mut clouds: Extract<Query<Entity, With<Cloud>>>) {

+ for cloud in clouds.value().iter() {

commands.get_or_spawn(cloud).insert(Cloud);

}

}

```

You can now also access resources from the render world using the normal system parameters during `Extract`:

```rust

fn extract_assets(mut render_assets: ResMut<MyAssets>, source_assets: Extract<Res<MyAssets>>) {

*render_assets = source_assets.clone();

}

```

Please note that all existing extract systems need to be updated to match this new style; even if they currently compile they will not run as expected. A warning will be emitted on a best-effort basis if this is not met.

Co-authored-by: Carter Anderson <mcanders1@gmail.com>

# Objective

Add texture sampling to the GLSL shader example, as naga does not support the commonly used sampler2d type.

Fixes#5059

## Solution

- Align the shader_material_glsl example behaviour with the shader_material example, as the later includes texture sampling.

- Update the GLSL shader to do texture sampling the way naga supports it, and document the way naga does not support it.

## Changelog

- The shader_material_glsl example has been updated to demonstrate texture sampling using the GLSL shading language.

Co-authored-by: Carter Anderson <mcanders1@gmail.com>

# Objective

Reduce the boilerplate code needed to make draw order sorting work correctly when queuing items through new common functionality. Also fix several instances in the bevy code-base (mostly examples) where this boilerplate appears to be incorrect.

## Solution

- Moved the logic for handling back-to-front vs front-to-back draw ordering into the PhaseItems by inverting the sort key ordering of Opaque3d and AlphaMask3d. The means that all the standard 3d rendering phases measure distance in the same way. Clients of these structs no longer need to know to negate the distance.

- Added a new utility struct, ViewRangefinder3d, which encapsulates the maths needed to calculate a "distance" from an ExtractedView and a mesh's transform matrix.

- Converted all the occurrences of the distance calculations in Bevy and its examples to use ViewRangefinder3d. Several of these occurrences appear to be buggy because they don't invert the view matrix or don't negate the distance where appropriate. This leads me to the view that Bevy should expose a facility to correctly perform this calculation.

## Migration Guide

Code which creates Opaque3d, AlphaMask3d, or Transparent3d phase items _should_ use ViewRangefinder3d to calculate the distance value.

Code which manually calculated the distance for Opaque3d or AlphaMask3d phase items and correctly negated the z value will no longer depth sort correctly. However, incorrect depth sorting for these types will not impact the rendered output as sorting is only a performance optimisation when drawing with depth-testing enabled. Code which manually calculated the distance for Transparent3d phase items will continue to work as before.

# Objective

Users often ask for help with rotations as they struggle with `Quat`s.

`Quat` is rather complex and has a ton of verbose methods.

## Solution

Add rotation helper methods to `Transform`.

Co-authored-by: devil-ira <justthecooldude@gmail.com>