# Objective

Add `GamepadButtonInput` event

Resolves #8988

## Solution

- Add `GamepadButtonInput` type

- Emit `GamepadButtonInput` events whenever `Input<GamepadButton>` is

written to

- Update example

---------

Co-authored-by: François <mockersf@gmail.com>

# Objective

Inconvenient initialization of `UiScale`

## Solution

Change `UiScale` to a tuple struct

## Migration Guide

Replace initialization of `UiScale` like `UiScale { scale: 1.0 }`

with `UiScale(1.0)`

# Objective

- Significantly reduce the size of MeshUniform by only including

necessary data.

## Solution

Local to world, model transforms are affine. This means they only need a

4x3 matrix to represent them.

`MeshUniform` stores the current, and previous model transforms, and the

inverse transpose of the current model transform, all as 4x4 matrices.

Instead we can store the current, and previous model transforms as 4x3

matrices, and we only need the upper-left 3x3 part of the inverse

transpose of the current model transform. This change allows us to

reduce the serialized MeshUniform size from 208 bytes to 144 bytes,

which is over a 30% saving in data to serialize, and VRAM bandwidth and

space.
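
The size arithmetic can be checked with a small layout sketch. The struct and field names below are illustrative stand-ins, not the actual `bevy_pbr` definitions; the trailing `flags` word and the 16-byte alignment are assumptions chosen so that the totals match the 208 and 144 bytes quoted above. `affine_to_square` here is a CPU-side, row-major analogue of the shader helper, not its real implementation.

```rust
use std::mem::size_of;

/// Old layout (assumed): three full 4x4 matrices plus one flags word.
#[allow(dead_code)]
#[repr(C, align(16))]
struct OldMeshUniform {
    model: [[f32; 4]; 4],
    previous_model: [[f32; 4]; 4],
    inverse_transpose_model: [[f32; 4]; 4],
    flags: u32,
}

/// New layout (assumed): each affine transform packed as three vec4 rows,
/// and the 3x3 inverse transpose packed as two vec4s plus one f32.
#[allow(dead_code)]
#[repr(C, align(16))]
struct NewMeshUniform {
    model: [[f32; 4]; 3],
    previous_model: [[f32; 4]; 3],
    inverse_transpose_model_a: [[f32; 4]; 2],
    inverse_transpose_model_b: f32,
    flags: u32,
}

/// Rebuilds a row-major 4x4 matrix from three packed rows by appending
/// the implicit last row (0, 0, 0, 1) of an affine transform.
fn affine_to_square(rows: [[f32; 4]; 3]) -> [[f32; 4]; 4] {
    [rows[0], rows[1], rows[2], [0.0, 0.0, 0.0, 1.0]]
}

/// Returns (old size, new size) in bytes, including alignment padding.
fn sizes() -> (usize, usize) {
    (size_of::<OldMeshUniform>(), size_of::<NewMeshUniform>())
}
```

Under these assumptions the old struct holds 196 bytes of data padded to 208, and the new one holds 136 bytes padded to 144, a saving of 64 bytes per mesh.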

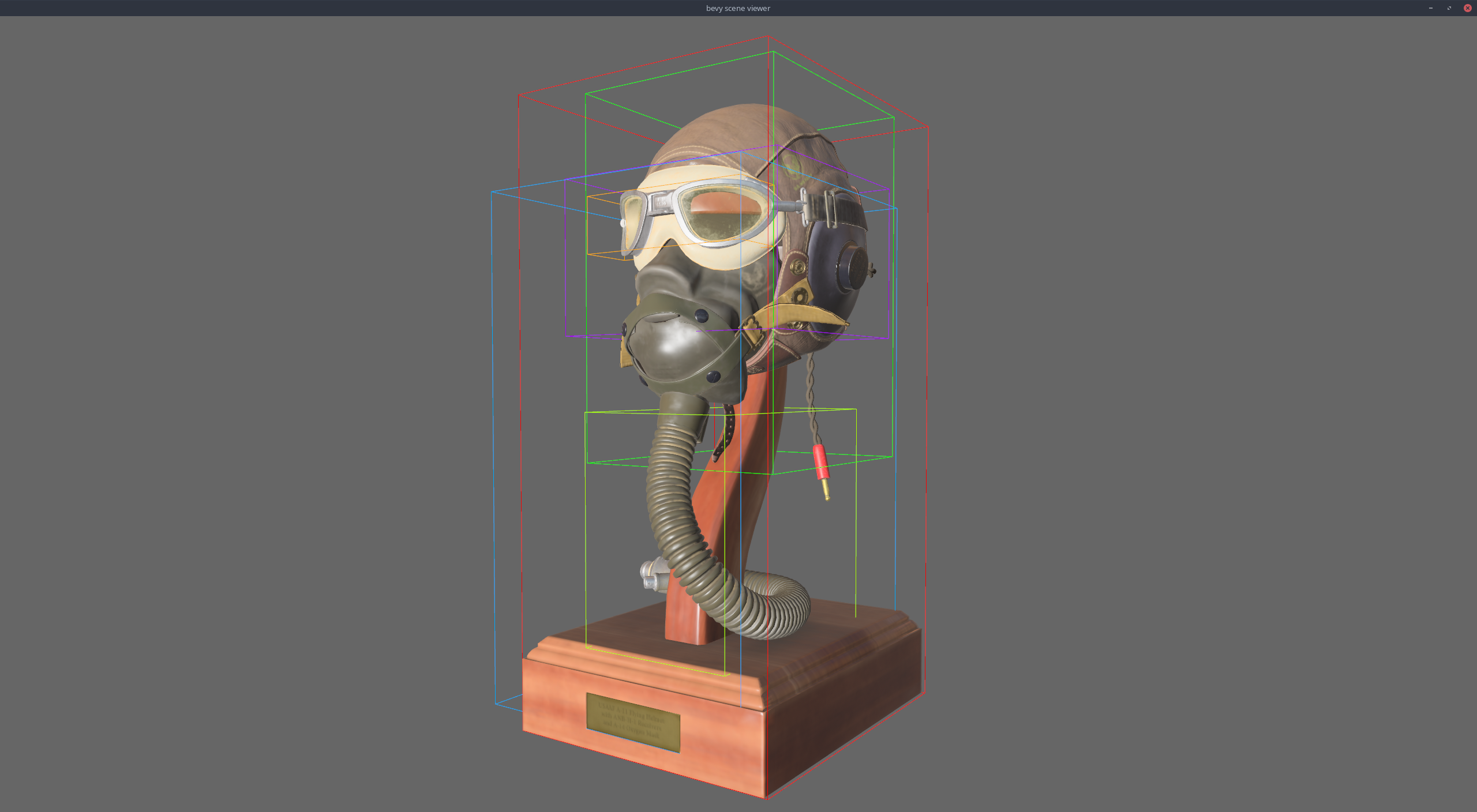

## Benchmarks

On an M1 Max, running `many_cubes -- sphere`, main is in yellow, this PR

is in red:

<img width="1484" alt="Screenshot 2023-08-11 at 02 36 43"

src="https://github.com/bevyengine/bevy/assets/302146/7d99c7b3-f2bb-4004-a8d0-4c00f755cb0d">

A reduction in frame time of ~14%.

---

## Changelog

- Changed: Redefined `MeshUniform` to improve performance by using 4x3

affine transforms and reconstructing 4x4 matrices in the shader. Helper

functions were added to `bevy_pbr::mesh_functions` to unpack the data.

`affine_to_square` converts the packed 4x3 in 3x4 matrix data to a 4x4

matrix. `mat2x4_f32_to_mat3x3` converts the 3x3 in mat2x4 + f32 matrix

data back into a 3x3.

## Migration Guide

Shader code before:

```

var model = mesh[instance_index].model;

```

Shader code after:

```

#import bevy_pbr::mesh_functions affine_to_square

var model = affine_to_square(mesh[instance_index].model);

```

Addresses:

```sh

$ cargo build --release --example lighting --target wasm32-unknown-unknown --features webgl

error: none of the selected packages contains these features: webgl, did you mean: webgl2, webp?

```

# Objective

- Fix the build error shown above, which appears when following the instructions for the web examples.

- Document clearly the generated file `./target/wasm_example.js`, since

it didn't appear on `git grep` (missing extension)

## Solution

- Follow the feature rename on the docs.

---------

Signed-off-by: Seb Ospina <kraige@gmail.com>

# Objective

In the `game_menu` example:

```rust

let button_icon_style = Style {

width: Val::Px(30.0),

// This takes the icons out of the flexbox flow, to be positioned exactly

position_type: PositionType::Absolute,

// The icon will be close to the left border of the button

left: Val::Px(10.0),

right: Val::Auto,

..default()

};

```

The default value for `right` is `Val::Auto` so that line is unnecessary

and can be removed.
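
Why the line is redundant can be shown with struct update syntax in isolation. The `Val` and `Style` types below are hypothetical, cut-down stand-ins for Bevy's types, keeping only the fields used above.

```rust
/// Hypothetical stand-in for Bevy's `Val`; `Auto` is the default variant.
#[derive(Debug, Clone, Copy, PartialEq, Default)]
enum Val {
    #[default]
    Auto,
    Px(f32),
}

/// Hypothetical stand-in for Bevy's `Style`, reduced to three fields.
#[derive(Debug, Clone, Copy, PartialEq, Default)]
struct Style {
    width: Val,
    left: Val,
    right: Val,
}

/// Spells out `right: Val::Auto`, as the example currently does.
fn with_explicit_right() -> Style {
    Style {
        width: Val::Px(30.0),
        left: Val::Px(10.0),
        right: Val::Auto,
    }
}

/// Leaves `right` to the struct's default value.
fn with_defaulted_right() -> Style {
    Style {
        width: Val::Px(30.0),
        left: Val::Px(10.0),
        ..Default::default()
    }
}
```

Both functions build the same value, so dropping the explicit field does not change behavior.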

# Objective

I found it very difficult to understand how bevy tasks work, and I

concluded that the documentation should be improved for beginners like

me.

## Solution

These changes to the documentation were written from my beginner's

perspective after

some extremely helpful explanations by nil on Discord.

I am not familiar enough with rustdoc yet; when looking at the source, I

found the documentation at the very top of `usages.rs` helpful, but I

don't know where it is rendered. It should probably be linked to

from the main `bevy_tasks` README.

---------

Co-authored-by: Alice Cecile <alice.i.cecile@gmail.com>

Co-authored-by: Mike <mike.hsu@gmail.com>

# Objective

- I want to run the post_processing example in a new project, but I

can't because it uses bevy internal imports.

## Solution

- Change the bevy_internal imports to their respective bevy crates

imports

# Objective

In the shader prepass example, changing to the motion vector output

hides the text, because both it and the background are rendered black.

Seems to have been caused by this commit?

71cf35ce42

## Solution

Make the text white on all outputs.

# Objective

- I forgot to update the example after the `ViewNodeRunner` was merged.

It was even partially mentioned in one of the comments.

## Solution

- Use the `ViewNodeRunner` in the post_processing example

- I also broke up a few lines that were a bit long

---------

Co-authored-by: JMS55 <47158642+JMS55@users.noreply.github.com>

# Objective

The `post_processing` example is currently broken when run with webgl2.

```

cargo run --example post_processing --target=wasm32-unknown-unknown

```

```

wasm.js:387 panicked at 'wgpu error: Validation Error

Caused by:

In Device::create_render_pipeline

note: label = `post_process_pipeline`

In the provided shader, the type given for group 0 binding 2 has a size of 4. As the device does not support `DownlevelFlags::BUFFER_BINDINGS_NOT_16_BYTE_ALIGNED`, the type must have a size that is a multiple of 16 bytes.

```

I bisected the breakage to c7eaedd6a1.

## Solution

Add padding when using webgl2
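
A minimal sketch of the padding idea, assuming the settings struct holds a single `f32` (which matches the size of 4 in the error above). The struct and field names are hypothetical; in the real example the padding field would presumably be gated behind a WebGL2-specific `cfg`.

```rust
use std::mem::size_of;

/// Settings as a single f32: 4 bytes, which the validation error rejects.
#[allow(dead_code)]
#[repr(C)]
struct Unpadded {
    intensity: f32,
}

/// The same settings with explicit padding up to 16 bytes.
#[allow(dead_code)]
#[repr(C)]
struct Padded {
    intensity: f32,
    _webgl2_padding: [f32; 3],
}

/// True when a buffer of this size satisfies the 16-byte multiple rule.
fn is_multiple_of_16(size: usize) -> bool {
    size % 16 == 0
}

/// Returns (unpadded size, padded size) in bytes.
fn padded_sizes() -> (usize, usize) {
    (size_of::<Unpadded>(), size_of::<Padded>())
}
```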

# Objective

- Some examples crash in CI because of needing too many resources for

the windows runner

- Some examples have random results making it hard to compare

screenshots

## Solution

- `bloom_3d`: reduce the number of spheres

- `pbr`: use simpler spheres and reuse the mesh

- `tonemapping`: use simpler spheres and reuse the mesh

- `shadow_biases`: reduce the number of spheres

- `spotlight`: use a seeded rng, move more cubes in view while reducing

the total number of cubes, and reuse meshes and materials

- `external_source_external_thread`, `iter_combinations`,

`parallel_query`: use a seeded rng
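
To illustrate why a seeded RNG makes screenshots comparable, here is a std-only sketch using SplitMix64 as a stand-in generator. This is not the RNG the examples use; the type and function names are hypothetical, and the only point is that a fixed seed reproduces the same sequence on every run.

```rust
/// Small deterministic generator (SplitMix64), used here only as a stand-in.
struct SplitMix64 {
    state: u64,
}

impl SplitMix64 {
    fn new(seed: u64) -> Self {
        Self { state: seed }
    }

    fn next_u64(&mut self) -> u64 {
        self.state = self.state.wrapping_add(0x9E37_79B9_7F4A_7C15);
        let mut z = self.state;
        z = (z ^ (z >> 30)).wrapping_mul(0xBF58_476D_1CE4_E5B9);
        z = (z ^ (z >> 27)).wrapping_mul(0x94D0_49BB_1331_11EB);
        z ^ (z >> 31)
    }

    /// Float in [0, 1), built from the top 24 bits of the next output.
    fn next_f32(&mut self) -> f32 {
        (self.next_u64() >> 40) as f32 / (1u32 << 24) as f32
    }
}

/// Raw outputs for a given seed; identical seeds give identical sequences.
fn sequence(seed: u64, count: usize) -> Vec<u64> {
    let mut rng = SplitMix64::new(seed);
    (0..count).map(|_| rng.next_u64()).collect()
}

/// Example use: reproducible coordinates in [0, 1) for placing objects.
fn unit_floats(seed: u64, count: usize) -> Vec<f32> {
    let mut rng = SplitMix64::new(seed);
    (0..count).map(|_| rng.next_f32()).collect()
}
```

With a fixed seed, object placement is the same on every CI run, so a difference between screenshots points at a rendering change instead of random variation.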

Examples of errors encountered:

```

Caused by:

In Device::create_bind_group

note: label = `bloom_upsampling_bind_group`

Not enough memory left

```

```

Caused by:

In Queue::write_buffer

Parent device is lost

```

```

ERROR wgpu_core::device::life: Mapping failed Device(Lost)

```

Redo of #7590 since I messed up my branch.

# Objective

- Revise docs.

- Refactor event loop code a little bit to make it easier to follow.

## Solution

- Do the above.

---

### Migration Guide

- `UpdateMode::Reactive { max_wait: .. }` -> `UpdateMode::Reactive {

wait: .. }`

- `UpdateMode::ReactiveLowPower { max_wait: .. }` ->

`UpdateMode::ReactiveLowPower { wait: .. }`

---------

Co-authored-by: Sélène Amanita <134181069+Selene-Amanita@users.noreply.github.com>

# Objective

The documentation for the `print_when_completed` system stated that this

system would tick the `Timer` component on every entity in the scene.

This was incorrect as this system only ticks the `Timer` on entities

with the `PrintOnCompletionTimer` component.

## Solution

We suggest a modification to the documentation of this system to make it

more clear.

# Objective

My attempt at implementing #7515

## Solution

Added a `Pitch` struct and implemented the `Source` trait for it.

## Changelog

### Added

- File pitch.rs to bevy_audio crate

- Struct `Pitch` and type aliases for `AudioSourceBundle<Pitch>` and

`SpatialAudioSourceBundle<Pitch>`

- New example showing how to use `PitchBundle`

### Changed

- `AudioPlugin` now adds system for `Pitch` audio

---------

Co-authored-by: Alice Cecile <alice.i.cecile@gmail.com>

# Objective

The `QueryParIter::for_each_mut` function is required when doing

parallel iteration with mutable queries.

This results in an unfortunate stutter:

`query.par_iter_mut().for_each_mut()` ('mut' is repeated).

## Solution

- Make `for_each` compatible with mutable queries, and deprecate

`for_each_mut`. In order to prevent `for_each` from being called

multiple times in parallel, we take ownership of the QueryParIter.
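
The ownership argument can be sketched without Bevy. `ParIter` below is a hypothetical stand-in that runs sequentially; it only demonstrates that a method taking `self` by value cannot be called twice on the same iterator.

```rust
/// Hypothetical stand-in for a parallel iterator over mutable items.
struct ParIter<'a, T> {
    items: &'a mut [T],
}

impl<'a, T> ParIter<'a, T> {
    /// Takes `self` by value, so the iterator is consumed by the call and
    /// the compiler rejects a second `for_each` on the same value.
    fn for_each(self, mut f: impl FnMut(&mut T)) {
        for item in self.items.iter_mut() {
            f(item);
        }
    }
}

/// Doubles every element through the consuming `for_each`.
fn double_all(values: &mut [i32]) {
    let iter = ParIter { items: values };
    iter.for_each(|x| *x *= 2);
    // iter.for_each(|x| *x += 1); // would not compile: `iter` was moved
}
```

Because the mutable borrow lives inside the consumed value, no second closure can observe the items while the first call is still running.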

---

## Changelog

- `QueryParIter::for_each` is now compatible with mutable queries.

`for_each_mut` has been deprecated as it is now redundant.

## Migration Guide

The method `QueryParIter::for_each_mut` has been deprecated and is no

longer functional. Use `for_each` instead, which now supports mutable

queries.

```rust

// Before:

query.par_iter_mut().for_each_mut(|x| ...);

// After:

query.par_iter_mut().for_each(|x| ...);

```

The method `QueryParIter::for_each` now takes ownership of the

`QueryParIter`, rather than taking a shared reference.

```rust

// Before:

let par_iter = my_query.par_iter().batching_strategy(my_batching_strategy);

par_iter.for_each(|x| {

// ...Do stuff with x...

par_iter.for_each(|y| {

// ...Do nested stuff with y...

});

});

// After:

my_query.par_iter().batching_strategy(my_batching_strategy).for_each(|x| {

// ...Do stuff with x...

my_query.par_iter().batching_strategy(my_batching_strategy).for_each(|y| {

// ...Do nested stuff with y...

});

});

```

# Objective

Implements #9082 but with an option to toggle minimize and close buttons

too.

## Solution

- Added an `enabled_buttons` member to the `Window` struct through which

users can enable or disable specific window control buttons.

---

## Changelog

- Added an `enabled_buttons` member to the `Window` struct through which

users can enable or disable specific window control buttons.

- Added a new system to the `window_settings` example which demonstrates

the toggling functionality.

---

## Migration guide

- Added an `enabled_buttons` member to the `Window` struct through which

users can enable or disable specific window control buttons.

# Objective

Fix typos throughout the project.

## Solution

[`typos`](https://github.com/crate-ci/typos) project was used for

scanning, but no automatic corrections were applied. I checked

everything by hand before fixing.

Most of the changes are documentation/comments corrections. Also, there

are a few trivial changes to code (variable name, `pub(crate)` function name

and a few error/panic messages).

## Unsolved

`bevy_reflect_derive` has

[typo](1b51053f19/crates/bevy_reflect/bevy_reflect_derive/src/type_path.rs (L76))

in enum variant name that I didn't fix. Enum is `pub(crate)`, so there

shouldn't be any trouble if fixed. However, code is tightly coupled with

macro usage, so I decided to leave it for a more experienced contributor

just in case.

# Objective

Improve the `bevy_audio` API to make it more user-friendly and

ECS-idiomatic. This PR is a first-pass at addressing some of the most

obvious (to me) problems. In the interest of keeping the scope small,

further improvements can be done in future PRs.

The current `bevy_audio` API is very clunky to work with, due to how it

(ab)uses bevy assets to represent audio sinks.

The user needs to write a lot of boilerplate (accessing

`Res<Assets<AudioSink>>`) and deal with a lot of cognitive overhead

(worry about strong vs. weak handles, etc.) in order to control audio

playback.

Audio playback is initiated via a centralized `Audio` resource, which

makes it difficult to keep track of many different sounds playing in a

typical game.

Further, everything carries a generic type parameter for the sound

source type, making it difficult to mix custom sound sources (such as

procedurally generated audio or unofficial formats) with regular audio

assets.

Let's fix these issues.

## Solution

Refactor `bevy_audio` to a more idiomatic ECS API. Remove the `Audio`

resource. Do everything via entities and components instead.

Audio playback data is now stored in components:

- `PlaybackSettings`, `SpatialSettings`, `Handle<AudioSource>` are now

components. The user inserts them to tell Bevy to play a sound and

configure the initial playback parameters.

- `AudioSink`, `SpatialAudioSink` are now components instead of special

magical "asset" types. They are inserted by Bevy when it actually begins

playing the sound, and can be queried for by the user in order to

control the sound during playback.

Bundles: `AudioBundle` and `SpatialAudioBundle` are available to make it

easy for users to play sounds. Spawn an entity with one of these bundles

(or insert them to a complex entity alongside other stuff) to play a

sound.

Each entity represents a sound to be played.

There is also a new "auto-despawn" feature (activated using

`PlaybackSettings`), which, if enabled, tells Bevy to despawn entities

when the sink playback finishes. This allows for "fire-and-forget" sound

playback. Users can simply

spawn entities whenever they want to play sounds and not have to worry

about leaking memory.

## Unsolved Questions

I think the current design is *fine*. I'd be happy for it to be merged.

It has some possibly-surprising usability pitfalls, but I think it is

still much better than the old `bevy_audio`. Here are some discussion

questions for things that we could further improve. I'm undecided on

these questions, which is why I didn't implement them. We should decide

which of these should be addressed in this PR, and what should be left

for future PRs. Or if they should be addressed at all.

### What happens when sounds start playing?

Currently, the audio sink components are inserted and the bundle

components are kept. Should Bevy remove the bundle components? Something

else?

The current design allows an entity to be reused for playing the same

sound with the same parameters repeatedly. This is a niche use case I'd

like to be supported, but if we have to give it up for a simpler design,

I'd be fine with that.

### What happens if users remove any of the components themselves?

As described above, currently, entities can be reused. Removing the

audio sink causes it to be "detached" (I kept the old `Drop` impl), so

the sound keeps playing. However, if the audio bundle components are not

removed, Bevy will detect this entity as a "queued" sound entity again

(has the bundle components, without a sink component), just like before

playing the sound the first time, and start playing the sound again.

This behavior might be surprising? Should we do something different?
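
The replay behavior follows directly from the "queued" rule. The sketch below models that rule with a hypothetical, flattened view of an entity; none of these names are part of `bevy_audio`.

```rust
/// Hypothetical flattened view of an audio entity's components.
#[derive(Debug, Clone, Copy, PartialEq)]
struct SoundEntity {
    has_bundle_components: bool,
    has_sink: bool,
}

/// An entity counts as "queued" when it has the bundle components but no sink.
fn is_queued(entity: &SoundEntity) -> bool {
    entity.has_bundle_components && !entity.has_sink
}

/// Starting playback inserts a sink and keeps the bundle components.
fn start_playback(entity: &mut SoundEntity) {
    if is_queued(entity) {
        entity.has_sink = true;
    }
}

/// Removing the sink by hand makes the entity look queued again.
fn remove_sink(entity: &mut SoundEntity) {
    entity.has_sink = false;
}

/// A freshly spawned sound entity: bundle components only.
fn new_sound() -> SoundEntity {
    SoundEntity {
        has_bundle_components: true,
        has_sink: false,
    }
}
```

After `remove_sink`, the entity is indistinguishable from a freshly spawned one, which is why the sound would start again on the next pass.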

### Should mutations to `PlaybackSettings` be applied to the audio sink?

We currently do not do that. `PlaybackSettings` is just for the initial

settings when the sound starts playing. This is clearly documented.

Do we want to keep this behavior, or do we want to allow users to use

`PlaybackSettings` instead of `AudioSink`/`SpatialAudioSink` to control

sounds during playback too?

I think I prefer for them to be kept separate. It is not a bad mental

model once you understand it, and it is documented.

### Should `AudioSink` and `SpatialAudioSink` be unified into a single component type?

They provide a similar API (via the `AudioSinkPlayback` trait) and it

might be annoying for users to have to deal with both of them. The

unification could be done using an enum that is matched on internally by

the methods. Spatial audio has extra features, so this might make it

harder to access. I think we shouldn't.

### Automatic synchronization of spatial sound properties from Transforms?

Should Bevy automatically apply changes to Transforms to spatial audio

entities? How do we distinguish between listener and emitter? Which one

does the transform represent? Where should the other one come from?

Alternatively, leave this problem for now, and address it in a future

PR. Or do nothing, and let users deal with it, as shown in the

`spatial_audio_2d` and `spatial_audio_3d` examples.

---

## Changelog

Added:

- `AudioBundle`/`SpatialAudioBundle`, add them to entities to play

sounds.

Removed:

- The `Audio` resource.

- `AudioOutput` is no longer `pub`.

Changed:

- `AudioSink`, `SpatialAudioSink` are now components instead of assets.

## Migration Guide

// TODO: write a more detailed migration guide, after the "unsolved

questions" are answered and this PR is finalized.

Before:

```rust

/// Need to store handles somewhere

#[derive(Resource)]

struct MyMusic {

sink: Handle<AudioSink>,

}

fn play_music(

asset_server: Res<AssetServer>,

audio: Res<Audio>,

audio_sinks: Res<Assets<AudioSink>>,

mut commands: Commands,

) {

let weak_handle = audio.play_with_settings(

asset_server.load("music.ogg"),

PlaybackSettings::LOOP.with_volume(0.5),

);

// upgrade to strong handle and store it

commands.insert_resource(MyMusic {

sink: audio_sinks.get_handle(weak_handle),

});

}

fn toggle_pause_music(

audio_sinks: Res<Assets<AudioSink>>,

mymusic: Option<Res<MyMusic>>,

) {

if let Some(mymusic) = &mymusic {

if let Some(sink) = audio_sinks.get(&mymusic.sink) {

sink.toggle();

}

}

}

```

Now:

```rust

/// Marker component for our music entity

#[derive(Component)]

struct MyMusic;

fn play_music(

mut commands: Commands,

asset_server: Res<AssetServer>,

) {

commands.spawn((

AudioBundle::from_audio_source(asset_server.load("music.ogg"))

.with_settings(PlaybackSettings::LOOP.with_volume(0.5)),

MyMusic,

));

}

fn toggle_pause_music(

// `AudioSink` will be inserted by Bevy when the audio starts playing

query_music: Query<&AudioSink, With<MyMusic>>,

) {

if let Ok(sink) = query_music.get_single() {

sink.toggle();

}

}

```

# Objective

Currently, `DynamicScene`s extract all components listed in the given

(or the world's) type registry. This acts as a quasi-filter of sorts.

However, it can be troublesome to use effectively and lacks decent

control.

For example, say you need to serialize only the following component over

the network:

```rust

#[derive(Reflect, Component, Default)]

#[reflect(Component)]

struct NPC {

name: Option<String>

}

```

To do this, you'd need to:

1. Create a new `AppTypeRegistry`

2. Register `NPC`

3. Register `Option<String>`

If we skip Step 3, then the entire scene might fail to serialize as

`Option<String>` requires registration.

Not only is this annoying and easy to forget, but it can leave users

with an impossible task: serializing a third-party type that contains

private types.

Generally, the third-party crate will register their private types

within a plugin so the user doesn't need to do it themselves. However,

this means we are now unable to serialize _just_ that type— we're forced

to allow everything!

## Solution

Add the `SceneFilter` enum for filtering components to extract.

This filter can be used to optionally allow or deny entire sets of

components/resources. With the `DynamicSceneBuilder`, users have more

control over how their `DynamicScene`s are built.

To only serialize a subset of components, use the `allow` method:

```rust

let scene = builder

.allow::<ComponentA>()

.allow::<ComponentB>()

.extract_entity(entity)

.build();

```

To serialize everything _but_ a subset of components, use the `deny`

method:

```rust

let scene = builder

.deny::<ComponentA>()

.deny::<ComponentB>()

.extract_entity(entity)

.build();

```

Or create a custom filter:

```rust

let components = HashSet::from([type_id]);

let filter = SceneFilter::Allowlist(components);

// let filter = SceneFilter::Denylist(components);

let scene = builder

.with_filter(Some(filter))

.extract_entity(entity)

.build();

```
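
The allow/deny semantics can be modeled with a small std-only sketch. The `Filter` enum below is a hypothetical model, not the real `SceneFilter`; it assumes an unset filter allows everything, and that calling the opposing method removes the type from the current list, as described for this PR.

```rust
use std::any::TypeId;
use std::collections::HashSet;

/// Hypothetical model of the filter; the real `SceneFilter` may differ.
#[derive(Debug, Default)]
enum Filter {
    /// No filtering: every type is allowed.
    #[default]
    Unset,
    Allowlist(HashSet<TypeId>),
    Denylist(HashSet<TypeId>),
}

impl Filter {
    /// Allows `T`: starts or extends an allowlist, or removes `T` from a denylist.
    fn allow<T: 'static>(self) -> Self {
        let id = TypeId::of::<T>();
        match self {
            Filter::Unset => Filter::Allowlist(HashSet::from([id])),
            Filter::Allowlist(mut set) => {
                set.insert(id);
                Filter::Allowlist(set)
            }
            Filter::Denylist(mut set) => {
                set.remove(&id);
                Filter::Denylist(set)
            }
        }
    }

    /// Denies `T`: starts or extends a denylist, or removes `T` from an allowlist.
    fn deny<T: 'static>(self) -> Self {
        let id = TypeId::of::<T>();
        match self {
            Filter::Unset => Filter::Denylist(HashSet::from([id])),
            Filter::Denylist(mut set) => {
                set.insert(id);
                Filter::Denylist(set)
            }
            Filter::Allowlist(mut set) => {
                set.remove(&id);
                Filter::Allowlist(set)
            }
        }
    }

    /// Whether a value of type `T` would be extracted.
    fn is_allowed<T: 'static>(&self) -> bool {
        let id = TypeId::of::<T>();
        match self {
            Filter::Unset => true,
            Filter::Allowlist(set) => set.contains(&id),
            Filter::Denylist(set) => !set.contains(&id),
        }
    }
}

/// Marker types used only to exercise the filter.
struct ComponentA;
struct ComponentB;
```

In this model `Filter::default().allow::<ComponentA>().deny::<ComponentA>()` ends up as an empty allowlist, so nothing is extracted; that is the kind of edge case the open questions in this PR are about.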

Similar operations exist for resources:

<details>

<summary>View Resource Methods</summary>

To only serialize a subset of resources, use the `allow_resource`

method:

```rust

let scene = builder

.allow_resource::<ResourceA>()

.extract_resources()

.build();

```

To serialize everything _but_ a subset of resources, use the

`deny_resource` method:

```rust

let scene = builder

.deny_resource::<ResourceA>()

.extract_resources()

.build();

```

Or create a custom filter:

```rust

let resources = HashSet::from([type_id]);

let filter = SceneFilter::Allowlist(resources);

// let filter = SceneFilter::Denylist(resources);

let scene = builder

.with_resource_filter(Some(filter))

.extract_resources()

.build();

```

</details>

### Open Questions

- [x] ~~`allow` and `deny` are mutually exclusive. Currently, they

overwrite each other. Should this instead be a panic?~~ Took @soqb's

suggestion and made it so that the opposing method simply removes that

type from the list.

- [x] ~~`DynamicSceneBuilder` extracts entity data as soon as

`extract_entity`/`extract_entities` is called. Should this behavior

instead be moved to the `build` method to prevent ordering mixups (e.g.

`.allow::<Foo>().extract_entity(entity)` vs

`.extract_entity(entity).allow::<Foo>()`)? The tradeoff would be

iterating over the given entities twice: once at extraction and again at

build.~~ Based on the feedback from @Testare it sounds like it might be

better to just keep the current functionality (if anything we can open a

separate PR that adds deferred methods for extraction, so the

choice/performance hit is up to the user).

- [ ] An alternative might be to remove the filter from

`DynamicSceneBuilder` and have it as a separate parameter to the

extraction methods (either in the existing ones or as added

`extract_entity_with_filter`-type methods). Is this preferable?

- [x] ~~Should we include constructors that include common types to

allow/deny? For example, a `SceneFilter::standard_allowlist` that

includes things like `Parent` and `Children`?~~ Consensus suggests we

should. I may split this out into a followup PR, though.

- [x] ~~Should we add the ability to remove types from the filter

regardless of whether an allowlist or denylist (e.g.

`filter.remove::<Foo>()`)?~~ See the first list item

- [x] ~~Should `SceneFilter` be an enum? Would it make more sense as a

struct that contains an `is_denylist` boolean?~~ With the added

`SceneFilter::None` state (replacing the need to wrap in an `Option` or

rely on an empty `Denylist`), it seems an enum is better suited now

- [x] ~~Bikeshed: Do we like the naming convention? Should we instead

use `include`/`exclude` terminology?~~ Sounds like we're sticking with

`allow`/`deny`!

- [x] ~~Does this feature need a new example? Do we simply include it in

the existing one (maybe even as a comment?)? Should this be done in a

followup PR instead?~~ Example will be added in a followup PR

### Followup Tasks

- [ ] Add a dedicated `SceneFilter` example

- [ ] Possibly add default types to the filter (e.g. deny things like

`ComputedVisibility`, allow `Parent`, etc)

---

## Changelog

- Added the `SceneFilter` enum for filtering components and resources

when building a `DynamicScene`

- Added methods:

- `DynamicSceneBuilder::with_filter`

- `DynamicSceneBuilder::allow`

- `DynamicSceneBuilder::deny`

- `DynamicSceneBuilder::allow_all`

- `DynamicSceneBuilder::deny_all`

- `DynamicSceneBuilder::with_resource_filter`

- `DynamicSceneBuilder::allow_resource`

- `DynamicSceneBuilder::deny_resource`

- `DynamicSceneBuilder::allow_all_resources`

- `DynamicSceneBuilder::deny_all_resources`

- Removed methods:

- `DynamicSceneBuilder::from_world_with_type_registry`

- `DynamicScene::from_scene` and `DynamicScene::from_world` no longer

require an `AppTypeRegistry` reference

## Migration Guide

- `DynamicScene::from_scene` and `DynamicScene::from_world` no longer

require an `AppTypeRegistry` reference:

```rust

// OLD

let registry = world.resource::<AppTypeRegistry>();

let dynamic_scene = DynamicScene::from_world(&world, registry);

// let dynamic_scene = DynamicScene::from_scene(&scene, registry);

// NEW

let dynamic_scene = DynamicScene::from_world(&world);

// let dynamic_scene = DynamicScene::from_scene(&scene);

```

- Removed `DynamicSceneBuilder::from_world_with_type_registry`. Now the

registry is automatically taken from the given world:

```rust

// OLD

let registry = world.resource::<AppTypeRegistry>();

let builder = DynamicSceneBuilder::from_world_with_type_registry(&world,

registry);

// NEW

let builder = DynamicSceneBuilder::from_world(&world);

```

# Objective

After the UI layout is computed, when the coordinates are converted back

from physical coordinates to logical coordinates, the `UiScale` is

ignored. This results in a confusing situation where we have two

different systems of logical coordinates.

Example:

```rust

use bevy::prelude::*;

fn main() {

App::new()

.add_plugins(DefaultPlugins)

.add_systems(Startup, setup)

.add_systems(Update, update)

.run();

}

fn setup(mut commands: Commands, mut ui_scale: ResMut<UiScale>) {

ui_scale.scale = 4.;

commands.spawn(Camera2dBundle::default());

commands.spawn(NodeBundle {

style: Style {

align_items: AlignItems::Center,

justify_content: JustifyContent::Center,

width: Val::Percent(100.),

..Default::default()

},

..Default::default()

})

.with_children(|builder| {

builder.spawn(NodeBundle {

style: Style {

width: Val::Px(100.),

height: Val::Px(100.),

..Default::default()

},

background_color: Color::MAROON.into(),

..Default::default()

}).with_children(|builder| {

builder.spawn(TextBundle::from_section("", TextStyle::default()));

});

});

}

fn update(

mut text_query: Query<(&mut Text, &Parent)>,

node_query: Query<Ref<Node>>,

) {

for (mut text, parent) in text_query.iter_mut() {

let node = node_query.get(parent.get()).unwrap();

if node.is_changed() {

text.sections[0].value = format!("size: {}", node.size());

}

}

}

```

result:

We asked for a 100x100 UI node but the Node's size is multiplied by the

value of `UiScale` to give a logical size of 400x400.

## Solution

Divide the output physical coordinates by `UiScale` in

`ui_layout_system` and multiply the logical viewport size by `UiScale`

when creating the projection matrix for the UI's `ExtractedView` in

`extract_default_ui_camera_view`.
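
The coordinate round trip can be written out as plain arithmetic. The function names are hypothetical, and the sketch assumes layout runs in physical pixels obtained by multiplying logical sizes by both the window scale factor and `UiScale`.

```rust
/// Logical to physical: layout is assumed to run in physical pixels.
fn to_physical(logical: f64, window_scale_factor: f64, ui_scale: f64) -> f64 {
    logical * window_scale_factor * ui_scale
}

/// Behavior described in the Objective: only the window scale factor is undone.
fn to_logical_before(physical: f64, window_scale_factor: f64) -> f64 {
    physical / window_scale_factor
}

/// Behavior described in the Solution: both factors are undone.
fn to_logical_after(physical: f64, window_scale_factor: f64, ui_scale: f64) -> f64 {
    physical / (window_scale_factor * ui_scale)
}
```

For a 100 px node with a `UiScale` of 4 and an assumed window scale factor of 2, the physical size is 800; the old conversion reports 400, while the new one returns the requested 100.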

---

## Changelog

* The UI layout's physical coordinates are divided by both the window

scale factor and `UiScale` when converting them back to logical

coordinates. The logical size of Ui nodes now matches the values given

to their size constraints.

* Multiply the logical viewport size by `UiScale` before creating the

projection matrix for the UI's `ExtractedView` in

`extract_default_ui_camera_view`.

* In `ui_focus_system` the cursor position returned from `Window` is

divided by `UiScale`.

* Added a scale factor parameter to `Node::physical_size` and

`Node::physical_rect`.

* The example `viewport_debug` now uses a `UiScale` of 2. to ensure that

viewport coordinates are working correctly with a non-unit `UiScale`.

## Migration Guide

Physical UI coordinates are now divided by both the `UiScale` and the

window's scale factor to compute the logical sizes and positions of UI

nodes.

This ensures that UI Node size and position values, held by the `Node`

and `GlobalTransform` components, conform to the same logical coordinate

system as the style constraints from which they are derived,

irrespective of the current `scale_factor` and `UiScale`.

---------

Co-authored-by: Carter Anderson <mcanders1@gmail.com>

# Objective

The current mobile example produces an APK of 1.5 GB.

- Running the example on a real device takes significant time (around

one minute just to copy the file over USB to my phone).

- Default virtual devices in Android Studio run out of space after the

first install. This can of course be solved/configured, but it causes

unnecessary friction.

- One impression could be that Bevy produces bloated APKs. 1.5 GB is

even double the size of debug builds for desktop examples.

## Solution

- Strip the debug symbols of the shared libraries before they are copied

to the APK

APK size after this change: 200 MB

Copy time on my machine: ~8s

## Considered alternative

APKs built in release mode are only 50 MB in size, but require setting up

signing for the profile and take longer to compile.

# Objective

The setup code in `animated_fox` uses a `done` boolean to avoid running

the `play` logic repetitively.

It is a common pattern, but it only works with exactly one fox, and

misses an even more common pattern.

When a user modifies the code to try it with several foxes, they are

confused as to why it doesn't work (#8996).

## Solution

The more common pattern is to use `Added<AnimationPlayer>` as a query

filter.

This both reduces complexity and naturally extends the setup code to

handle several foxes, added at any time.
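
The difference between the two patterns can be simulated without an ECS. Everything below is a hypothetical model: `Fox` stands in for an entity with an `AnimationPlayer`, and a tick comparison stands in for the `Added` filter.

```rust
/// Hypothetical stand-in for an entity with an `AnimationPlayer`.
#[derive(Debug)]
struct Fox {
    added_at_tick: u32,
    playing: bool,
}

fn new_fox(added_at_tick: u32) -> Fox {
    Fox {
        added_at_tick,
        playing: false,
    }
}

/// `done`-flag pattern: only acts when there is exactly one fox,
/// then never runs again.
fn setup_with_done_flag(foxes: &mut [Fox], done: &mut bool) {
    if *done {
        return;
    }
    if let [fox] = foxes {
        fox.playing = true;
        *done = true;
    }
}

/// `Added`-style pattern: handles every fox added since the last run.
fn setup_added(foxes: &mut [Fox], last_run_tick: u32) {
    for fox in foxes
        .iter_mut()
        .filter(|fox| fox.added_at_tick > last_run_tick)
    {
        fox.playing = true;
    }
}

fn playing_count(foxes: &[Fox]) -> usize {
    foxes.iter().filter(|fox| fox.playing).count()
}
```

With two foxes the flag-based setup never starts an animation, while the tick-based version starts both and also picks up foxes spawned later.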

# Objective

**This implementation is based on

https://github.com/bevyengine/rfcs/pull/59.**

---

Resolves #4597

Full details and motivation can be found in the RFC, but here's a brief

summary.

`FromReflect` is a very powerful and important trait within the

reflection API. It allows Dynamic types (e.g., `DynamicList`, etc.) to

be formed into Real ones (e.g., `Vec<i32>`, etc.).

This mainly comes into play concerning deserialization, where the

reflection deserializers both return a `Box<dyn Reflect>` that almost

always contains one of these Dynamic representations of a Real type. To

convert this to our Real type, we need to use `FromReflect`.

It also sneaks up in other ways. For example, it's a required bound for

`T` in `Vec<T>` so that `Vec<T>` as a whole can be made `FromReflect`.

It's also required by all fields of an enum as it's used as part of the

`Reflect::apply` implementation.
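
The shape of the Dynamic-to-Real conversion, and why the bound on `T` is needed, can be sketched with `std::any::Any`. `FromDynamic` and `DynamicList` below are hypothetical simplifications, not `bevy_reflect`'s actual trait or type.

```rust
use std::any::Any;

/// Hypothetical dynamic list: elements are type-erased.
struct DynamicList(Vec<Box<dyn Any>>);

/// Hypothetical analogue of `FromReflect`: build a concrete value from a
/// type-erased one, or return `None` if the shapes do not match.
trait FromDynamic: Sized {
    fn from_dynamic(value: &dyn Any) -> Option<Self>;
}

impl FromDynamic for i32 {
    fn from_dynamic(value: &dyn Any) -> Option<Self> {
        value.downcast_ref::<i32>().copied()
    }
}

/// `Vec<T>` can only be rebuilt if every element can be, hence `T: FromDynamic`.
impl<T: FromDynamic> FromDynamic for Vec<T> {
    fn from_dynamic(value: &dyn Any) -> Option<Self> {
        let list = value.downcast_ref::<DynamicList>()?;
        list.0.iter().map(|item| T::from_dynamic(&**item)).collect()
    }
}

/// Builds a dynamic list holding the given integers.
fn list_of(values: &[i32]) -> DynamicList {
    DynamicList(
        values
            .iter()
            .map(|v| Box::new(*v) as Box<dyn Any>)
            .collect(),
    )
}

/// Builds a dynamic list whose second element is not an `i32`.
fn mixed_list() -> DynamicList {
    DynamicList(vec![
        Box::new(1_i32) as Box<dyn Any>,
        Box::new("two") as Box<dyn Any>,
    ])
}

/// Converts a dynamic list into a concrete `Vec<i32>` if possible.
fn convert_ints(list: &DynamicList) -> Option<Vec<i32>> {
    Vec::<i32>::from_dynamic(list)
}
```

A list of integers converts to `Some(vec![..])`, while a list containing any other element type yields `None`, which mirrors how a failed conversion is reported as an absent value in this model.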

So in other words, much like `GetTypeRegistration` and `Typed`, it is

very much a core reflection trait.

The problem is that it is not currently treated like a core trait and is

not automatically derived alongside `Reflect`. This makes using it a bit

cumbersome and easy to forget.

## Solution

Automatically derive `FromReflect` when deriving `Reflect`.

Users can then choose to opt-out if needed using the

`#[reflect(from_reflect = false)]` attribute.

```rust

#[derive(Reflect)]

struct Foo;

#[derive(Reflect)]

#[reflect(from_reflect = false)]

struct Bar;

fn test<T: FromReflect>(value: T) {}

test(Foo); // <-- OK

test(Bar); // <-- Compile error! `Bar` does not implement trait `FromReflect`

```

#### `ReflectFromReflect`

This PR also automatically adds the `ReflectFromReflect` (introduced in

#6245) registration to the derived `GetTypeRegistration` impl— if the

type hasn't opted out of `FromReflect` of course.

<details>

<summary><h4>Improved Deserialization</h4></summary>

> **Warning**

> This section includes changes that have since been descoped from this

PR. They will likely be implemented again in a followup PR. I am mainly

leaving these details in for archival purposes, as well as for reference

when implementing this logic again.

And since we can do all the above, we might as well improve

deserialization. We can now choose to deserialize into a Dynamic type or

automatically convert it using `FromReflect` under the hood.

`[Un]TypedReflectDeserializer::new` will now perform the conversion and

return the `Box`'d Real type.

`[Un]TypedReflectDeserializer::new_dynamic` will work like what we have

now and simply return the `Box`'d Dynamic type.

```rust

// Returns the Real type

let reflect_deserializer = UntypedReflectDeserializer::new(&registry);

let mut deserializer = ron::de::Deserializer::from_str(input)?;

let output: SomeStruct = reflect_deserializer.deserialize(&mut deserializer)?.take()?;

// Returns the Dynamic type

let reflect_deserializer = UntypedReflectDeserializer::new_dynamic(&registry);

let mut deserializer = ron::de::Deserializer::from_str(input)?;

let output: DynamicStruct = reflect_deserializer.deserialize(&mut deserializer)?.take()?;

```

</details>

---

## Changelog

* `FromReflect` is now automatically derived within the `Reflect` derive

macro

* This includes auto-registering `ReflectFromReflect` in the derived

`GetTypeRegistration` impl

* ~~Renamed `TypedReflectDeserializer::new` and

`UntypedReflectDeserializer::new` to

`TypedReflectDeserializer::new_dynamic` and

`UntypedReflectDeserializer::new_dynamic`, respectively~~ **Descoped**

* ~~Changed `TypedReflectDeserializer::new` and

`UntypedReflectDeserializer::new` to automatically convert the

deserialized output using `FromReflect`~~ **Descoped**

## Migration Guide

* `FromReflect` is now automatically derived within the `Reflect` derive

macro. Items with both derives will need to remove the `FromReflect`

one.

```rust

// OLD

#[derive(Reflect, FromReflect)]

struct Foo;

// NEW

#[derive(Reflect)]

struct Foo;

```

If using a manual implementation of `FromReflect` and the `Reflect`

derive, users will need to opt-out of the automatic implementation.

```rust

// OLD

#[derive(Reflect)]

struct Foo;

impl FromReflect for Foo {/* ... */}

// NEW

#[derive(Reflect)]

#[reflect(from_reflect = false)]

struct Foo;

impl FromReflect for Foo {/* ... */}

```

<details>

<summary><h4>Removed Migrations</h4></summary>

> **Warning**

> This section includes changes that have since been descoped from this

PR. They will likely be implemented again in a followup PR. I am mainly

leaving these details in for archival purposes, as well as for reference

when implementing this logic again.

* The reflect deserializers now perform a `FromReflect` conversion

internally. The expected output of `TypedReflectDeserializer::new` and

`UntypedReflectDeserializer::new` is no longer a Dynamic (e.g.,

`DynamicList`), but its Real counterpart (e.g., `Vec<i32>`).

```rust

let reflect_deserializer = UntypedReflectDeserializer::new(&registry);
let mut deserializer = ron::de::Deserializer::from_str(input)?;

// OLD
let output: DynamicStruct = reflect_deserializer.deserialize(&mut deserializer)?.take()?;

// NEW
let output: SomeStruct = reflect_deserializer.deserialize(&mut deserializer)?.take()?;

```

Alternatively, if this behavior isn't desired, use the

`TypedReflectDeserializer::new_dynamic` and

`UntypedReflectDeserializer::new_dynamic` methods instead:

```rust

// OLD

let reflect_deserializer = UntypedReflectDeserializer::new(&registry);

// NEW

let reflect_deserializer = UntypedReflectDeserializer::new_dynamic(&registry);

```

</details>

---------

Co-authored-by: Carter Anderson <mcanders1@gmail.com>

# Objective

operate on naga IR directly to improve handling of shader modules.

- give codespan reporting into imported modules

- allow glsl to be used from wgsl and vice-versa

the ultimate objective is to make it possible to

- provide user hooks for core shader functions (to modify light

behaviour within the standard pbr pipeline, for example)

- make automatic binding slot allocation possible

but ... since this is already big, adds some value and (i think) is at

feature parity with the existing code, i wanted to push this now.

## Solution

i made a crate called naga_oil (https://github.com/robtfm/naga_oil -

unpublished for now, could be part of bevy) which manages modules by

- building each module independently to naga IR

- creating "header" files for each supported language, which are used to

build dependent modules/shaders

- make final shaders by combining the shader IR with the IR for imported

modules

then integrated this into bevy, replacing some of the existing shader

processing stuff. also reworked examples to reflect this.

## Migration Guide

shaders that don't use `#import` directives should work without changes.

the most notable user-facing difference is that imported

functions/variables/etc need to be qualified at point of use, and

there's no "leakage" of visible stuff into your shader scope from the

imports of your imports, so if you used things imported by your imports,

you now need to import them directly and qualify them.

the current strategy of including/'spreading' `mesh_vertex_output`

directly into a struct doesn't work any more, so these need to be

modified as per the examples (e.g. color_material.wgsl, or many others).

mesh data is assumed to be in bindgroup 2 by default, if mesh data is

bound into bindgroup 1 instead then the shader def `MESH_BINDGROUP_1`

needs to be added to the pipeline shader_defs.

# Objective

In Bevy 0.10.1 and before, the only way to enable text wrapping was to set

a local `Val::Px` width constraint on the text node itself.

`Val::Percent` constraints and constraints on the text node's ancestors

did nothing.

#7779 fixed those problems. But perversely displaying unwrapped text is

really difficult now, and requires users to nest each `TextBundle` in a

`NodeBundle` and apply `min_width` and `max_width` constraints. Some

constructions may even need more than one layer of nesting. I've seen

several people already who have really struggled with this when porting

their projects to main in advance of 0.11.

## Solution

Add a `NoWrap` variant to the `BreakLineOn` enum.

If `NoWrap` is set, ignore any constraints on the width for the text and

call `TextPipeline::queue_text` with a width bound of `f32::INFINITY`.
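
The rule above can be sketched in isolation. This is a minimal illustration, not Bevy's code: the enum is a stand-in that mirrors the variant names mentioned here, and `width_bound` is a hypothetical helper for choosing the bound that would be handed to the text layout step.

```rust
/// Stand-in for the line-breaking modes discussed above (not Bevy's actual type).
#[derive(Clone, Copy, Debug, PartialEq)]
enum BreakLineOn {
    WordBoundary,
    AnyCharacter,
    NoWrap,
}

/// Hypothetical helper: picks the width bound for text layout.
/// `available_width` is what the layout constraints would otherwise allow.
fn width_bound(mode: BreakLineOn, available_width: f32) -> f32 {
    match mode {
        // Ignore every width constraint, so the text is never wrapped.
        BreakLineOn::NoWrap => f32::INFINITY,
        // The wrapping modes keep the constrained width.
        BreakLineOn::WordBoundary | BreakLineOn::AnyCharacter => available_width,
    }
}
```

With this shape, `NoWrap` needs no special casing further down the pipeline: an infinite bound simply never triggers a line break.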

---

## Changelog

* Added a `NoWrap` variant to the `BreakLineOn` enum.

* If `NoWrap` is set, any constraints on the width for the text are

ignored and `TextPipeline::queue_text` is called with a width bound of

`f32::INFINITY`.

* Changed the `size` field of `FixedMeasure` to `pub`. This shouldn't

have been private, it was always intended to have `pub` visibility.

* Added a `with_no_wrap` method to `TextBundle`.

## Migration Guide

`bevy_text::text::BreakLineOn` has a new variant `NoWrap` that disables

text wrapping for the `Text`.

Text wrapping can also be disabled using the `with_no_wrap` method of

`TextBundle`.

`Style` flattened `size`, `min_size` and `max_size` to its root struct,

causing compilation errors.

I uncommented the code to avoid further silent error not caught by CI,

but hid the view to keep the same behaviour.

# Objective

- Fixes #4922

## Solution

- Add an example that maps a custom texture on a 3D mesh.

---

## Changelog

> Added the texture itself (confirmed with mod on discord before it

should be ok) to the assets folder, added to the README and Cargo.toml.

---------

Co-authored-by: Nicola Papale <nicopap@users.noreply.github.com>

Co-authored-by: Alice Cecile <alice.i.cecile@gmail.com>

Co-authored-by: Sélène Amanita <134181069+Selene-Amanita@users.noreply.github.com>

# Objective

- Add morph targets to `bevy_pbr` (closes #5756) & load them from glTF

- Supersedes #3722

- Fixes #6814

[Morph targets][1] (also known as shape interpolation, shape keys, or blend shapes) allow animating individual vertices with fine-grained control. This is typically used for facial expressions. By specifying multiple poses as vertex offsets and providing a weight for each pose, it is possible to define surprisingly realistic transitions between poses. Blending between multiple poses also allows composition.

Morph targets are part of the [glTF standard][2] and are a feature of Unity, Unreal, and Babylon.js, so it is only natural to implement them in Bevy.

## Solution

This implementation of morph targets uses a 3d texture where each pixel

is a component of an animated attribute. Each layer is a different

target. We use a 2d texture for each target, because the number of

attribute×components×animated vertices is expected to always exceed the

maximum pixel row size limit of webGL2. It copies fairly closely the way

skinning is implemented on the CPU side, while on the GPU side, the

shader morph target implementation is a relatively trivial detail.

We add an optional `morph_texture` to the `Mesh` struct. The

`morph_texture` is built through a method that accepts an iterator over

attribute buffers.

The user-accessible `MorphWeights` component controls the blend of poses used by mesh instances (so that multiple copies of the same mesh may have different weights). All the weights are uploaded to a uniform buffer of 256 `f32`. We limit to 16 poses per mesh, and a total of 256 poses.
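
To make the role of the weights concrete, here is a CPU-side sketch of the blend for a single vertex position. It is only an illustration under simplifying assumptions: in this PR the blending happens in the shader and reads offsets from the morph texture, and `blend_position` is a hypothetical name.

```rust
/// Blends morph target offsets into a base vertex position.
/// `offsets[i]` is the displacement of this vertex in pose `i`,
/// and `weights[i]` is how strongly pose `i` is applied.
fn blend_position(base: [f32; 3], offsets: &[[f32; 3]], weights: &[f32]) -> [f32; 3] {
    let mut out = base;
    for (offset, &weight) in offsets.iter().zip(weights) {
        for axis in 0..3 {
            // Each pose contributes its offset scaled by its weight.
            out[axis] += weight * offset[axis];
        }
    }
    out
}
```

A weight of zero leaves the base position untouched, which is why zero-weighted poses could in principle be skipped entirely.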

More literature:

* Old Babylon.js implementation (vertex attribute-based):
  https://www.eternalcoding.com/dev-log-1-morph-targets/
* Babylon.js implementation (similar to ours):
  https://www.youtube.com/watch?v=LBPRmGgU0PE
* GPU gems 3:
  https://developer.nvidia.com/gpugems/gpugems3/part-i-geometry/chapter-3-directx-10-blend-shapes-breaking-limits
* Development discord thread:
  https://discord.com/channels/691052431525675048/1083325980615114772

https://user-images.githubusercontent.com/26321040/231181046-3bca2ab2-d4d9-472e-8098-639f1871ce2e.mp4

https://github.com/bevyengine/bevy/assets/26321040/d2a0c544-0ef8-45cf-9f99-8c3792f5a258

## Acknowledgements

* Thanks to `storytold` for sponsoring the feature

* Thanks to `superdump` and `james7132` for guidance and help figuring

out stuff

## Future work

- Handling of less and more attributes (eg: animated uv, animated

arbitrary attributes)

- Dynamic pose allocation (so that zero-weighted poses aren't uploaded to the GPU, for example, which would allow many more total poses)

- Better animation API, see #8357

----

## Changelog

- Add morph targets to bevy meshes

- Support up to 64 poses per mesh of individually up to 116508 vertices,

animation currently strictly limited to the position, normal and tangent

attributes.

- Load a morph target using `Mesh::set_morph_targets`

- Add `VisitMorphTargets` and `VisitMorphAttributes` traits to

`bevy_render`, this allows defining morph targets (a fairly complex and

nested data structure) through iterators (ie: single copy instead of

passing around buffers), see documentation of those traits for details

- Add `MorphWeights` component exported by `bevy_render`

- `MorphWeights` control mesh's morph target weights, blending between

various poses defined as morph targets.

- `MorphWeights` are directly inherited by direct children (single level

of hierarchy) of an entity. This allows controlling several mesh

primitives through a unique entity _as per GLTF spec_.

- Add `MorphTargetNames` component, naming each index of loaded morph targets.

- Load morph targets weights and buffers in `bevy_gltf`

- Handle morph target animations in `bevy_animation` (previously, it was a `warn!` log)

- Add the `MorphStressTest.gltf` asset for morph targets testing, taken

from the glTF samples repo, CC0.

- Add morph target manipulation to `scene_viewer`

- Separate the animation code in `scene_viewer` from the rest of the

code, reducing `#[cfg(feature)]` noise

- Add the `morph_targets.rs` example to show off how to manipulate morph targets, loading `MorphStressTest.gltf`

## Migration Guide

- (very specialized, unlikely to be touched by 3rd parties)

- `MeshPipeline` now has a single `mesh_layouts` field rather than

separate `mesh_layout` and `skinned_mesh_layout` fields. You should

handle all possible mesh bind group layouts in your implementation

- You should also properly handle the new `MORPH_TARGETS` shader def and mesh pipeline key. A new function is exposed to make this easier: `setup_morph_and_skinning_defs`

- The `MeshBindGroup` is now `MeshBindGroups`, cached bind groups are

now accessed through the `get` method.

[1]: https://en.wikipedia.org/wiki/Morph_target_animation

[2]:

https://registry.khronos.org/glTF/specs/2.0/glTF-2.0.html#morph-targets

---------

Co-authored-by: François <mockersf@gmail.com>

Co-authored-by: Carter Anderson <mcanders1@gmail.com>

# Objective

- Better consistency with `add_systems`.

- Deprecating `add_plugin` in favor of a more powerful `add_plugins`.

- Allow passing `Plugin` to `add_plugins`.

- Allow passing tuples to `add_plugins`.

## Solution

- `App::add_plugins` now takes an `impl Plugins` parameter.

- `App::add_plugin` is deprecated.

- `Plugins` is a new sealed trait that is only implemented for `Plugin`,

`PluginGroup` and tuples over `Plugins`.

- All examples, benchmarks and tests are changed to use `add_plugins`,

using tuples where appropriate.
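
One way to let a single method accept a plugin or a tuple of plugins is sketched below. This is a simplified stand-in, not the code from this PR: it only covers single plugins and 2-tuples, omits `PluginGroup` and the sealing, and the `Marker` type parameter and the `SingleMarker`/`TupleMarker` names are assumptions used to keep the two impls from overlapping.

```rust
/// Stand-in for a single plugin.
trait Plugin {
    fn name(&self) -> &'static str;
}

/// Anything that can be passed to `add_plugins`. The `Marker` parameter
/// only exists so the impls below cannot overlap.
trait Plugins<Marker> {
    fn add_to(self, registered: &mut Vec<&'static str>);
}

struct SingleMarker;
struct TupleMarker;

// Every plugin is itself a valid `Plugins` value.
impl<P: Plugin> Plugins<SingleMarker> for P {
    fn add_to(self, registered: &mut Vec<&'static str>) {
        registered.push(self.name());
    }
}

// A 2-tuple of `Plugins` values is again a `Plugins` value.
impl<A, B, MA, MB> Plugins<(TupleMarker, MA, MB)> for (A, B)
where
    A: Plugins<MA>,
    B: Plugins<MB>,
{
    fn add_to(self, registered: &mut Vec<&'static str>) {
        self.0.add_to(registered);
        self.1.add_to(registered);
    }
}

/// Hypothetical free-function version of `App::add_plugins`.
fn add_plugins<M>(registered: &mut Vec<&'static str>, plugins: impl Plugins<M>) {
    plugins.add_to(registered);
}

struct Physics;
impl Plugin for Physics {
    fn name(&self) -> &'static str { "physics" }
}

struct Audio;
impl Plugin for Audio {
    fn name(&self) -> &'static str { "audio" }
}

struct Ui;
impl Plugin for Ui {
    fn name(&self) -> &'static str { "ui" }
}
```

Calling `add_plugins(&mut registered, Physics)` and `add_plugins(&mut registered, (Audio, Ui))` both type-check, and the marker is inferred at the call site, so callers never have to name it.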

---

## Changelog

### Changed

- `App::add_plugins` now accepts all types that implement `Plugins`,

which is implemented for:

- Types that implement `Plugin`.

- Types that implement `PluginGroup`.

- Tuples (up to 16 elements) over types that implement `Plugins`.

- Deprecated `App::add_plugin` in favor of `App::add_plugins`.

## Migration Guide

- Replace `app.add_plugin(plugin)` calls with `app.add_plugins(plugin)`.

---------

Co-authored-by: Carter Anderson <mcanders1@gmail.com>

# Objective

Fixes #6920

## Solution

From the issue discussion:

> From looking at the `AsBindGroup` derive macro implementation, the

fallback image's `TextureView` is used when the binding's

`Option<Handle<Image>>` is `None`. Because this relies on already having

a view that matches the desired binding dimensions, I think the solution

will require creating a separate `GpuImage` for each possible

`TextureViewDimension`.

---

## Changelog

Users can now rely on `FallbackImage` to work with a texture binding of

any dimension.

# Objective

This adds support for using texture atlas sprites in UI. From

discussions today in the ui-dev discord it seems this is a much wanted

feature.

This was previously attempted in #5070 by @ManevilleF; however, that was blocked by #5103. This work can be easily modified to support the #5103 changes after that merges.

## Solution

I created a new UI bundle that reuses the existing texture atlas

infrastructure. I create a new atlas image component to prevent it from

being drawn by the existing non-UI systems and to remove unused

parameters.

In extract I added a new system to calculate the required values for the

texture atlas image, this extracts into the same resource as the

existing UI Image and Text components.

This should have minimal performance impact because if texture atlas is

not present then the exact same code path is followed. Also there should

be no unintended behavior changes because without the new components the

existing systems write the exact same resulting data.

I also added an example showing the sprite working and a system to

advance the animation on space bar presses.

Naming is hard and I would accept any feedback on the bundle name!

---

## Changelog

> Added TextureAtlasImageBundle

---------

Co-authored-by: ickshonpe <david.curthoys@googlemail.com>

# Objective

Implement borders for UI nodes.

Relevant discussion: #7785

Related: #5924, #3991

<img width="283" alt="borders"

src="https://user-images.githubusercontent.com/27962798/220968899-7661d5ec-6f5b-4b0f-af29-bf9af02259b5.PNG">

## Solution

Add an extraction function to draw the borders.

---

Can only do one colour rectangular borders due to the limitations of the

Bevy UI renderer.

Maybe it can be combined with #3991 eventually to add curved border

support.

## Changelog

* Added a component `BorderColor`.

* Added the `extract_uinode_borders` system to the UI Render App.

* Added the UI example `borders`

---------

Co-authored-by: Nico Burns <nico@nicoburns.com>

# Objective

The AccessKit PR removed the loading of the image logo from the UI

example.

It also added some alt text with `TextStyle::default()` as a child of

the logo image node.

If you give an image node a child, then its size is no longer determined

by the measurefunc that preserves its aspect ratio. Instead, its width

and height are determined by the constraints set on the node and the

size of the contents of the node. In this case, the image node is set to

have a width of 500 with no constraints on its height. So it looks at

its child node to determine what height it should take. Because the child has `TextStyle::default`, it allocates no space for the text, so the height of the image node is set to zero and the logo isn't drawn.

Fixes #8805

## Solution

Load the image, and set min_size and max_size constraints of 500 by 125

pixels.

# Objective

The goal of this PR is to receive touchpad magnification and rotation

events.

## Solution

Implement counterparts for winit's `TouchpadMagnify` and `TouchpadRotate`

events.

Adjust the `mouse_input_events.rs` example to debug magnify and rotate

events.

Since winit only reports these events on macOS, the Bevy events for

touchpad magnification and rotation are currently only fired on macOS.

# Objective

Be consistent with `Resource`s and `Component`s and have `Event` types

be more self-documenting.

Although not susceptible to accidentally using a function instead of a

value due to `Event`s only being initialized by their type, much of the

same reasoning for removing the blanket impl on `Resource` also applies

here.

* Not immediately obvious if a type is intended to be an event

* Prevent invisible conflicts if the same third-party or primitive types

are used as events

* Allows for further extensions (e.g. opt-in warning for missed events)

## Solution

Remove the blanket impl for the `Event` trait. Add a derive macro for

it.

---

## Changelog

- `Event` is no longer implemented for all applicable types. Add the

`#[derive(Event)]` macro for events.

## Migration Guide

* Add the `#[derive(Event)]` macro for events. Third-party types used as

events should be wrapped in a newtype.
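
The newtype advice can be illustrated without Bevy. In this sketch the `Event` trait is a hand-written stand-in for what `#[derive(Event)]` would provide, and `TimeoutElapsed` and `Events` are hypothetical names; the point is only that a foreign type such as `std::time::Duration` gets wrapped in a local type that opts in explicitly.

```rust
use std::time::Duration;

/// Stand-in marker trait; in Bevy this would come from `#[derive(Event)]`.
trait Event: Send + Sync + 'static {}

/// Newtype around a type we do not own, so it can be used as an event
/// without every `Duration` in the program implicitly becoming one.
struct TimeoutElapsed(Duration);
impl Event for TimeoutElapsed {}

/// Minimal event queue that only accepts types that opted in as events.
struct Events<E: Event> {
    queue: Vec<E>,
}

impl<E: Event> Events<E> {
    fn new() -> Self {
        Self { queue: Vec::new() }
    }

    fn send(&mut self, event: E) {
        self.queue.push(event);
    }

    /// Returns all queued events and leaves the queue empty.
    fn drain(&mut self) -> Vec<E> {
        std::mem::take(&mut self.queue)
    }
}
```

Because there is no blanket impl, `Events<Duration>` does not compile, while `Events<TimeoutElapsed>` does, which makes the intent visible at the type level.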

# Objective

- Fixes https://github.com/bevyengine/bevy/issues/8586.

## Solution

- Add `preferred_theme` field to `Window` and set it on window creation

- Add `window_theme` field to `InternalWindowState` to store current

window theme

- Expose winit `WindowThemeChanged` event

---------

Co-authored-by: hate <15314665+hate@users.noreply.github.com>

Co-authored-by: Nicola Papale <nicopap@users.noreply.github.com>

Co-authored-by: Alice Cecile <alice.i.cecile@gmail.com>

Co-authored-by: François <mockersf@gmail.com>

# Objective

I was trying to add some `Diagnostics` to have a better break down of

performance but I noticed that the current implementation uses a

`ResMut` which forces the functions to all run sequentially whereas

before they could run in parallel. This created too great a performance

penalty to be usable.

## Solution

This PR reworks how the diagnostics work with a couple of breaking

changes. The idea is to change how `Diagnostics` works by changing it to

a `SystemParam`. This allows us to hold a `Deferred` buffer of

measurements that can be applied later, avoiding the need for multiple

mutable references to the hashmap. This means we can run systems that

write diagnostic measurements in parallel.
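
The idea of buffering measurements locally and applying them later can be sketched with the standard library alone. The types below are simplified stand-ins (ids are plain `u32`, and `DiagnosticsBuffer` is a hypothetical name for the deferred buffer), and threads stand in for systems scheduled in parallel.

```rust
use std::collections::HashMap;
use std::thread;

/// Central store of measurements, keyed by a diagnostic id.
#[derive(Default)]
struct DiagnosticsStore {
    measurements: HashMap<u32, Vec<f64>>,
}

/// Per-system buffer: writing to it needs no access to the shared store.
#[derive(Default)]
struct DiagnosticsBuffer {
    pending: Vec<(u32, f64)>,
}

impl DiagnosticsBuffer {
    fn add_measurement(&mut self, id: u32, value: impl FnOnce() -> f64) {
        self.pending.push((id, value()));
    }

    /// Applies the buffered measurements at a later sync point.
    fn apply(self, store: &mut DiagnosticsStore) {
        for (id, value) in self.pending {
            store.measurements.entry(id).or_default().push(value);
        }
    }
}

/// Runs two "systems" in parallel, then applies their buffers one by one.
fn run_systems_in_parallel(store: &mut DiagnosticsStore) {
    let (first, second) = thread::scope(|scope| {
        let first = scope.spawn(|| {
            let mut buffer = DiagnosticsBuffer::default();
            buffer.add_measurement(1, || 10.0);
            buffer
        });
        let second = scope.spawn(|| {
            let mut buffer = DiagnosticsBuffer::default();
            buffer.add_measurement(2, || 20.0);
            buffer
        });
        (first.join().unwrap(), second.join().unwrap())
    });
    // Only this step needs mutable access to the store.
    first.apply(store);
    second.apply(store);
}
```

Neither worker touches the store while it is measuring, so the exclusive borrow is only needed for the short apply step.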

Firstly, we rename the old `Diagnostics` to `DiagnosticsStore`. This

clears up the original name for the new interface while allowing us to

preserve more closely the original API.

Then we create a new `Diagnostics` struct which implements `SystemParam`

and contains a deferred `SystemBuffer`. This can be used very similar to

the old `Diagnostics` for writing new measurements.

```rust

fn system(mut diagnostics: ResMut<Diagnostics>) { diagnostics.add_measurement(ID, || 10.0); }

// changes to

fn system(mut diagnostics: Diagnostics) { diagnostics.add_measurement(ID, || 10.0); }

```

For reading the diagnostics, the user needs to change from `Diagnostics`

to `DiagnosticsStore` but otherwise the function calls are the same.

Finally, we add a new method to the `App` for registering diagnostics.

This replaces the old method of creating a startup system and adding it

manually.

Testing it, this PR does indeed allow Diagnostic systems to be run in

parallel.

## Changelog

- Change `Diagnostics` to implement `SystemParam` which allows

diagnostic systems to run in parallel.

## Migration Guide

- Register `Diagnostic`'s using the new

`app.register_diagnostic(Diagnostic::new(DIAGNOSTIC_ID,

"diagnostic_name", 10));`

- In systems for writing new measurements, change `mut diagnostics:

ResMut<Diagnostics>` to `mut diagnostics: Diagnostics` to allow the

systems to run in parallel.

- In systems for reading measurements, change `diagnostics:

Res<Diagnostics>` to `diagnostics: Res<DiagnosticsStore>`.

# Objective

- Introduce a stable alternative to

[`std::any::type_name`](https://doc.rust-lang.org/std/any/fn.type_name.html).

- Rewrite of #5805 with heavy inspiration in design.

- On the path to #5830.

- Part of solving #3327.

## Solution

- Add a `TypePath` trait for static stable type path/name information.

- Add a `TypePath` derive macro.

- Add a `impl_type_path` macro for implementing internal and foreign

types in `bevy_reflect`.
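
As a rough picture of what a stable type path buys, the sketch below defines a stand-in trait with hand-written impls. It is not the trait added by this PR (which has its own signatures and a derive macro); the method shapes, the `Player` type, and its path string are assumptions chosen for illustration.

```rust
/// Stand-in for a stable, hand-controlled type path (not Bevy's actual trait).
trait TypePath {
    /// Full path, chosen by the implementor rather than the compiler.
    fn type_path() -> String;
    /// Path without module prefixes.
    fn short_type_path() -> String;
}

struct Player;

impl TypePath for Player {
    fn type_path() -> String {
        "my_game::entities::Player".to_string()
    }
    fn short_type_path() -> String {
        "Player".to_string()
    }
}

// Generic containers compose the paths of their parameters.
impl<T: TypePath> TypePath for Vec<T> {
    fn type_path() -> String {
        format!("alloc::vec::Vec<{}>", T::type_path())
    }
    fn short_type_path() -> String {
        format!("Vec<{}>", T::short_type_path())
    }
}
```

Unlike `std::any::type_name`, whose output the standard library documents as not guaranteed to stay the same across compiler versions, these strings only change when an implementor changes them.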

---

## Changelog

- Added `TypePath` trait.

- Added `DynamicTypePath` trait and `get_type_path` method to `Reflect`.

- Added a `TypePath` derive macro.

- Added a `bevy_reflect::impl_type_path` for implementing `TypePath` on

internal and foreign types in `bevy_reflect`.

- Changed `bevy_reflect::utility::(Non)GenericTypeInfoCell` to

`(Non)GenericTypedCell<T>` which allows us to be generic over both

`TypeInfo` and `TypePath`.

- `TypePath` is now a supertrait of `Asset`, `Material` and

`Material2d`.

- `impl_reflect_struct` needs a `#[type_path = "..."]` attribute to be

specified.

- `impl_reflect_value` needs to either specify path starting with a

double colon (`::core::option::Option`) or an `in my_crate::foo`

declaration.

- Added `bevy_reflect_derive::ReflectTypePath`.

- Most uses of `Ident` in `bevy_reflect_derive` changed to use

`ReflectTypePath`.

## Migration Guide

- Implementors of `Asset`, `Material` and `Material2d` now also need to

derive `TypePath`.

- Manual implementors of `Reflect` will need to implement the new

`get_type_path` method.

## Open Questions

- [x] ~This PR currently does not migrate any usages of

`std::any::type_name` to use `bevy_reflect::TypePath` to ease the review

process. Should it?~ Migration will be left to a follow-up PR.

- [ ] This PR adds a lot of `#[derive(TypePath)]` and `T: TypePath` to

satisfy new bounds, mostly when deriving `TypeUuid`. Should we make

`TypePath` a supertrait of `TypeUuid`? [Should we remove `TypeUuid` in

favour of

`TypePath`?](2afbd85532 (r961067892))

# Objective

- `apply_system_buffers` is an unhelpful name: it introduces a new

internal-only concept

- this is particularly rough for beginners as reasoning about how

commands work is a critical stumbling block

## Solution

- rename `apply_system_buffers` to the more descriptive `apply_deferred`

- rename related fields, arguments and methods in the internals of bevy_ecs for consistency

- update the docs

## Changelog

`apply_system_buffers` has been renamed to `apply_deferred`, to more

clearly communicate its intent and relation to `Deferred` system

parameters like `Commands`.

## Migration Guide

- `apply_system_buffers` has been renamed to `apply_deferred`

- the `apply_system_buffers` method on the `System` trait has been

renamed to `apply_deferred`

- the `is_apply_system_buffers` function has been replaced by

`is_apply_deferred`

- `Executor::set_apply_final_buffers` is now

`Executor::set_apply_final_deferred`

- `Schedule::apply_system_buffers` is now `Schedule::apply_deferred`

---------

Co-authored-by: JoJoJet <21144246+JoJoJet@users.noreply.github.com>

# Objective

- Showcase the use of `or_else()` as requested. Fixes

https://github.com/bevyengine/bevy/issues/8702

## Solution

- Add an uninitialized resource `Unused`

- Use `or_else()` to evaluate a second run condition

- Add documentation explaining how `or_else()` works

# Objective

Since #8446, example `shader_prepass` logs the following error on my mac

m1:

```

ERROR bevy_render::render_resource::pipeline_cache: failed to process shader:

error: Entry point fragment at Fragment is invalid

= Argument 1 varying error

= Capability MULTISAMPLED_SHADING is not supported

```

The example displays the 3D scene but doesn't change with the prepass selected.

Maybe related to this update in naga:

cc3a8ac737

## Solution

- Disable MSAA in the example, and check if it's enabled in the shader

# Objective

- fix clippy lints early to make sure CI doesn't break when they get

promoted to stable

- have a noise-free `clippy` experience for nightly users

## Solution

- `cargo clippy --fix`

- replace `filter_map(|x| x.ok())` with `map_while(|x| x.ok())` to fix

potential infinite loop in case of IO error
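
The difference between the two adapters shows up with a source that keeps returning the same error. The sketch below simulates such a source with plain iterators (`failing_source` and `collect_until_error` are hypothetical names), since a real failing reader is hard to reproduce in a small example.

```rust
use std::iter;

/// Simulated reader: two good items, then the same error forever,
/// like an iterator over lines whose underlying file keeps failing.
fn failing_source() -> impl Iterator<Item = Result<u32, String>> {
    let good: Vec<Result<u32, String>> = vec![Ok(1), Ok(2)];
    good.into_iter()
        .chain(iter::repeat(Err("io error".to_string())))
}

/// Stops at the first error instead of skipping it.
/// With `filter_map(|x| x.ok())` this would never return for `failing_source`,
/// because every error is silently dropped and the iterator never ends.
fn collect_until_error(items: impl Iterator<Item = Result<u32, String>>) -> Vec<u32> {
    items.map_while(|x| x.ok()).collect()
}
```

`map_while` ends the iteration at the first `None`, so a persistent error terminates the loop rather than spinning on it.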

# Objective

Fix the examples many_buttons and many_glyphs not working on the WebGPU

examples page. Currently they both fail with the following error:

```

panicked at 'Only FIFO/Auto* is supported on web', ..../wgpu-0.16.0/src/backend/web.rs:1162:13

```

## Solution

Change `present_mode` from `PresentMode::Immediate` to

`PresentMode::AutoNoVsync`. AutoNoVsync seems to be the common mode used by

other examples of this kind.

# Objective

- Simplify API and make authoring styles easier

See:

https://github.com/bevyengine/bevy/issues/8540#issuecomment-1536177102

## Solution

- The `size`, `min_size`, `max_size`, and `gap` properties have been

replaced by `width`, `height`, `min_width`, `min_height`, `max_width`,

`max_height`, `row_gap`, and `column_gap` properties

---

## Changelog

- Flattened `Style` properties that have a `Size` value directly into

`Style`

## Migration Guide

- The `size`, `min_size`, `max_size`, and `gap` properties have been

replaced by the `width`, `height`, `min_width`, `min_height`,

`max_width`, `max_height`, `row_gap`, and `column_gap` properties. Use

the new properties instead.

---------

Co-authored-by: ickshonpe <david.curthoys@googlemail.com>

# Objective

- Fix #5631

## Solution

- Wait 50ms (configurable) after the last modification event before

reloading an asset.
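
The waiting behaviour is a debounce. A minimal sketch of that technique follows; it is not the watcher code from this PR, `ReloadDebouncer` and its methods are hypothetical, and timestamps are passed in explicitly so the logic can be exercised without sleeping.

```rust
use std::collections::HashMap;
use std::time::{Duration, Instant};

/// Delays reloads until a path has been quiet for `delay`.
struct ReloadDebouncer {
    delay: Duration,
    last_event: HashMap<String, Instant>,
}

impl ReloadDebouncer {
    fn new(delay: Duration) -> Self {
        Self { delay, last_event: HashMap::new() }
    }

    /// Records a modification event, restarting the wait for that path.
    fn on_modified(&mut self, path: &str, now: Instant) {
        self.last_event.insert(path.to_string(), now);
    }

    /// Returns (and forgets) the paths whose last event is at least `delay` old.
    fn take_ready(&mut self, now: Instant) -> Vec<String> {
        let delay = self.delay;
        let mut ready: Vec<String> = self
            .last_event
            .iter()
            .filter(|(_, at)| now.duration_since(**at) >= delay)
            .map(|(path, _)| path.clone())
            .collect();
        ready.sort();
        for path in &ready {
            self.last_event.remove(path);
        }
        ready
    }
}
```

An editor that writes a file in several bursts produces several events in quick succession; with the debounce, only the last one leads to a reload.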

---

## Changelog

- `AssetPlugin::watch_for_changes` is now a `ChangeWatcher` instead of a

`bool`

- Fixed https://github.com/bevyengine/bevy/issues/5631

## Migration Guide

- Replace `AssetPlugin::watch_for_changes: true` with e.g.

`ChangeWatcher::with_delay(Duration::from_millis(200))`

---------

Co-authored-by: François <mockersf@gmail.com>

# Objective

- Cleanup file tree

## Solution

A mysterious mod.rs lies in the scene_viewer directory. It seems

completely useless, everything ignores it and it doesn't affect

anything.

We cruelly remove it, making the world a less whimsical place. A

dystopian drive for pure and complete order compels us to eliminate all

that is useless, for clarity and to prevent the wonder and beauty of

confusion.

# Objective

`ScheduleRunnerPlugin` was still configured via a resource, meaning

users would be able to change the settings while the app is running, but

the changes wouldn't have an effect.

## Solution

Configure plugin directly

---

## Changelog

- Changed: merged `ScheduleRunnerSettings` into `ScheduleRunnerPlugin`

## Migration Guide

- instead of inserting the `ScheduleRunnerSettings` resource, configure

the `ScheduleRunnerPlugin`

# Objective

Frustum culling for 2D components has been enabled since #7885.

Fixes #8490

## Solution

Re-introduced the comments about frustum culling in the

many_animated_sprites.rs and many_sprites.rs examples.

---------

Co-authored-by: Nicola Papale <nicopap@users.noreply.github.com>

Co-authored-by: François <mockersf@gmail.com>

# Objective

- Support WebGPU

- alternative to #5027 that doesn't need any async / await

- fixes #8315

- Surprise fix #7318

## Solution

### For async renderer initialisation

- Update the plugin lifecycle:

- app builds the plugin

- calls `plugin.build`

- registers the plugin

- app starts the event loop

- event loop waits for `ready` of all registered plugins in the same

order

- returns `true` by default

- then call all `finish` then all `cleanup` in the same order as

registered

- then execute the schedule

In the case of the renderer, to avoid anything async:

- building the renderer plugin creates a detached task that will send

back the initialised renderer through a mutex in a resource

- `ready` will wait for the renderer to be present in the resource

- `finish` will take that renderer and place it in the expected

resources by other plugins

- other plugins (that expect the renderer to be available) `finish` are

called and they are able to set up their pipelines

- `cleanup` is called, only custom one is still for pipeline rendering
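
The ordering described above can be modelled with a small stand-in. This sketch is not Bevy's `App`: the trait below only records calls into a log, `run_lifecycle` is a hypothetical driver, and the `Renderer` plugin fakes asynchronous initialisation by reporting "not ready" for a fixed number of polls.

```rust
use std::cell::Cell;

/// Stand-in plugin trait with the four lifecycle hooks.
trait Plugin {
    fn name(&self) -> &'static str;
    fn build(&self, log: &mut Vec<String>) {
        log.push(format!("build {}", self.name()));
    }
    /// Returns `true` by default, as in the description above.
    fn ready(&self) -> bool {
        true
    }
    fn finish(&self, log: &mut Vec<String>) {
        log.push(format!("finish {}", self.name()));
    }
    fn cleanup(&self, log: &mut Vec<String>) {
        log.push(format!("cleanup {}", self.name()));
    }
}

struct Core;
impl Plugin for Core {
    fn name(&self) -> &'static str { "core" }
}

/// Pretends its initialisation completes after a few polls.
struct Renderer {
    polls_left: Cell<u32>,
}
impl Plugin for Renderer {
    fn name(&self) -> &'static str { "renderer" }
    fn ready(&self) -> bool {
        let left = self.polls_left.get();
        if left == 0 {
            true
        } else {
            self.polls_left.set(left - 1);
            false
        }
    }
}

/// Hypothetical driver: build all, wait for readiness, finish all, clean up all.
fn run_lifecycle(plugins: Vec<Box<dyn Plugin>>) -> Vec<String> {
    let mut log = Vec::new();
    for plugin in &plugins {
        plugin.build(&mut log);
    }
    // Stand-in for the event loop polling `ready` on every plugin.
    while !plugins.iter().all(|plugin| plugin.ready()) {
        log.push("waiting".to_string());
    }
    for plugin in &plugins {
        plugin.finish(&mut log);
    }
    for plugin in &plugins {
        plugin.cleanup(&mut log);
    }
    log.push("run schedule".to_string());
    log
}
```

The key property is that no `finish` runs until every plugin reports ready, so plugins that depend on the renderer can rely on it being in place by then.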

### For WebGPU support

- update the `build-wasm-example` script to support passing `--api

webgpu` that will build the example with WebGPU support

- feature for webgl2 was always enabled when building for wasm. it's now in the default feature list and enabled on all platforms, so any check for this feature must also check that the target_arch is `wasm32`

---

## Migration Guide

- `Plugin::setup` has been renamed `Plugin::cleanup`

- `Plugin::finish` has been added, and plugins adding pipelines should

do it in this function instead of `Plugin::build`

```rust

// Before
impl Plugin for MyPlugin {
    fn build(&self, app: &mut App) {
        app.init_resource::<MyResource>()
            .add_systems(Update, my_system);

        let render_app = match app.get_sub_app_mut(RenderApp) {
            Ok(render_app) => render_app,
            Err(_) => return,
        };

        render_app
            .init_resource::<RenderResourceNeedingDevice>()
            .init_resource::<OtherRenderResource>();
    }
}

// After
impl Plugin for MyPlugin {
    fn build(&self, app: &mut App) {
        app.init_resource::<MyResource>()
            .add_systems(Update, my_system);

        let render_app = match app.get_sub_app_mut(RenderApp) {
            Ok(render_app) => render_app,
            Err(_) => return,
        };

        // Resources that don't need the render device can stay in `build`.
        render_app
            .init_resource::<OtherRenderResource>();
    }

    fn finish(&self, app: &mut App) {
        let render_app = match app.get_sub_app_mut(RenderApp) {
            Ok(render_app) => render_app,
            Err(_) => return,
        };

        // Resources that need the render device are initialised in `finish`.
        render_app
            .init_resource::<RenderResourceNeedingDevice>();
    }
}

```

# Objective

- Enable taking a screenshot in wasm

- Followup on #7163

## Solution

- Create a blob from the image data, generate a url to that blob, add an

`a` element to the document linking to that url, click on that element,

then revoke the url

- This will automatically trigger a download of the screenshot file in

the browser

# Objective

- Standardize on screen instructions in examples:

- top left, bottom left when better

- white, black when better

- same margin (12px) and font size (20)

## Solution

- Started with a few examples, let's reach consensus then document and

open issues for the rest

# Objective

Provide the ability to trigger controller rumbling (force-feedback) with

a cross-platform API.

## Solution

This adds the `GamepadRumbleRequest` event to `bevy_input` and adds a

system in `bevy_gilrs` to read them and rumble controllers accordingly.

It's a relatively primitive API with a `duration` in seconds and

`GamepadRumbleIntensity` with values for the weak and strong gamepad

motors. It is an almost 1-to-1 mapping to platform APIs. Some

platforms refer to these motors as left and right, and low frequency and

high frequency, but by convention, they're usually the same.

I used #3868 as a starting point, updated to main, removed the low-level

gilrs effect API, and moved the requests to `bevy_input` and exposed the

strong and weak intensities.

I intend this to hopefully be a non-controversial cross-platform

starting point we can build upon to eventually support more fine-grained

control (closer to the gilrs effect API)

---

## Changelog

### Added

- Gamepads can now be rumbled by sending the `GamepadRumbleRequest`

event.

---------

Co-authored-by: Nicola Papale <nico@nicopap.ch>

Co-authored-by: Alice Cecile <alice.i.cecile@gmail.com>

Co-authored-by: Nicola Papale <nicopap@users.noreply.github.com>

Co-authored-by: Bruce Reif (Buswolley) <bruce.reif@dynata.com>

# Objective

Add a bounding box gizmo

## Changes

- Added the `AabbGizmo` component that will draw the `Aabb` component on

that entity.

- Added an option to draw all bounding boxes in a scene on the

`GizmoConfig` resource.

- Added `TransformPoint` trait to generalize over the point

transformation methods on various transform types (e.g `Transform` and

`GlobalTransform`).

- Changed the `Gizmos::cuboid` method to accept an `impl TransformPoint`

instead of separate translation, rotation, and scale.
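
The benefit of generalizing over the transform type can be sketched as follows. The trait here is a stand-in that works on plain arrays, and `Translation`, `UniformScale`, and `cuboid_corners` are hypothetical; the actual trait and gizmo method in this PR operate on Bevy's math types.

```rust
/// Stand-in trait: anything that can map a point from local to world space.
trait TransformPoint {
    fn transform_point(&self, point: [f32; 3]) -> [f32; 3];
}

struct Translation([f32; 3]);
struct UniformScale(f32);

impl TransformPoint for Translation {
    fn transform_point(&self, p: [f32; 3]) -> [f32; 3] {
        [p[0] + self.0[0], p[1] + self.0[1], p[2] + self.0[2]]
    }
}

impl TransformPoint for UniformScale {
    fn transform_point(&self, p: [f32; 3]) -> [f32; 3] {
        [p[0] * self.0, p[1] * self.0, p[2] * self.0]
    }
}

/// Returns the eight corners of a unit cube centered on the origin,
/// mapped through whatever transform type the caller provides.
fn cuboid_corners(transform: impl TransformPoint) -> Vec<[f32; 3]> {
    let mut corners = Vec::with_capacity(8);
    for x in [-0.5_f32, 0.5] {
        for y in [-0.5_f32, 0.5] {
            for z in [-0.5_f32, 0.5] {
                corners.push(transform.transform_point([x, y, z]));
            }
        }
    }
    corners
}
```

The drawing code only needs "a way to transform a point", so it no longer has to take translation, rotation, and scale as separate arguments.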

# Objective

The objective is to be able to load data from "application-specific"

(see glTF spec 3.7.2.1.) vertex attribute semantics from glTF files into

Bevy meshes.

## Solution

Rather than probe the glTF for the specific attributes supported by

Bevy, this PR changes the loader to iterate through all the attributes

and map them onto `MeshVertexAttribute`s. This mapping includes all the

previously supported attributes, plus it is now possible to add mappings

using the `add_custom_vertex_attribute()` method on `GltfPlugin`.
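
The "iterate and map" approach described above can be sketched with stand-in types. `VertexAttribute`, `LoaderPlugin`, the numeric ids, and the built-in table below are hypothetical simplifications, not the real `GltfPlugin` or `MeshVertexAttribute` definitions; only the method name `add_custom_vertex_attribute` is taken from the text, and its signature here is assumed. The `_BARYCENTRIC` semantic is used as an example of an application-specific name (the glTF spec requires these to start with an underscore).

```rust
use std::collections::HashMap;

/// Stand-in for a mesh vertex attribute descriptor (hypothetical, simplified).
#[derive(Debug, Clone, PartialEq)]
pub struct VertexAttribute {
    pub name: &'static str,
    pub id: u64,
}

/// Stand-in for a loader plugin that stores user-registered mappings.
/// It has a field, so it is built with `LoaderPlugin::default()`.
#[derive(Default)]
pub struct LoaderPlugin {
    custom: HashMap<String, VertexAttribute>,
}

impl LoaderPlugin {
    /// Registers a mapping from a glTF semantic name to a mesh attribute (builder style).
    pub fn add_custom_vertex_attribute(
        mut self,
        semantic: &str,
        attribute: VertexAttribute,
    ) -> Self {
        self.custom.insert(semantic.to_string(), attribute);
        self
    }

    /// Resolves one semantic: built-in mappings first, then custom ones.
    fn resolve(&self, semantic: &str) -> Option<VertexAttribute> {
        let builtin = match semantic {
            "POSITION" => Some(VertexAttribute { name: "Vertex_Position", id: 0 }),
            "NORMAL" => Some(VertexAttribute { name: "Vertex_Normal", id: 1 }),
            "TEXCOORD_0" => Some(VertexAttribute { name: "Vertex_Uv", id: 2 }),
            _ => None,
        };
        builtin.or_else(|| self.custom.get(semantic).cloned())
    }

    /// Maps every semantic found on a primitive, skipping those with no mapping.
    pub fn map_attributes(&self, semantics: &[&str]) -> Vec<VertexAttribute> {
        semantics.iter().filter_map(|&s| self.resolve(s)).collect()
    }
}
```

With this structure, supporting a new attribute means registering one more mapping rather than adding another special case to the loader.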

## Changelog

- Add support for loading custom vertex attributes from glTF files.

- Add the `custom_gltf_vertex_attribute.rs` example to illustrate

loading custom vertex attributes.

## Migration Guide

- If you were instantiating `GltfPlugin` using the unit-like struct

syntax, you must instead use `GltfPlugin::default()` as the type is no

longer unit-like.

Links in the API docs are nice. I noticed that there were several places

where structs / functions and other things were referenced in the docs,

but weren't linked. I added the links where possible / logical.

---------

Co-authored-by: Alice Cecile <alice.i.cecile@gmail.com>

Co-authored-by: François <mockersf@gmail.com>

# Objective

- Enabling `AlphaMode::Opaque` in the `shader_prepass` example crashes. The

issue seems to be that enabling opaque also generates `vertex_uvs`.

Fixes https://github.com/bevyengine/bevy/issues/8273

## Solution

- Use the vertex_uvs in the shader if they are present

# Objective

- Have a default font

## Solution

- Add a font based on FiraMono containing only ASCII characters and use

it as the default font

- It is behind a feature `default_font` enabled by default

- I also updated the examples to use it, except the UI examples, which still show

how to use a custom font

---

## Changelog

* If you display text without using the default handle provided by

`TextStyle`, the text will be displayed

# Objective

Add the ability to draw 2D arcs via gizmos

## Solution

- Added `arc_2d` function to `Gizmos`

- Added `arc_inner` function

- Added `Arc2dBuilder<'a, 's>`

- Updated `2d_gizmos.rs` example to draw an arc
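
As a rough illustration of what drawing an arc involves, the sketch below samples points along an arc so they can be joined into a line strip. The function `arc_points` and its parameter conventions (angles in radians, `start_angle` measured counter-clockwise from the +X axis) are assumptions made for this example and do not describe the actual `arc_2d` or `arc_inner` signatures.

```rust
/// Samples `segments + 1` points along a circular arc (hypothetical helper).
///
/// `start_angle` is measured counter-clockwise from the +X axis and
/// `arc_angle` is the angular extent of the arc, both in radians.
/// A `segments` value of 0 is treated as 1.
pub fn arc_points(
    center: [f32; 2],
    start_angle: f32,
    arc_angle: f32,
    radius: f32,
    segments: usize,
) -> Vec<[f32; 2]> {
    let segments = segments.max(1);
    (0..=segments)
        .map(|i| {
            // Fraction of the way along the arc, from 0.0 to 1.0.
            let t = i as f32 / segments as f32;
            let angle = start_angle + t * arc_angle;
            [
                center[0] + radius * angle.cos(),
                center[1] + radius * angle.sin(),
            ]
        })
        .collect()
}
```

Consecutive points can then be connected with straight line segments; more segments give a smoother arc at the cost of more lines.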

---------

Co-authored-by: kjolnyr <kjolnyr@protonmail.ch>

Co-authored-by: Alice Cecile <alice.i.cecile@gmail.com>

Co-authored-by: ira <JustTheCoolDude@gmail.com>

Fixes https://github.com/bevyengine/bevy/issues/1207

# Objective

Right now, it's impossible to capture a screenshot of the entire window

without forking Bevy. This is because:

- The swapchain texture never has the COPY_SRC usage

- It can't be accessed without taking ownership of it

- Taking ownership of it breaks *a lot* of stuff

## Solution

- Introduce a dedicated API for taking a screenshot of a given Bevy

window, and guarantee this screenshot will always match up with what

gets put on the screen.

---

## Changelog

- Added the `ScreenshotManager` resource with two functions,

`take_screenshot` and `save_screenshot_to_disk`