# Objective

I'm reading some of the rendering code for the first time, and using

this opportunity to flesh out some docs for the parts that I did not

understand.

rather than a questionable design choice is not a breaking change.

---------

Co-authored-by: BD103 <59022059+BD103@users.noreply.github.com>

# Objective

Adopted #11748

## Solution

I've rebased on main to fix the merge conflicts. ~~Not quite ready to

merge yet~~

* Clippy is happy and the tests are passing, but...

* ~~The new shapes in `examples/2d/2d_shapes.rs` don't look right at

all~~ Never mind, looks like radians and degrees just got mixed up at

some point?

* I have updated one doc comment based on a review in the original PR.

---------

Co-authored-by: Alexis "spectria" Horizon <spectria.limina@gmail.com>

Co-authored-by: Alexis "spectria" Horizon <118812919+spectria-limina@users.noreply.github.com>

Co-authored-by: Joona Aalto <jondolf.dev@gmail.com>

Co-authored-by: Alice Cecile <alice.i.cecile@gmail.com>

Co-authored-by: Ben Harper <ben@tukom.org>

# Objective

Allow the `Tetrahedron` primitive to be used for mesh generation. This

is part of ongoing work to unify the capabilities of `bevy_math`

primitives.

## Solution

`Tetrahedron` implements `Meshable`. Essentially, each face is just

meshed as a `Triangle3d`, but first there is an inversion step when the

signed volume of the tetrahedron is negative to ensure that the faces

all actually point outward.
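The orientation check described above can be sketched in plain Rust. This is a minimal illustration, not the code from this PR: the `Vec3` alias and the helper names are hypothetical, and which sign counts as "outward" depends on the winding convention of the faces. Here two vertices are swapped to flip the orientation, whereas the PR describes inverting the faces.

```rust
// Plain-array stand-in for a 3D vector type (assumption for this sketch).
type Vec3 = [f32; 3];

fn sub(a: Vec3, b: Vec3) -> Vec3 {
    [a[0] - b[0], a[1] - b[1], a[2] - b[2]]
}

fn cross(a: Vec3, b: Vec3) -> Vec3 {
    [
        a[1] * b[2] - a[2] * b[1],
        a[2] * b[0] - a[0] * b[2],
        a[0] * b[1] - a[1] * b[0],
    ]
}

fn dot(a: Vec3, b: Vec3) -> f32 {
    a[0] * b[0] + a[1] * b[1] + a[2] * b[2]
}

/// Signed volume of the tetrahedron `(a, b, c, d)`.
/// The sign flips whenever two vertices are exchanged.
fn signed_volume(vertices: [Vec3; 4]) -> f32 {
    let [a, b, c, d] = vertices;
    dot(sub(a, d), cross(sub(b, d), sub(c, d))) / 6.0
}

/// Hypothetical helper: returns the vertices in an order whose signed
/// volume is non-negative, so faces can be built with one fixed winding.
fn with_non_negative_volume(mut vertices: [Vec3; 4]) -> [Vec3; 4] {
    if signed_volume(vertices) < 0.0 {
        // Exchanging any two vertices mirrors the orientation.
        vertices.swap(0, 1);
    }
    vertices
}
```

Once the orientation is normalized this way, every face can be emitted with the same winding rule and its normal points away from the opposite vertex.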

## Testing

I loaded up some examples and hackily exchanged existing meshes with the

new one to see that it works as expected.

# Objective

This is a long-standing bug that I have experienced since many versions

of Bevy ago, possibly forever. Today I finally wanted to report it, but

the fix was so easy that I just went and fixed it. :)

The problem is that 2D graphics look blurry at odd-sized window

resolutions. This is with the **default** 2D camera configuration! The

issue will also manifest itself with any Orthographic Projection with

`ScalingMode::WindowSize` where the viewport origin is not at one of the

corners, such as the default where the origin point is at the center.

The issue happens because the Bevy orthographic projection origin point

is specified as a fraction to be multiplied by the size. For example,

the default (origin at center) is `(0.5, 0.5)`. When this value is

multiplied by the window size, it can result in fractional values for

the actual origin of the projection, thus placing the camera "between

pixels" and misaligning the entire pixel grid.

With the default value, this happens at odd-numbered window resolutions.

It is very easy to reproduce the issue by running any Bevy 2D app with a

resizable window, and slowly resizing the window pixel by pixel. As you

move the mouse to resize the window, you can see how the 2D graphics

inside the window alternate between "crisp, blurry, crisp, blurry, ...".

If you change the projection's origin to be at the corner (say, `(0.0,

0.0)`) and run the app again, the graphics always look crisp,

regardless of window size.

Here are screenshots from **before** this PR, to illustrate the issue:

Even window size:

Odd window size:

## Solution

The solution is easy: just round the computed origin values for the

projection.

To make it work reliably for the general case, I decided to:

- Only do it for `ScalingMode::WindowSize`, as it doesn't make sense for

other scaling modes.

- Round to the nearest multiple of the pixel scale, if it is not 1.0.

This ensures the "pixels" stay aligned even if scaled.
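The rounding step can be illustrated with a one-axis sketch. The function name and the exact way the pixel scale enters the computation are assumptions made for this example; the real projection code works in its own units and may order the multiply and divide differently.

```rust
/// Hypothetical helper: computes the projection origin along one axis
/// from the window size and the fractional viewport origin, then snaps
/// it to the nearest multiple of `pixel_scale` (assumed non-zero).
fn snapped_origin(window_size: f32, origin_fraction: f32, pixel_scale: f32) -> f32 {
    // Unrounded origin: fractional for odd sizes with a centered origin.
    let raw = window_size * origin_fraction;
    // Round to the nearest multiple of the pixel scale.
    (raw / pixel_scale).round() * pixel_scale
}
```

With a centered origin (`0.5`), an 800-pixel window gives `400.0` with or without rounding, while an 801-pixel window gives `400.5` unrounded, which is the "between pixels" case the rounding removes.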

## Testing

I ran Bevy's examples as well as my own projects to ensure things look

correct. I set different values for the pixel scale to test the rounding

behavior and played around with resizing the window to verify that

everything is consistent.

---

## Changelog

Fixed:

- Orthographic projection now rounds the origin point if computed from

screen pixels, so that 2D graphics do not appear blurry at odd window

sizes.

# Objective

- Fixes scaling normals and tangents of meshes

## Solution

- When scaling a mesh by `Vec3::new(1., 1., -1.)`, the normals should be

flipped along the Z-axis. For example a normal of `Vec3::new(0., 0.,

1.)` should become `Vec3::new(0., 0., -1.)` after scaling. This is

achieved by multiplying the normal by the reciprocal of the scale,

checking for infinity and normalizing. Before, the normal was multiplied

by a covector of the scale, which is incorrect for normals.

- Tangents need to be multiplied by the `scale`, not its reciprocal as

before
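A minimal sketch of the two rules, using plain arrays instead of Bevy's vector types. The function names and the choice to replace non-finite components with zero are assumptions for illustration; the actual mesh code may handle the zero-scale case differently.

```rust
// Plain-array stand-in for a 3D vector type (assumption for this sketch).
type Vec3 = [f32; 3];

fn normalize_or_zero(v: Vec3) -> Vec3 {
    let len = (v[0] * v[0] + v[1] * v[1] + v[2] * v[2]).sqrt();
    if len > 0.0 && len.is_finite() {
        [v[0] / len, v[1] / len, v[2] / len]
    } else {
        [0.0; 3]
    }
}

/// Normals are multiplied by the reciprocal of the scale, then normalized.
fn scale_normal(normal: Vec3, scale: Vec3) -> Vec3 {
    let mut out = [0.0f32; 3];
    for i in 0..3 {
        let v = normal[i] / scale[i];
        // A zero scale component yields infinity or NaN; drop it here.
        out[i] = if v.is_finite() { v } else { 0.0 };
    }
    normalize_or_zero(out)
}

/// Tangents are multiplied by the scale itself, then normalized.
fn scale_tangent(tangent: Vec3, scale: Vec3) -> Vec3 {
    normalize_or_zero([
        tangent[0] * scale[0],
        tangent[1] * scale[1],
        tangent[2] * scale[2],
    ])
}
```

Scaling by `[1., 1., -1.]` turns the normal `[0., 0., 1.]` into `[0., 0., -1.]`, matching the example in the description.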

---------

Co-authored-by: vero <11307157+atlv24@users.noreply.github.com>

This commit makes us stop using the render world ECS for

`BinnedRenderPhase` and `SortedRenderPhase` and instead use resources

with `EntityHashMap`s inside. There are three reasons to do this:

1. We can use `clear()` to clear out the render phase collections

instead of recreating the components from scratch, allowing us to reuse

allocations.

2. This is a prerequisite for retained bins, because components can't be

retained from frame to frame in the render world, but resources can.

3. We want to move away from storing anything in components in the

render world ECS, and this is a step in that direction.

This patch results in a small performance benefit, due to point (1)

above.
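Point (1) can be illustrated with standard collections. The types below are hypothetical stand-ins (a `u32` for the entity, a `Vec<u32>` for phase items), not the real `ViewBinnedRenderPhases` layout; the sketch only shows why clearing in place keeps allocations alive across frames.

```rust
use std::collections::HashMap;

// Stand-in for an entity identifier (assumption for this sketch).
type Entity = u32;

#[derive(Default)]
struct PhaseSketch {
    items: Vec<u32>,
}

/// Hypothetical resource-like container keyed by view entity.
#[derive(Default)]
struct ViewPhasesSketch {
    phases: HashMap<Entity, PhaseSketch>,
}

impl ViewPhasesSketch {
    /// Empties every phase but keeps each `Vec`'s capacity, so the next
    /// frame can refill the lists without reallocating.
    fn clear_for_new_frame(&mut self) {
        for phase in self.phases.values_mut() {
            phase.items.clear();
        }
    }
}
```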

## Changelog

### Changed

* The `BinnedRenderPhase` and `SortedRenderPhase` render world

components have been replaced with `ViewBinnedRenderPhases` and

`ViewSortedRenderPhases` resources.

## Migration Guide

* The `BinnedRenderPhase` and `SortedRenderPhase` render world

components have been replaced with `ViewBinnedRenderPhases` and

`ViewSortedRenderPhases` resources. Instead of querying for the

components, look the camera entity up in the

`ViewBinnedRenderPhases`/`ViewSortedRenderPhases` tables.

# Objective

- All `ShapeMeshBuilder`s have some methods/implementations in common.

These are `fn build(&self) -> Mesh` and this implementation:

```rust
// Every shape mesh builder repeated this conversion.
impl From<ShapeMeshBuilder> for Mesh {
    fn from(builder: ShapeMeshBuilder) -> Mesh {
        builder.build()
    }
}
```

- For the sake of consistency, these can be moved into a shared trait

## Solution

- Add `trait MeshBuilder` containing a `fn build(&self) -> Mesh` and

implementing `MeshBuilder for ShapeMeshBuilder`

- Implement `From<T: MeshBuilder> for Mesh`
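The shape of the solution can be sketched with stand-in types. `Mesh` here is a placeholder struct and `CircleMeshBuilderSketch` is a hypothetical builder; only the trait and the blanket `From` implementation mirror what the description states.

```rust
// Placeholder for the real mesh type (assumption for this sketch).
#[derive(Debug, PartialEq)]
struct Mesh {
    vertex_count: usize,
}

/// Shared trait: every builder knows how to produce a `Mesh`.
trait MeshBuilder {
    fn build(&self) -> Mesh;
}

/// Hypothetical builder used only to exercise the trait.
struct CircleMeshBuilderSketch {
    resolution: usize,
}

impl MeshBuilder for CircleMeshBuilderSketch {
    fn build(&self) -> Mesh {
        Mesh { vertex_count: self.resolution }
    }
}

// One blanket implementation replaces the per-builder `From` impls.
impl<T: MeshBuilder> From<T> for Mesh {
    fn from(builder: T) -> Self {
        builder.build()
    }
}
```

With this in place, `let mesh: Mesh = builder.into();` works for any type implementing `MeshBuilder`, as long as the trait is in scope at the call site.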

## Migration Guide

- When calling `.build()` you need to import

`bevy_render::mesh::primitives::MeshBuilder`

# Objective

Remove the limit of `RenderLayer` by using a growable mask using

`SmallVec`.

Changes adopted from @UkoeHB's initial PR here

https://github.com/bevyengine/bevy/pull/12502 that contained additional

changes related to propagating render layers.


## Solution

The main thing needed to unblock this is removing `RenderLayers` from

our shader code. This primarily affects `DirectionalLight`. We are now

computing a `skip` field on the CPU that is then used to skip the light

in the shader.

## Testing

Checked a variety of examples and did a quick benchmark on `many_cubes`.

There were some existing problems identified during the development of

the original pr (see:

https://discord.com/channels/691052431525675048/1220477928605749340/1221190112939872347).

This PR shouldn't change any existing behavior besides removing the

layer limit (sans the comment in migration about `all` layers no longer

being possible).

---

## Changelog

Removed the limit on `RenderLayers` by using a growable bitset that only

allocates when layers greater than 64 are used.
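A growable layer mask can be sketched as follows. This is an illustration under stated assumptions: a `Vec<u64>` stands in for the `SmallVec` mentioned above (a `SmallVec` with inline storage would avoid the heap allocation for the first word, which this `Vec` version does not), and the type and method names are hypothetical.

```rust
/// Hypothetical growable bitset: bit `n` of the mask means "layer n".
#[derive(Debug, Clone, PartialEq, Eq)]
struct LayerMaskSketch {
    words: Vec<u64>,
}

impl LayerMaskSketch {
    fn none() -> Self {
        Self { words: vec![0] }
    }

    /// Adds a layer, growing the word list only when needed.
    fn with(mut self, layer: usize) -> Self {
        let (word, bit) = (layer / 64, layer % 64);
        if word >= self.words.len() {
            self.words.resize(word + 1, 0);
        }
        self.words[word] |= 1u64 << bit;
        self
    }

    /// True if the two masks share at least one layer. Words missing
    /// from the shorter mask are treated as zero.
    fn intersects(&self, other: &Self) -> bool {
        self.words
            .iter()
            .zip(&other.words)
            .any(|(a, b)| a & b != 0)
    }
}
```

Because the mask has no fixed upper bound, there is no finite "all layers" value, which is consistent with `RenderLayers::all()` being removed.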

## Migration Guide

- `RenderLayers::all()` no longer exists. Entities expecting to be

visible on all layers, e.g. lights, should compute the active layers

that are in use.

---------

Co-authored-by: robtfm <50659922+robtfm@users.noreply.github.com>

# Objective

- Refactor the changes merged in #11654 to compute flat normals for

indexed meshes instead of smooth normals.

- Fixes #12716

## Solution

- Partially revert the changes in #11654 to compute flat normals for

both indexed and unindexed meshes in `compute_flat_normals`

- Create a new method, `compute_smooth_normals`, that computes smooth

normals for indexed meshes

- Create a new method, `compute_normals`, that computes smooth normals

for indexed meshes and flat normals for unindexed meshes by default. Use

this new method instead of `compute_flat_normals`.
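The difference between the two kinds of normals can be shown with a small sketch over plain arrays. The helper names are hypothetical and the smooth variant simply sums face normals per vertex before normalizing; the real methods may weight or accumulate differently.

```rust
// Plain-array stand-in for a 3D vector type (assumption for this sketch).
type Vec3 = [f32; 3];

fn sub(a: Vec3, b: Vec3) -> Vec3 {
    [a[0] - b[0], a[1] - b[1], a[2] - b[2]]
}

fn cross(a: Vec3, b: Vec3) -> Vec3 {
    [
        a[1] * b[2] - a[2] * b[1],
        a[2] * b[0] - a[0] * b[2],
        a[0] * b[1] - a[1] * b[0],
    ]
}

fn normalize(v: Vec3) -> Vec3 {
    let len = (v[0] * v[0] + v[1] * v[1] + v[2] * v[2]).sqrt();
    if len > 0.0 { [v[0] / len, v[1] / len, v[2] / len] } else { v }
}

fn face_normal(a: Vec3, b: Vec3, c: Vec3) -> Vec3 {
    normalize(cross(sub(b, a), sub(c, a)))
}

/// Flat normals for an unindexed triangle list: the three vertices of
/// each triangle all receive that triangle's face normal.
fn flat_normals(positions: &[Vec3]) -> Vec<Vec3> {
    positions
        .chunks_exact(3)
        .flat_map(|t| {
            let n = face_normal(t[0], t[1], t[2]);
            [n, n, n]
        })
        .collect()
}

/// Smooth normals for an indexed mesh: each vertex accumulates the
/// normals of every face that references it.
fn smooth_normals(positions: &[Vec3], indices: &[u32]) -> Vec<Vec3> {
    let mut normals = vec![[0.0f32; 3]; positions.len()];
    for tri in indices.chunks_exact(3) {
        let [a, b, c] = [tri[0] as usize, tri[1] as usize, tri[2] as usize];
        let n = face_normal(positions[a], positions[b], positions[c]);
        for i in [a, b, c] {
            for k in 0..3 {
                normals[i][k] += n[k];
            }
        }
    }
    normals.into_iter().map(normalize).collect()
}
```

A vertex shared by two faces at right angles ends up with a normal halfway between them in the smooth case, while the flat case keeps one normal per face.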

## Testing

- Run the example with and without the changes to ensure that the

results are identical.

This commit implements the [depth of field] effect, simulating the blur

of objects out of focus of the virtual lens. Either the [hexagonal

bokeh] effect or a faster Gaussian blur may be used. In both cases, the

implementation is a simple separable two-pass convolution. This is not

the most physically-accurate real-time bokeh technique that exists;

Unreal Engine has [a more accurate implementation] of "cinematic depth

of field" from 2018. However, it's simple, and most engines provide

something similar as a fast option, often called "mobile" depth of

field.

The general approach is outlined in [a blog post from 2017]. We take

advantage of the fact that both Gaussian blurs and hexagonal bokeh blurs

are *separable*. This means that their 2D kernels can be reduced to a

small number of 1D kernels applied one after another, asymptotically

reducing the amount of work that has to be done. Gaussian blurs can be

accomplished by blurring horizontally and then vertically, while

hexagonal bokeh blurs can be done with a vertical blur plus a diagonal

blur, plus two diagonal blurs. In both cases, only two passes are

needed. Bokeh requires the first pass to have a second render target and

requires two subpasses in the second pass, which decreases its

performance relative to the Gaussian blur.
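Separability can be demonstrated on the CPU with a small sketch. This is not the shader from this commit: it covers only the horizontal-then-vertical case, uses a hypothetical row-major `f32` image, and clamps samples at the image edge as an arbitrary border policy.

```rust
/// Applies a 1D kernel along one axis of a row-major image.
fn blur_1d(src: &[f32], w: usize, h: usize, kernel: &[f32], horizontal: bool) -> Vec<f32> {
    let radius = (kernel.len() / 2) as isize;
    let mut dst = vec![0.0; src.len()];
    for y in 0..h {
        for x in 0..w {
            let mut sum = 0.0;
            for (k, weight) in kernel.iter().enumerate() {
                let offset = k as isize - radius;
                // Clamp sample coordinates to the image bounds.
                let (sx, sy) = if horizontal {
                    ((x as isize + offset).clamp(0, w as isize - 1), y as isize)
                } else {
                    (x as isize, (y as isize + offset).clamp(0, h as isize - 1))
                };
                sum += weight * src[sy as usize * w + sx as usize];
            }
            dst[y * w + x] = sum;
        }
    }
    dst
}

/// Two 1D passes stand in for one 2D convolution: per pixel the cost is
/// `2 * kernel.len()` taps instead of `kernel.len()` squared.
fn separable_blur(src: &[f32], w: usize, h: usize, kernel: &[f32]) -> Vec<f32> {
    let horizontal_pass = blur_1d(src, w, h, kernel, true);
    blur_1d(&horizontal_pass, w, h, kernel, false)
}
```

Blurring a single bright pixel with the kernel `[0.25, 0.5, 0.25]` produces the 3x3 outer product of that kernel with itself, which is the 2D kernel the two passes are equivalent to.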

The bokeh blur is generally more aesthetically pleasing than the

Gaussian blur, as it simulates the effect of a camera more accurately.

The shape of the bokeh circles is determined by the number of blades of

the aperture. In our case, we use a hexagon, which is usually considered

specific to lower-quality cameras. (This is a downside of the fast

hexagon approach compared to the higher-quality approaches.) The blur

amount is generally specified by the [f-number], which we use to compute

the focal length from the film size and FOV. By default, we simulate

standard cinematic cameras of f/1 and [Super 35]. The developer can

customize these values as desired.
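The relationship between film size, FOV, focal length and f-number can be sketched with the usual pinhole-camera relations. The function names are hypothetical, the sensor height in the usage note is an arbitrary illustrative value, and whether the implementation uses exactly these formulas is an assumption.

```rust
/// Focal length implied by a sensor (film) height and a vertical field
/// of view in radians: f = (h / 2) / tan(fov / 2).
fn focal_length(sensor_height: f32, vertical_fov: f32) -> f32 {
    0.5 * sensor_height / (0.5 * vertical_fov).tan()
}

/// Aperture diameter from the definition of the f-number: N = f / D.
fn aperture_diameter(focal_length: f32, f_number: f32) -> f32 {
    focal_length / f_number
}
```

For example, a 0.024 m tall sensor with a 90 degree vertical FOV implies a focal length of about 0.012 m, and at f/2 the aperture would be about 0.006 m wide. A lower f-number means a wider aperture and therefore a stronger blur.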

A new example has been added to demonstrate depth of field. It allows

customization of the mode (Gaussian vs. bokeh), focal distance and

f-numbers. The test scene is inspired by a [blog post on depth of field

in Unity]; however, the effect is implemented in a completely different

way from that blog post, and all the assets (textures, etc.) are

original.

Bokeh depth of field:

Gaussian depth of field:

No depth of field:

[depth of field]: https://en.wikipedia.org/wiki/Depth_of_field

[hexagonal bokeh]:

https://colinbarrebrisebois.com/2017/04/18/hexagonal-bokeh-blur-revisited/

[a more accurate implementation]:

https://epicgames.ent.box.com/s/s86j70iamxvsuu6j35pilypficznec04

[a blog post from 2017]:

https://colinbarrebrisebois.com/2017/04/18/hexagonal-bokeh-blur-revisited/

[f-number]: https://en.wikipedia.org/wiki/F-number

[Super 35]: https://en.wikipedia.org/wiki/Super_35

[blog post on depth of field in Unity]:

https://catlikecoding.com/unity/tutorials/advanced-rendering/depth-of-field/

## Changelog

### Added

* A depth of field postprocessing effect is now available, to simulate

objects being out of focus of the camera. To use it, add

`DepthOfFieldSettings` to an entity containing a `Camera3d` component.

---------

Co-authored-by: Alice Cecile <alice.i.cecile@gmail.com>

Co-authored-by: Bram Buurlage <brambuurlage@gmail.com>

# Objective

The `Cone` primitive should support meshing.

## Solution

Implement meshing for the `Cone` primitive. The default cone has a

height of 1 and a base radius of 0.5, and is centered at the origin.

An issue with cone meshes is that the tip does not really have a normal

that works, even with duplicated vertices. This PR uses only a single

vertex for the tip, with a normal of zero; this results in an "invalid"

normal that gets ignored by the fragment shader. This seems to be the

only approach we have for perfectly smooth cones. For discussion on the

topic, see #10298 and #5891.

Another thing to note is that the cone uses polar coordinates for the

UVs:

<img

src="https://github.com/bevyengine/bevy/assets/57632562/e101ded9-110a-4ac4-a98d-f1e4d740a24a"

alt="cone" width="400" />

This way, textures are applied as if looking at the cone from above:

<img

src="https://github.com/bevyengine/bevy/assets/57632562/8dea00f1-a283-4bc4-9676-91e8d4adb07a"

alt="texture" width="200" />

<img

src="https://github.com/bevyengine/bevy/assets/57632562/d9d1b5e6-a8ba-4690-b599-904dd85777a1"

alt="cone" width="200" />

# Objective

Fixes two issues related to #13208.

First, we ensure render resources for a window are always dropped first

to ensure that the `winit::Window` always drops on the main thread when

it is removed from `WinitWindows`. Previously, changes in #12978 caused

the window to drop in the render world, causing issues.

We accomplish this by delaying despawning the window by a frame by

inserting a marker component `ClosingWindow` that indicates the window

has been requested to close and is in the process of closing. The render

world now responds to the equivalent `WindowClosing` event rather than

`WindowClosed`, which now fires after the render resources are

guaranteed to be cleaned up.

Secondly, fixing the above caused (revealed?) that additional events

were being delivered to the event loop handler after exit had

already been requested: in my testing `RedrawRequested` and

`LoopExiting`. This caused errors to be reported when trying to send an exit

event on the close channel. There are two options here:

- Guard the handler so no additional events are delivered once the app

is exiting. I ~considered this but worried it might be confusing or bug

prone if in the future someone wants to handle `LoopExiting` or some

other event to clean-up while exiting.~ We are now taking this approach.

- Only send an exit signal if we are not already exiting. ~It doesn't

appear to cause any problems to handle the extra events so this seems

safer.~

Fixing this also appears to have fixed #13231.

Fixes #10260.

## Testing

Tested on mac only.

---

## Changelog

### Added

- A `WindowClosing` event has been added that indicates the window will

be despawned on the next frame.

### Changed

- Windows now close a frame after their exit has been requested.

## Migration Guide

- Ensure custom exit logic does not rely on the app exiting the same

frame as a window is closed.

WebGL 2 doesn't support variable-length uniform buffer arrays. So we

arbitrarily set the length of the visibility ranges field to 64 on that

platform.

---------

Co-authored-by: IceSentry <c.giguere42@gmail.com>

# Objective

Fixes #12966

## Solution

Rename the `multi-threaded` feature to `multi_threaded` to match snake case.

## Migration Guide

The Bevy feature `multi-threaded` should be referred to as `multi_threaded`

from now on.

# Objective

- `DynamicUniformBuffer` tries to create a buffer as soon as the changed

flag is set to true. This doesn't work correctly when the buffer wasn't

already created. This currently creates a crash because it's trying to

create a buffer of size 0 if the flag is set but there's no buffer yet.

## Solution

- Don't create a changed buffer until there's data that needs to be

written to a buffer.

## Testing

- run `cargo run --example scene_viewer` and see that it doesn't crash

anymore

Fixes #13235

# Objective

Documentation should mention the two plugins required for your custom

`CameraProjection` to work.

## Solution

Documented!

---

I tried linking to `bevy_pbr::PbrProjectionPlugin` from

`bevy_render::camera::CameraProjection` but it wasn't in scope. Is there

a trick to it?

# Objective

In response to [#13222](https://github.com/bevyengine/bevy/issues/13222)

## Solution

The Image trait was already re-exported in `bevy_render/src/lib.rs`, so I

added it inline there.

## Testing

Confirmed that it does compile. Simple change, shouldn't cause any

bugs/regressions.

# Objective

`bevy_pbr/utils.wgsl` shader file contains mathematical constants and

color conversion functions. Both of those should be accessible without

enabling `bevy_pbr` feature. For example, tonemapping can be done in non

pbr scenario, and it uses color conversion functions.

Fixes #13207

## Solution

* Move mathematical constants (such as PI, E) from

`bevy_pbr/src/render/utils.wgsl` into `bevy_render/src/maths.wgsl`

* Move color conversion functions from `bevy_pbr/src/render/utils.wgsl`

into new file `bevy_render/src/color_operations.wgsl`

## Testing

Ran multiple examples, checked they are working:

* tonemapping

* color_grading

* 3d_scene

* animated_material

* deferred_rendering

* 3d_shapes

* fog

* irradiance_volumes

* meshlet

* parallax_mapping

* pbr

* reflection_probes

* shadow_biases

* 2d_gizmos

* light_gizmos

---

## Changelog

* Moved mathematical constants (such as PI, E) from

`bevy_pbr/src/render/utils.wgsl` into `bevy_render/src/maths.wgsl`

* Moved color conversion functions from `bevy_pbr/src/render/utils.wgsl`

into new file `bevy_render/src/color_operations.wgsl`

## Migration Guide

In user's shader code replace usage of mathematical constants from

`bevy_pbr::utils` to the usage of the same constants from

`bevy_render::maths`.

# Objective

- Add auto exposure/eye adaptation to the bevy render pipeline.

- Support features that users might expect from other engines:

  - Metering masks

  - Compensation curves

  - Smooth exposure transitions

This PR is based on an implementation I already built for a personal

project before https://github.com/bevyengine/bevy/pull/8809 was

submitted, so I wasn't able to adopt that PR in the proper way. I've

still drawn inspiration from it, so @fintelia should be credited as

well.

## Solution

An auto exposure compute shader builds a 64 bin histogram of the scene's

luminance, and then adjusts the exposure based on that histogram. Using

a histogram allows the system to ignore outliers like shadows and

specular highlights, and it allows giving more weight to certain areas

based on a mask.
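The histogram idea can be sketched on the CPU. This is an illustration only: the real work happens in a compute shader, and the log-luminance range, the percentile cutoffs and the function names below are assumptions chosen for the example.

```rust
const BINS: usize = 64;

/// Buckets luminance values into 64 bins over a log2 range.
fn build_histogram(luminances: &[f32], min_log: f32, max_log: f32) -> [u32; BINS] {
    let mut hist = [0u32; BINS];
    for &lum in luminances {
        let t = ((lum.max(1e-6).log2() - min_log) / (max_log - min_log)).clamp(0.0, 1.0);
        let bin = ((t * BINS as f32) as usize).min(BINS - 1);
        hist[bin] += 1;
    }
    hist
}

/// Average bin index of the samples between two percentile cutoffs
/// (given as fractions in 0..=1), so the darkest and brightest
/// outliers do not influence the metered value.
fn metered_bin(hist: &[u32; BINS], low: f32, high: f32) -> f32 {
    let total: u32 = hist.iter().sum();
    let (lo, hi) = (total as f32 * low, total as f32 * high);
    let (mut seen, mut weight, mut sum) = (0.0f32, 0.0f32, 0.0f32);
    for (bin, &count) in hist.iter().enumerate() {
        let start = seen;
        seen += count as f32;
        // Number of this bin's samples that fall inside [lo, hi].
        let inside = (seen.min(hi) - start.max(lo)).max(0.0);
        sum += inside * bin as f32;
        weight += inside;
    }
    if weight > 0.0 { sum / weight } else { 0.0 }
}
```

A metering mask would fit into this scheme by adding a per-pixel weight instead of `1` when filling the histogram.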

---

## Changelog

- Added: AutoExposure plugin that adjusts a camera's exposure

based on its scene's luminance.

---------

Co-authored-by: Alice Cecile <alice.i.cecile@gmail.com>

This is an adoption of #12670 plus some documentation fixes. See that PR

for more details.

---

## Changelog

* Renamed `BufferVec` to `RawBufferVec` and added a new `BufferVec`

type.

## Migration Guide

`BufferVec` has been renamed to `RawBufferVec` and a new similar type

has taken the `BufferVec` name.

---------

Co-authored-by: Patrick Walton <pcwalton@mimiga.net>

Co-authored-by: Alice Cecile <alice.i.cecile@gmail.com>

Co-authored-by: IceSentry <IceSentry@users.noreply.github.com>

Implement visibility ranges, also known as hierarchical levels of detail

(HLODs).

This commit introduces a new component, `VisibilityRange`, which allows

developers to specify camera distances in which meshes are to be shown

and hidden. Hiding meshes happens early in the rendering pipeline, so

this feature can be used for level of detail optimization. Additionally,

this feature is properly evaluated per-view, so different views can show

different levels of detail.

This feature differs from proper mesh LODs, which can be implemented

later. Engines generally implement true mesh LODs later in the pipeline;

they're typically more efficient than HLODs with GPU-driven rendering.

However, mesh LODs are more limited than HLODs, because they require the

lower levels of detail to be meshes with the same vertex layout and

shader (and perhaps the same material) as the original mesh. Games often

want to use objects other than meshes to replace distant models, such as

*octahedral imposters* or *billboard imposters*.

The reason why the feature is called *hierarchical level of detail* is

that HLODs can replace multiple meshes with a single mesh when the

camera is far away. This can be useful for reducing drawcall count. Note

that `VisibilityRange` doesn't automatically propagate down to children;

it must be placed on every mesh.

Crossfading between different levels of detail is supported, using the

standard 4x4 ordered dithering pattern from [1]. The shader code to

compute the dithering patterns should be well-optimized. The dithering

code is only active when visibility ranges are in use for the mesh in

question, so that we don't lose early Z.
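The crossfade can be illustrated with the standard 4x4 threshold map. This is a CPU sketch, not the shader code: the function name, the `fade` parameter and the half-step offset on the threshold are assumptions for the example.

```rust
/// Standard 4x4 ordered-dithering (Bayer) threshold map, in sixteenths.
const BAYER_4X4: [[u8; 4]; 4] = [
    [0, 8, 2, 10],
    [12, 4, 14, 6],
    [3, 11, 1, 9],
    [15, 7, 13, 5],
];

/// Returns whether the pixel at `(x, y)` is kept when an object is
/// `fade` visible (0.0 = fully hidden, 1.0 = fully shown).
fn dither_visible(x: u32, y: u32, fade: f32) -> bool {
    let cell = BAYER_4X4[(y % 4) as usize][(x % 4) as usize];
    // Center each threshold inside its 1/16 step.
    let threshold = (cell as f32 + 0.5) / 16.0;
    fade > threshold
}
```

At `fade = 0.5` exactly half of the pixels in every 4x4 tile are kept. Drawing the other level of detail with the complementary test fills the remaining pixels, so the two levels blend without alpha blending.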

Cascaded shadow maps show the HLOD level of the view they're associated

with. Point light and spot light shadow maps, which have no CSMs,

display all HLOD levels that are visible in any view. To support this

efficiently and avoid doing visibility checks multiple times, we

precalculate all visible HLOD levels for each entity with a

`VisibilityRange` during the `check_visibility_range` system.

A new example, `visibility_range`, has been added to the tree, as well

as a new low-poly version of the flight helmet model to go with it. It

demonstrates use of the visibility range feature to provide levels of

detail.

[1]: https://en.wikipedia.org/wiki/Ordered_dithering#Threshold_map

[^1]: Unreal doesn't have a feature that exactly corresponds to

visibility ranges, but Unreal's HLOD system serves roughly the same

purpose.

## Changelog

### Added

* A new `VisibilityRange` component is available to conditionally enable

entity visibility at camera distances, with optional crossfade support.

This can be used to implement different levels of detail (LODs).

## Screenshots

High-poly model:

Low-poly model up close:

Crossfading between the two:

---------

Co-authored-by: Carter Anderson <mcanders1@gmail.com>

# Objective

- I've been using the `texture_binding_array` example as a base to use

multiple textures in meshes in my program

- I only realised once I was deep in render code that these helpers

existed to create layouts

- I wish I knew they existed earlier because the alternative (filling in

every struct field) is so much more verbose

## Solution

- Use `BindGroupLayoutEntries::with_indices` to teach users that the

helper exists

- Also fix typo which should be `texture_2d`.

## Alternatives considered

- Just leave it as is to teach users about every single struct field

- However, leaving as is leaves users writing roughly 29 lines versus

roughly 2 lines for 2 entries and I'd prefer the 2 line approach

## Testing

Ran the example locally and compared before and after.

Before:

<img width="1280" alt="image"

src="https://github.com/bevyengine/bevy/assets/135186256/f5897210-2560-4110-b92b-85497be9023c">

After:

<img width="1279" alt="image"

src="https://github.com/bevyengine/bevy/assets/135186256/8d13a939-b1ce-4a49-a9da-0b1779c8cb6a">

Co-authored-by: mgi388 <>

# Objective

- `README.md` is a common file that usually gives an overview of the

folder it is in.

- When on <https://crates.io>, `README.md` is rendered as the main

description.

- Many crates in this repository are lacking `README.md` files, which

makes it more difficult to understand their purpose.

<img width="1552" alt="image"

src="https://github.com/bevyengine/bevy/assets/59022059/78ebf91d-b0c4-4b18-9874-365d6310640f">

- There are also a few inconsistencies with `README.md` files that this

PR and its follow-ups intend to fix.

## Solution

- Create a `README.md` file for all crates that do not have one.

- This file only contains the title of the crate (underscores removed,

proper capitalization, acronyms expanded) and the <https://shields.io>

badges.

- Remove the `readme` field in `Cargo.toml` for `bevy` and

`bevy_reflect`.

- This field is redundant because [Cargo automatically detects

`README.md`

files](https://doc.rust-lang.org/cargo/reference/manifest.html#the-readme-field).

The field is only there if you name it something else, like `INFO.md`.

- Fix capitalization of `bevy_utils`'s `README.md`.

- It was originally `Readme.md`, which is inconsistent with the rest of

the project.

- I created two commits renaming it to `README.md`, because Git appears

to be case-insensitive.

- Expand acronyms in title of `bevy_ptr` and `bevy_utils`.

- In the commit where I created all the new `README.md` files, I

preferred using expanded acronyms in the titles. (E.g. "Bevy Developer

Tools" instead of "Bevy Dev Tools".)

- This commit changes the title of existing `README.md` files to follow

the same scheme.

- I do not feel strongly about this change, please comment if you

disagree and I can revert it.

- Add <https://shields.io> badges to `bevy_time` and `bevy_transform`,

which are the only crates currently lacking them.

---

## Changelog

- Added `README.md` files to all crates missing it.

# Objective

- Update glam version requirement to latest version.

## Solution

- Updated `glam` version requirement from 0.25 to 0.27.

- Updated `encase` and `encase_derive_impl` version requirement from 0.7

to 0.8.

- Updated `hexasphere` version requirement from 10.0 to 12.0.

- Breaking changes from glam changelog:

  - [0.26.0] Minimum Supported Rust Version bumped to 1.68.2 for `impl From<bool> for {f32,f64}` support.

  - [0.27.0] Changed implementation of vector `fract` method to match the Rust implementation instead of the GLSL implementation, that is `self - self.trunc()` instead of `self - self.floor()`.

---

## Migration Guide

- When using `glam` exports, keep in mind that the vector `fract()` method

now matches the Rust implementation (that is `self - self.trunc()` instead

of `self - self.floor()`). If you want to use the GLSL implementation

you should now use `fract_gl()`.
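The two definitions only differ for negative inputs. A scalar sketch using the standard library (the function names are illustrative, not `glam` API):

```rust
/// Rust-style fractional part: keeps the sign of the input.
fn fract_rust_style(x: f32) -> f32 {
    x - x.trunc()
}

/// GLSL-style fractional part: always in the range 0.0..1.0.
fn fract_glsl_style(x: f32) -> f32 {
    x - x.floor()
}
```

For `-1.25` the Rust-style result is `-0.25` while the GLSL-style result is `0.75`; for `1.25` both give `0.25`. Code that relied on the result being non-negative is the case that needs `fract_gl()`.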

---------

Co-authored-by: Alice Cecile <alice.i.cecile@gmail.com>

This commit expands Bevy's existing tonemapping feature to a complete

set of filmic color grading tools, matching those of engines like Unity,

Unreal, and Godot. The following features are supported:

* White point adjustment. This is inspired by Unity's implementation of

the feature, but simplified and optimized. *Temperature* and *tint*

control the adjustments to the *x* and *y* chromaticity values of [CIE

1931]. Following Unity, the adjustments are made relative to the [D65

standard illuminant] in the [LMS color space].

* Hue rotation. This simply converts the RGB value to [HSV], alters the

hue, and converts back.

* Color correction. This allows the *gamma*, *gain*, and *lift* values

to be adjusted according to the standard [ASC CDL combined function].

* Separate color correction for shadows, midtones, and highlights.

Blender's source code was used as a reference for the implementation of

this. The midtone ranges can be adjusted by the user. To avoid abrupt

color changes, a small crossfade is used between the different sections

of the image, again following Blender's formulas.
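The ASC CDL combined function has the form `out = (in * slope + offset) ^ power`, applied per channel. A scalar sketch follows; the function name is hypothetical, the clamp to zero before the power is an assumption to avoid taking a power of a negative number, and how Bevy's *gain*, *lift* and *gamma* settings map onto slope, offset and power is not spelled out here.

```rust
/// ASC CDL combined function for one color channel.
fn asc_cdl(input: f32, slope: f32, offset: f32, power: f32) -> f32 {
    // Clamp so the power is never taken of a negative value (assumption).
    (input * slope + offset).max(0.0).powf(power)
}
```

With `slope = 1`, `offset = 0` and `power = 1` the function is the identity, which is the natural neutral setting for a color grading control.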

A new example, `color_grading`, has been added, offering a GUI to change

all the color grading settings. It uses the same test scene as the

existing `tonemapping` example, which has been factored out into a

shared glTF scene.

[CIE 1931]: https://en.wikipedia.org/wiki/CIE_1931_color_space

[D65 standard illuminant]:

https://en.wikipedia.org/wiki/Standard_illuminant#Illuminant_series_D

[LMS color space]: https://en.wikipedia.org/wiki/LMS_color_space

[HSV]: https://en.wikipedia.org/wiki/HSL_and_HSV

[ASC CDL combined function]:

https://en.wikipedia.org/wiki/ASC_CDL#Combined_Function

## Changelog

### Added

* Many new filmic color grading options have been added to the

`ColorGrading` component.

## Migration Guide

* `ColorGrading::gamma` and `ColorGrading::pre_saturation` are now set

separately for the `shadows`, `midtones`, and `highlights` sections. You

can migrate code with the `ColorGrading::all_sections` and

`ColorGrading::all_sections_mut` functions, which access and/or update

all sections at once.

* `ColorGrading::post_saturation` and `ColorGrading::exposure` are now

fields of `ColorGrading::global`.

## Screenshots

In #12889, I mistakenly started dropping unbatchable sorted items on the

floor instead of giving them solitary batches. This caused the objects

in the `shader_instancing` demo to stop showing up. This patch fixes the

issue by giving those items their own batches as expected.

Fixes #13130.

This commit implements opt-in GPU frustum culling, built on top of the

infrastructure in https://github.com/bevyengine/bevy/pull/12773. To

enable it on a camera, add the `GpuCulling` component to it. To

additionally disable CPU frustum culling, add the `NoCpuCulling`

component. Note that adding `GpuCulling` without `NoCpuCulling`

*currently* does nothing useful. The reason why `GpuCulling` doesn't

automatically imply `NoCpuCulling` is that I intend to follow this patch

up with GPU two-phase occlusion culling, and CPU frustum culling plus

GPU occlusion culling seems like a very commonly-desired mode.

Adding the `GpuCulling` component to a view puts that view into

*indirect mode*. This mode makes all drawcalls indirect, relying on the

mesh preprocessing shader to allocate instances dynamically. In indirect

mode, the `PreprocessWorkItem` `output_index` points not to a

`MeshUniform` instance slot but instead to a set of `wgpu`

`IndirectParameters`, from which it allocates an instance slot

dynamically if frustum culling succeeds. Batch building has been updated

to allocate and track indirect parameter slots, and the AABBs are now

supplied to the GPU as `MeshCullingData`.

A small amount of code relating to the frustum culling has been borrowed

from meshlets and moved into `maths.wgsl`. Note that standard Bevy

frustum culling uses AABBs, while meshlets use bounding spheres; this

means that not as much code can be shared as one might think.
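An AABB-versus-frustum test of the kind mentioned above can be sketched on the CPU. This is a generic illustration, not the WGSL from this patch: the struct layouts, the plane convention (`normal . p + d >= 0` means inside) and the function name are assumptions.

```rust
// Plain-array stand-in for a 3D vector type (assumption for this sketch).
type Vec3 = [f32; 3];

/// A plane whose inside half-space satisfies `normal . p + d >= 0`.
struct Plane {
    normal: Vec3,
    d: f32,
}

/// Axis-aligned bounding box stored as center plus half extents.
struct Aabb {
    center: Vec3,
    half_extents: Vec3,
}

/// Conservative test: the box is culled only if it lies entirely on the
/// outside of at least one plane.
fn aabb_intersects_frustum(aabb: &Aabb, planes: &[Plane]) -> bool {
    planes.iter().all(|plane| {
        let (n, c, h) = (plane.normal, aabb.center, aabb.half_extents);
        let signed_distance = n[0] * c[0] + n[1] * c[1] + n[2] * c[2] + plane.d;
        // Projection of the box's half extents onto the plane normal.
        let radius = h[0] * n[0].abs() + h[1] * n[1].abs() + h[2] * n[2].abs();
        signed_distance + radius >= 0.0
    })
}
```

For a bounding sphere the `radius` term is simply the sphere radius, which is why the sphere-based meshlet code and the AABB-based code share only part of their logic.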

This patch doesn't provide any way to perform GPU culling on shadow

maps, to avoid making this patch bigger than it already is. That can be

a followup.

## Changelog

### Added

* Frustum culling can now optionally be done on the GPU. To enable it,

add the `GpuCulling` component to a camera.

* To disable CPU frustum culling, add `NoCpuCulling` to a camera. Note

that `GpuCulling` doesn't automatically imply `NoCpuCulling`.

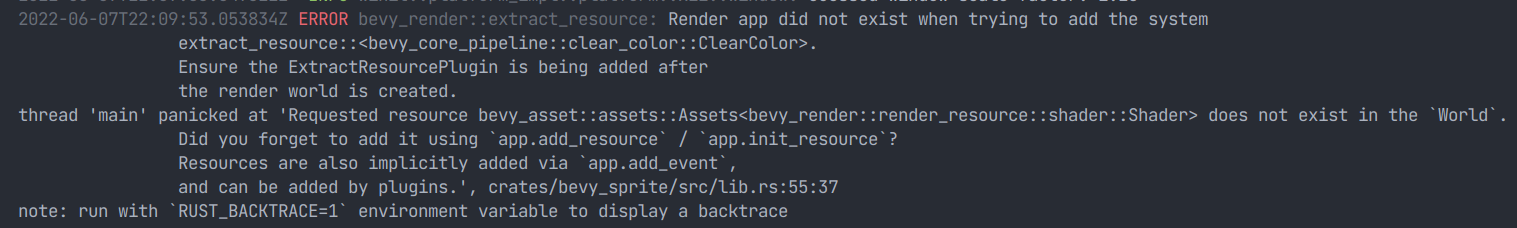

# Objective

- Provide feedback when an extraction plugin fails to add its system.

I had some troubleshooting pain when this happened to me, as the panic

only tells you a resource is missing. This PR adds an error when the

ExtractResource plugin is added before the render world exists, instead

of silently failing.

# Objective

- Since #12622 example `compute_shader_game_of_life` crashes

```

thread 'Compute Task Pool (2)' panicked at examples/shader/compute_shader_game_of_life.rs:137:65:

called `Option::unwrap()` on a `None` value

note: run with `RUST_BACKTRACE=1` environment variable to display a backtrace

Encountered a panic in system `compute_shader_game_of_life::prepare_bind_group`!

thread '<unnamed>' panicked at examples/shader/compute_shader_game_of_life.rs:254:34:

Requested resource compute_shader_game_of_life::GameOfLifeImageBindGroups does not exist in the `World`.

Did you forget to add it using `app.insert_resource` / `app.init_resource`?

Resources are also implicitly added via `app.add_event`,

and can be added by plugins.

Encountered a panic in system `bevy_render::renderer::render_system`!

```

## Solution

- `exhausted()` now checks that there is a limit

# Objective

Fix https://github.com/bevyengine/bevy/issues/11799 and improve

`CameraProjectionPlugin`

## Solution

`CameraProjectionPlugin` is now an all-in-one plugin for adding a custom

`CameraProjection`. I also added `PbrProjectionPlugin` which is like

`CameraProjectionPlugin` but for PBR.

P.S. I'd like to get this merged after

https://github.com/bevyengine/bevy/pull/11766.

---

## Changelog

- Changed `CameraProjectionPlugin` to be an all-in-one plugin for adding

a `CameraProjection`

- Removed `VisibilitySystems::{UpdateOrthographicFrusta,

UpdatePerspectiveFrusta, UpdateProjectionFrusta}`, now replaced with

`VisibilitySystems::UpdateFrusta`

- Added `PbrProjectionPlugin` for projection-specific PBR functionality.

## Migration Guide

`VisibilitySystems`'s `UpdateOrthographicFrusta`,

`UpdatePerspectiveFrusta`, and `UpdateProjectionFrusta` variants were

removed; they were replaced with `VisibilitySystems::UpdateFrusta`.

# Objective

allow throttling of gpu uploads to prevent choppy framerate when many

textures/meshes are loaded in.

## Solution

- `RenderAsset`s can implement `byte_len()` which reports their size.

implemented this for `Mesh` and `Image`

- users can add a `RenderAssetBytesPerFrame` which specifies max bytes

to attempt to upload in a frame

- `render_assets::<A>` checks how many bytes have been written before

attempting to upload assets. the limit is a soft cap: assets will be

written until the total has exceeded the cap, to ensure some forward

progress every frame
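
The soft-cap behaviour described above can be sketched without any engine types. This is a minimal illustration, not the actual `render_assets::<A>` code: `UploadBudget`, `upload_frame`, and the queue of asset sizes are hypothetical names, and a `None` limit is assumed to mean "unlimited".

```rust
use std::collections::VecDeque;

/// Hypothetical per-frame upload budget. `max_bytes: None` means no limit.
struct UploadBudget {
    max_bytes: Option<usize>,
    written: usize,
}

impl UploadBudget {
    fn new(max_bytes: Option<usize>) -> Self {
        Self { max_bytes, written: 0 }
    }

    /// Called at the start of every frame.
    fn reset(&mut self) {
        self.written = 0;
    }

    /// True only when a limit exists and has been reached.
    fn exhausted(&self) -> bool {
        self.max_bytes.is_some_and(|max| self.written >= max)
    }

    fn record(&mut self, bytes: usize) {
        self.written += bytes;
    }
}

/// Uploads queued assets (represented here only by their byte length) until
/// the budget is exhausted. The check happens *before* each upload, so the
/// asset that crosses the cap is still written: a soft cap that guarantees
/// forward progress. Returns how many assets were uploaded this frame.
fn upload_frame(budget: &mut UploadBudget, queue: &mut VecDeque<usize>) -> usize {
    budget.reset();
    let mut uploaded = 0;
    while !budget.exhausted() {
        let Some(byte_len) = queue.pop_front() else { break };
        budget.record(byte_len);
        uploaded += 1;
    }
    uploaded
}
```

With a 100-byte cap and three 60-byte assets queued, this sketch uploads two assets in the first frame (60, then 120 bytes written) and the remaining one in the next frame.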

notes:

- this is a stopgap until we have multiple wgpu queues for proper

streaming of data

- requires #12606

issues

- ~~fonts sometimes only partially upload. i have no clue why, needs to

be fixed~~ fixed now.

- choosing the #bytes is tricky as it should be hardware / framerate

dependent

- many features are not tested (env maps, light probes, etc) - they

won't break unless `RenderAssetBytesPerFrame` is explicitly used though

---------

Co-authored-by: IceSentry <IceSentry@users.noreply.github.com>

Co-authored-by: François Mockers <francois.mockers@vleue.com>

https://github.com/bevyengine/bevy/assets/2632925/e046205e-3317-47c3-9959-fc94c529f7e0

# Objective

- Adds per-object motion blur to the core 3d pipeline. This is a common

effect used in games and other simulations.

- Partially resolves #4710

## Solution

- This is a post-process effect that uses the depth and motion vector

buffers to estimate per-object motion blur. The implementation is

combined from knowledge from multiple papers and articles. The approach

itself, and the shader are quite simple. Most of the effort was in

wiring up the bevy rendering plumbing, and properly specializing for HDR

and MSAA.

- To work with MSAA, the `MULTISAMPLED_SHADING` wgpu capability is
required, because the prepass buffers are multisampled and must be read
with `textureLoad` as opposed to the widely compatible `textureSample`.
I've extracted this code from #9000.

- Added an example to demonstrate the effect of motion blur parameters.

## Future Improvements

- While this approach does have limitations, it's one of the most

commonly used, and is much better than camera motion blur, which does

not consider object velocity. For example, this implementation allows a

dolly to track an object, and that object will remain unblurred while

the background is blurred. The biggest issue with this implementation is

that blur is constrained to the boundaries of objects which results in

hard edges. There are solutions to this by either dilating the object or

the motion vector buffer, or by taking a different approach such as

https://casual-effects.com/research/McGuire2012Blur/index.html

- I'm using a noise PRNG function to jitter samples. This could be

replaced with a blue noise texture lookup or similar, however after

playing with the parameters, it gives quite nice results with 4 samples,

and is significantly better than the artifacts generated when not

jittering.

---

## Changelog

- Added: per-object motion blur. This can be enabled and configured by

adding the `MotionBlurBundle` to a camera entity.

---------

Co-authored-by: Torstein Grindvik <52322338+torsteingrindvik@users.noreply.github.com>

# Objective

- Bevy usually uses `Parallel::scope` to collect items from `par_iter`,
but `scope` is called for every matching item, which causes a lot of
unnecessary lookups.

## Solution

- Similar to Rayon, we introduce `for_each_init` for `par_iter`, whose
init function is only invoked when a task is spawned for a group of items.
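
The idea can be sketched with plain scoped threads. This is not Bevy's `par_iter` implementation: the free function `for_each_init` below, its `chunk_size` parameter, and the one-thread-per-chunk strategy are assumptions made only to show that `init` runs once per task while `op` runs once per item.

```rust
use std::thread;

/// Hypothetical stand-in for a parallel iterator's `for_each_init`.
/// Each chunk of `items` becomes one task; `init` builds that task's local
/// state exactly once, and `op` then visits every item in the chunk with
/// mutable access to the state.
fn for_each_init<T, S, I, F>(items: &[T], chunk_size: usize, init: I, op: F)
where
    T: Sync,
    I: Fn() -> S + Sync,
    F: Fn(&mut S, &T) + Sync,
{
    thread::scope(|scope| {
        for chunk in items.chunks(chunk_size.max(1)) {
            let (init, op) = (&init, &op);
            scope.spawn(move || {
                // One lookup/initialisation per task, not per item.
                let mut state = init();
                for item in chunk {
                    op(&mut state, item);
                }
            });
        }
    });
}
```

Ten items with a chunk size of four produce three tasks, so `init` is called three times while `op` is called ten times.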

---

## Changelog

- added `for_each_init`

## Performance

`check_visibility` in `many_foxes`

~40% performance gain in `check_visibility`.

---------

Co-authored-by: James Liu <contact@jamessliu.com>

# Objective

Closes #13017.

## Solution

- Make `AppExit` an enum with a `Success` and `Error` variant.
- Make `App::run()` return an `AppExit` if it ever returns.
- Make app runners return an `AppExit` to signal if they encountered an
error.

---

## Changelog

### Added

- [`App::should_exit`](https://example.org/)

- [`AppExit`](https://docs.rs/bevy/latest/bevy/app/struct.AppExit.html)

to the `bevy` and `bevy_app` preludes.

### Changed

- [`AppExit`](https://docs.rs/bevy/latest/bevy/app/struct.AppExit.html)

is now an enum with 2 variants (`Success` and `Error`).

- The app's [runner

function](https://docs.rs/bevy/latest/bevy/app/struct.App.html#method.set_runner)

now has to return an `AppExit`.

-

[`App::run()`](https://docs.rs/bevy/latest/bevy/app/struct.App.html#method.run)

now also returns the `AppExit` produced by the runner function.

## Migration Guide

- Replace all usages of
[`AppExit`](https://docs.rs/bevy/latest/bevy/app/struct.AppExit.html)
with `AppExit::Success` or `AppExit::Error`.
- Any custom app runners now need to return an `AppExit`. We suggest you
return an `AppExit::Error` if any `AppExit` raised was an `Error`. You can
use the new [`App::should_exit`](https://example.org/) method.
- If you are not exiting from `main` any other way, you should return the
`AppExit` from `App::run()` so the app correctly returns an error code if
anything fails, e.g.

```rust
use bevy::prelude::*;

fn main() -> AppExit {
    App::new()
        // Your setup here...
        .run()
}
```
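
Returning a custom type from `main` works because of the standard library's `Termination` trait. The following std-only sketch shows the mechanism with a hypothetical stand-in enum; the `NonZeroU8` payload on `Error`, the `code` helper, and the "first error wins" rule in `from_events` are assumptions for illustration and are not taken from this PR.

```rust
use std::num::NonZeroU8;
use std::process::{ExitCode, Termination};

/// Hypothetical stand-in for the two-variant exit type described above.
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
enum AppExit {
    Success,
    /// Assumed payload: a non-zero process exit code.
    Error(NonZeroU8),
}

impl AppExit {
    /// Numeric exit code: 0 for success, the stored code otherwise.
    fn code(self) -> u8 {
        match self {
            AppExit::Success => 0,
            AppExit::Error(code) => code.get(),
        }
    }

    /// What a custom runner might do with the exits raised during a run:
    /// report the first error if there is one, otherwise success.
    fn from_events(events: &[AppExit]) -> AppExit {
        events
            .iter()
            .copied()
            .find(|exit| matches!(exit, AppExit::Error(_)))
            .unwrap_or(AppExit::Success)
    }
}

/// Implementing `Termination` is what allows `fn main() -> AppExit`.
impl Termination for AppExit {
    fn report(self) -> ExitCode {
        ExitCode::from(self.code())
    }
}
```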

---------

Co-authored-by: Alice Cecile <alice.i.cecile@gmail.com>

# Objective

- `MeshPipelineKey` uses some bits for two things

- First commit in this PR adds an assertion that doesn't work currently

on main

- This leads to some mesh topology not working anymore, for example

`LineStrip`

- With examples `lines`, there should be two groups of lines, the blue

one doesn't display currently

## Solution

- Change the `MeshPipelineKey` to be backed by a `u64` instead, to have

enough bits
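
A small bit-packing sketch shows how this kind of overlap corrupts a key and why widening the backing integer fixes it. The layout here (single-bit flags growing up from bit 0, a 3-bit topology field in the top bits) and all names are hypothetical; the real `MeshPipelineKey` layout is not reproduced.

```rust
/// Hypothetical width of the primitive-topology field.
const TOPOLOGY_BITS: u32 = 3;

/// The topology field lives in the top `TOPOLOGY_BITS` bits of a key that is
/// `width` bits wide (32 or 64 in this sketch).
const fn topology_shift(width: u32) -> u32 {
    width - TOPOLOGY_BITS
}

const fn topology_mask(width: u32) -> u64 {
    ((1u64 << TOPOLOGY_BITS) - 1) << topology_shift(width)
}

/// Combines flag bits and a topology value into one key.
fn pack(width: u32, flags: u64, topology: u64) -> u64 {
    flags | (topology << topology_shift(width))
}

/// Reads the topology value back out of a key.
fn unpack_topology(width: u32, key: u64) -> u64 {
    (key & topology_mask(width)) >> topology_shift(width)
}

/// True when a single-bit flag at `flag_bit` lands inside the topology field.
fn flag_collides(width: u32, flag_bit: u32) -> bool {
    ((1u64 << flag_bit) & topology_mask(width)) != 0
}
```

In a 32-bit layout a flag at bit 30 lands inside the topology field (bits 29 to 31), so packing topology value 1 together with that flag reads back as 3. In a 64-bit layout the same flag is far from the field (bits 61 to 63) and the value round-trips.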

# Objective

- Fixes #13024.

## Solution

- Run `cargo clippy --target wasm32-unknown-unknown` until there are no

more errors.

- I recommend reviewing one commit at a time :)

---

## Changelog

- Fixed Clippy lints for `wasm32-unknown-unknown` target.

- Updated `bevy_transform`'s `README.md`.

# Objective

- Closes #12930.

## Solution

- Add a corresponding optional field on `Window` and `ExtractedWindow`

---

## Changelog

### Added

- `wgpu`'s `desired_maximum_frame_latency` is exposed through window

creation. This can be used to override the default maximum number of

queued frames on the GPU (currently 2).

## Migration Guide

- The `desired_maximum_frame_latency` field must be added to instances

of `Window` and `ExtractedWindow` where all fields are explicitly

specified.
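
The migration note only affects struct literals that list every field. The sketch below uses a hypothetical `WindowConfig` struct, not Bevy's `Window`, to show the difference; the `Option<NonZeroU32>` field type and the `effective_latency` helper are assumptions, and the fallback of 2 is the default quoted in the changelog above.

```rust
use std::num::NonZeroU32;

/// Hypothetical stand-in for a window description that gained a new field.
#[derive(Debug, Default, Clone, PartialEq)]
struct WindowConfig {
    title: String,
    /// `None` means "use the backend default".
    desired_maximum_frame_latency: Option<NonZeroU32>,
}

/// A literal that names every field must now mention the new one.
fn explicit() -> WindowConfig {
    WindowConfig {
        title: "demo".to_string(),
        desired_maximum_frame_latency: None,
    }
}

/// A literal using struct update syntax keeps compiling when fields are added.
fn with_update_syntax() -> WindowConfig {
    WindowConfig {
        title: "demo".to_string(),
        ..Default::default()
    }
}

/// Resolves the optional override against the default of 2 queued frames.
fn effective_latency(config: &WindowConfig) -> u32 {
    config
        .desired_maximum_frame_latency
        .map_or(2, NonZeroU32::get)
}
```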

# Objective

Make visibility system ordering explicit. Fixes #12953.

## Solution

Specify `CheckVisibility` happens after all other `VisibilitySystems`

sets have happened.

---------

Co-authored-by: Elabajaba <Elabajaba@users.noreply.github.com>

# Objective

- Fixes #12976

## Solution

This one is a doozy.

- Run `cargo +beta clippy --workspace --all-targets --all-features` and

fix all issues

- This includes:

- Moving inner attributes to be outer attributes, when the item in

question has both inner and outer attributes

- Use `ptr::from_ref` in more scenarios

- Extend the valid idents list used by `clippy::doc_markdown` with more

names

- Use `Clone::clone_from` when possible

- Remove redundant `ron` import

- Add backticks to **so many** identifiers and items

- I'm sorry whoever has to review this

---

## Changelog

- Added links to more identifiers in documentation.

[Alpha to coverage] (A2C) replaces alpha blending with a

hardware-specific multisample coverage mask when multisample

antialiasing is in use. It's a simple form of [order-independent

transparency] that relies on MSAA. ["Anti-aliased Alpha Test: The

Esoteric Alpha To Coverage"] is a good summary of the motivation for and

best practices relating to A2C.

This commit implements alpha to coverage support as a new variant for

`AlphaMode`. You can supply `AlphaMode::AlphaToCoverage` as the

`alpha_mode` field in `StandardMaterial` to use it. When in use, the

standard material shader automatically applies the texture filtering

method from ["Anti-aliased Alpha Test: The Esoteric Alpha To Coverage"].

Objects with alpha-to-coverage materials are binned in the opaque pass,

as they're fully order-independent.
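
As a rough mental model of why this is order-independent, consider the simplified CPU sketch below. Real hardware chooses its own coverage patterns, so the "enable the first `round(alpha * N)` samples" rule and both function names are assumptions made purely for illustration. The sketch expects a sample count between 1 and 32.

```rust
/// Hypothetical model of alpha to coverage: turn `alpha` into a bitmask with
/// `round(alpha * sample_count)` of the low bits set.
fn coverage_mask(alpha: f32, sample_count: u32) -> u32 {
    let samples = sample_count.clamp(1, 32);
    let covered = (alpha.clamp(0.0, 1.0) * samples as f32).round() as u32;
    if covered >= 32 {
        u32::MAX
    } else {
        (1u32 << covered) - 1
    }
}

/// MSAA resolve for one pixel and one colour channel: covered samples hold
/// the fragment's value, the rest keep the background, and the resolve step
/// averages them. No blending against the framebuffer is involved, so the
/// draw can go through the opaque pass with depth testing.
fn resolve(mask: u32, sample_count: u32, fragment: f32, background: f32) -> f32 {
    let total = sample_count.clamp(1, 32) as f32;
    let covered = mask.count_ones() as f32;
    (covered * fragment + (total - covered) * background) / total
}
```

With 4x MSAA, an alpha of 0.5 covers two of the four samples, and the resolved pixel ends up halfway between the fragment and the background.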

The `transparency_3d` example has been updated to feature an object with

alpha to coverage. Happily, the example was already using MSAA.

This is part of #2223, as far as I can tell.

[Alpha to coverage]: https://en.wikipedia.org/wiki/Alpha_to_coverage

[order-independent transparency]:

https://en.wikipedia.org/wiki/Order-independent_transparency

["Anti-aliased Alpha Test: The Esoteric Alpha To Coverage"]:

https://bgolus.medium.com/anti-aliased-alpha-test-the-esoteric-alpha-to-coverage-8b177335ae4f

---

## Changelog

### Added

* The `AlphaMode` enum now supports `AlphaToCoverage`, to provide

limited order-independent transparency when multisample antialiasing is

in use.

# Objective

- `cargo run --release --example bevymark -- --benchmark --waves 160

--per-wave 1000 --mode mesh2d` runs slower and slower over time due to

`no_gpu_preprocessing::write_batched_instance_buffer<bevy_sprite::mesh2d::mesh::Mesh2dPipeline>`

taking longer and longer because the `BatchedInstanceBuffer` is not

cleared

## Solution

- Split the `clear_batched_instance_buffers` system into CPU and GPU

versions

- Use the CPU version for 2D meshes

# Objective

Fixes a crash when transcoding one- or two-channel KTX2 textures

## Solution

The `transcoded` array is pre-allocated up to `levels.len()` using a macro.
`Rgb8` transcoding already relies on that and addresses the `transcoded`
array by index. `R8UnormSrgb` and `Rg8UnormSrgb` were instead pushing on
top of the `transcoded` vec, so the first `levels.len()` vectors stayed
empty and a second set of `levels.len()` entries held the transcoded
levels, which then resulted in an out-of-bounds read when copying levels
to the GPU.
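
The bug pattern is easy to reproduce in isolation. In this minimal sketch, `transcode_level` is a placeholder for the real per-level conversion and the function names are hypothetical; only the push-versus-index difference is the point.

```rust
/// Placeholder for the real per-level transcoding step.
fn transcode_level(level: &[u8]) -> Vec<u8> {
    level.to_vec()
}

/// Buggy pattern: the vec is already pre-sized with one empty buffer per
/// level, so pushing appends a second set of entries after the empty ones.
fn transcode_levels_buggy(levels: &[Vec<u8>]) -> Vec<Vec<u8>> {
    let mut transcoded = vec![Vec::new(); levels.len()];
    for level in levels {
        transcoded.push(transcode_level(level));
    }
    transcoded
}

/// Index-based pattern: write each result into its pre-allocated slot.
fn transcode_levels_fixed(levels: &[Vec<u8>]) -> Vec<Vec<u8>> {
    let mut transcoded = vec![Vec::new(); levels.len()];
    for (index, level) in levels.iter().enumerate() {
        transcoded[index] = transcode_level(level);
    }
    transcoded
}
```

For two input levels, the buggy version returns four entries with the first two empty, while the index-based version returns exactly two filled entries.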

# Objective

- I daily drive nightly Rust when developing Bevy, so I notice when new

warnings are raised by `cargo check` and Clippy.

- `cargo +nightly clippy` raises a few of these new warnings.

## Solution

- Fix most warnings from `cargo +nightly clippy`

- I skipped the docs-related warnings because some were covered by

#12692.

- Use `Clone::clone_from` in applicable scenarios, which can sometimes

avoid an extra allocation.

- Implement `Default` for structs that have a `pub const fn new() ->

Self` method.

- Fix an occurrence where generic constraints were defined in both `<C:

Trait>` and `where C: Trait`.

- Removed generic constraints that were implied by the `Bundle` trait.
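
Two of these patterns are shown below on a hypothetical `Settings` type (not a Bevy type): `Default` implemented in terms of a `const fn new()`, and a hand-written `clone_from` that lets the destination's existing `String` buffer be reused.

```rust
/// Hypothetical type with a `const fn new()` constructor.
#[derive(Debug, PartialEq)]
struct Settings {
    batch_size: usize,
    label: String,
}

impl Settings {
    pub const fn new() -> Self {
        Self {
            batch_size: 1,
            label: String::new(),
        }
    }
}

/// Delegating to `new()` keeps the two constructors in sync.
impl Default for Settings {
    fn default() -> Self {
        Self::new()
    }
}

impl Clone for Settings {
    fn clone(&self) -> Self {
        Self {
            batch_size: self.batch_size,
            label: self.label.clone(),
        }
    }

    /// Overwrites `self` field by field. `String::clone_from` can reuse the
    /// destination's buffer, whereas `*self = source.clone()` always builds
    /// a fresh `String` first.
    fn clone_from(&mut self, source: &Self) {
        self.batch_size = source.batch_size;
        self.label.clone_from(&source.label);
    }
}
```

At a call site, `dst.clone_from(&src)` replaces `dst = src.clone()`.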

---

## Changelog

- `BatchingStrategy`, `NonGenericTypeCell`, and `GenericTypeCell` now

implement `Default`.

This commit splits `VisibleEntities::entities` into four separate lists:

one for lights, one for 2D meshes, one for 3D meshes, and one for UI

elements. This allows `queue_material_meshes` and similar methods to

avoid examining entities that are obviously irrelevant. In particular,

this separation helps scenes with many skinned meshes, as the individual

bones are considered visible entities but have no rendered appearance.

Internally, `VisibleEntities::entities` is a `HashMap` from the `TypeId`

representing a `QueryFilter` to the appropriate `Entity` list. I had to

do this because `VisibleEntities` is located within an upstream crate

from the crates that provide lights (`bevy_pbr`) and 2D meshes

(`bevy_sprite`). As an added benefit, this setup allows apps to provide

their own types of renderable components, by simply adding a specialized

`check_visibility` to the schedule.
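
The data structure can be sketched with the standard library alone. The marker types, the `u32` entity alias, and the `push`/`get` helpers below are hypothetical simplifications; the point is only that keying by `TypeId` lets downstream crates introduce new categories without the upstream crate knowing about them.

```rust
use std::any::TypeId;
use std::collections::HashMap;

/// Hypothetical entity id.
type Entity = u32;

/// Marker types standing in for the query filters named in the text.
struct WithMesh;
struct WithLight;
struct WithNode;

/// One entity list per filter type, keyed by the filter's `TypeId`.
#[derive(Default)]
struct VisibleEntities {
    entities: HashMap<TypeId, Vec<Entity>>,
}

impl VisibleEntities {
    /// Records `entity` as visible in the list for filter `F`.
    fn push<F: 'static>(&mut self, entity: Entity) {
        self.entities
            .entry(TypeId::of::<F>())
            .or_default()
            .push(entity);
    }

    /// Returns the visible entities for filter `F`, or an empty slice if
    /// nothing of that kind was recorded.
    fn get<F: 'static>(&self) -> &[Entity] {
        self.entities
            .get(&TypeId::of::<F>())
            .map(Vec::as_slice)
            .unwrap_or(&[])
    }
}
```

A queue step interested only in meshes would call `get::<WithMesh>()` and never touch lights, UI nodes, or bones.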

This provides a 16.23% end-to-end speedup on `many_foxes` with 10,000

foxes (24.06 ms/frame to 20.70 ms/frame).

## Migration guide

* `check_visibility` and `VisibleEntities` now store the four types of

renderable entities--2D meshes, 3D meshes, lights, and UI

elements--separately. If your custom rendering code examines

`VisibleEntities`, it will now need to specify which type of entity it's

interested in using the `WithMesh2d`, `WithMesh`, `WithLight`, and

`WithNode` types respectively. If your app introduces a new type of

renderable entity, you'll need to add an explicit call to

`check_visibility` to the schedule to accommodate your new component or

components.

## Analysis

`many_foxes`, 10,000 foxes: `main`:

`many_foxes`, 10,000 foxes, this branch:

`queue_material_meshes` (yellow = this branch, red = `main`):

`queue_shadows` (yellow = this branch, red = `main`):

# Objective

-

[`clippy::ref_as_ptr`](https://rust-lang.github.io/rust-clippy/master/index.html#/ref_as_ptr)

prevents you from directly casting references to pointers, requiring you

to use `std::ptr::from_ref` instead. This prevents you from accidentally

converting an immutable reference into a mutable pointer (`&x as *mut

T`).

- Follow up to #11818, now that our [`rust-version` is

1.77](11817f4ba4/Cargo.toml (L14)).
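
A minimal sketch of the replacement the lint asks for is shown below. The helper functions are hypothetical; `std::ptr::from_ref` and `std::ptr::from_mut` are the standard-library functions, each of which fixes the mutability of the resulting pointer in its signature.

```rust
use std::ptr;

/// Reads a value through a raw pointer obtained with `ptr::from_ref`
/// instead of `x as *const u32`.
fn read_via_ptr(x: &u32) -> u32 {
    let p: *const u32 = ptr::from_ref(x);
    // SAFETY: `p` comes from a valid reference that outlives this read.
    unsafe { *p }
}

/// The mutable counterpart: `ptr::from_mut` only accepts `&mut T`, so the
/// mutability of the pointer always matches the reference it came from.
fn increment_via_ptr(x: &mut u32) {
    let p: *mut u32 = ptr::from_mut(x);
    // SAFETY: `p` comes from a unique, valid reference held for this call.
    unsafe { *p += 1 }
}
```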

## Solution

- Enable lint and fix all warnings.

I ported the two existing PCF techniques to the cubemap domain as best I

could. Generally, the technique is to create a 2D orthonormal basis

using Gram-Schmidt orthonormalization, then apply the technique over that

basis. The results look fine, though the shadow bias often needs

adjusting.
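
The basis construction can be sketched on the CPU as follows. This is not the shader code from this PR: the helper-axis choice, the function names, and the way a 2D filter tap is turned into an offset direction are assumptions, and `dir` is assumed to be non-zero.

```rust
type Vec3 = [f32; 3];

fn dot(a: Vec3, b: Vec3) -> f32 {
    a[0] * b[0] + a[1] * b[1] + a[2] * b[2]
}

fn add(a: Vec3, b: Vec3) -> Vec3 {
    [a[0] + b[0], a[1] + b[1], a[2] + b[2]]
}

fn sub(a: Vec3, b: Vec3) -> Vec3 {
    [a[0] - b[0], a[1] - b[1], a[2] - b[2]]
}

fn scale(a: Vec3, s: f32) -> Vec3 {
    [a[0] * s, a[1] * s, a[2] * s]
}

fn cross(a: Vec3, b: Vec3) -> Vec3 {
    [
        a[1] * b[2] - a[2] * b[1],
        a[2] * b[0] - a[0] * b[2],
        a[0] * b[1] - a[1] * b[0],
    ]
}

fn normalize(a: Vec3) -> Vec3 {
    scale(a, 1.0 / dot(a, a).sqrt())
}

/// Builds two unit vectors that are perpendicular to `dir` and to each other.
fn orthonormal_basis(dir: Vec3) -> (Vec3, Vec3) {
    let n = normalize(dir);
    // Pick a helper axis that is not nearly parallel to `n`.
    let helper = if n[1].abs() < 0.9 { [0.0, 1.0, 0.0] } else { [1.0, 0.0, 0.0] };
    // Gram-Schmidt step: subtract the part of `helper` that lies along `n`.
    let u = normalize(sub(helper, scale(n, dot(helper, n))));
    let v = cross(n, u);
    (u, v)
}

/// Turns a 2D filter tap into an offset cubemap lookup direction.
fn offset_direction(dir: Vec3, tap: [f32; 2], radius: f32) -> Vec3 {
    let (u, v) = orthonormal_basis(dir);
    let offset = add(scale(u, tap[0]), scale(v, tap[1]));
    normalize(add(normalize(dir), scale(offset, radius)))
}
```

Each tap of an existing 2D PCF kernel can then be mapped through `offset_direction` to get the cubemap directions to sample.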

For comparison, Unity uses a 4-tap pattern for PCF on point lights of

(1, 1, 1), (-1, -1, 1), (-1, 1, -1), (1, -1, -1). I tried this but

didn't like the look, so I went with the design above, which ports the

2D techniques to the 3D domain. There's surprisingly little material on

point light PCF.

I've gone through every example using point lights and verified that the

shadow maps look fine, adjusting biases as necessary.

Fixes #3628.

---

## Changelog

### Added

* Shadows from point lights now support percentage-closer filtering

(PCF), and as a result look less aliased.

### Changed

* `ShadowFilteringMethod::Castano13` and

`ShadowFilteringMethod::Jimenez14` have been renamed to

`ShadowFilteringMethod::Gaussian` and `ShadowFilteringMethod::Temporal`

respectively.

## Migration Guide

* `ShadowFilteringMethod::Castano13` and

`ShadowFilteringMethod::Jimenez14` have been renamed to

`ShadowFilteringMethod::Gaussian` and `ShadowFilteringMethod::Temporal`

respectively.