# Objective

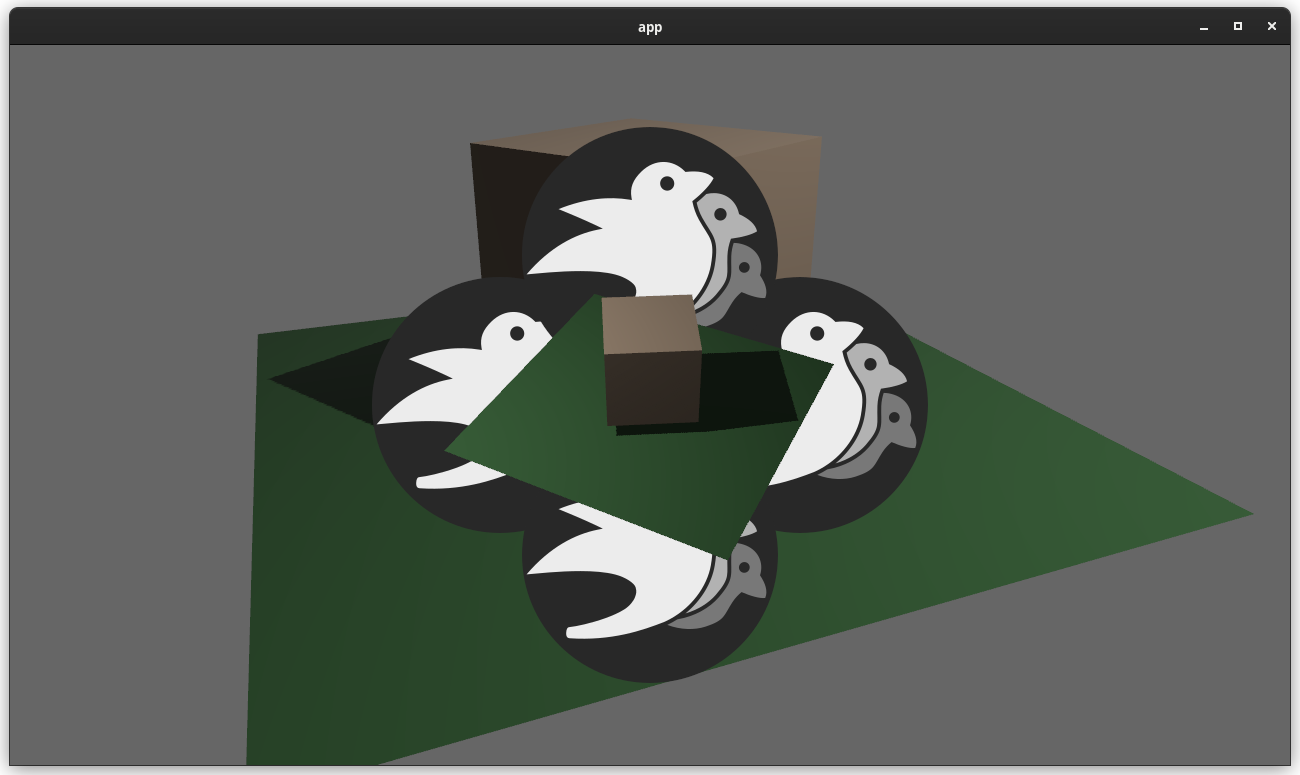

- Add an example showing a custom post processing effect, done after the first rendering pass.

## Solution

- A simple "chromatic aberration" post processing effect. I combined the examples `3d/render_to_texture` and `shader/shader_material_screenspace_texture`.

- Reading a bit about how https://github.com/bevyengine/bevy/pull/3430 was done gave me pointers on applying the main pass to the 2d render rather than using a 3d quad.

This work may or may not be relevant to https://github.com/bevyengine/bevy/issues/2724

<details>

<summary> ⚠️ Click for a video of the render ⚠️ I’ve been told it might hurt the eyes 👀, so maybe we should choose another effect just in case?</summary>

https://user-images.githubusercontent.com/2290685/169138830-a6dc8a9f-8798-44b9-8d9e-449e60614916.mp4

</details>

# Request for feedback

- [ ] Is the chromatic aberration effect ok? (Is it the correct term, and is it safe for the eyes?) I'm open to suggestions for something different.

- [ ] Is the code idiomatic? I preferred a "main camera -> **new camera with post processing applied to a quad**" approach, because it mimics the minimal changes existing code would need in order to add global post processing.

---

## Changelog

- Add a full screen post processing shader example

# Objective

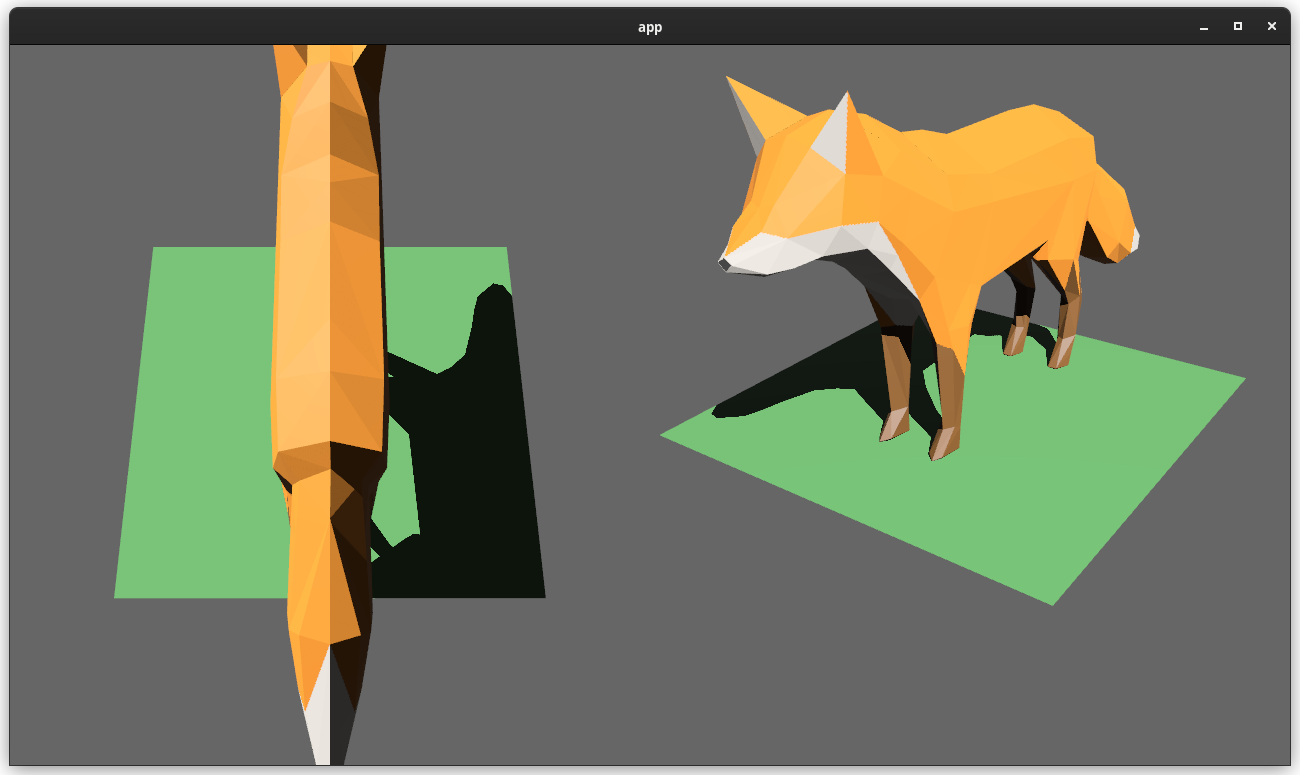

Users should be able to render cameras to specific areas of a render target, which enables scenarios like split screen, minimaps, etc.

Builds on the new Camera Driven Rendering added here: #4745

Fixes: #202

Alternative to #1389 and #3626 (which are incompatible with the new Camera Driven Rendering)

## Solution

Cameras can now configure an optional "viewport", which defines a rectangle within their render target to draw to. If a `Viewport` is defined, the camera's `CameraProjection`, `View`, and visibility calculations will use the viewport configuration instead of the full render target.

```rust
// This camera will render to the first half of the primary window (on the left side).
commands.spawn_bundle(Camera3dBundle {
    camera: Camera {
        viewport: Some(Viewport {
            physical_position: UVec2::new(0, 0),
            physical_size: UVec2::new(window.physical_width() / 2, window.physical_height()),
            depth: 0.0..1.0,
        }),
        ..default()
    },
    ..default()
});
```
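For split screen, the same arithmetic extends to a second camera. The following is a minimal sketch in plain Rust, without any Bevy types, of how the two physical rectangles could be computed. `Rect` and `split_horizontally` are hypothetical names introduced only for this illustration; in real code the values would go into `Viewport::physical_position` and `Viewport::physical_size`.

```rust
/// Hypothetical stand-in for a viewport rectangle, in physical pixels.
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
pub struct Rect {
    pub position: (u32, u32),
    pub size: (u32, u32),
}

/// Splits a render target of the given physical size into a left and a right half.
/// When the width is odd, the right half receives the extra pixel column.
pub fn split_horizontally(width: u32, height: u32) -> (Rect, Rect) {
    let left_width = width / 2;
    let left = Rect {
        position: (0, 0),
        size: (left_width, height),
    };
    let right = Rect {
        position: (left_width, 0),
        size: (width - left_width, height),
    };
    (left, right)
}
```

Giving the remainder to one side keeps the two rectangles adjacent and non-overlapping for any window width.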

To account for this, the `Camera` component has received a few adjustments:

* `Camera` now has some new getter functions:

* `logical_viewport_size`, `physical_viewport_size`, `logical_target_size`, `physical_target_size`, `projection_matrix`

* All computed camera values are now private and live on the `ComputedCameraValues` field (logical/physical width/height, the projection matrix). They are now exposed on `Camera` via getters/setters. This wasn't _needed_ for viewports, but it was long overdue.

---

## Changelog

### Added

* `Camera` components now have a `viewport` field, which can be set to draw to a portion of a render target instead of the full target.

* `Camera` component has some new functions: `logical_viewport_size`, `physical_viewport_size`, `logical_target_size`, `physical_target_size`, and `projection_matrix`

* Added a new split_screen example illustrating how to render two cameras to the same scene

## Migration Guide

`Camera::projection_matrix` is no longer a public field. Use the new `Camera::projection_matrix()` method instead:

```rust
// Bevy 0.7
let projection = camera.projection_matrix;

// Bevy 0.8
let projection = camera.projection_matrix();
```

This adds "high level camera driven rendering" to Bevy. The goal is to give users more control over what gets rendered (and where) without needing to deal with render logic. This will make scenarios like "render to texture", "multiple windows", "split screen", "2d on 3d", "3d on 2d", "pass layering", and more significantly easier.

Here is an [example of a 2d render sandwiched between two 3d renders (each from a different perspective)](https://gist.github.com/cart/4fe56874b2e53bc5594a182fc76f4915):

Users can now spawn a camera, point it at a RenderTarget (a texture or a window), and it will "just work".

Rendering to a second window is as simple as spawning a second camera and assigning it to a specific window id:

```rust
// main camera (main window)
commands.spawn_bundle(Camera2dBundle::default());

// second camera (other window)
commands.spawn_bundle(Camera2dBundle {
    camera: Camera {
        target: RenderTarget::Window(window_id),
        ..default()
    },
    ..default()
});
```

Rendering to a texture is as simple as pointing the camera at a texture:

```rust
commands.spawn_bundle(Camera2dBundle {
    camera: Camera {
        target: RenderTarget::Texture(image_handle),
        ..default()
    },
    ..default()
});
```

Cameras now have a "render priority", which controls the order they are drawn in. If you want to use a camera's output texture as a texture in the main pass, just set the priority to a number lower than the main pass camera (which defaults to `0`).

```rust
// main pass camera with a default priority of 0
commands.spawn_bundle(Camera2dBundle::default());

// camera rendering to a texture; the lower priority means it is drawn before the main pass
commands.spawn_bundle(Camera2dBundle {
    camera: Camera {
        target: RenderTarget::Texture(image_handle.clone()),
        priority: -1,
        ..default()
    },
    ..default()
});

// sprite in the main pass that displays the texture rendered above
commands.spawn_bundle(SpriteBundle {
    texture: image_handle,
    ..default()
});
```

Priority can also be used to layer cameras on top of each other for the same RenderTarget. This is what "2d on top of 3d" looks like in the new system:

```rust
commands.spawn_bundle(Camera3dBundle::default());

commands.spawn_bundle(Camera2dBundle {
    camera: Camera {
        // this will render 2d entities "on top" of the default 3d camera's render
        priority: 1,
        ..default()
    },
    ..default()
});
```

There is no longer the concept of a global "active camera". Resources like `ActiveCamera<Camera2d>` and `ActiveCamera<Camera3d>` have been replaced with the camera-specific `Camera::is_active` field. This does put the onus on users to manage which cameras should be active.

Cameras are now assigned a single render graph as an "entry point", which is configured on each camera entity using the new `CameraRenderGraph` component. The old `PerspectiveCameraBundle` and `OrthographicCameraBundle` (generic on camera marker components like Camera2d and Camera3d) have been replaced by `Camera3dBundle` and `Camera2dBundle`, which set 3d and 2d default values for the `CameraRenderGraph` and projections.

```rust
// old 3d perspective camera
commands.spawn_bundle(PerspectiveCameraBundle::default());

// new 3d perspective camera
commands.spawn_bundle(Camera3dBundle::default());
```

```rust
// old 2d orthographic camera
commands.spawn_bundle(OrthographicCameraBundle::new_2d());

// new 2d orthographic camera
commands.spawn_bundle(Camera2dBundle::default());
```

```rust
// old 3d orthographic camera
commands.spawn_bundle(OrthographicCameraBundle::new_3d());

// new 3d orthographic camera
commands.spawn_bundle(Camera3dBundle {
    projection: OrthographicProjection {
        scale: 3.0,
        scaling_mode: ScalingMode::FixedVertical,
        ..default()
    }.into(),
    ..default()
});
```

Note that `Camera3dBundle` now uses a new `Projection` enum instead of hard coding the projection into the type. There are a number of motivators for this change: the render graph is now a part of the bundle, the way "generic bundles" work in the Rust type system prevents nice `..default()` syntax, and changing projections at runtime is much easier with an enum (e.g. for editor scenarios). I'm open to discussing this choice, but I'm relatively certain we will all come to the same conclusion here. `Camera2dBundle` and `Camera3dBundle` are much clearer than being generic on marker components / using non-default constructors.

If you want to run a custom render graph on a camera, just set the `CameraRenderGraph` component:

```rust
commands.spawn_bundle(Camera3dBundle {
    camera_render_graph: CameraRenderGraph::new(some_render_graph_name),
    ..default()
});
```

Just note that if the graph requires data from specific components to work (such as `Camera3d` config, which is provided in the `Camera3dBundle`), make sure the relevant components have been added.

Speaking of using components to configure graphs / passes, there are a number of new configuration options:

```rust
commands.spawn_bundle(Camera3dBundle {
    camera_3d: Camera3d {
        // overrides the default global clear color
        clear_color: ClearColorConfig::Custom(Color::RED),
        ..default()
    },
    ..default()
});

commands.spawn_bundle(Camera3dBundle {
    camera_3d: Camera3d {
        // disables clearing
        clear_color: ClearColorConfig::None,
        ..default()
    },
    ..default()
});
```

Expect to see more of the "graph configuration Components on Cameras" pattern in the future.

By popular demand, UI no longer requires a dedicated camera. `UiCameraBundle` has been removed. `Camera2dBundle` and `Camera3dBundle` now both default to rendering UI as part of their own render graphs. To turn off UI rendering for a camera, add the `CameraUi` component with `is_enabled` set to `false`:

```rust
commands
    .spawn_bundle(Camera3dBundle::default())
    .insert(CameraUi {
        is_enabled: false,
        ..default()
    });
```

## Other Changes

* The separate clear pass has been removed. We should revisit this for things like sky rendering, but I think this PR should "keep it simple" until we're ready to properly support that (for code complexity and performance reasons). We can come up with the right design for a modular clear pass in a followup pr.

* I reorganized bevy_core_pipeline into Core2dPlugin and Core3dPlugin (and core_2d / core_3d modules). Everything is pretty much the same as before, just logically separate. I've moved relevant types (like Camera2d, Camera3d, Camera3dBundle, Camera2dBundle) into their relevant modules, which is what motivated this reorganization.

* I adapted the `scene_viewer` example (which relied on the ActiveCameras behavior) to the new system. I also refactored bits and pieces to be a bit simpler.

* All of the examples have been ported to the new camera approach. `render_to_texture` and `multiple_windows` are now _much_ simpler. I removed `two_passes` because it is less relevant with the new approach. If someone wants to add a new "layered custom pass with CameraRenderGraph" example, that might fill a similar niche. But I don't feel much pressure to add that in this pr.

* Cameras now have `target_logical_size` and `target_physical_size` fields, which makes finding the size of a camera's render target _much_ simpler. As a result, the `Assets<Image>` and `Windows` parameters were removed from `Camera::world_to_screen`, making that operation much more ergonomic.

* Render order ambiguities between cameras with the same target and the same priority now produce a warning. This accomplishes two goals:

1. Now that there is no "global" active camera, by default spawning two cameras will result in two renders (one covering the other). This would be a silent performance killer that would be hard to detect after the fact. By detecting ambiguities, we can provide a helpful warning when this occurs.

2. Render order ambiguities could result in unexpected / unpredictable render results. Resolving them makes sense.
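The ambiguity check described above can be sketched in plain Rust. This is only an illustration of the idea (group cameras by target and priority, then report groups with more than one member), not the code from this PR. `Target` and `find_ambiguities` are hypothetical names.

```rust
use std::collections::HashMap;

/// Hypothetical stand-in for a camera's render target.
#[derive(Debug, Clone, Copy, PartialEq, Eq, Hash, PartialOrd, Ord)]
pub enum Target {
    Window(u32),
    Texture(u32),
}

/// Returns every (target, priority) pair shared by more than one camera,
/// together with the number of cameras sharing it, in sorted order.
pub fn find_ambiguities(cameras: &[(Target, isize)]) -> Vec<(Target, isize, usize)> {
    // Count how many cameras use each (target, priority) combination.
    let mut counts: HashMap<(Target, isize), usize> = HashMap::new();
    for &key in cameras {
        *counts.entry(key).or_insert(0) += 1;
    }
    // Keep only the combinations that are used more than once.
    let mut ambiguous: Vec<_> = counts
        .into_iter()
        .filter(|&(_, count)| count > 1)
        .map(|((target, priority), count)| (target, priority, count))
        .collect();
    ambiguous.sort();
    ambiguous
}
```

A non-empty result is the situation in which a warning would be worth logging: two cameras drawing to the same target with no defined order between them.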

## Follow Up Work

* Per-Camera viewports, which will make it possible to render to a smaller area inside of a RenderTarget (great for something like splitscreen)

* Camera-specific MSAA config (should use the same "overriding" pattern used for ClearColor)

* Graph Based Camera Ordering: priorities are simple, but they make complicated ordering constraints harder to express. We should consider adopting a "graph based" camera ordering model with "before" and "after" relationships to other cameras (or build it "on top" of the priority system).

* Consider allowing graphs to run subgraphs from any nest level (aka a global namespace for graphs). Right now the 2d and 3d graphs each need their own UI subgraph, which feels "fine" in the short term. But being able to share subgraphs between other subgraphs seems valuable.

* Consider splitting `bevy_core_pipeline` into `bevy_core_2d` and `bevy_core_3d` packages. There's a shared "clear color" dependency here, which would need a new home.

# Objective

- Add Vertex Color support to 2D meshes and ColorMaterial. This extends the work from #4528 (which in turn builds on the excellent tangent handling).

## Solution

- Added `#ifdef` wrapped support for vertex colors in the 2D mesh shader and `ColorMaterial` shader.

- Added an example, `mesh2d_vertex_color_texture` to demonstrate it in action.

---

## Changelog

- Added optional (ifdef wrapped) vertex color support to the 2d mesh and color material systems.

# Objective

Provide a starting point for #3951, or a partial solution.

Providing a few comment blocks to discuss, and hopefully find better ones in the process.

## Solution

Since I am pretty new to pretty much anything in this context, I figured I'd just start with a draft for some file level doc blocks. For some of them I found more relevant details (or at least things I considered interesting), for some others there is less.

## Changelog

- Moved some existing comments from main() functions in the 2d examples to the file header level

- Wrote some more comment blocks for most other 2d examples

TODO:

- [x] 2d/sprite_sheet, wasn't able to come up with something good yet

- [x] all other example groups...

Also: Please let me know if the commit style is okay, or too verbose. I could certainly squash these things, or add more details if needed.

I also hope it's okay to raise this PR this early, with just a few files changed. It took me long enough, and I didn't want to let it go to waste because I lost motivation to do the whole thing. Additionally, I am somewhat uncertain about the style and contents of the comments. So please let me know what you think.

# Objective

Add support for vertex colors

## Solution

This change is modeled after how vertex tangents are handled, so the shader is conditionally compiled with vertex color support if the mesh has the corresponding attribute set.

Vertex colors are multiplied by the base color. I'm not sure if this is the best for all cases, but may be useful for modifying vertex colors without creating a new mesh.
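As a rough illustration of the multiplication described above, here is the per-component arithmetic written as plain Rust. In the PR this happens in the shader; `tint` is a hypothetical helper name used only for this sketch.

```rust
/// Multiplies an RGBA vertex color by an RGBA base color, component by component.
pub fn tint(vertex_color: [f32; 4], base_color: [f32; 4]) -> [f32; 4] {
    let mut out = [0.0; 4];
    for i in 0..4 {
        out[i] = vertex_color[i] * base_color[i];
    }
    out
}
```

A white base color (`[1.0, 1.0, 1.0, 1.0]`) leaves the vertex colors unchanged, which is why changing the base color can adjust vertex colors without creating a new mesh.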

I chose `VertexFormat::Float32x4`, but I'd prefer 16-bit floats if/when support is added.

## Changelog

### Added

- Vertex colors can be specified using the `Mesh::ATTRIBUTE_COLOR` mesh attribute.

# Objective

Bevy users often want to create circles and other simple shapes.

All the machinery is in place to accomplish this, and there are external crates that help. But when writing code for e.g. a new bevy example, it's not really possible to draw a circle without bringing in a new asset, writing a bunch of scary looking mesh code, or adding a dependency.

In particular, this PR was inspired by this interaction in another PR: https://github.com/bevyengine/bevy/pull/3721#issuecomment-1016774535

## Solution

This PR adds `shape::RegularPolygon` and `shape::Circle` (which is just a `RegularPolygon` that defaults to a large number of sides)
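To show the kind of mesh data involved, here is a minimal sketch in plain Rust of generating a regular polygon's vertex positions and triangle indices. It is not the implementation from this PR: the function names, the counter-clockwise ordering starting on the positive x axis, and the 64 sides used for the circle are all assumptions made for illustration.

```rust
use std::f32::consts::TAU;

/// Returns 2D vertex positions and triangle indices for a regular polygon
/// centred on the origin. Vertices go counter-clockwise, starting on the +x axis.
pub fn regular_polygon(radius: f32, sides: u32) -> (Vec<[f32; 2]>, Vec<u32>) {
    assert!(sides >= 3, "a polygon needs at least three sides");
    let positions = (0..sides)
        .map(|i| {
            let angle = i as f32 * TAU / sides as f32;
            [radius * angle.cos(), radius * angle.sin()]
        })
        .collect();
    // Triangulate as a fan around vertex 0: (0, 1, 2), (0, 2, 3), ...
    let indices = (1..sides - 1).flat_map(|i| [0, i, i + 1]).collect();
    (positions, indices)
}

/// A circle approximated by a regular polygon with many sides (64 is an assumed default).
pub fn circle(radius: f32) -> (Vec<[f32; 2]>, Vec<u32>) {
    regular_polygon(radius, 64)
}
```

A polygon with `n` sides produces `n` vertices and `n - 2` triangles, so the cost of a smoother circle grows linearly with the side count.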

## Discussion

There's a lot of ongoing discussion about shapes in <https://github.com/bevyengine/rfcs/pull/12> and at least one other lingering shape PR (although it seems incomplete).

That RFC currently includes `RegularPolygon` and `Circle` shapes, so I don't think that having working mesh generation code in the engine for those shapes would add much burden to an author of an implementation.

But if we'd prefer not to add additional shapes until after that's sorted out, I'm happy to close this for now.

## Alternatives for users

For any users stumbling on this issue, here are some plugins that will help if you need more shapes.

- https://github.com/Nilirad/bevy_prototype_lyon

- https://github.com/johanhelsing/bevy_smud

- https://github.com/Weasy666/bevy_svg

- https://github.com/redpandamonium/bevy_more_shapes

- https://github.com/ForesightMiningSoftwareCorporation/bevy_polyline

# Objective

- As requested here: https://github.com/bevyengine/bevy/pull/4520#issuecomment-1109302039

- Make it easier to spot issues with built-in shapes

## Solution

https://user-images.githubusercontent.com/200550/165624709-c40dfe7e-0e1e-4bd3-ae52-8ae66888c171.mp4

- Add an example showcasing the built-in 3d shapes with lighting/shadows

- Rotate objects in such a way that all faces are seen by the camera

- Add a UV debug texture

## Discussion

I'm not sure if this is what @alice-i-cecile had in mind, but I adapted the little "torus playground" from the issue linked above to include all built-in shapes.

This exact arrangement might not be particularly scalable if many more shapes are added. Maybe a slow camera pan, or cycling with the keyboard or on a timer, or a sidebar with buttons would work better. If one of the latter options is used, options for showing wireframes or computed flat normals might add some additional utility.

Ideally, I think we'd have a better way of visualizing normals.

Happy to rework this or close it if there's not a consensus around it being useful.

# Objective

Fixes https://github.com/bevyengine/bevy/issues/3499

## Solution

Uses a `HashMap` from `RenderTarget` to sampled textures when preparing `ViewTarget`s to ensure that two passes with the same render target get sampled to the same texture.
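The idea can be sketched without any Bevy types: a map keyed by render target hands out one sampled texture per target, so a second pass on the same target gets the texture the first pass created. `Target`, `SampledTexture`, and `SampledTextures` are hypothetical stand-ins, not the types used in the PR.

```rust
use std::collections::HashMap;

/// Hypothetical stand-in for a render target.
#[derive(Debug, Clone, Copy, PartialEq, Eq, Hash)]
pub enum Target {
    Window(u32),
    Image(u32),
}

/// Hypothetical handle to a multisampled texture.
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
pub struct SampledTexture(pub usize);

/// Hands out one sampled texture per render target.
#[derive(Default)]
pub struct SampledTextures {
    by_target: HashMap<Target, SampledTexture>,
}

impl SampledTextures {
    /// Returns the texture already associated with `target`, creating it on first use.
    pub fn get_or_create(&mut self, target: Target) -> SampledTexture {
        let next_id = self.by_target.len();
        *self
            .by_target
            .entry(target)
            .or_insert(SampledTexture(next_id))
    }

    /// Number of distinct textures created so far.
    pub fn texture_count(&self) -> usize {
        self.by_target.len()
    }
}
```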

This builds on and depends on https://github.com/bevyengine/bevy/pull/3412, so this will be a draft PR until #3412 is merged. All changes for this PR are in the last commit.

# Objective

- Several examples are useful for qualitative tests of Bevy's performance

- By contrast, these are less useful as learning material: they are often relatively complex, have large amounts of setup, and are performance optimized.

## Solution

- Move bevymark, many_sprites and many_cubes into the new stress_tests example folder

- Move contributors into the games folder: unlike the remaining examples in the 2d folder, it is not focused on demonstrating a clear feature.

# Objective

- Make use of storage buffers, where they are available, for clustered forward bindings to support far more point lights in a scene

- Fixes #3605

- Based on top of #4079

This branch on an M1 Max can keep 60fps with about 2150 point lights of radius 1m in the Sponza scene where I've been testing. The bottleneck is mostly assigning lights to clusters which grows faster than linearly (I think 1000 lights was about 1.5ms and 5000 was 7.5ms). I have seen papers and presentations leveraging compute shaders that can get this up to over 1 million. That said, I think any further optimisations should probably be done in a separate PR.

## Solution

- Add `RenderDevice` to the `Material` and `SpecializedMaterial` trait `::key()` functions to allow setting flags on the keys depending on feature/limit availability

- Make `GpuPointLights` and `ViewClusterBuffers` into enums containing `UniformVec` and `StorageBuffer` variants. Implement the necessary API on them to make usage the same for both cases, and the only difference is at initialisation time.

- Add appropriate shader defs in the shader code to handle the two cases

## Context on some decisions / open questions

- I'm using `max_storage_buffers_per_shader_stage >= 3` as a check to see if storage buffers are supported. I was thinking about diving into 'binding resource management' but it feels like we don't have enough use cases to understand the problem yet, and it is mostly a separate concern to this PR, so I think it should be handled separately.

- Should `ViewClusterBuffers` and `ViewClusterBindings` be merged, duplicating the count variables into the enum variants?
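The enum approach from the Solution section, combined with the limit check above, can be sketched as follows. This is plain Rust with no GPU types: `LightBuffer`, `Light`, and the `uniform_capacity` parameter are hypothetical, and only the `>= 3` threshold comes from the text above.

```rust
/// Minimum number of storage buffers per shader stage, as in the check described above.
pub const MIN_STORAGE_BUFFERS: u32 = 3;

/// One light, reduced to four floats for this sketch.
pub type Light = [f32; 4];

/// The backing store is chosen once at initialisation; usage is the same afterwards.
#[derive(Debug, PartialEq)]
pub enum LightBuffer {
    /// Fixed-capacity fallback for devices without enough storage buffers.
    Uniform { lights: Vec<Light>, capacity: usize },
    /// Growable buffer for devices that support storage buffers.
    Storage { lights: Vec<Light> },
}

impl LightBuffer {
    pub fn new(max_storage_buffers_per_shader_stage: u32, uniform_capacity: usize) -> Self {
        if max_storage_buffers_per_shader_stage >= MIN_STORAGE_BUFFERS {
            LightBuffer::Storage { lights: Vec::new() }
        } else {
            LightBuffer::Uniform {
                lights: Vec::with_capacity(uniform_capacity),
                capacity: uniform_capacity,
            }
        }
    }

    /// Adds a light; returns `false` if a uniform-backed buffer is already full.
    pub fn push(&mut self, light: Light) -> bool {
        match self {
            LightBuffer::Uniform { lights, capacity } => {
                if lights.len() >= *capacity {
                    return false;
                }
                lights.push(light);
                true
            }
            LightBuffer::Storage { lights } => {
                lights.push(light);
                true
            }
        }
    }

    pub fn len(&self) -> usize {
        match self {
            LightBuffer::Uniform { lights, .. } | LightBuffer::Storage { lights } => lights.len(),
        }
    }
}
```

Callers only see `push` and `len`; the difference between the two variants is confined to `new`, which mirrors the "only difference is at initialisation time" goal.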

Co-authored-by: Carter Anderson <mcanders1@gmail.com>

# Objective

Load skeletal weights and indices from GLTF files. Animate meshes.

## Solution

- Load skeletal weights and indices from GLTF files.

- Added `SkinnedMesh` component and `SkinnedMeshInverseBindPose` asset

- Added `extract_skinned_meshes` to extract joint matrices.

- Added queue phase systems for enqueuing the buffer writes.

Some notes:

- This ports part of #2359 to the current main.

- This generates new `BufferVec`s and bind groups every frame. The expectation here is that the number of `Query::get` calls during extract is probably going to be the stronger bottleneck, with up to 256 calls per skinned mesh. Until that is optimized, caching buffers and bind groups is probably a non-concern.

- Unfortunately, due to the uniform size requirements, this means a 16KB buffer is allocated for every skinned mesh every frame. There's probably a few ways to get around this, but most of them require either compute shaders or storage buffers, which are both incompatible with WebGL2.
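The 16KB figure is consistent with a quick back-of-the-envelope check, assuming each joint is stored as a 4x4 matrix of `f32` and the buffer always has room for 256 joints (the "up to 256" mentioned above). The names below are hypothetical and exist only for this sketch.

```rust
use std::mem::size_of;

/// Assumed upper bound on joints per skinned mesh.
pub const MAX_JOINTS: usize = 256;

/// A 4x4 matrix of `f32`, the assumed representation of one joint matrix.
pub type JointMatrix = [[f32; 4]; 4];

/// Size in bytes of a uniform buffer holding `MAX_JOINTS` joint matrices.
pub const fn joint_buffer_size() -> usize {
    // 256 joints * 64 bytes per matrix = 16384 bytes = 16 KiB
    MAX_JOINTS * size_of::<JointMatrix>()
}
```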

Co-authored-by: james7132 <contact@jamessliu.com>

Co-authored-by: François <mockersf@gmail.com>

Co-authored-by: James Liu <contact@jamessliu.com>

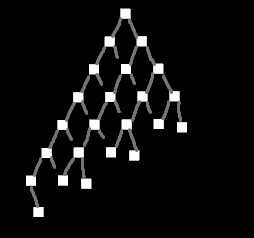

## Objective

There recently was a discussion on Discord about a possible test case for stress-testing transform hierarchies.

## Solution

Create a test case for stress testing transform propagation.

*Edit:* I have scrapped my previous example and built something more functional and less focused on visuals.

There are three test setups:

- `TestCase::Tree` recursively creates a tree with a specified depth and branch width

- `TestCase::NonUniformTree` is the same as `Tree` but omits nodes in a way that makes the tree "lean" towards one side

- `TestCase::Humanoids` creates one or more separate hierarchies based on the structure of common humanoid rigs

- this can both insert `active` and `inactive` instances of the human rig

It's possible to parameterize which parts of the hierarchy get updated (transform change) and which remain unchanged. This is based on @james7132's suggestion.

There's a probability to decide which entities should remain static. On top of that these changes can be limited to a certain range in the hierarchy (min_depth..max_depth).
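A minimal sketch of that decision, assuming the caller supplies a uniformly distributed random sample in `[0, 1)` (no random number crate is used here). `should_update` and its parameter names are hypothetical, not taken from the example's code.

```rust
use std::ops::Range;

/// Decides whether the transform of an entity at `depth` should be changed this frame.
///
/// * `depth_range` limits updates to a part of the hierarchy (`min_depth..max_depth`).
/// * `static_probability` is the chance that an entity remains static.
/// * `sample` is a uniformly distributed value in `[0, 1)` supplied by the caller.
pub fn should_update(
    depth: u32,
    depth_range: &Range<u32>,
    static_probability: f32,
    sample: f32,
) -> bool {
    depth_range.contains(&depth) && sample >= static_probability
}
```

With `static_probability` set to `0.0` every entity in the depth range is updated, and with `1.0` none are.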

# Objective

- Allow quick and easy testing of scenes

## Solution

- Add a `scene-viewer` tool based on `load_gltf`.

- Run it with e.g. `cargo run --release --example scene_viewer --features jpeg -- ../some/path/assets/models/Sponza/glTF/Sponza.gltf#Scene0`

- Configure the asset path as pointing to the repo root for convenience (paths specified relative to current working directory)

- Copy over the camera controller from the `shadow_biases` example

- Support toggling the light animation

- Support toggling shadows

- Support adjusting the directional light shadow projection (cascaded shadow maps will remove the need for this later)

I don't want to do too much on it up-front. Rather we can add features over time as we need them.

# Add Transform Examples

- Adding examples for moving/rotating entities (with its own section) to resolve #2400

I've stumbled upon this project and been fiddling around a little. Saw the issue and thought I might just add some examples for the proposed transformations.

Would you mind checking whether I got the gist right and suggesting anything I can improve?

# Objective

- Reduce power usage for games when not focused.

- Reduce power usage to ~0 when a desktop application is minimized (opt-in).

- Reduce power usage when focused, only updating on a `winit` event or when the user sends a redraw request. (opt-in)

https://user-images.githubusercontent.com/2632925/156904387-ec47d7de-7f06-4c6f-8aaf-1e952c1153a2.mp4

Note resource usage in the Task Manager in the above video.

## Solution

- Added a type `UpdateMode` that allows users to specify how the winit event loop is updated, without exposing winit types.

- Added two fields to `WinitConfig`, both with the `UpdateMode` type. One configures how the application updates when focused, and the other configures how the application behaves when it is not focused. Users can modify this resource manually to set the type of event loop control flow they want.

- For convenience, two functions were added to `WinitConfig`, that provide reasonable presets: `game()` (default) and `desktop_app()`.

- The `game()` preset, which is used by default, is unchanged from current behavior with one exception: when the app is out of focus the app updates at a minimum of 10fps, or every time a winit event is received. This has a huge positive impact on power use and responsiveness on my machine, which will otherwise continue running the app at many hundreds of fps when out of focus or minimized.

- The `desktop_app()` preset is fully reactive, only updating when user input (winit event) is supplied or a `RequestRedraw` event is sent. When the app is out of focus, it only updates on `Window` events - i.e. any winit event that directly interacts with the window. What this means in practice is that the app uses *zero* resources when minimized or not interacted with, but still updates fluidly when the app is out of focus and the user mouses over the application.

- Added a `RequestRedraw` event so users can force an update even if there are no events. This is useful in an application when you want to, say, run an animation even when the user isn't providing input.

- Added an example `low_power` to demonstrate these changes
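The behaviours listed above can be sketched as a small decision function. This is plain Rust and only an illustration: the variant names, the `Event` classification, and the `max_wait` field are assumptions made for this sketch, since the description above does not spell out the shape of `UpdateMode`.

```rust
use std::time::Duration;

/// Hypothetical classification of what happened since the last loop iteration.
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
pub enum Event {
    None,
    Device,
    Window,
    RedrawRequested,
}

/// Hypothetical shape for an update mode; variant names are assumptions.
#[derive(Debug, Clone, Copy, PartialEq)]
pub enum UpdateMode {
    /// Update on every loop iteration.
    Continuous,
    /// Update on any event, or once `max_wait` has elapsed.
    Reactive { max_wait: Duration },
    /// Update only on window events or redraw requests, or once `max_wait` has elapsed.
    ReactiveLowPower { max_wait: Duration },
}

/// Decides whether the app should run an update this iteration.
pub fn should_update(mode: UpdateMode, event: Event, since_last_update: Duration) -> bool {
    match mode {
        UpdateMode::Continuous => true,
        UpdateMode::Reactive { max_wait } => {
            event != Event::None || since_last_update >= max_wait
        }
        UpdateMode::ReactiveLowPower { max_wait } => {
            matches!(event, Event::Window | Event::RedrawRequested)
                || since_last_update >= max_wait
        }
    }
}
```

Under these assumptions, the unfocused `game()` behaviour ("a minimum of 10fps, or every time a winit event is received") corresponds to `Reactive` with a `max_wait` of 100 milliseconds.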

## Usage

Configuring the event loop:

```rs
use bevy::winit::WinitConfig;

// ...
.insert_resource(WinitConfig::desktop_app()) // preset
// or
.insert_resource(WinitConfig::game()) // preset
// or
.insert_resource(WinitConfig { .. }) // manual
```

Requesting a redraw:

```rs
use bevy::window::RequestRedraw;

// ...
fn request_redraw(mut event: EventWriter<RequestRedraw>) {
    event.send(RequestRedraw);
}
```

## Other details

- Because we have a single event loop for multiple windows, every time I've mentioned "focused" above, I more precisely mean, "if at least one bevy window is focused".

- Due to a platform bug in winit (https://github.com/rust-windowing/winit/issues/1619), we can't simply use `Window::request_redraw()`. As a workaround, this PR will temporarily set the window mode to `Poll` when a redraw is requested. This is then reset to the user's `WinitConfig` setting on the next frame.

# Objective

- Add ways to control how audio is played

## Solution

- playing a sound will return a (weak) handle to an asset that can be used to control playback

- if the asset is dropped, it will detach the sink (same behaviour as now)

# Objective

Will fix #3377 and #3254

## Solution

Use an enum to represent either a `WindowId` or `Handle<Image>` in place of `Camera::window`.

Co-authored-by: Carter Anderson <mcanders1@gmail.com>

# Objective

- Closes #786

- Closes #2252

- Closes #2588

This PR implements a derive macro that allows users to define their queries as structs with named fields.

## Example

```rust
#[derive(WorldQuery)]
#[world_query(derive(Debug))]
struct NumQuery<'w, T: Component, P: Component> {
    entity: Entity,
    u: UNumQuery<'w>,
    generic: GenericQuery<'w, T, P>,
}

#[derive(WorldQuery)]
#[world_query(derive(Debug))]
struct UNumQuery<'w> {
    u_16: &'w u16,
    u_32_opt: Option<&'w u32>,
}

#[derive(WorldQuery)]
#[world_query(derive(Debug))]
struct GenericQuery<'w, T: Component, P: Component> {
    generic: (&'w T, &'w P),
}

#[derive(WorldQuery)]
#[world_query(filter)]
struct NumQueryFilter<T: Component, P: Component> {
    _u_16: With<u16>,
    _u_32: With<u32>,
    _or: Or<(With<i16>, Changed<u16>, Added<u32>)>,
    _generic_tuple: (With<T>, With<P>),
    _without: Without<Option<u16>>,
    _tp: PhantomData<(T, P)>,
}

fn print_nums_readonly(query: Query<NumQuery<u64, i64>, NumQueryFilter<u64, i64>>) {
    for num in query.iter() {
        println!("{:#?}", num);
    }
}

// The mutable query does not use `T` or `P`, so it takes no type parameters.
#[derive(WorldQuery)]
#[world_query(mutable, derive(Debug))]
struct MutNumQuery<'w> {
    i_16: &'w mut i16,
    i_32_opt: Option<&'w mut i32>,
}

fn print_nums(mut query: Query<MutNumQuery, NumQueryFilter<u64, i64>>) {
    for num in query.iter_mut() {
        println!("{:#?}", num);
    }
}
```

## TODOs:

- [x] Add support for `&T` and `&mut T`
  - [x] Test
- [x] Add support for optional types
  - [x] Test
- [x] Add support for `Entity`
  - [x] Test
- [x] Add support for nested `WorldQuery`
  - [x] Test
- [x] Add support for tuples
  - [x] Test
- [x] Add support for generics
  - [x] Test
- [x] Add support for query filters
  - [x] Test
- [x] Add support for `PhantomData`
  - [x] Test

- [x] Refactor `read_world_query_field_type_info`

- [x] Properly document `readonly` attribute for nested queries and the static assertions that guarantee safety

- [x] Test that we never implement `ReadOnlyFetch` for types that need mutable access

- [x] Test that we insert static assertions for nested `WorldQuery` that a user marked as readonly

This PR makes a number of changes to how meshes and vertex attributes are handled, with the goal of enabling easy and flexible custom vertex attributes:

* Reworks the `Mesh` type to use the newly added `VertexAttribute` internally

* `VertexAttribute` defines the name, a unique `VertexAttributeId`, and a `VertexFormat`

* `VertexAttributeId` is used to produce consistent sort orders for vertex buffer generation, replacing the more expensive and often surprising "name based sorting"

* Meshes can be used to generate a `MeshVertexBufferLayout`, which defines the layout of the gpu buffer produced by the mesh. `MeshVertexBufferLayouts` can then be used to generate actual `VertexBufferLayouts` according to the requirements of a specific pipeline. This decoupling of "mesh layout" vs "pipeline vertex buffer layout" is what enables custom attributes. We don't need to standardize _mesh layouts_ or contort meshes to meet the needs of a specific pipeline. As long as the mesh has what the pipeline needs, it will work transparently.

* Mesh-based pipelines now specialize on `&MeshVertexBufferLayout` via the new `SpecializedMeshPipeline` trait (which behaves like `SpecializedPipeline`, but adds `&MeshVertexBufferLayout`). The integrity of the pipeline cache is maintained because the `MeshVertexBufferLayout` is treated as part of the key (which is fully abstracted from implementers of the trait ... no need to add any additional info to the specialization key).

* Hashing `MeshVertexBufferLayout` is too expensive to do for every entity, every frame. To make this scalable, I added a generalized "pre-hashing" solution to `bevy_utils`: `Hashed<T>` keys and `PreHashMap<K, V>` (which uses `Hashed<T>` internally). Why didn't I just do the quick and dirty in-place "pre-compute hash and use that u64 as a key in a hashmap" that we've done in the past? Because it's wrong! Hashes by themselves aren't enough because two different values can produce the same hash. Re-hashing a hash is even worse! I decided to build a generalized solution because this pattern has come up in the past and we've chosen to do the wrong thing. Now we can do the right thing! This did unfortunately require pulling in `hashbrown` and using that in `bevy_utils`, because avoiding re-hashes requires the `raw_entry_mut` api, which isn't stabilized yet (and may never be ... `entry_ref` has favor now, but also isn't available yet). If std's HashMap ever provides the tools we need, we can move back to that. Note that adding `hashbrown` doesn't increase our dependency count because it was already in our tree. I will probably break these changes out into their own PR.

* Specializing on `MeshVertexBufferLayout` has one non-obvious behavior: it can produce identical pipelines for two different MeshVertexBufferLayouts. To optimize the number of active pipelines / reduce re-binds while drawing, I de-duplicate pipelines post-specialization using the final `VertexBufferLayout` as the key. For example, consider a pipeline that needs the layout `(position, normal)` and is specialized using two meshes: `(position, normal, uv)` and `(position, normal, other_vec2)`. If both of these meshes result in `(position, normal)` specializations, we can use the same pipeline! Now we do. Cool!
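To make the pre-hashing idea from the list above more concrete, here is a minimal sketch using only the standard library. It is not the `bevy_utils` implementation and does not use `hashbrown` or `raw_entry_mut`. It only shows the two properties the text argues for: the hash is computed once and stored next to the value, and equality still compares the values so hash collisions stay correct. `PassThroughHasher` and `precomputed_hash` are hypothetical names.

```rust
use std::collections::hash_map::DefaultHasher;
use std::collections::HashMap;
use std::hash::{BuildHasherDefault, Hash, Hasher};

/// A value stored together with its hash, which is computed once at construction.
/// Equality compares both the hash and the value, so collisions remain correct.
#[derive(Debug, Clone, PartialEq, Eq)]
pub struct Hashed<T> {
    hash: u64,
    value: T,
}

impl<T: Hash> Hashed<T> {
    pub fn new(value: T) -> Self {
        let mut hasher = DefaultHasher::new();
        value.hash(&mut hasher);
        Self { hash: hasher.finish(), value }
    }

    pub fn precomputed_hash(&self) -> u64 {
        self.hash
    }
}

impl<T> Hash for Hashed<T> {
    /// Feeds the stored hash to the hasher instead of hashing the value again.
    fn hash<H: Hasher>(&self, state: &mut H) {
        state.write_u64(self.hash);
    }
}

/// Hasher that passes a single pre-computed `u64` straight through.
#[derive(Default)]
pub struct PassThroughHasher(u64);

impl Hasher for PassThroughHasher {
    fn write(&mut self, _bytes: &[u8]) {
        panic!("only pre-hashed u64 keys are supported");
    }

    fn write_u64(&mut self, value: u64) {
        self.0 = value;
    }

    fn finish(&self) -> u64 {
        self.0
    }
}

/// A map keyed by pre-hashed values; lookups never re-hash the underlying value.
pub type PreHashMap<K, V> = HashMap<Hashed<K>, V, BuildHasherDefault<PassThroughHasher>>;
```

Creating the `Hashed` key once (for example when a mesh layout is built) and reusing it for every lookup is what keeps the per-entity, per-frame cost low.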

To briefly illustrate, this is what the relevant section of `MeshPipeline`'s specialization code looks like now:

```rust
impl SpecializedMeshPipeline for MeshPipeline {
    type Key = MeshPipelineKey;

    fn specialize(
        &self,
        key: Self::Key,
        layout: &MeshVertexBufferLayout,
    ) -> RenderPipelineDescriptor {
        let mut vertex_attributes = vec![
            Mesh::ATTRIBUTE_POSITION.at_shader_location(0),
            Mesh::ATTRIBUTE_NORMAL.at_shader_location(1),
            Mesh::ATTRIBUTE_UV_0.at_shader_location(2),
        ];

        let mut shader_defs = Vec::new();
        if layout.contains(Mesh::ATTRIBUTE_TANGENT) {
            shader_defs.push(String::from("VERTEX_TANGENTS"));
            vertex_attributes.push(Mesh::ATTRIBUTE_TANGENT.at_shader_location(3));
        }

        let vertex_buffer_layout = layout
            .get_layout(&vertex_attributes)
            .expect("Mesh is missing a vertex attribute");
        // ... (the rest of the function is omitted in this excerpt)
```

Notice that this is _much_ simpler than it was before. And now any mesh with any layout can be used with this pipeline, provided it has vertex positions, normals, and uvs. We even got to remove `HAS_TANGENTS` from MeshPipelineKey and `has_tangents` from `GpuMesh`, because that information is redundant with `MeshVertexBufferLayout`.
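To make the "any mesh with any layout" point and the post-specialization de-duplication concrete, here is a toy model using only the standard library. Every type in it (`MeshLayout`, `VertexBufferLayout`, `PipelineCache`) is a simplified stand-in invented for this sketch, not the Bevy type of the same or similar name; attribute sizes in bytes are assumed values.

```rust
use std::collections::HashMap;

/// Toy mesh layout: (attribute name, size in bytes), in buffer order.
pub struct MeshLayout(pub Vec<(&'static str, u64)>);

/// The part of a layout that a pipeline actually consumes.
#[derive(Debug, Clone, PartialEq, Eq, Hash)]
pub struct VertexBufferLayout {
    pub stride: u64,
    /// (shader location, byte offset) for each requested attribute.
    pub attributes: Vec<(u32, u64)>,
}

impl MeshLayout {
    /// Resolve the requested attributes, or return the first one the mesh lacks.
    pub fn get_layout(
        &self,
        requested: &[(&'static str, u32)],
    ) -> Result<VertexBufferLayout, &'static str> {
        let stride = self.0.iter().map(|(_, size)| size).sum();
        let mut attributes = Vec::new();
        for &(name, location) in requested {
            let mut offset = 0;
            let mut found = false;
            for &(mesh_attribute, size) in &self.0 {
                if mesh_attribute == name {
                    found = true;
                    break;
                }
                offset += size;
            }
            if !found {
                return Err(name);
            }
            attributes.push((location, offset));
        }
        Ok(VertexBufferLayout { stride, attributes })
    }
}

/// De-duplicates pipelines using the final vertex buffer layout as the key.
#[derive(Default)]
pub struct PipelineCache {
    by_layout: HashMap<VertexBufferLayout, usize>,
}

impl PipelineCache {
    /// Returns an existing pipeline id for an identical layout, or allocates a new one.
    pub fn specialize(&mut self, layout: VertexBufferLayout) -> usize {
        let next_id = self.by_layout.len();
        *self.by_layout.entry(layout).or_insert(next_id)
    }
}
```

In this model, a `(position, normal, uv)` mesh and a `(position, normal, other_vec2)` mesh that are both specialized for `(position, normal)` resolve to the same `VertexBufferLayout` and therefore share one pipeline id, while a mesh lacking `normal` yields an error instead of a pipeline.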

This is still a draft because I still need to:

* Add more docs

* Experiment with adding error handling to mesh pipeline specialization (which would print errors at runtime when a mesh is missing a vertex attribute required by a pipeline). If it doesn't tank perf, we'll keep it.

* Consider breaking out the PreHash / hashbrown changes into a separate PR.

* Add an example illustrating this change

* Verify that the "mesh-specialized pipeline de-duplication code" works properly

Please don't yell at me for not doing these things yet :) Just trying to get this in people's hands asap.

Alternative to #3120

Fixes #3030

Co-authored-by: Carter Anderson <mcanders1@gmail.com>

# Objective

My attempt at fixing #2142. My very first attempt at contributing to Bevy so more than open to any feedback.

I borrowed heavily from the [Bevy Cheatbook page](https://bevy-cheatbook.github.io/patterns/generic-systems.html?highlight=generic#generic-systems).

## Solution

Fairly straightforward example using a clean up system to delete entities that are coupled with app state after exiting that state.
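The pattern can be modelled without Bevy as a function that is generic over a marker type. This is only a toy sketch: the `Vec<Entity>` world, the `spawn` helper, and the marker names are hypothetical stand-ins, whereas the actual example uses Bevy's ECS and state machinery.

```rust
use std::any::TypeId;

/// Marker types, one per app state (hypothetical names).
pub struct OnMenuScreen;
pub struct OnGameScreen;

/// Toy entity: a label plus the marker it was tagged with when spawned.
pub struct Entity {
    pub label: &'static str,
    pub marker: TypeId,
}

/// Spawn an entity tagged with the marker type `T`.
pub fn spawn<T: 'static>(world: &mut Vec<Entity>, label: &'static str) {
    world.push(Entity { label, marker: TypeId::of::<T>() });
}

/// Generic clean-up: remove every entity tagged with marker `T`.
/// One function body serves every state; only the type parameter changes.
pub fn cleanup<T: 'static>(world: &mut Vec<Entity>) {
    world.retain(|entity| entity.marker != TypeId::of::<T>());
}
```

Calling `cleanup::<OnMenuScreen>(&mut world)` when leaving the menu state removes the menu entities and leaves everything else in place.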

Co-authored-by: B-Janson <brandon@canva.com>

Add two examples on how to communicate with a task that is running either in another thread or in a thread from `AsyncComputeTaskPool`.

Loosely based on https://github.com/bevyengine/bevy/discussions/1150
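As a rough illustration of the "other thread" case, the sketch below uses a pair of `std::sync::mpsc` channels to talk to a plain `std::thread` worker. It does not use `AsyncComputeTaskPool`, and `spawn_squarer` is a made-up name for this sketch; the examples in the PR may be structured differently.

```rust
use std::sync::mpsc;
use std::thread;

/// Spawn a worker that squares each number it receives and sends the result back.
/// Returns the sender for requests, the receiver for results, and the join handle.
pub fn spawn_squarer() -> (mpsc::Sender<u32>, mpsc::Receiver<u32>, thread::JoinHandle<()>) {
    let (to_worker, from_main) = mpsc::channel::<u32>();
    let (to_main, from_worker) = mpsc::channel::<u32>();

    let handle = thread::spawn(move || {
        // The loop ends once the main side drops its sender.
        for n in from_main {
            if to_main.send(n * n).is_err() {
                // The main side stopped listening; nothing left to do.
                break;
            }
        }
    });

    (to_worker, from_worker, handle)
}
```

In a game loop, the receiving side would typically poll with `try_recv` once per frame instead of blocking on `recv`, so that a slow task never stalls rendering.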

## Objective

There is no Bevy example whose sole purpose is to show how to transform a sprite. This PR adds an example of how to use transform.translate to move a sprite up and down. The last pull request had issues that I couldn't fix, so I created a new one.

### Solution

I created move_sprite example.

Co-authored-by: Carter Anderson <mcanders1@gmail.com>

# Objective

Some new bevy users are unfamiliar with quaternions and have trouble working with rotations in 2D.

There has been an [issue](https://github.com/bitshifter/glam-rs/issues/226) raised with glam to add helpers to better support these users; however, for now I feel it could be better to provide examples of how to do this in Bevy as a starting point for new users.

## Solution

I've added a 2d_rotation example which demonstrates 3 different rotation examples to try to help get people started:

- Rotating and translating a player ship based on keyboard input

- An enemy ship type that rotates to face the player ship immediately

- An enemy ship type that rotates to face the player at a fixed angular velocity

I also have a standalone version of this example here https://github.com/bitshifter/bevy-2d-rotation-example but I think it would be more discoverable if it's included with Bevy.
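The third case in the list above, turning toward the player at a fixed angular velocity, comes down to clamping the signed angle difference each frame. The sketch below works on plain angles in radians rather than quaternions, and its function names are invented for illustration; it is not the code from the example.

```rust
use std::f32::consts::PI;

/// Wrap an angle into the range (-PI, PI].
pub fn wrap_angle(angle: f32) -> f32 {
    let mut a = angle % (2.0 * PI);
    if a > PI {
        a -= 2.0 * PI;
    } else if a <= -PI {
        a += 2.0 * PI;
    }
    a
}

/// Heading in radians (counter-clockwise from +X) that points from `from` to `to`.
pub fn heading_to(from: (f32, f32), to: (f32, f32)) -> f32 {
    (to.1 - from.1).atan2(to.0 - from.0)
}

/// Turn `current` toward `target` by at most `max_speed * dt` radians,
/// always taking the shorter way around the circle.
pub fn rotate_towards(current: f32, target: f32, max_speed: f32, dt: f32) -> f32 {
    let delta = wrap_angle(target - current);
    let max_step = max_speed * dt;
    wrap_angle(current + delta.clamp(-max_step, max_step))
}
```

The "rotate to face the player immediately" case is the same computation with no clamp: the new heading is simply `heading_to(enemy, player)`.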

# Objective

- There are wasm specific examples, which is misleading as now it works by default

- I saw a few people on discord trying to work through those examples that are very limited

## Solution

- Remove them and update the instructions

Adds an example using UI for something more related to a game than the current UI examples.

Example with a game menu:

* new game - will display settings for 5 seconds before returning to menu

* preferences - can modify the settings, with two sub menus

* quit - will quit the game

I wanted a more complex UI example before starting the UI rewrite to have ground for comparison

Co-authored-by: François <8672791+mockersf@users.noreply.github.com>

# Objective

In this PR I added the ability to opt out of graphical backends. Closes #3155.

## Solution

I turned backends into `Option` ~~and removed panicking sub app API to force users handle the error (was suggested by `@cart`)~~.

# Objective

The current 2d rendering is specialized to render sprites, we need a generic way to render 2d items, using meshes and materials like we have for 3d.

## Solution

I cloned a good part of `bevy_pbr` into `bevy_sprite/src/mesh2d`, removed lighting and pbr itself, adapted it to 2d rendering, added a `ColorMaterial`, and modified the sprite rendering to break batches around 2d meshes.

~~The PR is a bit crude; I tried to change as little as I could in both the parts copied from 3d and the current sprite rendering to make reviewing easier. In the future, I expect we could make the sprite rendering a normal 2d material, cleanly integrated with the rest.~~ _edit: see <https://github.com/bevyengine/bevy/pull/3460#issuecomment-1003605194>_

## Remaining work

- ~~don't require mesh normals~~ _out of scope_

- ~~add an example~~ _done_

- support 2d meshes & materials in the UI?

- bikeshed names (I didn't think hard about naming, please check if it's fine)

## Remaining questions

- ~~should we add a depth buffer to 2d now that there are 2d meshes?~~ _let's revisit that when we have an opaque render phase_

- ~~should we add MSAA support to the sprites, or remove it from the 2d meshes?~~ _I added MSAA to sprites since it's really needed for 2d meshes_

- ~~how to customize vertex attributes?~~ _#3120_

Co-authored-by: Carter Anderson <mcanders1@gmail.com>

# Objective

- The multiple windows example which was viciously murdered in #3175.

- cart asked me to

## Solution

- Rework the example to work on pipelined-rendering, based on the work from #2898

# Objective

Every time I come back to Bevy I face the same issue: how do I draw a rectangle again? How did that work? So I go to https://github.com/bevyengine/bevy/tree/main/examples in the hope of finding literally the simplest possible example that draws something on the screen without any dependency such as an image. I don't want to have to add some image first, I just quickly want to get something on the screen with `main.rs` alone so that I can continue building on from that point on. Such an example is particularly helpful for a quick start for smaller projects that don't even need any assets such as images (this is my case currently).

Currently every single example of https://github.com/bevyengine/bevy/tree/main/examples#2d-rendering (which is the first section after hello world that beginners will look for for very minimalistic and quick examples) depends on at least an asset or is too complex. This PR solves this.

It also serves as a great comparison for a beginner to realize what Bevy is really like and how different it is from what they may expect Bevy to be. For example for someone coming from [LÖVE](https://love2d.org/), they will have something like this in their head when they think of drawing a rectangle:

```lua
function love.draw()
    love.graphics.setColor(0.25, 0.25, 0.75);
    love.graphics.rectangle("fill", 0, 0, 50, 50);
end
```

This, of course, differs quite a lot from what you do in Bevy. I imagine there will be people that just want to see something as simple as this in comparison to have a better understanding for the amount of differences.

## Solution

Add a dead simple example drawing a blue 50x50 rectangle in the center with no more and no less than needed.

# Objective

This PR fixes a crash when winit is enabled when there is a camera in the world. Part of #3155

## Solution

In this PR, I removed two unwraps and added an example for regression testing.

This makes the [New Bevy Renderer](#2535) the default (and only) renderer. The new renderer isn't _quite_ ready for the final release yet, but I want as many people as possible to start testing it so we can identify bugs and address feedback prior to release.

The examples are all ported over and operational with a few exceptions:

* I removed a good portion of the examples in the `shader` folder. We still have some work to do in order to make these examples possible / ergonomic / worthwhile: #3120 and "high level shader material plugins" are the big ones. This is a temporary measure.

* Temporarily removed the multiple_windows example: doing this properly in the new renderer will require the upcoming "render targets" changes. Same goes for the render_to_texture example.

* Removed z_sort_debug: entity visibility sort info is no longer available in app logic. We could do this on the "render app" side, but I don't consider it a priority.

# Objective

Port bevy_ui to pipelined-rendering (see #2535 )

## Solution

I did some changes during the port:

- [X] separate color from the texture asset (as suggested [here](https://discord.com/channels/691052431525675048/743663924229963868/874353914525413406))

- [X] ~give the vertex shader a per-instance buffer instead of per-vertex buffer~ (incompatible with batching)

Remaining features to implement to reach parity with the old renderer:

- [x] textures

- [X] TextBundle

I'd also like to add these features, but they need some design discussion:

- [x] batching

- [ ] separate opaque and transparent phases

- [ ] multiple windows

- [ ] texture atlases

- [ ] (maybe) clipping

Apologies, I had to recreate this PR because of a branching issue.

Old PR: https://github.com/bevyengine/bevy/pull/3033

# Objective

Fixes #3032

Allowing a user to create a transparent window

## Solution

I've allowed the transparent bool to be passed to the winit window builder

# Objective

- Remove `cargo-lipo` as [it's deprecated](https://github.com/TimNN/cargo-lipo#maintenance-status) and doesn't work on new Apple processors

- Fix CI that will fail as soon as GitHub update the worker used by Bevy to macOS 11

## Solution

- Replace `cargo-lipo` with building with the correct target

- Setup the correct path to libraries by using `xcrun --show-sdk-path`

- Also try and fix path to cmake in case it's not found but available through homebrew

Add an example that demonstrates the difference between no MSAA and MSAA 4x. This is also useful for testing panics when resizing the window using MSAA. This is on top of #3042 .

Co-authored-by: Carter Anderson <mcanders1@gmail.com>

## New Features

This adds the following to the new renderer:

* **Shader Assets**

* Shaders are assets again! Users no longer need to call `include_str!` for their shaders

* Shader hot-reloading

* **Shader Defs / Shader Preprocessing**

* Shaders now support `# ifdef NAME`, `# ifndef NAME`, and `# endif` preprocessor directives

* **Bevy RenderPipelineDescriptor and RenderPipelineCache**

* Bevy now provides its own `RenderPipelineDescriptor` and the wgpu version is now exported as `RawRenderPipelineDescriptor`. This allows users to define pipelines with `Handle<Shader>` instead of needing to manually compile and reference `ShaderModules`, enables passing in shader defs to configure the shader preprocessor, makes hot reloading possible (because the descriptor can be owned and used to create new pipelines when a shader changes), and opens the doors to pipeline specialization.

* The `RenderPipelineCache` now handles compiling and re-compiling Bevy RenderPipelineDescriptors. It has internal PipelineLayout and ShaderModule caches. Users receive a `CachedPipelineId`, which can be used to look up the actual `&RenderPipeline` during rendering.

* **Pipeline Specialization**

* This enables defining per-entity-configurable pipelines that specialize on arbitrary custom keys. In practice this will involve specializing based on things like MSAA values, Shader Defs, Bind Group existence, and Vertex Layouts.

* Adds a `SpecializedPipeline` trait and `SpecializedPipelines<MyPipeline>` resource. This is a simple layer that generates Bevy RenderPipelineDescriptors based on a custom key defined for the pipeline.

* Specialized pipelines are also hot-reloadable.

* This was the result of experimentation with two different approaches:

1. **"generic immediate mode multi-key hash pipeline specialization"**

* breaks up the pipeline into multiple "identities" (the core pipeline definition, shader defs, mesh layout, bind group layout). each of these identities has its own key. looking up / compiling a specific version of a pipeline requires composing all of these keys together

* the benefit of this approach is that it works for all pipelines / the pipeline is fully identified by the keys. the multiple keys allow pre-hashing parts of the pipeline identity where possible (ex: pre compute the mesh identity for all meshes)

* the downside is that any per-entity data that informs the values of these keys could require expensive re-hashes. computing each key for each sprite tanked bevymark performance (sprites don't actually need this level of specialization yet ... but things like pbr and future sprite scenarios might).

* this is the approach rafx used last time i checked

2. **"custom key specialization"**

* Pipelines by default are not specialized

* Pipelines that need specialization implement a SpecializedPipeline trait with a custom key associated type

* This allows specialization keys to encode exactly the amount of information required (instead of needing to be a combined hash of the entire pipeline). Generally this should fit in a small number of bytes. Per-entity specialization barely registers anymore on things like bevymark. It also makes things like "shader defs" way cheaper to hash because we can use context specific bitflags instead of strings.

* Despite the extra trait, it actually generally makes pipeline definitions + lookups simpler: managing multiple keys (and making the appropriate calls to manage these keys) was way more complicated.

* I opted for custom key specialization. It performs better generally and in my opinion is better UX. Fortunately the way this is implemented also allows for custom caches as this all builds on a common abstraction: the RenderPipelineCache. The built in custom key trait is just a simple / pre-defined way to interact with the cache
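A minimal, standard-library-only sketch of the "custom key specialization" approach is shown below. The trait and resource names follow the text, but the signatures, the toy `PipelineDescriptor`, and the `SpritePipeline` / `SpriteKey` pair are assumptions made for illustration; the real types are built on `RenderPipelineCache` and differ in detail.

```rust
use std::collections::HashMap;
use std::hash::Hash;

/// Toy descriptor standing in for a full render pipeline description.
#[derive(Debug, Clone, PartialEq)]
pub struct PipelineDescriptor {
    pub shader_defs: Vec<String>,
    pub sample_count: u32,
}

/// A pipeline that can be specialized on a small custom key.
pub trait SpecializedPipeline {
    type Key: Clone + Hash + Eq;
    fn specialize(&self, key: Self::Key) -> PipelineDescriptor;
}

/// Caches one specialized pipeline per key.
pub struct SpecializedPipelines<P: SpecializedPipeline> {
    cache: HashMap<P::Key, usize>,
    pub compiled: Vec<PipelineDescriptor>,
}

impl<P: SpecializedPipeline> SpecializedPipelines<P> {
    pub fn new() -> Self {
        Self { cache: HashMap::new(), compiled: Vec::new() }
    }

    /// Returns the id for `key`, specializing the pipeline only on first use.
    pub fn specialize(&mut self, pipeline: &P, key: P::Key) -> usize {
        if let Some(&id) = self.cache.get(&key) {
            return id;
        }
        let id = self.compiled.len();
        self.compiled.push(pipeline.specialize(key.clone()));
        self.cache.insert(key, id);
        id
    }
}

/// Key packed into bitflags, so hashing it is just hashing one u32.
#[derive(Clone, Copy, PartialEq, Eq, Hash)]
pub struct SpriteKey(pub u32);

impl SpriteKey {
    pub const MSAA_4X: u32 = 1 << 0;
    pub const COLORED: u32 = 1 << 1;
}

pub struct SpritePipeline;

impl SpecializedPipeline for SpritePipeline {
    type Key = SpriteKey;

    fn specialize(&self, key: SpriteKey) -> PipelineDescriptor {
        let mut shader_defs = Vec::new();
        if key.0 & SpriteKey::COLORED != 0 {
            shader_defs.push("COLORED".to_string());
        }
        let sample_count = if key.0 & SpriteKey::MSAA_4X != 0 { 4 } else { 1 };
        PipelineDescriptor { shader_defs, sample_count }
    }
}
```

The key carries only the bits that change the resulting pipeline, which is why per-entity lookups stay cheap: the shader def strings are produced once per distinct key, not once per entity.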

## Callouts

* The SpecializedPipeline trait makes it easy to inherit pipeline configuration in custom pipelines. The changes to `custom_shader_pipelined` and the new `shader_defs_pipelined` example illustrate how much simpler it is to define custom pipelines based on the PbrPipeline.

* The shader preprocessor is currently pretty naive (it just uses regexes to process each line). Ultimately we might want to build a more custom parser for more performance + better error handling, but for now I'm happy to optimize for "easy to implement and understand".
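For a feel of what a naive, line-by-line preprocessor involves, here is a small sketch that handles the three directives listed earlier. It uses plain string matching instead of regexes, so it is not the implementation from this PR, and the `preprocess` function and its error messages are invented for illustration.

```rust
use std::collections::HashSet;

/// Keep only the lines whose enclosing `#ifdef` / `#ifndef` blocks are all active.
/// Whitespace between `#` and the directive name is accepted.
pub fn preprocess(source: &str, defs: &HashSet<&str>) -> Result<String, String> {
    // One entry per open block: true if that block's own condition holds.
    let mut scopes: Vec<bool> = Vec::new();
    let mut out = String::new();

    for line in source.lines() {
        if let Some(rest) = line.trim().strip_prefix('#') {
            let mut words = rest.split_whitespace();
            match (words.next(), words.next()) {
                (Some("ifdef"), Some(name)) => {
                    scopes.push(defs.contains(name));
                    continue;
                }
                (Some("ifndef"), Some(name)) => {
                    scopes.push(!defs.contains(name));
                    continue;
                }
                (Some("endif"), _) => {
                    if scopes.pop().is_none() {
                        return Err("#endif without a matching #ifdef".to_string());
                    }
                    continue;
                }
                // Any other line starting with '#' is passed through untouched.
                _ => {}
            }
        }
        // A line is emitted only if every enclosing block is active.
        if scopes.iter().all(|active| *active) {
            out.push_str(line);
            out.push('\n');
        }
    }

    if scopes.is_empty() {
        Ok(out)
    } else {
        Err("missing #endif".to_string())
    }
}
```

Tracking the open blocks on a stack is what makes nested conditionals work, and it is also the natural place to hang `else` and `elif` later.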

## Next Steps

* Port compute pipelines to the new system

* Add more preprocessor directives (else, elif, import)

* More flexible vertex attribute specialization / enable cheaply specializing on specific mesh vertex layouts

This changes how render logic is composed to make it much more modular. Previously, all extraction logic was centralized for a given "type" of rendered thing. For example, we extracted meshes into a vector of ExtractedMesh, which contained the mesh and material asset handles, the transform, etc. We looked up bindings for "drawn things" using their index in the `Vec<ExtractedMesh>`. This worked fine for built in rendering, but made it hard to reuse logic for "custom" rendering. It also prevented us from reusing things like "extracted transforms" across contexts.

To make rendering more modular, I made a number of changes:

* Entities now drive rendering:

* We extract "render components" from "app components" and store them _on_ entities. No more centralized uber lists! We now have true "ECS-driven rendering"

* To make this perform well, I implemented #2673 in upstream Bevy for fast batch insertions into specific entities. This was merged into the `pipelined-rendering` branch here: #2815

* Reworked the `Draw` abstraction:

* Generic `PhaseItems`: each draw phase can define its own type of "rendered thing", which can define its own "sort key"

* Ported the 2d, 3d, and shadow phases to the new PhaseItem impl (currently Transparent2d, Transparent3d, and Shadow PhaseItems)

* `Draw` trait and `DrawFunctions` are now generic on PhaseItem

* Modular / Ergonomic `DrawFunctions` via `RenderCommands`

* RenderCommand is a trait that runs an ECS query and produces one or more RenderPass calls. Types implementing this trait can be composed to create a final DrawFunction. For example the DrawPbr DrawFunction is created from the following DrawCommand tuple. Const generics are used to set specific bind group locations:

```rust
pub type DrawPbr = (
    SetPbrPipeline,
    SetMeshViewBindGroup<0>,
    SetStandardMaterialBindGroup<1>,
    SetTransformBindGroup<2>,
    DrawMesh,
);
```

* The new `custom_shader_pipelined` example illustrates how the commands above can be reused to create a custom draw function:

```rust
type DrawCustom = (
    SetCustomMaterialPipeline,
    SetMeshViewBindGroup<0>,
    SetTransformBindGroup<2>,
    DrawMesh,
);
```

* ExtractComponentPlugin and UniformComponentPlugin:

* Simple, standardized ways to easily extract individual components and write them to GPU buffers

* Ported PBR and Sprite rendering to the new primitives above.

* Removed staging buffer from UniformVec in favor of direct Queue usage

* Makes UniformVec much easier to use and more ergonomic. Completely removes the need for custom render graph nodes in these contexts (see the PbrNode and view Node removals and the much simpler call patterns in the relevant Prepare systems).

* Added a many_cubes_pipelined example to benchmark baseline 3d rendering performance and ensure there were no major regressions during this port. Avoiding regressions was challenging given that the old approach of extracting into centralized vectors is basically the "optimal" approach. However thanks to various ECS optimizations and render logic rephrasing, we pretty much break even on this benchmark!

* Lifetimeless SystemParams: this will be a bit divisive, but as we continue to embrace "trait driven systems" (ex: ExtractComponentPlugin, UniformComponentPlugin, DrawCommand), the ergonomics of `(Query<'static, 'static, (&'static A, &'static B)>, Res<'static, C>)` were getting very hard to bear. As a compromise, I added "static type aliases" for the relevant SystemParams. The previous example can now be expressed like this: `(SQuery<(Read<A>, Read<B>)>, SRes<C>)`. If anyone has better ideas / conflicting opinions, please let me know!

* RunSystem trait: a way to define Systems via a trait with a SystemParam associated type. This is used to implement the various plugins mentioned above. I also added SystemParamItem and QueryItem type aliases to make "trait style" ecs interactions nicer on the eyes (and fingers).

* RenderAsset retrying: ensures that render assets are only created when they are "ready" and allows us to create bind groups directly inside render assets (which significantly simplified the StandardMaterial code). I think ultimately we should swap this out on "asset dependency" events to wait for dependencies to load, but this will require significant asset system changes.

* Updated some built in shaders to account for missing MeshUniform fields
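The tuple-based `RenderCommand` composition described in this list, with const generics selecting bind group slots, can be modelled in a few lines of plain Rust. This is a toy sketch: the `RenderPass` here just records call names, the trait has no ECS query, and only 4-tuples are supported, all of which are simplifications assumed for illustration.

```rust
/// Toy render pass that records the calls made on it.
#[derive(Default)]
pub struct RenderPass {
    pub calls: Vec<String>,
}

/// One composable step of a draw function.
pub trait RenderCommand {
    fn render(pass: &mut RenderPass);
}

pub struct SetPipeline;
impl RenderCommand for SetPipeline {
    fn render(pass: &mut RenderPass) {
        pass.calls.push("set_pipeline".to_string());
    }
}

/// The const generic parameter selects the bind group slot.
pub struct SetBindGroup<const I: usize>;
impl<const I: usize> RenderCommand for SetBindGroup<I> {
    fn render(pass: &mut RenderPass) {
        pass.calls.push(format!("set_bind_group({})", I));
    }
}

pub struct DrawMesh;
impl RenderCommand for DrawMesh {
    fn render(pass: &mut RenderPass) {
        pass.calls.push("draw_mesh".to_string());
    }
}

/// A tuple of commands is itself a command that runs its members in order.
impl<A, B, C, D> RenderCommand for (A, B, C, D)
where
    A: RenderCommand,
    B: RenderCommand,
    C: RenderCommand,
    D: RenderCommand,
{
    fn render(pass: &mut RenderPass) {
        A::render(pass);
        B::render(pass);
        C::render(pass);
        D::render(pass);
    }
}

/// A draw function assembled purely at the type level.
pub type DrawCustom = (SetPipeline, SetBindGroup<0>, SetBindGroup<2>, DrawMesh);
```

Because the composition lives in the type, swapping one step (for example a different pipeline setter) produces a new draw function without touching the other commands.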

This updates the `pipelined-rendering` branch to use the latest `bevy_ecs` from `main`. This accomplishes a couple of goals:

1. prepares for upcoming `custom-shaders` branch changes, which were what drove many of the recent bevy_ecs changes on `main`

2. prepares for the soon-to-happen merge of `pipelined-rendering` into `main`. By including bevy_ecs changes now, we make that merge simpler / easier to review.

I split this up into 3 commits:

1. **add upstream bevy_ecs**: please don't bother reviewing this content. it has already received thorough review on `main` and is a literal copy/paste of the relevant folders (the old folders were deleted so the directories are literally exactly the same as `main`).

2. **support manual buffer application in stages**: this is used to enable the Extract step. we've already reviewed this once on the `pipelined-rendering` branch, but it's worth looking at one more time in the new context of (1).

3. **support manual archetype updates in QueryState**: same situation as (2).

# Objective

Forward perspective projections have poor floating point precision distribution over the depth range. Reverse projections fare much better, and with a reverse projection an infinite far plane is not a problem, so no far plane is needed. The infinite reverse perspective projection has become the industry standard. The renderer rework is a great time to migrate to it.

## Solution

All perspective projections, including point lights, have been moved to using `glam::Mat4::perspective_infinite_reverse_rh()` and so have no far plane. As various depth textures are shared between orthographic and perspective projections, a quirk of this PR is that the near and far planes of the orthographic projection are swapped when the Mat4 is computed. This has no impact on 2D/3D orthographic projection usage, and provides consistency in shaders, texture clear values, etc. throughout the codebase.
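To see why no far plane is needed, it helps to write the matrix out. The sketch below builds a right-handed, infinite, reverse-Z perspective matrix by hand and computes the resulting depth for a view-space point. It is intended to mirror what `glam::Mat4::perspective_infinite_reverse_rh()` produces, but it is written from the formula rather than copied from glam, so treat it as an assumption to check against the library.

```rust
/// Column-major 4x4 matrix for a right-handed, infinite, reverse-Z perspective
/// projection. `m[c][r]` is column `c`, row `r`.
pub fn perspective_infinite_reverse_rh(fov_y: f32, aspect: f32, z_near: f32) -> [[f32; 4]; 4] {
    let f = 1.0 / (0.5 * fov_y).tan();
    [
        [f / aspect, 0.0, 0.0, 0.0],
        [0.0, f, 0.0, 0.0],
        [0.0, 0.0, 0.0, -1.0],
        [0.0, 0.0, z_near, 0.0],
    ]
}

/// Depth after the perspective divide for a view-space point
/// (the camera looks along -Z, so visible points have negative z).
pub fn ndc_depth(m: &[[f32; 4]; 4], view: [f32; 3]) -> f32 {
    let p = [view[0], view[1], view[2], 1.0];
    // Row r of (M * p) is the sum over columns c of m[c][r] * p[c].
    let clip_z: f32 = (0..4).map(|c| m[c][2] * p[c]).sum();
    let clip_w: f32 = (0..4).map(|c| m[c][3] * p[c]).sum();
    clip_z / clip_w
}
```

With this matrix the depth works out to `z_near / -z_view`: it is 1 at the near plane and approaches 0 as the point moves toward infinity, so distant geometry lands near 0, where floating point values are most densely spaced.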

## Known issues

For some reason, when looking along -Z, all geometry is black. The camera can be translated up/down / strafed left/right and geometry will still be black. Moving forward/backward or rotating the camera away from looking exactly along -Z causes everything to work as expected.

I have tried to debug this issue but both in macOS and Windows I get crashes when doing pixel debugging. If anyone could reproduce this and debug it I would be very grateful. Otherwise I will have to try to debug it further without pixel debugging, though the projections and such all looked fine to me.

# Objective

Allow marking meshes as not casting / receiving shadows.

## Solution

- Added `NotShadowCaster` and `NotShadowReceiver` zero-sized type components.

- Extract these components into `bool`s in `ExtractedMesh`

- Only generate `DrawShadowMesh` `Drawable`s for meshes _without_ `NotShadowCaster`

- Add a `u32` bit `flags` member to `MeshUniform` with one flag indicating whether the mesh is a shadow receiver

- If a mesh does _not_ have the `NotShadowReceiver` component, then it is a shadow receiver, and so the bit in the `MeshUniform` is set, otherwise it is not set.

- Added an example illustrating the functionality.
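The steps above can be sketched as two small functions: one that turns the presence of the `Not*` marker components into `bool`s, and one that packs the receiver state into a `u32` flags field. The constant name, the bit position, and the function signatures are assumptions for this sketch; only the overall scheme comes from the list.

```rust
/// Bit in the mesh flags that marks a shadow receiver (bit position assumed).
pub const MESH_FLAGS_SHADOW_RECEIVER: u32 = 1 << 0;

/// Toy version of the extracted per-mesh data.
pub struct ExtractedMesh {
    pub casts_shadows: bool,
    pub receives_shadows: bool,
}

/// Meshes cast and receive shadows by default; the markers opt out.
pub fn extract(has_not_shadow_caster: bool, has_not_shadow_receiver: bool) -> ExtractedMesh {
    ExtractedMesh {
        casts_shadows: !has_not_shadow_caster,
        receives_shadows: !has_not_shadow_receiver,
    }
}

/// Flags value written to the mesh uniform for the shader to test.
pub fn mesh_flags(mesh: &ExtractedMesh) -> u32 {
    if mesh.receives_shadows {
        MESH_FLAGS_SHADOW_RECEIVER
    } else {
        0
    }
}
```

A mesh with neither marker gets `casts_shadows == true` and the receiver bit set, which is the "shadows by default" behavior the note below argues for.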

NOTE: I wanted to have the default state of a mesh as being a shadow caster and shadow receiver, hence the `Not*` components. However, I am on the fence about this. I don't want to have a negative performance impact, nor have people wondering why their custom meshes don't have shadows because they forgot to add `ShadowCaster` and `ShadowReceiver` components, but I also really don't like the double negatives the `Not*` approach incurs. What do you think?

Co-authored-by: Carter Anderson <mcanders1@gmail.com>

# Objective

- Allow the user to set the clear color when using the pipelined renderer

## Solution

- Add a `ClearColor` resource that can be added to the world to configure the clear color

## Remaining Issues

Currently the `ClearColor` resource is cloned from the app world to the render world every frame. There are two ways I can think of around this:

1. Figure out why `app_world.is_resource_changed::<ClearColor>()` always returns `true` in the `extract` step and fix it so that we are only updating the resource when it changes

2. Require the users to add the `ClearColor` resource to the render sub-app instead of the parent app. This is currently sub-optimal until we have labeled sub-apps, and probably a helper function on `App` such as `app.with_sub_app(RenderApp, |app| { ... })`. Even if we had that, I think it would be more than we want the user to have to think about. I don't think they should have to know about the render sub-app.

I think the first option is the best, but I could really use some help figuring out the nuance of why `is_resource_changed` is always returning true in that context.

# Objective

Restore the functionality of sprite atlases in the new renderer.

### **Note:** This PR relies on #2555

## Solution

Mostly just a copy paste of the existing sprite atlas implementation, however I unified the rendering between sprites and atlases.

Co-authored-by: Carter Anderson <mcanders1@gmail.com>

# Objective

Port bevy_gltf to the pipelined-rendering branch.

## Solution

crates/bevy_gltf has been copied and pasted into pipelined/bevy_gltf2 and modifications were made to work with the pipelined-rendering branch. Notably vertex tangents and vertex colours are not supported.

Co-authored-by: Carter Anderson <mcanders1@gmail.com>

# Objective

Noticed a warning when running tests:

```
> cargo test --workspace
warning: output filename collision.
The example target `change_detection` in package `bevy_ecs v0.5.0 (/bevy/crates/bevy_ecs)` has the same output filename as the example target `change_detection` in package `bevy v0.5.0 (/bevy)`.
Colliding filename is: /bevy/target/debug/examples/change_detection
The targets should have unique names.
Consider changing their names to be unique or compiling them separately.
This may become a hard error in the future; see <https://github.com/rust-lang/cargo/issues/6313>.
warning: output filename collision.
The example target `change_detection` in package `bevy_ecs v0.5.0 (/bevy/crates/bevy_ecs)` has the same output filename as the example target `change_detection` in package `bevy v0.5.0 (/bevy)`.
Colliding filename is: /bevy/target/debug/examples/change_detection.dSYM
The targets should have unique names.
Consider changing their names to be unique or compiling them separately.
This may become a hard error in the future; see <https://github.com/rust-lang/cargo/issues/6313>.
```

## Solution

I renamed example `change_detection` to `component_change_detection`

Adds a GitHub Action to check all local (non http://, https://) links in all Markdown files of the repository for liveness.

Fails if a file is not found.

# Goal

This should help maintaining the quality of the documentation.

# Impact

Takes ~24 seconds currently and found 3 dead links (pull requests already created).

# Dependent PRs

* #2064

* #2065

* #2066

# Info

See [markdown-link-check](https://github.com/marketplace/actions/markdown-link-check).

# Example output

```
FILE: ./docs/profiling.md
1 links checked.
FILE: ./docs/plugins_guidelines.md
37 links checked.
FILE: ./docs/linters.md
[✖] ../.github/linters/markdown-lint.yml → Status: 400 [Error: ENOENT: no such file or directory, access '/github/workspace/.github/linters/markdown-lint.yml'] {
  errno: -2,
  code: 'ENOENT',
  syscall: 'access',
  path: '/github/workspace/.github/linters/markdown-lint.yml'
}
```

# Improvements

* Can also be used to check external links, but fails because of:

* Too many requests (429) responses:

```
FILE: ./CHANGELOG.md
[✖] https://github.com/bevyengine/bevy/pull/1762 → Status: 429
```

* crates.io links respond 404

```
FILE: ./README.md
[✖] https://crates.io/crates/bevy → Status: 404
```

In response to #2023, here is a draft for a PR.

Fixes #2023

I've added an example to show how to use `WithBundle`, and also to test it out.

Right now there is a bug: If a bundle and a query are "the same", then it doesn't filter out what it needs to filter out.

Example:

```
Print component initated from bundle.
[examples/ecs/query_bundle.rs:57] x = Dummy( <========= This should not get printed
    111,
)
[examples/ecs/query_bundle.rs:57] x = Dummy(
    222,
)
Show all components
[examples/ecs/query_bundle.rs:50] x = Dummy(
    111,
)
[examples/ecs/query_bundle.rs:50] x = Dummy(
    222,
)
```

However, it behaves the right way if I add one more component to the bundle, so the query and the bundle don't look the same:

```
Print component initated from bundle.
[examples/ecs/query_bundle.rs:57] x = Dummy(
    222,
)
Show all components
[examples/ecs/query_bundle.rs:50] x = Dummy(
    111,
)
[examples/ecs/query_bundle.rs:50] x = Dummy(
    222,
)
```

I hope this helps. I'm definitely up for tinkering with this, and adding anything that I'm asked to add or change.

Co-authored-by: Carter Anderson <mcanders1@gmail.com>

If accepted, fixes #1694.

I wanted to explore system sets and run criteria a bit, so made an example expanding a bit on the snippets shown in the 0.5 release post.

Shows a couple of system sets, uses system labels, run criterion, and a use of the interesting `RunCriterion::pipe` functionality.

This PR adds an example on how to animate a shader by passing the global `time.seconds_since_startup()` to a component, and accessing that component inside the shader.

Hopefully this is the current proper solution, please let me know if it should be solved in another way.

Co-authored-by: Carter Anderson <mcanders1@gmail.com>

I was looking into "lower level" rendering and I saw no example on how to do that. Yet, I think it's something relevant to show, so I set up a simple example on how to do that. I hope it's welcome.

I'm not confident about the code and a review is definitely nice to have, especially because there are a few things that are not great.

Specifically, I think it would be nice to see how to render with a completely custom set of attributes (position and color, in this case), but I couldn't manage to get it working without normals and uv.

It makes sense if bevy Meshes need these two attributes, but I'm not sure about it.

Co-authored-by: Alessandro Re <ale@ale-re.net>

Co-authored-by: Carter Anderson <mcanders1@gmail.com>

This PR adds normal maps on top of PBR #1554. Once that PR lands, the changes should look simpler.

Edit: Turned out to be so little extra work, I added metallic/roughness texture too. And occlusion and emissive.

Co-authored-by: Carter Anderson <mcanders1@gmail.com>

This adds a new project for showing off Frustum Culling.

(Master runs this at sub 1 FPS while with the frustum culling it runs at 144 FPS on my system)

Short clip of the project running:

https://streamable.com/vvzh2u

This is a rebase of StarArawns PBR work from #261 with IngmarBitters work from #1160 cherry-picked on top.

I had to make a few minor changes to make some intermediate commits compile and the end result is not yet 100% what I expected, so there's a bit more work to do.

Co-authored-by: John Mitchell <toasterthegamer@gmail.com>

Co-authored-by: Ingmar Bitter <ingmar.bitter@gmail.com>

1. The instructions in the main README used to point users to the git main version. This has likely misdirected and confused many new users. Update to direct users to the latest release instead.

2. Rewrite the notice in the examples README to make it clearer and more concise, and to show the `latest` git branch.

See also: https://github.com/bevyengine/bevy-website/pull/109 for similar changes to the website and official book.

OK, here's my attempt at sprite flipping. There are a couple of points that I need review/help on, but I think the UX is about ideal:

```rust
.spawn(SpriteBundle {
    material: materials.add(texture_handle.into()),
    sprite: Sprite {
        // Flip the sprite along the x axis
        flip: SpriteFlip { x: true, y: false },
        ..Default::default()
    },
    ..Default::default()
});
```

Now for the issues. The big issue is that for some reason, when flipping the UVs on the sprite, there is a light "bleeding" or whatever you call it where the UV tries to sample past the texture boundary and ends up clipping. This is only noticed when resizing the window, though. You can see a screenshot below.

I am quite baffled why the texture sampling is overrunning like it is and could use some guidance if anybody knows what might be wrong.
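To make "flipping the UVs" concrete, here is a minimal CPU-side sketch of the mirroring operation. It is not the shader code from this PR, and the `flip_uv` function is hypothetical. It only shows the arithmetic and does not explain the bleeding described above.

```rust
/// Mirrors a UV coordinate around the centre of the [0, 1] range on the
/// requested axes. `flip_x` and `flip_y` play the role of the `x` and `y`
/// fields of `SpriteFlip`.
fn flip_uv(uv: [f32; 2], flip_x: bool, flip_y: bool) -> [f32; 2] {
    [
        if flip_x { 1.0 - uv[0] } else { uv[0] },
        if flip_y { 1.0 - uv[1] } else { uv[1] },
    ]
}
```

In this idealized form the mirrored coordinates stay inside [0, 1], so `flip_uv([0.0, 0.0], true, false)` maps the left edge to the right edge and nothing else changes.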

The other issue, which I just worked around, is that I had to remove the `#[render_resources(from_self)]` annotation from the Spritesheet because the `SpriteFlip` render resource wasn't being picked up properly in the shader when using it. I'm not sure what the cause of that was, but by removing the annotation and re-organizing the shader inputs accordingly the problem was fixed.

I'm not sure if this is the most efficient way to do this or if there is a better way, but I wanted to try it out if only for the learning experience. Let me know what you think!

It took me a little while to figure out how to use the `SystemParam` derive macro to easily create my own params. So I figured I'd add some docs and an example with what I learned.

- Fixed a bug in the `SystemParam` derive macro where it didn't detect the correct crate name when used in an example (no longer relevant, replaced by #1426 - see further)

- Added some doc comments and a short example code block in the docs for the `SystemParam` trait

- Added a more complete example with explanatory comments in examples

I have run the VSCode Extension [markdownlint](https://marketplace.visualstudio.com/items?itemName=DavidAnson.vscode-markdownlint) on all Markdown Files in the Repo.

The provided Rules are documented here: https://github.com/DavidAnson/markdownlint/blob/v0.23.1/doc/Rules.md

Rules I didn't follow/fix:

* MD024/no-duplicate-heading
  * Changelog: Here Heading will always repeat.
  * Examples Readme: Platform-specific documentation should be symmetrical.
* MD025/single-title
* MD026/no-trailing-punctuation
  * Caused by the ! in "Hello, World!".
* MD033/no-inline-html
  * The plugins_guidlines file does need HTML, so the shown badges aren't downscaled too much.
* ~~MD036/no-emphasis-as-heading:~~
  * ~~This Warning only Appears in the Github Issue Templates and can be ignored.~~
* ~~MD041/first-line-heading~~
  * ~~Only appears in the Readme for the AlienCake example Assets, which is unimportant.~~

---

I also sorted the Examples in the Readme and Cargo.toml by this order of priority:

* Topic/Folder
* Introductory Examples
* Alphabetical Order

The explanation for each case where the order isn't alphabetical:

* Diagnostics
  * log_diagnostics: Using the built-in Diagnostics is more important than creating your own.
* ECS (Entity Component System)
  * ecs_guide: The guide should be read before diving into other Features.
* Reflection
  * reflection: The basic explanation should be read before more advanced Topics.
* WASM Examples
  * hello_wasm: It's "Hello, World!".

* use `length_squared` for visible entities

* ortho projection 2d/3d different depth calculation

* use ScalingMode::FixedVertical for 3d ortho

* new example: 3d orthographic
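As an illustration of the `length_squared` item, here is a minimal sketch of ordering positions by distance to a camera without taking a square root. It assumes the value is only used for comparison. The function names and the plain `[f32; 3]` positions are hypothetical and not the types used in this PR.

```rust
fn length_squared(v: [f32; 3]) -> f32 {
    v[0] * v[0] + v[1] * v[1] + v[2] * v[2]
}

fn distance_squared(a: [f32; 3], b: [f32; 3]) -> f32 {
    length_squared([a[0] - b[0], a[1] - b[1], a[2] - b[2]])
}

/// Sorts positions nearest-first. Squaring preserves the order of
/// non-negative distances, so the square root can be skipped.
fn sort_nearest_first(camera: [f32; 3], positions: &mut [[f32; 3]]) {
    positions.sort_by(|a, b| {
        distance_squared(camera, *a).total_cmp(&distance_squared(camera, *b))
    });
}
```

The resulting order is the same as sorting by true distance; only the per-entity cost differs.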

- added some missing examples

- changed the order of a few examples (app/empty came after app/empty_default for example)

- added a table with the examples for Android and iOS, like it was done for wasm

see [`issue 1326`](https://github.com/bevyengine/bevy/issues/1326)

* move print diagnostics to log

* entity count diagnostic

* asset count diagnostic

* remove useless `pub`s

* use `BTreeMap` instead of `HashMap`

* get entity count from world

* keep ordered list of diagnostics
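A minimal sketch of why a `BTreeMap` helps when diagnostics should be listed in a stable order: it iterates in sorted key order, whereas `HashMap` iteration order is unspecified. The `String` keys, `f64` values, and function names are assumptions for illustration; the key and value types this PR actually uses are not shown here.

```rust
use std::collections::BTreeMap;

/// Builds a small set of made-up diagnostics, inserted out of order.
fn sample_diagnostics() -> BTreeMap<String, f64> {
    let mut diagnostics = BTreeMap::new();
    diagnostics.insert("fps".to_string(), 60.0);
    diagnostics.insert("entity_count".to_string(), 128.0);
    diagnostics.insert("asset_count".to_string(), 12.0);
    diagnostics
}

/// Returns diagnostic names in the order a logger would print them.
/// With a `BTreeMap` this is always sorted by key, whatever the
/// insertion order was.
fn ordered_names(diagnostics: &BTreeMap<String, f64>) -> Vec<&str> {
    diagnostics.keys().map(String::as_str).collect()
}
```

With a `HashMap`, the same loop could print the entries in a different order from one run to the next.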

* Add force touches, fix ui focus system and touch screen system

* Fix examples README. Update rodio with Android support. Add Android build CI

* Alter android metadata in root Cargo.toml