# Objective

- Add morph targets to `bevy_pbr` (closes #5756) & load them from glTF

- Supersedes #3722

- Fixes #6814

[Morph targets][1] (also known as shape interpolation, shape keys, or
blend shapes) allow animating individual vertices with fine-grained
control. This is typically used for facial expressions. By specifying
multiple poses as vertex offsets, and providing a weight for each
pose, it is possible to define surprisingly realistic transitions
between poses. Blending between multiple poses also allows composition.
Morph targets are part of the [glTF standard][2] and are a feature of
Unity, Unreal, and Babylon.js, so it is only natural to implement them
in Bevy.
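
As a rough illustration of the blending described above, here is a minimal CPU-side sketch in plain Rust. It is not the code in this PR (which does the work in the shader); the names `Offsets` and `blend_positions` are hypothetical, and only positions are handled.

```rust
/// One morph target (pose): a per-vertex position offset from the base mesh.
type Offsets = Vec<[f32; 3]>;

/// Blend a base mesh with weighted morph targets:
/// `result[v] = base[v] + sum_i(weights[i] * targets[i][v])`.
///
/// Assumes every target has at least as many vertices as `base`.
fn blend_positions(base: &[[f32; 3]], targets: &[Offsets], weights: &[f32]) -> Vec<[f32; 3]> {
    base.iter()
        .enumerate()
        .map(|(v, &position)| {
            let mut out = position;
            // Pair each target with its weight; unmatched targets or weights are ignored.
            for (target, &weight) in targets.iter().zip(weights) {
                for axis in 0..3 {
                    out[axis] += weight * target[v][axis];
                }
            }
            out
        })
        .collect()
}
```

Because each pose contributes independently, setting several weights at once composes the poses, which is the composition property mentioned above.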

## Solution

This implementation of morph targets uses a 3D texture where each pixel
is a component of an animated attribute. Each layer is a different
target. We use a 2D texture for each target, because the number of
attributes × components × animated vertices is expected to always exceed
the maximum pixel row size limit of WebGL2. The CPU side closely follows
the way skinning is implemented, while on the GPU side, the shader morph
target implementation is a relatively trivial detail.
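
To make the row-size argument concrete, here is a small sketch of how a linear component index could be wrapped into a 2D layer. The function names, the component ordering and the `row_width` parameter are assumptions for illustration; the exact layout used by this PR may differ.

```rust
/// Map one component of one vertex to a texel inside a target's 2D layer.
/// `row_width` stands in for the maximum texture width (an assumed parameter).
fn texel_coords(vertex: u32, component: u32, components_per_vertex: u32, row_width: u32) -> (u32, u32) {
    // Linear index, as if all components were laid out in one long row.
    let linear = vertex * components_per_vertex + component;
    // Wrap the long row into 2D so that no row exceeds `row_width`.
    (linear % row_width, linear / row_width)
}

/// Number of rows needed to hold every component of `vertex_count` vertices.
fn layer_height(vertex_count: u32, components_per_vertex: u32, row_width: u32) -> u32 {
    let total = vertex_count * components_per_vertex;
    (total + row_width - 1) / row_width // ceiling division
}
```

With position, normal and tangent offsets stored as three components each (9 per vertex, an assumption), even a modest mesh overflows a single row, which is why each target needs a full 2D layer.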

We add an optional `morph_texture` to the `Mesh` struct. The

`morph_texture` is built through a method that accepts an iterator over

attribute buffers.

The user-accessible `MorphWeights` component controls the blend of
poses used by mesh instances (so that multiple copies of the same mesh
may have different weights). All the weights are uploaded to a uniform
buffer of 256 `f32`. We limit to 16 poses per mesh, and a total of 256
poses.

More literature:

* Old Babylon.js implementation (vertex attribute-based):
  https://www.eternalcoding.com/dev-log-1-morph-targets/
* Babylon.js implementation (similar to ours):
  https://www.youtube.com/watch?v=LBPRmGgU0PE
* GPU gems 3:
  https://developer.nvidia.com/gpugems/gpugems3/part-i-geometry/chapter-3-directx-10-blend-shapes-breaking-limits
* Development discord thread:
  https://discord.com/channels/691052431525675048/1083325980615114772

https://user-images.githubusercontent.com/26321040/231181046-3bca2ab2-d4d9-472e-8098-639f1871ce2e.mp4

https://github.com/bevyengine/bevy/assets/26321040/d2a0c544-0ef8-45cf-9f99-8c3792f5a258

## Acknowledgements

* Thanks to `storytold` for sponsoring the feature

* Thanks to `superdump` and `james7132` for guidance and help figuring

out stuff

## Future work

- Handling of fewer or more attributes (e.g. animated UVs, animated
  arbitrary attributes)
- Dynamic pose allocation (so that zero-weighted poses aren't uploaded
  to the GPU, for example, which would allow many more total poses)

- Better animation API, see #8357

----

## Changelog

- Add morph targets to bevy meshes

- Support up to 64 poses per mesh, for meshes of up to 116508 vertices
  each. Animation is currently strictly limited to the position, normal
  and tangent attributes.

- Load a morph target using `Mesh::set_morph_targets`

- Add `VisitMorphTargets` and `VisitMorphAttributes` traits to

`bevy_render`, this allows defining morph targets (a fairly complex and

nested data structure) through iterators (ie: single copy instead of

passing around buffers), see documentation of those traits for details

- Add `MorphWeights` component exported by `bevy_render`
  - `MorphWeights` controls a mesh's morph target weights, blending
    between various poses defined as morph targets.
  - `MorphWeights` are directly inherited by direct children (single level
    of hierarchy) of an entity. This allows controlling several mesh
    primitives through a unique entity _as per GLTF spec_.

- Add `MorphTargetNames` component, naming each index of loaded morph
  targets.

- Load morph targets weights and buffers in `bevy_gltf`

- Handle morph target animations in `bevy_animation` (previously, it
  was a `warn!` log)

- Add the `MorphStressTest.gltf` asset for morph targets testing, taken

from the glTF samples repo, CC0.

- Add morph target manipulation to `scene_viewer`

- Separate the animation code in `scene_viewer` from the rest of the

code, reducing `#[cfg(feature)]` noise

- Add the `morph_targets.rs` example to show off how to manipulate morph
  targets, loading `MorphStressTest.gltf`

## Migration Guide

- (very specialized, unlikely to be touched by 3rd parties)

- `MeshPipeline` now has a single `mesh_layouts` field rather than

separate `mesh_layout` and `skinned_mesh_layout` fields. You should

handle all possible mesh bind group layouts in your implementation

- You should also properly handle the new `MORPH_TARGETS` shader def and
  mesh pipeline key. A new function is exposed to make this easier:
  `setup_morph_and_skinning_defs`

- The `MeshBindGroup` is now `MeshBindGroups`, cached bind groups are

now accessed through the `get` method.

[1]: https://en.wikipedia.org/wiki/Morph_target_animation

[2]: https://registry.khronos.org/glTF/specs/2.0/glTF-2.0.html#morph-targets

---------

Co-authored-by: François <mockersf@gmail.com>

Co-authored-by: Carter Anderson <mcanders1@gmail.com>

# Objective

- Better consistency with `add_systems`.

- Deprecating `add_plugin` in favor of a more powerful `add_plugins`.

- Allow passing `Plugin` to `add_plugins`.

- Allow passing tuples to `add_plugins`.

## Solution

- `App::add_plugins` now takes an `impl Plugins` parameter.

- `App::add_plugin` is deprecated.

- `Plugins` is a new sealed trait that is only implemented for `Plugin`,

`PluginGroup` and tuples over `Plugins`.

- All examples, benchmarks and tests are changed to use `add_plugins`,

using tuples where appropriate.

---

## Changelog

### Changed

- `App::add_plugins` now accepts all types that implement `Plugins`,
  which is implemented for:
  - Types that implement `Plugin`.
  - Types that implement `PluginGroup`.
  - Tuples (up to 16 elements) over types that implement `Plugins`.

- Deprecated `App::add_plugin` in favor of `App::add_plugins`.

## Migration Guide

- Replace `app.add_plugin(plugin)` calls with `app.add_plugins(plugin)`.
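
The idea of one `add_plugins` entry point accepting either a single plugin or a tuple can be sketched with a toy model in plain Rust. This is not Bevy's implementation: `App`, `Plugin`, `PluginMarker` and `Named` below are stand-ins, the trait is not sealed, `PluginGroup` is left out, and only 2-tuples are covered.

```rust
/// Toy stand-in for `App`; it only records which plugins were built.
#[derive(Default)]
struct App {
    built: Vec<&'static str>,
}

/// Toy stand-in for the `Plugin` trait.
trait Plugin {
    fn build(&self, app: &mut App);
}

/// Marker type that keeps the single-plugin impl distinct from the tuple impl.
struct PluginMarker;

/// Anything `add_plugins` accepts. `Marker` exists only to avoid overlapping impls.
trait Plugins<Marker> {
    fn add_to_app(self, app: &mut App);
}

impl<P: Plugin> Plugins<PluginMarker> for P {
    fn add_to_app(self, app: &mut App) {
        self.build(app);
    }
}

// A tuple of `Plugins` is itself `Plugins`, so tuples can nest.
impl<A, B, MA, MB> Plugins<(MA, MB)> for (A, B)
where
    A: Plugins<MA>,
    B: Plugins<MB>,
{
    fn add_to_app(self, app: &mut App) {
        self.0.add_to_app(app);
        self.1.add_to_app(app);
    }
}

impl App {
    fn add_plugins<M>(&mut self, plugins: impl Plugins<M>) -> &mut Self {
        plugins.add_to_app(self);
        self
    }
}

/// Example plugin that records its name when built.
struct Named(&'static str);

impl Plugin for Named {
    fn build(&self, app: &mut App) {
        app.built.push(self.0);
    }
}
```

In this model, `app.add_plugins(Named("a"))` and `app.add_plugins((Named("b"), Named("c")))` both type-check, and the marker parameter is inferred at the call site.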

---------

Co-authored-by: Carter Anderson <mcanders1@gmail.com>

# Objective

- Fix broken normals when the NormalPrepass is enabled

## Solution

- Don't use the normal prepass for the world_normal

- Only load the normal prepass
  - when MSAA is disabled
  - for opaque or alpha mask meshes, and only use it for N, not
    world_normal

# Objective

- Make #8015 easier to review;

## Solution

- This commit contains changes not directly related to transmission

required by #8015, in easier-to-review, one-change-per-commit form.

---

## Changelog

### Fixed

- Clear motion vector prepass using `0.0` instead of `1.0`, to avoid TAA

artifacts on transparent objects against the background;

### Added

- The `E` mathematical constant is now available for use in shaders,

exposed under `bevy_pbr::utils`;

- A new `TAA` shader def is now available, for conditionally enabling

shader logic via `#ifdef` when TAA is enabled; (e.g. for jittering

texture samples)

- A new `FallbackImageZero` resource is introduced, for when a fallback

image filled with zeroes is required;

- A new `RenderPhase<I>::render_range()` method is introduced, for

render phases that need to render their items in multiple parceled out

“steps”;

### Changed

- The `MainTargetTextures` struct now holds both `Texture` and

`TextureViews` for the main textures;

- The fog shader functions under `bevy_pbr::fog` now take a `Fog`
  structure as their first argument, instead of relying on the global
  `fog` uniform;

- The main textures can now be used as copy sources;

## Migration Guide

- `ViewTarget::main_texture()` and `ViewTarget::main_texture_other()`

now return `&Texture` instead of `&TextureView`. If you were relying on

these methods, replace your usage with

`ViewTarget::main_texture_view()` and

`ViewTarget::main_texture_other_view()`, respectively;

- `ViewTarget::sampled_main_texture()` now returns `Option<&Texture>`

instead of an `Option<&TextureView>`. If you were relying on this method,

replace your usage with `ViewTarget::sampled_main_texture_view()`;

- The `apply_fog()`, `linear_fog()`, `exponential_fog()`,

`exponential_squared_fog()` and `atmospheric_fog()` functions now take a

configurable `Fog` struct. If you were relying on them, update your

usage by adding the global `fog` uniform as their first argument;

# Objective

- Right now we can't really benefit from [early depth

testing](https://www.khronos.org/opengl/wiki/Early_Fragment_Test) in our

PBR shader because it includes codepaths with `discard`, even for

situations where they are not necessary.

## Solution

- This PR introduces a new `MeshPipelineKey` and shader def,

`MAY_DISCARD`;

- All possible material/mesh options that may result in `discard`s
  being needed must set `MAY_DISCARD` ahead of time:
  - Right now, this is only `AlphaMode::Mask(f32)`, but in the future
    might include other options/effects; (e.g. one effect I'm personally
    interested in is bayer dither pseudo-transparency for LOD transitions
    of opaque meshes)
- Shader codepaths that can `discard` are guarded by an
  `#ifdef MAY_DISCARD` preprocessor directive:
  - Right now, this is just one branch in `alpha_discard()`;

- If `MAY_DISCARD` is _not_ set, the `@early_depth_test` attribute is

added to the PBR fragment shader. This is a not yet documented, possibly

non-standard WGSL extension I found browsing Naga's source code. [I

opened a PR to document it

there](https://github.com/gfx-rs/naga/pull/2132). My understanding is

that for backends where this attribute is supported, it will force an

explicit opt-in to early depth test. (e.g. via

`layout(early_fragment_tests) in;` in GLSL)
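
The decision described above can be summarised with a small model in plain Rust. The types here (`AlphaMode`, `FragmentConfig`, `fragment_config`) are simplified stand-ins, not the actual pipeline specialization code; they only show how one flag drives both the shader def and the early depth test opt-in.

```rust
/// Simplified stand-in for the alpha modes relevant here.
#[derive(Clone, Copy)]
enum AlphaMode {
    Opaque,
    Mask(f32),
    Blend,
}

/// What gets configured for the fragment shader of one material.
#[derive(Debug, PartialEq)]
struct FragmentConfig {
    shader_defs: Vec<&'static str>,
    early_depth_test: bool,
}

fn fragment_config(alpha_mode: AlphaMode) -> FragmentConfig {
    // Right now only alpha masking needs `discard`.
    let may_discard = matches!(alpha_mode, AlphaMode::Mask(_));
    FragmentConfig {
        shader_defs: if may_discard { vec!["MAY_DISCARD"] } else { Vec::new() },
        // Explicit early depth testing is requested only when no `discard` can run.
        early_depth_test: !may_discard,
    }
}
```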

## Caveats

- I included `@early_depth_test` for the sake of us being explicit, and

avoiding the need for the driver to be “smart” about enabling this

feature. That way, if we make a mistake and include a `discard`

unguarded by `MAY_DISCARD`, it will either produce errors or noticeable

visual artifacts so that we'll catch early, instead of causing a

performance regression.

- I'm not sure explicit early depth test is supported on the naga Metal
  backend, which is what I'm currently using, so I can't really test
  explicitly enabling early depth test. I would like others with
  Vulkan/GL hardware to test it if possible;

- I would like some guidance on how to measure/verify the performance

benefits of this;

- If I understand it correctly, this, or _something like this_ is needed

to fully reap the performance gains enabled by #6284;

- This will _most definitely_ conflict with #6284 and #6644. I can fix
  the conflicts as needed, depending on whether, and in which order, they
  end up being merged.

---

## Changelog

### Changed

- Early depth tests are now enabled whenever possible for meshes using

`StandardMaterial`, reducing the number of fragments evaluated for

scenes with lots of occlusions.

# Objective

- Support WebGPU

- alternative to #5027 that doesn't need any async / await

- fixes #8315

- Surprise fix #7318

## Solution

### For async renderer initialisation

- Update the plugin lifecycle:
  - app builds the plugin
    - calls `plugin.build`
    - registers the plugin
  - app starts the event loop
    - event loop waits for `ready` of all registered plugins in the same
      order
      - returns `true` by default
    - then call all `finish` then all `cleanup` in the same order as
      registered
    - then execute the schedule

In the case of the renderer, to avoid anything async:

- building the renderer plugin creates a detached task that will send

back the initialised renderer through a mutex in a resource

- `ready` will wait for the renderer to be present in the resource

- `finish` will take that renderer and place it in the resources
  expected by other plugins
- the `finish` of other plugins (that expect the renderer to be
  available) is then called, and they are able to set up their pipelines
- `cleanup` is called; the only custom one is still for pipeline rendering
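
The ordering above can be sketched with a toy model in plain Rust. `App`, `Plugin`, `run_lifecycle`, `SlowPlugin` and `FastPlugin` are illustrative stand-ins rather than the real types, and the busy-wait loop stands in for the event loop polling `ready`.

```rust
use std::cell::Cell;

/// Toy stand-in for `App`; it only logs lifecycle calls.
#[derive(Default)]
struct App {
    log: Vec<String>,
}

/// Toy stand-in for `Plugin` with the lifecycle hooks described above.
trait Plugin {
    fn name(&self) -> &'static str;
    fn build(&self, app: &mut App) {
        app.log.push(format!("build {}", self.name()));
    }
    /// Polled until it returns `true`; `true` by default.
    fn ready(&self, _app: &App) -> bool {
        true
    }
    fn finish(&self, app: &mut App) {
        app.log.push(format!("finish {}", self.name()));
    }
    fn cleanup(&self, app: &mut App) {
        app.log.push(format!("cleanup {}", self.name()));
    }
}

/// Build everything, wait for every `ready`, then run all `finish`, then all `cleanup`.
fn run_lifecycle(app: &mut App, plugins: &[Box<dyn Plugin>]) {
    for plugin in plugins {
        plugin.build(app);
    }
    // Poll in registration order until every plugin reports ready.
    while !plugins.iter().all(|plugin| plugin.ready(app)) {
        std::thread::yield_now();
    }
    for plugin in plugins {
        plugin.finish(app);
    }
    for plugin in plugins {
        plugin.cleanup(app);
    }
}

/// Needs a few polls before it is ready, like a renderer initialised by a detached task.
struct SlowPlugin {
    polls_left: Cell<u32>,
}

impl Plugin for SlowPlugin {
    fn name(&self) -> &'static str {
        "slow"
    }
    fn ready(&self, _app: &App) -> bool {
        let left = self.polls_left.get();
        if left == 0 {
            true
        } else {
            self.polls_left.set(left - 1);
            false
        }
    }
}

/// Relies on every default hook.
struct FastPlugin;

impl Plugin for FastPlugin {
    fn name(&self) -> &'static str {
        "fast"
    }
}
```

The point of the split is that no `finish` runs until every plugin is ready, so a plugin's `finish` can rely on resources that another plugin only obtains asynchronously.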

### For WebGPU support

- update the `build-wasm-example` script to support passing `--api

webgpu` that will build the example with WebGPU support

- the webgl2 feature was always enabled when building for wasm. It's now
  in the default feature list and enabled on all platforms, so any check
  for this feature must also check that the target_arch is `wasm32`
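
A minimal sketch of such a combined check, assuming the Cargo feature is named `webgl` (the exact feature name is an assumption here) and using a hypothetical helper function:

```rust
/// Returns true only when the WebGL2 code path should be compiled in.
/// The feature name `webgl` is assumed for illustration.
fn webgl2_path_enabled() -> bool {
    // The feature alone is no longer enough, since it is now enabled by
    // default on every platform; the target architecture must match too.
    cfg!(all(feature = "webgl", target_arch = "wasm32"))
}
```

The same `all(...)` predicate can be used in `#[cfg(...)]` attributes on items.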

---

## Migration Guide

- `Plugin::setup` has been renamed `Plugin::cleanup`

- `Plugin::finish` has been added, and plugins adding pipelines should

do it in this function instead of `Plugin::build`

```rust
// Before
impl Plugin for MyPlugin {
    fn build(&self, app: &mut App) {
        app.init_resource::<MyResource>()
            .add_systems(Update, my_system);

        let render_app = match app.get_sub_app_mut(RenderApp) {
            Ok(render_app) => render_app,
            Err(_) => return,
        };

        render_app
            .init_resource::<RenderResourceNeedingDevice>()
            .init_resource::<OtherRenderResource>();
    }
}

// After
impl Plugin for MyPlugin {
    fn build(&self, app: &mut App) {
        app.init_resource::<MyResource>()
            .add_systems(Update, my_system);

        let render_app = match app.get_sub_app_mut(RenderApp) {
            Ok(render_app) => render_app,
            Err(_) => return,
        };

        render_app
            .init_resource::<OtherRenderResource>();
    }

    // Resources that need the render device are initialised here,
    // once the renderer is available.
    fn finish(&self, app: &mut App) {
        let render_app = match app.get_sub_app_mut(RenderApp) {
            Ok(render_app) => render_app,
            Err(_) => return,
        };

        render_app
            .init_resource::<RenderResourceNeedingDevice>();
    }
}
```

# Objective

- Updated to wgpu 0.16.0 and wgpu-hal 0.16.0

---

## Changelog

1. Upgrade wgpu to 0.16.0 and wgpu-hal to 0.16.0

2. Fix the error in native when using a filterable

`TextureSampleType::Float` on a multisample `BindingType::Texture`.

([https://github.com/gfx-rs/wgpu/pull/3686](https://github.com/gfx-rs/wgpu/pull/3686))

---------

Co-authored-by: François <mockersf@gmail.com>

# Objective

- We support enabling a normal prepass, but the main pass never actually

uses it and recomputes the normals in the main pass. This isn't ideal

since it's doing redundant work.

## Solution

- Use the normal texture from the prepass in the main pass

## Notes

~~I used `NORMAL_PREPASS_ENABLED` as a shader_def because `NORMAL_PREPASS` is currently used to signify that it is running in the prepass, while this shader_def needs to indicate that the prepass is done and the normal prepass was run before. I'm not sure if there's a better way to name this.~~

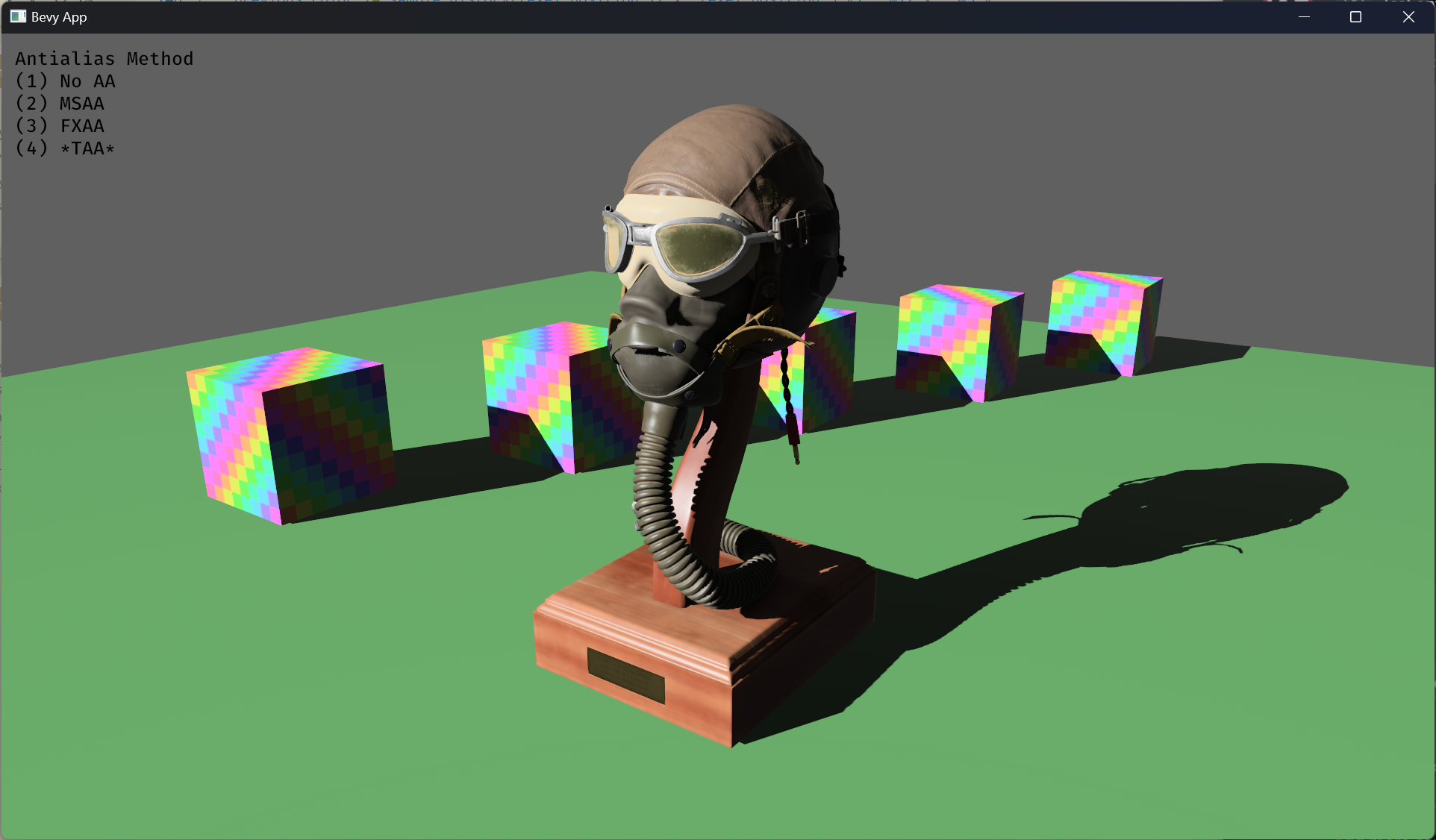

# Objective

- Implement an alternative antialias technique

- TAA scales based off of view resolution, not geometry complexity

- TAA filters textures, firefly pixels, and other aliasing not covered

by MSAA

- TAA additionally will reduce noise / increase quality in future

stochastic rendering techniques

- Closes https://github.com/bevyengine/bevy/issues/3663

## Solution

- Add a temporal jitter component

- Add a motion vector prepass

- Add a TemporalAntialias component and plugin

- Combine existing MSAA and FXAA examples and add TAA

## Followup Work

- Prepass motion vector support for skinned meshes

- Move uniforms needed for motion vectors into a separate bind group,

instead of using different bind group layouts

- Reuse previous frame's GPU view buffer for motion vectors, instead of

recomputing

- Mip biasing for sharper textures, and/or unjitter texture UVs

https://github.com/bevyengine/bevy/issues/7323

- Compute shader for better performance

- Investigate FSR techniques

- Historical depth based disocclusion tests, for geometry disocclusion

- Historical luminance/hue based tests, for shading disocclusion

- Pixel "locks" to reduce blending rate / revamp history confidence

mechanism

- Orthographic camera support for TemporalJitter

- Figure out COD's 1-tap bicubic filter

---

## Changelog

- Added MotionVectorPrepass and TemporalJitter

- Added TemporalAntialiasPlugin, TemporalAntialiasBundle, and

TemporalAntialiasSettings

---------

Co-authored-by: IceSentry <c.giguere42@gmail.com>

Co-authored-by: IceSentry <IceSentry@users.noreply.github.com>

Co-authored-by: Robert Swain <robert.swain@gmail.com>

Co-authored-by: Daniel Chia <danstryder@gmail.com>

Co-authored-by: robtfm <50659922+robtfm@users.noreply.github.com>

Co-authored-by: Brandon Dyer <brandondyer64@gmail.com>

Co-authored-by: Edgar Geier <geieredgar@gmail.com>

# Objective

revert combining pipelines for AlphaMode::Blend and AlphaMode::Premultiplied & Add

the recent blend state pr changed `AlphaMode::Blend` to use a blend state of `Blend::PREMULTIPLIED_ALPHA_BLENDING`, and recovered the original behaviour by multiplying colour by alpha in the standard material's fragment shader.

this had some advantages (specifically it means more material instances can be batched together in future), but this also means that custom materials that specify `AlphaMode::Blend` now get a premultiplied blend state, so they must also multiply colour by alpha.

## Solution

revert that combination to preserve 0.9 behaviour for custom materials with AlphaMode::Blend.

# Objective

- Fixes #4372.

## Solution

- Use the prepass shaders for the shadow passes.

- Move `DEPTH_CLAMP_ORTHO` from `ShadowPipelineKey` to `MeshPipelineKey` and the associated clamp operation from `depth.wgsl` to `prepass.wgsl`.

- Remove `depth.wgsl`.

- Replace `ShadowPipeline` with `ShadowSamplers`.

Instead of running the custom `ShadowPipeline` we run the `PrepassPipeline` with the `DEPTH_PREPASS` flag and additionally the `DEPTH_CLAMP_ORTHO` flag for directional lights as well as the `ALPHA_MASK` flag for materials that use `AlphaMode::Mask(_)`.

# Objective

- Use the prepass textures in webgl

## Solution

- Bind the prepass textures even when using webgl, but only if msaa is disabled

- Also did some refactors to centralize how textures are bound, similar to the EnvironmentMapLight PR

- ~~Also did some refactors of the example to make it work in webgl~~

- ~~To make the example work in webgl, I needed to use a sampler for the depth texture, the resulting code looks a bit weird, but it's simple enough and I think it's worth it to show how it works when using webgl~~

# Objective

Support the following syntax for adding systems:

```rust
App::new()
    .add_system(setup.on_startup())
    .add_systems((
        show_menu.in_schedule(OnEnter(GameState::Paused)),
        menu_system.in_set(OnUpdate(GameState::Paused)),
        hide_menu.in_schedule(OnExit(GameState::Paused)),
    ))
```

## Solution

Add the traits `IntoSystemAppConfig{s}`, which provide the extension methods necessary for configuring which schedule a system belongs to. These extension methods return `IntoSystemAppConfig{s}`, which `App::add_system{s}` uses to choose which schedule to add systems to.

---

## Changelog

+ Added the extension methods `in_schedule(label)` and `on_startup()` for configuring the schedule a system belongs to.

## Future Work

* Replace all uses of `add_startup_system` in the engine.

* Deprecate this method

# Objective

- ambiguities bad

## Solution

- solve ambiguities

- by either ignoring (e.g. on `queue_mesh_view_bind_groups` since `LightMeta` access is different)

- by introducing a dependency (`prepare_windows -> prepare_*` because the latter use the fallback Msaa)

- make `prepare_assets` public so that we can do a proper `.after`

# Objective

- Fix the environment map shader not working under webgl due to textureNumLevels() not being supported

- Fixes https://github.com/bevyengine/bevy/issues/7722

## Solution

- Instead of using textureNumLevels(), put an extra field in the GpuLights uniform to store the mip count

# Objective

Splits tone mapping from https://github.com/bevyengine/bevy/pull/6677 into a separate PR.

Address https://github.com/bevyengine/bevy/issues/2264.

Adds tone mapping options:

- None: Bypasses tonemapping for instances where users want colors output to match those set.

- Reinhard

- Reinhard Luminance: Bevy's existing tonemapping

- [ACES](https://github.com/TheRealMJP/BakingLab/blob/master/BakingLab/ACES.hlsl) (Fitted version, based on the same implementation that Godot 4 uses) see https://github.com/bevyengine/bevy/issues/2264

- [AgX](https://github.com/sobotka/AgX)

- SomewhatBoringDisplayTransform

- TonyMcMapface

- Blender Filmic
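
For readers unfamiliar with the two Reinhard variants, here is a sketch of the textbook formulas in plain Rust. This is not the shader code from this PR; the function names are illustrative and the luminance weights are the common Rec. 709 ones, which is an assumption about what the shader uses.

```rust
/// Per-channel Reinhard: compresses each channel independently into [0, 1).
fn reinhard(color: [f32; 3]) -> [f32; 3] {
    color.map(|c| c / (1.0 + c))
}

/// Relative luminance using Rec. 709 weights.
fn luminance(color: [f32; 3]) -> f32 {
    0.2126 * color[0] + 0.7152 * color[1] + 0.0722 * color[2]
}

/// Luminance-based Reinhard: compress only the luminance, then rescale the
/// colour so the ratios between channels are preserved.
fn reinhard_luminance(color: [f32; 3]) -> [f32; 3] {
    let l_old = luminance(color);
    if l_old <= 0.0 {
        return [0.0; 3]; // avoid dividing by zero for black
    }
    let l_new = l_old / (1.0 + l_old);
    color.map(|c| c * (l_new / l_old))
}
```

The per-channel version desaturates bright colours because each channel saturates separately, while the luminance version keeps channel ratios intact.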

This PR also adds support for EXR images so they can be used to compare tonemapping options with reference images.

## Migration Guide

- Tonemapping is now an enum with NONE and the various tonemappers.

- The DebandDither is now a separate component.

Co-authored-by: JMS55 <47158642+JMS55@users.noreply.github.com>

# Objective

Allow creating pipelines that use push constants. Fixes #4825

As of right now, trying to call `RenderPass::set_push_constants` will trigger the following error:

```

thread 'main' panicked at 'wgpu error: Validation Error

Caused by:

In a RenderPass

note: encoder = `<CommandBuffer-(0, 59, Vulkan)>`

In a set_push_constant command

provided push constant is for stage(s) VERTEX | FRAGMENT | VERTEX_FRAGMENT, however the pipeline layout has no push constant range for the stage(s) VERTEX | FRAGMENT | VERTEX_FRAGMENT

```

## Solution

Add a field `push_constant_ranges` to `RenderPipelineDescriptor` and `ComputePipelineDescriptor`.

This PR supersedes #4908 which now contains merge conflicts due to significant changes to `bevy_render`.

Meanwhile, this PR also makes the `layout` field of `RenderPipelineDescriptor` and `ComputePipelineDescriptor` non-optional. If the user does not need to specify the bind group layouts, they can simply supply an empty vector here. There is no need for it to be optional.

---

## Changelog

- Add a field `push_constant_ranges` to `RenderPipelineDescriptor` and `ComputePipelineDescriptor`

- Made the `layout` field of `RenderPipelineDescriptor` and `ComputePipelineDescriptor` non-optional.

## Migration Guide

- Add `push_constant_ranges: Vec::new()` to every `RenderPipelineDescriptor` and `ComputePipelineDescriptor`

- Unwrap the optional values on the `layout` field of `RenderPipelineDescriptor` and `ComputePipelineDescriptor`. If the descriptor has no layout, supply an empty vector.
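
The shape of the migration can be shown with stand-in types in plain Rust. `BindGroupLayout`, `PushConstantRange`, `PipelineDescriptor` and `migrate` below are simplified models for illustration only; the real descriptors have many more fields.

```rust
use std::ops::Range;

/// Stand-in for a bind group layout handle.
#[derive(Debug, Clone, PartialEq)]
struct BindGroupLayout(&'static str);

/// Stand-in for a push constant range (shader stages omitted).
#[derive(Debug, Clone, PartialEq)]
struct PushConstantRange {
    /// Byte range within the push constant block.
    range: Range<u32>,
}

/// Only the two fields discussed above are modelled.
#[derive(Debug, Default, PartialEq)]
struct PipelineDescriptor {
    /// Previously optional; an empty vector now means "no bind group layouts".
    layout: Vec<BindGroupLayout>,
    /// New field; empty when the pipeline uses no push constants.
    push_constant_ranges: Vec<PushConstantRange>,
}

/// Convert an old-style optional layout into the new non-optional form.
fn migrate(old_layout: Option<Vec<BindGroupLayout>>) -> PipelineDescriptor {
    PipelineDescriptor {
        layout: old_layout.unwrap_or_default(),
        push_constant_ranges: Vec::new(),
    }
}
```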

Co-authored-by: Zhixing Zhang <me@neoto.xin>

(Before)

(After)

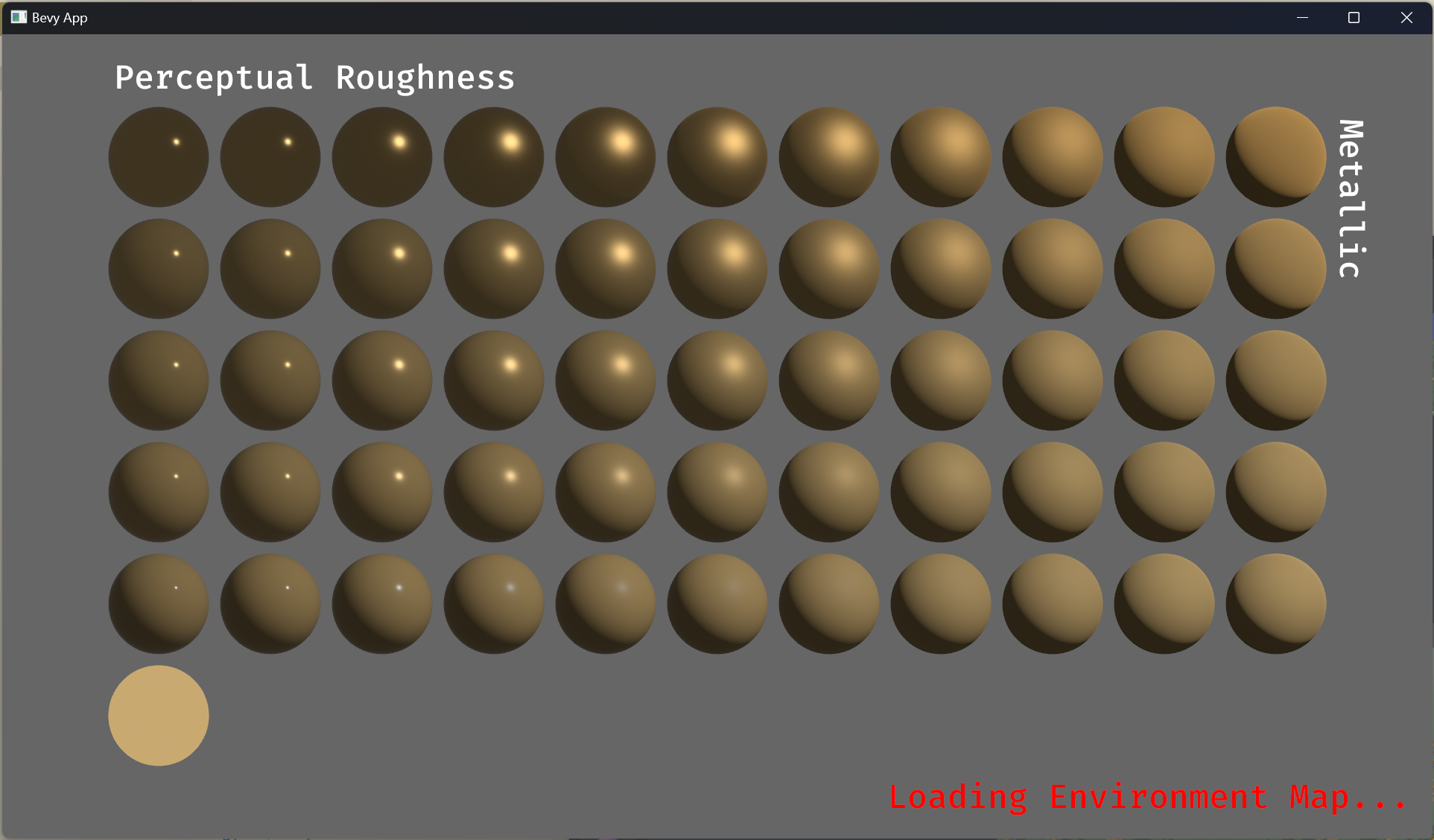

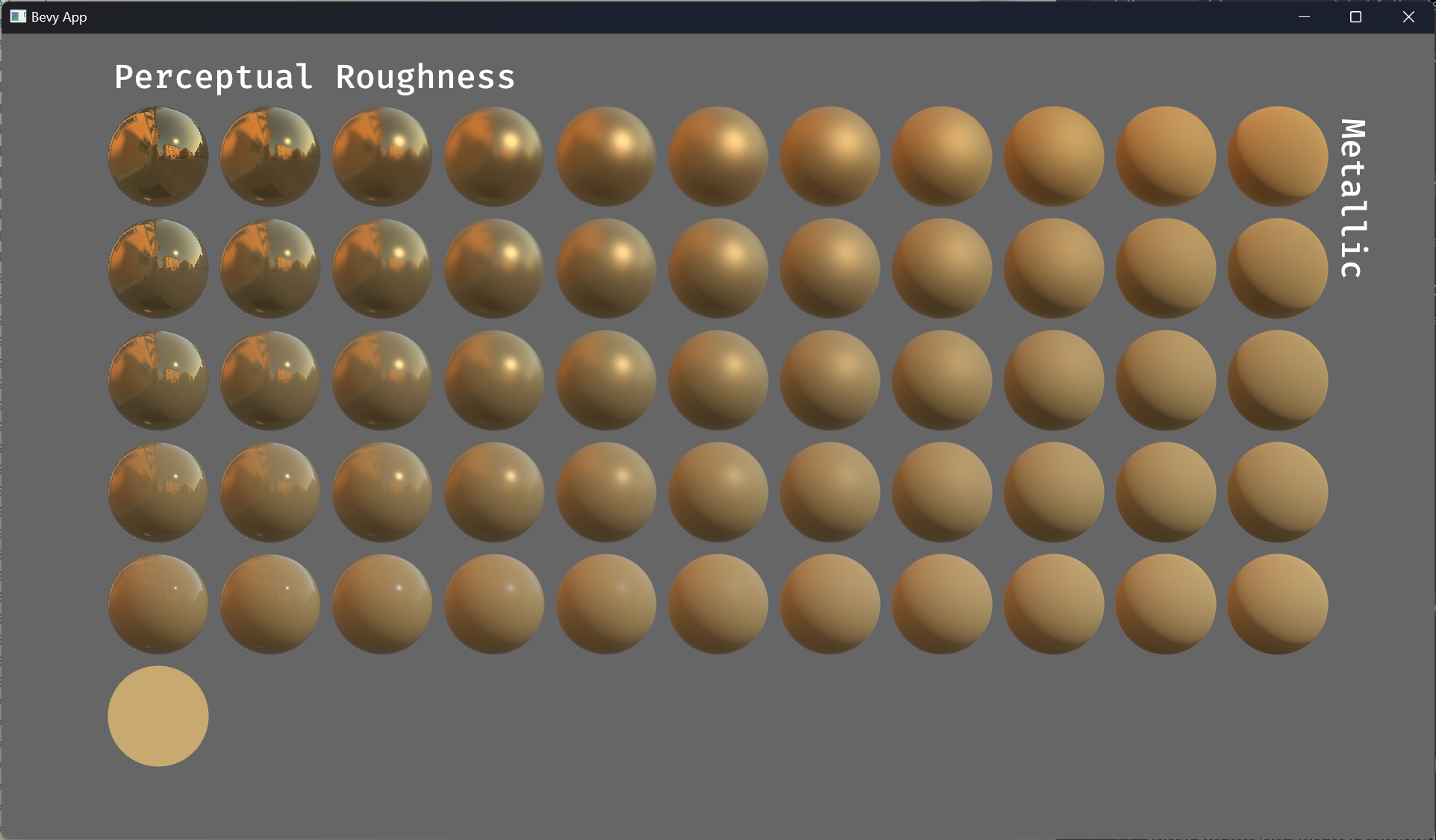

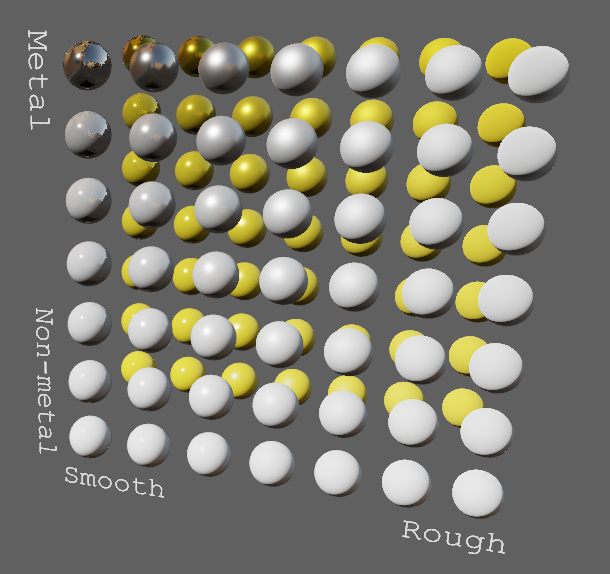

# Objective

- Improve lighting; especially reflections.

- Closes https://github.com/bevyengine/bevy/issues/4581.

## Solution

- Implement environment maps, providing better ambient light.

- Add microfacet multibounce approximation for specular highlights from Filament.

- Occlusion is no longer incorrectly applied to direct lighting. It now only applies to diffuse indirect light. Unsure if it's also supposed to apply to specular indirect light - the glTF specification just says "indirect light". In the case of ambient occlusion, for instance, that's usually only calculated as diffuse though. For now, I'm choosing to apply this just to indirect diffuse light, and not specular.

- Modified the PBR example to use an environment map, and have labels.

- Added `FallbackImageCubemap`.
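
The occlusion change can be summarised as a small sketch in plain Rust. The names (`Rgb`, `shade`) are hypothetical and the real shader computes each term very differently; this only shows which term the occlusion factor multiplies.

```rust
type Rgb = [f32; 3];

fn add(a: Rgb, b: Rgb) -> Rgb {
    [a[0] + b[0], a[1] + b[1], a[2] + b[2]]
}

fn scale(a: Rgb, s: f32) -> Rgb {
    a.map(|c| c * s)
}

/// Combine lighting terms so that occlusion dims only indirect diffuse light.
/// Direct light and indirect specular light are left untouched.
fn shade(direct: Rgb, indirect_diffuse: Rgb, indirect_specular: Rgb, occlusion: f32) -> Rgb {
    add(add(direct, scale(indirect_diffuse, occlusion)), indirect_specular)
}
```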

## Implementation

- IBL technique references can be found in environment_map.wgsl.

- It's more accurate to use a LUT for the scale/bias. Filament has a good reference on generating this LUT. For now, I just used an analytic approximation.

- For now, environment maps must first be prefiltered outside of bevy using a 3rd party tool. See the `EnvironmentMap` documentation.

- Eventually, we should have our own prefiltering code, so that we can have dynamically changing environment maps, as well as let users drop in an HDR image and use asset preprocessing to create the needed textures using only bevy.

---

## Changelog

- Added an `EnvironmentMapLight` camera component that adds additional ambient light to a scene.

- StandardMaterials will now appear brighter and more saturated at high roughness, due to internal material changes. This is more physically correct.

- Fixed StandardMaterial occlusion being incorrectly applied to direct lighting.

- Added `FallbackImageCubemap`.

Co-authored-by: IceSentry <c.giguere42@gmail.com>

Co-authored-by: James Liu <contact@jamessliu.com>

Co-authored-by: Rob Parrett <robparrett@gmail.com>

Huge thanks to @maniwani, @devil-ira, @hymm, @cart, @superdump and @jakobhellermann for the help with this PR.

# Objective

- Followup #6587.

- Minimal integration for the Stageless Scheduling RFC: https://github.com/bevyengine/rfcs/pull/45

## Solution

- [x] Remove old scheduling module

- [x] Migrate new methods to no longer use extension methods

- [x] Fix compiler errors

- [x] Fix benchmarks

- [x] Fix examples

- [x] Fix docs

- [x] Fix tests

## Changelog

### Added

- a large number of methods on `App` to work with schedules ergonomically

- the `CoreSchedule` enum

- `App::add_extract_system` via the `RenderingAppExtension` trait extension method

- the private `prepare_view_uniforms` system now has a public system set for scheduling purposes, called `ViewSet::PrepareUniforms`

### Removed

- stages, and all code that mentions stages

- states have been dramatically simplified, and no longer use a stack

- `RunCriteriaLabel`

- `AsSystemLabel` trait

- `on_hierarchy_reports_enabled` run criteria (now just uses an ad hoc resource checking run condition)

- systems in `RenderSet/Stage::Extract` no longer warn when they do not read data from the main world

- `transform_propagate_system_set`: this was a nonstandard pattern that didn't actually provide enough control. The systems are already `pub`: the docs have been updated to ensure that the third-party usage is clear.

### Changed

- `System::default_labels` is now `System::default_system_sets`.

- `App::add_default_labels` is now `App::add_default_sets`

- `CoreStage` and `StartupStage` enums are now `CoreSet` and `StartupSet`

- `App::add_system_set` was renamed to `App::add_systems`

- The `StartupSchedule` label is now defined as part of the `CoreSchedule` enum

- `.label(SystemLabel)` is now referred to as `.in_set(SystemSet)`

- `SystemLabel` trait was replaced by `SystemSet`

- `SystemTypeIdLabel<T>` was replaced by `SystemSetType<T>`

- The `ReportHierarchyIssue` resource now has a public constructor (`new`), and implements `PartialEq`

- Fixed time steps now use a schedule (`CoreSchedule::FixedTimeStep`) rather than a run criteria.

- Adding rendering extraction systems now panics rather than silently failing if no subapp with the `RenderApp` label is found.

- the `calculate_bounds` system, with the `CalculateBounds` label, is now in `CoreSet::Update`, rather than in `CoreSet::PostUpdate` before commands are applied.

- `SceneSpawnerSystem` now runs under `CoreSet::Update`, rather than `CoreStage::PreUpdate.at_end()`.

- `bevy_pbr::add_clusters` is no longer an exclusive system

- the top level `bevy_ecs::schedule` module was replaced with `bevy_ecs::scheduling`

- `tick_global_task_pools_on_main_thread` is no longer run as an exclusive system. Instead, it has been replaced by `tick_global_task_pools`, which uses a `NonSend` resource to force running on the main thread.

## Migration Guide

- Calls to `.label(MyLabel)` should be replaced with `.in_set(MySet)`

- Stages have been removed. Replace these with system sets, and then add command flushes using the `apply_system_buffers` exclusive system where needed.

- The `CoreStage`, `StartupStage`, `RenderStage` and `AssetStage` enums have been replaced with `CoreSet`, `StartupSet`, `RenderSet` and `AssetSet`. The same scheduling guarantees have been preserved.

- Systems are no longer added to `CoreSet::Update` by default. Add systems manually if this behavior is needed, although you should consider adding your game logic systems to `CoreSchedule::FixedTimestep` instead for more reliable framerate-independent behavior.

- Similarly, startup systems are no longer part of `StartupSet::Startup` by default. In most cases, this won't matter to you.

- For example, `add_system_to_stage(CoreStage::PostUpdate, my_system)` should be replaced with
  - `add_system(my_system.in_set(CoreSet::PostUpdate))`

- When testing systems or otherwise running them in a headless fashion, simply construct and run a schedule using `Schedule::new()` and `World::run_schedule` rather than constructing stages

- Run criteria have been renamed to run conditions. These can now be combined with each other and with states.

- Looping run criteria and state stacks have been removed. Use an exclusive system that runs a schedule if you need this level of control over system control flow.

- For app-level control flow over which schedules get run when (such as for rollback networking), create your own schedule and insert it under the `CoreSchedule::Outer` label.

- Fixed timesteps are now evaluated in a schedule, rather than controlled via run criteria. The `run_fixed_timestep` system runs this schedule between `CoreSet::First` and `CoreSet::PreUpdate` by default.

- Command flush points introduced by `AssetStage` have been removed. If you were relying on these, add them back manually.

- Adding extract systems is now typically done directly on the main app. Make sure the `RenderingAppExtension` trait is in scope, then call `app.add_extract_system(my_system)`.

- the `calculate_bounds` system, with the `CalculateBounds` label, is now in `CoreSet::Update`, rather than in `CoreSet::PostUpdate` before commands are applied. You may need to order your movement systems to occur before this system in order to avoid system order ambiguities in culling behavior.

- the `RenderLabel` `AppLabel` was renamed to `RenderApp` for clarity

- `App::add_state` now takes 0 arguments: the starting state is set based on the `Default` impl.

- Instead of creating `SystemSet` containers for systems that run in stages, simply use `.on_enter::<State::Variant>()` or its `on_exit` or `on_update` siblings.

- `SystemLabel` derives should be replaced with `SystemSet`. You will also need to add the `Debug`, `PartialEq`, `Eq`, and `Hash` traits to satisfy the new trait bounds.

- `with_run_criteria` has been renamed to `run_if`. Run criteria have been renamed to run conditions for clarity, and should now simply return a bool.

- States have been dramatically simplified: there is no longer a "state stack". To queue a transition to the next state, call `NextState::set`
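
The "no state stack" model can be illustrated with a toy version in plain Rust. These types are simplified stand-ins and not the real `bevy_ecs` definitions; `GameState` and `apply_transition` are hypothetical names.

```rust
/// Example state type.
#[derive(Debug, Clone, Copy, PartialEq, Eq, Default)]
enum GameState {
    #[default]
    Menu,
    Playing,
    Paused,
}

/// Holds at most one queued transition; there is no stack.
#[derive(Default)]
struct NextState<S>(Option<S>);

impl<S> NextState<S> {
    /// Queue a transition. A later call overwrites an earlier one.
    fn set(&mut self, state: S) {
        self.0 = Some(state);
    }
}

/// Apply the queued transition, if any, and report `(exited, entered)`.
fn apply_transition<S: Copy>(current: &mut S, next: &mut NextState<S>) -> Option<(S, S)> {
    let entered = next.0.take()?;
    let exited = std::mem::replace(current, entered);
    Some((exited, entered))
}
```

Since only one transition can be pending, code that used to push and pop states now has to remember the previous state itself if it needs to return to it.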

## TODO

- [x] remove dead methods on App and World

- [x] add `App::add_system_to_schedule` and `App::add_systems_to_schedule`

- [x] avoid adding the default system set at inappropriate times

- [x] remove any accidental cycles in the default plugins schedule

- [x] migrate benchmarks

- [x] expose explicit labels for the built-in command flush points

- [x] migrate engine code

- [x] remove all mentions of stages from the docs

- [x] verify docs for States

- [x] fix uses of exclusive systems that use .end / .at_start / .before_commands

- [x] migrate RenderStage and AssetStage

- [x] migrate examples

- [x] ensure that transform propagation is exported in a sufficiently public way (the systems are already pub)

- [x] ensure that on_enter schedules are run at least once before the main app

- [x] re-enable opt-in to execution order ambiguities

- [x] revert change to `update_bounds` to ensure it runs in `PostUpdate`

- [x] test all examples

- [x] unbreak directional lights

- [x] unbreak shadows (see 3d_scene, 3d_shape, lighting, transparency_3d examples)

- [x] game menu example shows loading screen and menu simultaneously

- [x] display settings menu is a blank screen

- [x] `without_winit` example panics

- [x] ensure all tests pass

- [x] SubApp doc test fails

- [x] runs_spawn_local tasks fails

- [x] [Fix panic_when_hierachy_cycle test hanging](https://github.com/alice-i-cecile/bevy/pull/120)

## Points of Difficulty and Controversy

**Reviewers, please give feedback on these and look closely**

1. Default sets, from the RFC, have been removed. These added a tremendous amount of implicit complexity and result in hard to debug scheduling errors. They're going to be tackled in the form of "base sets" by @cart in a followup.

2. The outer schedule controls which schedule is run when `App::update` is called.

3. I implemented `Label` for `Box<dyn Label>` for our label types. This enables us to store schedule labels in concrete form, and then later run them. I ran into the same set of problems when working with one-shot systems. We've previously investigated this pattern in depth, and it does not appear to lead to extra indirection with nested boxes.

4. `SubApp::update` simply runs the default schedule once. This sucks, but this whole API is incomplete and this was the minimal changeset.

5. `time_system` and `tick_global_task_pools_on_main_thread` no longer use exclusive systems to attempt to force scheduling order

6. Implementation strategy for fixed timesteps

7. `AssetStage` was migrated to `AssetSet` without reintroducing command flush points. These did not appear to be used, and it's nice to remove these bottlenecks.

8. Migration of `bevy_render/lib.rs` and pipelined rendering. The logic here is unusually tricky, as we have complex scheduling requirements.

## Future Work (ideally before 0.10)

- Rename schedule_v3 module to schedule or scheduling

- Add a derive macro to states, and likely a `EnumIter` trait of some form

- Figure out what exactly to do with the "systems added should basically work by default" problem

- Improve ergonomics for working with fixed timesteps and states

- Polish FixedTime API to match Time

- Rebase and merge #7415

- Resolve all internal ambiguities (blocked on better tools, especially #7442)

- Add "base sets" to replace the removed default sets.

<img width="1392" alt="image" src="https://user-images.githubusercontent.com/418473/203873533-44c029af-13b7-4740-8ea3-af96bd5867c9.png">

<img width="1392" alt="image" src="https://user-images.githubusercontent.com/418473/203873549-36be7a23-b341-42a2-8a9f-ceea8ac7a2b8.png">

# Objective

- Add support for the “classic” distance fog effect, as well as a more advanced atmospheric fog effect.

## Solution

This PR:

- Introduces a new `FogSettings` component that controls distance fog per-camera.

- Adds support for three widely used “traditional” fog falloff modes: `Linear`, `Exponential` and `ExponentialSquared`, as well as a more advanced `Atmospheric` fog;

- Adds support for directional light influence over fog color;

- Extracts fog via `ExtractComponent`, then uses a prepare system that sets up a new dynamic uniform struct (`Fog`), similar to other mesh view types;

- Renders fog in PBR material shader, as a final adjustment to the `output_color`, after PBR is computed (but before tone mapping);

- Adds a new `StandardMaterial` flag to enable fog (`fog_enabled`);

- Adds convenience methods for easier artistic control when creating non-linear fog types;

- Adds documentation around fog.
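As a rough illustration of the three traditional falloff modes, the sketch below uses the textbook fog formulas (linear ramp, exponential, and squared exponential). The `Falloff` enum and `fog_intensity` function are hypothetical stand-ins and not the `FogFalloff` API added by this PR; the `Atmospheric` mode is left out. The result is 0 for no fog and 1 for fully fogged, assuming a non-negative distance.

```rust
/// Hypothetical stand-in for a fog falloff mode; not the PR's `FogFalloff` type.
#[derive(Clone, Copy, Debug)]
pub enum Falloff {
    /// Fog ramps linearly from `start` to `end` (world units).
    Linear { start: f32, end: f32 },
    /// Fog follows `1 - exp(-density * d)`.
    Exponential { density: f32 },
    /// Fog follows `1 - exp(-(density * d)^2)`.
    ExponentialSquared { density: f32 },
}

/// Returns the fog intensity in `[0, 1]` at a non-negative `distance` from the camera.
pub fn fog_intensity(falloff: Falloff, distance: f32) -> f32 {
    match falloff {
        Falloff::Linear { start, end } => {
            ((distance - start) / (end - start)).clamp(0.0, 1.0)
        }
        Falloff::Exponential { density } => 1.0 - (-density * distance).exp(),
        Falloff::ExponentialSquared { density } => {
            let x = density * distance;
            1.0 - (-(x * x)).exp()
        }
    }
}
```

The two exponential modes never quite reach 1, which is one reason convenience methods for picking a density from a desired visibility distance are useful.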

---

## Changelog

### Added

- Added support for distance-based fog effects for PBR materials, controllable per-camera via the new `FogSettings` component;

- Added `FogFalloff` enum for selecting between three widely used “traditional” fog falloff modes: `Linear`, `Exponential` and `ExponentialSquared`, as well as a more advanced `Atmospheric` fog;

Co-authored-by: Robert Swain <robert.swain@gmail.com>

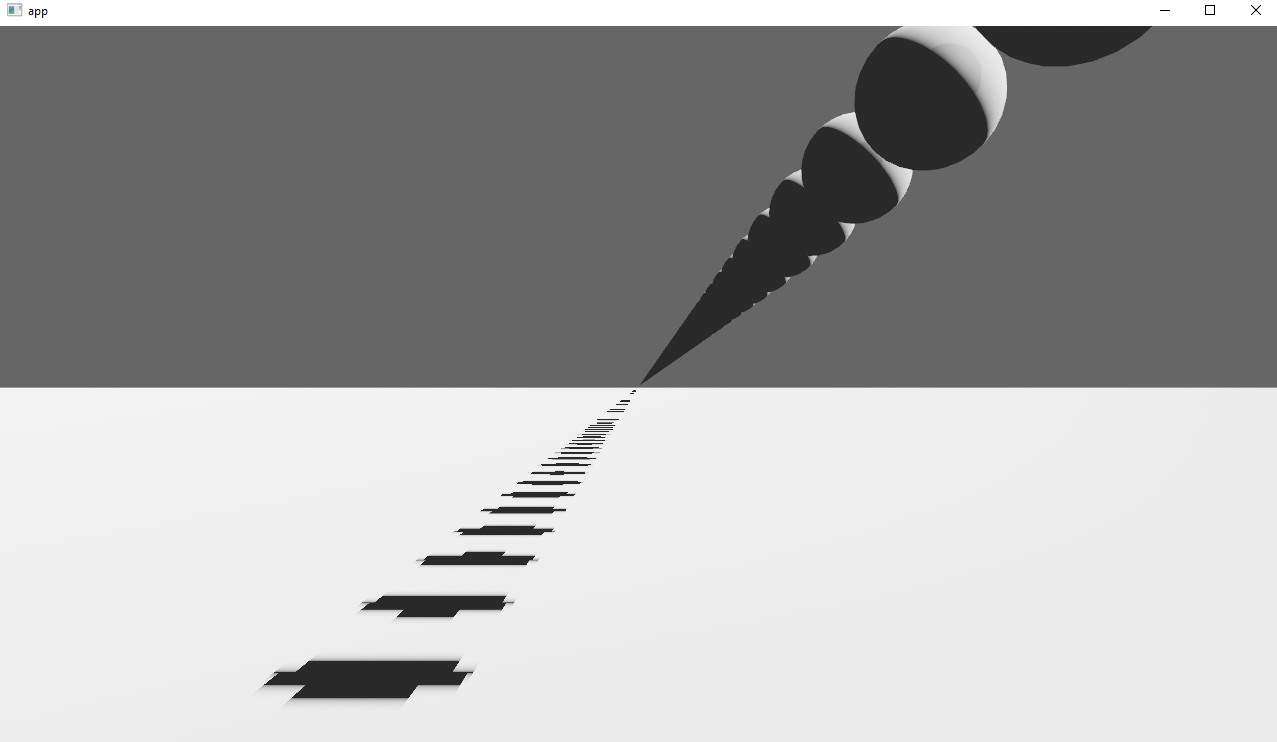

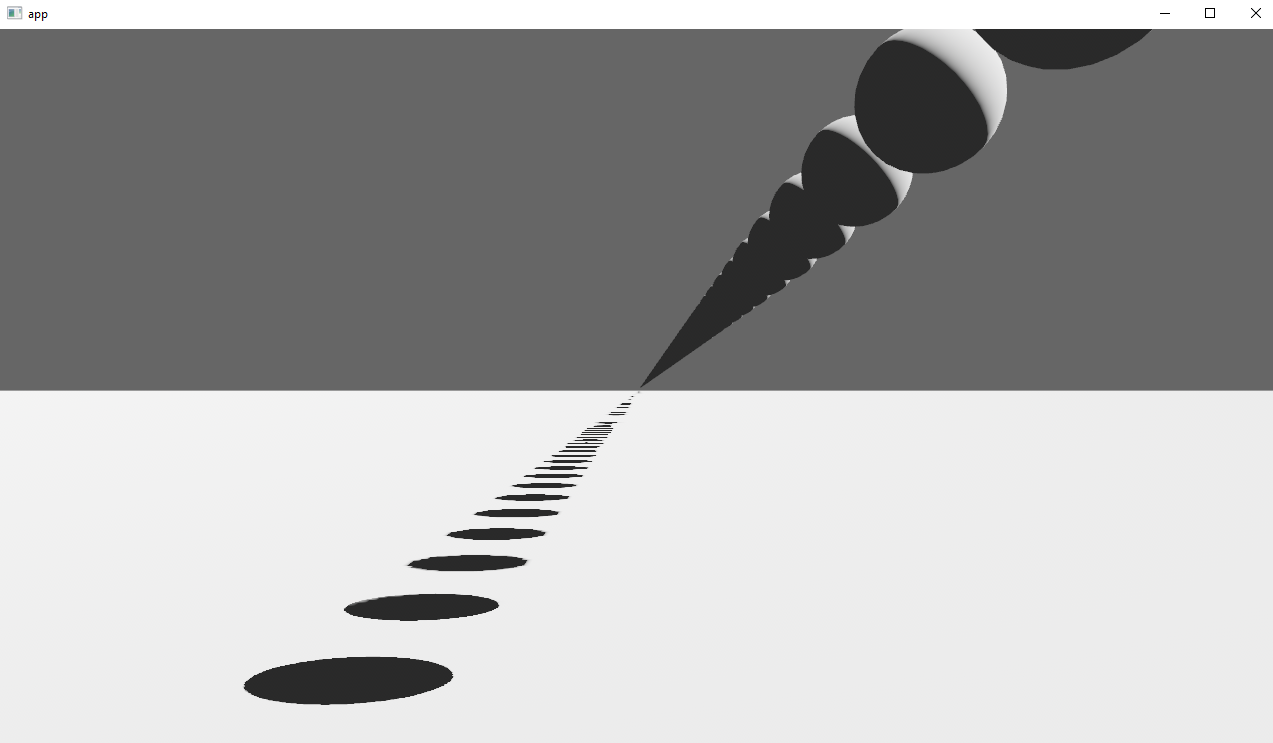

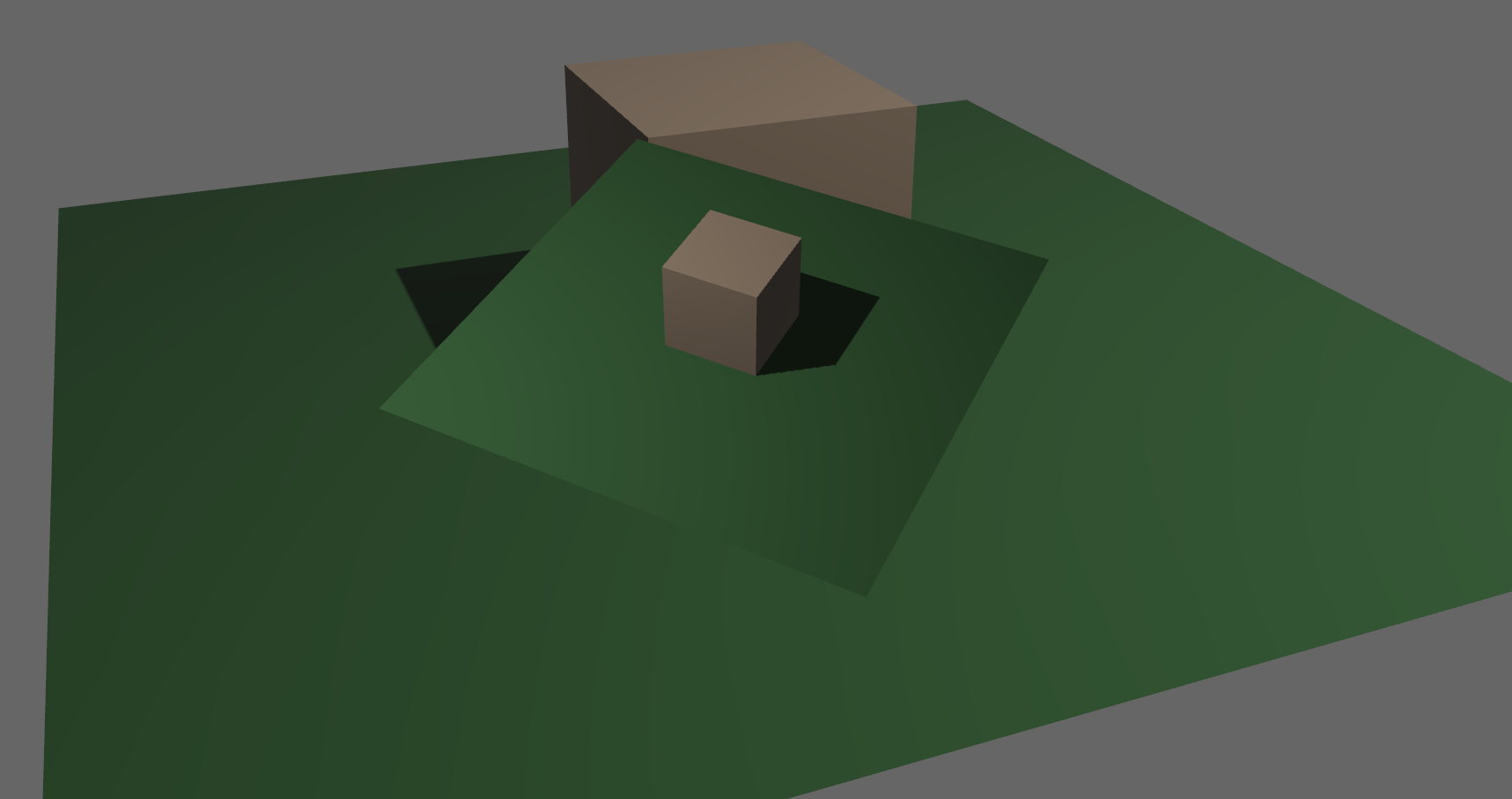

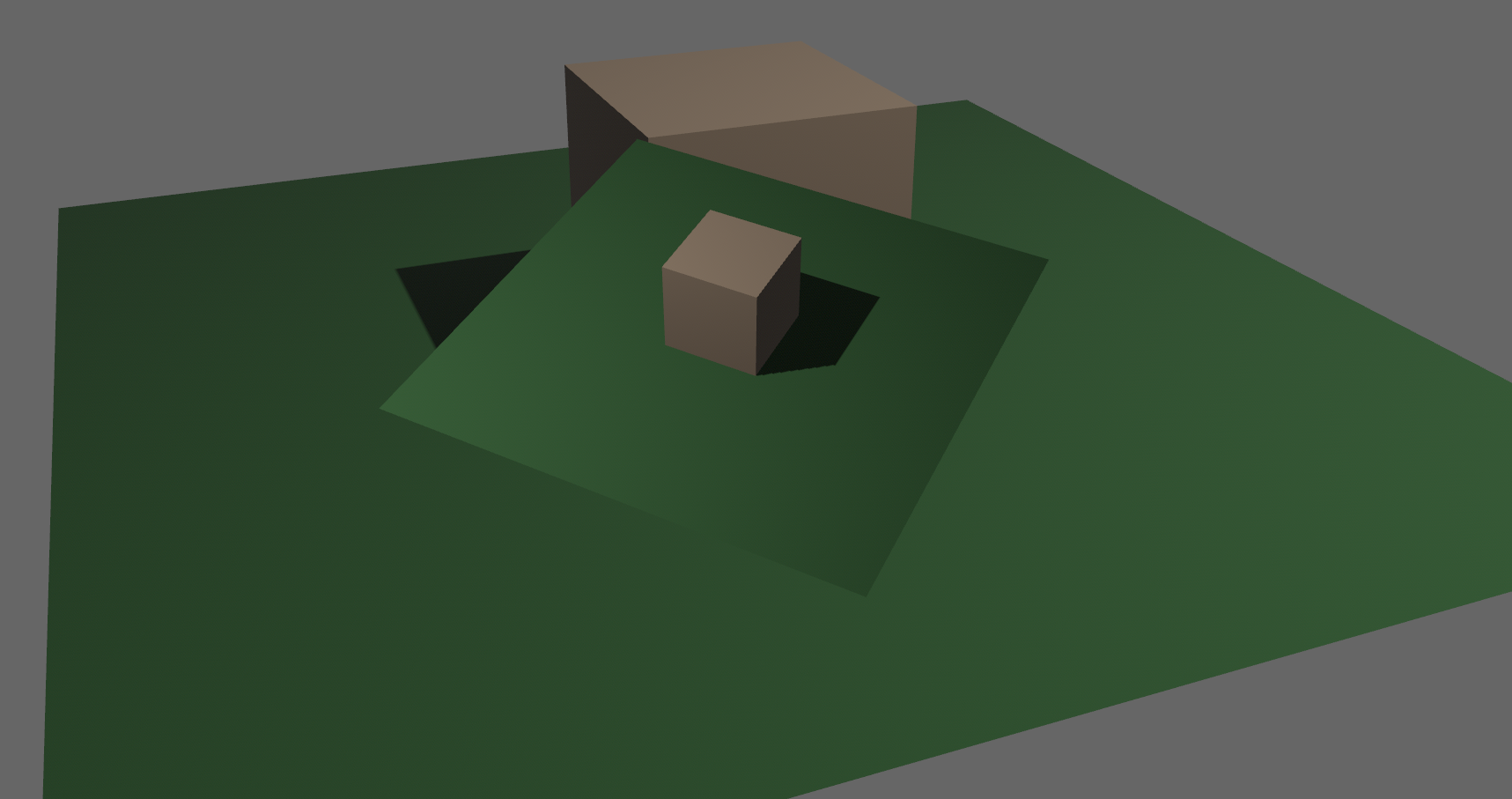

# Objective

Implements cascaded shadow maps for directional lights, which produces better quality shadows without needing excessively large shadow maps.

Fixes #3629

Before

After

## Solution

Rather than rendering a single shadow map for directional light, the view frustum is divided into a series of cascades, each of which gets its own shadow map. The correct cascade is then sampled for shadow determination.
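To make the idea concrete, here is a minimal sketch of splitting the view depth range into cascades and picking one for a fragment. The logarithmic split and both function names are assumptions for illustration; the PR's actual split scheme and selection logic may differ.

```rust
/// Far bound of each cascade, using a logarithmic split between `near` and `far`.
/// The split scheme is an assumption for illustration only.
pub fn cascade_far_bounds(near: f32, far: f32, count: usize) -> Vec<f32> {
    (1..=count)
        .map(|i| near * (far / near).powf(i as f32 / count as f32))
        .collect()
}

/// Index of the first cascade whose far bound covers `view_depth`,
/// or `None` if the fragment lies beyond the last cascade.
pub fn select_cascade(far_bounds: &[f32], view_depth: f32) -> Option<usize> {
    far_bounds.iter().position(|&bound| view_depth <= bound)
}
```

With `near = 0.1`, `far = 100.0` and three cascades, the bounds come out at roughly 1, 10 and 100, so nearby geometry gets a much denser shadow map than distant geometry.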

---

## Changelog

Directional lights now use cascaded shadow maps for improved shadow quality.

## Migration Guide

You no longer have to manually specify a `shadow_projection` for a directional light, and these settings should be removed. If customization of how cascaded shadow maps work is desired, modify the `CascadeShadowConfig` component instead.

# Objective

- This PR adds support for blend modes to the PBR `StandardMaterial`.

<img width="1392" alt="Screenshot 2022-11-18 at 20 00 56" src="https://user-images.githubusercontent.com/418473/202820627-0636219a-a1e5-437a-b08b-b08c6856bf9c.png">

<img width="1392" alt="Screenshot 2022-11-18 at 20 01 01" src="https://user-images.githubusercontent.com/418473/202820615-c8d43301-9a57-49c4-bd21-4ae343c3e9ec.png">

## Solution

- The existing `AlphaMode` enum is extended, adding three more modes: `AlphaMode::Premultiplied`, `AlphaMode::Add` and `AlphaMode::Multiply`;

- All new modes are rendered in the existing `Transparent3d` phase;

- The existing mesh flags for alpha mode are reorganized for a more compact/efficient representation, and new values are added;

- `MeshPipelineKey::TRANSPARENT_MAIN_PASS` is refactored into `MeshPipelineKey::BLEND_BITS`.

- `AlphaMode::Opaque` and `AlphaMode::Mask(f32)` share a single opaque pipeline key: `MeshPipelineKey::BLEND_OPAQUE`;

- `Blend`, `Premultiplied` and `Add` share a single premultiplied alpha pipeline key, `MeshPipelineKey::BLEND_PREMULTIPLIED_ALPHA`. In the shader, color values are premultiplied accordingly (or not) depending on the blend mode to produce the three different results after PBR/tone mapping/dithering;

- `Multiply` uses its own independent pipeline key, `MeshPipelineKey::BLEND_MULTIPLY`;

- Example and documentation are provided.
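The way three modes can share one premultiplied-alpha pipeline can be sketched on the CPU as follows. This is an illustration under the assumption that the shared blend state computes `out = src.rgb + dst.rgb * (1 - src.a)`; the `Mode` enum and both functions are hypothetical names, not the shader code from this PR.

```rust
/// Hypothetical mirror of the three modes that share the premultiplied-alpha pipeline key.
#[derive(Clone, Copy, Debug)]
pub enum Mode {
    Blend,
    Premultiplied,
    Add,
}

/// Converts a shaded RGBA color into the form fed to the shared blend state.
pub fn to_premultiplied(mode: Mode, [r, g, b, a]: [f32; 4]) -> [f32; 4] {
    match mode {
        // Regular alpha blending: premultiply color by alpha.
        Mode::Blend => [r * a, g * a, b * a, a],
        // Already premultiplied: pass through untouched.
        Mode::Premultiplied => [r, g, b, a],
        // Additive: premultiply, then zero alpha so the destination is fully kept.
        Mode::Add => [r * a, g * a, b * a, 0.0],
    }
}

/// Assumed blend state: `out = src.rgb + dst.rgb * (1 - src.a)`.
pub fn composite(src: [f32; 4], dst: [f32; 3]) -> [f32; 3] {
    let keep = 1.0 - src[3];
    [
        src[0] + dst[0] * keep,
        src[1] + dst[1] * keep,
        src[2] + dst[2] * keep,
    ]
}
```

Only the values written by the fragment shader change between the three modes; the fixed-function blend equation stays the same, which is why a single pipeline key suffices.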

---

## Changelog

### Added

- Added support for additive and multiplicative blend modes in the PBR `StandardMaterial`, via `AlphaMode::Add` and `AlphaMode::Multiply`;

- Added support for premultiplied alpha in the PBR `StandardMaterial`, via `AlphaMode::Premultiplied`;

# Objective

Fixes #6931

Continues #6954 by squashing `Msaa` to a flat enum

Helps out #7215

# Solution

```rust
pub enum Msaa {
    Off = 1,
    #[default]
    Sample4 = 4,
}
```

# Changelog

- Modified

  - `Msaa` is now an enum

  - Defaults to 4 samples

  - Uses `.samples()` method to get the sample number as `u32`
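A minimal sketch of how a flat enum with explicit discriminants can expose the sample count. It only includes the two variants shown above, and the body of `samples()` is an assumption about one straightforward way to implement it:

```rust
/// Flat MSAA setting; the discriminant doubles as the sample count.
#[derive(Clone, Copy, Debug, Default, PartialEq, Eq)]
pub enum Msaa {
    Off = 1,
    #[default]
    Sample4 = 4,
}

impl Msaa {
    /// Number of samples per pixel, read straight from the discriminant.
    pub fn samples(&self) -> u32 {
        *self as u32
    }
}
```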

# Migration Guide

```rust
let multi = Msaa { samples: 4 };
// is now
let multi = Msaa::Sample4;

multi.samples
// is now
multi.samples()
```

Co-authored-by: Sjael <jakeobrien44@gmail.com>

# Objective

- Add a configurable prepass

- A depth prepass is useful for various shader effects and to reduce overdraw. It can be expensive depending on the scene, so it's important to be able to disable it if you don't need any effects that use it or don't suffer from excessive overdraw.

- The goal is to eventually use it for things like TAA, Ambient Occlusion, SSR and various other techniques that can benefit from having a prepass.

## Solution

The prepass node is inserted before the main pass. It runs for each `Camera3d` with a prepass component (`DepthPrepass`, `NormalPrepass`). The presence of one of those components is used to determine which textures are generated in the prepass. When any prepass is enabled, the depth buffer generated will be used by the main pass to reduce overdraw.

The prepass runs for each `Material` created with the `MaterialPlugin::prepass_enabled` option set to `true`. You can overload the shader used by the prepass by using `Material::prepass_vertex_shader()` and/or `Material::prepass_fragment_shader()`. It will also use the `Material::specialize()` for more advanced use cases. It is enabled by default on all materials.

The prepass works on opaque materials and materials using an alpha mask. Transparent materials are ignored.

The `StandardMaterial` overloads the prepass fragment shader to support alpha mask and normal maps.

---

## Changelog

- Add a new `PrepassNode` that runs before the main pass

- Add a `PrepassPlugin` to extract/prepare/queue the necessary data

- Add a `DepthPrepass` and `NormalPrepass` component to control which textures will be created by the prepass and available in later passes.

- Add a new `prepass_enabled` flag to the `MaterialPlugin` that will control if a material uses the prepass or not.

- Add a new `prepass_enabled` flag to the `PbrPlugin` to control if the StandardMaterial uses the prepass. Currently defaults to false.

- Add `Material::prepass_vertex_shader()` and `Material::prepass_fragment_shader()` to control the prepass from the `Material`

## Notes

In bevy's sample 3d scene, the performance is actually worse when enabling the prepass, but on more complex scenes the performance is generally better. I would like more testing on this, but @DGriffin91 has reported very noticeable improvements in some scenes.

The prepass is also used by @JMS55 for TAA and GTAO

discord thread: <https://discord.com/channels/691052431525675048/1011624228627419187>

This PR was built on top of the work of multiple people

Co-Authored-By: @superdump

Co-Authored-By: @robtfm

Co-Authored-By: @JMS55

Co-authored-by: Charles <IceSentry@users.noreply.github.com>

Co-authored-by: JMS55 <47158642+JMS55@users.noreply.github.com>

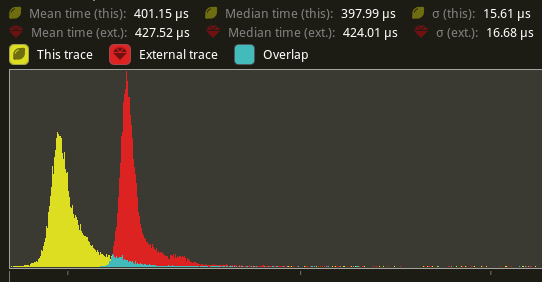

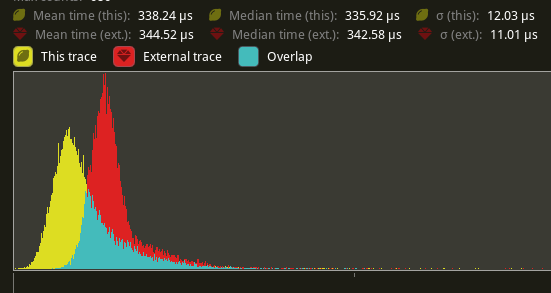

# Objective

Speed up the render phase of rendering. Simplify the trait structure for render commands.

## Solution

- Merge `EntityPhaseItem` into `PhaseItem` (`EntityPhaseItem::entity` -> `PhaseItem::entity`)

- Merge `EntityRenderCommand` into `RenderCommand`.

- Add two associated types to `RenderCommand`: `RenderCommand::ViewWorldQuery` and `RenderCommand::ItemWorldQuery`.

- Use the new associated types to construct two `QueryState`s for `RenderCommandState`.

- Hoist any `SQuery<T>` fetches in `EntityRenderCommand`s into the aforementioned two queries. Batch fetch them all at once.

## Performance

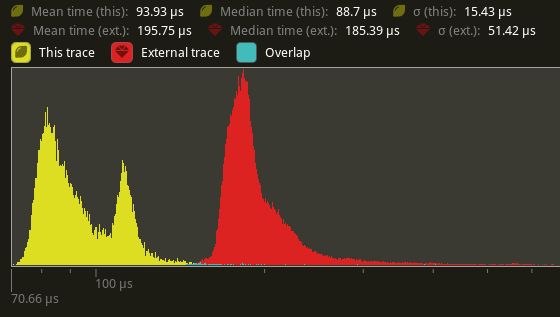

`main_opaque_pass_3d` is slightly faster on `many_foxes` (427.52us -> 401.15us)

The shadow pass node is also slightly faster (344.52 -> 338.24us)

## Future Work

- Can we hoist the view level queries out of the core loop?

---

## Changelog

Added: `PhaseItem::entity`

Added: `RenderCommand::ViewWorldQuery` associated type.

Added: `RenderCommand::ItemWorldQuery` associated type.

Added: `Draw<T>::prepare` optional trait function.

Removed: `EntityPhaseItem` trait

## Migration Guide

TODO

# Objective

Following #4402, extract systems run on the render world instead of the main world, and allow retained state operations on its resources. We're currently extracting to `ExtractedJoints` and then copying it twice during Prepare: once into `SkinnedMeshJoints` and again into the actual GPU buffer.

This makes #4902 obsolete.

## Solution

Cut out the middle copy and directly extract joints into `SkinnedMeshJoints` and remove `ExtractedJoints` entirely.

This also removes the per-frame allocation that is being made to send `ExtractedJoints` into the render world.

## Performance

On my local machine, this halves the time for `prepare_skinned_meshes` on `many_foxes` (195.75us -> 93.93us on average).

---

## Changelog

Added: `BufferVec::truncate`

Added: `BufferVec::extend`

Changed: `SkinnedMeshJoints::build` now takes a `&mut BufferVec` instead of a `&mut Vec` as a parameter.

Removed: `ExtractedJoints`.

## Migration Guide

`ExtractedJoints` has been removed. Read the bound bones from `SkinnedMeshJoints` instead.

# Objective

- Fixes #6841

- In some cases, the maximum number of storage buffers is `u32::MAX`, which doesn't fit in an `i32`

## Solution

- Add an option to have a `u32` in a `ShaderDefVal`

# Objective

- shader defs can now have a `bool` or `int` value

- `#if SHADER_DEF <operator> 3`

  - ok if `SHADER_DEF` is defined, has the correct type and passes the comparison

  - `==`, `!=`, `>=`, `>`, `<`, `<=` supported

- `#SHADER_DEF` or `#{SHADER_DEF}`

  - will be replaced by the value in the shader code
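The comparison rule above can be sketched as a small evaluator. Everything here (`DefValue`, `eval_condition`, passing the operator as a string) is a hypothetical simplification for illustration and not the preprocessor code from this PR. Integers are widened to `i64` so that a `u32::MAX` value compares correctly.

```rust
use std::cmp::Ordering;
use std::collections::HashMap;

/// Hypothetical value type for a shader def.
#[derive(Clone, Copy, Debug, PartialEq)]
pub enum DefValue {
    Bool(bool),
    Int(i32),
    UInt(u32),
}

/// Evaluates `#if NAME <op> <rhs>` for an integer literal `rhs`.
/// Returns `false` when the def is missing, is not an integer, or fails the comparison.
pub fn eval_condition(
    defs: &HashMap<String, DefValue>,
    name: &str,
    op: &str,
    rhs: i64,
) -> bool {
    let lhs = match defs.get(name) {
        Some(DefValue::Int(v)) => i64::from(*v),
        Some(DefValue::UInt(v)) => i64::from(*v),
        _ => return false,
    };
    let ord = lhs.cmp(&rhs);
    match op {
        "==" => ord == Ordering::Equal,
        "!=" => ord != Ordering::Equal,
        ">=" => ord != Ordering::Less,
        ">" => ord == Ordering::Greater,
        "<" => ord == Ordering::Less,
        "<=" => ord != Ordering::Greater,
        _ => false,
    }
}
```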

---

## Migration Guide

- replace `shader_defs.push(String::from("NAME"));` by `shader_defs.push("NAME".into());`

- if you used shader def `NO_STORAGE_BUFFERS_SUPPORT`, check how `AVAILABLE_STORAGE_BUFFER_BINDINGS` is now used in Bevy default shaders

# Objective

- Closes #5262

- Fix color banding caused by quantization.

## Solution

- Adds dithering to the tonemapping node from #3425.

- This is inspired by Godot's default "debanding" shader: https://gist.github.com/belzecue/

- Unlike Godot:

  - debanding happens after tonemapping. My understanding is that this is preferred, because we are running the debanding at the last moment before quantization (`[f32, f32, f32, f32]` -> `f32`). This ensures we aren't biasing the dithering strength by applying it in a different (linear) color space.

  - This code instead uses and references the original source, Valve at GDC 2015

## Additional Notes

Real time rendering to standard dynamic range outputs is limited to 8 bits of depth per color channel. Internally we keep everything in full 32-bit precision (`vec4<f32>`) inside passes and 16-bit between passes until the image is ready to be displayed, at which point the GPU implicitly converts our `vec4<f32>` into a single 32bit value per pixel, with each channel (rgba) getting 8 of those 32 bits.

### The Problem

8 bits of color depth is simply not enough precision to make each step invisible - we only have 256 values per channel! Human vision can perceive steps in luma to about 14 bits of precision. When drawing a very slight gradient, the transition between steps becomes visible because with a gradient, neighboring pixels will all jump to the next "step" of precision at the same time.

### The Solution

One solution is to simply output in HDR - more bits of color data means the transition between bands will become smaller. However, not everyone has hardware that supports 10+ bit color depth. Additionally, 10 bit color doesn't even fully solve the issue, banding will result in coherent bands on shallow gradients, but the steps will be harder to perceive.

The solution in this PR adds noise to the signal before it is "quantized" or resampled from 32 to 8 bits. Done naively, it's easy to add unneeded noise to the image. To ensure dithering is correct and absolutely minimal, noise is added *within* one step of the output color depth. When converting from the 32bit to 8bit signal, the value is rounded to the nearest 8 bit value (0 - 255). Banding occurs around the transition from one value to the next, let's say from 50-51. Dithering will never add more than +/-0.5 bits of noise, so the pixels near this transition might round to 50 instead of 51 but will never round more than one step. This means that the output image won't have excess variance:

- in a gradient from 49 to 51, there will be a step between each band at 49, 50, and 51.

- Done correctly, the modified image of this gradient will never have adjacent pixels more than one step (0-255) from each other.

- I.e. when scanning across the gradient you should expect to see:

```
                  |-band-| |-band-| |-band-|
Baseline:         49 49 49 50 50 50 51 51 51
Dithered:         49 50 49 50 50 51 50 51 51
Dithered (wrong): 49 50 51 49 50 51 49 51 50
```

You can see from above how correct dithering "fuzzes" the transition between bands to reduce distinct steps in color, without adding excess noise.
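The "never more than one step" property can be checked with a small CPU-side sketch. `quantize_dithered` is a hypothetical helper, not the shader code in this PR, and the noise source is left to the caller (the PR derives it in the shader):

```rust
/// Quantizes a channel value in `[0, 1]` to 8 bits after adding `noise`.
/// `noise` is expressed in output steps and clamped to `[-0.5, 0.5]`.
pub fn quantize_dithered(value: f32, noise: f32) -> u8 {
    let noise = noise.clamp(-0.5, 0.5);
    let scaled = value.clamp(0.0, 1.0) * 255.0 + noise;
    // Round to the nearest step and keep the result inside the 8-bit range.
    scaled.round().clamp(0.0, 255.0) as u8
}
```

Because the noise is bounded by half a step, the dithered result can differ from the undithered one by at most one 8-bit step, which is what keeps the "Dithered (wrong)" row above from occurring.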

### HDR

The previous section (and this PR) assumes the final output is to an 8-bit texture, however this is not always the case. When Bevy adds HDR support, the dithering code will need to take the per-channel depth into account instead of assuming it to be 0-255. Edit: I talked with Rob about this and it seems like the current solution is okay. We may need to revisit once we have actual HDR final image output.

---

## Changelog

### Added

- All pipelines now support deband dithering. This is enabled by default in 3D, and can be toggled in the `Tonemapping` component in camera bundles. Banding is a graphical artifact created when the rendered image is crunched from high precision (f32 per color channel) down to the final output (u8 per channel in SDR). This results in subtle gradients becoming blocky due to the reduced color precision. Deband dithering applies a small amount of noise to the signal before it is "crunched", which breaks up the hard edges of blocks (bands) of color. Note that this does not add excess noise to the image, as the amount of noise is less than a single step of a color channel - just enough to break up the transition between color blocks in a gradient.

Co-authored-by: Carter Anderson <mcanders1@gmail.com>

# Objective

Bevy still has many instances of using single-tuples `(T,)` to create a bundle. Due to #2975, this is no longer necessary.

## Solution

Search for regex `\(.+\s*,\)`. This should have found every instance.

Attempt to make features like bloom https://github.com/bevyengine/bevy/pull/2876 easier to implement.

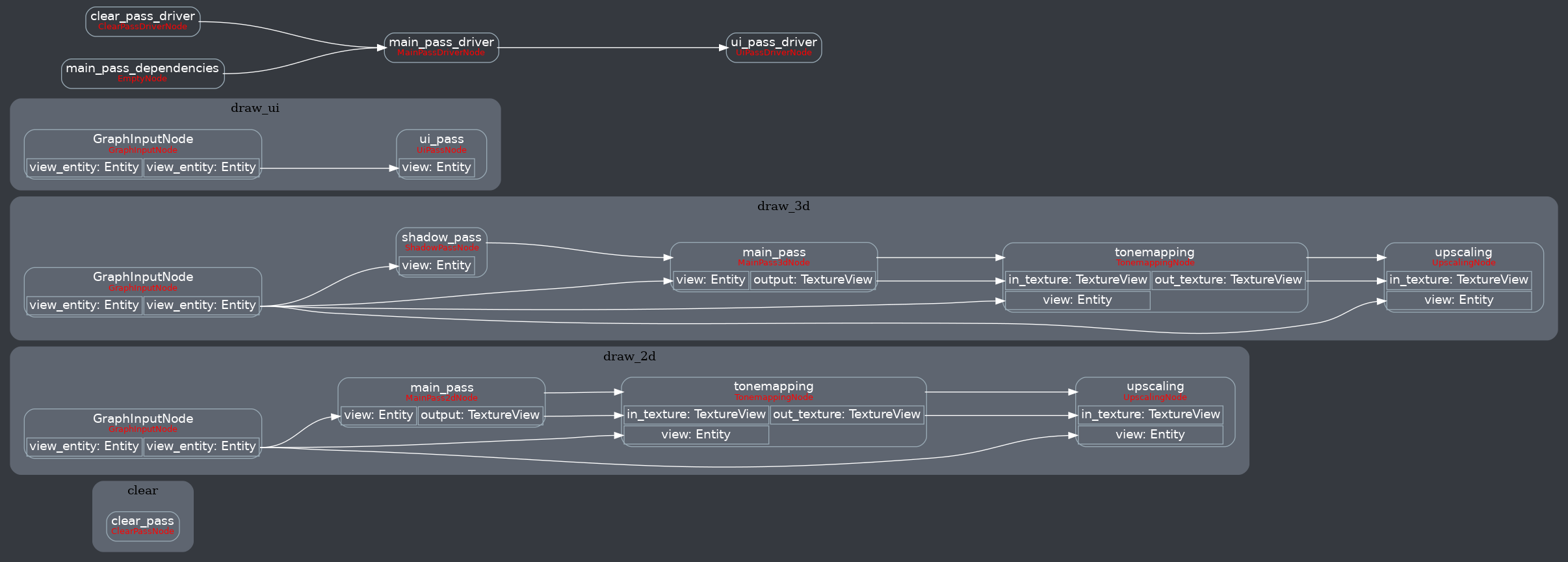

**This PR:**

- Moves the tonemapping from `pbr.wgsl` into a separate pass

- also add a separate upscaling pass after the tonemapping which writes to the swap chain (enables resolution-independent rendering and post-processing after tonemapping)

- adds a `hdr` bool to the camera which controls whether the pbr and sprite shaders render into a `Rgba16Float` texture

**Open questions:**

- ~should the 2d graph work the same as the 3d one?~ it is the same now

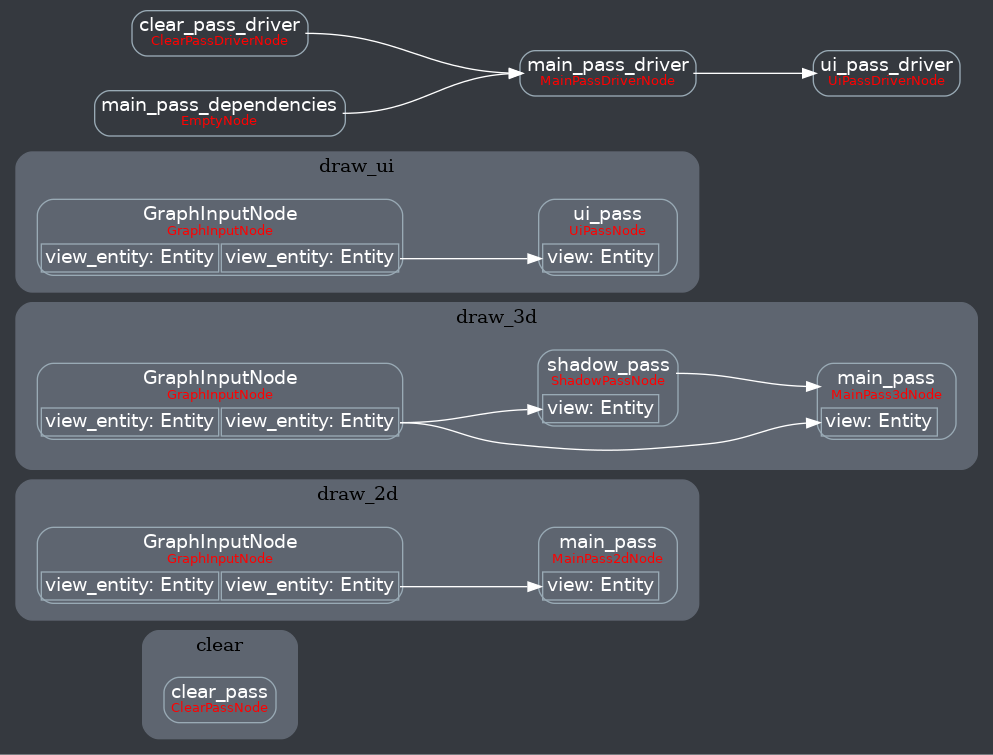

- ~The current solution is a bit inflexible because while you can add a post processing pass that writes to e.g. the `hdr_texture`, you can't write to a separate `user_postprocess_texture` while reading the `hdr_texture` and tell the tone mapping pass to read from the `user_postprocess_texture` instead. If the tonemapping and upscaling render graph nodes were to take in a `TextureView` instead of the view entity this would almost work, but the bind groups for their respective input textures are already created in the `Queue` render stage in the hardcoded order.~ solved by creating bind groups in render node

**New render graph:**

<details>

<summary>Before</summary>

</details>

Co-authored-by: Carter Anderson <mcanders1@gmail.com>

# Objective

- It's possible to create a mesh without positions or normals, but currently bevy forces these attributes to be present on any mesh.

## Solution

- Don't assume these attributes are present and add a shader def for each attribute

- I updated 2d and 3d meshes to use the same logic.

---

## Changelog

- Meshes don't require any attributes

# Notes

I didn't update the pbr.wgsl shader because I'm not sure how to handle it. It doesn't really make sense to use it without positions or normals.

# Objective

There is no Srgb support on some GPU and display protocols with `winit` (for example, Nvidia's GPUs with Wayland). Thus `TextureFormat::bevy_default()` which returns `Rgba8UnormSrgb` or `Bgra8UnormSrgb` will cause panics on such platforms. This patch will resolve this problem. Fix https://github.com/bevyengine/bevy/issues/3897.

## Solution

Make `initialize_renderer` expose `wgpu::Adapter` and `first_available_texture_format`, use the `first_available_texture_format` by default.

## Changelog

* Fixed https://github.com/bevyengine/bevy/issues/3897.

# Objective

Simple docs/comments only PR that just fixes some outdated file references left over from the render rewrite.

## Solution

- Change the references to point to the correct files

# Objective

Implement `IntoIterator` for `&Extract<P>` if the system parameter it wraps implements `IntoIterator`.

Enables the use of `IntoIterator` with an extracted query.

Co-authored-by: devil-ira <justthecooldude@gmail.com>

# Objective

- Morten Mikkelsen clarified that the world normal and tangent must be normalized in the vertex stage and the interpolated values must not be normalized in the fragment stage. This is in order to match the mikktspace approach exactly.

- Fixes #5514 by ensuring the tangent basis matrix (TBN) is orthonormal

## Solution

- Normalize the world normal in the vertex stage and not the fragment stage

- Normalize the world tangent xyz in the vertex stage

- Take into account the sign of the determinant of the local to world matrix when calculating the bitangent
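The bitangent step can be sketched with plain arrays. This is an illustration only: the function names are hypothetical, the model matrix is reduced to its 3x3 part given as columns, and the convention `bitangent = cross(normal, tangent) * tangent.w` is the usual mikktspace one rather than code copied from this PR.

```rust
pub type Vec3 = [f32; 3];

pub fn cross(a: Vec3, b: Vec3) -> Vec3 {
    [
        a[1] * b[2] - a[2] * b[1],
        a[2] * b[0] - a[0] * b[2],
        a[0] * b[1] - a[1] * b[0],
    ]
}

pub fn dot(a: Vec3, b: Vec3) -> f32 {
    a[0] * b[0] + a[1] * b[1] + a[2] * b[2]
}

/// Determinant of a 3x3 matrix given as three columns.
pub fn determinant(m: [Vec3; 3]) -> f32 {
    dot(m[0], cross(m[1], m[2]))
}

/// Bitangent for an already normalized world normal and tangent.
/// `tangent_w` is the handedness stored in the tangent's w component (+1 or -1).
/// A negative determinant (mirroring scale) flips the bitangent.
pub fn world_bitangent(normal: Vec3, tangent: Vec3, tangent_w: f32, model: [Vec3; 3]) -> Vec3 {
    let flip = if determinant(model) < 0.0 { -1.0 } else { 1.0 };
    let b = cross(normal, tangent);
    let s = tangent_w * flip;
    [b[0] * s, b[1] * s, b[2] * s]
}
```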

---

## Changelog

- Fixed - scaling a model that uses normal mapping now has correct lighting again

*This PR description is an edited copy of #5007, written by @alice-i-cecile.*

# Objective

Follow-up to https://github.com/bevyengine/bevy/pull/2254. The `Resource` trait currently has a blanket implementation for all types that meet its bounds.

While ergonomic, this results in several drawbacks:

* it is possible to make confusing, silent mistakes such as inserting a function pointer (Foo) rather than a value (Foo::Bar) as a resource

* it is challenging to discover if a type is intended to be used as a resource

* we cannot later add customization options (see the [RFC](https://github.com/bevyengine/rfcs/blob/main/rfcs/27-derive-component.md) for the equivalent choice for Component).

* dependencies can use the same Rust type as a resource in invisibly conflicting ways

* raw Rust types used as resources cannot preserve privacy appropriately, as anyone able to access that type can read and write to internal values

* we cannot capture a definitive list of possible resources to display to users in an editor

## Notes to reviewers

* Review this commit-by-commit; there's effectively no back-tracking and there's a lot of churn in some of these commits.

*ira: My commits are not as well organized :')*

* I've relaxed the bound on Local to Send + Sync + 'static: I don't think these concerns apply there, so this can keep things simple. Storing e.g. a u32 in a Local is fine, because there's a variable name attached explaining what it does.

* I think this is a bad place for the Resource trait to live, but I've left it in place to make reviewing easier. IMO that's best tackled with https://github.com/bevyengine/bevy/issues/4981.

## Changelog

`Resource` is no longer automatically implemented for all matching types. Instead, use the new `#[derive(Resource)]` macro.

## Migration Guide

Add `#[derive(Resource)]` to all types you are using as a resource.

If you are using a third party type as a resource, wrap it in a tuple struct to bypass orphan rules. Consider deriving `Deref` and `DerefMut` to improve ergonomics.
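The wrapper pattern looks roughly like this. To keep the sketch compilable without Bevy, `Resource` below is a local stand-in marker trait and `Deref`/`DerefMut` are written by hand; in real code you would put `#[derive(Resource)]` (and optionally the `Deref`/`DerefMut` derives) on the tuple struct instead. `ThirdPartyConfig` and `AudioConfig` are made-up names.

```rust
use std::ops::{Deref, DerefMut};

/// Stand-in marker trait so this sketch compiles without Bevy.
pub trait Resource: Send + Sync + 'static {}

/// Pretend this type lives in another crate, so we cannot opt it in directly.
pub struct ThirdPartyConfig {
    pub volume: f32,
}

/// Local tuple struct that wraps the foreign type and opts in explicitly.
pub struct AudioConfig(pub ThirdPartyConfig);

impl Resource for AudioConfig {}

impl Deref for AudioConfig {
    type Target = ThirdPartyConfig;
    fn deref(&self) -> &Self::Target {
        &self.0
    }
}

impl DerefMut for AudioConfig {
    fn deref_mut(&mut self) -> &mut Self::Target {
        &mut self.0
    }
}
```

With the deref impls in place, fields of the wrapped type can be read and written through the wrapper (`config.volume`) without spelling out `.0`.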

`ClearColor` no longer implements `Component`. Using `ClearColor` as a component in 0.8 did nothing.

Use the `ClearColorConfig` in the `Camera3d` and `Camera2d` components instead.

Co-authored-by: Alice <alice.i.cecile@gmail.com>

Co-authored-by: Alice Cecile <alice.i.cecile@gmail.com>

Co-authored-by: devil-ira <justthecooldude@gmail.com>

Co-authored-by: Carter Anderson <mcanders1@gmail.com>

# Objective

- Add capability to use `Affine3A`s for some `GlobalTransform`s. This allows affine transformations that are not possible using a single `Transform` such as shear and non-uniform scaling along an arbitrary axis.

- Related to #1755 and #2026

## Solution

- `GlobalTransform` becomes an enum wrapping either a `Transform` or an `Affine3A`.

- The API of `GlobalTransform` is minimized to avoid inefficiency, and to make it clear that operations should be performed using the underlying data types.

- using `GlobalTransform::Affine3A` disables transform propagation, because the main use is for cases that `Transform`s cannot support.

---

## Changelog

- `GlobalTransform`s can optionally support any affine transformation using an `Affine3A`.

Co-authored-by: Carter Anderson <mcanders1@gmail.com>

# Objective

Fixes #4907. Fixes #838. Fixes #5089.

Supersedes #5146. Supersedes #2087. Supersedes #865. Supersedes #5114

Visibility is currently entirely local. Set a parent entity to be invisible, and the children are still visible. This makes it hard for users to hide entire hierarchies of entities.

Additionally, the semantics of `Visibility` vs `ComputedVisibility` are inconsistent across entity types. 3D meshes use `ComputedVisibility` as the "definitive" visibility component, with `Visibility` being just one data source. Sprites just use `Visibility`, which means they can't feed off of `ComputedVisibility` data, such as culling information, RenderLayers, and (added in this pr) visibility inheritance information.

## Solution

Splits `ComputedVisibility::is_visible` into `ComputedVisibility::is_visible_in_view` and `ComputedVisibility::is_visible_in_hierarchy`. For each visible entity, `is_visible_in_hierarchy` is computed by propagating visibility down the hierarchy. The `ComputedVisibility::is_visible()` function combines these two booleans for the canonical "is this entity visible" function.
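The propagation rule can be sketched outside the ECS. `Node` and `propagate` are hypothetical names, and the hierarchy is flattened into a slice in which every parent appears before its children (an assumption that keeps the sketch short; the real system walks the entity hierarchy instead).

```rust
/// Hypothetical flattened entity: `parent` indexes an earlier element of the slice.
pub struct Node {
    pub parent: Option<usize>,
    /// The user-controlled `Visibility` flag.
    pub visible: bool,
    /// Result of per-view checks such as culling or render layers.
    pub visible_in_view: bool,
}

/// Returns `(is_visible_in_hierarchy, is_visible)` for each node.
/// Assumes parents are stored before their children.
pub fn propagate(nodes: &[Node]) -> Vec<(bool, bool)> {
    let mut out: Vec<(bool, bool)> = Vec::with_capacity(nodes.len());
    for node in nodes {
        // A root has no parent and only depends on its own flag.
        let parent_visible = node.parent.map_or(true, |p| out[p].0);
        let in_hierarchy = node.visible && parent_visible;
        out.push((in_hierarchy, in_hierarchy && node.visible_in_view));
    }
    out
}
```

Hiding a parent therefore hides every descendant, regardless of the descendants' own `visible` flags.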

Additionally, all entities that have `Visibility` now also have `ComputedVisibility`. Sprites, Lights, and UI entities now use `ComputedVisibility` when appropriate.

This means that in addition to visibility inheritance, everything using Visibility now also supports RenderLayers. Notably, Sprites (and other 2d objects) now support `RenderLayers` and work properly across multiple views.

Also note that this does increase the amount of work done per sprite. Bevymark with 100,000 sprites on `main` runs in `0.017612` seconds and this runs in `0.01902`. That is certainly a gap, but I believe the api consistency and extra functionality this buys us is worth it. See [this thread](https://github.com/bevyengine/bevy/pull/5146#issuecomment-1182783452) for more info. Note that #5146 in combination with #5114 _are_ a viable alternative to this PR and _would_ perform better, but that comes at the cost of api inconsistencies and doing visibility calculations in the "wrong" place. The current visibility system does have potential for performance improvements. I would prefer to evolve that one system as a whole rather than doing custom hacks / different behaviors for each feature slice.

Here is a "split screen" example where the left camera uses RenderLayers to filter out the blue sprite.

Note that this builds directly on #5146 and that @james7132 deserves the credit for the baseline visibility inheritance work. This pr moves the inherited visibility field into `ComputedVisibility`, then does the additional work of porting everything to `ComputedVisibility`. See my [comments here](https://github.com/bevyengine/bevy/pull/5146#issuecomment-1182783452) for rationale.

## Follow up work

* Now that lights use ComputedVisibility, VisibleEntities now includes "visible lights" in the entity list. Functionally not a problem as we use queries to filter the list down in the desired context. But we should consider splitting this out into a separate `VisibleLights` collection for both clarity and performance reasons. And _maybe_ even consider scoping `VisibleEntities` down to `VisibleMeshes`?

* Investigate alternative sprite rendering impls (in combination with visibility system tweaks) that avoid re-generating a per-view fixedbitset of visible entities every frame, then checking each ExtractedEntity. This is where most of the performance overhead lives. Ex: we could generate ExtractedEntities per-view using the VisibleEntities list, avoiding the need for the bitset.

* Should ComputedVisibility use bitflags under the hood? This would cut down on the size of the component, potentially speed up the `is_visible()` function, and allow us to cheaply expand ComputedVisibility with more data (ex: split out local visibility and parent visibility, add more culling classes, etc).

---

## Changelog

* ComputedVisibility now takes hierarchy visibility into account.

* 2D, UI and Light entities now use the ComputedVisibility component.

## Migration Guide

If you were previously reading `Visibility::is_visible` as the "actual visibility" for sprites or lights, use `ComputedVisibility::is_visible()` instead:

```rust
// before (0.7)
fn system(query: Query<&Visibility>) {
    for visibility in query.iter() {
        if visibility.is_visible {
            info!("found visible entity");
        }
    }
}

// after (0.8)
fn system(query: Query<&ComputedVisibility>) {
    for visibility in query.iter() {
        if visibility.is_visible() {
            info!("found visible entity");
        }
    }
}
```

Co-authored-by: Carter Anderson <mcanders1@gmail.com>

Remove unnecessary calls to `iter()`/`iter_mut()`.

Mainly updates the use of queries in our code, docs, and examples.

```rust
// From
for _ in list.iter() {}
for _ in list.iter_mut() {}

// To
for _ in &list {}
for _ in &mut list {}
```

We already enable the pedantic lint [clippy::explicit_iter_loop](https://rust-lang.github.io/rust-clippy/stable/) inside of Bevy. However, this only warns for a few known types from the standard library.

## Note for reviewers

As you can see the additions and deletions are exactly equal.

Maybe give it a quick skim to check I didn't sneak in a crypto miner, but you don't have to torture yourself by reading every line.

I already experienced enough pain making this PR :)

Co-authored-by: devil-ira <justthecooldude@gmail.com>

# Objective

- Currently, the `Extract` `RenderStage` is executed on the main world, with the render world available as a resource.

- However, when needing access to resources in the render world (e.g. to mutate them), the only way to do so was to get exclusive access to the whole `RenderWorld` resource.

- This meant that effectively only one extract which wrote to resources could run at a time.

- Previously, writing to the world during `Extract` was not treated as a non-happy path, even though we want to discourage it.

## Solution

- Move the extract stage to run on the render world.

- Add the main world as a `MainWorld` resource.

- Add an `Extract` `SystemParam` as a convenience to access a (read only) `SystemParam` in the main world during `Extract`.

## Future work

It should be possible to avoid needing to use `get_or_spawn` for the render commands, since now the `Commands`' `Entities` matches up with the world being executed on.

We need to determine how this interacts with https://github.com/bevyengine/bevy/pull/3519

It's theoretically possible to remove the need for the `value` method on `Extract`. However, that requires slightly changing the `SystemParam` interface, which would make it more complicated. That would probably mess up the `SystemState` api too.

## Todo

I still need to add doc comments to `Extract`.

---

## Changelog

### Changed

- The `Extract` `RenderStage` now runs on the render world (instead of the main world as before).

You must use the `Extract` `SystemParam` to access the main world during the extract phase.

Resources on the render world can now be accessed using `ResMut` during extract.

### Removed

- `Commands::spawn_and_forget`. Use `Commands::get_or_spawn(e).insert_bundle(bundle)` instead

## Migration Guide

The `Extract` `RenderStage` now runs on the render world (instead of the main world as before).

You must use the `Extract` `SystemParam` to access the main world during the extract phase. `Extract` takes a single type parameter, which is any system parameter (such as `Res`, `Query` etc.). It will extract this from the main world, and returns the result of this extraction when `value` is called on it.

For example, if previously your extract system looked like:

```rust
fn extract_clouds(mut commands: Commands, clouds: Query<Entity, With<Cloud>>) {
    for cloud in clouds.iter() {
        commands.get_or_spawn(cloud).insert(Cloud);
    }
}
```

the new version would be:

```rust
fn extract_clouds(mut commands: Commands, mut clouds: Extract<Query<Entity, With<Cloud>>>) {
    for cloud in clouds.value().iter() {
        commands.get_or_spawn(cloud).insert(Cloud);
    }
}
```

The diff is:

```diff
--- a/src/clouds.rs
+++ b/src/clouds.rs
@@ -1,5 +1,5 @@
-fn extract_clouds(mut commands: Commands, clouds: Query<Entity, With<Cloud>>) {
-    for cloud in clouds.iter() {
+fn extract_clouds(mut commands: Commands, mut clouds: Extract<Query<Entity, With<Cloud>>>) {
+    for cloud in clouds.value().iter() {
         commands.get_or_spawn(cloud).insert(Cloud);
     }
 }
```

You can now also access resources from the render world using the normal system parameters during `Extract`:

```rust
fn extract_assets(mut render_assets: ResMut<MyAssets>, source_assets: Extract<Res<MyAssets>>) {
    *render_assets = source_assets.clone();
}
```

Please note that all existing extract systems need to be updated to match this new style; even if they currently compile they will not run as expected. A warning will be emitted on a best-effort basis if this is not met.

Co-authored-by: Carter Anderson <mcanders1@gmail.com>