Currently, the specialized pipeline cache maps a (view entity, mesh

entity) tuple to the retained pipeline for that entity. This causes two

problems:

1. Using the view entity is incorrect, because the view entity isn't

stable from frame to frame.

2. Switching the view entity to a `RetainedViewEntity`, which is

necessary for correctness, significantly regresses performance of

`specialize_material_meshes` and `specialize_shadows` because of the

loss of the fast `EntityHash`.

This patch fixes both problems by switching to a *two-level* hash table.

The outer level of the table maps each `RetainedViewEntity` to an inner

table, which maps each `MainEntity` to its pipeline ID and change tick.

Because we loop over views first and, within that loop, loop over

entities visible from that view, we hoist the slow lookup of the view

entity out of the inner entity loop.

Additionally, this patch fixes a bug whereby pipeline IDs were leaked

when removing the view. We still have a problem with leaking pipeline

IDs for deleted entities, but that won't be fixed until the specialized

pipeline cache is retained.

This patch improves performance of the [Caldera benchmark] from 7.8×

faster than 0.14 to 9.0× faster than 0.14, when applied on top of the

global binding arrays PR, #17898.

[Caldera benchmark]: https://github.com/DGriffin91/bevy_caldera_scene

The GPU can fill out many of the fields in `IndirectParametersMetadata`

using information it already has:

* `early_instance_count` and `late_instance_count` are always

initialized to zero.

* `mesh_index` is already present in the work item buffer as the

`input_index` of the first work item in each batch.

This patch moves these fields to a separate buffer, the *GPU indirect

parameters metadata* buffer. That way, it avoids having to write them on

CPU during `batch_and_prepare_binned_render_phase`. This effectively

reduces the number of bits that that function must write per mesh from

160 to 64 (in addition to the 64 bits per mesh *instance*).

Additionally, this PR refactors `UntypedPhaseIndirectParametersBuffers`

to add another layer, `MeshClassIndirectParametersBuffers`, which allows

abstracting over the buffers corresponding indexed and non-indexed

meshes. This patch doesn't make much use of this abstraction, but

forthcoming patches will, and it's overall a cleaner approach.

This didn't seem to have much of an effect by itself on

`batch_and_prepare_binned_render_phase` time, but subsequent PRs

dependent on this PR yield roughly a 2× speedup.

# Objective

- #17787 removed sweeping of binned render phases from 2D by accident

due to them not using the `BinnedRenderPhasePlugin`.

- Fixes#17885

## Solution

- Schedule `sweep_old_entities` in `QueueSweep` like

`BinnedRenderPhasePlugin` does, but for 2D where that plugin is not

used.

## Testing

Tested with the modified `shader_defs` example in #17885 .

# Objective

Add reference to reported position space in picking backend docs.

Fixes#17844

## Solution

Add explanatory docs to the implementation notes of each picking

backend.

## Testing

`cargo r -p ci -- doc-check` & `cargo r -p ci -- lints`

Currently, invocations of `batch_and_prepare_binned_render_phase` and

`batch_and_prepare_sorted_render_phase` can't run in parallel because

they write to scene-global GPU buffers. After PR #17698,

`batch_and_prepare_binned_render_phase` started accounting for the

lion's share of the CPU time, causing us to be strongly CPU bound on

scenes like Caldera when occlusion culling was on (because of the

overhead of batching for the Z-prepass). Although I eventually plan to

optimize `batch_and_prepare_binned_render_phase`, we can obtain

significant wins now by parallelizing that system across phases.

This commit splits all GPU buffers that

`batch_and_prepare_binned_render_phase` and

`batch_and_prepare_sorted_render_phase` touches into separate buffers

for each phase so that the scheduler will run those phases in parallel.

At the end of batch preparation, we gather the render phases up into a

single resource with a new *collection* phase. Because we already run

mesh preprocessing separately for each phase in order to make occlusion

culling work, this is actually a cleaner separation. For example, mesh

output indices (the unique ID that identifies each mesh instance on GPU)

are now guaranteed to be sequential starting from 0, which will simplify

the forthcoming work to remove them in favor of the compute dispatch ID.

On Caldera, this brings the frame time down to approximately 9.1 ms with

occlusion culling on.

Currently, we look up each `MeshInputUniform` index in a hash table that

maps the main entity ID to the index every frame. This is inefficient,

cache unfriendly, and unnecessary, as the `MeshInputUniform` index for

an entity remains the same from frame to frame (even if the input

uniform changes). This commit changes the `IndexSet` in the `RenderBin`

to an `IndexMap` that maps the `MainEntity` to `MeshInputUniformIndex`

(a new type that this patch adds for more type safety).

On Caldera with parallel `batch_and_prepare_binned_render_phase`, this

patch improves that function from 3.18 ms to 2.42 ms, a 31% speedup.

Currently, we *sweep*, or remove entities from bins when those entities

became invisible or changed phases, during `queue_material_meshes` and

similar phases. This, however, is wrong, because `queue_material_meshes`

executes once per material type, not once per phase. This could result

in sweeping bins multiple times per phase, which can corrupt the bins.

This commit fixes the issue by moving sweeping to a separate system that

runs after queuing.

This manifested itself as entities appearing and disappearing seemingly

at random.

Closes#17759.

---------

Co-authored-by: Robert Swain <robert.swain@gmail.com>

# Objective

Because of mesh preprocessing, users cannot rely on

`@builtin(instance_index)` in order to reference external data, as the

instance index is not stable, either from frame to frame or relative to

the total spawn order of mesh instances.

## Solution

Add a user supplied mesh index that can be used for referencing external

data when drawing instanced meshes.

Closes#13373

## Testing

Benchmarked `many_cubes` showing no difference in total frame time.

## Showcase

https://github.com/user-attachments/assets/80620147-aafc-4d9d-a8ee-e2149f7c8f3b

---------

Co-authored-by: IceSentry <IceSentry@users.noreply.github.com>

# Objective

https://github.com/bevyengine/bevy/issues/17746

## Solution

- Change `Image.data` from being a `Vec<u8>` to a `Option<Vec<u8>>`

- Added functions to help with creating images

## Testing

- Did you test these changes? If so, how?

All current tests pass

Tested a variety of existing examples to make sure they don't crash

(they don't)

- If relevant, what platforms did you test these changes on, and are

there any important ones you can't test?

Linux x86 64-bit NixOS

---

## Migration Guide

Code that directly access `Image` data will now need to use unwrap or

handle the case where no data is provided.

Behaviour of new_fill slightly changed, but not in a way that is likely

to affect anything. It no longer panics and will fill the whole texture

instead of leaving black pixels if the data provided is not a nice

factor of the size of the image.

---------

Co-authored-by: IceSentry <IceSentry@users.noreply.github.com>

Didn't remove WgpuWrapper. Not sure if it's needed or not still.

## Testing

- Did you test these changes? If so, how? Example runner

- Are there any parts that need more testing? Web (portable atomics

thingy?), DXC.

## Migration Guide

- Bevy has upgraded to [wgpu

v24](https://github.com/gfx-rs/wgpu/blob/trunk/CHANGELOG.md#v2400-2025-01-15).

- When using the DirectX 12 rendering backend, the new priority system

for choosing a shader compiler is as follows:

- If the `WGPU_DX12_COMPILER` environment variable is set at runtime, it

is used

- Else if the new `statically-linked-dxc` feature is enabled, a custom

version of DXC will be statically linked into your app at compile time.

- Else Bevy will look in the app's working directory for

`dxcompiler.dll` and `dxil.dll` at runtime.

- Else if they are missing, Bevy will fall back to FXC (not recommended)

---------

Co-authored-by: Alice Cecile <alice.i.cecile@gmail.com>

Co-authored-by: IceSentry <c.giguere42@gmail.com>

Co-authored-by: François Mockers <francois.mockers@vleue.com>

# Objective

- publish script copy the license files to all subcrates, meaning that

all publish are dirty. this breaks git verification of crates

- the order and list of crates to publish is manually maintained,

leading to error. cargo 1.84 is more strict and the list is currently

wrong

## Solution

- duplicate all the licenses to all crates and remove the

`--allow-dirty` flag

- instead of a manual list of crates, get it from `cargo package

--workspace`

- remove the `--no-verify` flag to... verify more things?

# Objective

Things were breaking post-cs.

## Solution

`specialize_mesh_materials` must run after

`collect_meshes_for_gpu_building`. Therefore, its placement in the

`PrepareAssets` set didn't make sense (also more generally). To fix, we

put this class of system in ~`PrepareResources`~ `QueueMeshes`, although

it potentially could use a more descriptive location. We may want to

review the placement of `check_views_need_specialization` which is also

currently in `PrepareAssets`.

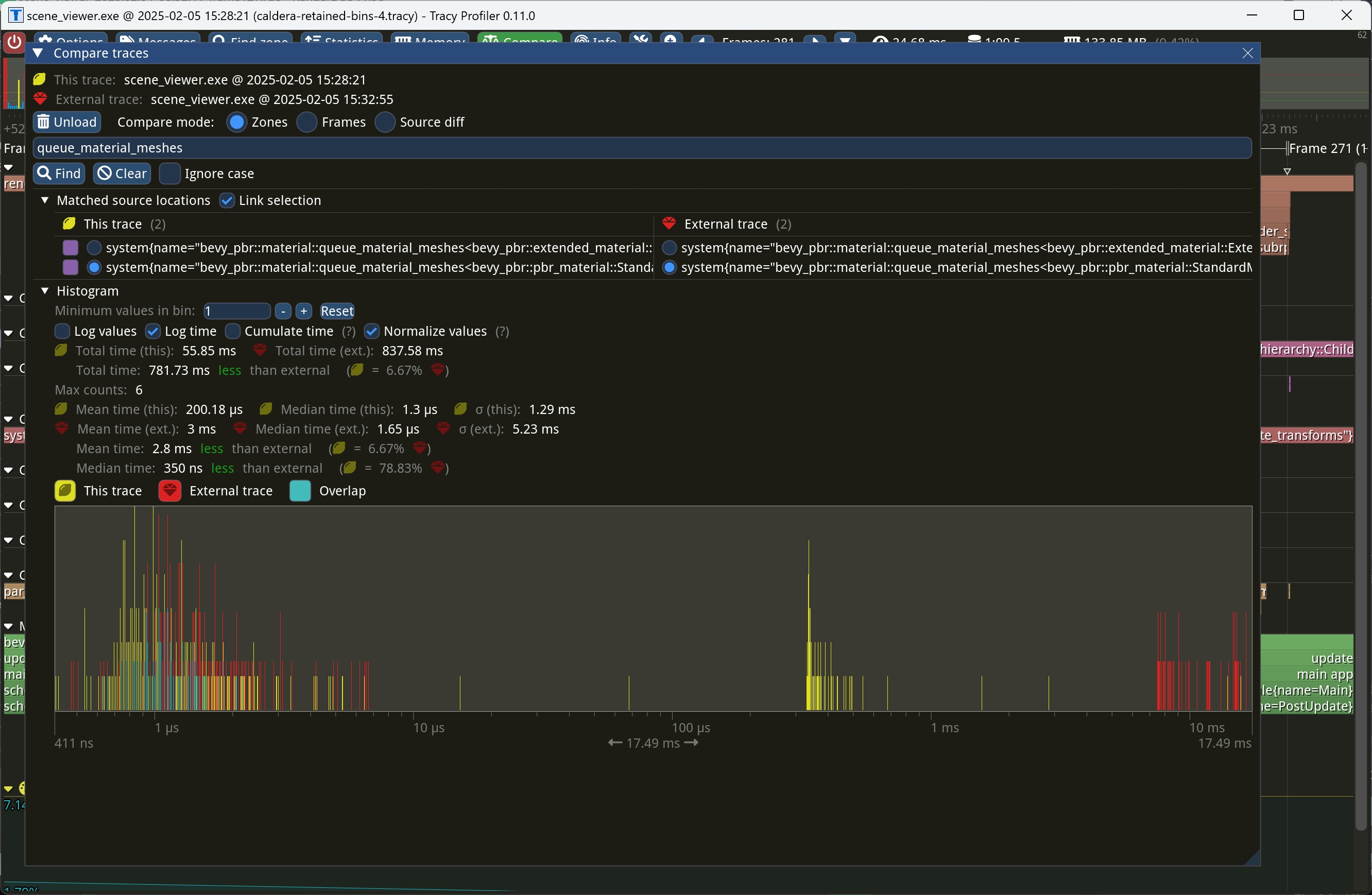

This PR makes Bevy keep entities in bins from frame to frame if they

haven't changed. This reduces the time spent in `queue_material_meshes`

and related functions to near zero for static geometry. This patch uses

the same change tick technique that #17567 uses to detect when meshes

have changed in such a way as to require re-binning.

In order to quickly find the relevant bin for an entity when that entity

has changed, we introduce a new type of cache, the *bin key cache*. This

cache stores a mapping from main world entity ID to cached bin key, as

well as the tick of the most recent change to the entity. As we iterate

through the visible entities in `queue_material_meshes`, we check the

cache to see whether the entity needs to be re-binned. If it doesn't,

then we mark it as clean in the `valid_cached_entity_bin_keys` bit set.

If it does, then we insert it into the correct bin, and then mark the

entity as clean. At the end, all entities not marked as clean are

removed from the bins.

This patch has a dramatic effect on the rendering performance of most

benchmarks, as it effectively eliminates `queue_material_meshes` from

the profile. Note, however, that it generally simultaneously regresses

`batch_and_prepare_binned_render_phase` by a bit (not by enough to

outweigh the win, however). I believe that's because, before this patch,

`queue_material_meshes` put the bins in the CPU cache for

`batch_and_prepare_binned_render_phase` to use, while with this patch,

`batch_and_prepare_binned_render_phase` must load the bins into the CPU

cache itself.

On Caldera, this reduces the time spent in `queue_material_meshes` from

5+ ms to 0.2ms-0.3ms. Note that benchmarking on that scene is very noisy

right now because of https://github.com/bevyengine/bevy/issues/17535.

# Objective

- Make use of the new `weak_handle!` macro added in

https://github.com/bevyengine/bevy/pull/17384

## Solution

- Migrate bevy from `Handle::weak_from_u128` to the new `weak_handle!`

macro that takes a random UUID

- Deprecate `Handle::weak_from_u128`, since there are no remaining use

cases that can't also be addressed by constructing the type manually

## Testing

- `cargo run -p ci -- test`

---

## Migration Guide

Replace `Handle::weak_from_u128` with `weak_handle!` and a random UUID.

# Cold Specialization

## Objective

An ongoing part of our quest to retain everything in the render world,

cold-specialization aims to cache pipeline specialization so that

pipeline IDs can be recomputed only when necessary, rather than every

frame. This approach reduces redundant work in stable scenes, while

still accommodating scenarios in which materials, views, or visibility

might change, as well as unlocking future optimization work like

retaining render bins.

## Solution

Queue systems are split into a specialization system and queue system,

the former of which only runs when necessary to compute a new pipeline

id. Pipelines are invalidated using a combination of change detection

and ECS ticks.

### The difficulty with change detection

Detecting “what changed” can be tricky because pipeline specialization

depends not only on the entity’s components (e.g., mesh, material, etc.)

but also on which view (camera) it is rendering in. In other words, the

cache key for a given pipeline id is a view entity/render entity pair.

As such, it's not sufficient simply to react to change detection in

order to specialize -- an entity could currently be out of view or could

be rendered in the future in camera that is currently disabled or hasn't

spawned yet.

### Why ticks?

Ticks allow us to ensure correctness by allowing us to compare the last

time a view or entity was updated compared to the cached pipeline id.

This ensures that even if an entity was out of view or has never been

seen in a given camera before we can still correctly determine whether

it needs to be re-specialized or not.

## Testing

TODO: Tested a bunch of different examples, need to test more.

## Migration Guide

TODO

- `AssetEvents` has been moved into the `PostUpdate` schedule.

---------

Co-authored-by: Patrick Walton <pcwalton@mimiga.net>

# Objective

Fix text 2d. Fixes https://github.com/bevyengine/bevy/issues/17670

## Solution

Evidently there's a 1:N extraction going on here that requires using the

render entity rather than main entity.

## Testing

Text 2d example

# Objective

Currently, `prepare_sprite_image_bind_group` spawns sprite batches onto

an individual representative entity of the batch. This poses significant

problems for multi-camera setups, since an entity may appear in multiple

phase instances.

## Solution

Instead, move batches into a resource that is keyed off the view and the

representative entity. Long term we should switch to mesh2d and use the

existing BinnedRenderPhase functionality rather than naively queueing

into transparent and doing our own ad-hoc batching logic.

Fixes#16867, #17351

## Testing

Tested repros in above issues.

# Objective

Fix this comment in `queue_sprites`:

```

// batch_range and dynamic_offset will be calculated in prepare_sprites.

```

`Transparent2d` no longer has a `dynamic_offset` field and the

`batch_range` is calculated in `prepare_sprite_image_bind_groups` now.

*Occlusion culling* allows the GPU to skip the vertex and fragment

shading overhead for objects that can be quickly proved to be invisible

because they're behind other geometry. A depth prepass already

eliminates most fragment shading overhead for occluded objects, but the

vertex shading overhead, as well as the cost of testing and rejecting

fragments against the Z-buffer, is presently unavoidable for standard

meshes. We currently perform occlusion culling only for meshlets. But

other meshes, such as skinned meshes, can benefit from occlusion culling

too in order to avoid the transform and skinning overhead for unseen

meshes.

This commit adapts the same [*two-phase occlusion culling*] technique

that meshlets use to Bevy's standard 3D mesh pipeline when the new

`OcclusionCulling` component, as well as the `DepthPrepass` component,

are present on the camera. It has these steps:

1. *Early depth prepass*: We use the hierarchical Z-buffer from the

previous frame to cull meshes for the initial depth prepass, effectively

rendering only the meshes that were visible in the last frame.

2. *Early depth downsample*: We downsample the depth buffer to create

another hierarchical Z-buffer, this time with the current view

transform.

3. *Late depth prepass*: We use the new hierarchical Z-buffer to test

all meshes that weren't rendered in the early depth prepass. Any meshes

that pass this check are rendered.

4. *Late depth downsample*: Again, we downsample the depth buffer to

create a hierarchical Z-buffer in preparation for the early depth

prepass of the next frame. This step is done after all the rendering, in

order to account for custom phase items that might write to the depth

buffer.

Note that this patch has no effect on the per-mesh CPU overhead for

occluded objects, which remains high for a GPU-driven renderer due to

the lack of `cold-specialization` and retained bins. If

`cold-specialization` and retained bins weren't on the horizon, then a

more traditional approach like potentially visible sets (PVS) or low-res

CPU rendering would probably be more efficient than the GPU-driven

approach that this patch implements for most scenes. However, at this

point the amount of effort required to implement a PVS baking tool or a

low-res CPU renderer would probably be greater than landing

`cold-specialization` and retained bins, and the GPU driven approach is

the more modern one anyway. It does mean that the performance

improvements from occlusion culling as implemented in this patch *today*

are likely to be limited, because of the high CPU overhead for occluded

meshes.

Note also that this patch currently doesn't implement occlusion culling

for 2D objects or shadow maps. Those can be addressed in a follow-up.

Additionally, note that the techniques in this patch require compute

shaders, which excludes support for WebGL 2.

This PR is marked experimental because of known precision issues with

the downsampling approach when applied to non-power-of-two framebuffer

sizes (i.e. most of them). These precision issues can, in rare cases,

cause objects to be judged occluded that in fact are not. (I've never

seen this in practice, but I know it's possible; it tends to be likelier

to happen with small meshes.) As a follow-up to this patch, we desire to

switch to the [SPD-based hi-Z buffer shader from the Granite engine],

which doesn't suffer from these problems, at which point we should be

able to graduate this feature from experimental status. I opted not to

include that rewrite in this patch for two reasons: (1) @JMS55 is

planning on doing the rewrite to coincide with the new availability of

image atomic operations in Naga; (2) to reduce the scope of this patch.

A new example, `occlusion_culling`, has been added. It demonstrates

objects becoming quickly occluded and disoccluded by dynamic geometry

and shows the number of objects that are actually being rendered. Also,

a new `--occlusion-culling` switch has been added to `scene_viewer`, in

order to make it easy to test this patch with large scenes like Bistro.

[*two-phase occlusion culling*]:

https://medium.com/@mil_kru/two-pass-occlusion-culling-4100edcad501

[Aaltonen SIGGRAPH 2015]:

https://www.advances.realtimerendering.com/s2015/aaltonenhaar_siggraph2015_combined_final_footer_220dpi.pdf

[Some literature]:

https://gist.github.com/reduz/c5769d0e705d8ab7ac187d63be0099b5?permalink_comment_id=5040452#gistcomment-5040452

[SPD-based hi-Z buffer shader from the Granite engine]:

https://github.com/Themaister/Granite/blob/master/assets/shaders/post/hiz.comp

## Migration guide

* When enqueuing a custom mesh pipeline, work item buffers are now

created with

`bevy::render::batching::gpu_preprocessing::get_or_create_work_item_buffer`,

not `PreprocessWorkItemBuffers::new`. See the

`specialized_mesh_pipeline` example.

## Showcase

Occlusion culling example:

Bistro zoomed out, before occlusion culling:

Bistro zoomed out, after occlusion culling:

In this scene, occlusion culling reduces the number of meshes Bevy has

to render from 1591 to 585.

# Objective

Bevy sprite image mode lacks proportional scaling for the underlying

texture. In many cases, it's required. For example, if it is desired to

support a wide variety of screens with a single texture, it's okay to

cut off some portion of the original texture.

## Solution

I added scaling of the texture during the preparation step. To fill the

sprite with the original texture, I scaled UV coordinates accordingly to

the sprite size aspect ratio and texture size aspect ratio. To fit

texture in a sprite the original `quad` is scaled and then the

additional translation is applied to place the scaled quad properly.

## Testing

For testing purposes could be used `2d/sprite_scale.rs`. Also, I am

thinking that it would be nice to have some tests for a

`crates/bevy_sprite/src/render/mod.rs:sprite_scale`.

---

## Showcase

<img width="1392" alt="image"

src="https://github.com/user-attachments/assets/c2c37b96-2493-4717-825f-7810d921b4bc"

/>

# Objective

- Contributes to #16877

## Solution

- Moved `hashbrown`, `foldhash`, and related types out of `bevy_utils`

and into `bevy_platform_support`

- Refactored the above to match the layout of these types in `std`.

- Updated crates as required.

## Testing

- CI

---

## Migration Guide

- The following items were moved out of `bevy_utils` and into

`bevy_platform_support::hash`:

- `FixedState`

- `DefaultHasher`

- `RandomState`

- `FixedHasher`

- `Hashed`

- `PassHash`

- `PassHasher`

- `NoOpHash`

- The following items were moved out of `bevy_utils` and into

`bevy_platform_support::collections`:

- `HashMap`

- `HashSet`

- `bevy_utils::hashbrown` has been removed. Instead, import from

`bevy_platform_support::collections` _or_ take a dependency on

`hashbrown` directly.

- `bevy_utils::Entry` has been removed. Instead, import from

`bevy_platform_support::collections::hash_map` or

`bevy_platform_support::collections::hash_set` as appropriate.

- All of the above equally apply to `bevy::utils` and

`bevy::platform_support`.

## Notes

- I left `PreHashMap`, `PreHashMapExt`, and `TypeIdMap` in `bevy_utils`

as they might be candidates for micro-crating. They can always be moved

into `bevy_platform_support` at a later date if desired.

This commit makes Bevy use change detection to only update

`RenderMaterialInstances` and `RenderMeshMaterialIds` when meshes have

been added, changed, or removed. `extract_mesh_materials`, the system

that extracts these, now follows the pattern that

`extract_meshes_for_gpu_building` established.

This improves frame time of `many_cubes` from 3.9ms to approximately

3.1ms, which slightly surpasses the performance of Bevy 0.14.

(Resubmitted from #16878 to clean up history.)

---------

Co-authored-by: Charlotte McElwain <charlotte.c.mcelwain@gmail.com>

Co-authored-by: Alice Cecile <alice.i.cecile@gmail.com>

# Objective

Fixes https://github.com/bevyengine/bevy/issues/17111

## Solution

Move `#![warn(clippy::allow_attributes,

clippy::allow_attributes_without_reason)]` to the workspace `Cargo.toml`

## Testing

Lots of CI testing, and local testing too.

---------

Co-authored-by: Benjamin Brienen <benjamin.brienen@outlook.com>

This commit allows Bevy to use `multi_draw_indirect_count` for drawing

meshes. The `multi_draw_indirect_count` feature works just like

`multi_draw_indirect`, but it takes the number of indirect parameters

from a GPU buffer rather than specifying it on the CPU.

Currently, the CPU constructs the list of indirect draw parameters with

the instance count for each batch set to zero, uploads the resulting

buffer to the GPU, and dispatches a compute shader that bumps the

instance count for each mesh that survives culling. Unfortunately, this

is inefficient when we support `multi_draw_indirect_count`. Draw

commands corresponding to meshes for which all instances were culled

will remain present in the list when calling

`multi_draw_indirect_count`, causing overhead. Proper use of

`multi_draw_indirect_count` requires eliminating these empty draw

commands.

To address this inefficiency, this PR makes Bevy fully construct the

indirect draw commands on the GPU instead of on the CPU. Instead of

writing instance counts to the draw command buffer, the mesh

preprocessing shader now writes them to a separate *indirect metadata

buffer*. A second compute dispatch known as the *build indirect

parameters* shader runs after mesh preprocessing and converts the

indirect draw metadata into actual indirect draw commands for the GPU.

The build indirect parameters shader operates on a batch at a time,

rather than an instance at a time, and as such each thread writes only 0

or 1 indirect draw parameters, simplifying the current logic in

`mesh_preprocessing`, which currently has to have special cases for the

first mesh in each batch. The build indirect parameters shader emits

draw commands in a tightly packed manner, enabling maximally efficient

use of `multi_draw_indirect_count`.

Along the way, this patch switches mesh preprocessing to dispatch one

compute invocation per render phase per view, instead of dispatching one

compute invocation per view. This is preparation for two-phase occlusion

culling, in which we will have two mesh preprocessing stages. In that

scenario, the first mesh preprocessing stage must only process opaque

and alpha tested objects, so the work items must be separated into those

that are opaque or alpha tested and those that aren't. Thus this PR

splits out the work items into a separate buffer for each phase. As this

patch rewrites so much of the mesh preprocessing infrastructure, it was

simpler to just fold the change into this patch instead of deferring it

to the forthcoming occlusion culling PR.

Finally, this patch changes mesh preprocessing so that it runs

separately for indexed and non-indexed meshes. This is because draw

commands for indexed and non-indexed meshes have different sizes and

layouts. *The existing code is actually broken for non-indexed meshes*,

as it attempts to overlay the indirect parameters for non-indexed meshes

on top of those for indexed meshes. Consequently, right now the

parameters will be read incorrectly when multiple non-indexed meshes are

multi-drawn together. *This is a bug fix* and, as with the change to

dispatch phases separately noted above, was easiest to include in this

patch as opposed to separately.

## Migration Guide

* Systems that add custom phase items now need to populate the indirect

drawing-related buffers. See the `specialized_mesh_pipeline` example for

an example of how this is done.

We won't be able to retain render phases from frame to frame if the keys

are unstable. It's not as simple as simply keying off the main world

entity, however, because some main world entities extract to multiple

render world entities. For example, directional lights extract to

multiple shadow cascades, and point lights extract to one view per

cubemap face. Therefore, we key off a new type, `RetainedViewEntity`,

which contains the main entity plus a *subview ID*.

This is part of the preparation for retained bins.

---------

Co-authored-by: ickshonpe <david.curthoys@googlemail.com>

# Objective

PR #17225 allowed for sprite picking to be opt-in. After some

discussion, it was agreed that `PickingBehavior` should be used to

opt-in to sprite picking behavior for entities. This leads to

`PickingBehavior` having two purposes: mark an entity for use in a

backend, and describe how it should be picked. Discussion led to the

name `Pickable`making more sense (also: this is what the component was

named before upstreaming).

A follow-up pass will be made after this PR to unify backends.

## Solution

Replace all instances of `PickingBehavior` and `picking_behavior` with

`Pickable` and `pickable`, respectively.

## Testing

CI

## Migration Guide

Change all instances of `PickingBehavior` to `Pickable`.

# Objective

I realized that setting these to `deny` may have been a little

aggressive - especially since we upgrade warnings to denies in CI.

## Solution

Downgrades these lints to `warn`, so that compiles can work locally. CI

will still treat these as denies.

# Objective

Stumbled upon a `from <-> form` transposition while reviewing a PR,

thought it was interesting, and went down a bit of a rabbit hole.

## Solution

Fix em

# Objective

Fixes#16903.

## Solution

- Make sprite picking opt-in by requiring a new `SpritePickingCamera`

component for cameras and usage of a new `Pickable` component for

entities.

- Update the `sprite_picking` example to reflect these changes.

- Some reflection cleanup (I hope that's ok).

## Testing

Ran the `sprite_picking` example

## Open Questions

<del>

<ul>

<li>Is the name `SpritePickable` appropriate?</li>

<li>Should `SpritePickable` be in `bevy_sprite::prelude?</li>

</ul>

</del>

## Migration Guide

The sprite picking backend is now strictly opt-in using the

`SpritePickingCamera` and `Pickable` components. You should add the

`Pickable` component any entities that you want sprite picking to be

enabled for, and mark their respective cameras with

`SpritePickingCamera`.

# Objective

Many instances of `clippy::too_many_arguments` linting happen to be on

systems - functions which we don't call manually, and thus there's not

much reason to worry about the argument count.

## Solution

Allow `clippy::too_many_arguments` globally, and remove all lint

attributes related to it.

# Objective

I never realized `clippy::type_complexity` was an allowed lint - I've

been assuming it'd generate a warning when performing my linting PRs.

## Solution

Removes any instances of `#[allow(clippy::type_complexity)]` and

`#[expect(clippy::type_complexity)]`

## Testing

`cargo clippy` ran without errors or warnings.

# Objective

- Allow other crates to use `TextureAtlas` and friends without needing

to depend on `bevy_sprite`.

- Specifically, this allows adding `TextureAtlas` support to custom

cursors in https://github.com/bevyengine/bevy/pull/17121 by allowing

`bevy_winit` to depend on `bevy_image` instead of `bevy_sprite` which is

a [non-starter].

[non-starter]:

https://github.com/bevyengine/bevy/pull/17121#discussion_r1904955083

## Solution

- Move `TextureAtlas`, `TextureAtlasBuilder`, `TextureAtlasSources`,

`TextureAtlasLayout` and `DynamicTextureAtlasBuilder` into `bevy_image`.

- Add a new plugin to `bevy_image` named `TextureAtlasPlugin` which

allows us to register `TextureAtlas` and `TextureAtlasLayout` which was

previously done in `SpritePlugin`. Since `SpritePlugin` did the

registration previously, we just need to make it add

`TextureAtlasPlugin`.

## Testing

- CI builds it.

- I also ran multiple examples which hopefully covered any issues:

```

$ cargo run --example sprite

$ cargo run --example text

$ cargo run --example ui_texture_atlas

$ cargo run --example sprite_animation

$ cargo run --example sprite_sheet

$ cargo run --example sprite_picking

```

---

## Migration Guide

The following types have been moved from `bevy_sprite` to `bevy_image`:

`TextureAtlas`, `TextureAtlasBuilder`, `TextureAtlasSources`,

`TextureAtlasLayout` and `DynamicTextureAtlasBuilder`.

If you are using the `bevy` crate, and were importing these types

directly (e.g. before `use bevy::sprite::TextureAtlas`), be sure to

update your import paths (e.g. after `use bevy::image::TextureAtlas`)

If you are using the `bevy` prelude to import these types (e.g. `use

bevy::prelude::*`), you don't need to change anything.

If you are using the `bevy_sprite` subcrate, be sure to add `bevy_image`

as a dependency if you do not already have it, and be sure to update

your import paths.

I broke the commit history on the other one,

https://github.com/bevyengine/bevy/pull/17160. Woops.

# Objective

- https://github.com/bevyengine/bevy/issues/17111

## Solution

Set the `clippy::allow_attributes` and

`clippy::allow_attributes_without_reason` lints to `deny`, and bring

`bevy_sprite` in line with the new restrictions.

## Testing

`cargo clippy` and `cargo test --package bevy_sprite` were run, and no

errors were encountered.

Currently, our batchable binned items are stored in a hash table that

maps bin key, which includes the batch set key, to a list of entities.

Multidraw is handled by sorting the bin keys and accumulating adjacent

bins that can be multidrawn together (i.e. have the same batch set key)

into multidraw commands during `batch_and_prepare_binned_render_phase`.

This is reasonably efficient right now, but it will complicate future

work to retain indirect draw parameters from frame to frame. Consider

what must happen when we have retained indirect draw parameters and the

application adds a bin (i.e. a new mesh) that shares a batch set key

with some pre-existing meshes. (That is, the new mesh can be multidrawn

with the pre-existing meshes.) To be maximally efficient, our goal in

that scenario will be to update *only* the indirect draw parameters for

the batch set (i.e. multidraw command) containing the mesh that was

added, while leaving the others alone. That means that we have to

quickly locate all the bins that belong to the batch set being modified.

In the existing code, we would have to sort the list of bin keys so that

bins that can be multidrawn together become adjacent to one another in

the list. Then we would have to do a binary search through the sorted

list to find the location of the bin that was just added. Next, we would

have to widen our search to adjacent indexes that contain the same batch

set, doing expensive comparisons against the batch set key every time.

Finally, we would reallocate the indirect draw parameters and update the

stored pointers to the indirect draw parameters that the bins store.

By contrast, it'd be dramatically simpler if we simply changed the way

bins are stored to first map from batch set key (i.e. multidraw command)

to the bins (i.e. meshes) within that batch set key, and then from each

individual bin to the mesh instances. That way, the scenario above in

which we add a new mesh will be simpler to handle. First, we will look

up the batch set key corresponding to that mesh in the outer map to find

an inner map corresponding to the single multidraw command that will

draw that batch set. We will know how many meshes the multidraw command

is going to draw by the size of that inner map. Then we simply need to

reallocate the indirect draw parameters and update the pointers to those

parameters within the bins as necessary. There will be no need to do any

binary search or expensive batch set key comparison: only a single hash

lookup and an iteration over the inner map to update the pointers.

This patch implements the above technique. Because we don't have

retained bins yet, this PR provides no performance benefits. However, it

opens the door to maximally efficient updates when only a small number

of meshes change from frame to frame.

The main churn that this patch causes is that the *batch set key* (which

uniquely specifies a multidraw command) and *bin key* (which uniquely

specifies a mesh *within* that multidraw command) are now separate,

instead of the batch set key being embedded *within* the bin key.

In order to isolate potential regressions, I think that at least #16890,

#16836, and #16825 should land before this PR does.

## Migration Guide

* The *batch set key* is now separate from the *bin key* in

`BinnedPhaseItem`. The batch set key is used to collect multidrawable

meshes together. If you aren't using the multidraw feature, you can

safely set the batch set key to `()`.

Bump version after release

This PR has been auto-generated

---------

Co-authored-by: Bevy Auto Releaser <41898282+github-actions[bot]@users.noreply.github.com>

Co-authored-by: François Mockers <mockersf@gmail.com>

# Objective

- Contributes to #11478

## Solution

- Made `bevy_utils::tracing` `doc(hidden)`

- Re-exported `tracing` from `bevy_log` for end-users

- Added `tracing` directly to crates that need it.

## Testing

- CI

---

## Migration Guide

If you were importing `tracing` via `bevy::utils::tracing`, instead use

`bevy::log::tracing`. Note that many items within `tracing` are also

directly re-exported from `bevy::log` as well, so you may only need

`bevy::log` for the most common items (e.g., `warn!`, `trace!`, etc.).

This also applies to the `log_once!` family of macros.

## Notes

- While this doesn't reduce the line-count in `bevy_utils`, it further

decouples the internal crates from `bevy_utils`, making its eventual

removal more feasible in the future.

- I have just imported `tracing` as we do for all dependencies. However,

a workspace dependency may be more appropriate for version management.

# Objective

Optimization for sprite picking

## Solution

Use `radsort` for the sort.

We already have `radsort` in tree for sorting various phase items

(including `Transparent2d` / sprites). It's a stable parallel radix

sort.

## Testing

Tested on an M1 Max.

`cargo run --example sprite_picking`

`cargo run --example bevymark --release --features=trace,trace_tracy --

--waves 100 --per-wave 1000 --benchmark`

<img width="983" alt="image"

src="https://github.com/user-attachments/assets/0f7a8c3a-006b-4323-a2ed-03788918dffa"

/>

Derived `Default` for all public unit structs that already derive from

`Component`. This allows them to be used more easily as required

components.

To avoid clutter in tests/examples, only public components were

affected, but this could easily be expanded to affect all unit

components.

Fixes#17052.

# Objective

Fixes#17098

It seems that it's not totally obvious how to fix this, but that

reverting might be part of the solution anyway.

Let's get the repo back into a working state.

## Solution

Revert the [recent

optimization](https://github.com/bevyengine/bevy/pull/17078) that broke

"many-to-one main->render world entities" for 2d.

## Testing

`cargo run --example text2d`

`cargo run --example sprite_slice`

# Objective

Use the latest version of `typos` and fix the typos that it now detects

# Additional Info

By the way, `typos` has a "low priority typo suggestions issue" where we

can throw typos we find that `typos` doesn't catch.

(This link may go stale) https://github.com/crate-ci/typos/issues/1200

# Objective

- Fixes https://github.com/bevyengine/bevy/issues/16556

- Closes https://github.com/bevyengine/bevy/issues/11807

## Solution

- Simplify custom projections by using a single source of truth -

`Projection`, removing all existing generic systems and types.

- Existing perspective and orthographic structs are no longer components

- I could dissolve these to simplify further, but keeping them around

was the fast way to implement this.

- Instead of generics, introduce a third variant, with a trait object.

- Do an object safety dance with an intermediate trait to allow cloning

boxed camera projections. This is a normal rust polymorphism papercut.

You can do this with a crate but a manual impl is short and sweet.

## Testing

- Added a custom projection example

---

## Showcase

- Custom projections and projection handling has been simplified.

- Projection systems are no longer generic, with the potential for many

different projection components on the same camera.

- Instead `Projection` is now the single source of truth for camera

projections, and is the only projection component.

- Custom projections are still supported, and can be constructed with

`Projection::custom()`.

## Migration Guide

- `PerspectiveProjection` and `OrthographicProjection` are no longer

components. Use `Projection` instead.

- Custom projections should no longer be inserted as a component.

Instead, simply set the custom projection as a value of `Projection`

with `Projection::custom()`.

# Objective

- Fix sprite rendering performance regression since retained render

world changes

- The retained render world changes moved `ExtractedSprites` from using

the highly-optimised `EntityHasher` with an `Entity` to using

`FixedHasher` with `(Entity, MainEntity)`. This was enough to regress

framerate in bevymark by 25%.

## Solution

- Move the render world entity into a member of `ExtractedSprite` and

change `ExtractedSprites` to use `MainEntityHashMap` for its storage

- Disable sprite picking in bevymark

## Testing

M4 Max. `bevymark --waves 100 --per-wave 1000 --benchmark`. main in

yellow vs PR in red:

<img width="590" alt="Screenshot 2025-01-01 at 16 36 22"

src="https://github.com/user-attachments/assets/1e4ed6ec-3811-4abf-8b30-336153737f89"

/>

20.2% median frame time reduction.

<img width="594" alt="Screenshot 2025-01-01 at 16 38 37"

src="https://github.com/user-attachments/assets/157c2022-cda6-4cf2-bc63-d0bc40528cf0"

/>

49.7% median extract_sprites execution time reduction.

Comparing 0.14.2 yellow vs PR red:

<img width="593" alt="Screenshot 2025-01-01 at 16 40 06"

src="https://github.com/user-attachments/assets/abd59b6f-290a-4eb6-8835-ed110af995f3"

/>

~6.1% median frame time reduction.

---

## Migration Guide

- `ExtractedSprites` is now using `MainEntityHashMap` for storage, which

is keyed on `MainEntity`.

- The render world entity corresponding to an `ExtractedSprite` is now

stored in the `render_entity` member of it.

# Objective

In `prepare_sprite_image_bind_groups` the `batch_image_changed`

condition is checked twice but the second if-block seems unnecessary.

# Solution

Queue new `SpriteBatch`es inside the first if-block and remove the

second if-block.

This commit makes the following changes:

* `IndirectParametersBuffer` has been changed from a `BufferVec` to a

`RawBufferVec`. This won about 20us or so on Bistro by avoiding `encase`

overhead.

* The methods on the `GetFullBatchData` trait no longer have the

`entity` parameter, as it was unused.

* `PreprocessWorkItem`, which specifies a transform-and-cull operation,

now supplies the mesh instance uniform output index directly instead of

having the shader look it up from the indirect draw parameters.

Accordingly, the responsibility of writing the output index to the

indirect draw parameters has been moved from the CPU to the GPU. This is

in preparation for retained indirect instance draw commands, where the

mesh instance uniform output index may change from frame to frame, while

the indirect instance draw commands will be cached. We won't want the

CPU to have to upload the same indirect draw parameters again and again

if a batch didn't change from frame to frame.

* `batch_and_prepare_binned_render_phase` and

`batch_and_prepare_sorted_render_phase` now allocate indirect draw

commands for an entire batch set at a time when possible, instead of one

batch at a time. This change will allow us to retain the indirect draw

commands for whole batch sets.

* `GetFullBatchData::get_batch_indirect_parameters_index` has been

replaced with `GetFullBatchData::write_batch_indirect_parameters`, which

takes an offset and writes into it instead of allocating. This is

necessary in order to use the optimization mentioned in the previous

point.

* At the WGSL level, `IndirectParameters` has been factored out into

`mesh_preprocess_types.wgsl`. This is because we'll need a new compute

shader that zeroes out the instance counts in preparation for a new

frame. That shader will need to access `IndirectParameters`, so it was

moved to a separate file.

* Bins are no longer raw vectors but are instances of a separate type,

`RenderBin`. This is so that the bin can eventually contain its retained

batches.