2021-12-14 03:58:23 +00:00

use crate::{
    render_graph::{
        Edge, Node, NodeId, NodeLabel, NodeRunError, NodeState, RenderGraphContext,
        RenderGraphError, SlotInfo, SlotLabel,
    },
    renderer::RenderContext,

Bevy ECS V2 (#1525)

# Bevy ECS V2

This is a rewrite of Bevy ECS (basically everything but the new executor/schedule, which are already awesome). The overall goal was to improve the performance and versatility of Bevy ECS. Here is a quick bulleted list of changes before we dive into the details:

* Complete World rewrite
* Multiple component storage types:
    * Tables: fast cache friendly iteration, slower add/removes (previously called Archetypes)
    * Sparse Sets: fast add/remove, slower iteration
* Stateful Queries (caches query results for faster iteration. fragmented iteration is _fast_ now)
* Stateful System Params (caches expensive operations. inspired by @DJMcNab's work in #1364)
* Configurable System Params (users can set configuration when they construct their systems. once again inspired by @DJMcNab's work)
* Archetypes are now "just metadata", component storage is separate
* Archetype Graph (for faster archetype changes)
* Component Metadata
    * Configure component storage type
    * Retrieve information about component size/type/name/layout/send-ness/etc
    * Components are uniquely identified by a densely packed ComponentId
    * TypeIds are now totally optional (which should make implementing scripting easier)
* Super fast "for_each" query iterators
* Merged Resources into World. Resources are now just a special type of component
* EntityRef/EntityMut builder apis (more efficient and more ergonomic)
* Fast bitset-backed `Access<T>` replaces old hashmap-based approach everywhere
* Query conflicts are determined by component access instead of archetype component access (to avoid random failures at runtime)
    * With/Without are still taken into account for conflicts, so this should still be comfy to use
* Much simpler `IntoSystem` impl
* Significantly reduced the amount of hashing throughout the ecs in favor of Sparse Sets (indexed by densely packed ArchetypeId, ComponentId, BundleId, and TableId)
* Safety Improvements
    * Entity reservation uses a normal world reference instead of unsafe transmute
    * QuerySets no longer transmute lifetimes
    * Made traits "unsafe" where relevant
    * More thorough safety docs
* WorldCell
    * Exposes safe mutable access to multiple resources at a time in a World
* Replaced "catch all" `System::update_archetypes(world: &World)` with `System::new_archetype(archetype: &Archetype)`
* Simpler Bundle implementation
    * Replaced slow "remove_bundle_one_by_one" used as fallback for Commands::remove_bundle with fast "remove_bundle_intersection"
* Removed `Mut<T>` query impl. it is better to only support one way: `&mut T`
* Removed with() from `Flags<T>` in favor of `Option<Flags<T>>`, which allows querying for flags to be "filtered" by default
* Components now have is_send property (currently only resources support non-send)
* More granular module organization
* New `RemovedComponents<T>` SystemParam that replaces `query.removed::<T>()`
* `world.resource_scope()` for mutable access to resources and world at the same time
* WorldQuery and QueryFilter traits unified. FilterFetch trait added to enable "short circuit" filtering. Auto impled for cases that don't need it
* Significantly slimmed down SystemState in favor of individual SystemParam state
* System Commands changed from `commands: &mut Commands` back to `mut commands: Commands` (to allow Commands to have a World reference)

Fixes #1320

## `World` Rewrite

This is a from-scratch rewrite of `World` that fills the niche that `hecs` used to. Yes, this means Bevy ECS is no longer a "fork" of hecs. We're going out on our own!

(the only shared code between the projects is the entity id allocator, which is already basically ideal)

A huge shout out to @SanderMertens (author of [flecs](https://github.com/SanderMertens/flecs)) for sharing some great ideas with me (specifically hybrid ecs storage and archetype graphs). He also helped advise on a number of implementation details.

## Component Storage (The Problem)

Two ECS storage paradigms have gained a lot of traction over the years:

* **Archetypal ECS**:
    * Stores components in "tables" with static schemas. Each "column" stores components of a given type. Each "row" is an entity.
    * Each "archetype" has its own table. Adding/removing an entity's component changes the archetype.
    * Enables super-fast Query iteration due to its cache-friendly data layout
    * Comes at the cost of more expensive add/remove operations for an Entity's components, because all components need to be copied to the new archetype's "table"
* **Sparse Set ECS**:
    * Stores components of the same type in densely packed arrays, which are sparsely indexed by densely packed unsigned integers (Entity ids)
    * Query iteration is slower than Archetypal ECS because each entity's component could be at any position in the sparse set. This "random access" pattern isn't cache friendly. Additionally, there is an extra layer of indirection because you must first map the entity id to an index in the component array.
    * Adding/removing components is a cheap, constant time operation
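To make the sparse set layout concrete, here is a minimal std-only sketch. It is not Bevy's (or any other library's) implementation: the `SparseSet` type, its fields, and the use of a plain `usize` as the entity id are assumptions made for illustration. It shows the extra indirection on lookup (entity id, then dense index) and the swap-remove trick that keeps removal constant time.

```rust
/// A minimal sparse set keyed by entity index (hypothetical, for illustration only).
pub struct SparseSet<T> {
    /// Maps an entity index to a position in `dense`, if the entity has the component.
    sparse: Vec<Option<usize>>,
    /// Densely packed component values.
    dense: Vec<T>,
    /// Entity index that owns each slot of `dense` (parallel to `dense`).
    entities: Vec<usize>,
}

impl<T> SparseSet<T> {
    pub fn new() -> Self {
        Self { sparse: Vec::new(), dense: Vec::new(), entities: Vec::new() }
    }

    /// Inserts or overwrites the component for `entity`.
    pub fn insert(&mut self, entity: usize, value: T) {
        if entity >= self.sparse.len() {
            self.sparse.resize(entity + 1, None);
        }
        match self.sparse[entity] {
            Some(index) => self.dense[index] = value,
            None => {
                self.sparse[entity] = Some(self.dense.len());
                self.dense.push(value);
                self.entities.push(entity);
            }
        }
    }

    /// Two lookups: entity id -> dense index, then dense index -> value.
    pub fn get(&self, entity: usize) -> Option<&T> {
        let index = (*self.sparse.get(entity)?)?;
        self.dense.get(index)
    }

    /// Removes in constant time by swapping the last dense element into the hole.
    pub fn remove(&mut self, entity: usize) -> Option<T> {
        let index = self.sparse.get_mut(entity)?.take()?;
        let value = self.dense.swap_remove(index);
        self.entities.swap_remove(index);
        // If an element was moved into `index`, repoint its sparse entry.
        if let Some(&moved) = self.entities.get(index) {
            self.sparse[moved] = Some(index);
        }
        Some(value)
    }
}
```

Note that removal reorders the dense array, which is part of why iteration order carries no meaning in this kind of storage.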

Bevy ECS V1, hecs, legion, flecs, and Unity DOTS are all "archetypal ecs-es". I personally think "archetypal" storage is a good default for game engines. An entity's archetype doesn't need to change frequently in general, and it creates "fast by default" query iteration (which is a much more common operation). It is also "self optimizing". Users don't need to think about optimizing component layouts for iteration performance. It "just works" without any extra boilerplate.

Shipyard and EnTT are "sparse set ecs-es". They employ "packing" as a way to work around the "suboptimal by default" iteration performance for specific sets of components. This helps, but I didn't think this was a good choice for a general purpose engine like Bevy because:

1. "packs" conflict with each other. If bevy decides to internally pack the Transform and GlobalTransform components, users are then blocked if they want to pack some custom component with Transform.

2. users need to take manual action to optimize

Developers selecting an ECS framework are stuck with a hard choice. Select an "archetypal" framework with "fast iteration everywhere" but without the ability to cheaply add/remove components, or select a "sparse set" framework to cheaply add/remove components but with slower iteration performance.

## Hybrid Component Storage (The Solution)

In Bevy ECS V2, we get to have our cake and eat it too. It now has _both_ of the component storage types above (and more can be added later if needed):

* **Tables** (aka "archetypal" storage)
    * The default storage. If you don't configure anything, this is what you get
    * Fast iteration by default
    * Slower add/remove operations
* **Sparse Sets**
    * Opt-in
    * Slower iteration
    * Faster add/remove operations

These storage types complement each other perfectly. By default Query iteration is fast. If developers know that they want to add/remove a component at high frequencies, they can set the storage to "sparse set":

```rust
world.register_component(
    ComponentDescriptor::new::<MyComponent>(StorageType::SparseSet)
).unwrap();
```

## Archetypes

Archetypes are now "just metadata" ... they no longer store components directly. They do store:

* The `ComponentId`s of each of the Archetype's components (and that component's storage type)
    * Archetypes are uniquely defined by their component layouts
    * For example: entities with "table" components `[A, B, C]` _and_ "sparse set" components `[D, E]` will always be in the same archetype.
* The `TableId` associated with the archetype
    * For now each archetype has exactly one table (which can have no components).
    * There is a 1->Many relationship from Tables->Archetypes. A given table could have any number of archetype components stored in it:
        * Ex: an entity with "table storage" components `[A, B, C]` and "sparse set" components `[D, E]` will share the same `[A, B, C]` table as an entity with `[A, B, C]` table components and `[F]` sparse set components.
    * This 1->Many relationship is how we preserve fast "cache friendly" iteration performance when possible (more on this later)
* A list of entities that are in the archetype and the row id of the table they are in
* ArchetypeComponentIds
    * unique densely packed identifiers for (ArchetypeId, ComponentId) pairs
    * used by the schedule executor for cheap system access control
* "Archetype Graph Edges" (see the next section)

## The "Archetype Graph"

Archetype changes in Bevy (and a number of other archetypal ecs-es) have historically been expensive to compute. First, you need to allocate a new vector of the entity's current component ids, add or remove components based on the operation performed, sort it (to ensure it is order-independent), then hash it to find the archetype (if it exists). And that's all before we get to the _already_ expensive full copy of all components to the new table storage.

The solution is to build a "graph" of archetypes to cache these results. @SanderMertens first exposed me to the idea (and he got it from @gjroelofs, who came up with it). They propose adding directed edges between archetypes for add/remove component operations. If `ComponentId`s are densely packed, you can use sparse sets to cheaply jump between archetypes.

Bevy takes this one step further by using add/remove `Bundle` edges instead of `Component` edges. Bevy encourages the use of `Bundles` to group add/remove operations. This is largely for "clearer game logic" reasons, but it also helps cut down on the number of archetype changes required. `Bundles` now also have densely-packed `BundleId`s. This allows us to use a _single_ edge for each bundle operation (rather than needing to traverse N edges ... one for each component). Single component operations are also bundles, so this is strictly an improvement over a "component only" graph.

As a result, an operation that used to be _heavy_ (both for allocations and compute) is now two dirt-cheap array lookups and zero allocations.
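The edge caching idea can be sketched with std-only types. This is a rough illustration under stated assumptions, not the code in this pr: `Archetypes`, `add_bundle`, the `slow_lookups` counter, and the plain `usize` ids are all hypothetical names introduced here. The first "add bundle" from a given archetype takes the slow path (allocate, sort, hash); every later identical operation is two array lookups.

```rust
use std::collections::HashMap;

type ComponentId = usize;
type BundleId = usize;
type ArchetypeId = usize;

/// Archetype metadata: a sorted component list plus cached "add bundle" edges.
struct Archetype {
    components: Vec<ComponentId>,
    /// Indexed by `BundleId`; `Some(target)` once the edge has been computed.
    add_bundle_edges: Vec<Option<ArchetypeId>>,
}

pub struct Archetypes {
    archetypes: Vec<Archetype>,
    /// Slow-path lookup from a sorted component list to its archetype.
    by_components: HashMap<Vec<ComponentId>, ArchetypeId>,
    /// Counts slow-path lookups so the caching effect is observable.
    pub slow_lookups: usize,
}

impl Archetypes {
    /// Creates the collection with the empty archetype at id 0.
    pub fn new() -> Self {
        let mut by_components = HashMap::new();
        by_components.insert(Vec::new(), 0);
        Self {
            archetypes: vec![Archetype { components: Vec::new(), add_bundle_edges: Vec::new() }],
            by_components,
            slow_lookups: 0,
        }
    }

    /// Returns the archetype reached by adding `bundle` (identified by `bundle_id`) to `from`.
    pub fn add_bundle(
        &mut self,
        from: ArchetypeId,
        bundle_id: BundleId,
        bundle: &[ComponentId],
    ) -> ArchetypeId {
        // Fast path: follow the cached edge (array lookups, no allocation).
        if let Some(Some(target)) = self.archetypes[from].add_bundle_edges.get(bundle_id) {
            return *target;
        }
        // Slow path: build, sort, and dedup the new component list, then hash it.
        self.slow_lookups += 1;
        let mut components = self.archetypes[from].components.clone();
        components.extend_from_slice(bundle);
        components.sort_unstable();
        components.dedup();
        let next_id = self.archetypes.len();
        let target = *self.by_components.entry(components.clone()).or_insert(next_id);
        if target == next_id {
            self.archetypes.push(Archetype { components, add_bundle_edges: Vec::new() });
        }
        // Cache the edge so the next identical operation skips the slow path.
        let edges = &mut self.archetypes[from].add_bundle_edges;
        if edges.len() <= bundle_id {
            edges.resize(bundle_id + 1, None);
        }
        edges[bundle_id] = Some(target);
        target
    }
}
```

Remove edges would work the same way with a second edge array; they are left out to keep the sketch short.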

## Stateful Queries

World queries are now stateful. This allows us to:

1. Cache archetype (and table) matches
    * This resolves another issue with (naive) archetypal ECS: query performance getting worse as the number of archetypes goes up (and fragmentation occurs).
2. Cache Fetch and Filter state
    * The expensive parts of fetch/filter operations (such as hashing the TypeId to find the ComponentId) now only happen once when the Query is first constructed
3. Incrementally build up state
    * When new archetypes are added, we only process the new archetypes (no need to rebuild state for old archetypes)

As a result, the direct `World` query api now looks like this:

```rust
let mut query = world.query::<(&A, &mut B)>();
for (a, mut b) in query.iter_mut(&mut world) {
}
```

Requiring `World` to generate stateful queries (rather than letting the `QueryState` type be constructed separately) allows us to ensure that _all_ queries are properly initialized (and the relevant world state, such as ComponentIds). This enables QueryState to remove branches from its operations that check for initialization status (and also enables query.iter() to take an immutable world reference because it doesn't need to initialize anything in world).

However in systems, this is a non-breaking change. State management is done internally by the relevant SystemParam.
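The "cache matches and only process new archetypes" idea can be sketched in a few lines of std-only Rust. This is not Bevy's `QueryState`: the `Archetype` and `QueryState` types and the `update_archetypes` method below are simplified stand-ins invented for illustration, and component ids are plain `usize` values.

```rust
type ComponentId = usize;

/// Archetype metadata reduced to its component list (hypothetical, simplified).
pub struct Archetype {
    pub components: Vec<ComponentId>,
}

/// Cached query state: which archetypes match, and how many have been inspected so far.
pub struct QueryState {
    required: Vec<ComponentId>,
    pub matched_archetypes: Vec<usize>,
    archetypes_seen: usize,
}

impl QueryState {
    pub fn new(required: Vec<ComponentId>) -> Self {
        Self { required, matched_archetypes: Vec::new(), archetypes_seen: 0 }
    }

    /// Inspects only archetypes added since the last call and returns how many were inspected.
    pub fn update_archetypes(&mut self, archetypes: &[Archetype]) -> usize {
        let new = &archetypes[self.archetypes_seen..];
        for (offset, archetype) in new.iter().enumerate() {
            if self.required.iter().all(|id| archetype.components.contains(id)) {
                self.matched_archetypes.push(self.archetypes_seen + offset);
            }
        }
        self.archetypes_seen = archetypes.len();
        new.len()
    }
}
```

Iteration then walks `matched_archetypes` directly, so the number of unmatched archetypes in the world no longer affects per-iteration cost.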

## Stateful SystemParams

Like Queries, `SystemParams` now also cache state. For example, `Query` system params store the "stateful query" state mentioned above. Commands store their internal `CommandQueue`. This means you can now safely use as many separate `Commands` parameters in your system as you want. `Local<T>` system params store their `T` value in their state (instead of in Resources).

SystemParam state also enabled a significant slim-down of SystemState. It is much nicer to look at now.

Per-SystemParam state naturally insulates us from an "aliased mut" class of errors we have hit in the past (ex: using multiple `Commands` system params).

(credit goes to @DJMcNab for the initial idea and draft pr here #1364)

## Configurable SystemParams

@DJMcNab also had the great idea to make SystemParams configurable. This allows users to provide some initial configuration / values for system parameters (when possible). Most SystemParams have no config (the config type is `()`), but the `Local<T>` param now supports user-provided parameters:

```rust
fn foo(value: Local<usize>) {
}

app.add_system(foo.system().config(|c| c.0 = Some(10)));
```

## Uber Fast "for_each" Query Iterators

Developers now have the choice to use a fast "for_each" iterator, which yields ~1.5-3x iteration speed improvements for "fragmented iteration", and minor ~1.2x iteration speed improvements for unfragmented iteration.

```rust
// `query` must be bound mutably to call `for_each_mut` / `iter_mut`
fn system(mut query: Query<(&A, &mut B)>) {
    // you now have the option to do this for a speed boost
    query.for_each_mut(|(a, mut b)| {
    });

    // however normal iterators are still available
    for (a, mut b) in query.iter_mut() {
    }
}
```

I think in most cases we should continue to encourage "normal" iterators as they are more flexible and more "rust idiomatic". But when that extra "oomf" is needed, it makes sense to use `for_each`.

We should also consider using `for_each` for internal bevy systems to give our users a nice speed boost (but that should be a separate pr).

## Component Metadata

`World` now has a `Components` collection, which is accessible via `world.components()`. This stores mappings from `ComponentId` to `ComponentInfo`, as well as `TypeId` to `ComponentId` mappings (where relevant). `ComponentInfo` stores information about the component, such as ComponentId, TypeId, memory layout, send-ness (currently limited to resources), and storage type.

## Significantly Cheaper `Access<T>`

We used to use `TypeAccess<TypeId>` to manage read/write component/archetype-component access. This was expensive because TypeIds must be hashed and compared individually. The parallel executor got around this by "condensing" type ids into bitset-backed access types. This worked, but it had to be re-generated from the `TypeAccess<TypeId>` sources every time archetypes changed.

This pr removes TypeAccess in favor of faster bitset access everywhere. We can do this thanks to the move to densely packed `ComponentId`s and `ArchetypeComponentId`s.
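As a rough picture of why densely packed ids make this cheap, here is a std-only sketch of a bitset-backed access type. It is an assumption-laden illustration, not the `Access<T>` added in this pr (which builds on the fixedbitset crate): the `Access` struct, its `Vec<u64>` storage, and the method names are hypothetical.

```rust
/// Read/write access over densely packed ids, stored as bitsets (one bit per id).
#[derive(Default, Clone)]
pub struct Access {
    reads: Vec<u64>,
    writes: Vec<u64>,
}

/// Sets bit `index`, growing the bitset if needed.
fn set_bit(bits: &mut Vec<u64>, index: usize) {
    let word = index / 64;
    if bits.len() <= word {
        bits.resize(word + 1, 0);
    }
    bits[word] |= 1u64 << (index % 64);
}

/// True if the two bitsets share any set bit.
fn intersects(a: &[u64], b: &[u64]) -> bool {
    a.iter().zip(b).any(|(x, y)| (x & y) != 0)
}

impl Access {
    pub fn add_read(&mut self, id: usize) {
        set_bit(&mut self.reads, id);
    }

    /// A write is also recorded as a read of the same id.
    pub fn add_write(&mut self, id: usize) {
        set_bit(&mut self.reads, id);
        set_bit(&mut self.writes, id);
    }

    /// Two accesses are compatible if neither writes something the other reads or writes.
    pub fn is_compatible(&self, other: &Access) -> bool {
        !intersects(&self.writes, &other.reads) && !intersects(&other.writes, &self.reads)
    }
}
```

Checking two systems against each other is then a handful of word-wise ANDs, with no hashing involved.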

## Merged Resources into World

Resources had a lot of redundant functionality with Components. They stored typed data, they had access control, they had unique ids, they were queryable via SystemParams, etc. In fact the _only_ major difference between them was that they were unique (and didn't correlate to an entity).

Separate resources also had the downside of requiring a separate set of access controls, which meant the parallel executor needed to compare more bitsets per system and manage more state.

I initially got the "separate resources" idea from `legion`. I think that design was motivated by the fact that it made the direct world query/resource lifetime interactions more manageable. It certainly made our lives easier when using Resources alongside hecs/bevy_ecs. However we already have a construct for safely and ergonomically managing in-world lifetimes: systems (which use `Access<T>` internally).

This pr merges Resources into World:

```rust
world.insert_resource(1);
world.insert_resource(2.0);
let a = world.get_resource::<i32>().unwrap();
let mut b = world.get_resource_mut::<f64>().unwrap();
*b = 3.0;
```

Resources are now just a special kind of component. They have their own ComponentIds (and their own resource TypeId->ComponentId scope, so they don't conflict with components of the same type). They are stored in a special "resource archetype", which stores components inside the archetype using a new `unique_components` sparse set (note that this sparse set could later be used to implement Tags). This allows us to keep the code size small by reusing existing datastructures (namely Column, Archetype, ComponentFlags, and ComponentInfo). This allows the executor to use a single `Access<ArchetypeComponentId>` per system. It should also make scripting language integration easier.

_But_ this merge did create problems for people directly interacting with `World`. What if you need mutable access to multiple resources at the same time? `world.get_resource_mut()` borrows World mutably!

## WorldCell

WorldCell applies the `Access<ArchetypeComponentId>` concept to direct world access:

```rust
let world_cell = world.cell();
let a = world_cell.get_resource_mut::<i32>().unwrap();
let b = world_cell.get_resource_mut::<f64>().unwrap();
```

This adds cheap runtime checks (a sparse set lookup of `ArchetypeComponentId` and a counter) to ensure that world accesses do not conflict with each other. Each operation returns a `WorldBorrow<'w, T>` or `WorldBorrowMut<'w, T>` wrapper type, which will release the relevant ArchetypeComponentId resources when dropped.

World caches the access sparse set (and only one cell can exist at a time), so `world.cell()` is a cheap operation.

WorldCell does _not_ use atomic operations. It is non-send, does a mutable borrow of world to prevent other accesses, and uses a simple `Rc<RefCell<ArchetypeComponentAccess>>` wrapper in each WorldBorrow pointer.
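The runtime check described above (a per-id counter plus a guard that releases on drop) can be sketched with std-only types. Everything here is hypothetical and simplified: `ComponentAccess`, `AccessCell`, and `AccessGuard` are names invented for this example, ids are plain `usize` values, no data is actually stored, and a conflict is reported as `None` purely so the behavior is easy to test. That last point is a choice made for the sketch, not a claim about how WorldCell reports conflicts.

```rust
use std::cell::RefCell;
use std::rc::Rc;

/// Marker value meaning "one exclusive writer".
const WRITER: usize = usize::MAX;

/// Per-id borrow counters: 0 = free, n > 0 = n readers, `WRITER` = one writer.
#[derive(Default)]
pub struct ComponentAccess {
    counters: Vec<usize>,
}

impl ComponentAccess {
    /// Returns the counter for `id`, growing the table if needed.
    fn counter(&mut self, id: usize) -> &mut usize {
        if self.counters.len() <= id {
            self.counters.resize(id + 1, 0);
        }
        &mut self.counters[id]
    }
}

/// Guard that releases its claim on `id` when dropped.
pub struct AccessGuard {
    access: Rc<RefCell<ComponentAccess>>,
    id: usize,
}

impl Drop for AccessGuard {
    fn drop(&mut self) {
        let mut access = self.access.borrow_mut();
        let counter = access.counter(self.id);
        *counter = if *counter == WRITER { 0 } else { *counter - 1 };
    }
}

/// Non-atomic, single-threaded access tracker shared by all guards.
pub struct AccessCell {
    access: Rc<RefCell<ComponentAccess>>,
}

impl AccessCell {
    pub fn new() -> Self {
        Self { access: Rc::new(RefCell::new(ComponentAccess::default())) }
    }

    /// Claims shared access, or returns `None` if `id` is currently written.
    pub fn read(&self, id: usize) -> Option<AccessGuard> {
        let mut access = self.access.borrow_mut();
        let counter = access.counter(id);
        if *counter == WRITER {
            return None;
        }
        *counter += 1;
        Some(AccessGuard { access: self.access.clone(), id })
    }

    /// Claims exclusive access, or returns `None` if `id` is borrowed at all.
    pub fn write(&self, id: usize) -> Option<AccessGuard> {
        let mut access = self.access.borrow_mut();
        let counter = access.counter(id);
        if *counter != 0 {
            return None;
        }
        *counter = WRITER;
        Some(AccessGuard { access: self.access.clone(), id })
    }
}
```

Because the tracker lives behind `Rc<RefCell<..>>`, the types are automatically non-send, which matches the "no atomics" design point above.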

The api is currently limited to resource access, but it can and should be extended to queries / entity component access.

## Resource Scopes

WorldCell does not yet support component queries, and even when it does there are sometimes legitimate reasons to want a mutable world ref _and_ a mutable resource ref (ex: bevy_render and bevy_scene both need this). In these cases we could always drop down to the unsafe `world.get_resource_unchecked_mut()`, but that is not ideal!

Instead developers can use a "resource scope"

```rust
world.resource_scope(|world: &mut World, a: &mut A| {
})
```

This temporarily removes the `A` resource from `World`, provides mutable pointers to both, and re-adds A to World when finished. Thanks to the move to ComponentIds/sparse sets, this is a cheap operation.

If multiple resources are required, scopes can be nested. We could also consider adding a "resource tuple" to the api if this pattern becomes common and the boilerplate gets nasty.
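The remove / lend / re-insert pattern can be shown with a toy world. This is a std-only sketch under stated assumptions: the `World` below is a hypothetical stand-in that stores resources in a `HashMap` keyed by `TypeId` (the real storage uses ComponentIds and sparse sets), and panicking on a missing resource is a choice made for this example.

```rust
use std::any::{Any, TypeId};
use std::collections::HashMap;

/// A toy world that only stores resources, keyed by type (hypothetical stand-in).
#[derive(Default)]
pub struct World {
    resources: HashMap<TypeId, Box<dyn Any>>,
}

impl World {
    pub fn insert_resource<T: Any>(&mut self, value: T) {
        self.resources.insert(TypeId::of::<T>(), Box::new(value));
    }

    pub fn get_resource<T: Any>(&self) -> Option<&T> {
        self.resources.get(&TypeId::of::<T>())?.downcast_ref::<T>()
    }

    pub fn get_resource_mut<T: Any>(&mut self) -> Option<&mut T> {
        self.resources.get_mut(&TypeId::of::<T>())?.downcast_mut::<T>()
    }

    /// Temporarily removes `T`, lends out the world and the resource together,
    /// then re-inserts `T`. Panics if `T` is missing (a choice made for this sketch).
    pub fn resource_scope<T: Any, R>(
        &mut self,
        f: impl FnOnce(&mut World, &mut T) -> R,
    ) -> R {
        let boxed = self
            .resources
            .remove(&TypeId::of::<T>())
            .expect("resource does not exist");
        let mut value = *boxed.downcast::<T>().expect("type id matches stored type");
        let result = f(self, &mut value);
        self.insert_resource(value);
        result
    }
}
```

One visible consequence of this approach: inside the closure the scoped resource is absent from the world, so looking it up through `world` returns `None` until the scope ends.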

## Query Conflicts Use ComponentId Instead of ArchetypeComponentId

For safety reasons, systems cannot contain queries that conflict with each other without wrapping them in a QuerySet. On bevy `main`, we use ArchetypeComponentIds to determine conflicts. This is nice because it can take into account filters:

```rust
// these queries will never conflict due to their filters
fn filter_system(a: Query<&mut A, With<B>>, b: Query<&mut A, Without<B>>) {
}
```

But it also has a significant downside:

```rust
// these queries will not conflict _until_ an entity with A, B, and C is spawned
fn maybe_conflicts_system(a: Query<(&mut A, &C)>, b: Query<(&mut A, &B)>) {
}
```

The system above will panic at runtime if an entity with A, B, and C is spawned. This makes it hard to trust that your game logic will run without crashing.

In this pr, I switched to using `ComponentId` instead. This _is_ more constraining. `maybe_conflicts_system` will now always fail, but it will do it consistently at startup. Naively, it would also _disallow_ `filter_system`, which would be a significant downgrade in usability. Bevy has a number of internal systems that rely on disjoint queries and I expect it to be a common pattern in userspace.

To resolve this, I added a new `FilteredAccess<T>` type, which wraps `Access<T>` and adds with/without filters. If two `FilteredAccess` have with/without values that prove they are disjoint, they will no longer conflict.
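The disjointness rule can be sketched as follows. This is a simplified std-only illustration, not the `FilteredAccess<T>` in this pr: the struct layout, the builder-style methods, and the use of `BTreeSet<usize>` instead of bitsets are assumptions, and only explicit `With`/`Without` filters are tracked. Two accesses are treated as compatible if their component access does not conflict, or if one side requires a component the other side excludes.

```rust
use std::collections::BTreeSet;

/// Component access plus `With`/`Without` filters (ids are hypothetical component ids).
#[derive(Default)]
pub struct FilteredAccess {
    reads: BTreeSet<usize>,
    writes: BTreeSet<usize>,
    with: BTreeSet<usize>,
    without: BTreeSet<usize>,
}

impl FilteredAccess {
    pub fn read(mut self, id: usize) -> Self {
        self.reads.insert(id);
        self
    }

    pub fn write(mut self, id: usize) -> Self {
        self.writes.insert(id);
        self
    }

    pub fn with(mut self, id: usize) -> Self {
        self.with.insert(id);
        self
    }

    pub fn without(mut self, id: usize) -> Self {
        self.without.insert(id);
        self
    }

    /// True if some component is written by one side and read or written by the other.
    fn access_conflicts(&self, other: &Self) -> bool {
        let touches = |a: &Self, id: &usize| a.reads.contains(id) || a.writes.contains(id);
        self.writes.iter().any(|id| touches(other, id))
            || other.writes.iter().any(|id| touches(self, id))
    }

    /// True if the filters prove no entity can match both queries.
    fn is_disjoint(&self, other: &Self) -> bool {
        !self.with.is_disjoint(&other.without) || !self.without.is_disjoint(&other.with)
    }

    pub fn is_compatible(&self, other: &Self) -> bool {
        !self.access_conflicts(other) || self.is_disjoint(other)
    }
}
```

With this rule, two queries that both write `A` are accepted when one is filtered `With<B>` and the other `Without<B>`, while two unfiltered queries that both write `A` are rejected regardless of which entities exist.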

## EntityRef / EntityMut

World entity operations on `main` require that the user passes in an `entity` id to each operation:

```rust
let entity = world.spawn((A, )); // create a new entity with A
world.get::<A>(entity);
world.insert(entity, (B, C));
world.insert_one(entity, D);
```

This means that each operation needs to look up the entity location / verify its validity. The initial spawn operation also requires a Bundle as input. This can be awkward when no components are required (or one component is required).

These operations have been replaced by `EntityRef` and `EntityMut`, which are "builder-style" wrappers around world that provide read and read/write operations on a single, pre-validated entity:

```rust
// spawn now takes no inputs and returns an EntityMut
let entity = world.spawn()
    .insert(A) // insert a single component into the entity
    .insert_bundle((B, C)) // insert a bundle of components into the entity
    .id(); // id returns the Entity id

// Returns EntityMut (or panics if the entity does not exist)
world.entity_mut(entity)
    .insert(D)
    .insert_bundle(SomeBundle::default());

{
    // returns EntityRef (or panics if the entity does not exist)
    let d = world.entity(entity)
        .get::<D>() // gets the D component
        .unwrap();
    // world.get still exists for ergonomics
    let d = world.get::<D>(entity).unwrap();
}

// These variants return Options if you want to check existence instead of panicking
world.get_entity_mut(entity)
    .unwrap()
    .insert(E);

if let Some(entity_ref) = world.get_entity(entity) {
    let d = entity_ref.get::<D>().unwrap();
}
```

This _does not_ affect the current Commands api or terminology. I think that should be a separate conversation as that is a much larger breaking change.

## Safety Improvements

* Entity reservation in Commands uses a normal world borrow instead of an unsafe transmute

* QuerySets no longer transmute lifetimes

* Made traits "unsafe" when implementing a trait incorrectly could cause unsafety

* More thorough safety docs

## RemovedComponents SystemParam

The old approach to querying removed components, `query.removed::<T>()`, was confusing because it had no connection to the query itself. I replaced it with the following, which is both clearer and allows us to cache the ComponentId mapping in the SystemParamState:

```rust
fn system(removed: RemovedComponents<T>) {
    for entity in removed.iter() {
    }
}
```

## Simpler Bundle implementation

Bundles are no longer responsible for sorting (or deduping) TypeInfo. They are just a simple ordered list of component types / data. This makes the implementation smaller and opens the door to an easy "nested bundle" implementation in the future (which i might even add in this pr). Duplicate detection is now done once per bundle type by World the first time a bundle is used.

## Unified WorldQuery and QueryFilter types

(don't worry they are still separate type _parameters_ in Queries .. this is a non-breaking change)

WorldQuery and QueryFilter were already basically identical apis. With the addition of `FetchState` and more storage-specific fetch methods, the overlap was even clearer (and the redundancy more painful).

QueryFilters are now just `F: WorldQuery where F::Fetch: FilterFetch`. FilterFetch requires `Fetch<Item = bool>` and adds new "short circuit" variants of fetch methods. This enables a filter tuple like `(With<A>, Without<B>, Changed<C>)` to stop evaluating the filter after the first mismatch is encountered. FilterFetch is automatically implemented for `Fetch` implementations that return bool.

This forces fetch implementations that return things like `(bool, bool, bool)` (such as the filter above) to manually implement FilterFetch and decide whether or not to short-circuit.

## More Granular Modules

World no longer globs all of the internal modules together. It now exports `core`, `system`, and `schedule` separately. I'm also considering exporting `core` submodules directly as that is still pretty "glob-ey" and unorganized (feedback welcome here).

## Remaining Draft Work (to be done in this pr)

* ~~panic on conflicting WorldQuery fetches (&A, &mut A)~~
    * ~~bevy `main` and hecs both currently allow this, but we should protect against it if possible~~
* ~~batch_iter / par_iter (currently stubbed out)~~
* ~~ChangedRes~~
    * ~~I skipped this while we sort out #1313. This pr should be adapted to account for whatever we land on there~~.
* ~~The `Archetypes` and `Tables` collections use hashes of sorted lists of component ids to uniquely identify each archetype/table. This hash is then used as the key in a HashMap to look up the relevant ArchetypeId or TableId. (which doesn't handle hash collisions properly)~~
* ~~It is currently unsafe to generate a Query from "World A", then use it on "World B" (despite the api claiming it is safe). We should probably close this gap. This could be done by adding a randomly generated WorldId to each world, then storing that id in each Query. They could then be compared to each other on each `query.do_thing(&world)` operation. This _does_ add an extra branch to each query operation, so I'm open to other suggestions if people have them.~~
* ~~Nested Bundles (if i find time)~~

## Potential Future Work

* Expand WorldCell to support queries.
* Consider not allocating in the empty archetype on `world.spawn()`
    * ex: return something like EntityMutUninit, which turns into EntityMut after an `insert` or `insert_bundle` op
    * this actually regressed performance last time i tried it, but in theory it should be faster
* Optimize SparseSet::insert (see `PERF` comment on insert)
* Replace SparseArray `Option<T>` with T::MAX to cut down on branching
    * would enable cheaper get_unchecked() operations
* upstream fixedbitset optimizations
    * fixedbitset could be allocation free for small block counts (store blocks in a SmallVec)
    * fixedbitset could have a const constructor
* Consider implementing Tags (archetype-specific by-value data that affects archetype identity)
    * ex: ArchetypeA could have `[A, B, C]` table components and `[D(1)]` "tag" component. ArchetypeB could have `[A, B, C]` table components and a `[D(2)]` tag component. The archetypes are different, despite both having D tags because the value inside D is different.
    * this could potentially build on top of the `archetype.unique_components` added in this pr for resource storage.
* Consider reverting `all_tuples` proc macro in favor of the old `macro_rules` implementation
    * all_tuples is more flexible and produces cleaner documentation (the macro_rules version produces weird type parameter orders due to parser constraints)
    * but unfortunately all_tuples also appears to make Rust Analyzer sad/slow when working inside of `bevy_ecs` (does not affect user code)
* Consider "resource queries" and/or "mixed resource and entity component queries" as an alternative to WorldCell
    * this is basically just "systems" so maybe it's not worth it
* Add more world ops
    * `world.clear()`
    * `world.reserve<T: Bundle>(count: usize)`
* Try using the old archetype allocation strategy (allocate new memory on resize and copy everything over). I expect this to improve batch insertion performance at the cost of unbatched performance. But that's just a guess. I'm not an allocation perf pro :)
* Adapt Commands apis for consistency with new World apis

## Benchmarks

key:

* `bevy_old`: bevy `main` branch

* `bevy`: this branch

* `_foreach`: uses an optimized for_each iterator

* `_sparse`: uses sparse set storage (if unspecified assume table storage)

* `_system`: runs inside a system (if unspecified assume test happens via direct world ops)

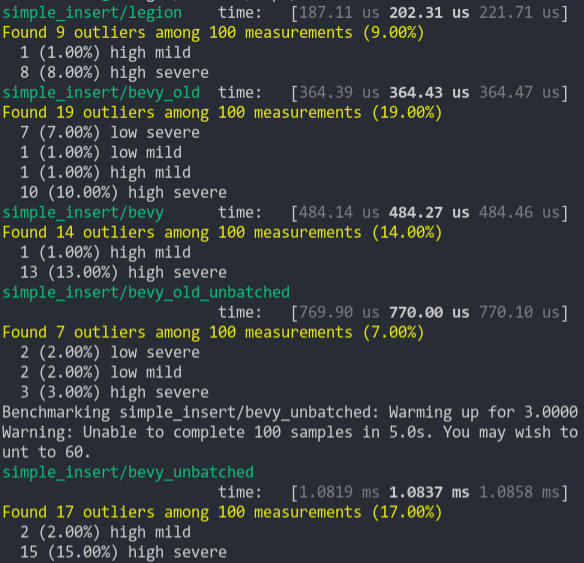

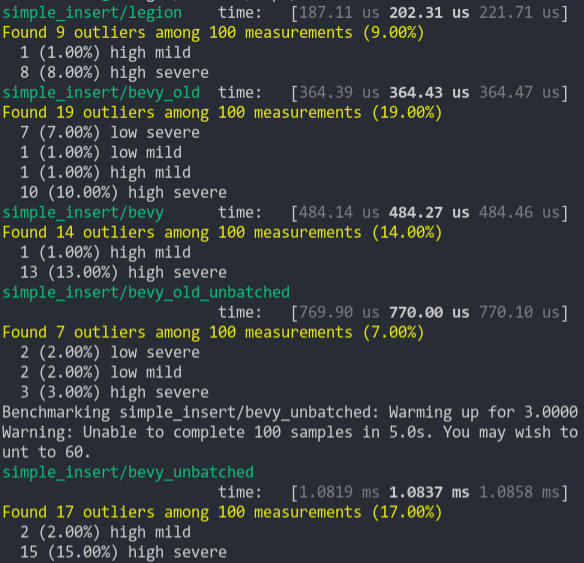

### Simple Insert (from ecs_bench_suite)

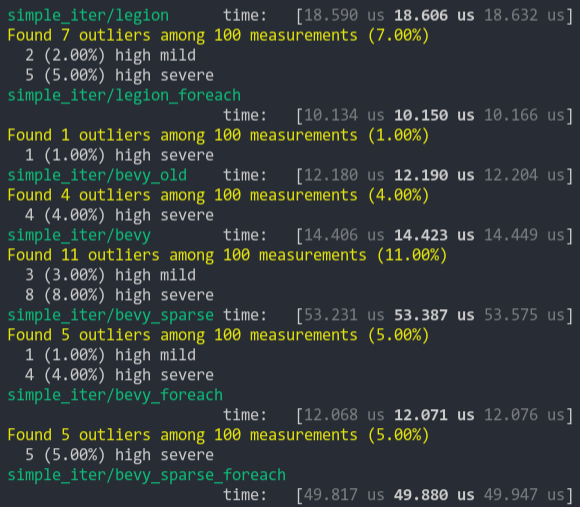

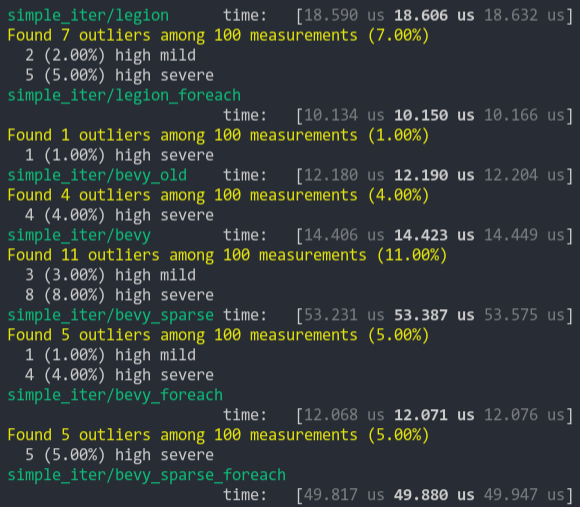

### Simple Iter (from ecs_bench_suite)

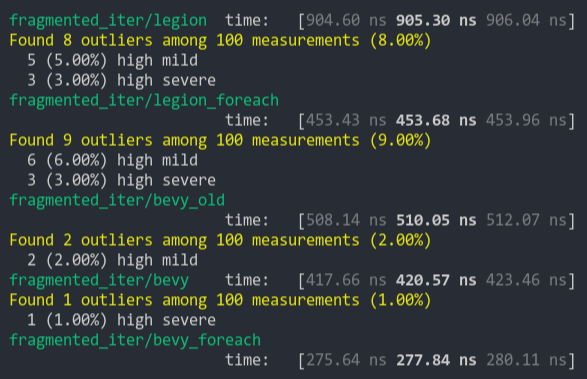

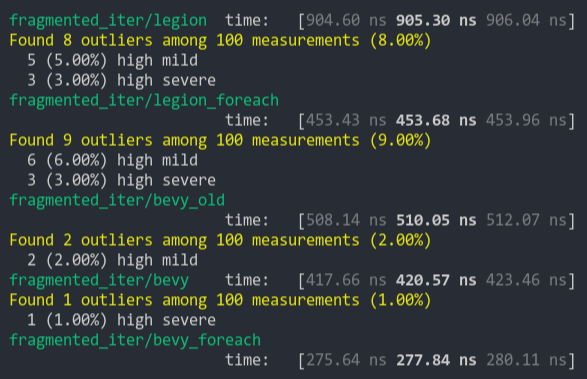

### Fragment Iter (from ecs_bench_suite)

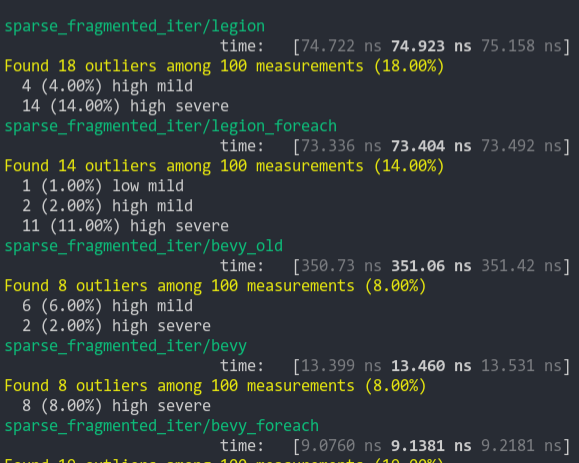

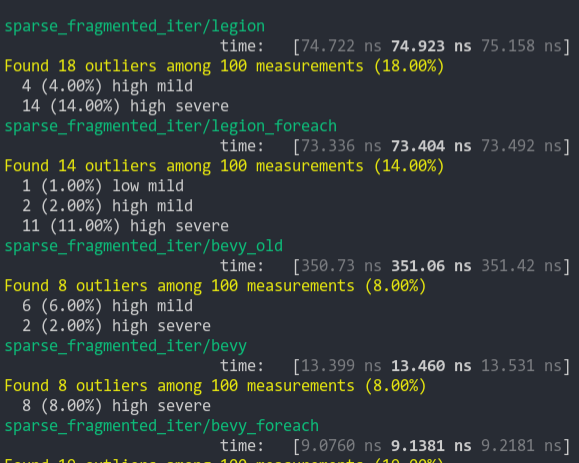

### Sparse Fragmented Iter

Iterate a query that matches 5 entities from a single matching archetype, but there are 100 unmatching archetypes

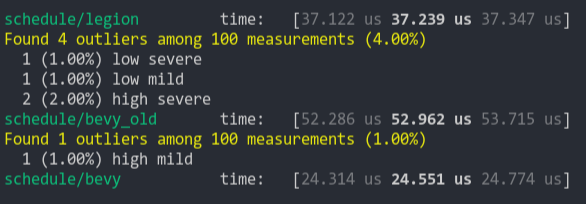

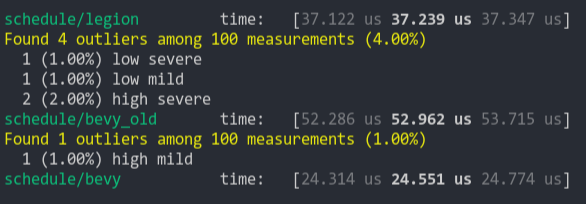

### Schedule (from ecs_bench_suite)

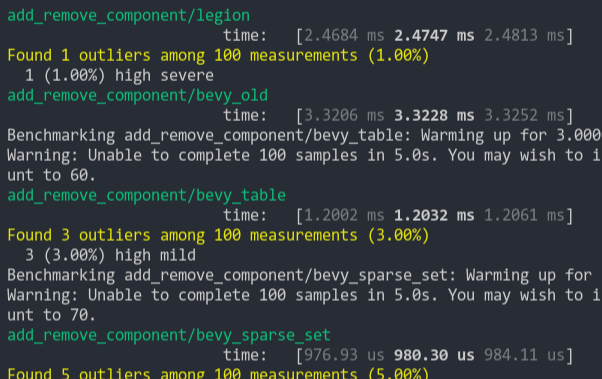

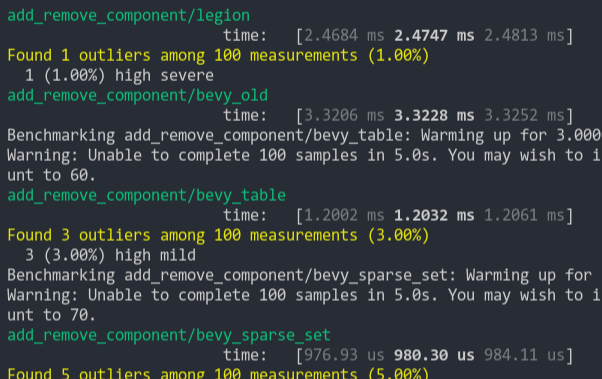

### Add Remove Component (from ecs_bench_suite)

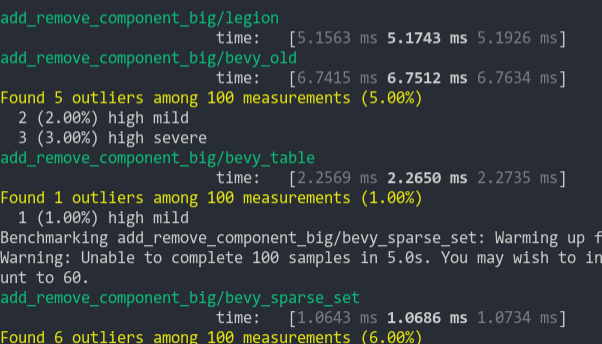

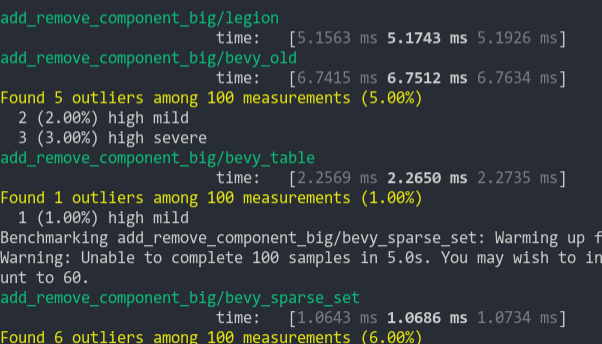

### Add Remove Component Big

Same as the test above, but each entity has 5 "large" matrix components and 1 "large" matrix component is added and removed

### Get Component

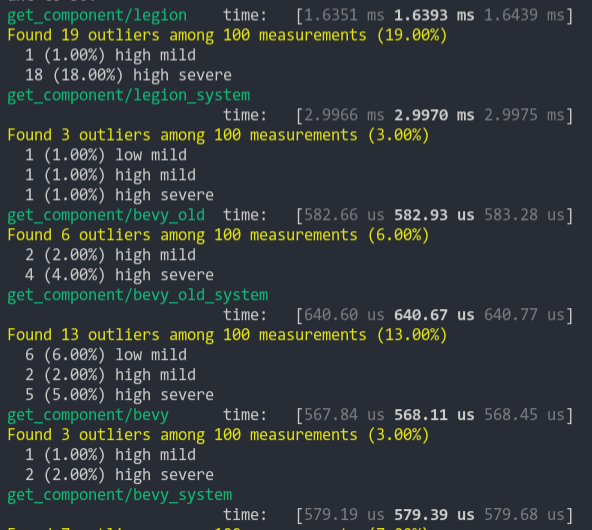

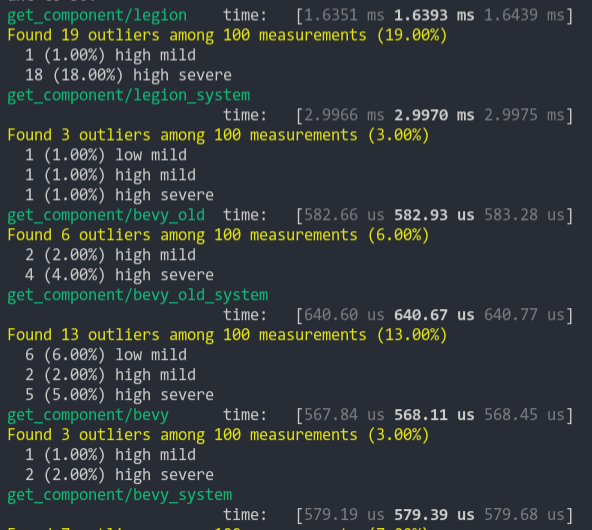

Looks up a single component value a large number of times

2021-03-05 07:54:35 +00:00

};

Make `Resource` trait opt-in, requiring `#[derive(Resource)]` V2 (#5577)

*This PR description is an edited copy of #5007, written by @alice-i-cecile.*

# Objective

Follow-up to https://github.com/bevyengine/bevy/pull/2254. The `Resource` trait currently has a blanket implementation for all types that meet its bounds.

While ergonomic, this results in several drawbacks:

* it is possible to make confusing, silent mistakes such as inserting a function pointer (Foo) rather than a value (Foo::Bar) as a resource

* it is challenging to discover if a type is intended to be used as a resource

* we cannot later add customization options (see the [RFC](https://github.com/bevyengine/rfcs/blob/main/rfcs/27-derive-component.md) for the equivalent choice for Component).

* dependencies can use the same Rust type as a resource in invisibly conflicting ways

* raw Rust types used as resources cannot preserve privacy appropriately, as anyone able to access that type can read and write to internal values

* we cannot capture a definitive list of possible resources to display to users in an editor

## Notes to reviewers

* Review this commit-by-commit; there's effectively no back-tracking and there's a lot of churn in some of these commits.

*ira: My commits are not as well organized :')*

* I've relaxed the bound on Local to Send + Sync + 'static: I don't think these concerns apply there, so this can keep things simple. Storing e.g. a u32 in a Local is fine, because there's a variable name attached explaining what it does.

* I think this is a bad place for the Resource trait to live, but I've left it in place to make reviewing easier. IMO that's best tackled with https://github.com/bevyengine/bevy/issues/4981.

## Changelog

`Resource` is no longer automatically implemented for all matching types. Instead, use the new `#[derive(Resource)]` macro.

## Migration Guide

Add `#[derive(Resource)]` to all types you are using as a resource.

If you are using a third party type as a resource, wrap it in a tuple struct to bypass orphan rules. Consider deriving `Deref` and `DerefMut` to improve ergonomics.
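As a rough illustration of the wrapper pattern, here is a std-only sketch. `FrameBudget` is a hypothetical name, and `std::time::Duration` merely stands in for a third-party type. In a Bevy project the struct would additionally carry `#[derive(Resource)]`, and Bevy's `Deref` / `DerefMut` derives could replace the hand-written impls; they are written out by hand here so the example compiles without Bevy.

```rust
use std::ops::{Deref, DerefMut};
use std::time::Duration;

/// Local tuple-struct wrapper around a type we do not own.
/// In a Bevy project this is where `#[derive(Resource)]` would go.
pub struct FrameBudget(pub Duration);

impl Deref for FrameBudget {
    type Target = Duration;

    fn deref(&self) -> &Duration {
        &self.0
    }
}

impl DerefMut for FrameBudget {
    fn deref_mut(&mut self) -> &mut Duration {
        &mut self.0
    }
}
```

With both impls in place, methods of the inner type can be called directly on the wrapper (for example `budget.as_millis()`), so most call sites do not need to change beyond the type name.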

`ClearColor` no longer implements `Component`. Using `ClearColor` as a component in 0.8 did nothing.

Use the `ClearColorConfig` in the `Camera3d` and `Camera2d` components instead.

Co-authored-by: Alice <alice.i.cecile@gmail.com>

Co-authored-by: Alice Cecile <alice.i.cecile@gmail.com>

Co-authored-by: devil-ira <justthecooldude@gmail.com>

Co-authored-by: Carter Anderson <mcanders1@gmail.com>

2022-08-08 21:36:35 +00:00

use bevy_ecs::{prelude::World, system::Resource};

2020-08-29 00:08:51 +00:00

use bevy_utils::HashMap;
use std::{borrow::Cow, fmt::Debug};

2021-12-14 03:58:23 +00:00

2022-03-21 23:58:37 +00:00

use super::EdgeExistence;

2021-12-14 03:58:23 +00:00

|

|

|

/// The render graph configures the modular, parallel and re-usable render logic.

|

2022-08-16 20:46:46 +00:00

|

|

|

/// It is a retained and stateless (nodes themselves may have their own internal state) structure,

|

2021-12-14 03:58:23 +00:00

|

|

|

/// which can not be modified while it is executed by the graph runner.

|

|

|

|

|

///

|

|

|

|

|

/// The `RenderGraphRunner` is responsible for executing the entire graph each frame.

|

|

|

|

|

///

|

|

|

|

|

/// It consists of three main components: [`Nodes`](Node), [`Edges`](Edge)

|

|

|

|

|

/// and [`Slots`](super::SlotType).

|

|

|

|

|

///

|

|

|

|

|

/// Nodes are responsible for generating draw calls and operating on input and output slots.

|

|

|

|

|

/// Edges specify the order of execution for nodes and connect input and output slots together.

|

|

|

|

|

/// Slots describe the render resources created or used by the nodes.

|

|

|

|

|

///

|

|

|

|

|

/// Additionally, a render graph can contain multiple sub graphs, which are run by the

|

2022-08-13 15:27:49 +00:00

|

|

|

/// corresponding nodes. Every render graph can have its own optional input node.

|

2021-12-14 03:58:23 +00:00

|

|

|

///

|

|

|

|

|

/// ## Example

|

|

|

|

|

/// Here is a simple render graph example with two nodes connected by a node edge.

|

|

|

|

|

/// ```

|

|

|

|

|

/// # use bevy_app::prelude::*;

|

|

|

|

|

/// # use bevy_ecs::prelude::World;

|

|

|

|

|

/// # use bevy_render::render_graph::{RenderGraph, Node, RenderGraphContext, NodeRunError};

|

|

|

|

|

/// # use bevy_render::renderer::RenderContext;

|

|

|

|

|

/// #

|

|

|

|

|

/// # struct MyNode;

|

|

|

|

|

/// #

|

|

|

|

|

/// # impl Node for MyNode {

|

|

|

|

|

/// # fn run(&self, graph: &mut RenderGraphContext, render_context: &mut RenderContext, world: &World) -> Result<(), NodeRunError> {

|

|

|

|

|

/// # unimplemented!()

|

|

|

|

|

/// # }

|

|

|

|

|

/// # }

|

|

|

|

|

/// #

|

|

|

|

|

/// let mut graph = RenderGraph::default();

|

|

|

|

|

/// graph.add_node("input_node", MyNode);

|

|

|

|

|

/// graph.add_node("output_node", MyNode);

|

2022-11-21 21:58:39 +00:00

|

|

|

/// graph.add_node_edge("output_node", "input_node");

|

2021-12-14 03:58:23 +00:00

|

|

|

/// ```

|


2022-08-08 21:36:35 +00:00

|

|

|

#[derive(Resource, Default)]

|

2020-04-25 00:46:54 +00:00

|

|

|

pub struct RenderGraph {

|

2020-04-24 00:24:41 +00:00

|

|

|

nodes: HashMap<NodeId, NodeState>,

|

|

|

|

|

node_names: HashMap<Cow<'static, str>, NodeId>,

|

2021-12-14 03:58:23 +00:00

|

|

|

sub_graphs: HashMap<Cow<'static, str>, RenderGraph>,

|

|

|

|

|

input_node: Option<NodeId>,

|

2020-04-24 00:24:41 +00:00

|

|

|

}
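The `nodes` and `node_names` fields above suggest a two-map layout: one map owns the node state keyed by id, and a second map resolves a human-readable name to that id. The following is a minimal std-only sketch of that indexing scheme. `MiniGraph`, `MiniId`, and the `String` payload are hypothetical stand-ins for illustration, not Bevy types.

```rust
use std::borrow::Cow;
use std::collections::HashMap;

/// Hypothetical stand-in for `NodeId`: a plain counter value.
#[derive(Clone, Copy, Debug, PartialEq, Eq, Hash)]
pub struct MiniId(u64);

/// Hypothetical stand-in for `RenderGraph`, keeping only the two lookup maps.
#[derive(Default)]
pub struct MiniGraph {
    next_id: u64,
    /// id -> node payload (a `String` here instead of a real node state)
    nodes: HashMap<MiniId, String>,
    /// name -> id, so callers can refer to nodes by name
    node_names: HashMap<Cow<'static, str>, MiniId>,
}

impl MiniGraph {
    /// Stores the payload under a fresh id and registers the name for it.
    pub fn add_node(&mut self, name: impl Into<Cow<'static, str>>, payload: &str) -> MiniId {
        let id = MiniId(self.next_id);
        self.next_id += 1;
        self.nodes.insert(id, payload.to_string());
        self.node_names.insert(name.into(), id);
        id
    }

    /// Two-step lookup: name -> id, then id -> payload.
    pub fn get_by_name(&self, name: &str) -> Option<&String> {
        let id = self.node_names.get(name)?;
        self.nodes.get(id)
    }

    /// Direct lookup by id.
    pub fn get_by_id(&self, id: MiniId) -> Option<&String> {
        self.nodes.get(&id)
    }
}
```

Keying the name map by `Cow<'static, str>` lets string literals be stored without allocating while still accepting owned `String` names.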

|

|

|

|

|

|

2021-12-14 03:58:23 +00:00

|

|

|

impl RenderGraph {

|

|

|

|

|

/// The name of the [`GraphInputNode`] of this graph. Used to connect other nodes to it.

|

|

|

|

|

pub const INPUT_NODE_NAME: &'static str = "GraphInputNode";

|

|

|

|

|

|

|

|

|

|

/// Updates all nodes and sub graphs of the render graph. Should be called before executing it.

|

|

|

|

|

pub fn update(&mut self, world: &mut World) {

|

|

|

|

|

for node in self.nodes.values_mut() {

|

|

|

|

|

node.node.update(world);

|

|

|

|

|

}

|

|

|

|

|

|

|

|

|

|

for sub_graph in self.sub_graphs.values_mut() {

|

|

|

|

|

sub_graph.update(world);

|

2020-07-10 04:18:35 +00:00

|

|

|

}

|

2020-04-24 00:24:41 +00:00

|

|

|

}

|

|

|

|

|

|

2021-12-14 03:58:23 +00:00

|

|

|

/// Creates a [`GraphInputNode`] with the specified slots. Panics if the graph already has an input node.

|

|

|

|

|

pub fn set_input(&mut self, inputs: Vec<SlotInfo>) -> NodeId {

|

2022-02-13 22:33:55 +00:00

|

|

|

assert!(self.input_node.is_none(), "Graph already has an input node");

|

2021-12-14 03:58:23 +00:00

|

|

|

|

|

|

|

|

let id = self.add_node(Self::INPUT_NODE_NAME, GraphInputNode { inputs });

|

|

|

|

|

self.input_node = Some(id);

|

|

|

|

|

id

|

|

|

|

|

}

|

|

|

|

|

|

2022-11-21 21:58:39 +00:00

|

|

|

/// Returns the [`NodeState`] of the input node of this graph.

|

|

|

|

|

///

|

|

|

|

|

/// # See also

|

|

|

|

|

///

|

|

|

|

|

/// - [`input_node`](Self::input_node) for a version that panics instead of returning an [`Option`].

|

2021-12-14 03:58:23 +00:00

|

|

|

#[inline]

|

2022-11-21 21:58:39 +00:00

|

|

|

pub fn get_input_node(&self) -> Option<&NodeState> {

|

2021-12-14 03:58:23 +00:00

|

|

|

self.input_node.and_then(|id| self.get_node_state(id).ok())

|

|

|

|

|

}

|

|

|

|

|

|

2022-11-21 21:58:39 +00:00

|

|

|

/// Returns the [`NodeState`] of the input node of this graph.

|

|

|

|

|

///

|

|

|

|

|

/// # Panics

|

|

|

|

|

///

|

|

|

|

|

/// Panics if there is no input node set.

|

|

|

|

|

///

|

|

|

|

|

/// # See also

|

|

|

|

|

///

|

|

|

|

|

/// - [`get_input_node`](Self::get_input_node) for a version which returns an [`Option`] instead.

|

|

|

|

|

#[inline]

|

|

|

|

|

pub fn input_node(&self) -> &NodeState {

|

|

|

|

|

self.get_input_node().unwrap()

|

|

|

|

|

}
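The `get_input_node` / `input_node` pair follows a common Rust convention: a `get_` accessor that returns an `Option`, plus a shorthand that panics when the value is missing. A minimal sketch of that pairing, assuming a hypothetical `SketchGraph` whose input node is just a `String`:

```rust
/// Hypothetical, simplified graph that may or may not have an input node.
pub struct SketchGraph {
    pub input_node: Option<String>,
}

impl SketchGraph {
    /// Checked accessor: returns `None` when no input node has been set.
    pub fn get_input_node(&self) -> Option<&str> {
        self.input_node.as_deref()
    }

    /// Convenience accessor: panics when no input node has been set.
    pub fn input_node(&self) -> &str {
        self.get_input_node().expect("graph has no input node")
    }
}
```

Callers that cannot guarantee an input node exists would use the `get_` form and handle `None`; the panicking form suits code paths where a missing input node is a programming error.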

|

|

|

|

|

|

2021-12-14 03:58:23 +00:00

|

|

|

/// Adds the `node` with the `name` to the graph.

|

|

|

|

|

/// If the name is already present, replaces it instead.

|

2020-05-14 00:35:48 +00:00

|

|

|

pub fn add_node<T>(&mut self, name: impl Into<Cow<'static, str>>, node: T) -> NodeId

|

2020-04-24 00:24:41 +00:00

|

|

|

where

|

|

|

|

|

T: Node,

|

|

|

|

|

{

|

|

|

|

|

let id = NodeId::new();

|

|

|

|

|

let name = name.into();

|

|

|

|

|

let mut node_state = NodeState::new(id, node);

|

|

|

|

|

node_state.name = Some(name.clone());

|

|

|

|

|

self.nodes.insert(id, node_state);

|

|

|

|

|

self.node_names.insert(name, id);

|

|

|

|

|

id

|

|

|

|

|

}

|

|

|

|

|

|

2022-03-21 23:58:37 +00:00

|

|

|

/// Removes the `node` with the `name` from the graph.

|

|

|

|

|

/// If the name does not exist, nothing happens.

|

|

|

|

|

pub fn remove_node(

|

|

|

|

|

&mut self,

|

|

|

|

|

name: impl Into<Cow<'static, str>>,

|

|

|

|

|

) -> Result<(), RenderGraphError> {

|

|

|

|

|

let name = name.into();

|

|

|

|

|

if let Some(id) = self.node_names.remove(&name) {

|

|

|

|

|

if let Some(node_state) = self.nodes.remove(&id) {

|

|

|

|

|

// Remove all edges from other nodes to this one. Note that as we're removing this

|

|

|

|

|

// node, we don't need to remove its input edges

|

|

|

|

|

for input_edge in node_state.edges.input_edges().iter() {

|

|

|

|

|

match input_edge {

|

2022-05-31 01:38:07 +00:00

|

|

|

Edge::SlotEdge { output_node, .. }

|

|

|

|

|

| Edge::NodeEdge {

|

2022-03-21 23:58:37 +00:00

|

|

|

input_node: _,

|

|

|

|

|

output_node,

|

|

|

|

|

} => {

|

|

|

|

|

if let Ok(output_node) = self.get_node_state_mut(*output_node) {

|

|

|

|

|

output_node.edges.remove_output_edge(input_edge.clone())?;

|

|

|

|

|

}

|

|

|

|

|

}

|

|

|

|

|

}

|

|

|

|

|

}

|

|

|

|

|

// Remove all edges from this node to other nodes. Note that as we're removing this

|

|

|

|

|

// node, we don't need to remove its output edges

|

|

|

|

|

for output_edge in node_state.edges.output_edges().iter() {

|

|

|

|

|

match output_edge {

|

|

|

|

|

Edge::SlotEdge {

|

|

|

|

|

output_node: _,

|

|

|

|

|

output_index: _,

|

|

|

|

|

input_node,

|

|

|

|

|

input_index: _,

|

|

|

|

|

}

|

2022-05-31 01:38:07 +00:00

|

|

|

| Edge::NodeEdge {

|

2022-03-21 23:58:37 +00:00

|

|

|

output_node: _,

|

|

|

|

|

input_node,

|

|

|

|

|

} => {

|

|

|

|

|

if let Ok(input_node) = self.get_node_state_mut(*input_node) {

|

|

|

|

|

input_node.edges.remove_input_edge(output_edge.clone())?;

|

|

|

|

|

}

|

|

|

|

|

}

|

|

|

|

|

}

|

|

|

|

|

}

|

|

|

|

|

}

|

|

|

|

|

}

|

|

|

|

|

|

|

|

|

|

Ok(())

|

|

|

|

|

}
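Removing a node has to clean up both directions: nodes upstream of it still list it as an output, and nodes downstream still list it as an input. The following std-only sketch shows that bookkeeping on a deliberately simplified model. `Links` and the free function `remove_node` are hypothetical and store plain neighbour ids rather than `Edge` values.

```rust
use std::collections::HashMap;

/// Hypothetical node record: ids of the nodes it reads from and feeds into.
#[derive(Default, Debug)]
pub struct Links {
    pub inputs: Vec<u32>,  // upstream node ids
    pub outputs: Vec<u32>, // downstream node ids
}

/// Removes `id` and erases every reference to it from its neighbours.
/// Returns `false` when the node was not present.
pub fn remove_node(nodes: &mut HashMap<u32, Links>, id: u32) -> bool {
    let removed = match nodes.remove(&id) {
        Some(links) => links,
        None => return false,
    };
    // Upstream neighbours listed `id` among their outputs.
    for upstream in &removed.inputs {
        if let Some(links) = nodes.get_mut(upstream) {
            links.outputs.retain(|&n| n != id);
        }
    }
    // Downstream neighbours listed `id` among their inputs.
    for downstream in &removed.outputs {
        if let Some(links) = nodes.get_mut(downstream) {
            links.inputs.retain(|&n| n != id);
        }
    }
    true
}
```

Taking the node out of the map first means its own edge lists can be iterated freely while the neighbours are mutated, which mirrors the order of operations in the method above.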

|

|

|

|

|

|

2021-12-14 03:58:23 +00:00

|

|

|

/// Retrieves the [`NodeState`] referenced by the `label`.

|

2020-04-24 00:24:41 +00:00

|

|

|

pub fn get_node_state(

|

|

|

|

|

&self,

|

|

|

|

|

label: impl Into<NodeLabel>,

|

|

|

|

|

) -> Result<&NodeState, RenderGraphError> {

|

|

|

|

|

let label = label.into();

|

|

|

|

|

let node_id = self.get_node_id(&label)?;

|

|

|

|

|

self.nodes

|

|

|

|

|

.get(&node_id)

|

2020-09-26 04:34:47 +00:00

|

|

|

.ok_or(RenderGraphError::InvalidNode(label))

|

2020-04-24 00:24:41 +00:00

|

|

|

}

|

|

|

|

|

|

2021-12-14 03:58:23 +00:00

|

|

|

/// Retrieves the [`NodeState`] referenced by the `label` mutably.

|

2020-04-24 00:24:41 +00:00

|

|

|

pub fn get_node_state_mut(

|

|

|

|

|

&mut self,

|

|

|

|

|

label: impl Into<NodeLabel>,

|

|

|

|

|

) -> Result<&mut NodeState, RenderGraphError> {

|

|

|

|

|

let label = label.into();

|

|

|

|

|

let node_id = self.get_node_id(&label)?;

|

|

|

|

|

self.nodes

|

|

|

|

|

.get_mut(&node_id)

|

2020-09-26 04:34:47 +00:00

|

|

|

.ok_or(RenderGraphError::InvalidNode(label))

|

2020-04-24 00:24:41 +00:00

|

|

|

}

|

|

|

|

|

|

2021-12-14 03:58:23 +00:00

|

|

|

/// Retrieves the [`NodeId`] referenced by the `label`.

|

2020-04-24 00:24:41 +00:00

|

|

|

pub fn get_node_id(&self, label: impl Into<NodeLabel>) -> Result<NodeId, RenderGraphError> {

|

|

|

|

|

let label = label.into();

|

|

|

|

|

match label {

|

|

|

|

|

NodeLabel::Id(id) => Ok(id),

|

|

|

|

|

NodeLabel::Name(ref name) => self

|

|

|

|

|

.node_names

|

|

|

|

|

.get(name)

|

|

|

|

|

.cloned()

|

2020-09-26 04:34:47 +00:00

|

|

|

.ok_or(RenderGraphError::InvalidNode(label)),

|

2020-04-24 00:24:41 +00:00

|

|

|

}

|

|

|

|

|

}
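`get_node_id` accepts anything convertible into a label and then resolves it: an id passes straight through, while a name is looked up in the name map. A minimal std-only sketch of that pattern follows. `Label` and `resolve` are hypothetical stand-ins, with `u32` in place of `NodeId` and the unresolved label itself in place of `RenderGraphError`.

```rust
use std::borrow::Cow;
use std::collections::HashMap;

/// Hypothetical stand-in for `NodeLabel`: either a numeric id or a name.
#[derive(Clone, Debug, PartialEq)]
pub enum Label {
    Id(u32),
    Name(Cow<'static, str>),
}

impl From<u32> for Label {
    fn from(id: u32) -> Self {
        Label::Id(id)
    }
}

impl From<&'static str> for Label {
    fn from(name: &'static str) -> Self {
        Label::Name(Cow::Borrowed(name))
    }
}

/// Resolves a label to an id. Ids are returned without checking that the node
/// exists; names are looked up, and an unknown name hands the label back as the error.
pub fn resolve(
    names: &HashMap<Cow<'static, str>, u32>,
    label: impl Into<Label>,
) -> Result<u32, Label> {
    let label = label.into();
    match label {
        Label::Id(id) => Ok(id),
        Label::Name(ref name) => names.get(name).copied().ok_or(label),
    }
}
```

Because the id arm does no lookup, a caller that needs to know whether the node really exists still has to consult the node map afterwards, as `get_node_state` does.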

|

|

|

|

|

|

2021-12-14 03:58:23 +00:00

|

|

|

/// Retrieves the [`Node`] referenced by the `label`.

|

2020-04-24 00:24:41 +00:00

|

|

|

pub fn get_node<T>(&self, label: impl Into<NodeLabel>) -> Result<&T, RenderGraphError>

|

|

|

|

|

where

|

|

|

|

|

T: Node,

|

|

|

|

|

{

|

|

|

|

|

self.get_node_state(label).and_then(|n| n.node())

|

|

|

|

|

}

|

|

|

|

|

|

2021-12-14 03:58:23 +00:00

|

|

|

/// Retrieves the [`Node`] referenced by the `label` mutably.

|

2020-04-24 00:24:41 +00:00

|

|

|

pub fn get_node_mut<T>(

|

|

|

|

|

&mut self,

|

|

|

|

|

label: impl Into<NodeLabel>,

|

|

|

|

|

) -> Result<&mut T, RenderGraphError>

|

|

|

|

|

where

|

|

|

|

|

T: Node,

|

|

|

|

|

{

|

|

|

|

|

self.get_node_state_mut(label).and_then(|n| n.node_mut())

|

|

|

|

|

}

|

|

|

|

|

|

2021-12-14 03:58:23 +00:00

|

|

|

/// Adds the [`Edge::SlotEdge`] to the graph. This guarantees that the `output_node`

|

|

|

|

|

/// is run before the `input_node` and also connects the `output_slot` to the `input_slot`.

|

2022-11-21 21:58:39 +00:00

|

|

|

///

|

|

|

|

|

/// Fails if any invalid [`NodeLabel`]s or [`SlotLabel`]s are given.

|

|

|

|

|

///

|

|

|

|

|

/// # See also

|

|

|

|

|

///

|

|

|

|

|

/// - [`add_slot_edge`](Self::add_slot_edge) for an infallible version.

|

|

|

|

|

pub fn try_add_slot_edge(

|

2020-04-24 00:24:41 +00:00

|

|

|

&mut self,

|

|

|

|

|

output_node: impl Into<NodeLabel>,

|

|

|

|

|

output_slot: impl Into<SlotLabel>,

|

|

|

|

|

input_node: impl Into<NodeLabel>,

|

|

|

|

|

input_slot: impl Into<SlotLabel>,

|

|

|

|

|

) -> Result<(), RenderGraphError> {

|

2021-12-14 03:58:23 +00:00

|

|

|

let output_slot = output_slot.into();

|

|

|

|

|

let input_slot = input_slot.into();

|

2020-04-24 00:24:41 +00:00

|

|

|

let output_node_id = self.get_node_id(output_node)?;

|

|

|

|

|

let input_node_id = self.get_node_id(input_node)?;

|

|

|

|

|

|

|

|

|

|

let output_index = self

|

|

|

|

|

.get_node_state(output_node_id)?

|

|

|

|

|

.output_slots

|

2021-12-14 03:58:23 +00:00

|

|

|

.get_slot_index(output_slot.clone())

|

|

|

|

|

.ok_or(RenderGraphError::InvalidOutputNodeSlot(output_slot))?;

|

2020-04-24 00:24:41 +00:00

|

|

|

let input_index = self

|

|

|

|

|

.get_node_state(input_node_id)?

|

|

|

|

|

.input_slots

|

2021-12-14 03:58:23 +00:00

|

|

|

.get_slot_index(input_slot.clone())

|

|

|

|

|

.ok_or(RenderGraphError::InvalidInputNodeSlot(input_slot))?;

|

2020-04-24 00:24:41 +00:00

|

|

|

|

|

|

|

|

let edge = Edge::SlotEdge {

|

|

|

|

|

output_node: output_node_id,

|

|

|

|

|

output_index,

|

|

|

|

|

input_node: input_node_id,

|

|

|

|

|

input_index,

|

|

|

|

|

};

|

|

|

|

|

|

2022-03-21 23:58:37 +00:00

|

|

|

self.validate_edge(&edge, EdgeExistence::DoesNotExist)?;

|

2020-04-24 00:24:41 +00:00

|

|

|

|

|

|

|

|

{

|

|

|

|

|

let output_node = self.get_node_state_mut(output_node_id)?;

|

2020-04-24 03:53:38 +00:00

|

|

|

output_node.edges.add_output_edge(edge.clone())?;

|

2020-04-24 00:24:41 +00:00

|

|

|

}

|

|

|

|

|

let input_node = self.get_node_state_mut(input_node_id)?;

|

2020-04-24 03:53:38 +00:00

|

|

|

input_node.edges.add_input_edge(edge)?;

|

2020-04-24 00:24:41 +00:00

|

|

|

|

|

|

|

|

Ok(())

|

|

|

|

|

}
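The method above records the same edge twice: once in the output list of the producing node and once in the input list of the consuming node, after validating first so that a failure leaves the graph unchanged. A minimal std-only sketch of that double bookkeeping, assuming hypothetical `OrderEdge` and `NodeEdges` types and a `String` error in place of `RenderGraphError`:

```rust
use std::collections::HashMap;

/// Hypothetical ordering edge: `from` must run before `to`.
#[derive(Clone, Copy, Debug, PartialEq, Eq)]
pub struct OrderEdge {
    pub from: u32,
    pub to: u32,
}

/// Per-node edge lists, loosely modelled on the `edges` field used above.
#[derive(Default, Debug)]
pub struct NodeEdges {
    pub inputs: Vec<OrderEdge>,
    pub outputs: Vec<OrderEdge>,
}

/// Records `edge` on both endpoints, or fails without touching either one.
pub fn try_add_edge(
    nodes: &mut HashMap<u32, NodeEdges>,
    edge: OrderEdge,
) -> Result<(), String> {
    // Validate everything first so a failure leaves the graph unchanged.
    if !nodes.contains_key(&edge.from) || !nodes.contains_key(&edge.to) {
        return Err(format!("unknown node in {:?}", edge));
    }
    if nodes[&edge.from].outputs.contains(&edge) {
        return Err(format!("{:?} already exists", edge));
    }
    // Both endpoints were checked above, so these lookups cannot fail.
    nodes.get_mut(&edge.from).unwrap().outputs.push(edge);
    nodes.get_mut(&edge.to).unwrap().inputs.push(edge);
    Ok(())
}
```

Storing the edge on both endpoints costs a clone per edge but lets each node enumerate its dependencies and its dependents without scanning the whole graph.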

|

|

|

|

|

|

2022-11-21 21:58:39 +00:00

|

|

|

/// Adds the [`Edge::SlotEdge`] to the graph. This guarantees that the `output_node`

|

|

|

|

|

/// is run before the `input_node` and also connects the `output_slot` to the `input_slot`.

|

|

|

|

|

///

|

|

|

|

|

/// # Panics

|

|

|

|

|

///

|

|

|

|

|

/// Panics if any invalid [`NodeLabel`]s or [`SlotLabel`]s are given.

|

|

|

|

|

///

|

|

|

|

|

/// # See also

|

|

|

|

|

///

|

|

|

|

|

/// - [`try_add_slot_edge`](Self::try_add_slot_edge) for a fallible version.

|

|

|

|

|

pub fn add_slot_edge(

|

|

|

|

|

&mut self,

|

|

|

|

|

output_node: impl Into<NodeLabel>,

|

|

|

|

|

output_slot: impl Into<SlotLabel>,

|

|

|

|

|

input_node: impl Into<NodeLabel>,

|

|

|

|

|

input_slot: impl Into<SlotLabel>,

|

|

|

|

|

) {

|

|

|

|

|

self.try_add_slot_edge(output_node, output_slot, input_node, input_slot)

|

|

|

|

|

.unwrap();

|

|

|

|

|

}

|

|

|

|

|

|

2022-03-21 23:58:37 +00:00

|

|

|

/// Removes the [`Edge::SlotEdge`] from the graph. If any nodes or slots do not exist then

|

|

|

|

|

/// nothing is removed and an error is returned.

|

|

|

|

|

pub fn remove_slot_edge(

|

|

|

|

|

&mut self,

|

|

|

|

|

output_node: impl Into<NodeLabel>,

|

|

|

|

|

output_slot: impl Into<SlotLabel>,

|

|

|

|

|

input_node: impl Into<NodeLabel>,

|

|

|

|

|

input_slot: impl Into<SlotLabel>,

|

|

|

|

|

) -> Result<(), RenderGraphError> {

|

|

|

|

|

let output_slot = output_slot.into();

|

|

|

|

|

let input_slot = input_slot.into();

|

|

|

|

|

let output_node_id = self.get_node_id(output_node)?;

|

|

|

|

|

let input_node_id = self.get_node_id(input_node)?;

|

|

|

|

|

|

|

|

|

|

let output_index = self

|

|

|

|

|

.get_node_state(output_node_id)?

|

|

|

|

|

.output_slots

|

|

|

|

|

.get_slot_index(output_slot.clone())

|

|

|

|

|

.ok_or(RenderGraphError::InvalidOutputNodeSlot(output_slot))?;

|

|

|

|

|

let input_index = self

|

|

|

|

|

.get_node_state(input_node_id)?

|

|

|

|

|

.input_slots

|

|

|

|

|

.get_slot_index(input_slot.clone())

|

|

|

|

|

.ok_or(RenderGraphError::InvalidInputNodeSlot(input_slot))?;

|

|

|

|

|

|

|

|

|

|

let edge = Edge::SlotEdge {

|

|

|

|

|

output_node: output_node_id,

|

|

|

|

|

output_index,

|

|

|

|

|

input_node: input_node_id,

|

|

|

|

|

input_index,

|

|

|

|

|

};

|

|

|

|

|

|

|

|

|

|

self.validate_edge(&edge, EdgeExistence::Exists)?;

|

|

|

|

|

|

|

|

|

|

{

|

|

|

|

|

let output_node = self.get_node_state_mut(output_node_id)?;

|

|

|

|

|

output_node.edges.remove_output_edge(edge.clone())?;

|

|

|

|

|

}

|

|

|

|

|

let input_node = self.get_node_state_mut(input_node_id)?;

|

|

|

|

|

input_node.edges.remove_input_edge(edge)?;

|

|

|

|

|

|

|

|

|

|

Ok(())

|

|

|

|

|

}

|

|

|

|

|

|

2021-12-14 03:58:23 +00:00

|

|

|

/// Adds the [`Edge::NodeEdge`] to the graph. This guarantees that the `output_node`

|

|

|

|

|

/// is run before the `input_node`.

|

2022-11-21 21:58:39 +00:00

|

|

|

///

|

|

|

|

|

/// Fails if any invalid [`NodeLabel`] is given.

|

|

|

|

|

///

|

|

|

|

|

/// # See also

|

|

|

|

|

///

|

|

|

|

|

/// - [`add_node_edge`](Self::add_node_edge) for an infallible version.

|

|

|

|

|

pub fn try_add_node_edge(

|

2020-04-24 00:24:41 +00:00

|

|

|

&mut self,

|

|

|

|

|

output_node: impl Into<NodeLabel>,

|

|

|

|

|

input_node: impl Into<NodeLabel>,

|

|

|

|

|

) -> Result<(), RenderGraphError> {

|

|

|

|

|

let output_node_id = self.get_node_id(output_node)?;

|

|

|

|

|

let input_node_id = self.get_node_id(input_node)?;

|

|

|

|

|

|

|

|

|

|

let edge = Edge::NodeEdge {

|

|

|

|

|

output_node: output_node_id,

|

|

|

|

|

input_node: input_node_id,

|

|

|

|

|

};

|

|

|

|

|

|

2022-03-21 23:58:37 +00:00

|

|

|

self.validate_edge(&edge, EdgeExistence::DoesNotExist)?;

|

2020-04-24 00:24:41 +00:00

|

|

|

|

|

|

|

|

{

|

|

|

|

|

let output_node = self.get_node_state_mut(output_node_id)?;

|

2020-04-24 03:53:38 +00:00

|

|

|

output_node.edges.add_output_edge(edge.clone())?;

|

2020-04-24 00:24:41 +00:00

|

|

|

}

|

|

|

|

|

let input_node = self.get_node_state_mut(input_node_id)?;

|

2020-04-24 03:53:38 +00:00

|

|

|

input_node.edges.add_input_edge(edge)?;

|

2020-04-24 00:24:41 +00:00

|

|

|

|

|

|

|

|

Ok(())

|

|

|

|

|

}

|

|

|

|

|

|

2022-11-21 21:58:39 +00:00

|

|

|

/// Adds the [`Edge::NodeEdge`] to the graph. This guarantees that the `output_node`

|

|

|

|

|

/// is run before the `input_node`.

|

|

|

|

|

///

|

|

|

|

|

/// # Panics

|

|

|

|

|

///

|

|

|

|

|

/// Panics if any invalid [`NodeLabel`] is given.

|

|

|

|

|

///

|

|

|

|

|

/// # See also

|

|

|

|

|

///

|

|

|

|

|

/// - [`try_add_node_edge`](Self::try_add_node_edge) for a fallible version.

|

|

|

|

|

pub fn add_node_edge(

|

|

|

|

|

&mut self,

|

|

|

|

|

output_node: impl Into<NodeLabel>,

|

|

|

|

|

input_node: impl Into<NodeLabel>,

|

|

|

|

|

) {

|

|

|

|

|

self.try_add_node_edge(output_node, input_node).unwrap();

|

|

|

|

|

}

|

|

|

|

|

|

2022-03-21 23:58:37 +00:00

|

|

|

/// Removes the [`Edge::NodeEdge`] from the graph. If either node does not exist then nothing

|

|

|

|

|

/// is removed and an error is returned.

|

|

|

|

|

pub fn remove_node_edge(

|

|

|

|

|

&mut self,

|

|

|

|

|

output_node: impl Into<NodeLabel>,

|

|

|

|

|

input_node: impl Into<NodeLabel>,

|

|

|

|

|

) -> Result<(), RenderGraphError> {

|

|

|

|

|

let output_node_id = self.get_node_id(output_node)?;

|

|

|

|

|

let input_node_id = self.get_node_id(input_node)?;

|

|

|

|

|

|

|

|

|

|

let edge = Edge::NodeEdge {

|

|

|

|

|

output_node: output_node_id,

|

|

|

|

|

input_node: input_node_id,

|

|

|

|

|

};

|

|

|

|

|

|

|

|

|

|

self.validate_edge(&edge, EdgeExistence::Exists)?;

|

|

|

|

|

|

|

|

|

|

{

|

|

|

|

|

let output_node = self.get_node_state_mut(output_node_id)?;

|

|

|

|

|

output_node.edges.remove_output_edge(edge.clone())?;

|

|

|

|

|

}

|

|

|

|

|

let input_node = self.get_node_state_mut(input_node_id)?;

|

|

|

|

|

input_node.edges.remove_input_edge(edge)?;

|

|

|

|

|

|

|

|

|

|

Ok(())

|

|

|

|

|

}

|

|

|

|

|

|

|

|

|

|

/// Verifies that the edge existence is as expected and

|

2021-12-14 03:58:23 +00:00

|

|

|

/// checks that slot edges are connected correctly.

|

2022-03-21 23:58:37 +00:00

|

|

|

pub fn validate_edge(

|

|

|

|

|

&mut self,

|

|

|

|

|

edge: &Edge,

|

|

|

|

|

should_exist: EdgeExistence,

|

|

|

|

|

) -> Result<(), RenderGraphError> {

|

|

|

|

|

if should_exist == EdgeExistence::Exists && !self.has_edge(edge) {

|

|

|

|

|

return Err(RenderGraphError::EdgeDoesNotExist(edge.clone()));

|

|

|

|

|

} else if should_exist == EdgeExistence::DoesNotExist && self.has_edge(edge) {

|

2020-04-24 00:24:41 +00:00

|

|

|

return Err(RenderGraphError::EdgeAlreadyExists(edge.clone()));

|

|

|

|

|

}

|

|

|

|

|

|

|

|

|

|

match *edge {

|

|

|

|

|

Edge::SlotEdge {

|

|

|

|

|

output_node,

|

|

|

|

|

output_index,

|

|

|

|

|

input_node,

|

|

|

|

|

input_index,

|

|

|

|

|

} => {

|

|

|

|

|

let output_node_state = self.get_node_state(output_node)?;

|

|

|

|

|

let input_node_state = self.get_node_state(input_node)?;

|

|

|

|

|

|

2021-12-14 03:58:23 +00:00

|

|

|

let output_slot = output_node_state

|

|

|

|

|

.output_slots

|

|

|

|

|

.get_slot(output_index)

|

|

|

|

|

.ok_or(RenderGraphError::InvalidOutputNodeSlot(SlotLabel::Index(

|

|

|

|

|

output_index,

|

|

|

|

|

)))?;

|

|

|

|

|

let input_slot = input_node_state.input_slots.get_slot(input_index).ok_or(

|

|

|

|

|

RenderGraphError::InvalidInputNodeSlot(SlotLabel::Index(input_index)),

|

|

|

|

|

)?;

|

2020-04-24 00:24:41 +00:00

|

|

|

|

|

|

|

|

if let Some(Edge::SlotEdge {

|

|

|

|

|

output_node: current_output_node,

|

|

|

|

|

..

|

2022-03-21 23:58:37 +00:00

|

|

|

}) = input_node_state.edges.input_edges().iter().find(|e| {

|

2020-04-24 00:24:41 +00:00

|

|

|

if let Edge::SlotEdge {

|

|

|

|

|

input_index: current_input_index,

|

|

|

|

|

..

|

|

|

|

|

} = e

|

|

|

|

|

{

|

|

|

|

|

input_index == *current_input_index

|

|

|

|

|

} else {

|

|

|

|

|

false

|

|

|

|

|

}

|

|

|

|

|

}) {

|

2022-03-21 23:58:37 +00:00

|

|

|

if should_exist == EdgeExistence::DoesNotExist {

|

|

|

|

|

return Err(RenderGraphError::NodeInputSlotAlreadyOccupied {

|

|

|

|

|

node: input_node,

|

|

|

|

|

input_slot: input_index,

|

|

|

|

|

occupied_by_node: *current_output_node,

|

|

|

|

|

});

|

|

|

|

|

}

|

2020-04-24 00:24:41 +00:00

|

|

|

}

|

|

|

|

|

|

2021-12-14 03:58:23 +00:00

|

|

|

if output_slot.slot_type != input_slot.slot_type {

|

2020-04-24 00:24:41 +00:00

|

|

|

return Err(RenderGraphError::MismatchedNodeSlots {

|

|

|

|

|

output_node,

|

|

|

|

|

output_slot: output_index,

|

|

|

|

|

input_node,

|

|

|

|

|

input_slot: input_index,

|

|

|

|

|

});

|

|

|

|

|

}

|

|

|

|

|

}

|

|

|

|

|

Edge::NodeEdge { .. } => { /* nothing to validate here */ }

|

|

|

|

|

}

|

|

|

|

|

|

|

|

|

|

Ok(())

|

|

|

|

|

}
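For slot edges, the validation above boils down to four checks: both slot indices must exist, the input slot must not already be fed by another edge when adding, and the two slot types must match. The following std-only sketch restates those rules in isolation. `Kind`, `SlotError`, and `validate_slot_edge` are hypothetical names; the real slot type variants are not shown in this file.

```rust
/// Hypothetical slot kinds used only for this sketch.
#[derive(Clone, Copy, Debug, PartialEq, Eq)]
pub enum Kind {
    Texture,
    Buffer,
}

/// Hypothetical error type mirroring the failure cases checked above.
#[derive(Debug, PartialEq, Eq)]
pub enum SlotError {
    InvalidOutputSlot(usize),
    InvalidInputSlot(usize),
    InputAlreadyOccupied(usize),
    Mismatched { output: usize, input: usize },
}

/// Checks a proposed connection from `outputs[output]` to `inputs[input]`.
/// `occupied` lists the input slot indices that already have an incoming edge.
pub fn validate_slot_edge(
    outputs: &[Kind],
    output: usize,
    inputs: &[Kind],
    input: usize,
    occupied: &[usize],
) -> Result<(), SlotError> {
    // Both indices must refer to existing slots.
    let out_kind = outputs
        .get(output)
        .ok_or(SlotError::InvalidOutputSlot(output))?;
    let in_kind = inputs
        .get(input)
        .ok_or(SlotError::InvalidInputSlot(input))?;
    // An input slot accepts at most one incoming edge.
    if occupied.contains(&input) {
        return Err(SlotError::InputAlreadyOccupied(input));
    }
    // The producer and consumer must agree on the slot type.
    if out_kind != in_kind {
        return Err(SlotError::Mismatched { output, input });
    }
    Ok(())
}
```

An output slot may fan out to several consumers, which is why only the input side is checked for occupancy.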

|

|

|

|

|

|

2021-12-14 03:58:23 +00:00

|

|

|

/// Checks whether the `edge` already exists in the graph.

|

2020-04-24 00:24:41 +00:00

|

|

|

pub fn has_edge(&self, edge: &Edge) -> bool {

|

|

|

|

|

let output_node_state = self.get_node_state(edge.get_output_node());

|

|

|

|

|

let input_node_state = self.get_node_state(edge.get_input_node());

|

|

|

|

|

if let Ok(output_node_state) = output_node_state {

|

2022-03-21 23:58:37 +00:00

|

|

|

if output_node_state.edges.output_edges().contains(edge) {

|

2020-04-24 00:24:41 +00:00

|

|

|

if let Ok(input_node_state) = input_node_state {

|

2022-03-21 23:58:37 +00:00

|

|

|

if input_node_state.edges.input_edges().contains(edge) {

|

2020-04-24 00:24:41 +00:00

|

|

|

return true;

|

|

|

|

|

}

|

|

|

|

|

}

|

|

|

|

|

}

|

|

|

|

|

}

|

|

|

|

|

|

|

|

|

|

false

|

|

|

|

|

}

|

|

|

|

|

|

2021-12-14 03:58:23 +00:00

|

|

|

/// Returns an iterator over the [`NodeStates`](NodeState).

|

2020-04-24 00:24:41 +00:00

|

|

|

pub fn iter_nodes(&self) -> impl Iterator<Item = &NodeState> {

|

|

|

|

|

self.nodes.values()

|

|

|

|

|

}

|

|

|

|

|

|

2021-12-14 03:58:23 +00:00

|

|

|

/// Returns an iterator over the [`NodeStates`](NodeState), that allows modifying each value.

|

2020-04-24 00:24:41 +00:00

|

|

|

pub fn iter_nodes_mut(&mut self) -> impl Iterator<Item = &mut NodeState> {

|

|

|

|

|

self.nodes.values_mut()

|

|

|

|

|

}

|

|

|

|

|

|

2021-12-14 03:58:23 +00:00

|

|

|

/// Returns an iterator over the sub graphs.

|

|

|

|

|

pub fn iter_sub_graphs(&self) -> impl Iterator<Item = (&str, &RenderGraph)> {

|

|

|

|

|

self.sub_graphs

|

|

|

|

|

.iter()

|

|

|

|

|

.map(|(name, graph)| (name.as_ref(), graph))

|

|

|

|

|

}

|

|

|

|

|

|

|

|

|

|

/// Returns an iterator over the sub graphs, that allows modifying each value.

|

|

|

|

|

pub fn iter_sub_graphs_mut(&mut self) -> impl Iterator<Item = (&str, &mut RenderGraph)> {

|

|

|

|

|

self.sub_graphs

|

|

|

|

|

.iter_mut()

|

|

|

|

|

.map(|(name, graph)| (name.as_ref(), graph))

|

|

|

|

|

}

|

|

|

|

|

|

|

|

|

|

/// Returns an iterator over a tuple of the input edges and the corresponding output nodes

|

|

|

|

|

/// for the node referenced by the label.

|

2020-04-24 00:24:41 +00:00

|

|

|

pub fn iter_node_inputs(

|

|

|

|

|

&self,

|

|

|

|

|

label: impl Into<NodeLabel>,

|

|

|

|

|

) -> Result<impl Iterator<Item = (&Edge, &NodeState)>, RenderGraphError> {

|

|

|

|

|

let node = self.get_node_state(label)?;

|

|

|

|

|

Ok(node

|

2020-04-24 03:53:38 +00:00

|

|

|

.edges

|

2022-03-21 23:58:37 +00:00

|

|

|

.input_edges()

|

2020-04-24 00:24:41 +00:00

|