# Objective

- Part of #9400.

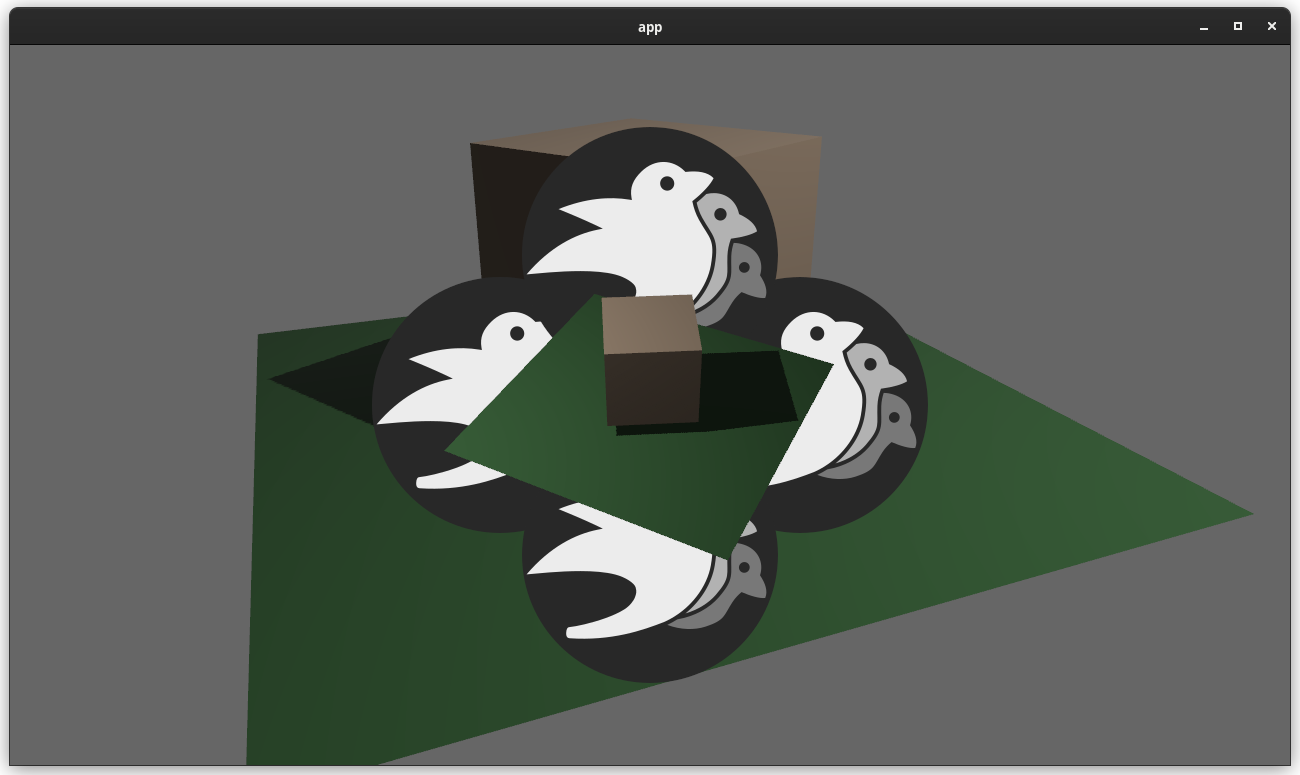

- Add light gizmos for `SpotLight`, `PointLight` and `DirectionalLight`.

## Solution

- Add a `ShowLightGizmo` component and its related gizmo group and plugin,
  which shows a gizmo for all lights of an entity when inserted on it. Light
  display can also be toggled globally through the gizmo config, in the
  same way as is already possible for `Aabb`s.

- Add distinct segment setters for height and base on `Cone3dBuilder`.
  This allows having a properly rounded base without too many edges along
  the height. The doc comments explain how to ensure height and base
  connect when setting different values.

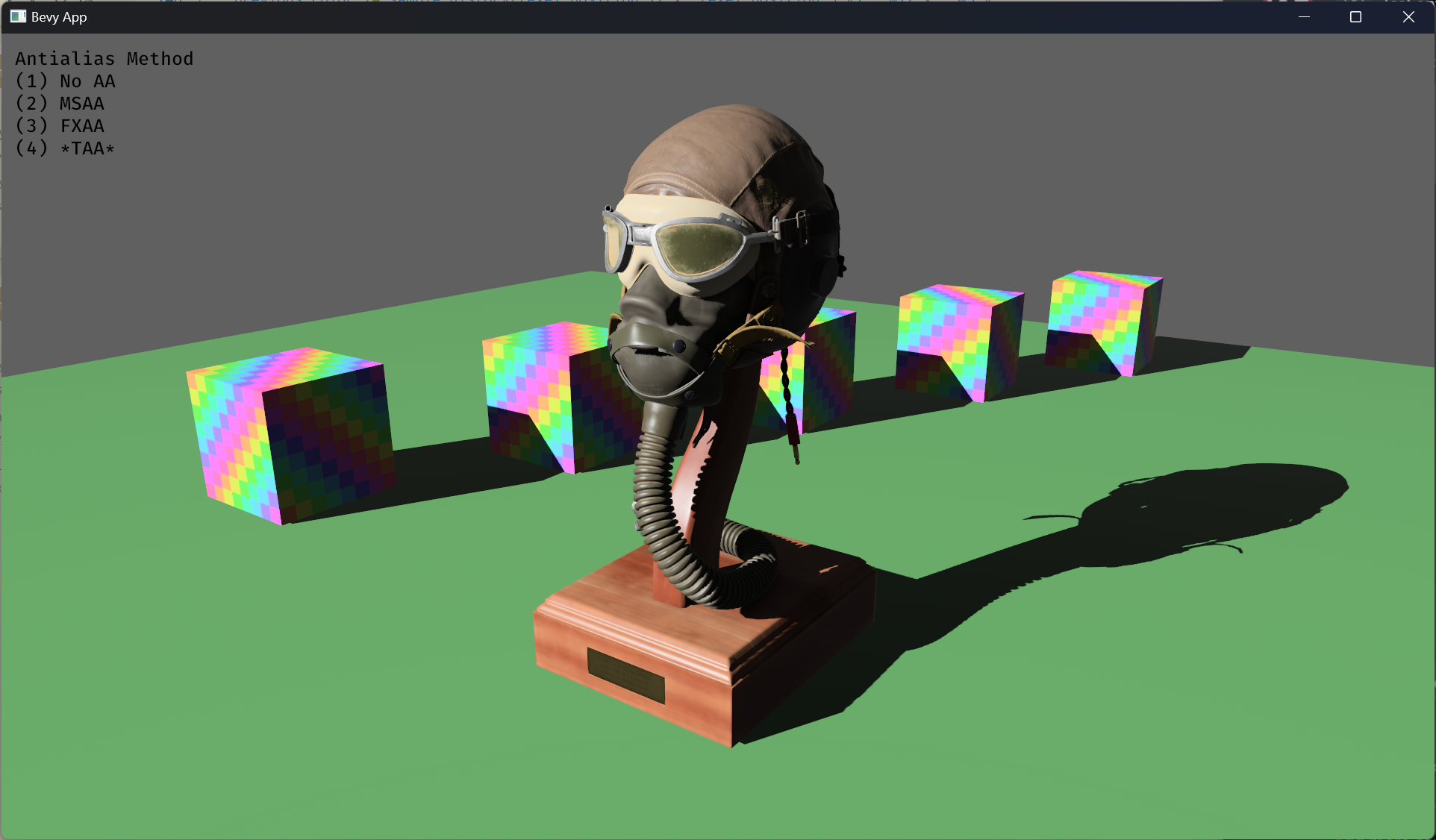

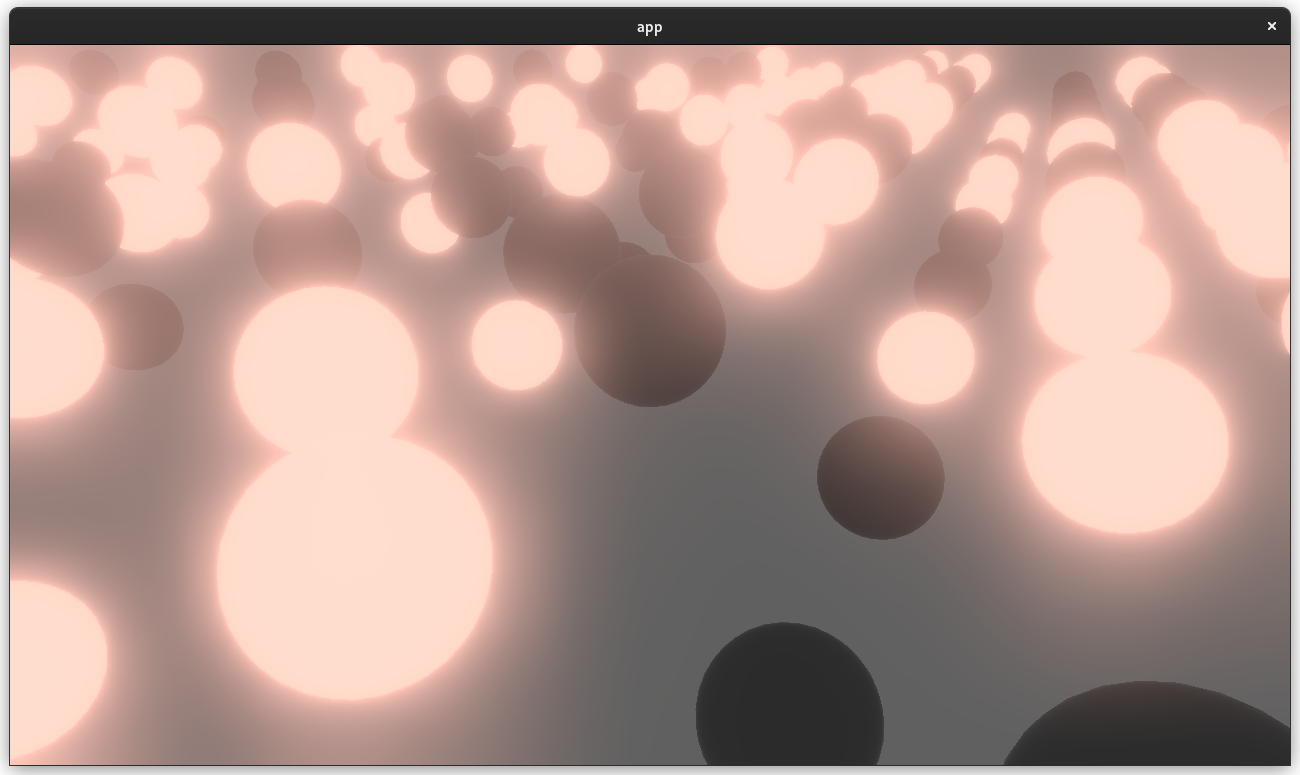

Gizmo for the three light types without radius with the depth bias set

to -1:

With Radius:

Possible future improvements:

- Add a billboarded sprite with a distinct sprite for each light type.

- Display the intensity of the light somehow (no idea how to represent

that apart from some text).

---

## Changelog

### Added

- The new `ShowLightGizmo`, part of the `LightGizmoPlugin` and
  configurable globally with `LightGizmoConfigGroup`, allows drawing gizmos
  for `PointLight`, `SpotLight` and `DirectionalLight`. The gizmo color
  behavior can be controlled with the `LightGizmoColor` member of
  `ShowLightGizmo` and `LightGizmoConfigGroup`.

- The cone gizmo builder (`Cone3dBuilder`) now allows setting a
  differing number of segments for the base and height.

---------

Co-authored-by: Gino Valente <49806985+MrGVSV@users.noreply.github.com>

# Objective

This PR unpins `web-sys` so that unrelated projects that have

`bevy_render` in their workspace can finally update their `web-sys`.

Fixes #12246 (see the issue for more details).

## Solution

* Update `wgpu` from 0.19.1 to 0.19.3.

* Remove the `web-sys` pin.

* Update docs and wasm helper to remove the now-stale

`--cfg=web_sys_unstable_apis` Rust flag.

---

## Changelog

Updated `wgpu` to v0.19.3 and removed `web-sys` pin.

# Objective

- Provide a reliable and performant mechanism to allow users to keep

components synchronized with external sources: closing/opening sockets,

updating indexes, debugging etc.

- Implement a generic mechanism to provide mutable access to the world

without allowing structural changes; this will not only be used here but

is a foundational piece for observers, which are key for a performant

implementation of relations.

## Solution

- Implement a new type `DeferredWorld` (naming is not important,

`StaticWorld` is also suitable) that wraps a world pointer and prevents

user code from making any structural changes to the ECS; spawning

entities, creating components, initializing resources etc.

- Add component lifecycle hooks `on_add`, `on_insert` and `on_remove`

that can be assigned callbacks in user code.

---

## Changelog

- Add new `DeferredWorld` type.

- Add new world methods: `register_component::<T>` and

`register_component_with_descriptor`. These differ from `init_component`

in that they provide mutable access to the created `ComponentInfo` but

will panic if the component is already in any archetypes. These

restrictions serve two purposes:

1. Prevent users from defining hooks for components that may already

have associated hooks provided in another plugin. (a use case better

served by observers)

2. Ensure that when an `Archetype` is created it gets the appropriate

flags to early-out when triggering hooks.

- Add methods to `ComponentInfo`: `on_add`, `on_insert` and `on_remove`

to be used to register hooks of the form `fn(DeferredWorld, Entity,

ComponentId)`

- Modify `BundleInserter`, `BundleSpawner` and `EntityWorldMut` to

trigger component hooks when appropriate.

- Add bit flags to `Archetype` indicating whether or not any contained

components have each type of hook, this can be expanded for other flags

as needed.

- Add `component_hooks` example to illustrate usage. Try it out! It's

fun to mash keys.
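To make the hook ordering concrete, here is a minimal, std-only sketch of how lifecycle hooks could be dispatched. It is a toy model and not the code in this PR: `ToyWorld`, `Hooks`, `register_hooks`, and the `&mut Vec<String>` log standing in for `DeferredWorld` are all hypothetical. The ordering shown (`on_add` only when the entity did not already have the component, `on_insert` on every insertion, `on_remove` just before removal) is an assumption about the intended semantics.

```rust
use std::collections::{HashMap, HashSet};

type Entity = u32;
type ComponentId = usize;

/// Hypothetical stand-in for `fn(DeferredWorld, Entity, ComponentId)`:
/// the log plays the role of the non-structural world access.
type Hook = fn(&mut Vec<String>, Entity, ComponentId);

#[derive(Default, Clone, Copy)]
struct Hooks {
    on_add: Option<Hook>,
    on_insert: Option<Hook>,
    on_remove: Option<Hook>,
}

#[derive(Default)]
struct ToyWorld {
    hooks: HashMap<ComponentId, Hooks>,
    present: HashSet<(Entity, ComponentId)>,
    log: Vec<String>,
}

impl ToyWorld {
    /// Registers hooks; panics if the component is already on an entity,
    /// mirroring the restriction described in the changelog.
    fn register_hooks(&mut self, id: ComponentId, hooks: Hooks) {
        assert!(
            !self.present.iter().any(|&(_, c)| c == id),
            "component {} is already present on an entity",
            id
        );
        self.hooks.insert(id, hooks);
    }

    fn insert(&mut self, entity: Entity, id: ComponentId) {
        let newly_added = self.present.insert((entity, id));
        let hooks = self.hooks.get(&id).copied().unwrap_or_default();
        if newly_added {
            if let Some(hook) = hooks.on_add {
                hook(&mut self.log, entity, id);
            }
        }
        if let Some(hook) = hooks.on_insert {
            hook(&mut self.log, entity, id);
        }
    }

    fn remove(&mut self, entity: Entity, id: ComponentId) {
        if !self.present.contains(&(entity, id)) {
            return;
        }
        // The hook runs while the component is still present.
        if let Some(hook) = self.hooks.get(&id).and_then(|h| h.on_remove) {
            hook(&mut self.log, entity, id);
        }
        self.present.remove(&(entity, id));
    }
}

fn log_add(log: &mut Vec<String>, e: Entity, _: ComponentId) {
    log.push(format!("add {}", e));
}
fn log_insert(log: &mut Vec<String>, e: Entity, _: ComponentId) {
    log.push(format!("insert {}", e));
}
fn log_remove(log: &mut Vec<String>, e: Entity, _: ComponentId) {
    log.push(format!("remove {}", e));
}

/// Inserts the same component twice, then removes it, and returns the log.
fn demo() -> Vec<String> {
    let mut world = ToyWorld::default();
    let hooks = Hooks {
        on_add: Some(log_add as Hook),
        on_insert: Some(log_insert as Hook),
        on_remove: Some(log_remove as Hook),
    };
    world.register_hooks(0, hooks);
    world.insert(7, 0);
    world.insert(7, 0);
    world.remove(7, 0);
    world.log
}
```

In this toy, the second `insert` only triggers `on_insert`, because the component is already present on the entity.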

## Safety

The changes to component insertion, removal and deletion involve a large

amount of unsafe code and it's fair for that to raise some concern. I

have attempted to document it as clearly as possible and have confirmed

that all the hooks examples are accepted by `cargo miri` as not causing

any undefined behavior. The largest issue is in ensuring there are no

outstanding references when passing a `DeferredWorld` to the hooks which

requires some use of raw pointers (as was already happening to some

degree in those places) and I have taken some time to ensure that is the

case but feel free to let me know if I've missed anything.

## Performance

These changes come with a small but measurable performance cost of

between 1-5% on `add_remove` benchmarks and between 1-3% on `insert`

benchmarks. One consideration to be made is the existence of the current

`RemovedComponents` which is on average more costly than the addition of

`on_remove` hooks due to the early-out; however, hooks don't completely

remove the need for `RemovedComponents` as there is a chance you want to

respond to the removal of a component that already has an `on_remove`

hook defined in another plugin, so I have not removed it here. I do

intend to deprecate it with the introduction of observers in a follow up

PR.

## Discussion Questions

- Currently `DeferredWorld` implements `Deref` to `&World` which makes

sense conceptually, however it does cause some issues with rust-analyzer

providing autocomplete for `&mut World` references which is annoying.

There are alternative implementations that may address this but involve

more code churn so I have not attempted them here. The other alternative is

to not implement `Deref` at all but that leads to a large amount of API

duplication.

- `DeferredWorld`, `StaticWorld`, something else?

- In adding support for hooks to `EntityWorldMut` I encountered some

unfortunate difficulties with my desired API. If commands are flushed

after each call i.e. `world.spawn() // flush commands .insert(A) //

flush commands` the entity may be despawned while `EntityWorldMut` still

exists which is invalid. An alternative was then to add

`self.world.flush_commands()` to the drop implementation for

`EntityWorldMut` but that runs into other problems for implementing

functions like `into_unsafe_entity_cell`. For now I have implemented a

`.flush()` which will flush the commands and consume `EntityWorldMut` or

users can manually run `world.flush_commands()` after using

`EntityWorldMut`.

- In order to allow querying on a deferred world we need

implementations of `WorldQuery` to not break our guarantees of no

structural changes through their `UnsafeWorldCell`. All our

implementations do this, but there isn't currently any safety

documentation specifying what is or isn't allowed for an implementation,

just for the caller, (they also shouldn't be aliasing components they

didn't specify access for etc.) is that something we should start doing?

(see #10752)

Please check out the example `component_hooks` or the tests in

`bundle.rs` for usage examples. I will continue to expand this

description as I go.

See #10839 for a more ergonomic API built on top of this one that isn't

subject to the same restrictions and supports `SystemParam` dependency

injection.

```
$ xcrun simctl devices list
Unrecognized subcommand: devices
usage: simctl [--set <path>] [--profiles <path>] <subcommand> ...
       simctl help [subcommand]
Command line utility to control the Simulator
```

# Objective

- The `examples/README.md` contains an invalid example command for

listing iOS devices using `xcrun`.

## Solution

- Update example command to omit the current invalid subcommand

"`devices`".

# Objective

Move Gizmo examples into a separate directory.

Fixes #11899

---------

Co-authored-by: Alice Cecile <alice.i.cecile@gmail.com>

Co-authored-by: Joona Aalto <jondolf.dev@gmail.com>

I just implemented this to record a video for the new blog post, but I

figured it would also make a good dedicated example. This also allows us

to remove a lot of code from the 2d/3d gizmo examples since it

supersedes this portion of code.

Depends on: https://github.com/bevyengine/bevy/pull/11699

> Follow up to #10588

> Closes #11749 (Supersedes #11756)

Enable Texture slicing for the following UI nodes:

- `ImageBundle`

- `ButtonBundle`

<img width="739" alt="Screenshot 2024-01-29 at 13 57 43"

src="https://github.com/bevyengine/bevy/assets/26703856/37675681-74eb-4689-ab42-024310cf3134">

I also added a collection of `fantazy-ui-borders` from

[Kenney's](www.kenney.nl) assets, with the appropriate license (CC).

If it's a problem I can use the same textures as the `sprite_slice`

example

# Work done

Added the `ImageScaleMode` component to the targeted bundles; most of
the logic is directly reused from `bevy_sprite`.

The only additional internal component is the UI-specific
`ComputedSlices`, which does the same thing as its sprite equivalent
but is adapted to UI code.

Again, the slicing is not compatible with `TextureAtlas`; it's something
I need to tackle more deeply in the future.

# Fixes

* [x] I noticed that `TextureSlicer::compute_slices` could loop
  infinitely if the border was larger than the image half extents. Now an
  error is triggered and the texture falls back to being stretched.

* [x] I noticed that when using small textures with very small *tiling*
  options we could generate hundreds of thousands of slices. Now I set a
  minimum size of 1 pixel per slice, which is already ridiculously small,
  and a warning is emitted at runtime when the slice count goes above 1000.

* [x] Sprite slicing with `flip_x` or `flip_y` would give incorrect
  results. Correct flipping is now supported for both sprites and UI image
  nodes, thanks to @odecay's observation.
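The first two guards above can be sketched in plain Rust. This is only an illustration of the checks described, not the actual `TextureSlicer` code: `Border`, `SliceCheck`, `check_border`, `tile_count`, and `should_warn` are hypothetical names, and reading "larger than the image half extents" as a per-side comparison is an assumption.

```rust
/// Hypothetical border description, in pixels.
#[derive(Clone, Copy, Debug, PartialEq)]
struct Border {
    left: f32,
    right: f32,
    top: f32,
    bottom: f32,
}

#[derive(Debug, PartialEq)]
enum SliceCheck {
    /// The border fits, so slicing can proceed.
    Sliceable,
    /// The border is too large: report an error and stretch the texture.
    Stretch,
}

/// Rejects borders that exceed half of the image size on any side.
fn check_border(image_size: [f32; 2], border: Border) -> SliceCheck {
    let half_w = image_size[0] / 2.0;
    let half_h = image_size[1] / 2.0;
    let too_large = border.left > half_w
        || border.right > half_w
        || border.top > half_h
        || border.bottom > half_h;
    if too_large {
        SliceCheck::Stretch
    } else {
        SliceCheck::Sliceable
    }
}

/// Number of tiles along one axis, with each tile clamped to at least 1 pixel.
fn tile_count(length: f32, tile_size: f32) -> u32 {
    (length / tile_size.max(1.0)).ceil() as u32
}

/// Threshold taken from the description above.
const SLICE_WARNING_THRESHOLD: u32 = 1000;

/// Whether a runtime warning should be emitted for this many slices.
fn should_warn(slice_count: u32) -> bool {
    slice_count > SLICE_WARNING_THRESHOLD
}
```

Clamping the tile size bounds the slice count by the pixel size of the target, which is what prevents the runaway case in this sketch.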

# GPU Alternative

I created a separate branch attempting to implement 9-slicing and

tiling directly through the `ui.wgsl` fragment shader. It works but

requires sending more data to the GPU:

- slice border

- tiling factors

And more importantly, the actual quad *scale* which is hard to put in

the shader with the current code, so that would be for a later iteration

# Objective

Bevy could benefit from *irradiance volumes*, also known as *voxel

global illumination* or simply as light probes (though this term is not

preferred, as multiple techniques can be called light probes).

Irradiance volumes are a form of baked global illumination; they work by

sampling the light at the centers of each voxel within a cuboid. At

runtime, the voxels surrounding the fragment center are sampled and

interpolated to produce indirect diffuse illumination.

## Solution

This is divided into two sections. The first is copied and pasted from

the irradiance volume module documentation and describes the technique.

The second part consists of notes on the implementation.

### Overview

An *irradiance volume* is a cuboid voxel region consisting of
regularly-spaced precomputed samples of diffuse indirect light. They're
ideal if you have a dynamic object such as a character that can move about
static non-moving geometry such as a level in a game, and you want that
dynamic object to be affected by the light bouncing off that static
geometry.

To use irradiance volumes, you need to precompute, or *bake*, the indirect
light in your scene. Bevy doesn't currently come with a way to do this.
Fortunately, [Blender] provides a [baking tool] as part of the Eevee
renderer, and its irradiance volumes are compatible with those used by
Bevy.

The [`bevy-baked-gi`] project provides a tool, `export-blender-gi`, that can
extract the baked irradiance volumes from the Blender `.blend` file and
package them up into a `.ktx2` texture for use by the engine. See the
documentation in the `bevy-baked-gi` project for more details as to this
workflow.

Like all light probes in Bevy, irradiance volumes are 1×1×1 cubes that can
be arbitrarily scaled, rotated, and positioned in a scene with the
[`bevy_transform::components::Transform`] component. The 3D voxel grid will
be stretched to fill the interior of the cube, and the illumination from the
irradiance volume will apply to all fragments within that bounding region.

Bevy's irradiance volumes are based on Valve's [*ambient cubes*] as used in
*Half-Life 2* ([Mitchell 2006], slide 27). These encode a single color of
light from the six 3D cardinal directions and blend the sides together
according to the surface normal.
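As a rough illustration of that blending, the following std-only sketch evaluates an ambient cube for a unit surface normal in the style of the Mitchell 2006 slides: each axis contributes the color of the face the normal points toward, weighted by the squared normal component. The `AmbientCube` alias, the face order, and the function name are assumptions made for this sketch, not Bevy's shader code.

```rust
/// Colors for the six faces, indexed as [+X, -X, +Y, -Y, +Z, -Z].
/// This face order is an assumption made for the sketch.
type AmbientCube = [[f32; 3]; 6];

/// Blends the three faces the normal points toward, weighting each by the
/// squared normal component. For a unit normal the weights sum to 1.
fn eval_ambient_cube(cube: &AmbientCube, normal: [f32; 3]) -> [f32; 3] {
    let mut color = [0.0f32; 3];
    for axis in 0..3 {
        let weight = normal[axis] * normal[axis];
        // Pick the negative face of this axis when the component is negative.
        let face = axis * 2 + usize::from(normal[axis] < 0.0);
        for channel in 0..3 {
            color[channel] += weight * cube[face][channel];
        }
    }
    color
}
```

A normal aligned with a cardinal direction returns that face's color unchanged. Any other normal blends up to three faces.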

The primary reason for choosing ambient cubes is to match Blender, so that
its Eevee renderer can be used for baking. However, they also have some
advantages over the common second-order spherical harmonics approach:
ambient cubes don't suffer from ringing artifacts, they are smaller (6
colors for ambient cubes as opposed to 9 for spherical harmonics), and
evaluation is faster. A smaller basis allows for a denser grid of voxels
with the same storage requirements.

If you wish to use a tool other than `export-blender-gi` to produce the
irradiance volumes, you'll need to pack the irradiance volumes in the
following format. The irradiance volume of resolution *(Rx, Ry, Rz)* is
expected to be a 3D texture of dimensions *(Rx, 2Ry, 3Rz)*. The unnormalized
texture coordinate *(s, t, p)* of the voxel at coordinate *(x, y, z)* with
side *S* ∈ *{-X, +X, -Y, +Y, -Z, +Z}* is as follows:

```text
s = x

t = y + ⎰  0 if S ∈ {-X, -Y, -Z}
        ⎱ Ry if S ∈ {+X, +Y, +Z}

        ⎧   0 if S ∈ {-X, +X}
p = z + ⎨  Rz if S ∈ {-Y, +Y}
        ⎩ 2Rz if S ∈ {-Z, +Z}
```
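The mapping above can be written as a small function, which may be handy when writing a custom exporter. This is a std-only sketch: the `Side` enum and the `voxel_texel` name are hypothetical and are not part of Bevy or `export-blender-gi`.

```rust
/// The six cube sides stored for every voxel.
#[derive(Clone, Copy, Debug, PartialEq, Eq)]
enum Side {
    NegX,
    PosX,
    NegY,
    PosY,
    NegZ,
    PosZ,
}

/// Maps voxel `(x, y, z)` and a cube side to the unnormalized texel
/// `(s, t, p)` inside a 3D texture of dimensions `(Rx, 2Ry, 3Rz)`.
fn voxel_texel(voxel: [u32; 3], side: Side, resolution: [u32; 3]) -> [u32; 3] {
    let [x, y, z] = voxel;
    let [_, ry, rz] = resolution;
    // Positive sides live in the upper half of the t axis.
    let t_offset = match side {
        Side::NegX | Side::NegY | Side::NegZ => 0,
        Side::PosX | Side::PosY | Side::PosZ => ry,
    };
    // The p axis is split into three slabs, one per axis pair.
    let p_offset = match side {
        Side::NegX | Side::PosX => 0,
        Side::NegY | Side::PosY => rz,
        Side::NegZ | Side::PosZ => 2 * rz,
    };
    [x, y + t_offset, z + p_offset]
}
```

For example, with resolution *(4, 5, 6)*, the +Y side of voxel *(1, 2, 3)* lands at texel *(1, 2 + 5, 3 + 6) = (1, 7, 9)*.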

Visually, in a left-handed coordinate system with Y up, viewed from the
right, the 3D texture looks like a stacked series of voxel grids, one for
each cube side, in this order:

| **+X** | **+Y** | **+Z** |
| ------ | ------ | ------ |
| **-X** | **-Y** | **-Z** |

A terminology note: Other engines may refer to irradiance volumes as *voxel
global illumination*, *VXGI*, or simply as *light probes*. Sometimes *light
probe* refers to what Bevy calls a reflection probe. In Bevy, *light probe*
is a generic term that encompasses all cuboid bounding regions that capture
indirect illumination, whether based on voxels or not.

Note that, if binding arrays aren't supported (e.g. on WebGPU or WebGL 2),
then only the closest irradiance volume to the view will be taken into
account during rendering.

[*ambient cubes*]: https://advances.realtimerendering.com/s2006/Mitchell-ShadingInValvesSourceEngine.pdf

[Mitchell 2006]: https://advances.realtimerendering.com/s2006/Mitchell-ShadingInValvesSourceEngine.pdf

[Blender]: http://blender.org/

[baking tool]: https://docs.blender.org/manual/en/latest/render/eevee/render_settings/indirect_lighting.html

[`bevy-baked-gi`]: https://github.com/pcwalton/bevy-baked-gi

### Implementation notes

This patch generalizes light probes so as to reuse as much code as

possible between irradiance volumes and the existing reflection probes.

This approach was chosen because both techniques share numerous

similarities:

1. Both irradiance volumes and reflection probes are cuboid bounding

regions.

2. Both are responsible for providing baked indirect light.

3. Both techniques involve presenting a variable number of textures to

the shader from which indirect light is sampled. (In the current

implementation, this uses binding arrays.)

4. Both irradiance volumes and reflection probes require gathering and

sorting probes by distance on CPU.

5. Both techniques require the GPU to search through a list of bounding

regions.

6. Both will eventually want to have falloff so that we can smoothly

blend as objects enter and exit the probes' influence ranges. (This is

not implemented yet to keep this patch relatively small and reviewable.)

To do this, we generalize most of the methods in the reflection probes

patch #11366 to be generic over a trait, `LightProbeComponent`. This

trait is implemented by both `EnvironmentMapLight` (for reflection

probes) and `IrradianceVolume` (for irradiance volumes). Using a trait

will allow us to add more types of light probes in the future. In

particular, I highly suspect we will want real-time reflection planes

for mirrors in the future, which can be easily slotted into this

framework.

## Changelog


### Added

* A new `IrradianceVolume` asset type is available for baked voxelized

light probes. You can bake the global illumination using Blender or

another tool of your choice and use it in Bevy to apply indirect

illumination to dynamic objects.

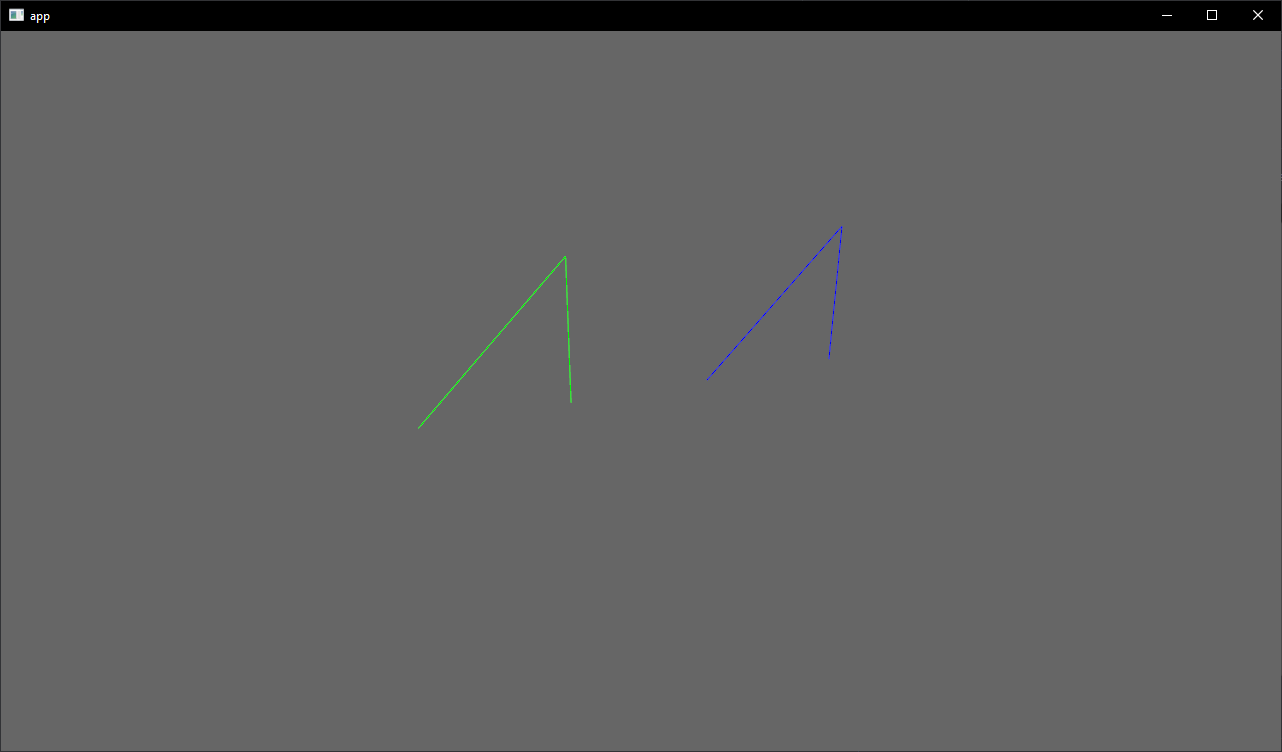

# Objective

- Create an example for bounding volumes and intersection tests

## Solution

- Add an example with a few bounding volumes, created from primitives

- Allow the user to cycle through the different intersection tests

# Objective

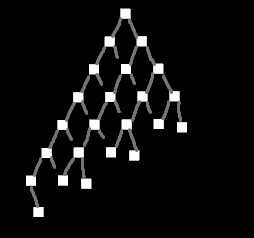

Add interactive system debugging capabilities to bevy, providing

step/break/continue style capabilities to running system schedules.

* Original implementation: #8063
  - `ignore_stepping()` everywhere was too much complexity
* Schedule-config & Resource discussion: #8168
  - Decided on selective adding of Schedules & Resource-based control

## Solution

Created `Stepping` Resource. This resource can be used to enable

stepping on a per-schedule basis. Systems within schedules can be

individually configured to:

* AlwaysRun: Ignore any stepping state and run every frame
* NeverRun: Never run while stepping is enabled
  - this allows for disabling of systems while debugging
* Break: If we're running the full frame, stop before this system is run

Stepping provides two modes of execution that reflect traditional

debuggers:

* Step-based: Only execute one system at a time

* Continue/Break: Run all systems, but stop before running a system

marked as Break
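The interaction between the per-system behaviors and the two execution modes can be modelled with a small std-only function. This is a toy sketch of the rules described above, not the `Stepping` resource's implementation: `Behavior`, `Action`, and `plan_frame` are hypothetical names. Two details are assumptions: the cursor wraps to the first system at the end of a frame, and a `Break` system sitting at the cursor still runs when continuing.

```rust
#[derive(Clone, Copy, Debug, PartialEq, Eq)]
enum Behavior {
    /// No special configuration: the system is subject to stepping.
    Normal,
    AlwaysRun,
    NeverRun,
    Break,
}

#[derive(Clone, Copy, Debug, PartialEq, Eq)]
enum Action {
    /// Run only the system at the cursor.
    Step,
    /// Run from the cursor until a `Break` system or the end of the frame.
    Continue,
}

/// Returns the indices of the systems that run this frame and the cursor
/// position for the next frame.
fn plan_frame(behaviors: &[Behavior], cursor: usize, action: Action) -> (Vec<usize>, usize) {
    let mut run = Vec::new();
    let mut next = cursor;
    let mut stopped = false;
    for (i, &behavior) in behaviors.iter().enumerate() {
        match behavior {
            // These two ignore the cursor entirely.
            Behavior::AlwaysRun => run.push(i),
            Behavior::NeverRun => {}
            // Systems before the cursor, or after we stopped, are skipped.
            _ if i < cursor || stopped => {}
            // Stop *before* a breakpoint that lies past the cursor.
            Behavior::Break if action == Action::Continue && i > cursor => {
                stopped = true;
                next = i;
            }
            _ => {
                run.push(i);
                next = i + 1;
                stopped = action == Action::Step;
            }
        }
    }
    // Reaching the end of the frame wraps the cursor around (assumption).
    if !stopped || next >= behaviors.len() {
        next = 0;
    }
    (run, next)
}
```

In this model, `AlwaysRun` systems appear in every frame's run list regardless of the cursor, which matches the note below about such systems still running every frame while stepping.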

### Demo

https://user-images.githubusercontent.com/857742/233630981-99f3bbda-9ca6-4cc4-a00f-171c4946dc47.mov

Breakout has been modified to use Stepping. The game runs normally for a

couple of seconds, then stepping is enabled and the game appears to

pause. A list of Schedules & Systems appears with a cursor at the first

System in the list. The demo then steps forward full frames using the

spacebar until the ball is about to hit a brick. Then we step system by

system as the ball impacts a brick, showing the cursor moving through

the individual systems. Finally the demo switches back to frame stepping

as the ball changes course.

### Limitations

Due to architectural constraints in bevy, there are some cases where system
stepping will not function as a user would expect.

#### Event-driven systems

Stepping does not support systems that are driven by `Event`s as events

are flushed after 1-2 frames. Although game systems are not running

while stepping, ignored systems are still running every frame, so events

will be flushed.

To the user, it looks as if stepping an event-driven system never
executes it. The system does execute, but the events have already been
flushed.

This can be resolved by changing event handling to use a buffer for

events, and only dropping an event once all readers have read it.

The work-around to allow these systems to properly execute during

stepping is to have them ignore stepping:

`app.add_systems(event_driven_system.ignore_stepping())`. This was done

in the breakout example to ensure sound played even while stepping.

#### Conditional Systems

When a system is stepped, it is given an opportunity to run. If the

conditions of the system say it should not run, it will not.

Similar to Event-driven systems, if a system is conditional, and that

condition is only true for a very small time window, then stepping the

system may not execute the system. This includes depending on any sort

of external clock.

This exhibits to the user as the system not always running when it is

stepped.

A solution to this limitation is to ensure any conditions are consistent

while stepping is enabled. For example, all systems that modify any

state the condition uses should also enable stepping.

#### State-transition Systems

Stepping is configured on the per-`Schedule` level, requiring the user

to have a `ScheduleLabel`.

To support state-transition systems, bevy generates needed schedules

dynamically. Currently it’s very difficult (if not impossible, I haven’t

verified) for the user to get the labels for these schedules.

Without ready access to the dynamically generated schedules, and a

resolution for the `Event` lifetime, **stepping of the state-transition

systems is not supported**

---

## Changelog

- `Schedule::run()` updated to consult `Stepping` Resource to determine
  which Systems to run each frame
- Added `Schedule.label` as a `BoxedSystemLabel`, along with supporting
  `Schedule::set_label()` and `Schedule::label()` methods
  - `Stepping` needed to know which `Schedule` was running, and prior to
    this PR, `Schedule` didn't track its own label
  - Would have preferred to add `Schedule::with_label()` and remove
    `Schedule::new()`, but this PR touches enough already
- Added calls to `Schedule.set_label()` to `App` and `World` as needed
- Added `Stepping` resource
- Added `Stepping::begin_frame()` system to `MainSchedulePlugin`
  - Run before `Main::run_main()`
  - Notifies any `Stepping` Resource a new render frame is starting

## Migration Guide

- Add a call to `Schedule::set_label()` for any custom `Schedule`
  - This is only required if the `Schedule` will be stepped

---------

Co-authored-by: Carter Anderson <mcanders1@gmail.com>

# Objective

The first part of #10569, split up from #11007.

The goal is to implement meshing support for Bevy's new geometric

primitives, starting with 2D primitives. 3D meshing will be added in a

follow-up, and we can consider removing the old mesh shapes completely.

## Solution

Add a `Meshable` trait that primitives need to implement to support

meshing, as suggested by the

[RFC](https://github.com/bevyengine/rfcs/blob/main/rfcs/12-primitive-shapes.md#meshing).

```rust
/// A trait for shapes that can be turned into a [`Mesh`].
pub trait Meshable {
    /// The output of [`Self::mesh`]. This can either be a [`Mesh`]
    /// or a builder used for creating a [`Mesh`].
    type Output;

    /// Creates a [`Mesh`] for a shape.
    fn mesh(&self) -> Self::Output;
}
```

This PR implements it for the following primitives:

- `Circle`

- `Ellipse`

- `Rectangle`

- `RegularPolygon`

- `Triangle2d`

The `mesh` method typically returns a builder-like struct such as

`CircleMeshBuilder`. This is needed to support shape-specific

configuration for things like mesh resolution or UV configuration:

```rust

meshes.add(Circle { radius: 0.5 }.mesh().resolution(64));

```

Note that if no configuration is needed, you can even skip calling

`mesh` because `From<MyPrimitive>` is implemented for `Mesh`:

```rust

meshes.add(Circle { radius: 0.5 });

```
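To show how the builder and the `From` conversions fit together, here is a self-contained toy version of the pattern that compiles without Bevy. Everything in it is a simplified stand-in: `ToyMesh` replaces `Mesh`, `add_mesh` mimics an `Assets::add`-style function taking `impl Into<...>`, the `build` method and the default resolution of 32 are assumptions, and the `Circle` and `CircleMeshBuilder` here are re-declared locally rather than taken from Bevy.

```rust
use std::f32::consts::TAU;

/// Toy stand-in for a mesh: only the 2D positions on the circle's rim.
#[derive(Debug, PartialEq)]
struct ToyMesh {
    positions: Vec<[f32; 2]>,
}

trait Meshable {
    type Output;
    fn mesh(&self) -> Self::Output;
}

#[derive(Clone, Copy)]
struct Circle {
    radius: f32,
}

struct CircleMeshBuilder {
    circle: Circle,
    resolution: usize,
}

impl CircleMeshBuilder {
    /// Shape-specific configuration: the number of rim vertices.
    fn resolution(mut self, resolution: usize) -> Self {
        self.resolution = resolution;
        self
    }

    fn build(&self) -> ToyMesh {
        let positions = (0..self.resolution)
            .map(|i| {
                let angle = TAU * i as f32 / self.resolution as f32;
                [self.circle.radius * angle.cos(), self.circle.radius * angle.sin()]
            })
            .collect();
        ToyMesh { positions }
    }
}

impl Meshable for Circle {
    type Output = CircleMeshBuilder;

    fn mesh(&self) -> Self::Output {
        // Default resolution chosen for this sketch.
        CircleMeshBuilder { circle: *self, resolution: 32 }
    }
}

// Both the configured builder and the bare primitive convert into a mesh,
// which is what lets callers skip `.mesh()` when defaults are fine.
impl From<CircleMeshBuilder> for ToyMesh {
    fn from(builder: CircleMeshBuilder) -> Self {
        builder.build()
    }
}

impl From<Circle> for ToyMesh {
    fn from(circle: Circle) -> Self {
        circle.mesh().build()
    }
}

/// Mimics an asset collection's `add` that accepts anything convertible.
fn add_mesh(mesh: impl Into<ToyMesh>) -> ToyMesh {
    mesh.into()
}
```

With these definitions, `add_mesh(Circle { radius: 0.5 }.mesh().resolution(64))` and `add_mesh(Circle { radius: 0.5 })` both type-check, mirroring the two call styles shown above.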

I also updated the `2d_shapes` example to use primitives, and tweaked

the colors to have better contrast against the dark background.

Before:

After:

Here you can see the UVs and different facing directions: (taken from

#11007, so excuse the 3D primitives at the bottom left)

---

## Changelog

- Added `bevy_render::mesh::primitives` module

- Added `Meshable` trait and implemented it for:

- `Circle`

- `Ellipse`

- `Rectangle`

- `RegularPolygon`

- `Triangle2d`

- Implemented `Default` and `Copy` for several 2D primitives

- Updated `2d_shapes` example to use primitives

- Tweaked colors in `2d_shapes` example to have better contrast against

the (new-ish) dark background

---------

Co-authored-by: Alice Cecile <alice.i.cecile@gmail.com>

# Objective

- Sending and receiving events of the same type in the same system is a

reasonably common need, generally due to event filtering.

- However, actually doing so is non-trivial, as the borrow checker
  hates simultaneous mutable and immutable access.

## Solution

- Demonstrate two sensible patterns for doing so.

- Update the `ManualEventReader` docs to be more clear and link to this

example.

---------

Co-authored-by: Alice Cecile <alice.i.cecil@gmail.com>

Co-authored-by: Joona Aalto <jondolf.dev@gmail.com>

Co-authored-by: ickk <git@ickk.io>

# Objective

Keep core dependencies up to date.

## Solution

Update the dependencies.

wgpu 0.19 only supports raw-window-handle (rwh) 0.6, so bumping that was

included in this.

The rwh 0.6 version bump is just the simplest way of doing it. There

might be a way we can take advantage of wgpu's new safe surface creation

api, but I'm not familiar enough with bevy's window management to

untangle it and my attempt ended up being a mess of lifetimes and rustc

complaining about missing trait impls (that were implemented). Thanks to

@MiniaczQ for the (much simpler) rwh 0.6 version bump code.

Unblocks https://github.com/bevyengine/bevy/pull/9172 and

https://github.com/bevyengine/bevy/pull/10812

~~This might be blocked on cpal and oboe updating their ndk versions to

0.8, as they both currently target ndk 0.7 which uses rwh 0.5.2~~ Tested

on android, and everything seems to work correctly (audio properly stops

when minimized, and plays when re-focusing the app).

---

## Changelog

- `wgpu` has been updated to 0.19! The long awaited arcanization has

been merged (for more info, see

https://gfx-rs.github.io/2023/11/24/arcanization.html), and Vulkan

should now be working again on Intel GPUs.

- Targeting WebGPU now requires that you add the new `webgpu` feature

(setting the `RUSTFLAGS` environment variable to

`--cfg=web_sys_unstable_apis` is still required). This feature currently

overrides the `webgl2` feature if you have both enabled (the `webgl2`

feature is enabled by default), so it is not recommended to add it as a

default feature to libraries without putting it behind a flag that

allows library users to opt out of it! In the future we plan on

supporting wasm binaries that can target both webgl2 and webgpu now that

wgpu added support for doing so (see

https://github.com/bevyengine/bevy/issues/11505).

- `raw-window-handle` has been updated to version 0.6.

## Migration Guide

- `bevy_render::instance_index::get_instance_index()` has been removed

as the webgl2 workaround is no longer required as it was fixed upstream

in wgpu. The `BASE_INSTANCE_WORKAROUND` shaderdef has also been removed.

- WebGPU now requires the new `webgpu` feature to be enabled. The

`webgpu` feature currently overrides the `webgl2` feature so you no

longer need to disable all default features and re-add them all when

targeting `webgpu`, but binaries built with both the `webgpu` and

`webgl2` features will only target the webgpu backend, and will only

work on browsers that support WebGPU.

- Places where you conditionally compiled things for webgl2 need to be
  updated because of this change, e.g.:
  - `#[cfg(any(not(feature = "webgl"), not(target_arch = "wasm32")))]`
    becomes
    `#[cfg(any(not(feature = "webgl"), not(target_arch = "wasm32"), feature = "webgpu"))]`
  - `#[cfg(all(feature = "webgl", target_arch = "wasm32"))]` becomes
    `#[cfg(all(feature = "webgl", target_arch = "wasm32", not(feature = "webgpu")))]`
  - `if cfg!(all(feature = "webgl", target_arch = "wasm32"))` becomes
    `if cfg!(all(feature = "webgl", target_arch = "wasm32", not(feature = "webgpu")))`

- `create_texture_with_data` now also takes a `TextureDataOrder`. You

can probably just set this to `TextureDataOrder::default()`

- `TextureFormat`'s `block_size` has been renamed to `block_copy_size`

- See the `wgpu` changelog for anything I might've missed:

https://github.com/gfx-rs/wgpu/blob/trunk/CHANGELOG.md

---------

Co-authored-by: François <mockersf@gmail.com>

# Objective

- Fixes #11516

## Solution

- Add Animated Material example (colors are hue-cycling smoothly

per-mesh)

Note: this example reproduces the perf issue found in #10610 pretty

consistently, with and without the changes from that PR included. Frame

time is sometimes around 4.3ms, other times around 12-14ms. It's pretty

random per run. I think this clears #10610 for merge.

# Objective

Fixes #11411

## Solution

- Added a simple example of how to create and configure custom schedules

that are run by the `Main` schedule.

- Spot checked some of the API docs used, fixed `App::add_schedule` docs

that referred to a function argument that was removed by #9600.

## Open Questions

- While spot checking the docs, I noticed that the `Schedule` label is

stored in a field called `name` instead of `label`. This seems

unintuitive since the term label is used everywhere else. Should we

change that field name? It was introduced in #9600. If so, I do think

this change would be out of scope for this PR that mainly adds the

example.

This pull request re-submits #10057, which was backed out for breaking

macOS, iOS, and Android. I've tested this version on macOS and Android

and on the iOS simulator.

# Objective

This pull request implements *reflection probes*, which generalize

environment maps to allow for multiple environment maps in the same

scene, each of which has an axis-aligned bounding box. This is a

standard feature of physically-based renderers and was inspired by [the

corresponding feature in Blender's Eevee renderer].

## Solution

This is a minimal implementation of reflection probes that allows

artists to define cuboid bounding regions associated with environment

maps. For every view, on every frame, a system builds up a list of the

nearest 4 reflection probes that are within the view's frustum and

supplies that list to the shader. The PBR fragment shader searches

through the list, finds the first containing reflection probe, and uses

it for indirect lighting, falling back to the view's environment map if

none is found. Both forward and deferred renderers are fully supported.
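The CPU-side gathering and the shader-side search can be sketched together in std-only Rust. This is a simplified model rather than the renderer's code: `Probe`, `nearest_probes`, and `first_containing` are hypothetical names, the probes are treated as axis-aligned boxes with a center and half extents instead of transformed unit cubes, and frustum culling is left out.

```rust
/// Simplified probe: an axis-aligned box (assumption made for this sketch).
#[derive(Clone, Copy, Debug, PartialEq)]
struct Probe {
    center: [f32; 3],
    half_extents: [f32; 3],
}

fn distance_squared(a: [f32; 3], b: [f32; 3]) -> f32 {
    (0..3).map(|i| (a[i] - b[i]).powi(2)).sum()
}

/// CPU side: keep the `max` probes closest to the view, nearest first.
fn nearest_probes(probes: &[Probe], view_position: [f32; 3], max: usize) -> Vec<Probe> {
    let mut sorted = probes.to_vec();
    sorted.sort_by(|a, b| {
        distance_squared(a.center, view_position)
            .total_cmp(&distance_squared(b.center, view_position))
    });
    sorted.truncate(max);
    sorted
}

/// Shader side: index of the first probe whose box contains the fragment,
/// or `None`, in which case the view's environment map is used.
fn first_containing(probes: &[Probe], fragment: [f32; 3]) -> Option<usize> {
    probes.iter().position(|probe| {
        (0..3).all(|i| (fragment[i] - probe.center[i]).abs() <= probe.half_extents[i])
    })
}
```

Because the list is sorted by distance to the view, the first containing probe is also the closest one to the view among those that contain the fragment.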

A reflection probe is an entity with a pair of components, *LightProbe*

and *EnvironmentMapLight* (as well as the standard *SpatialBundle*, to

position it in the world). The *LightProbe* component (along with the

*Transform*) defines the bounding region, while the

*EnvironmentMapLight* component specifies the associated diffuse and

specular cubemaps.

A frequent question is "why two components instead of just one?" The

advantages of this setup are:

1. It's readily extensible to other types of light probes, in particular

*irradiance volumes* (also known as ambient cubes or voxel global

illumination), which use the same approach of bounding cuboids. With a

single component that applies to both reflection probes and irradiance

volumes, we can share the logic that implements falloff and blending

between multiple light probes between both of those features.

2. It reduces duplication between the existing *EnvironmentMapLight* and

these new reflection probes. Systems can treat environment maps attached

to cameras the same way they treat environment maps applied to

reflection probes if they wish.

Internally, we gather up all environment maps in the scene and place

them in a cubemap array. At present, this means that all environment

maps must have the same size, mipmap count, and texture format. A

warning is emitted if this restriction is violated. We could potentially

relax this in the future as part of the automatic mipmap generation

work, which could easily do texture format conversion as part of its

preprocessing.

An easy way to generate reflection probe cubemaps is to bake them in

Blender and use the `export-blender-gi` tool that's part of the

[`bevy-baked-gi`] project. This tool takes a `.blend` file containing

baked cubemaps as input and exports cubemap images, pre-filtered with an

embedded fork of the [glTF IBL Sampler], alongside a corresponding

`.scn.ron` file that the scene spawner can use to recreate the

reflection probes.

Note that this is intentionally a minimal implementation, to aid

reviewability. Known issues are:

* Reflection probes are basically unsupported on WebGL 2, because WebGL

2 has no cubemap arrays. (Strictly speaking, you can have precisely one

reflection probe in the scene if you have no other cubemaps anywhere,

but this isn't very useful.)

* Reflection probes have no falloff, so reflections will abruptly change

when objects move from one bounding region to another.

* As mentioned before, all cubemaps in the world of a given type

(diffuse or specular) must have the same size, format, and mipmap count.

Future work includes:

* Blending between multiple reflection probes.

* A falloff/fade-out region so that reflected objects disappear

gradually instead of vanishing all at once.

* Irradiance volumes for voxel-based global illumination. This should

reuse much of the reflection probe logic, as they're both GI techniques

based on cuboid bounding regions.

* Support for WebGL 2, by breaking batches when reflection probes are

used.

These issues notwithstanding, I think it's best to land this with

roughly the current set of functionality, because this patch is useful

as is and adding everything above would make the pull request

significantly larger and harder to review.

---

## Changelog

### Added

* A new *LightProbe* component is available that specifies a bounding

region that an *EnvironmentMapLight* applies to. The combination of a

*LightProbe* and an *EnvironmentMapLight* offers *reflection probe*

functionality similar to that available in other engines.

[the corresponding feature in Blender's Eevee renderer]:

https://docs.blender.org/manual/en/latest/render/eevee/light_probes/reflection_cubemaps.html

[`bevy-baked-gi`]: https://github.com/pcwalton/bevy-baked-gi

[glTF IBL Sampler]: https://github.com/KhronosGroup/glTF-IBL-Sampler

# Objective

Expand the existing `Query` API to support more dynamic use cases, e.g. scripting.

## Prior Art

- #6390

- #8308

- #10037

## Solution

- Create a `QueryBuilder` with runtime methods to define the set of

component accesses for a built query.

- Create new `WorldQueryData` implementations `FilteredEntityMut` and `FilteredEntityRef` as variants of `EntityMut` and `EntityRef` that provide runtime-checked access to the components included in a given query.

- Add new methods to `Query` to create a "query lens" with a subset of the access of the initial query.

### Query Builder

The `QueryBuilder` API allows you to define a query at runtime. At its most basic, it simply creates a query with the corresponding type signature:

```rust

let query = QueryBuilder::<Entity, With<A>>::new(&mut world).build();

// is equivalent to

let query = QueryState::<Entity, With<A>>::new(&mut world);

```

Before calling `.build()` you also have the opportunity to add

additional accesses and filters. Here is a simple example where we add

additional filter terms:

```rust

let entity_a = world.spawn((A(0), B(0))).id();

let entity_b = world.spawn((A(0), C(0))).id();

let mut query_a = QueryBuilder::<Entity>::new(&mut world)

.with::<A>()

.without::<C>()

.build();

assert_eq!(entity_a, query_a.single(&world));

```

This alone is useful in that it allows you to decide at runtime which archetypes your query will match. However, it is also very limited. Consider a case like the following:

```rust

let query_a = QueryBuilder::<&A>::new(&mut world)

// Add an additional access

.data::<&B>()

.build();

```

This grants the query an additional read access to component `B`. However, we have no way of accessing the data while iterating, as the type signature still only includes `&A`. For an even more concrete example of this, consider dynamic components:

```rust

let query_a = QueryBuilder::<Entity>::new(&mut world)

// Adding a filter is easy since it doesn't need to be read later

.with_id(component_id_a)

// How do I access the data of this component?

.ref_id(component_id_b)

.build();

```

With this in mind, the `QueryBuilder` API seems somewhat incomplete by itself: we need some way of accessing the components dynamically. So here's one:

### Query Transmutation

If the problem is not having the component in the type signature why not

just add it? This PR also adds transmute methods to `QueryBuilder` and

`QueryState`. Here's a simple example:

```rust

world.spawn(A(0));

world.spawn((A(1), B(0)));

let mut query = QueryBuilder::<()>::new(&mut world)

.with::<B>()

.transmute::<&A>()

.build();

query.iter(&world).for_each(|a| assert_eq!(a.0, 1));

```

The `QueryState` and `QueryBuilder` transmute methods look quite similar

but are different in one respect. Transmuting a builder will always

succeed as it will just add the additional accesses needed for the new

terms if they weren't already included. Transmuting a `QueryState` will

panic in the case that the new type signature would give it access it

didn't already have, for example:

```rust

let query = QueryState::<(&A, Option<&B>)>::new(&mut world);

// This is fine, the access for Option<&A> is less restrictive than &A
query.transmute::<Option<&A>>(&world);

// Oh no, this would allow access to &B on entities that might not have it, so it panics
query.transmute::<&B>(&world);

// This is right out
query.transmute::<&C>(&world);

```
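To make the rule behind these three cases concrete, here is a toy model in plain Rust. It is only a sketch of the idea: the `Access` struct, its fields and `can_transmute_to` are hypothetical and much simpler than Bevy's real access tracking (no read/write distinction, no filters).

```rust
use std::collections::BTreeSet;

/// Toy access description: component IDs that must be present on every
/// matched entity, and component IDs that are only read when present.
#[derive(Debug, Default)]
struct Access {
    required: BTreeSet<u32>,
    optional: BTreeSet<u32>,
}

impl Access {
    /// A transmute is allowed when the target never needs more than the
    /// source already guarantees:
    /// - every component the target requires is required by the source;
    /// - every component the target reads optionally is known to the source.
    fn can_transmute_to(&self, target: &Access) -> bool {
        target.required.is_subset(&self.required)
            && target
                .optional
                .iter()
                .all(|id| self.required.contains(id) || self.optional.contains(id))
    }
}
```

With `A = 0`, `B = 1`, `C = 2`, a source of `(&A, Option<&B>)` accepts `Option<&A>` but rejects both `&B` (only optional in the source) and `&C` (unknown to the source), matching the comments in the example above.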

This is quite an appealing API to also have available on `Query`. However, it does pose one additional wrinkle: in order to change the iterator we need to create a new `QueryState` to back it. `Query` doesn't own its own state though, it just borrows it, so we need a place to borrow it from. This is why `QueryLens` exists: it is a place to store the new state so it can be borrowed when you call `.query()`, leaving you with an API like this:

```rust

fn function_that_takes_a_query(query: &Query<&A>) {

// ...

}

fn system(query: Query<(&A, &B)>) {

let lens = query.transmute_lens::<&A>();

let q = lens.query();

function_that_takes_a_query(&q);

}

```

Now you may be thinking: Hey, wait a second, you introduced the problem

with dynamic components and then described a solution that only works

for static components! Ok, you got me, I guess we need a bit more:

### Filtered Entity References

Currently the only way you can access dynamic components on entities

through a query is with either `EntityMut` or `EntityRef`, however these

can access all components and so conflict with all other accesses. This

PR introduces `FilteredEntityMut` and `FilteredEntityRef` as

alternatives that have additional runtime checking to prevent accessing

components that you shouldn't. This way you can build a query with a

`QueryBuilder` and actually access the components you asked for:

```rust

let mut query = QueryBuilder::<FilteredEntityRef>::new(&mut world)

.ref_id(component_id_a)

.with_id(component_id_b)

.build();

let entity_ref = query.single(&world);

// Returns Some(Ptr) as we have that component and are allowed to read it

let a = entity_ref.get_by_id(component_id_a);

// Will return None even though the entity does have the component, as we are not allowed to read it

let b = entity_ref.get_by_id(component_id_b);

```

For the most part these new structs have the exact same methods as their

non-filtered equivalents.
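The runtime check itself can be pictured with a std-only model. `FilteredRef` below is a hypothetical stand-in, not the real `FilteredEntityRef`: it assumes components are stored as raw bytes keyed by an integer id and that the query's readable set is known up front.

```rust
use std::collections::{HashMap, HashSet};

/// Hypothetical stand-in for a filtered entity reference.
struct FilteredRef<'w> {
    /// All components stored on the entity, keyed by component id.
    components: &'w HashMap<u32, Vec<u8>>,
    /// The component ids the query was granted read access to.
    readable: &'w HashSet<u32>,
}

impl<'w> FilteredRef<'w> {
    /// Returns the component bytes only if the query may read this id
    /// and the entity actually has the component.
    fn get_by_id(&self, id: u32) -> Option<&'w [u8]> {
        if !self.readable.contains(&id) {
            return None;
        }
        self.components.get(&id).map(|bytes| bytes.as_slice())
    }
}
```

The access check comes first, so a component that exists on the entity but was only used as a filter is indistinguishable from a missing one, which is the behaviour described for `component_id_b` above.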

Putting all of this together, we can do some truly dynamic ECS queries. Check out the `dynamic` example to see it in action:

```

Commands:

comp, c Create new components

spawn, s Spawn entities

query, q Query for entities

Enter a command with no parameters for usage.

> c A, B, C, Data 4

Component A created with id: 0

Component B created with id: 1

Component C created with id: 2

Component Data created with id: 3

> s A, B, Data 1

Entity spawned with id: 0v0

> s A, C, Data 0

Entity spawned with id: 1v0

> q &Data

0v0: Data: [1, 0, 0, 0]

1v0: Data: [0, 0, 0, 0]

> q B, &mut Data

0v0: Data: [2, 1, 1, 1]

> q B || C, &Data

0v0: Data: [2, 1, 1, 1]

1v0: Data: [0, 0, 0, 0]

```

## Changelog

- Add new `transmute_lens` methods to `Query`.

- Add new types `QueryBuilder`, `FilteredEntityMut`, `FilteredEntityRef` and `QueryLens`.

- `update_archetype_component_access` has been removed; archetype component accesses are now determined by the accesses set in `update_component_access`.

- Added method `set_access` to `WorldQuery`, this is called before

`update_component_access` for queries that have a restricted set of

accesses, such as those built by `QueryBuilder` or `QueryLens`. This is

primarily used by the `FilteredEntity*` variants and has an empty trait

implementation.

- Added method `get_state` to `WorldQuery` as a fallible version of

`init_state` when you don't have `&mut World` access.

## Future Work

Improve performance of `FilteredEntityMut` and `FilteredEntityRef`. Currently they have to determine the accesses a query has in a given archetype during iteration, which is far from ideal, especially since we already did the work when matching the archetype in the first place. To avoid making more internal API changes I have left it out of this PR.

---------

Co-authored-by: Mike Hsu <mike.hsu@gmail.com>

# Objective

Add support for presenting each UI tree on a specific window and

viewport, while making as few breaking changes as possible.

This PR is meant to resolve the following issues at once, since they're

all related.

- Fixes #5622

- Fixes #5570

- Fixes #5621

Adopted #5892, but started over since the current codebase has diverged significantly from the original PR branch. Also, I decided to propagate the component to children instead of recursively iterating over nodes in search of the root.

## Solution

Add a new optional component that can be inserted on UI root nodes and is propagated to children, to specify which camera the tree should render onto. This is then used to get the render target and the viewport for that UI tree. Since this component is optional, the default behavior should be to render onto the single camera (if only one exists) and warn of ambiguity if multiple cameras exist. This reduces the complexity for users with just one camera, while giving control in contexts where it matters.
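The default-camera rule reads roughly like the following std-only sketch. `Resolved` and `resolve_target_camera` are hypothetical names, and cameras are modelled as plain `u32` ids rather than entities.

```rust
/// Outcome of picking a camera for one UI tree (hypothetical type).
#[derive(Debug, PartialEq)]
enum Resolved {
    /// Render the tree onto this camera.
    Camera(u32),
    /// There is no camera to render onto.
    NoCamera,
    /// Several cameras exist and none was chosen explicitly; warn the user.
    Ambiguous,
}

/// `explicit` models the optional target component on the UI root,
/// `cameras` the ids of all cameras that currently exist.
fn resolve_target_camera(explicit: Option<u32>, cameras: &[u32]) -> Resolved {
    match (explicit, cameras) {
        (Some(id), _) => Resolved::Camera(id),
        (None, []) => Resolved::NoCamera,
        (None, [only]) => Resolved::Camera(*only),
        (None, _) => Resolved::Ambiguous,
    }
}
```

An explicit target always wins, so single-camera apps never need the component while multi-camera apps get a clear signal that they do.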

## Changelog

- Adds `TargetCamera(Entity)` component to specify which camera a node tree should be rendered into. If only one camera exists, this component is optional.

- Adds an example of rendering UI to a texture and using it as a

material in a 3D world.

- Fixes recalculation of physical viewport size when target scale factor

changes. This can happen when the window is moved between displays with

different DPI.

- Changes examples to demonstrate assigning UI to different viewports

and windows and make interactions in an offset viewport testable.

- Removes `UiCameraConfig`. UI visibility can now be controlled via a combination of an explicit `TargetCamera` and `Visibility` on the root nodes.

---------

Co-authored-by: davier <bricedavier@gmail.com>

Co-authored-by: Alice Cecile <alice.i.cecile@gmail.com>

Co-authored-by: Alice Cecile <alice.i.cecil@gmail.com>

> Replaces #5213

# Objective

Implement sprite tiling and [9 slice scaling](https://en.wikipedia.org/wiki/9-slice_scaling) for `bevy_sprite`, allowing slice scaling and texture tiling.

Basic scaling vs 9 slice scaling:

Slicing example:

<img width="481" alt="Screenshot 2022-07-05 at 15 05 49" src="https://user-images.githubusercontent.com/26703856/177336112-9e961af0-c0af-4197-aec9-430c1170a79d.png">

Tiling example:

<img width="1329" alt="Screenshot 2023-11-16 at 13 53 32" src="https://github.com/bevyengine/bevy/assets/26703856/14db39b7-d9e0-4bc3-ba0e-b1f2db39ae8f">

# Solution

- `SpriteBundle` now has a `scale_mode` component storing a `SpriteScaleMode` enum with three variants:
  - `Stretched` (default)
  - `Tiled` to have sprites tile horizontally and/or vertically
  - `Sliced` allowing 9 slicing the texture and optionally tiling some sections with a `TextureSlicer`.
- `bevy_sprite` has two extra systems to compute a `ComputedTextureSlices` if necessary:
  - One system reacts to changes on `Sprite`, `Handle<Image>` or `SpriteScaleMode`
  - The other listens to `AssetEvent<Image>` to compute slices on sprites when the texture is ready or changed
- I updated the `bevy_sprite` extraction stage to extract potentially multiple textures instead of one, depending on the presence of `ComputedTextureSlices`
- I added two examples showcasing the slicing and tiling feature.
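For readers unfamiliar with 9 slicing, the geometry behind the `Sliced` mode can be sketched in plain Rust. This is not the PR's slicing code: `Rect`, `spans` and `nine_slice` are hypothetical names, the border is assumed uniform on all four sides, and the target is assumed to be at least two borders wide and tall.

```rust
/// Axis-aligned rectangle in pixels (hypothetical helper type).
#[derive(Clone, Copy, Debug, PartialEq)]
struct Rect {
    x: f32,
    y: f32,
    w: f32,
    h: f32,
}

/// Splits the range `[0, len]` into (offset, length) spans:
/// leading border, stretchable middle, trailing border.
fn spans(len: f32, border: f32) -> [(f32, f32); 3] {
    [
        (0.0, border),
        (border, len - 2.0 * border),
        (len - border, border),
    ]
}

/// Returns nine (source, destination) rectangle pairs in row-major order.
/// Corners keep their size, edges stretch along one axis, and the center
/// stretches along both.
fn nine_slice(tex: (f32, f32), target: (f32, f32), border: f32) -> Vec<(Rect, Rect)> {
    let (sx, sy) = (spans(tex.0, border), spans(tex.1, border));
    let (dx, dy) = (spans(target.0, border), spans(target.1, border));
    let mut out = Vec::with_capacity(9);
    for row in 0..3 {
        for col in 0..3 {
            let src = Rect { x: sx[col].0, y: sy[row].0, w: sx[col].1, h: sy[row].1 };
            let dst = Rect { x: dx[col].0, y: dy[row].0, w: dx[col].1, h: dy[row].1 };
            out.push((src, dst));
        }
    }
    out
}
```

Each pair maps one region of the texture to one region of the drawn sprite, which is why the extraction stage may emit several textured quads for a single sprite.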

The addition of `ComputedTextureSlices` as a cache is to avoid querying the image data, to retrieve its dimensions, every frame in an extract or prepare stage. It also reacts to changes, so we can have stuff like this (tiling example):

https://github.com/bevyengine/bevy/assets/26703856/a349a9f3-33c3-471f-8ef4-a0e5dfce3b01

# Related

- [ ] Once #5103 or #10099 is merged I can enable tiling and slicing for

texture sheets as ui

# To discuss

There is another option: to consider slicing/tiling as part of the asset, using the new asset preprocessing, but I have no clue how to do it.

Also, instead of retrieving the image dimensions, we could use the same system as the sprite sheet and have the user give the image dimensions directly (grid). But I think it's less user friendly.

---------

Co-authored-by: Alice Cecile <alice.i.cecile@gmail.com>

Co-authored-by: ickshonpe <david.curthoys@googlemail.com>

Co-authored-by: Alice Cecile <alice.i.cecil@gmail.com>

# Objective

This PR is heavily inspired by

https://github.com/bevyengine/bevy/pull/7682

It aims to solve the same problem: allowing the user to extend the

tracing subscriber with extra layers.

(in my case, I'd like to use `use

metrics_tracing_context::{MetricsLayer, TracingContextLayer};`)

## Solution

I'm proposing a different api where the user has the opportunity to take

the existing `subscriber` and apply any transformations on it.

---

## Changelog

- Added an `update_subscriber` option on the `LogPlugin` that lets the user modify the `subscriber` (for example to extend it with more tracing `Layers`)

## Migration Guide

- Added a new field `update_subscriber` in the `LogPlugin`

---------

Co-authored-by: Charles Bournhonesque <cbournhonesque@snapchat.com>

# Objective

Issue #10243: rendering multiple triangles in the same place results in

flickering.

## Solution

Considered these alternatives:

- `depth_bias` may not work because of the high number of entities: creating a material per entity is not practical
- rendering at slightly different positions does not work, because when the camera is far away, float rounding causes the same issues (edit: assuming we have to use the same `depth_bias`)
- considered implementing a deterministic operation like `query.par_iter().flat_map(...).collect()` to be used in the `check_visibility` system (which would solve the issue since the query is deterministic), but could not figure out how to make it as cheap as the current approach with thread-local collectors (#11249)

So this PR adds an option to sort entities after the `check_visibility` system runs.

This should not be too bad, because after the visibility check only a handful of entities remain.

This is probably not the only source of non-determinism in Bevy, but it is the one I could find so far. At least it fixes the repro example.
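The effect of the sort can be shown without any engine code. In this sketch `merge_visible` is a hypothetical function and entities are modelled as `u64` ids; each inner `Vec` stands for one thread-local collector, whose arrival order may differ from run to run.

```rust
/// Merges per-thread lists of visible entity ids into one list.
/// Without the sort, the result depends on which thread finished first;
/// with it, the result is the same for any arrival order.
fn merge_visible(thread_local: Vec<Vec<u64>>, deterministic: bool) -> Vec<u64> {
    let mut visible: Vec<u64> = thread_local.into_iter().flatten().collect();
    if deterministic {
        visible.sort_unstable();
    }
    visible
}
```

The extra cost is a single sort over the entities that survived the visibility check, paid only when the option is enabled.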

## Changelog

- `DeterministicRenderingConfig` option to enable deterministic

rendering

## Test

<img width="1392" alt="image" src="https://github.com/bevyengine/bevy/assets/28969/c735bce1-3a71-44cd-8677-c19f6c0ee6bd">

---------

Co-authored-by: Alice Cecile <alice.i.cecile@gmail.com>

# Objective

Re this comment:

https://github.com/bevyengine/bevy/pull/11141#issuecomment-1872455313

Since https://github.com/bevyengine/bevy/pull/9822, Bevy automatically inserts `apply_deferred` between systems with dependencies where needed, so a manually inserted `apply_deferred` doesn't do anything useful, and in its current state this example does more harm than good.

## Solution

The example could be modified by disabling automatic `apply_deferred` insertion, but that would immediately upgrade this example from beginner level to upper intermediate. Most users don't need to disable automatic sync point insertion, and the remaining few who do probably already know how it works.

CC @hymm

# Objective

This pull request implements *reflection probes*, which generalize

environment maps to allow for multiple environment maps in the same

scene, each of which has an axis-aligned bounding box. This is a

standard feature of physically-based renderers and was inspired by [the

corresponding feature in Blender's Eevee renderer].

## Solution

This is a minimal implementation of reflection probes that allows

artists to define cuboid bounding regions associated with environment

maps. For every view, on every frame, a system builds up a list of the

nearest 4 reflection probes that are within the view's frustum and

supplies that list to the shader. The PBR fragment shader searches

through the list, finds the first containing reflection probe, and uses

it for indirect lighting, falling back to the view's environment map if

none is found. Both forward and deferred renderers are fully supported.
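The selection logic described above can be sketched on the CPU in plain Rust. This is an illustration under simplifying assumptions, not the PR's system or shader: `ProbeRegion`, `nearest_probes` and `select_cubemap` are hypothetical names, regions are treated as axis-aligned boxes, and the frustum test is omitted.

```rust
/// A cuboid bounding region with the index of its cubemap in the array.
/// (Hypothetical type; regions are assumed axis-aligned here.)
#[derive(Clone, Copy, Debug, PartialEq)]
struct ProbeRegion {
    center: [f32; 3],
    half_extents: [f32; 3],
    cubemap_index: u32,
}

impl ProbeRegion {
    fn contains(&self, point: [f32; 3]) -> bool {
        (0..3).all(|i| (point[i] - self.center[i]).abs() <= self.half_extents[i])
    }
}

/// Keeps at most `max` probes, nearest to the view position first.
fn nearest_probes(mut probes: Vec<ProbeRegion>, view_pos: [f32; 3], max: usize) -> Vec<ProbeRegion> {
    let dist_sq = |p: &ProbeRegion| -> f32 {
        (0..3).map(|i| (p.center[i] - view_pos[i]).powi(2)).sum()
    };
    probes.sort_by(|a, b| dist_sq(a).total_cmp(&dist_sq(b)));
    probes.truncate(max);
    probes
}

/// Returns the cubemap of the first probe containing `point`,
/// falling back to the view's own environment map if there is none.
fn select_cubemap(probes: &[ProbeRegion], point: [f32; 3], view_fallback: Option<u32>) -> Option<u32> {
    probes
        .iter()
        .find(|p| p.contains(point))
        .map(|p| p.cubemap_index)
        .or(view_fallback)
}
```

`nearest_probes` plays the role of the per-view system (with `max` set to 4), while `select_cubemap` mirrors the per-fragment search.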

A reflection probe is an entity with a pair of components, *LightProbe*

and *EnvironmentMapLight* (as well as the standard *SpatialBundle*, to

position it in the world). The *LightProbe* component (along with the

*Transform*) defines the bounding region, while the

*EnvironmentMapLight* component specifies the associated diffuse and

specular cubemaps.

A frequent question is "why two components instead of just one?" The

advantages of this setup are:

1. It's readily extensible to other types of light probes, in particular

*irradiance volumes* (also known as ambient cubes or voxel global

illumination), which use the same approach of bounding cuboids. With a single component that applies to both reflection probes and irradiance volumes, we can share the falloff and blending logic for multiple light probes across both of those features.

2. It reduces duplication between the existing *EnvironmentMapLight* and

these new reflection probes. Systems can treat environment maps attached

to cameras the same way they treat environment maps applied to

reflection probes if they wish.

Internally, we gather up all environment maps in the scene and place

them in a cubemap array. At present, this means that all environment

maps must have the same size, mipmap count, and texture format. A

warning is emitted if this restriction is violated. We could potentially

relax this in the future as part of the automatic mipmap generation

work, which could easily do texture format conversion as part of its

preprocessing.

An easy way to generate reflection probe cubemaps is to bake them in

Blender and use the `export-blender-gi` tool that's part of the

[`bevy-baked-gi`] project. This tool takes a `.blend` file containing

baked cubemaps as input and exports cubemap images, pre-filtered with an

embedded fork of the [glTF IBL Sampler], alongside a corresponding

`.scn.ron` file that the scene spawner can use to recreate the

reflection probes.

Note that this is intentionally a minimal implementation, to aid

reviewability. Known issues are:

* Reflection probes are basically unsupported on WebGL 2, because WebGL

2 has no cubemap arrays. (Strictly speaking, you can have precisely one

reflection probe in the scene if you have no other cubemaps anywhere,

but this isn't very useful.)

* Reflection probes have no falloff, so reflections will abruptly change

when objects move from one bounding region to another.

* As mentioned before, all cubemaps in the world of a given type

(diffuse or specular) must have the same size, format, and mipmap count.

Future work includes:

* Blending between multiple reflection probes.

* A falloff/fade-out region so that reflected objects disappear

gradually instead of vanishing all at once.

* Irradiance volumes for voxel-based global illumination. This should

reuse much of the reflection probe logic, as they're both GI techniques

based on cuboid bounding regions.

* Support for WebGL 2, by breaking batches when reflection probes are

used.

These issues notwithstanding, I think it's best to land this with

roughly the current set of functionality, because this patch is useful

as is and adding everything above would make the pull request

significantly larger and harder to review.

---

## Changelog

### Added

* A new *LightProbe* component is available that specifies a bounding

region that an *EnvironmentMapLight* applies to. The combination of a

*LightProbe* and an *EnvironmentMapLight* offers *reflection probe*

functionality similar to that available in other engines.

[the corresponding feature in Blender's Eevee renderer]:

https://docs.blender.org/manual/en/latest/render/eevee/light_probes/reflection_cubemaps.html

[`bevy-baked-gi`]: https://github.com/pcwalton/bevy-baked-gi

[glTF IBL Sampler]: https://github.com/KhronosGroup/glTF-IBL-Sampler

# Objective

Lightmaps, textures that store baked global illumination, have been a

mainstay of real-time graphics for decades. Bevy currently has no

support for them, so this pull request implements them.

## Solution

The new `Lightmap` component can be attached to any entity that contains

a `Handle<Mesh>` and a `StandardMaterial`. When present, it will be

applied in the PBR shader. Because multiple lightmaps are frequently

packed into atlases, each lightmap may have its own UV boundaries within

its texture. An `exposure` field is also provided, to control the

brightness of the lightmap.
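As an illustration of the per-lightmap UV boundaries, the remapping from a mesh's lightmap UV to a position inside an atlas might look like the sketch below. `lightmap_uv` and its parameters are hypothetical names, and the actual shader may do this differently.

```rust
/// Maps a mesh-space lightmap UV in `[0, 1]²` into the sub-rectangle of the
/// atlas texture given by `rect_min` and `rect_max` (also in UV units).
fn lightmap_uv(uv: [f32; 2], rect_min: [f32; 2], rect_max: [f32; 2]) -> [f32; 2] {
    [
        rect_min[0] + uv[0] * (rect_max[0] - rect_min[0]),
        rect_min[1] + uv[1] * (rect_max[1] - rect_min[1]),
    ]
}
```

With a rectangle of `(0, 0)` to `(1, 1)` the function is the identity, which corresponds to a lightmap that occupies its whole texture.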

Note that this PR doesn't provide any way to bake the lightmaps. That

can be done with [The Lightmapper] or another solution, such as Unity's

Bakery.

---

## Changelog

### Added

* A new component, `Lightmap`, is available, for baked global

illumination. If your mesh has a second UV channel (UV1), and you attach

this component to the entity with that mesh, Bevy will apply the texture

referenced in the lightmap.

[The Lightmapper]: https://github.com/Naxela/The_Lightmapper

---------

Co-authored-by: Carter Anderson <mcanders1@gmail.com>

# Objective

Provide an example of how to achieve pixel-perfect "grid snapping" in 2D

via rendering to a texture. This is a common use case in retro pixel art

game development.

## Solution

Render sprites to a canvas via a Camera, then use another (scaled up)

Camera to render the resulting canvas to the screen. This example is

based on the `3d/render_to_texture.rs` example. Furthermore, this

example demonstrates mixing retro-style graphics with high-resolution

graphics, as well as pixel-snapped rendering of a

`MaterialMesh2dBundle`.

# Objective

- Fixes #10518

## Solution

I've added a method to `LoadContext`, `load_direct_with_reader`, which

mirrors the behaviour of `load_direct` with a single key difference: it

is provided with the `Reader` by the caller, rather than getting it from

the contained `AssetServer`. This allows for an `AssetLoader` to process

its `Reader` stream, and then directly hand the results off to the

`LoadContext` to handle further loading. The outer `AssetLoader` can

control how the `Reader` is interpreted by providing a relevant

`AssetPath`.

For example, a Gzip decompression loader could process the asset

`images/my_image.png.gz` by decompressing the bytes, then handing the

decompressed result to the `LoadContext` with the new path

`images/my_image.png.gz/my_image.png`. This intuitively reflects the

nature of contained assets, whilst avoiding unintended behaviour, since

the generated path cannot be a real file path (a file and folder of the

same name cannot coexist in most file-systems).

```rust

#[derive(Asset, TypePath)]

pub struct GzAsset {

pub uncompressed: ErasedLoadedAsset,

}

#[derive(Default)]

pub struct GzAssetLoader;

impl AssetLoader for GzAssetLoader {

type Asset = GzAsset;

type Settings = ();

type Error = GzAssetLoaderError;

fn load<'a>(

&'a self,

reader: &'a mut Reader,

_settings: &'a (),

load_context: &'a mut LoadContext,

) -> BoxedFuture<'a, Result<Self::Asset, Self::Error>> {

Box::pin(async move {

let compressed_path = load_context.path();

let file_name = compressed_path

.file_name()

.ok_or(GzAssetLoaderError::IndeterminateFilePath)?

.to_string_lossy();

let uncompressed_file_name = file_name

.strip_suffix(".gz")

.ok_or(GzAssetLoaderError::IndeterminateFilePath)?;

let contained_path = compressed_path.join(uncompressed_file_name);

let mut bytes_compressed = Vec::new();

reader.read_to_end(&mut bytes_compressed).await?;

let mut decoder = GzDecoder::new(bytes_compressed.as_slice());

let mut bytes_uncompressed = Vec::new();

decoder.read_to_end(&mut bytes_uncompressed)?;

// Now that we have decompressed the asset, let's pass it back to the

// context to continue loading

let mut reader = VecReader::new(bytes_uncompressed);

let uncompressed = load_context

.load_direct_with_reader(&mut reader, contained_path)

.await?;

Ok(GzAsset { uncompressed })

})

}

fn extensions(&self) -> &[&str] {

&["gz"]

}

}

```
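The path derivation used by the loader above can be isolated and checked with only the standard library. `contained_path` is a hypothetical helper name; it returns `None` where the loader would return `IndeterminateFilePath`.

```rust
use std::path::{Path, PathBuf};

/// Derives the path of the asset contained in a `.gz` file by appending the
/// file name, minus its `.gz` suffix, to the compressed path itself.
fn contained_path(compressed: &Path) -> Option<PathBuf> {
    let file_name = compressed.file_name()?.to_string_lossy();
    let inner = file_name.strip_suffix(".gz")?;
    Some(compressed.join(inner))
}
```

For `images/my_image.png.gz` this yields `images/my_image.png.gz/my_image.png`, whose extension is what selects the inner asset loader.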

Because this example is so prudent, I've included an

`asset_decompression` example which implements this exact behaviour:

```rust

fn main() {

App::new()

.add_plugins(DefaultPlugins)

.init_asset::<GzAsset>()

.init_asset_loader::<GzAssetLoader>()

.add_systems(Startup, setup)

.add_systems(Update, decompress::<Image>)

.run();

}

fn setup(mut commands: Commands, asset_server: Res<AssetServer>) {

commands.spawn(Camera2dBundle::default());

commands.spawn((

Compressed::<Image> {

compressed: asset_server.load("data/compressed_image.png.gz"),

..default()

},

Sprite::default(),

TransformBundle::default(),

VisibilityBundle::default(),

));

}

fn decompress<A: Asset>(

mut commands: Commands,

asset_server: Res<AssetServer>,

mut compressed_assets: ResMut<Assets<GzAsset>>,

query: Query<(Entity, &Compressed<A>)>,

) {

for (entity, Compressed { compressed, .. }) in query.iter() {

let Some(GzAsset { uncompressed }) = compressed_assets.remove(compressed) else {

continue;

};

let uncompressed = uncompressed.take::<A>().unwrap();

commands

.entity(entity)

.remove::<Compressed<A>>()

.insert(asset_server.add(uncompressed));

}

}

```

A key limitation to this design is how to type the internally loaded

asset, since the example `GzAssetLoader` is unaware of the internal

asset type `A`. As such, in this example I store the contained asset as

an `ErasedLoadedAsset`, and leave it up to the consumer of the `GzAsset`

to handle typing the final result, which is the purpose of the

`decompress` system. This limitation can be worked around by providing

type information to the `GzAssetLoader`, such as `GzAssetLoader<Image,

ImageAssetLoader>`, but this would require registering the asset loader

for every possible decompression target.

Aside from this limitation, nested asset containerisation works as an

end user would expect; if the user registers a `TarAssetLoader`, and a

`GzAssetLoader`, then they can load assets with compound

containerisation, such as `images.tar.gz`.

---

## Changelog

- Added `LoadContext::load_direct_with_reader`

- Added `asset_decompression` example

## Notes

- While I believe my implementation of a Gzip asset loader is

reasonable, I haven't included it as a public feature of `bevy_asset` to

keep the scope of this PR as focussed as possible.

- I have included `flate2` as a `dev-dependency` for the example; it is

not included in the main dependency graph.

# Objective

- 2d materials have subtle differences with 3d materials that aren't

obvious to beginners

## Solution

- Add an example that shows how to make a 2d material

# Objective

<img width="1920" alt="Screenshot 2023-04-26 at 01 07 34" src="https://user-images.githubusercontent.com/418473/234467578-0f34187b-5863-4ea1-88e9-7a6bb8ce8da3.png">

This PR adds both diffuse and specular light transmission capabilities

to the `StandardMaterial`, with support for screen space refractions.

This enables realistically representing a wide range of real-world

materials, such as:

- Glass (including frosted glass);

- Transparent and translucent plastics;

- Various liquids and gels;

- Gemstones;

- Marble;

- Wax;

- Paper;

- Leaves;

- Porcelain.

Unlike existing support for transparency, light transmission does not

rely on fixed function alpha blending, and therefore works with both

`AlphaMode::Opaque` and `AlphaMode::Mask` materials.

## Solution

- Introduces a number of transmission related fields in the `StandardMaterial`;
- For specular transmission:
  - Adds logic to take a view main texture snapshot after the opaque phase (in order to perform screen space refractions);
  - Introduces a new `Transmissive3d` phase to the renderer, to which all meshes with `transmission > 0.0` materials are sent.
  - Calculates a light exit point (of the approximate mesh volume) using `ior` and `thickness` properties
  - Samples the snapshot texture with an adaptive number of taps across a `roughness`-controlled radius enabling “blurry” refractions
- For diffuse transmission:
  - Approximates transmitted diffuse light by using a second, flipped + displaced, diffuse-only Lambertian lobe for each light source.
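One common way to obtain such an exit point is to refract the view direction at the surface using Snell's law and walk `thickness` units along the refracted ray. The sketch below assumes that approach; it is not taken from the PR's shader, and `refract` and `exit_point` are hypothetical helper names.

```rust
type Vec3 = [f32; 3];

fn dot(a: Vec3, b: Vec3) -> f32 {
    a[0] * b[0] + a[1] * b[1] + a[2] * b[2]
}

/// Refracts the unit incident direction `i` at a surface with unit normal `n`
/// (facing against `i`). `eta` is the ratio of refractive indices
/// (outside / inside). Returns `None` on total internal reflection.
fn refract(i: Vec3, n: Vec3, eta: f32) -> Option<Vec3> {
    let cos_i = dot(n, i);
    let k = 1.0 - eta * eta * (1.0 - cos_i * cos_i);
    if k < 0.0 {
        return None;
    }
    let s = eta * cos_i + k.sqrt();
    Some([eta * i[0] - s * n[0], eta * i[1] - s * n[1], eta * i[2] - s * n[2]])
}

/// Approximates where light leaves the volume: enter from air (index 1.0)
/// into a medium with index `ior`, then travel `thickness` units.
fn exit_point(surface_pos: Vec3, view_dir: Vec3, normal: Vec3, ior: f32, thickness: f32) -> Option<Vec3> {
    let t = refract(view_dir, normal, 1.0 / ior)?;
    Some([
        surface_pos[0] + t[0] * thickness,
        surface_pos[1] + t[1] * thickness,
        surface_pos[2] + t[2] * thickness,
    ])
}
```

Projecting the resulting point back to screen space would give the coordinates at which to sample the snapshot texture.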

## To Do

- [x] Figure out where `fresnel_mix()` is taking place, if at all, and

where `dielectric_specular` is being calculated, if at all, and update

them to use the `ior` value (Not a blocker, just a nice-to-have for more

correct BSDF)

- _To the best of my knowledge_, this is now taking place, after 964340cdd. The fresnel mix is actually "split" into two parts in our implementation, one `(1 - fresnel(...))` in the transmission, and `fresnel()` in the light implementations. A surface with more reflectance now will produce slightly dimmer transmission towards the grazing angle, as more of the light gets reflected.

- [x] Add `transmission_texture`

- [x] Add `diffuse_transmission_texture`

- [x] Add `thickness_texture`

- [x] Add `attenuation_distance` and `attenuation_color`

- [x] Connect values to glTF loader

- [x] `transmission` and `transmission_texture`

- [x] `thickness` and `thickness_texture`

- [x] `ior`

- [ ] `diffuse_transmission` and `diffuse_transmission_texture` (needs

upstream support in `gltf` crate, not a blocker)

- [x] Add support for multiple screen space refraction “steps”

- [x] Conditionally create no transmission snapshot texture at all if

`steps == 0`

- [x] Conditionally enable/disable screen space refraction transmission

snapshots

- [x] Read from depth pre-pass to prevent refracting pixels in front of

the light exit point

- [x] Use `interleaved_gradient_noise()` function for sampling blur in a

way that benefits from TAA

- [x] Drill down a TAA `#define`, tweak some aspects of the effect

conditionally based on it

- [x] Remove const array that's crashing under HLSL (unless a new `naga`

release with https://github.com/gfx-rs/naga/pull/2496 comes out before

we merge this)

- [ ] Look into alternatives to the `switch` hack for dynamically

indexing the const array (might not be needed, compilers seem to be

decent at expanding it)

- [ ] Add pipeline keys for gating transmission (do we really want/need

this?)

- [x] Tweak some material field/function names?

## A Note on Texture Packing

_This was originally added as a comment to the

`specular_transmission_texture`, `thickness_texture` and

`diffuse_transmission_texture` documentation, I removed it since it was

more confusing than helpful, and will likely be made redundant/will need

to be updated once we have a better infrastructure for preprocessing

assets_

Due to how channels are mapped, you can more efficiently use a single

shared texture image

for configuring the following:

- R - `specular_transmission_texture`

- G - `thickness_texture`

- B - _unused_

- A - `diffuse_transmission_texture`

The `KHR_materials_diffuse_transmission` glTF extension also defines a

`diffuseTransmissionColorTexture`,

that _we don't currently support_. One might choose to pack the

intensity and color textures together,

using RGB for the color and A for the intensity, in which case this

packing advice doesn't really apply.
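The channel layout above can be sketched as a small packing routine. This is only an illustration in plain Rust that works on raw byte slices; the function name `pack_transmission_maps` is hypothetical and not part of Bevy, and a real pipeline would load and save the images with an image library.

```rust
/// Packs three single-channel (8-bit) maps of equal size into one RGBA8 buffer.
///
/// Channel layout, following the note above:
/// R = specular transmission, G = thickness, B = unused, A = diffuse transmission.
///
/// Hypothetical helper for illustration only; not a Bevy API.
fn pack_transmission_maps(
    specular_transmission: &[u8],
    thickness: &[u8],
    diffuse_transmission: &[u8],
) -> Vec<u8> {
    // All three maps must describe the same number of pixels.
    assert_eq!(specular_transmission.len(), thickness.len());
    assert_eq!(specular_transmission.len(), diffuse_transmission.len());

    let mut rgba = Vec::with_capacity(specular_transmission.len() * 4);
    for i in 0..specular_transmission.len() {
        rgba.push(specular_transmission[i]); // R
        rgba.push(thickness[i]); // G
        rgba.push(0); // B (unused)
        rgba.push(diffuse_transmission[i]); // A
    }
    rgba
}
```

The resulting buffer could then be assigned to all three texture fields, since each one only reads its own channel.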

---

## Changelog

- Added a new `Transmissive3d` render phase for rendering specular

transmissive materials with screen space refractions

- Added rendering support for transmitted environment map light on the

`StandardMaterial` as a fallback for screen space refractions

- Added `diffuse_transmission`, `specular_transmission`, `thickness`,

`ior`, `attenuation_distance` and `attenuation_color` to the

`StandardMaterial`

- Added `diffuse_transmission_texture`, `specular_transmission_texture`,

`thickness_texture` to the `StandardMaterial`, gated behind a new

`pbr_transmission_textures` cargo feature (off by default, for maximum

hardware compatibility)

- Added `Camera3d::screen_space_specular_transmission_steps` for

controlling the number of “layers of transparency” rendered for

transmissive objects

- Added a `TransmittedShadowReceiver` component for enabling shadows in

(diffusely) transmitted light. (disabled by default, as it requires

carefully setting up the `thickness` to avoid self-shadow artifacts)

- Added support for the `KHR_materials_transmission`,

`KHR_materials_ior` and `KHR_materials_volume` glTF extensions

- Renamed items related to temporal jitter for greater consistency

## Migration Guide

- `SsaoPipelineKey::temporal_noise` has been renamed to

`SsaoPipelineKey::temporal_jitter`

- The `TAA` shader def (controlled by the presence of the

`TemporalAntiAliasSettings` component in the camera) has been replaced

with the `TEMPORAL_JITTER` shader def (controlled by the presence of the

`TemporalJitter` component in the camera)

- `MeshPipelineKey::TAA` has been replaced by

`MeshPipelineKey::TEMPORAL_JITTER`

- The `TEMPORAL_NOISE` shader def has been consolidated with

`TEMPORAL_JITTER`

# Objective

- Fixes #10133

## Solution

- Add a new example that focuses on using `Virtual` time

## Changelog

### Added

- new `virtual_time` example

### Changed

- moved `time` & `timers` examples to the new `examples/time` folder

# Objective

- Reduce log noise so users can see previous asset changes and other logs, such as those from asset reloading

- The example file is named differently than the example

## Solution

- Only print the asset content if there are asset events

- Rename the example file to `asset_processing`

# Objective

- Fixes #9382

## Solution

- Added a few extra notes about WebGPU's experimental state and the need to enable unstable APIs through certain attribute flags in `cargo_features.md` and the examples `README.md` files.

# Objective

Allow extending `Material`s (including the built-in `StandardMaterial`) with custom vertex/fragment shaders and additional data, to easily get PBR lighting with custom modifications, or otherwise extend a base material.

## Solution

- added `ExtendedMaterial<B: Material, E: MaterialExtension>` which

contains a base material and a user-defined extension.

- added example `extended_material` showing how to use it

- modified `AsBindGroup` to have "unprepared" functions that return raw resources / layout entries so that the extended material can combine them

Note: this doesn't currently work with array resources, as I can't figure out how to make `OwnedBindingResource::get_binding()` work: wgpu requires a `&'a [&'a TextureView]` and I have a `Vec<TextureView>`.

## Migration Guide

Manual implementations of `AsBindGroup` will need to be adjusted. The changes are pretty straightforward and can be seen in the diff for e.g. the `texture_binding_array` example.

---------

Co-authored-by: Robert Swain <robert.swain@gmail.com>

# Objective

The current `FixedTime` and `Time` have several problems. This pull request aims to fix many of them at once.

- If there is a longer pause between app updates, time will jump forward

a lot at once and fixed time will iterate on `FixedUpdate` for a large

number of steps. If the pause is merely seconds, then this will just

mean jerkiness and possible unexpected behaviour in gameplay. If the

pause is hours/days as with OS suspend, the game will appear to freeze

until it has caught up with real time.

- If calculating a fixed step takes longer than the specified fixed step period, the game will enter a death spiral where rendering each frame takes longer and longer because more and more fixed step updates are run per frame, and the game appears to freeze.

- There is no way to see current fixed step elapsed time inside fixed

steps. In order to track this, the game designer needs to add a custom

system inside `FixedUpdate` that calculates elapsed or step count in a

resource.

- Access to delta time inside a fixed step is through `FixedTime::period` rather than `Time::delta`. This, coupled with the fact that `Time::elapsed` isn't available at all for fixed steps, means that systems requiring time are implemented to run in either `FixedUpdate` or `Update`, but rarely work in both.

- Fixes #8800

- Fixes #8543

- Fixes #7439

- Fixes #5692

## Solution

- Create a generic `Time<T>` clock that has no processing logic but

which can be instantiated for multiple usages. This is also exposed for

users to add custom clocks.

- Create three standard clocks, `Time<Real>`, `Time<Virtual>` and

`Time<Fixed>`, all of which contain their individual logic.

- Create one "default" clock, which is just `Time` (or `Time<()>`),

which will be overwritten from `Time<Virtual>` on each update, and

`Time<Fixed>` inside `FixedUpdate` schedule. This way systems that do

not care specifically which time they track can work both in `Update`

and `FixedUpdate` without changes and the behaviour is intuitive.

- Add `max_delta` to virtual time update, which limits how much can be

added to virtual time by a single update. This fixes both the behaviour

after a long freeze, and also the death spiral by limiting how many

fixed timestep iterations there can be per update. Possible future work

could be adding `max_accumulator` to add a sort of "leaky bucket" time

processing to possibly smooth out jumps in time while keeping frame rate

stable.

- Many minor tweaks and clarifications to the time functions and their

documentation.
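The effect of `max_delta` on the fixed-step loop can be sketched with a small standalone model. This is not Bevy's actual implementation: the `Clocks` struct and its `update` method are hypothetical names, relative speed and pausing are ignored, and only `std` is used.

```rust
use std::time::Duration;

/// Hypothetical, simplified model of a virtual clock feeding a fixed-step
/// accumulator. Not the real `Time<Virtual>` / `Time<Fixed>` types.
struct Clocks {
    /// Upper bound on how much virtual time one update may add.
    max_delta: Duration,
    /// Length of one fixed step.
    timestep: Duration,
    /// Virtual time that has not yet been consumed by fixed steps.
    accumulated: Duration,
}

impl Clocks {
    fn new(max_delta: Duration, timestep: Duration) -> Self {
        Clocks { max_delta, timestep, accumulated: Duration::ZERO }
    }

    /// Advances the model by a real-time delta and returns how many fixed
    /// steps should run during this update.
    fn update(&mut self, real_delta: Duration) -> u32 {
        // Clamp the delta so a long pause cannot add unbounded virtual time.
        let virtual_delta = real_delta.min(self.max_delta);
        self.accumulated += virtual_delta;

        // Consume whole timesteps; the remainder carries over to the next update.
        let mut steps = 0;
        while self.accumulated >= self.timestep {
            self.accumulated -= self.timestep;
            steps += 1;
        }
        steps
    }
}
```

Because the clamp happens before accumulation, the number of fixed steps per update is bounded regardless of how long the application was suspended, which is what prevents both the post-freeze catch-up and the death spiral in this model.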

## Changelog

- `Time::raw_delta()`, `Time::raw_elapsed()` and related methods are

moved to `Time<Real>::delta()` and `Time<Real>::elapsed()` and now match

`Time` API

- `FixedTime` is now `Time<Fixed>` and matches `Time` API.

- `Time<Fixed>` default timestep is now 64 Hz, or 15625 microseconds.

- `Time` inside `FixedUpdate` now reflects fixed timestep time, making systems portable between `Update` and `FixedUpdate`.

- `Time::pause()`, `Time::set_relative_speed()` and related methods must

now be called as `Time<Virtual>::pause()` etc.

- There is a new `max_delta` setting in `Time<Virtual>` that limits how much the clock can jump in a single update. The default value is 0.25 seconds.

- Removed `on_fixed_timer()` condition as `on_timer()` does the right

thing inside `FixedUpdate` now.
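As a quick check of the default values listed above, the arithmetic can be written out with `std::time::Duration`. The helper names `period_from_hz` and `max_steps_per_update` are hypothetical and only serve this illustration; the step bound assumes an empty accumulator and an unscaled clock.

```rust
use std::time::Duration;

/// Period of a clock that ticks `hz` times per second.
/// Hypothetical helper, not a Bevy API.
fn period_from_hz(hz: f64) -> Duration {
    Duration::from_secs_f64(1.0 / hz)
}

/// Number of whole fixed steps that fit into one clamped update,
/// assuming nothing was left over from the previous update.
fn max_steps_per_update(max_delta: Duration, period: Duration) -> u128 {
    max_delta.as_nanos() / period.as_nanos()
}
```

With a 64 Hz timestep, `period_from_hz(64.0)` is exactly 15625 microseconds, and a `max_delta` of 0.25 seconds covers 16 such steps. A 60 Hz period, by contrast, is not a whole number of microseconds.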

## Migration Guide

- Change all `Res<Time>` instances that access `raw_delta()`,

`raw_elapsed()` and related methods to `Res<Time<Real>>` and `delta()`,

`elapsed()`, etc.

- Change access to `period` from `Res<FixedTime>` to `Res<Time<Fixed>>`

and use `delta()`.

- The default timestep has been changed from 60 Hz to 64 Hz. If you wish

to restore the old behaviour, use

`app.insert_resource(Time::<Fixed>::from_hz(60.0))`.

- Change `app.insert_resource(FixedTime::new(duration))` to

`app.insert_resource(Time::<Fixed>::from_duration(duration))`

- Change `app.insert_resource(FixedTime::new_from_secs(secs))` to

`app.insert_resource(Time::<Fixed>::from_seconds(secs))`

- Change `system.on_fixed_timer(duration)` to

`system.on_timer(duration)`. Timers in systems placed in `FixedUpdate`

schedule automatically use the fixed time clock.

- Change `ResMut<Time>` calls to `pause()`, `is_paused()`,