2022-12-25 00:23:13 +00:00

//! A simple glTF scene viewer made with Bevy.
//!
//! Just run `cargo run --release --example scene_viewer /path/to/model.gltf`,
//! replacing the path as appropriate.
//! In case of multiple scenes, you can select which to display by adapting the file path: `/path/to/model.gltf#Scene1`.
//! With no arguments it will load the `FlightHelmet` glTF model from the repository assets subdirectory.

Multiple Asset Sources (#9885)

This adds support for **Multiple Asset Sources**. You can now register a named `AssetSource`, which you can load assets from like you normally would:

```rust
let shader: Handle<Shader> = asset_server.load("custom_source://path/to/shader.wgsl");
```

Notice that `AssetPath` now supports `some_source://` syntax. The source can now be accessed through the `asset_path.source()` accessor.

Asset source names _are not required_. If one is not specified, the default asset source will be used:

```rust
let shader: Handle<Shader> = asset_server.load("path/to/shader.wgsl");
```

The behavior of the default asset source has not changed. For example, the `assets` folder is still the default.

As referenced in #9714

## Why?

**Multiple Asset Sources** enables a number of often-asked-for scenarios:

* **Loading some assets from other locations on disk**: you could create a `config` asset source that reads from the OS-default config folder (not implemented in this PR)
* **Loading some assets from a remote server**: you could register a new `remote` asset source that reads some assets from a remote HTTP server (not implemented in this PR)
* **Improved "Binary Embedded" Assets**: we can use this system for "embedded-in-binary assets", which allows us to replace the old `load_internal_asset!` approach, which couldn't support asset processing, didn't support hot-reloading _well_, and didn't make embedded assets accessible to the `AssetServer` (implemented in this PR)

## Adding New Asset Sources

An `AssetSource` is "just" a collection of `AssetReader`, `AssetWriter`, and `AssetWatcher` entries. You can configure new asset sources like this:

```rust
app.register_asset_source(
    "other",
    AssetSource::build()
        .with_reader(|| Box::new(FileAssetReader::new("other"))),
);
```

Note that `AssetSource` construction _must_ be repeatable, which is why a closure is accepted.

`AssetSourceBuilder` supports `with_reader`, `with_writer`, `with_watcher`, `with_processed_reader`, `with_processed_writer`, and `with_processed_watcher`.

Note that the "asset source" system replaces the old "asset providers" system.
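The builder takes closures because `AssetSource` construction must be repeatable. It stores a factory that can produce a fresh reader on demand, not a single reader instance. The following is a minimal std-only sketch of that pattern. The types `Reader`, `MemoryReader`, and `SourceBuilder` are hypothetical stand-ins for `AssetReader`, a concrete reader, and `AssetSourceBuilder`. This is not Bevy's implementation.

```rust
use std::collections::HashMap;

/// Hypothetical stand-in for an asset reader: returns the bytes stored at `path`.
trait Reader {
    fn read(&self, path: &str) -> Option<Vec<u8>>;
}

/// Hypothetical in-memory reader, used only for this illustration.
struct MemoryReader {
    files: HashMap<String, Vec<u8>>,
}

impl Reader for MemoryReader {
    fn read(&self, path: &str) -> Option<Vec<u8>> {
        self.files.get(path).cloned()
    }
}

/// A factory that can be called any number of times to build a fresh reader.
type ReaderFactory = Box<dyn Fn() -> Box<dyn Reader>>;

/// Hypothetical builder: it keeps the factory, not a reader instance,
/// so construction can be repeated whenever a new reader is needed.
struct SourceBuilder {
    reader: Option<ReaderFactory>,
}

impl SourceBuilder {
    fn new() -> Self {
        SourceBuilder { reader: None }
    }

    fn with_reader<F>(mut self, factory: F) -> Self
    where
        F: Fn() -> Box<dyn Reader> + 'static,
    {
        let factory: ReaderFactory = Box::new(factory);
        self.reader = Some(factory);
        self
    }

    /// Builds a new reader on every call; `None` if no reader was configured.
    fn build_reader(&self) -> Option<Box<dyn Reader>> {
        self.reader.as_ref().map(|factory| factory())
    }
}
```

Storing an `Fn` rather than an `FnOnce` is what makes repeated construction possible: the same factory can be invoked as often as needed.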

## Processing Multiple Sources

The `AssetProcessor` now supports multiple asset sources! Processed assets can refer to assets in other sources and everything "just works". Each `AssetSource` defines an unprocessed and processed `AssetReader` / `AssetWriter`.

Currently this is all or nothing for a given `AssetSource`. A given source is either processed or it is not. Later we might want to add support for "lazy asset processing", where an `AssetSource` (such as a remote server) can be configured to only process assets that are directly referenced by local assets (in order to save local disk space and avoid doing extra work).

## A new `AssetSource`: `embedded`

One of the big features motivating **Multiple Asset Sources** was improving our "embedded-in-binary" asset loading. To prove out the **Multiple Asset Sources** implementation, I chose to build a new `embedded` `AssetSource`, which replaces the old `load_internal_asset!` system.

The old `load_internal_asset!` approach had a number of issues:

* The `AssetServer` was not aware of (or capable of loading) internal assets.
* Because internal assets weren't visible to the `AssetServer`, they could not be processed (or used by assets that are processed). This would prevent things like "preprocessing shaders that depend on built-in Bevy shaders", which is something we desperately need to start doing.
* Each "internal asset" needed a UUID to be defined in-code to reference it. This was very manual and toilsome.

The new `embedded` `AssetSource` enables the following pattern:

```rust
// Called in `crates/bevy_pbr/src/render/mesh.rs`
embedded_asset!(app, "mesh.wgsl");

// later in the app
let shader: Handle<Shader> = asset_server.load("embedded://bevy_pbr/render/mesh.wgsl");
```

Notice that this always treats the crate name as the "root path", and it trims out the `src` path for brevity. This is generally predictable, but if you need to debug you can use the new `embedded_path!` macro to get a `PathBuf` that matches the one used by `embedded_asset!`.
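The path mapping described above can be sketched as a plain function. This sketch assumes the mapping is exactly "crate name, then the file path with a leading `src` removed". The functions `embedded_path_for` and `embedded_asset_path` are hypothetical helpers, not the `embedded_path!` macro itself.

```rust
use std::path::{Path, PathBuf};

/// Hypothetical helper: maps a file inside a crate to the path used under
/// `embedded://`, with the crate name as the root and a leading `src` dropped.
fn embedded_path_for(crate_name: &str, file_in_crate: &Path) -> PathBuf {
    // Keep the path unchanged if it does not start with `src`.
    let trimmed = file_in_crate.strip_prefix("src").unwrap_or(file_in_crate);
    Path::new(crate_name).join(trimmed)
}

/// Formats the full asset path string, always using `/` as the separator.
fn embedded_asset_path(crate_name: &str, file_in_crate: &Path) -> String {
    let path = embedded_path_for(crate_name, file_in_crate);
    let parts: Vec<String> = path
        .components()
        .map(|c| c.as_os_str().to_string_lossy().into_owned())
        .collect();
    format!("embedded://{}", parts.join("/"))
}
```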

You can also reference embedded assets in arbitrary assets, such as WGSL shaders:

```wgsl
#import "embedded://bevy_pbr/render/mesh.wgsl"
```

This also makes `embedded` assets go through the "normal" asset lifecycle. They are only loaded when they are actually used!

We are also discussing implicitly converting asset paths to/from shader modules, so in the future (not in this PR) you might be able to load it like this:

```wgsl
#import bevy_pbr::render::mesh::Vertex
```

Compare that to the old system!

```rust
pub const MESH_SHADER_HANDLE: Handle<Shader> = Handle::weak_from_u128(3252377289100772450);
load_internal_asset!(app, MESH_SHADER_HANDLE, "mesh.wgsl", Shader::from_wgsl);

// The mesh shader is _only_ accessible via MESH_SHADER_HANDLE and _cannot_ be loaded via the AssetServer.
```

## Hot Reloading `embedded`

You can enable `embedded` hot reloading by enabling the `embedded_watcher` cargo feature:

```sh
cargo run --features=embedded_watcher
```

## Improved Hot Reloading Workflow

First: the `filesystem_watcher` cargo feature has been renamed to `file_watcher` for brevity (and to match the `FileAssetReader` naming convention).

More importantly, hot asset reloading is no longer configured in-code by default. If you enable any asset watcher feature (such as `file_watcher` or `rust_source_watcher`), asset watching will be automatically enabled. This removes the need to _also_ enable hot reloading in your app code. That means you can replace this:

```rust
app.add_plugins(DefaultPlugins.set(AssetPlugin::default().watch_for_changes()))
```

with this:

```rust
app.add_plugins(DefaultPlugins)
```

If you want to hot reload assets in your app during development, just run your app like this:

```sh
cargo run --features=file_watcher
```

This means you can use the same code for development and deployment! To deploy an app, just don't include the watcher feature:

```sh
cargo build --release
```

My intent is to move to this approach for pretty much all dev workflows. In a future PR I would like to replace `AssetMode::ProcessedDev` with a `runtime-processor` cargo feature. We could then group all common "dev" cargo features under a single `dev` feature:

```sh
# this would enable file_watcher, embedded_watcher, runtime-processor, and more
cargo run --features=dev
```

## AssetMode

`AssetPlugin::Unprocessed`, `AssetPlugin::Processed`, and `AssetPlugin::ProcessedDev` have been replaced with an `AssetMode` field on `AssetPlugin`.

```rust
// before
app.add_plugins(DefaultPlugins.set(AssetPlugin::Processed { /* fields here */ }));

// after
app.add_plugins(DefaultPlugins.set(AssetPlugin { mode: AssetMode::Processed, ..default() }));
```

This aligns `AssetPlugin` with our other struct-like plugins. The old "source" and "destination" `AssetProvider` fields in the enum variants have been replaced by the "asset source" system. You no longer need to configure the `AssetPlugin` to "point" to custom asset providers.
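The move from enum variants to a `mode` field follows Rust's usual struct-update pattern. In this std-only sketch, the hypothetical types `Mode` and `PluginSettings` stand in for `AssetMode` and `AssetPlugin`. The default values (`Unprocessed` and `"assets"`) are assumptions chosen for illustration.

```rust
/// Hypothetical stand-in for `AssetMode` (not Bevy's type).
#[derive(Debug, Clone, Copy, PartialEq, Eq, Default)]
enum Mode {
    /// Assumed default for this sketch.
    #[default]
    Unprocessed,
    Processed,
    ProcessedDev,
}

/// Hypothetical struct-like plugin: each option is a named field with a default,
/// so callers override only what they need with struct update syntax.
#[derive(Debug, Clone, PartialEq)]
struct PluginSettings {
    mode: Mode,
    file_path: String,
}

impl Default for PluginSettings {
    fn default() -> Self {
        PluginSettings {
            mode: Mode::default(),
            file_path: "assets".to_string(), // placeholder default
        }
    }
}
```

Usage mirrors the "after" form above: `PluginSettings { mode: Mode::Processed, ..Default::default() }` sets one field and keeps every other default.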

## AssetServerMode

To improve the implementation of **Multiple Asset Sources**, `AssetServer` was made aware of whether or not it is using "processed" or "unprocessed" assets. You can check that like this:

```rust
if asset_server.mode() == AssetServerMode::Processed {
    /* do something */
}
```

Note that this refactor should also prepare the way for building "one to many processed output files", as it makes the server aware of whether it is loading from processed or unprocessed sources. This means we can store and read processed and unprocessed assets differently!
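A server that knows its mode can route reads differently for processed and unprocessed assets. The sketch below illustrates that idea using a hypothetical `ServerMode` enum and a `Source` struct holding two root folders. The folder names are placeholders, not Bevy's defaults.

```rust
/// Hypothetical stand-in for a server mode flag.
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
enum ServerMode {
    Unprocessed,
    Processed,
}

/// Hypothetical source with separate locations for raw and processed assets.
struct Source {
    unprocessed_root: String,
    processed_root: String,
}

impl Source {
    /// Picks where to read `path` from, based on the mode the server runs in.
    fn read_location(&self, mode: ServerMode, path: &str) -> String {
        let root = match mode {
            ServerMode::Unprocessed => &self.unprocessed_root,
            ServerMode::Processed => &self.processed_root,
        };
        format!("{root}/{path}")
    }
}
```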

## AssetPath can now refer to folders

The "file only" restriction has been removed from `AssetPath`. The `AssetServer::load_folder` API now accepts an `AssetPath` instead of a `Path`, meaning you can load folders from other asset sources!

## Improved AssetPath Parsing

`AssetPath` parsing was reworked to support sources, improve error messages, and enable parsing with a single pass over the string. `AssetPath::new` was replaced by `AssetPath::parse` and `AssetPath::try_parse`.
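The following single-pass parser over the input bytes makes the `source://path#label` syntax and the `parse` / `try_parse` split concrete. The `ParsedPath` and `ParseError` types, and the specific error cases, are hypothetical. Bevy's own `AssetPath` parser handles more cases and reports different errors.

```rust
/// Hypothetical parsed form of `source://path#label` (not Bevy's `AssetPath`).
#[derive(Debug, PartialEq, Eq)]
struct ParsedPath<'a> {
    source: Option<&'a str>,
    path: &'a str,
    label: Option<&'a str>,
}

/// Hypothetical error cases for this sketch.
#[derive(Debug, PartialEq, Eq)]
enum ParseError {
    /// `://` appeared with no source name in front of it.
    EmptySource,
    /// `#` appeared with no label after it.
    EmptyLabel,
}

/// Parses `input` in one left-to-right pass, recording where the first `://`
/// (before any `#`) and the first `#` occur, then slicing the input once.
fn try_parse(input: &str) -> Result<ParsedPath<'_>, ParseError> {
    let bytes = input.as_bytes();
    let mut source_end: Option<usize> = None; // byte index of ':' in "://"
    let mut label_start: Option<usize> = None; // byte index of '#'
    let mut i = 0;
    while i < bytes.len() {
        match bytes[i] {
            b':' if source_end.is_none()
                && label_start.is_none()
                && bytes[i..].starts_with(b"://") =>
            {
                source_end = Some(i);
                i += 3; // skip past "://"
                continue;
            }
            b'#' if label_start.is_none() => label_start = Some(i),
            _ => {}
        }
        i += 1;
    }

    let path_start = match source_end {
        Some(0) => return Err(ParseError::EmptySource),
        Some(end) => end + 3,
        None => 0,
    };
    let label = match label_start {
        Some(pos) if pos + 1 == input.len() => return Err(ParseError::EmptyLabel),
        Some(pos) => Some(&input[pos + 1..]),
        None => None,
    };
    Ok(ParsedPath {
        source: source_end.map(|end| &input[..end]),
        path: &input[path_start..label_start.unwrap_or(input.len())],
        label,
    })
}

/// Panicking convenience wrapper, mirroring the `parse` / `try_parse` split.
fn parse(input: &str) -> ParsedPath<'_> {
    try_parse(input).unwrap_or_else(|err| panic!("invalid asset path {input:?}: {err:?}"))
}
```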

## AssetWatcher broken out from AssetReader

`AssetReader` is no longer responsible for constructing `AssetWatcher`. This has been moved to `AssetSourceBuilder`.

## Duplicate Event Debouncing

Asset V2 already debounced duplicate filesystem events, but only _input_ events. Multiple input event types can produce the same _output_ `AssetSourceEvent`. Now that we have `embedded_watcher`, which does expensive file I/O on events, it made sense to debounce output events too, so I added that! This will also benefit the `AssetProcessor` by preventing integrity checks for duplicate events (and helps keep the noise down in trace logs).
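A small batch-based sketch can illustrate the difference between input and output debouncing. Several raw watcher events map to the same output event, and only the first occurrence of each output is kept. `RawEvent`, `SourceEvent`, and the mapping between them are hypothetical. Real debouncing is typically also time-windowed, which this sketch omits.

```rust
use std::collections::HashSet;

/// Hypothetical output event emitted by a source watcher.
#[derive(Debug, Clone, PartialEq, Eq, Hash)]
enum SourceEvent {
    Added(String),
    Modified(String),
    Removed(String),
}

/// Hypothetical raw input events from a filesystem watcher.
#[derive(Debug, Clone)]
enum RawEvent {
    Create(String),
    Write(String),
    Close(String),
    Delete(String),
}

/// Maps a raw event to an output event; several raw kinds map to the same output.
fn to_output(raw: &RawEvent) -> SourceEvent {
    match raw {
        RawEvent::Create(p) => SourceEvent::Added(p.clone()),
        RawEvent::Write(p) | RawEvent::Close(p) => SourceEvent::Modified(p.clone()),
        RawEvent::Delete(p) => SourceEvent::Removed(p.clone()),
    }
}

/// Debounces a batch on the *output* form: each distinct output event is
/// emitted once, in the order it first appeared.
fn debounce_outputs(batch: &[RawEvent]) -> Vec<SourceEvent> {
    let mut seen = HashSet::new();
    let mut out = Vec::new();
    for raw in batch {
        let event = to_output(raw);
        // `insert` returns false if this output was already emitted.
        if seen.insert(event.clone()) {
            out.push(event);
        }
    }
    out
}
```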

## Next Steps

* **Port Built-in Shaders**: Currently the primary (and essentially only) user of `load_internal_asset` in Bevy's source code is "built-in shaders". I chose not to do that in this PR for a few reasons:
  1. We need to add the ability to pass shader defs into shaders via meta files. Some shaders (such as MESH_VIEW_TYPES) need to pass shader def values in that are defined in code.
  2. We need to revisit the current shader module naming system. I think we _probably_ want to infer modules from source structure (at least by default), ideally in a way that can losslessly convert asset paths to/from shader modules (to enable the asset system to resolve modules using the asset server).
  3. I want to keep this change set minimal / get this merged first.
* **Deprecate `load_internal_asset`**: we can't do that until we do (1) and (2).
* **Relative Asset Paths**: This PR significantly increases the need for relative asset paths (which was already pretty high). Currently, when loading dependencies, each path is assumed to be absolute, which means that if in an `AssetLoader` you call `context.load("some/path/image.png")` it will assume that is the "default" asset source, _even if the current asset is in a different asset source_. This will cause breakage for AssetLoaders that are not designed to add the current source to whatever paths are being used. AssetLoaders should generally not need to be aware of the name of their current asset source, or need to think about the "current asset source" generally. We should build APIs that support relative asset paths and then encourage using relative paths as much as possible (both via API design and docs). Relative paths are also important because they will allow developers to move folders around (even across providers) without reprocessing, provided there is no path breakage.
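Here is one possible shape for the relative-path rules proposed above. It assumes that a `./` or `../` prefix marks a path as relative to the current asset and inheriting its source, while unprefixed paths keep today's default-source behavior. `Location` and `resolve` are hypothetical names. This is a sketch of the proposal, not an existing API.

```rust
/// Hypothetical fully qualified asset location.
#[derive(Debug, PartialEq, Eq)]
struct Location {
    /// `None` means the default asset source.
    source: Option<String>,
    path: String,
}

/// Resolves `dep`, referenced from the asset at `current`, under assumed rules:
/// - `name://...` names its source explicitly and is used unchanged;
/// - `./...` or `../...` is relative to `current`'s folder and inherits its source;
/// - anything else is a path in the default source (the current behavior).
fn resolve(current: &Location, dep: &str) -> Location {
    if let Some((source, path)) = dep.split_once("://") {
        return Location {
            source: Some(source.to_string()),
            path: path.to_string(),
        };
    }
    if !(dep.starts_with("./") || dep.starts_with("../")) {
        return Location {
            source: None,
            path: dep.to_string(),
        };
    }
    // Start from the folder containing the current asset (drop its file name).
    let mut parts: Vec<&str> = current.path.split('/').collect();
    parts.pop();
    for segment in dep.split('/') {
        match segment {
            "" | "." => {}
            ".." => {
                parts.pop();
            }
            other => parts.push(other),
        }
    }
    Location {
        source: current.source.clone(),
        path: parts.join("/"),
    }
}
```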

2023-10-13 16:17:32 -07:00

//!
//! If you want to hot reload asset changes, enable the `file_watcher` cargo feature.

2022-12-25 00:23:13 +00:00

use bevy::{
    prelude::*,
    render::primitives::{Aabb, Sphere},
};

2024-01-14 14:50:33 +01:00

#[path = "../../helpers/camera_controller.rs"]
mod camera_controller;

Add morph targets (#8158)

# Objective

- Add morph targets to `bevy_pbr` (closes #5756) & load them from glTF
- Supersedes #3722
- Fixes #6814

[Morph targets][1] (also known as shape interpolation, shape keys, or blend shapes) allow animating individual vertices with fine-grained controls. This is typically used for facial expressions. By specifying multiple poses as vertex offsets, and providing a weight for each pose, it is possible to define surprisingly realistic transitions between poses. Blending between multiple poses also allows composition. Morph targets are part of the [glTF standard][2] and are a feature of Unity, Unreal, and Babylon.js, so it is only natural to implement them in Bevy.

## Solution

This implementation of morph targets uses a 3D texture where each pixel is a component of an animated attribute. Each layer is a different target. We use a 2D texture for each target, because the number of attribute×components×animated vertices is expected to always exceed the maximum pixel row size limit of WebGL2. It closely mirrors the way skinning is implemented on the CPU side, while on the GPU side, the shader morph target implementation is a relatively trivial detail.

We add an optional `morph_texture` to the `Mesh` struct. The `morph_texture` is built through a method that accepts an iterator over attribute buffers.
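The packing described above can be illustrated by computing where a given component lands. This sketch makes three assumptions: one texture layer per target, vertex components stored contiguously and wrapped into rows of a fixed width, and 9 components per vertex (position, normal, tangent). `MorphTextureLayout` is hypothetical, and the exact layout in `bevy_pbr` may differ.

```rust
/// Hypothetical description of how one mesh's morph data is packed into a 3D texture.
struct MorphTextureLayout {
    /// Scalars stored per vertex (assumed: position 3 + normal 3 + tangent 3 = 9).
    components_per_vertex: u32,
    /// Texture width in texels; a target's data wraps onto new rows past this width.
    width: u32,
    /// Number of vertices in the mesh.
    vertex_count: u32,
}

impl MorphTextureLayout {
    /// Rows needed in each layer to hold one target's data (rounded up).
    fn layer_height(&self) -> u32 {
        let texels = self.vertex_count * self.components_per_vertex;
        (texels + self.width - 1) / self.width
    }

    /// Texel `(x, y, layer)` that stores `component` of `vertex` for morph `target`.
    fn texel(&self, target: u32, vertex: u32, component: u32) -> (u32, u32, u32) {
        let linear = vertex * self.components_per_vertex + component;
        (linear % self.width, linear / self.width, target)
    }
}
```

With a width of 2048 (an example value only) and 1000 vertices, one target needs 9000 texels, so each layer is 5 rows tall.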

The user-accessible `MorphWeights` component controls the blend of poses used by mesh instances (so that multiple copies of the same mesh may have different weights). All the weights are uploaded to a uniform buffer of 256 `f32`. We limit to 16 poses per mesh, and a total of 256 poses.
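Conceptually, each morph target stores per-vertex offsets, and the weights scale those offsets before they are added to the base mesh. The shader performs this on the GPU. The following CPU sketch of the same arithmetic shows the formula for one vertex position. `blend_position` is a hypothetical helper.

```rust
/// Applies morph target weights to one vertex position on the CPU:
/// result = base + sum over targets of (weight * offset).
fn blend_position(base: [f32; 3], offsets: &[[f32; 3]], weights: &[f32]) -> [f32; 3] {
    let mut out = base;
    // `zip` stops at the shorter slice, so targets without a weight are ignored.
    for (offset, &w) in offsets.iter().zip(weights) {
        for axis in 0..3 {
            out[axis] += w * offset[axis];
        }
    }
    out
}
```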

More literature:

* Old Babylon.js implementation (vertex attribute-based): https://www.eternalcoding.com/dev-log-1-morph-targets/
* Babylon.js implementation (similar to ours): https://www.youtube.com/watch?v=LBPRmGgU0PE
* GPU Gems 3: https://developer.nvidia.com/gpugems/gpugems3/part-i-geometry/chapter-3-directx-10-blend-shapes-breaking-limits
* Development Discord thread: https://discord.com/channels/691052431525675048/1083325980615114772

https://user-images.githubusercontent.com/26321040/231181046-3bca2ab2-d4d9-472e-8098-639f1871ce2e.mp4

https://github.com/bevyengine/bevy/assets/26321040/d2a0c544-0ef8-45cf-9f99-8c3792f5a258

## Acknowledgements

* Thanks to `storytold` for sponsoring the feature
* Thanks to `superdump` and `james7132` for guidance and help figuring out stuff

## Future work

- Handling fewer and more attributes (e.g. animated UVs, animated arbitrary attributes)
- Dynamic pose allocation (so that zero-weighted poses aren't uploaded to the GPU, for example), enabling many more total poses
- Better animation API, see #8357

----

## Changelog

- Add morph targets to Bevy meshes
- Support up to 64 poses per mesh of individually up to 116508 vertices, animation currently strictly limited to the position, normal and tangent attributes.
- Load a morph target using `Mesh::set_morph_targets`
- Add `VisitMorphTargets` and `VisitMorphAttributes` traits to `bevy_render`; this allows defining morph targets (a fairly complex and nested data structure) through iterators (i.e. a single copy instead of passing around buffers), see the documentation of those traits for details
- Add `MorphWeights` component exported by `bevy_render`
- `MorphWeights` control a mesh's morph target weights, blending between various poses defined as morph targets.
- `MorphWeights` are directly inherited by direct children (single level of hierarchy) of an entity. This allows controlling several mesh primitives through a unique entity _as per the glTF spec_.
- Add `MorphTargetNames` component, naming each index of loaded morph targets.
- Load morph target weights and buffers in `bevy_gltf`
- Handle morph target animations in `bevy_animation` (previously, it was a `warn!` log)
- Add the `MorphStressTest.gltf` asset for morph targets testing, taken from the glTF samples repo, CC0.
- Add morph target manipulation to `scene_viewer`
- Separate the animation code in `scene_viewer` from the rest of the code, reducing `#[cfg(feature)]` noise
- Add the `morph_targets.rs` example to show off how to manipulate morph targets, loading `MorphStressTest.gltf`
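The single-level inheritance rule in the changelog can be sketched with a plain parent/children table. Weights on an entity are copied to its direct children, and grandchildren are left alone. `Node` and `propagate_one_level` are hypothetical, index-based stand-ins for entities and a propagation system.

```rust
use std::collections::HashMap;

/// Hypothetical scene node: optional weights plus indices of its direct children.
struct Node {
    weights: Option<Vec<f32>>,
    children: Vec<usize>,
}

/// Copies weights from every weighted node to its *direct* children only
/// (one level of hierarchy); grandchildren are not touched.
fn propagate_one_level(nodes: &[Node]) -> HashMap<usize, Vec<f32>> {
    let mut mesh_weights = HashMap::new();
    for node in nodes {
        if let Some(weights) = &node.weights {
            for &child in &node.children {
                mesh_weights.insert(child, weights.clone());
            }
        }
    }
    mesh_weights
}
```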

## Migration Guide

- (very specialized, unlikely to be touched by 3rd parties)
- `MeshPipeline` now has a single `mesh_layouts` field rather than separate `mesh_layout` and `skinned_mesh_layout` fields. You should handle all possible mesh bind group layouts in your implementation.
- You should also properly handle the new `MORPH_TARGETS` shader def and mesh pipeline key. A new function is exposed to make this easier: `setup_morph_and_skinning_defs`
- The `MeshBindGroup` is now `MeshBindGroups`; cached bind groups are now accessed through the `get` method.

[1]: https://en.wikipedia.org/wiki/Morph_target_animation
[2]: https://registry.khronos.org/glTF/specs/2.0/glTF-2.0.html#morph-targets

---------

Co-authored-by: François <mockersf@gmail.com>
Co-authored-by: Carter Anderson <mcanders1@gmail.com>

2023-06-22 22:00:01 +02:00

#[cfg(feature = "animation")]
mod animation_plugin;
mod morph_viewer_plugin;

2022-12-25 00:23:13 +00:00

mod scene_viewer_plugin;

2024-01-14 14:50:33 +01:00

use camera_controller::{CameraController, CameraControllerPlugin};

2023-06-22 22:00:01 +02:00

use morph_viewer_plugin::MorphViewerPlugin;

2022-12-25 00:23:13 +00:00

use scene_viewer_plugin::{SceneHandle, SceneViewerPlugin};

fn main() {
    let mut app = App::new();

2024-01-16 06:53:21 -08:00

    app.add_plugins((

2022-12-25 00:23:13 +00:00

        DefaultPlugins
            .set(WindowPlugin {

2023-01-19 00:38:28 +00:00

                primary_window: Some(Window {

2022-12-25 00:23:13 +00:00

                    title: "bevy scene viewer".to_string(),
                    ..default()

2023-01-19 00:38:28 +00:00

                }),

2022-12-25 00:23:13 +00:00

                ..default()
            })

Multiple Asset Sources (#9885)

This adds support for **Multiple Asset Sources**. You can now register a

named `AssetSource`, which you can load assets from like you normally

would:

```rust

let shader: Handle<Shader> = asset_server.load("custom_source://path/to/shader.wgsl");

```

Notice that `AssetPath` now supports `some_source://` syntax. This can

now be accessed through the `asset_path.source()` accessor.

Asset source names _are not required_. If one is not specified, the

default asset source will be used:

```rust

let shader: Handle<Shader> = asset_server.load("path/to/shader.wgsl");

```

The behavior of the default asset source has not changed. Ex: the

`assets` folder is still the default.

As referenced in #9714

## Why?

**Multiple Asset Sources** enables a number of often-asked-for

scenarios:

* **Loading some assets from other locations on disk**: you could create

a `config` asset source that reads from the OS-default config folder

(not implemented in this PR)

* **Loading some assets from a remote server**: you could register a new

`remote` asset source that reads some assets from a remote http server

(not implemented in this PR)

* **Improved "Binary Embedded" Assets**: we can use this system for

"embedded-in-binary assets", which allows us to replace the old

`load_internal_asset!` approach, which couldn't support asset

processing, didn't support hot-reloading _well_, and didn't make

embedded assets accessible to the `AssetServer` (implemented in this pr)

## Adding New Asset Sources

An `AssetSource` is "just" a collection of `AssetReader`, `AssetWriter`,

and `AssetWatcher` entries. You can configure new asset sources like

this:

```rust

app.register_asset_source(

"other",

AssetSource::build()

.with_reader(|| Box::new(FileAssetReader::new("other")))

)

)

```

Note that `AssetSource` construction _must_ be repeatable, which is why

a closure is accepted.

`AssetSourceBuilder` supports `with_reader`, `with_writer`,

`with_watcher`, `with_processed_reader`, `with_processed_writer`, and

`with_processed_watcher`.

Note that the "asset source" system replaces the old "asset providers"

system.

## Processing Multiple Sources

The `AssetProcessor` now supports multiple asset sources! Processed

assets can refer to assets in other sources and everything "just works".

Each `AssetSource` defines an unprocessed and processed `AssetReader` /

`AssetWriter`.

Currently this is all or nothing for a given `AssetSource`. A given

source is either processed or it is not. Later we might want to add

support for "lazy asset processing", where an `AssetSource` (such as a

remote server) can be configured to only process assets that are

directly referenced by local assets (in order to save local disk space

and avoid doing extra work).

## A new `AssetSource`: `embedded`

One of the big features motivating **Multiple Asset Sources** was

improving our "embedded-in-binary" asset loading. To prove out the

**Multiple Asset Sources** implementation, I chose to build a new

`embedded` `AssetSource`, which replaces the old `load_interal_asset!`

system.

The old `load_internal_asset!` approach had a number of issues:

* The `AssetServer` was not aware of (or capable of loading) internal

assets.

* Because internal assets weren't visible to the `AssetServer`, they

could not be processed (or used by assets that are processed). This

would prevent things "preprocessing shaders that depend on built in Bevy

shaders", which is something we desperately need to start doing.

* Each "internal asset" needed a UUID to be defined in-code to reference

it. This was very manual and toilsome.

The new `embedded` `AssetSource` enables the following pattern:

```rust

// Called in `crates/bevy_pbr/src/render/mesh.rs`

embedded_asset!(app, "mesh.wgsl");

// later in the app

let shader: Handle<Shader> = asset_server.load("embedded://bevy_pbr/render/mesh.wgsl");

```

Notice that this always treats the crate name as the "root path", and it

trims out the `src` path for brevity. This is generally predictable, but

if you need to debug you can use the new `embedded_path!` macro to get a

`PathBuf` that matches the one used by `embedded_asset`.

You can also reference embedded assets in arbitrary assets, such as WGSL

shaders:

```rust

#import "embedded://bevy_pbr/render/mesh.wgsl"

```

This also makes `embedded` assets go through the "normal" asset

lifecycle. They are only loaded when they are actually used!

We are also discussing implicitly converting asset paths to/from shader

modules, so in the future (not in this PR) you might be able to load it

like this:

```rust

#import bevy_pbr::render::mesh::Vertex

```

Compare that to the old system!

```rust

pub const MESH_SHADER_HANDLE: Handle<Shader> = Handle::weak_from_u128(3252377289100772450);

load_internal_asset!(app, MESH_SHADER_HANDLE, "mesh.wgsl", Shader::from_wgsl);

// The mesh asset is the _only_ accessible via MESH_SHADER_HANDLE and _cannot_ be loaded via the AssetServer.

```

## Hot Reloading `embedded`

You can enable `embedded` hot reloading by enabling the

`embedded_watcher` cargo feature:

```

cargo run --features=embedded_watcher

```

## Improved Hot Reloading Workflow

First: the `filesystem_watcher` cargo feature has been renamed to

`file_watcher` for brevity (and to match the `FileAssetReader` naming

convention).

More importantly, hot asset reloading is no longer configured in-code by

default. If you enable any asset watcher feature (such as `file_watcher`

or `rust_source_watcher`), asset watching will be automatically enabled.

This removes the need to _also_ enable hot reloading in your app code.

That means you can replace this:

```rust

app.add_plugins(DefaultPlugins.set(AssetPlugin::default().watch_for_changes()))

```

with this:

```rust

app.add_plugins(DefaultPlugins)

```

If you want to hot reload assets in your app during development, just

run your app like this:

```

cargo run --features=file_watcher

```

This means you can use the same code for development and deployment! To

deploy an app, just don't include the watcher feature

```

cargo build --release

```

My intent is to move to this approach for pretty much all dev workflows.

In a future PR I would like to replace `AssetMode::ProcessedDev` with a

`runtime-processor` cargo feature. We could then group all common "dev"

cargo features under a single `dev` feature:

```sh

# this would enable file_watcher, embedded_watcher, runtime-processor, and more

cargo run --features=dev

```

## AssetMode

`AssetPlugin::Unprocessed`, `AssetPlugin::Processed`, and

`AssetPlugin::ProcessedDev` have been replaced with an `AssetMode` field

on `AssetPlugin`.

```rust

// before

app.add_plugins(DefaultPlugins.set(AssetPlugin::Processed { /* fields here */ })

// after

app.add_plugins(DefaultPlugins.set(AssetPlugin { mode: AssetMode::Processed, ..default() })

```

This aligns `AssetPlugin` with our other struct-like plugins. The old

"source" and "destination" `AssetProvider` fields in the enum variants

have been replaced by the "asset source" system. You no longer need to

configure the AssetPlugin to "point" to custom asset providers.

## AssetServerMode

To improve the implementation of **Multiple Asset Sources**,

`AssetServer` was made aware of whether or not it is using "processed"

or "unprocessed" assets. You can check that like this:

```rust

if asset_server.mode() == AssetServerMode::Processed {

/* do something */

}

```

Note that this refactor should also prepare the way for building "one to

many processed output files", as it makes the server aware of whether it

is loading from processed or unprocessed sources. Meaning we can store

and read processed and unprocessed assets differently!

## AssetPath can now refer to folders

The "file only" restriction has been removed from `AssetPath`. The

`AssetServer::load_folder` API now accepts an `AssetPath` instead of a

`Path`, meaning you can load folders from other asset sources!

## Improved AssetPath Parsing

AssetPath parsing was reworked to support sources, improve error

messages, and to enable parsing with a single pass over the string.

`AssetPath::new` was replaced by `AssetPath::parse` and

`AssetPath::try_parse`.

## AssetWatcher broken out from AssetReader

`AssetReader` is no longer responsible for constructing `AssetWatcher`.

This has been moved to `AssetSourceBuilder`.

## Duplicate Event Debouncing

Asset V2 already debounced duplicate filesystem events, but this was

_input_ events. Multiple input event types can produce the same _output_

`AssetSourceEvent`. Now that we have `embedded_watcher`, which does

expensive file io on events, it made sense to debounce output events

too, so I added that! This will also benefit the AssetProcessor by

preventing integrity checks for duplicate events (and helps keep the

noise down in trace logs).

## Next Steps

* **Port Built-in Shaders**: Currently the primary (and essentially

only) user of `load_internal_asset` in Bevy's source code is "built-in

shaders". I chose not to do that in this PR for a few reasons:

1. We need to add the ability to pass shader defs in to shaders via meta

files. Some shaders (such as MESH_VIEW_TYPES) need to pass shader def

values in that are defined in code.

2. We need to revisit the current shader module naming system. I think

we _probably_ want to imply modules from source structure (at least by

default). Ideally in a way that can losslessly convert asset paths

to/from shader modules (to enable the asset system to resolve modules

using the asset server).

3. I want to keep this change set minimal / get this merged first.

* **Deprecate `load_internal_asset`**: we can't do that until we do (1)

and (2)

* **Relative Asset Paths**: This PR significantly increases the need for

relative asset paths (which was already pretty high). Currently when

loading dependencies, it is assumed to be an absolute path, which means

if in an `AssetLoader` you call `context.load("some/path/image.png")` it

will assume that is the "default" asset source, _even if the current

asset is in a different asset source_. This will cause breakage for

AssetLoaders that are not designed to add the current source to whatever

paths are being used. AssetLoaders should generally not need to be aware

of the name of their current asset source, or need to think about the

"current asset source" generally. We should build APIs that support

relative asset paths and then encourage using relative paths as much as

possible (both via api design and docs). Relative paths are also

important because they will allow developers to move folders around

(even across providers) without reprocessing, provided there is no path

breakage.
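Resolving a relative dependency path means taking the loading asset's folder, applying `.` and `..` segments, and keeping the same asset source. The std-only sketch below shows this. `resolve_relative` is a hypothetical helper, not a Bevy API. Clamping `..` at the source root is an arbitrary choice here; a real API might return an error instead.

```rust
/// Hypothetical helper: resolves `relative` against the folder containing
/// `current_path`, keeping the current asset's source. Uses '/' separators.
pub fn resolve_relative(current_source: Option<&str>, current_path: &str, relative: &str) -> String {
    let mut parts: Vec<&str> = current_path.split('/').filter(|p| !p.is_empty()).collect();
    parts.pop(); // drop the current file name, keeping its folder
    for segment in relative.split('/') {
        match segment {
            "" | "." => {}
            // `..` above the source root is clamped (pop on an empty Vec is a no-op).
            ".." => {
                parts.pop();
            }
            other => parts.push(other),
        }
    }
    let joined = parts.join("/");
    match current_source {
        Some(source) => format!("{source}://{joined}"),
        None => joined,
    }
}
```

Because the source is taken from the current asset, a loader never needs to know which source it is running in. This is the property the paragraph above asks for.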

2023-10-13 16:17:32 -07:00

            .set(AssetPlugin {
                file_path: std::env::var("CARGO_MANIFEST_DIR").unwrap_or_else(|_| ".".to_string()),
                ..default()
            }),

2023-06-21 22:51:03 +02:00

        CameraControllerPlugin,
        SceneViewerPlugin,

Add morph targets (#8158)

# Objective

- Add morph targets to `bevy_pbr` (closes #5756) & load them from glTF

- Supersedes #3722

- Fixes #6814

[Morph targets][1] (also known as shape interpolation, shape keys, or

blend shapes) allow animating individual vertices with fine-grained

controls. This is typically used for facial expressions. By specifying

multiple poses as vertex offsets and providing a set of weights for each

pose, it is possible to define surprisingly realistic transitions

between poses. Blending between multiple poses also allows composition.

Morph targets are part of the [glTF standard][2] and are a feature of

Unity, Unreal, and Babylon.js, so it is only natural to implement them

in bevy.
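Numerically, the blending described above adds a weighted sum of per-target vertex offsets to the base positions: p_v = b_v + Σ_i w_i · Δ_{i,v}. The PR does this on the GPU. The std-only sketch below is a CPU version meant only to make the formula concrete, and `blend_morph_targets` is a hypothetical name.

```rust
/// CPU sketch of morph-target blending: each output position is the base
/// position plus the weighted sum of that vertex's offset in every target.
pub fn blend_morph_targets(
    base: &[[f32; 3]],
    targets: &[Vec<[f32; 3]>],
    weights: &[f32],
) -> Vec<[f32; 3]> {
    assert_eq!(targets.len(), weights.len(), "expected one weight per morph target");
    base.iter()
        .enumerate()
        .map(|(vertex, position)| {
            let mut out = *position;
            // Accumulate w_i * offset_i for this vertex across all targets.
            for (target, &weight) in targets.iter().zip(weights) {
                let offset = target[vertex];
                for axis in 0..3 {
                    out[axis] += weight * offset[axis];
                }
            }
            out
        })
        .collect()
}
```

With a weight of 0 a target has no effect, and with a weight of 1 its full offset is applied. Intermediate and combined weights give the transitions and compositions mentioned above.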

## Solution

This implementation of morph targets uses a 3d texture where each pixel

is a component of an animated attribute. Each layer is a different

target. We use a 2d texture for each target, because the number of

attribute×components×animated vertices is expected to always exceed the

maximum pixel row size limit of WebGL2. It closely follows the way

skinning is implemented on the CPU side, while on the GPU side, the

shader morph target implementation is a relatively trivial detail.
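One way to picture this layout: each target is one layer of the 3D texture. Within a layer, every animated component of every vertex is stored linearly and wrapped into rows of a fixed width, because a single row would be too long. The std-only sketch below assumes 9 components per vertex (position, normal, and tangent, 3 floats each) and a caller-chosen row width. Both are assumptions, the function names are hypothetical, and the PR's exact packing may differ.

```rust
/// Assumed number of animated floats per vertex: position, normal, tangent (3 each).
pub const COMPONENTS_PER_VERTEX: u32 = 9;

/// Maps (target, vertex, component) to texel coordinates (x, y, layer) under the
/// hypothetical layout: one layer per target, rows of `width` texels.
pub fn morph_texel(target: u32, vertex: u32, component: u32, width: u32) -> (u32, u32, u32) {
    assert!(component < COMPONENTS_PER_VERTEX, "component out of range");
    let linear = vertex * COMPONENTS_PER_VERTEX + component;
    (linear % width, linear / width, target)
}

/// Number of rows each layer needs to hold `vertex_count` animated vertices.
pub fn layer_height(vertex_count: u32, width: u32) -> u32 {
    let texels = vertex_count * COMPONENTS_PER_VERTEX;
    (texels + width - 1) / width // ceiling division
}
```

Wrapping into rows keeps the width under the per-row limit. Adding vertices only adds rows to each layer.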

We add an optional `morph_texture` to the `Mesh` struct. The

`morph_texture` is built through a method that accepts an iterator over

attribute buffers.

The `MorphWeights` component, user-accessible, controls the blend of

poses used by mesh instances (so that multiple copies of the same mesh may

have different weights). All the weights are uploaded to a uniform

buffer of 256 `f32`. We limit to 16 poses per mesh, and a total of 256

poses.
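The std-only sketch below packs per-instance weights back to back into one fixed-size uniform array and rejects inputs that exceed the limits. The constants are placeholders taken from the paragraph above, and `pack_weights` and `PackError` are hypothetical.

```rust
/// Placeholder limits taken from the paragraph above.
pub const MAX_WEIGHTS_PER_MESH: usize = 16;
pub const MAX_TOTAL_WEIGHTS: usize = 256;

#[derive(Debug, PartialEq)]
pub enum PackError {
    /// One instance has more weights than a mesh may use.
    TooManyPerMesh(usize),
    /// The shared uniform array has no room left.
    BufferFull,
}

/// Packs each instance's weights back to back into one fixed-size array and
/// returns it together with the starting offset of every instance.
pub fn pack_weights(instances: &[Vec<f32>]) -> Result<(Vec<f32>, Vec<usize>), PackError> {
    let mut buffer: Vec<f32> = Vec::with_capacity(MAX_TOTAL_WEIGHTS);
    let mut offsets = Vec::with_capacity(instances.len());
    for weights in instances {
        if weights.len() > MAX_WEIGHTS_PER_MESH {
            return Err(PackError::TooManyPerMesh(weights.len()));
        }
        if buffer.len() + weights.len() > MAX_TOTAL_WEIGHTS {
            return Err(PackError::BufferFull);
        }
        offsets.push(buffer.len());
        buffer.extend_from_slice(weights);
    }
    // Unused slots stay zero, so they contribute nothing to any blend.
    buffer.resize(MAX_TOTAL_WEIGHTS, 0.0);
    Ok((buffer, offsets))
}
```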

More literature:

* Old Babylon.js implementation (vertex attribute-based):

https://www.eternalcoding.com/dev-log-1-morph-targets/

* Babylon.js implementation (similar to ours):

https://www.youtube.com/watch?v=LBPRmGgU0PE

* GPU gems 3:

https://developer.nvidia.com/gpugems/gpugems3/part-i-geometry/chapter-3-directx-10-blend-shapes-breaking-limits

* Development discord thread

https://discord.com/channels/691052431525675048/1083325980615114772

https://user-images.githubusercontent.com/26321040/231181046-3bca2ab2-d4d9-472e-8098-639f1871ce2e.mp4

https://github.com/bevyengine/bevy/assets/26321040/d2a0c544-0ef8-45cf-9f99-8c3792f5a258

## Acknowledgements

* Thanks to `storytold` for sponsoring the feature

* Thanks to `superdump` and `james7132` for guidance and help figuring

out stuff

## Future work

- Handling fewer and more attributes (e.g. animated UVs, animated

arbitrary attributes)

- Dynamic pose allocation (for example, so that zero-weighted poses aren't uploaded

to the GPU), enabling many more poses in total

- Better animation API, see #8357

----

## Changelog

- Add morph targets to bevy meshes

- Support up to 64 poses per mesh of individually up to 116508 vertices,

animation currently strictly limited to the position, normal and tangent

attributes.

- Load a morph target using `Mesh::set_morph_targets`

- Add `VisitMorphTargets` and `VisitMorphAttributes` traits to

`bevy_render`, this allows defining morph targets (a fairly complex and

nested data structure) through iterators (i.e. a single copy instead of

passing around buffers), see documentation of those traits for details

- Add `MorphWeights` component exported by `bevy_render`

- `MorphWeights` controls a mesh's morph target weights, blending between

various poses defined as morph targets.

- `MorphWeights` are inherited by direct children (a single level

of hierarchy) of an entity. This allows controlling several mesh

primitives through a unique entity _as per the glTF spec_.

- Add a `MorphTargetNames` component, naming each index of loaded morph

targets.

- Load morph targets weights and buffers in `bevy_gltf`

- Handle morph target animations in `bevy_animation` (previously, this

was a `warn!` log)

- Add the `MorphStressTest.gltf` asset for morph targets testing, taken

from the glTF samples repo, CC0.

- Add morph target manipulation to `scene_viewer`

- Separate the animation code in `scene_viewer` from the rest of the

code, reducing `#[cfg(feature)]` noise

- Add the `morph_targets.rs` example to show off how to manipulate morph

targets, loading `MorphStressTest.gltf`

## Migration Guide

- (very specialized, unlikely to be touched by 3rd parties)

- `MeshPipeline` now has a single `mesh_layouts` field rather than

separate `mesh_layout` and `skinned_mesh_layout` fields. You should

handle all possible mesh bind group layouts in your implementation

- You should also properly handle the new `MORPH_TARGETS` shader def and

mesh pipeline key. A new function is exposed to make this easier:

`setup_morph_and_skinning_defs`

- `MeshBindGroup` is now `MeshBindGroups`; cached bind groups are

now accessed through the `get` method.

[1]: https://en.wikipedia.org/wiki/Morph_target_animation

[2]:

https://registry.khronos.org/glTF/specs/2.0/glTF-2.0.html#morph-targets

---------

Co-authored-by: François <mockersf@gmail.com>

Co-authored-by: Carter Anderson <mcanders1@gmail.com>

2023-06-22 22:00:01 +02:00

        MorphViewerPlugin,

2023-06-21 22:51:03 +02:00

    ))

2023-03-17 18:45:34 -07:00

    .add_systems(Startup, setup)
    .add_systems(PreUpdate, setup_scene_after_load);

2022-12-25 00:23:13 +00:00



2023-06-22 22:00:01 +02:00

    #[cfg(feature = "animation")]
    app.add_plugins(animation_plugin::AnimationManipulationPlugin);

2022-12-25 00:23:13 +00:00

    app.run();
}

fn parse_scene(scene_path: String) -> (String, usize) {
    if scene_path.contains('#') {
        let gltf_and_scene = scene_path.split('#').collect::<Vec<_>>();
        if let Some((last, path)) = gltf_and_scene.split_last() {
            if let Some(index) = last
                .strip_prefix("Scene")
                .and_then(|index| index.parse::<usize>().ok())
            {
                return (path.join("#"), index);
            }
        }
    }
    (scene_path, 0)
}

fn setup(mut commands: Commands, asset_server: Res<AssetServer>) {
    let scene_path = std::env::args()
        .nth(1)
        .unwrap_or_else(|| "assets/models/FlightHelmet/FlightHelmet.gltf".to_string());
    info!("Loading {}", scene_path);
    let (file_path, scene_index) = parse_scene(scene_path);

Copy on Write AssetPaths (#9729)

# Objective

The `AssetServer` and `AssetProcessor` do a lot of `AssetPath` cloning

(across many threads). To store the path on the handle, to store paths

in dependency lists, to pass an owned path to the offloaded thread, to

pass a path to the `LoadContext`, etc. Cloning multiple string

allocations multiple times like this will add up. It is worth optimizing

this.

Referenced in #9714

## Solution

Added a new `CowArc<T>` type to `bevy_utils`, which behaves a lot like

`Cow<T>`, but the Owned variant is an `Arc<T>`. Use this in place of

`Cow<str>` and `Cow<Path>` on `AssetPath`.
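Cloning a `CowArc` either copies a `&'static` reference or increments an `Arc` reference count, so no string data is reallocated. The std-only sketch below shows a simplified version of this idea. Bevy's actual `CowArc` has more variants and trait impls, and the `from_owned` constructor here is hypothetical.

```rust
use std::ops::Deref;
use std::sync::Arc;

/// Simplified copy-on-write pointer whose owned variant is an `Arc`.
pub enum CowArc<T: ?Sized + 'static> {
    /// Borrowed for the whole program (e.g. a string literal): cloning copies a pointer.
    Static(&'static T),
    /// Shared ownership: cloning only increments a reference count.
    Owned(Arc<T>),
}

impl<T: ?Sized + 'static> Clone for CowArc<T> {
    fn clone(&self) -> Self {
        match self {
            CowArc::Static(value) => CowArc::Static(*value),
            CowArc::Owned(arc) => CowArc::Owned(Arc::clone(arc)),
        }
    }
}

impl<T: ?Sized + 'static> Deref for CowArc<T> {
    type Target = T;

    fn deref(&self) -> &T {
        match self {
            CowArc::Static(value) => *value,
            CowArc::Owned(arc) => &**arc,
        }
    }
}

impl CowArc<str> {
    /// Hypothetical constructor: moves a `String` into a shared `Arc<str>` once.
    pub fn from_owned(s: String) -> Self {
        CowArc::Owned(Arc::from(s))
    }
}
```

Paths built from string literals never allocate. A path built at runtime is allocated once, and every later clone shares that allocation across threads.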

---

## Changelog

- `AssetPath` now internally uses `CowArc`, making clone operations much

cheaper

- `AssetPath` now serializes as `AssetPath("some_path.extension#Label")`

instead of as `AssetPath { path: "some_path.extension", label:

Some("Label") }`

## Migration Guide

```rust
// Old
AssetPath::new("logo.png", None);
// New
AssetPath::new("logo.png");

// Old
AssetPath::new("scene.gltf", Some("Mesh0"));
// New
AssetPath::new("scene.gltf").with_label("Mesh0");
```

`AssetPath` now serializes as `AssetPath("some_path.extension#Label")`

instead of as `AssetPath { path: "some_path.extension", label:

Some("Label") }`

---------

Co-authored-by: Pascal Hertleif <killercup@gmail.com>

2023-09-09 16:15:10 -07:00

    commands.insert_resource(SceneHandle::new(asset_server.load(file_path), scene_index));

2022-12-25 00:23:13 +00:00

}

fn setup_scene_after_load(
    mut commands: Commands,
    mut setup: Local<bool>,
    mut scene_handle: ResMut<SceneHandle>,

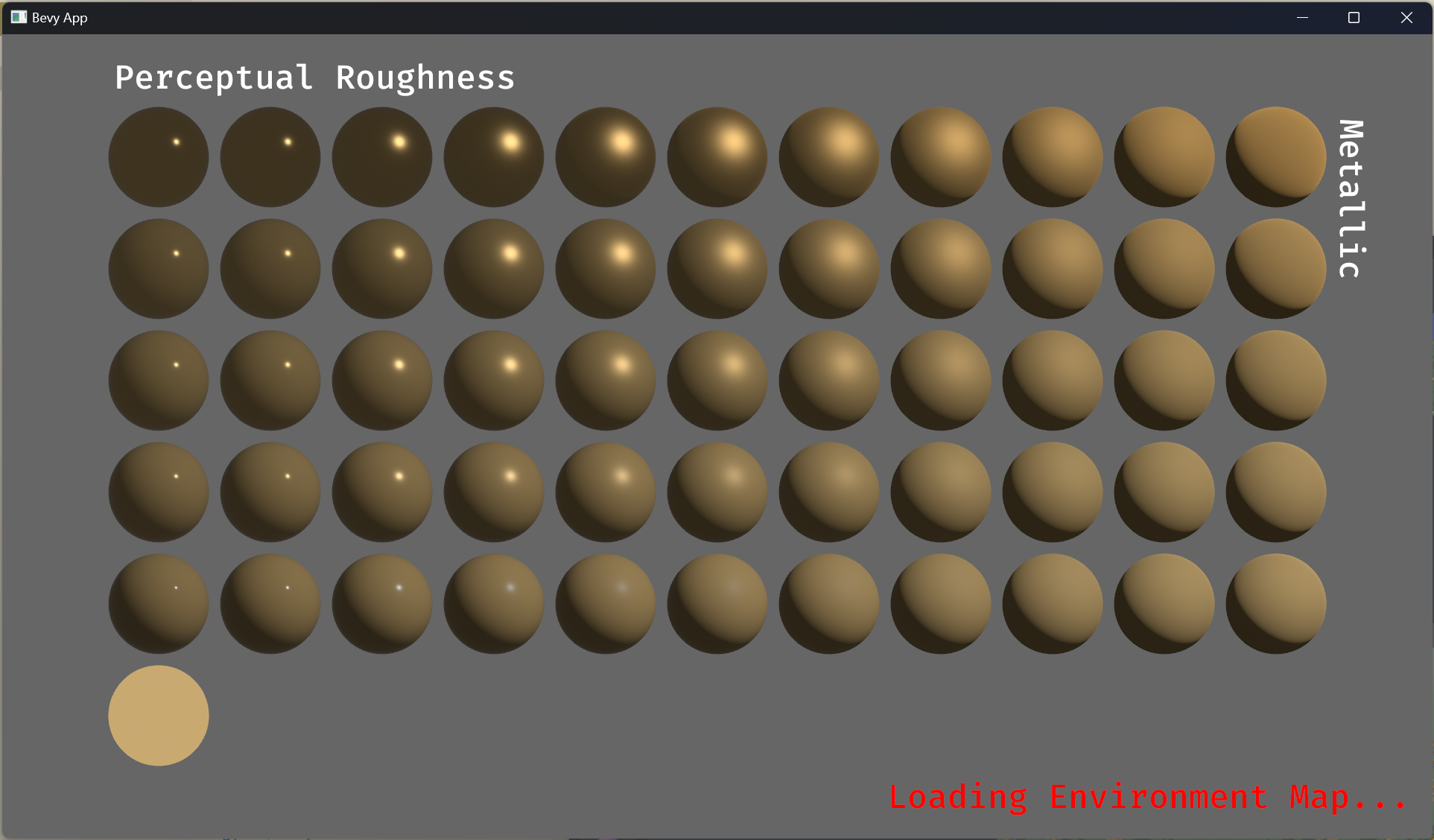

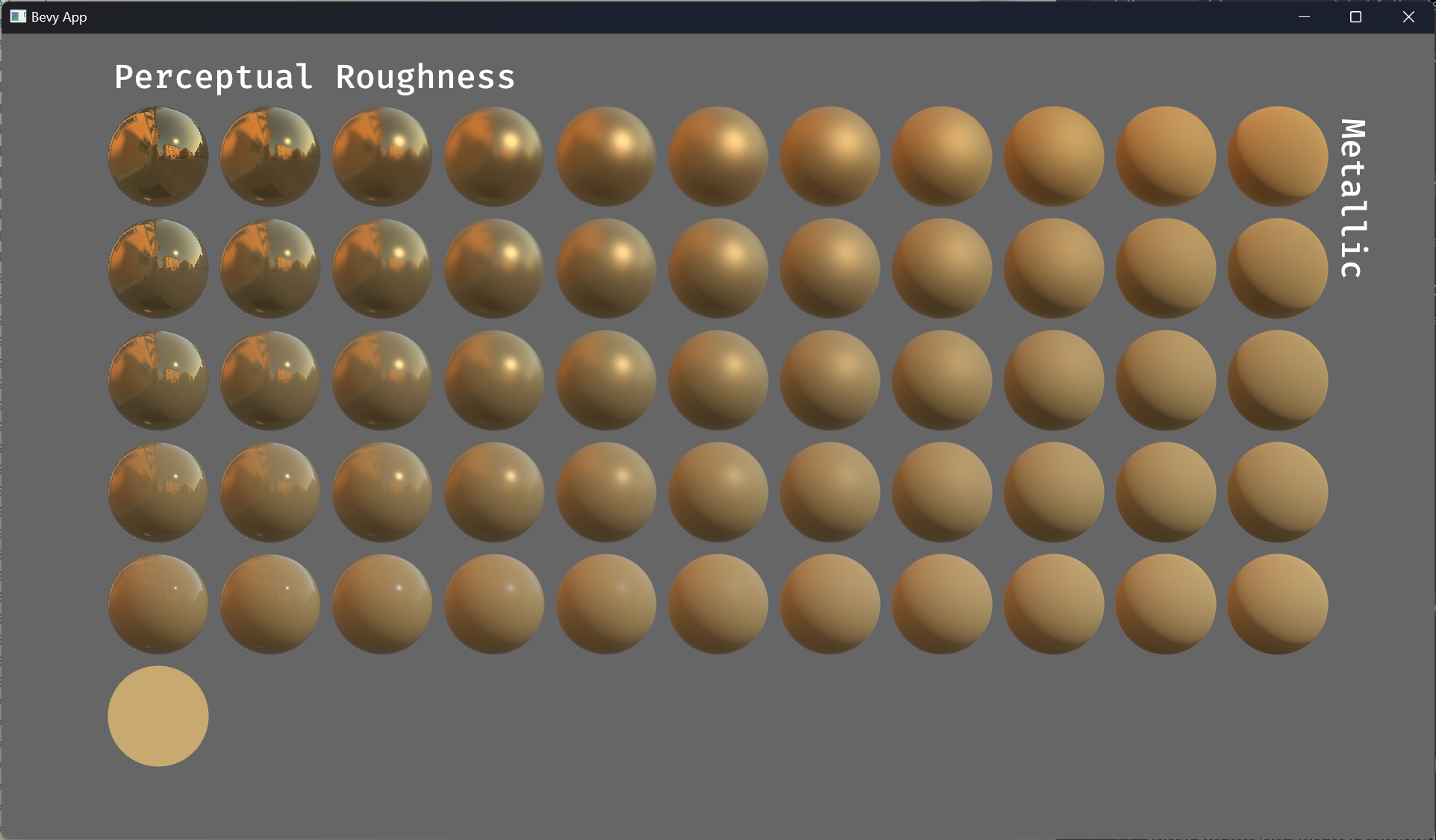

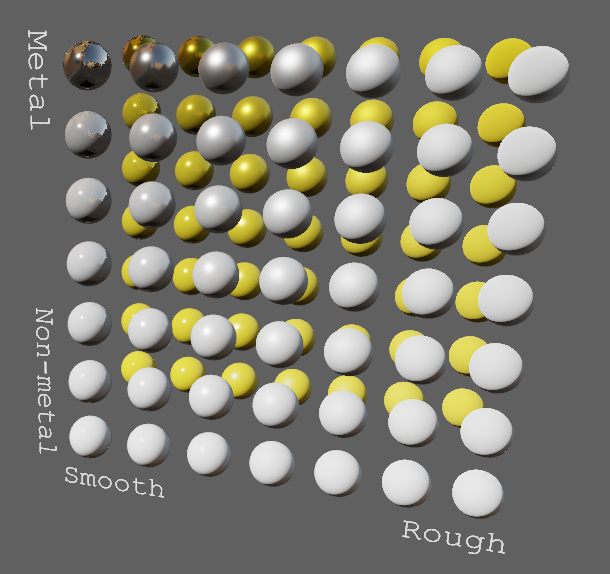

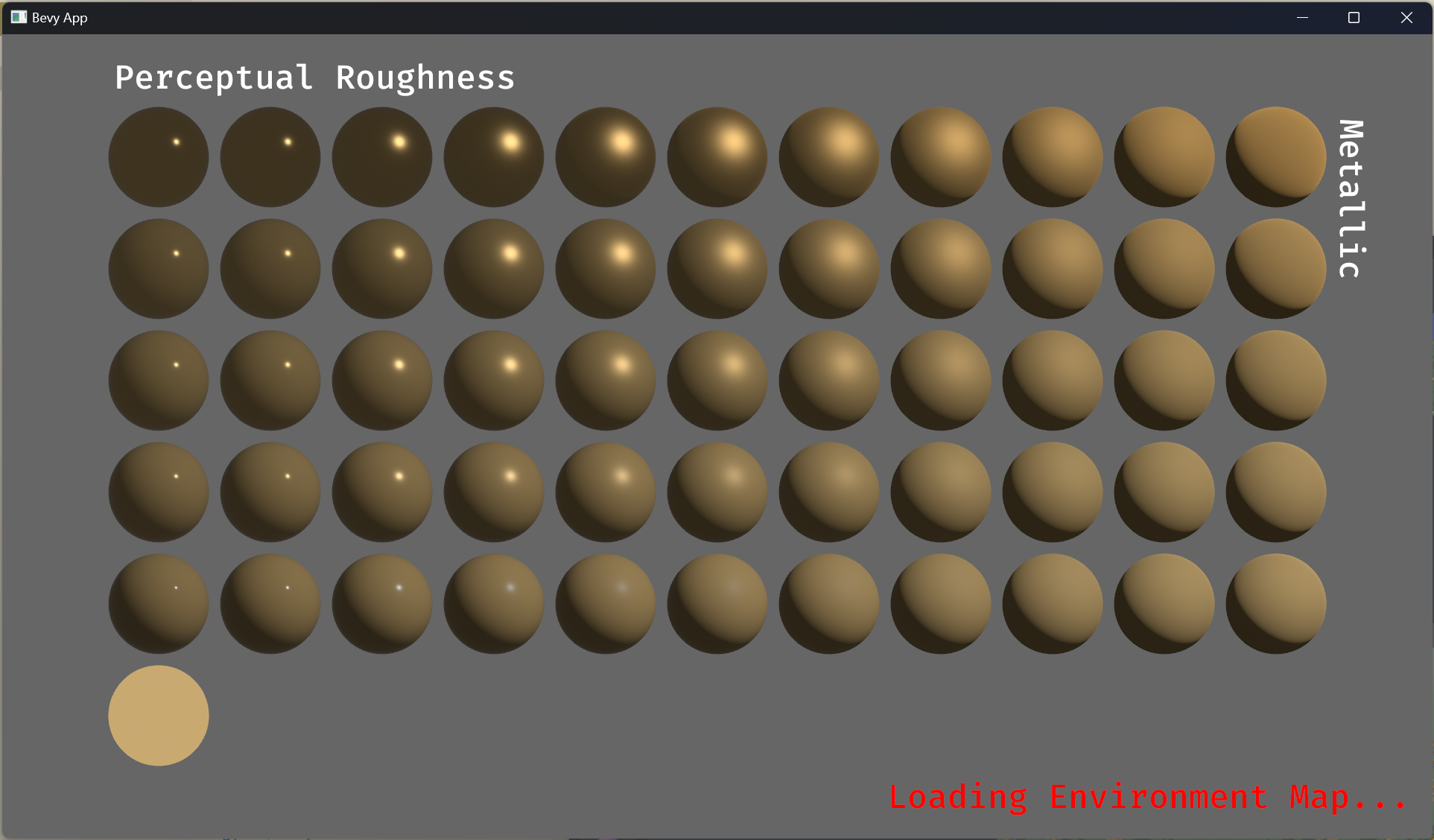

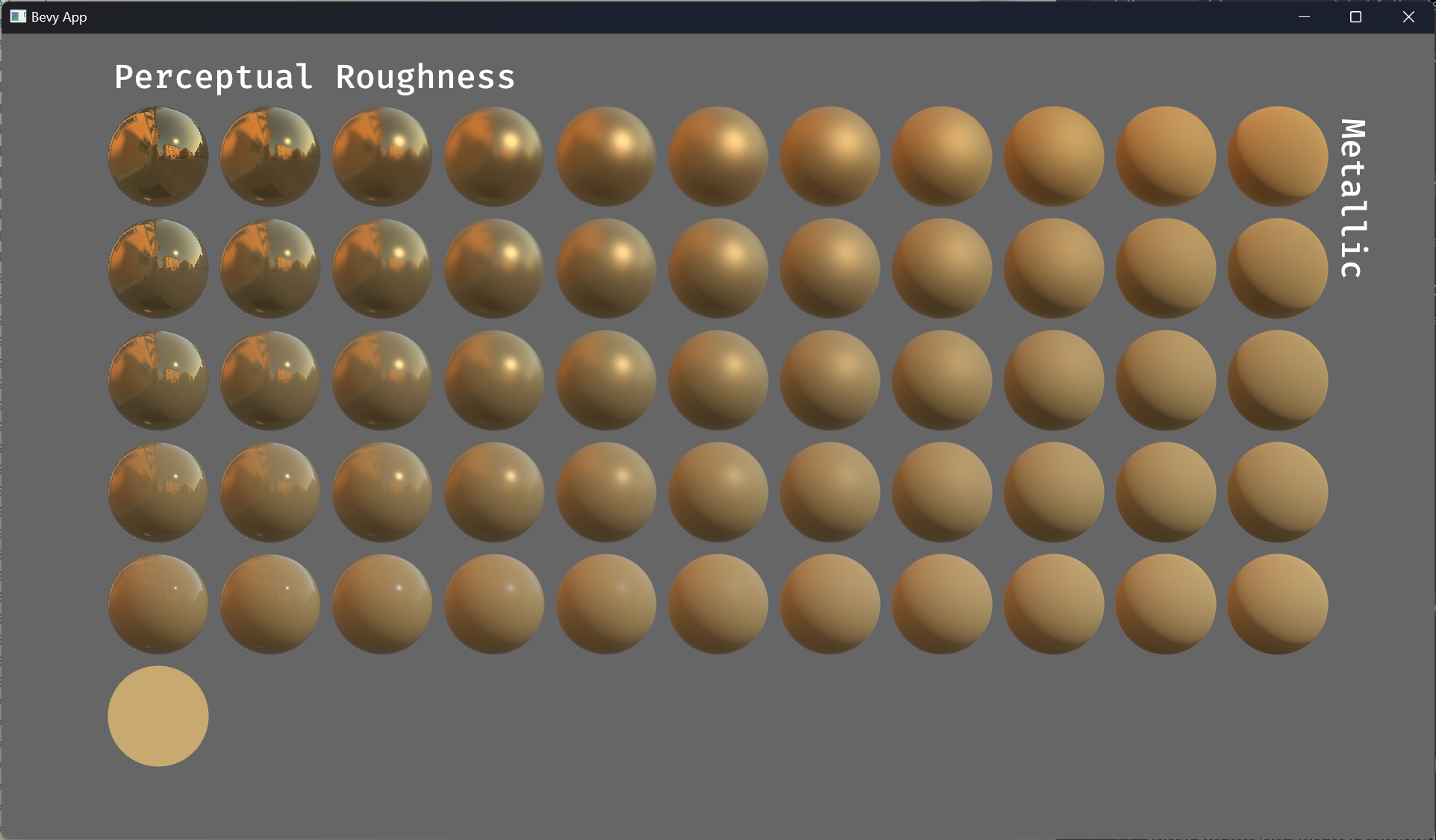

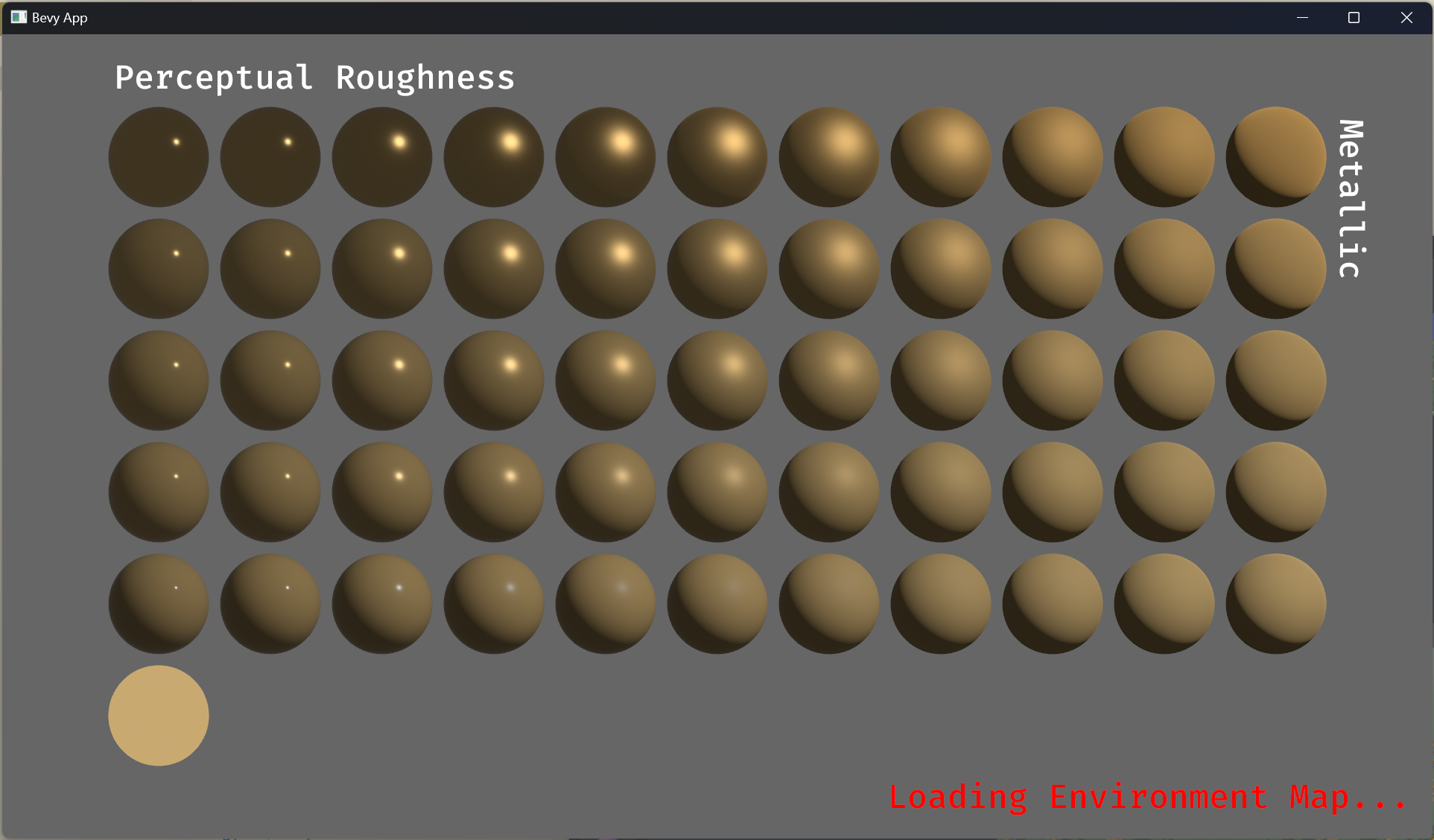

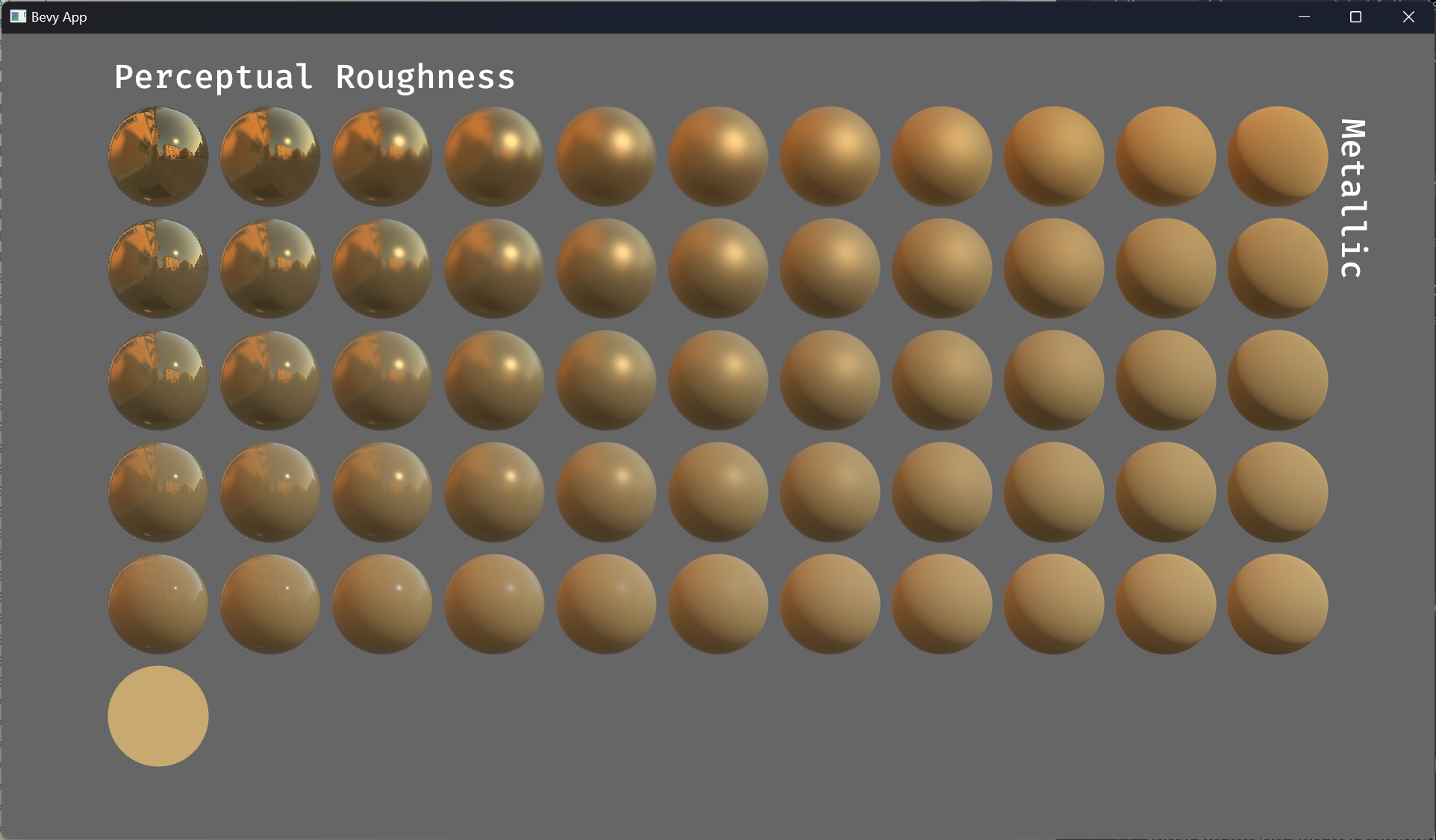

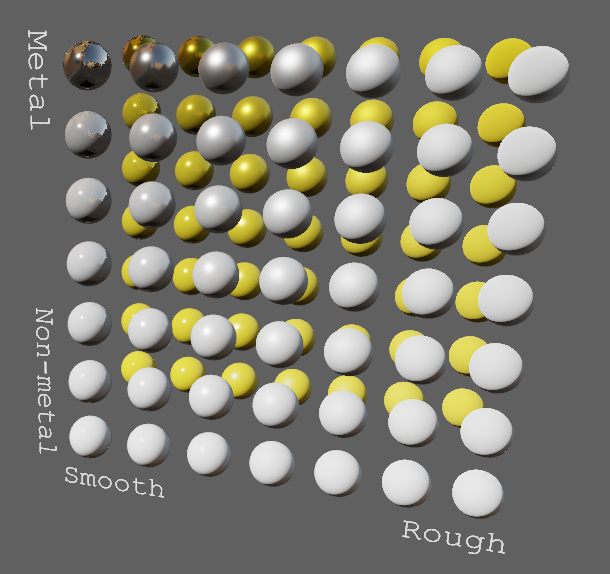

EnvironmentMapLight, BRDF Improvements (#7051)

(Before)

(After)

# Objective

- Improve lighting; especially reflections.

- Closes https://github.com/bevyengine/bevy/issues/4581.

## Solution

- Implement environment maps, providing better ambient light.

- Add microfacet multibounce approximation for specular highlights from Filament.

- Occlusion is no longer incorrectly applied to direct lighting. It now only applies to diffuse indirect light. Unsure if it's also supposed to apply to specular indirect light - the glTF specification just says "indirect light". In the case of ambient occlusion, for instance, that's usually only calculated as diffuse though. For now, I'm choosing to apply this just to indirect diffuse light, and not specular.

- Modified the PBR example to use an environment map, and have labels.

- Added `FallbackImageCubemap`.

## Implementation

- IBL technique references can be found in environment_map.wgsl.

- It's more accurate to use a LUT for the scale/bias. Filament has a good reference on generating this LUT. For now, I just used an analytic approximation.

- For now, environment maps must first be prefiltered outside of bevy using a 3rd party tool. See the `EnvironmentMap` documentation.

- Eventually, we should have our own prefiltering code, so that we can have dynamically changing environment maps, as well as let users drop in an HDR image and use asset preprocessing to create the needed textures using only bevy.
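The "analytic approximation" for the scale/bias is not spelled out above. One widely used option is Brian Karis's fit from "Physically Based Shading on Mobile", shown below as a std-only Rust sketch. Whether Bevy's shader uses exactly these constants is an assumption, and `env_brdf_approx` and `specular_ibl` are hypothetical names.

```rust
/// Analytic fit of the split-sum environment BRDF (Karis, "Physically Based
/// Shading on Mobile"). Returns `(scale, bias)` such that the specular term is
/// approximately `prefiltered_radiance * (f0 * scale + bias)`.
pub fn env_brdf_approx(perceptual_roughness: f32, n_dot_v: f32) -> (f32, f32) {
    // r = roughness * c0 + c1, using the published fitted constants.
    let rx = perceptual_roughness * -1.0 + 1.0;
    let ry = perceptual_roughness * -0.0275 + 0.0425;
    let rz = perceptual_roughness * -0.572 + 1.04;
    let rw = perceptual_roughness * 0.022 - 0.04;
    let a004 = (rx * rx).min((-9.28 * n_dot_v).exp2()) * rx + ry;
    let scale = -1.04 * a004 + rz;
    let bias = 1.04 * a004 + rw;
    (scale, bias)
}

/// Applies the fit to a scalar reflectance at normal incidence (`f0`).
pub fn specular_ibl(prefiltered_radiance: f32, f0: f32, perceptual_roughness: f32, n_dot_v: f32) -> f32 {
    let (scale, bias) = env_brdf_approx(perceptual_roughness, n_dot_v);
    prefiltered_radiance * (f0 * scale + bias)
}
```

A LUT built by integrating the BRDF offline would be more accurate, as the bullet above notes. The fit trades that accuracy for needing no texture lookup.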

---

## Changelog

- Added an `EnvironmentMapLight` camera component that adds additional ambient light to a scene.

- StandardMaterials will now appear brighter and more saturated at high roughness, due to internal material changes. This is more physically correct.

- Fixed StandardMaterial occlusion being incorrectly applied to direct lighting.

- Added `FallbackImageCubemap`.

Co-authored-by: IceSentry <c.giguere42@gmail.com>

Co-authored-by: James Liu <contact@jamessliu.com>

Co-authored-by: Rob Parrett <robparrett@gmail.com>

2023-02-09 16:46:32 +00:00

    asset_server: Res<AssetServer>,

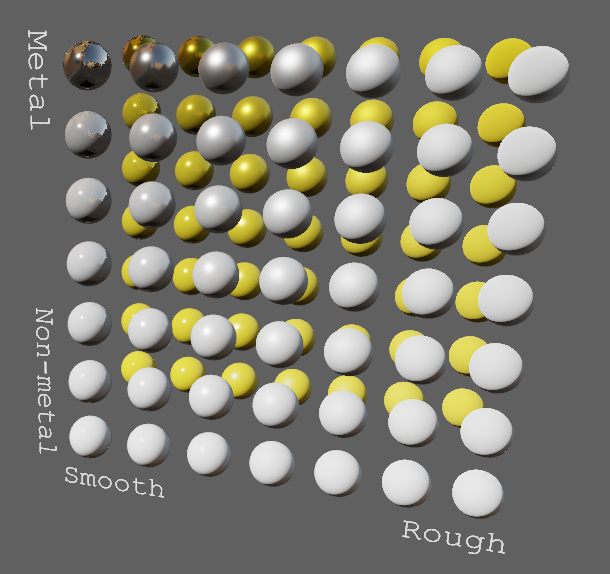

Migrate meshes and materials to required components (#15524)

# Objective

A big step in the migration to required components: meshes and

materials!

## Solution

As per the [selected

proposal](https://hackmd.io/@bevy/required_components/%2Fj9-PnF-2QKK0on1KQ29UWQ):

- Deprecate `MaterialMesh2dBundle`, `MaterialMeshBundle`, and

`PbrBundle`.

- Add `Mesh2d` and `Mesh3d` components, which wrap a `Handle<Mesh>`.

- Add `MeshMaterial2d<M: Material2d>` and `MeshMaterial3d<M: Material>`,

which wrap a `Handle<M>`.

- Meshes *without* a mesh material should be rendered with a default

material. The existence of a material is determined by

`HasMaterial2d`/`HasMaterial3d`, which is required by

`MeshMaterial2d`/`MeshMaterial3d`. This gets around problems with the

generics.

Previously:

```rust
commands.spawn(MaterialMesh2dBundle {
    mesh: meshes.add(Circle::new(100.0)).into(),
    material: materials.add(Color::srgb(7.5, 0.0, 7.5)),
    transform: Transform::from_translation(Vec3::new(-200., 0., 0.)),
    ..default()
});
```

Now:

```rust
commands.spawn((
    Mesh2d(meshes.add(Circle::new(100.0))),
    MeshMaterial2d(materials.add(Color::srgb(7.5, 0.0, 7.5))),
    Transform::from_translation(Vec3::new(-200., 0., 0.)),
));
```

If the mesh material is missing, previously nothing was rendered. Now,

it renders a white default `ColorMaterial` in 2D and a

`StandardMaterial` in 3D (this can be overridden). Below, only every

other entity has a material:

Why white? This is still open for discussion, but I think white makes

sense for a *default* material, while *invalid* asset handles pointing

to nothing should have something like a pink material to indicate that

something is broken (I don't handle that in this PR yet). This is kind

of a mix of Godot and Unity: Godot just renders a white material for

non-existent materials, while Unity renders nothing when no materials

exist, but renders pink for invalid materials. I can also change the

default material to pink if that is preferable though.

## Testing

I ran some 2D and 3D examples to test if anything changed visually. I

have not tested all examples or features yet however. If anyone wants to

test more extensively, it would be appreciated!

## Implementation Notes

- The relationship between `bevy_render` and `bevy_pbr` is weird here.

`bevy_render` needs `Mesh3d` for its own systems, but `bevy_pbr` has all

of the material logic, and `bevy_render` doesn't depend on it. I feel

like the two crates should be refactored in some way, but I think that's

out of scope for this PR.

- I didn't migrate meshlets to required components yet. That can

probably be done in a follow-up, as this is already a huge PR.

- It is becoming increasingly clear to me that we really, *really* want

to disallow raw asset handles as components. They caused me a *ton* of

headache here already, and it took me a long time to find every place

that queried for them or inserted them directly on entities, since there

were no compiler errors for it. If we don't remove the `Component`

derive, I expect raw asset handles to be a *huge* footgun for users as

we transition to wrapper components, especially as handles as components

have been the norm so far. I personally consider this to be a blocker

for 0.15: we need to migrate to wrapper components for asset handles

everywhere, and remove the `Component` derive. Also see

https://github.com/bevyengine/bevy/issues/14124.

---

## Migration Guide

Asset handles for meshes and mesh materials must now be wrapped in the

`Mesh2d` and `MeshMaterial2d` or `Mesh3d` and `MeshMaterial3d`

components for 2D and 3D respectively. Raw handles as components no

longer render meshes.

Additionally, `MaterialMesh2dBundle`, `MaterialMeshBundle`, and

`PbrBundle` have been deprecated. Instead, use the mesh and material

components directly.

Previously:

```rust
commands.spawn(MaterialMesh2dBundle {
    mesh: meshes.add(Circle::new(100.0)).into(),
    material: materials.add(Color::srgb(7.5, 0.0, 7.5)),
    transform: Transform::from_translation(Vec3::new(-200., 0., 0.)),
    ..default()
});
```

Now:

```rust
commands.spawn((
    Mesh2d(meshes.add(Circle::new(100.0))),
    MeshMaterial2d(materials.add(Color::srgb(7.5, 0.0, 7.5))),
    Transform::from_translation(Vec3::new(-200., 0., 0.)),
));
```

If the mesh material is missing, a white default material is now used.

Previously, nothing was rendered if the material was missing.

The `WithMesh2d` and `WithMesh3d` query filter type aliases have also

been removed. Simply use `With<Mesh2d>` or `With<Mesh3d>`.

---------

Co-authored-by: Tim Blackbird <justthecooldude@gmail.com>

Co-authored-by: Carter Anderson <mcanders1@gmail.com>

2024-10-02 00:33:17 +03:00

    meshes: Query<(&GlobalTransform, Option<&Aabb>), With<Mesh3d>>,

2022-12-25 00:23:13 +00:00

) {
    if scene_handle.is_loaded && !*setup {
        *setup = true;
        // Find an approximate bounding box of the scene from its meshes
        if meshes.iter().any(|(_, maybe_aabb)| maybe_aabb.is_none()) {
            return;
        }

        let mut min = Vec3A::splat(f32::MAX);
        let mut max = Vec3A::splat(f32::MIN);
        for (transform, maybe_aabb) in &meshes {
            let aabb = maybe_aabb.unwrap();
            // If the Aabb had not been rotated, applying the non-uniform scale would produce the
            // correct bounds. However, it could very well be rotated and so we first convert to
            // a Sphere, and then back to an Aabb to find the conservative min and max points.
            let sphere = Sphere {
                center: Vec3A::from(transform.transform_point(Vec3::from(aabb.center))),
                radius: transform.radius_vec3a(aabb.half_extents),
            };
            let aabb = Aabb::from(sphere);
            min = min.min(aabb.min());
            max = max.max(aabb.max());
        }

        let size = (max - min).length();
        let aabb = Aabb::from_min_max(Vec3::from(min), Vec3::from(max));

        info!("Spawning a controllable 3D perspective camera");
        let mut projection = PerspectiveProjection::default();
        projection.far = projection.far.max(size * 10.0);

2024-02-19 17:57:20 +01:00

        let walk_speed = size * 3.0;
        let camera_controller = CameraController {
            walk_speed,
            run_speed: 3.0 * walk_speed,
            ..default()
        };

2022-12-25 00:23:13 +00:00

        // Display the controls of the scene viewer
        info!("{}", camera_controller);
        info!("{}", *scene_handle);

        commands.spawn((

2024-10-05 04:59:52 +03:00

            Camera3d::default(),
            Projection::from(projection),
            Transform::from_translation(Vec3::from(aabb.center) + size * Vec3::new(0.5, 0.25, 0.5))

2022-12-25 00:23:13 +00:00

                .looking_at(Vec3::from(aabb.center), Vec3::Y),

2024-10-05 04:59:52 +03:00

            Camera {
                is_active: false,

2022-12-25 00:23:13 +00:00

                ..default()
            },


2023-02-09 16:46:32 +00:00

            EnvironmentMapLight {
                diffuse_map: asset_server
                    .load("assets/environment_maps/pisa_diffuse_rgb9e5_zstd.ktx2"),
                specular_map: asset_server
                    .load("assets/environment_maps/pisa_specular_rgb9e5_zstd.ktx2"),

2024-01-16 06:53:21 -08:00

                intensity: 150.0,

2024-07-19 23:00:50 +08:00

                ..default()


2023-02-09 16:46:32 +00:00

            },

2022-12-25 00:23:13 +00:00

            camera_controller,
        ));

        // Spawn a default light if the scene does not have one
        if !scene_handle.has_light {
            info!("Spawning a directional light");

2024-10-01 06:20:43 +03:00

            commands.spawn((
                DirectionalLight::default(),
                Transform::from_xyz(1.0, 1.0, 0.0).looking_at(Vec3::ZERO, Vec3::Y),
            ));

2022-12-25 00:23:13 +00:00

            scene_handle.has_light = true;
        }
    }

}
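The bounding-box and camera-framing logic in `setup_scene_after_load` can be exercised without Bevy. The std-only sketch below mirrors it:

1. Transform each mesh's local box and enclose it in a sphere.
2. Turn the sphere back into a box and merge all the boxes.
3. Use the scene diagonal to set the far plane, camera offset, and controller speeds.

`V3`, `Affine`, `conservative_world_aabb`, `Framing`, and `frame_scene` are hypothetical stand-ins for Bevy's `Vec3A`, `GlobalTransform`, `Aabb`, and `Sphere`. The sphere radius here is computed by checking all eight transformed corners rather than by reproducing `GlobalTransform::radius_vec3a`. `default_far` stands in for `PerspectiveProjection::default().far`.

```rust
/// Plain 3-vector used in place of Bevy's `Vec3A` for this sketch.
pub type V3 = [f32; 3];

fn add(a: V3, b: V3) -> V3 {
    [a[0] + b[0], a[1] + b[1], a[2] + b[2]]
}

fn sub(a: V3, b: V3) -> V3 {
    [a[0] - b[0], a[1] - b[1], a[2] - b[2]]
}

fn length(a: V3) -> f32 {
    (a[0] * a[0] + a[1] * a[1] + a[2] * a[2]).sqrt()
}

/// Hypothetical affine transform: `cols` are the columns of the 3x3 linear part.
pub struct Affine {
    pub cols: [V3; 3],
    pub translation: V3,
}

impl Affine {
    /// Applies only the linear (rotation/scale) part.
    fn linear(&self, v: V3) -> V3 {
        let mut out = [0.0; 3];
        for (col, &scale) in self.cols.iter().zip(v.iter()) {
            for row in 0..3 {
                out[row] += col[row] * scale;
            }
        }
        out
    }
}

/// World-space (min, max) of a sphere enclosing the transformed local box.
pub fn conservative_world_aabb(xf: &Affine, center: V3, half_extents: V3) -> (V3, V3) {
    let world_center = add(xf.linear(center), xf.translation);
    // Radius = distance from the center to the farthest transformed corner.
    let mut radius = 0.0_f32;
    for sx in [-1.0_f32, 1.0] {
        for sy in [-1.0_f32, 1.0] {
            for sz in [-1.0_f32, 1.0] {
                let corner = [sx * half_extents[0], sy * half_extents[1], sz * half_extents[2]];
                radius = radius.max(length(xf.linear(corner)));
            }
        }
    }
    (sub(world_center, [radius; 3]), add(world_center, [radius; 3]))
}

pub struct Framing {
    pub far: f32,
    pub eye: V3,
    pub target: V3,
    pub walk_speed: f32,
    pub run_speed: f32,
}

/// Merges every mesh box and derives camera settings from the scene diagonal.
/// Returns `None` for an empty scene (the Bevy system instead returns early
/// while any mesh still lacks an `Aabb`).
pub fn frame_scene(meshes: &[(Affine, V3, V3)], default_far: f32) -> Option<Framing> {
    if meshes.is_empty() {
        return None;
    }
    let mut min = [f32::MAX; 3];
    let mut max = [f32::MIN; 3];
    for (xf, center, half_extents) in meshes {
        let (lo, hi) = conservative_world_aabb(xf, *center, *half_extents);
        for i in 0..3 {
            min[i] = min[i].min(lo[i]);
            max[i] = max[i].max(hi[i]);
        }
    }
    let size = length(sub(max, min));
    let target = [(min[0] + max[0]) * 0.5, (min[1] + max[1]) * 0.5, (min[2] + max[2]) * 0.5];
    let walk_speed = size * 3.0;
    Some(Framing {
        far: default_far.max(size * 10.0),
        eye: add(target, [size * 0.5, size * 0.25, size * 0.5]),
        target,
        walk_speed,
        run_speed: 3.0 * walk_speed,
    })
}
```

The sphere detour makes the result independent of rotation, at the cost of a looser box. This matches the trade-off described in the comment inside the loop.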
