I don't like our macro tests -- they are brittle and don't inspire

confidence. I think the reason for that is that we try to unit-test

them, but that is at odds with reality, where macro expansion

fundamentally depends on name resolution.

Consider these examples:

{ 92 }

async { 92 }

'a: { 92 }

#[a] { 92 }

Previously, the trees for them were:

    BLOCK_EXPR
      { ... }

    EFFECT_EXPR
      async
      BLOCK_EXPR
        { ... }

    EFFECT_EXPR
      'a:
      BLOCK_EXPR
        { ... }

    BLOCK_EXPR
      #[a]
      { ... }

As you can see, it gets progressively worse :) The last two items are
especially odd. The last one even violates the balanced curlies
invariant we have (#10357). The new approach is to say that the stuff in
`{}` is stmt_list, and the block is stmt_list + optional modifiers:

    BLOCK_EXPR
      STMT_LIST
        { ... }

    BLOCK_EXPR
      async
      STMT_LIST
        { ... }

    BLOCK_EXPR
      'a:
      STMT_LIST
        { ... }

    BLOCK_EXPR
      #[a]
      STMT_LIST
        { ... }
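To make the new shape concrete, here is a minimal sketch of it as typed Rust data. The type and field names (`StmtList`, `Modifier`, `BlockExpr`, `render`) are made up for illustration and are not the real rust-analyzer API. The point is only that the braces always live inside the statement list node, so every block variant keeps its curlies balanced within one node.

```rust
// Hypothetical, simplified model of the new tree shape. The names are
// illustrative only and do not mirror the real syntax tree API.

/// The `{ ... }` part of a block, braces included.
#[derive(Debug, PartialEq)]
struct StmtList {
    statements: Vec<String>,
}

/// Optional prefix in front of the statement list.
#[derive(Debug, PartialEq)]
enum Modifier {
    Async,
    Label(String),
    Attr(String),
}

/// A block is a statement list plus optional modifiers.
#[derive(Debug, PartialEq)]
struct BlockExpr {
    modifiers: Vec<Modifier>,
    stmt_list: StmtList,
}

impl BlockExpr {
    /// Renders the block back to text. Both braces are emitted for the
    /// statement list, never for the modifiers.
    fn render(&self) -> String {
        let mut out = String::new();
        for modifier in &self.modifiers {
            match modifier {
                Modifier::Async => out.push_str("async "),
                Modifier::Label(label) => {
                    out.push_str(label);
                    out.push_str(": ");
                }
                Modifier::Attr(attr) => {
                    out.push_str("#[");
                    out.push_str(attr);
                    out.push_str("] ");
                }
            }
        }
        out.push_str("{ ");
        out.push_str(&self.stmt_list.statements.join("; "));
        out.push_str(" }");
        out
    }
}
```

With this model, all four examples are the same node kind and differ only in the `modifiers` list.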

FragmentKind played two roles:

* entry point to the parser

* syntactic category of a macro call

These are different use-cases, and warrant different types. For example,
a macro can't expand to a visibility, but we have such a fragment today.
This PR introduces the `ExpandsTo` enum to separate these two use-cases.

I suspect we might further split `FragmentKind` into `$x:specifier` enum

specific to MBE, and a general parser entry point, but that's for

another PR!
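A rough sketch of the split, to show why two types are useful. Only the names `FragmentKind` and `ExpandsTo` come from this PR. The variant lists and the `entry_point` helper are assumptions made for illustration.

```rust
/// Parser entry points (hypothetical subset of variants).
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
enum FragmentKind {
    Expr,
    Items,
    Statements,
    Type,
    Pattern,
    Visibility,
}

/// Syntactic category a macro call can expand to (hypothetical variants).
/// There is deliberately no `Visibility` here.
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
enum ExpandsTo {
    Expr,
    Items,
    Statements,
    Type,
    Pattern,
}

/// Every expansion category has a parser entry point, but not every entry
/// point is a valid expansion category. This helper is an assumption.
fn entry_point(expands_to: ExpandsTo) -> FragmentKind {
    match expands_to {
        ExpandsTo::Expr => FragmentKind::Expr,
        ExpandsTo::Items => FragmentKind::Items,
        ExpandsTo::Statements => FragmentKind::Statements,
        ExpandsTo::Type => FragmentKind::Type,
        ExpandsTo::Pattern => FragmentKind::Pattern,
    }
}
```

With separate types, "a macro call that expands to a visibility" is not representable, instead of being a state that has to be ruled out at runtime.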

9970: feat: Implement attribute input token mapping, fix attribute item token mapping r=Veykril a=Veykril

The token mapping for items with attributes was partially overwritten by the attribute's non-item input, since attributes have two different inputs: the item and the direct input.

This PR gives attributes a second TokenMap for their direct input. We now shift all normal input IDs by the item input maximum (we may want to swap this, see below), similar to what we do for macro-rules/def. When mapping down, we then have to figure out whether we are inside the direct attribute input or its item input to pick the appropriate mapping, which can be done with some token range comparisons.
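The shifting and range comparison can be sketched roughly like this. It is a simplified model, not the actual implementation: `AttrTokenMaps`, `Input`, and all method names are hypothetical, and it assumes the direct input's IDs are the ones that get shifted.

```rust
use std::ops::Range;

/// Which of the two attribute inputs a token belongs to (hypothetical).
#[derive(Debug, PartialEq)]
enum Input {
    Item,
    AttrArgs,
}

/// Hypothetical bookkeeping for an attribute macro call.
struct AttrTokenMaps {
    /// Text range covered by the attribute's direct input.
    attr_args_range: Range<u32>,
    /// Number of IDs reserved for the item input. Direct-input IDs are
    /// offset by this amount so the two ID spaces cannot collide.
    shift: u32,
}

impl AttrTokenMaps {
    /// Mapping down: pick the map by comparing text offsets.
    fn input_for_offset(&self, offset: u32) -> Input {
        if self.attr_args_range.contains(&offset) {
            Input::AttrArgs
        } else {
            Input::Item
        }
    }

    /// Shifts a direct-input ID past all item-input IDs.
    fn shift_attr_id(&self, id: u32) -> u32 {
        id + self.shift
    }

    /// Mapping up: undo the shift and report which map the ID belongs to.
    fn unshift(&self, id: u32) -> (Input, u32) {
        if id >= self.shift {
            (Input::AttrArgs, id - self.shift)
        } else {
            (Input::Item, id)
        }
    }
}
```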

Fixes https://github.com/rust-analyzer/rust-analyzer/issues/9867

Co-authored-by: Lukas Wirth <lukastw97@gmail.com>

9989: Fix two more “a”/“an” typos (this time the other way) r=lnicola a=steffahn

Follow-up to #9987

you guys are still merging these fast 😅

_this time I thought – for sure – that I’d get this commit into #9987 before it’s merged…_

Co-authored-by: Frank Steffahn <frank.steffahn@stu.uni-kiel.de>

Today, rust-analyzer (and rustc, and bat, and IntelliJ) fail badly on

some kinds of maliciously constructed code, like a deep sequence of

nested parentheses.

"Who writes 100k nested parentheses" you'd ask?

Well, in a language with macros, a run-away macro expansion might do

that (see the added tests)! Such expansion can be broad, rather than

deep, so it bypasses the recursion check at the macro-expansion layer, but
triggers deep recursion in the parser.

In the ideal world, the parser would just handle deeply nested structs

gracefully. We'll get there some day, but at the moment, let's try to be

simple, and just avoid expanding macros with unbalanced parentheses in

the first place.
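For illustration, a balance check of this kind can be written without any recursion, so it stays safe on exactly the inputs that blow up the parser. This is a sketch over plain characters. The check in this change works on macro token trees, and the name `is_balanced` is made up.

```rust
/// Returns true if every opening delimiter is closed by the matching
/// closing delimiter, in the right order. Uses an explicit heap-allocated
/// stack, so nesting depth is not limited by the call stack.
fn is_balanced(text: &str) -> bool {
    let mut stack = Vec::new();
    for c in text.chars() {
        match c {
            '(' | '[' | '{' => stack.push(c),
            ')' | ']' | '}' => {
                let expected = match c {
                    ')' => '(',
                    ']' => '[',
                    _ => '{',
                };
                // A closer with no opener, or with the wrong opener,
                // means the input is unbalanced.
                if stack.pop() != Some(expected) {
                    return false;
                }
            }
            _ => {}
        }
    }
    // Leftover openers were never closed.
    stack.is_empty()
}
```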

closes #9358

We generally avoid "syntax only" helper wrappers, which don't do much:

they make code easier to write, but harder to read. They also make

investigations harder, as "find_usages" needs to be invoked both for the

wrapped and unwrapped APIs.

9700: fix: Remove the legacy macro scoping hack r=matklad a=jonas-schievink

This stops prepending `self::` to single-ident macro paths, resolving even legacy-scoped macros using the fixed-point algorithm. This is not correct, but a lot easier than fixing this properly (which involves pushing a new scope for every macro definition and invocation).

This allows resolution of macros from the prelude, fixing https://github.com/rust-analyzer/rust-analyzer/issues/9687.

Co-authored-by: Jonas Schievink <jonasschievink@gmail.com>

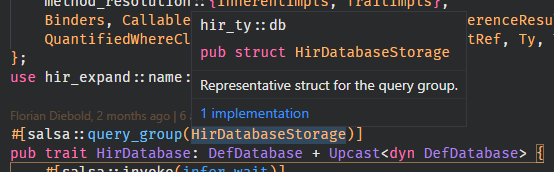

9453: Add first-class limits. r=matklad,lnicola a=rbartlensky

Partially fixes #9286.

This introduces a new `Limits` structure which is passed as an input

to `SourceDatabase`. This makes limits accessible almost everywhere in

the code, since most places have a database in scope.

One downside of this approach is that whenever you query limits, you

essentially do an `Arc::clone` which is less than ideal.
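A minimal sketch of that trade-off, under heavy assumptions: `Database` stands in for the real database, and the `Limits` fields are invented for the example. Only the `Limits` name and the `Arc::clone` on every query come from the description above.

```rust
use std::sync::Arc;

/// Hypothetical limits; the field names are illustrative only.
#[derive(Debug, Clone, PartialEq, Eq)]
struct Limits {
    expansion_depth: usize,
    token_count: usize,
}

/// Stand-in for a database that receives the limits as an input.
struct Database {
    limits: Arc<Limits>,
}

impl Database {
    fn new(limits: Limits) -> Self {
        Database { limits: Arc::new(limits) }
    }

    /// Every call bumps the reference count. That is cheap, but not free,
    /// which is the downside mentioned above.
    fn limits(&self) -> Arc<Limits> {
        Arc::clone(&self.limits)
    }
}
```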

Let me know if I missed anything, or if you would like me to take a different approach!

Co-authored-by: Robert Bartlensky <bartlensky.robert@gmail.com>

9637: Overhaul doc_links testing infra r=Veykril a=Veykril

and fix several issues with current implementation.

Fixes #9617

Co-authored-by: Lukas Wirth <lukastw97@gmail.com>

In rust-analyzer, we avoid default impls for types which don't have

sensible, "empty" defaults. In particular, we avoid using invalid

indices for defaults and similar hacks.
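As a small illustration of the rule (the types here are invented for the example and are not taken from the codebase): an ID type gets no `Default` impl, and code that needs "no value yet" says so with `Option` instead of a sentinel index.

```rust
/// Hypothetical ID type. It deliberately does not implement `Default`:
/// there is no sensible "empty" file, and something like `FileId(!0)`
/// would be an invalid index pretending to be a value.
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
struct FileId(u32);

/// A type that does have a sensible empty state can still derive
/// `Default`, with the absence of a file made explicit.
#[derive(Debug, Default)]
struct Cursor {
    file: Option<FileId>,
    offset: u32,
}
```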

This treats the consts generated by older synstructure versions like

unnamed consts. We should remove this at some point (at least after

Chalk has switched).

At the moment, this moves only a single diagnostic, but the idea is
to refactor the rest to use the same pattern. We are going to have a

single file per diagnostic. This file will define diagnostics code,

rendering range and fixes, if any. It'll also have all of the tests.

This is similar to how we deal with assists.

After we refactor all diagnostics to follow this pattern, we'll probably

move them to a new `ide_diagnostics` crate.

Note that we intentionally want to test all diagnostics on this layer,
despite the fact that they are generally emitted in the guts of the
compiler. Diagnostics care too much about the end presentation

details/fixes to be worth-while "unit" testing. So, we'll unit-test only

the primary output of compilation process (types and name res tables),

and will use integrated UI tests for diagnostics.

9244: feat: Make block-local trait impls work r=flodiebold a=flodiebold

As long as either the trait or the implementing type is defined in the same block.
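For reference, this is the kind of code in question, written as plain Rust. The function names are made up for the example. The first function defines the trait inside the block, the second defines the implementing type inside the block.

```rust
fn local_trait() -> String {
    // The trait is defined in this block; the implementing type is not.
    trait Describe {
        fn describe(&self) -> String;
    }
    impl Describe for u32 {
        fn describe(&self) -> String {
            format!("u32: {}", self)
        }
    }
    92u32.describe()
}

fn local_type() -> i32 {
    // The implementing type is defined in this block; the trait is not.
    struct Wrapper(i32);
    impl std::ops::Neg for Wrapper {
        type Output = i32;
        fn neg(self) -> i32 {
            -self.0
        }
    }
    -Wrapper(92)
}
```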

CC #8961

Co-authored-by: Florian Diebold <flodiebold@gmail.com>