Changes to get Bevy to compile with wgpu master.

With this, on a Mac:

* 2d examples look fine

* ~~3d examples crash with an error specific to metal about a compilation error~~

* 3d examples work fine after enabling feature `wgpu/cross`

Feature `wgpu/cross` seems to be needed only on some platforms, not sure how to know which. It was introduced in https://github.com/gfx-rs/wgpu-rs/pull/826

Fixes #2037 (and then some)

Problem:

- `TypeUuid`, `RenderResource`, and `Bytes` derive macros did not properly handle generic structs.

Solution:

- Rework the derive macro implementations to handle the generics.
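As a rough illustration of what "handling the generics" means, the hand-written impl below repeats the struct's type parameter and adds a bound, which is what a generics-aware derive has to emit. The `Bytes` trait here is a simplified stand-in and `Pair` is a hypothetical struct; neither is the actual Bevy definition.

```rust
/// Simplified stand-in for a byte-serialization trait (assumption, not Bevy's real trait).
trait Bytes {
    fn byte_len(&self) -> usize;
}

impl Bytes for u32 {
    fn byte_len(&self) -> usize {
        std::mem::size_of::<u32>()
    }
}

/// Hypothetical generic struct a user might put a derive on.
struct Pair<T> {
    a: T,
    b: T,
}

// A derive that ignores generics would emit `impl Bytes for Pair { .. }`,
// which does not compile. The impl must carry the parameter and its bound.
impl<T: Bytes> Bytes for Pair<T> {
    fn byte_len(&self) -> usize {
        self.a.byte_len() + self.b.byte_len()
    }
}
```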

If a mesh without any vertex attributes is rendered (for example, one that only has indices), bevy will crash since the mesh still creates a vertex buffer even though it's empty. Later code assumes that there is vertex data, causing an index-out-of-bounds panic. This PR fixes the issue by adding a check that there is any vertex data before creating a vertex buffer.

I ran into this issue while rendering a tilemap without any vertex attributes (only indices).
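The check described above can be sketched roughly as follows. `MeshData` and `vertex_buffer_bytes` are hypothetical names for illustration, not the types touched by this PR; the point is only that an empty vertex payload yields `None` so no buffer gets created.

```rust
/// Hypothetical stand-in for a mesh: each attribute is a raw byte buffer.
struct MeshData {
    attributes: Vec<Vec<u8>>,
    indices: Vec<u32>,
}

/// Returns the concatenated attribute bytes, or `None` when there is no
/// vertex data, so the caller can skip creating a vertex buffer entirely.
fn vertex_buffer_bytes(mesh: &MeshData) -> Option<Vec<u8>> {
    let bytes: Vec<u8> = mesh.attributes.iter().flatten().copied().collect();
    if bytes.is_empty() {
        None
    } else {
        Some(bytes)
    }
}
```

With this shape, an index-only mesh never registers a vertex buffer, so later code has nothing to index into.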

Stack trace:

```

thread 'main' panicked at 'index out of bounds: the len is 0 but the index is 0', C:\Dev\Games\bevy\crates\bevy_render\src\render_graph\nodes\pass_node.rs:346:9

stack backtrace:

0: std::panicking::begin_panic_handler

at /rustc/bb491ed23937aef876622e4beb68ae95938b3bf9\/library\std\src\panicking.rs:493

1: core::panicking::panic_fmt

at /rustc/bb491ed23937aef876622e4beb68ae95938b3bf9\/library\core\src\panicking.rs:92

2: core::panicking::panic_bounds_check

at /rustc/bb491ed23937aef876622e4beb68ae95938b3bf9\/library\core\src\panicking.rs:69

3: core::slice::index::{{impl}}::index<core::option::Option<tuple<bevy_render::renderer::render_resource::buffer::BufferId, u64>>>

at C:\Users\tehpe\.rustup\toolchains\nightly-x86_64-pc-windows-msvc\lib\rustlib\src\rust\library\core\src\slice\index.rs:184

4: core::slice::index::{{impl}}::index<core::option::Option<tuple<bevy_render::renderer::render_resource::buffer::BufferId, u64>>,usize>

at C:\Users\tehpe\.rustup\toolchains\nightly-x86_64-pc-windows-msvc\lib\rustlib\src\rust\library\core\src\slice\index.rs:15

5: alloc::vec::{{impl}}::index<core::option::Option<tuple<bevy_render::renderer::render_resource::buffer::BufferId, u64>>,usize,alloc::alloc::Global>

at C:\Users\tehpe\.rustup\toolchains\nightly-x86_64-pc-windows-msvc\lib\rustlib\src\rust\library\alloc\src\vec\mod.rs:2386

6: bevy_render::render_graph::nodes::pass_node::DrawState::is_vertex_buffer_set

at C:\Dev\Games\bevy\crates\bevy_render\src\render_graph\nodes\pass_node.rs:346

7: bevy_render::render_graph::nodes::pass_node::{{impl}}::update::{{closure}}<bevy_render::render_graph::base::MainPass*>

at C:\Dev\Games\bevy\crates\bevy_render\src\render_graph\nodes\pass_node.rs:285

8: bevy_wgpu::renderer::wgpu_render_context::{{impl}}::begin_pass

at C:\Dev\Games\bevy\crates\bevy_wgpu\src\renderer\wgpu_render_context.rs:196

9: bevy_render::render_graph::nodes::pass_node::{{impl}}::update<bevy_render::render_graph::base::MainPass*>

at C:\Dev\Games\bevy\crates\bevy_render\src\render_graph\nodes\pass_node.rs:244

10: bevy_wgpu::renderer::wgpu_render_graph_executor::WgpuRenderGraphExecutor::execute

at C:\Dev\Games\bevy\crates\bevy_wgpu\src\renderer\wgpu_render_graph_executor.rs:75

11: bevy_wgpu::wgpu_renderer::{{impl}}::run_graph::{{closure}}

at C:\Dev\Games\bevy\crates\bevy_wgpu\src\wgpu_renderer.rs:115

12: bevy_ecs::world::World::resource_scope<bevy_render::render_graph::graph::RenderGraph,tuple<>,closure-0>

at C:\Dev\Games\bevy\crates\bevy_ecs\src\world\mod.rs:715

13: bevy_wgpu::wgpu_renderer::WgpuRenderer::run_graph

at C:\Dev\Games\bevy\crates\bevy_wgpu\src\wgpu_renderer.rs:104

14: bevy_wgpu::wgpu_renderer::WgpuRenderer::update

at C:\Dev\Games\bevy\crates\bevy_wgpu\src\wgpu_renderer.rs:121

15: bevy_wgpu::get_wgpu_render_system::{{closure}}

at C:\Dev\Games\bevy\crates\bevy_wgpu\src\lib.rs:112

16: alloc::boxed::{{impl}}::call_mut<tuple<mut bevy_ecs::world::World*>,FnMut<tuple<mut bevy_ecs::world::World*>>,alloc::alloc::Global>

at C:\Users\tehpe\.rustup\toolchains\nightly-x86_64-pc-windows-msvc\lib\rustlib\src\rust\library\alloc\src\boxed.rs:1553

17: bevy_ecs::system::exclusive_system::{{impl}}::run

at C:\Dev\Games\bevy\crates\bevy_ecs\src\system\exclusive_system.rs:41

18: bevy_ecs::schedule::stage::{{impl}}::run

at C:\Dev\Games\bevy\crates\bevy_ecs\src\schedule\stage.rs:812

19: bevy_ecs::schedule::Schedule::run_once

at C:\Dev\Games\bevy\crates\bevy_ecs\src\schedule\mod.rs:201

20: bevy_ecs::schedule::{{impl}}::run

at C:\Dev\Games\bevy\crates\bevy_ecs\src\schedule\mod.rs:219

21: bevy_app::app::App::update

at C:\Dev\Games\bevy\crates\bevy_app\src\app.rs:58

22: bevy_winit::winit_runner_with::{{closure}}

at C:\Dev\Games\bevy\crates\bevy_winit\src\lib.rs:485

23: winit::platform_impl::platform::event_loop::{{impl}}::run_return::{{closure}}<tuple<>,closure-1>

at C:\Users\tehpe\.cargo\registry\src\github.com-1ecc6299db9ec823\winit-0.24.0\src\platform_impl\windows\event_loop.rs:203

24: alloc::boxed::{{impl}}::call_mut<tuple<winit::event::Event<tuple<>>, mut winit::event_loop::ControlFlow*>,FnMut<tuple<winit::event::Event<tuple<>>, mut winit::event_loop::ControlFlow*>>,alloc::alloc::Global>

at C:\Users\tehpe\.rustup\toolchains\nightly-x86_64-pc-windows-msvc\lib\rustlib\src\rust\library\alloc\src\boxed.rs:1553

25: winit::platform_impl::platform::event_loop::runner::{{impl}}::call_event_handler::{{closure}}<tuple<>>

at C:\Users\tehpe\.cargo\registry\src\github.com-1ecc6299db9ec823\winit-0.24.0\src\platform_impl\windows\event_loop\runner.rs:245

26: std::panic::{{impl}}::call_once<tuple<>,closure-0>

at C:\Users\tehpe\.rustup\toolchains\nightly-x86_64-pc-windows-msvc\lib\rustlib\src\rust\library\std\src\panic.rs:344

27: std::panicking::try::do_call<std::panic::AssertUnwindSafe<closure-0>,tuple<>>

at C:\Users\tehpe\.rustup\toolchains\nightly-x86_64-pc-windows-msvc\lib\rustlib\src\rust\library\std\src\panicking.rs:379

28: hashbrown::set::HashSet<mut winapi::shared::windef::HWND__*, std::collections::hash::map::RandomState, alloc::alloc::Global>::iter<mut winapi::shared::windef::HWND__*,std::collections::hash::map::RandomState,alloc::alloc::Global>

29: std::panicking::try<tuple<>,std::panic::AssertUnwindSafe<closure-0>>

at C:\Users\tehpe\.rustup\toolchains\nightly-x86_64-pc-windows-msvc\lib\rustlib\src\rust\library\std\src\panicking.rs:343

30: std::panic::catch_unwind<std::panic::AssertUnwindSafe<closure-0>,tuple<>>

at C:\Users\tehpe\.rustup\toolchains\nightly-x86_64-pc-windows-msvc\lib\rustlib\src\rust\library\std\src\panic.rs:431

31: winit::platform_impl::platform::event_loop::runner::EventLoopRunner<tuple<>>::catch_unwind<tuple<>,tuple<>,closure-0>

at C:\Users\tehpe\.cargo\registry\src\github.com-1ecc6299db9ec823\winit-0.24.0\src\platform_impl\windows\event_loop\runner.rs:152

32: winit::platform_impl::platform::event_loop::runner::EventLoopRunner<tuple<>>::call_event_handler<tuple<>>

at C:\Users\tehpe\.cargo\registry\src\github.com-1ecc6299db9ec823\winit-0.24.0\src\platform_impl\windows\event_loop\runner.rs:239

33: winit::platform_impl::platform::event_loop::runner::EventLoopRunner<tuple<>>::move_state_to<tuple<>>

at C:\Users\tehpe\.cargo\registry\src\github.com-1ecc6299db9ec823\winit-0.24.0\src\platform_impl\windows\event_loop\runner.rs:341

34: winit::platform_impl::platform::event_loop::runner::EventLoopRunner<tuple<>>::main_events_cleared<tuple<>>

at C:\Users\tehpe\.cargo\registry\src\github.com-1ecc6299db9ec823\winit-0.24.0\src\platform_impl\windows\event_loop\runner.rs:227

35: winit::platform_impl::platform::event_loop::flush_paint_messages<tuple<>>

at C:\Users\tehpe\.cargo\registry\src\github.com-1ecc6299db9ec823\winit-0.24.0\src\platform_impl\windows\event_loop.rs:676

36: winit::platform_impl::platform::event_loop::thread_event_target_callback::{{closure}}<tuple<>>

at C:\Users\tehpe\.cargo\registry\src\github.com-1ecc6299db9ec823\winit-0.24.0\src\platform_impl\windows\event_loop.rs:1967

37: std::panic::{{impl}}::call_once<isize,closure-0>

at C:\Users\tehpe\.rustup\toolchains\nightly-x86_64-pc-windows-msvc\lib\rustlib\src\rust\library\std\src\panic.rs:344

38: std::panicking::try::do_call<std::panic::AssertUnwindSafe<closure-0>,isize>

at C:\Users\tehpe\.rustup\toolchains\nightly-x86_64-pc-windows-msvc\lib\rustlib\src\rust\library\std\src\panicking.rs:379

39: hashbrown::set::HashSet<mut winapi::shared::windef::HWND__*, std::collections::hash::map::RandomState, alloc::alloc::Global>::iter<mut winapi::shared::windef::HWND__*,std::collections::hash::map::RandomState,alloc::alloc::Global>

40: std::panicking::try<isize,std::panic::AssertUnwindSafe<closure-0>>

at C:\Users\tehpe\.rustup\toolchains\nightly-x86_64-pc-windows-msvc\lib\rustlib\src\rust\library\std\src\panicking.rs:343

41: std::panic::catch_unwind<std::panic::AssertUnwindSafe<closure-0>,isize>

at C:\Users\tehpe\.rustup\toolchains\nightly-x86_64-pc-windows-msvc\lib\rustlib\src\rust\library\std\src\panic.rs:431

42: winit::platform_impl::platform::event_loop::runner::EventLoopRunner<tuple<>>::catch_unwind<tuple<>,isize,closure-0>

at C:\Users\tehpe\.cargo\registry\src\github.com-1ecc6299db9ec823\winit-0.24.0\src\platform_impl\windows\event_loop\runner.rs:152

43: winit::platform_impl::platform::event_loop::thread_event_target_callback<tuple<>>

at C:\Users\tehpe\.cargo\registry\src\github.com-1ecc6299db9ec823\winit-0.24.0\src\platform_impl\windows\event_loop.rs:2151

44: DefSubclassProc

45: DefSubclassProc

46: CallWindowProcW

47: DispatchMessageW

48: SendMessageTimeoutW

49: KiUserCallbackDispatcher

50: NtUserDispatchMessage

51: DispatchMessageW

52: winit::platform_impl::platform::event_loop::EventLoop<tuple<>>::run_return<tuple<>,closure-1>

at C:\Users\tehpe\.cargo\registry\src\github.com-1ecc6299db9ec823\winit-0.24.0\src\platform_impl\windows\event_loop.rs:218

53: winit::platform_impl::platform::event_loop::EventLoop<tuple<>>::run<tuple<>,closure-1>

at C:\Users\tehpe\.cargo\registry\src\github.com-1ecc6299db9ec823\winit-0.24.0\src\platform_impl\windows\event_loop.rs:188

54: winit::event_loop::EventLoop<tuple<>>::run<tuple<>,closure-1>

at C:\Users\tehpe\.cargo\registry\src\github.com-1ecc6299db9ec823\winit-0.24.0\src\event_loop.rs:154

55: bevy_winit::run<closure-1>

at C:\Dev\Games\bevy\crates\bevy_winit\src\lib.rs:171

56: bevy_winit::winit_runner_with

at C:\Dev\Games\bevy\crates\bevy_winit\src\lib.rs:493

57: bevy_winit::winit_runner

at C:\Dev\Games\bevy\crates\bevy_winit\src\lib.rs:211

58: core::ops::function::Fn::call<fn(bevy_app::app::App),tuple<bevy_app::app::App>>

at C:\Users\tehpe\.rustup\toolchains\nightly-x86_64-pc-windows-msvc\lib\rustlib\src\rust\library\core\src\ops\function.rs:70

59: alloc::boxed::{{impl}}::call<tuple<bevy_app::app::App>,Fn<tuple<bevy_app::app::App>>,alloc::alloc::Global>

at C:\Users\tehpe\.rustup\toolchains\nightly-x86_64-pc-windows-msvc\lib\rustlib\src\rust\library\alloc\src\boxed.rs:1560

60: bevy_app::app::App::run

at C:\Dev\Games\bevy\crates\bevy_app\src\app.rs:68

61: bevy_app::app_builder::AppBuilder::run

at C:\Dev\Games\bevy\crates\bevy_app\src\app_builder.rs:54

62: game_main::main

at .\crates\game_main\src\main.rs:23

63: core::ops::function::FnOnce::call_once<fn(),tuple<>>

at C:\Users\tehpe\.rustup\toolchains\nightly-x86_64-pc-windows-msvc\lib\rustlib\src\rust\library\core\src\ops\function.rs:227

note: Some details are omitted, run with `RUST_BACKTRACE=full` for a verbose backtrace.

Apr 27 21:51:01.026 ERROR gpu_descriptor::allocator: `DescriptorAllocator` is dropped while some descriptor sets were not deallocated

error: process didn't exit successfully: `target/cargo\debug\game_main.exe` (exit code: 0xc000041d)

```

There are cases where we want an enum variant name. Right now the only way to do that with rust's std is to derive Debug, but this will also print out the variant's fields. This creates the unfortunate situation where we need to manually write out each variant's string name (ex: in #1963), which is both boilerplate-ey and error-prone. Crates such as `strum` exist for this reason, but it includes a lot of code and complexity that we don't need.

This adds a dead-simple `EnumVariantMeta` derive that exposes `enum_variant_index` and `enum_variant_name` functions. This allows us to make cases like #1963 much cleaner (see the second commit). We might also be able to reuse this logic for `bevy_reflect` enum derives.
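For illustration, here is roughly what such a derive could expand to, written by hand. Only the two function names come from the description above; the trait shape and the `Shape` enum are assumptions.

```rust
/// Assumed trait shape; only the two function names are taken from the PR text.
trait EnumVariantMeta {
    fn enum_variant_index(&self) -> usize;
    fn enum_variant_name(&self) -> &'static str;
}

/// Hypothetical enum used only for this example.
enum Shape {
    Circle { radius: f32 },
    Rect(f32, f32),
    Empty,
}

// One match arm per variant, ignoring the fields. Unlike `Debug`,
// nothing about the variant's contents is printed.
impl EnumVariantMeta for Shape {
    fn enum_variant_index(&self) -> usize {
        match self {
            Shape::Circle { .. } => 0,
            Shape::Rect(..) => 1,
            Shape::Empty => 2,
        }
    }

    fn enum_variant_name(&self) -> &'static str {
        match self {
            Shape::Circle { .. } => "Circle",
            Shape::Rect(..) => "Rect",
            Shape::Empty => "Empty",
        }
    }
}
```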

In bevy_webgl2, the `RenderResourceContext` is created after startup as it needs to first wait for an event from js side:

f31e5d49de/src/lib.rs (L117)

remove `panic` introduced in #1965 and log as a `warn` instead

This implementation allows you to convert `std::vec::Vec<T>` to `VertexAttributeValues::T` and back.

# Examples

```rust

use std::convert::TryInto;

use bevy_render::mesh::VertexAttributeValues;

// creating vector of values

let before = vec![[0_u32; 4]; 10];

let values = VertexAttributeValues::from(before.clone());

let after: Vec<[u32; 4]> = values.try_into().unwrap();

assert_eq!(before, after);

```

Co-authored-by: aloucks <aloucks@cofront.net>

Co-authored-by: simens_green <34134129+simensgreen@users.noreply.github.com>

Co-authored-by: Carter Anderson <mcanders1@gmail.com>

Allows render resources to move data to the heap by boxing them. I did this as a workaround to #1892, but it seems like it'd be useful regardless. If not, feel free to close this PR.

Implements `Byteable` and `RenderResource` for any array containing `Byteable` elements. This allows `RenderResources` to be implemented on structs with arbitrarily-sized arrays, among other things:

```rust

#[derive(RenderResources, TypeUuid)]

#[uuid = "2733ff34-8f95-459f-bf04-3274e686ac5f"]

struct Foo {

buffer: [i32; 256],

}

```

Fixes #1809. It also makes it possible to use `derive` for `SystemParam` inside ECS and avoid a manual implementation. An alternative to the macro changes is to use `use crate as bevy_ecs;` in `event.rs`.

The `VertexBufferLayout` returned by `crates\bevy_render\src\mesh\mesh.rs:308` was unstable, because `HashMap.iter()` has a random order. This caused the pipeline_compiler to wrongly consider a specialization to be different (`crates\bevy_render\src\pipeline\pipeline_compiler.rs:123`), causing each mesh changed event to potentially result in a different `PipelineSpecialization`. This in turn caused `Draw` to emit a `set_pipeline` much more often than needed.

This fix shaves off a `BindPipeline` and two `BindDescriptorSets` (for the Camera and for global renderresources) for every mesh after the first that can now use the same specialization, where it didn't before (which was random).

`StableHashMap` was not a good replacement, because it isn't `Clone`, so instead I replaced it with a `BTreeMap` which is OK in this instance, because there shouldn't be many insertions on `Mesh.attributes` after the mesh is created.
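A small sketch of why the `BTreeMap` helps: it iterates in sorted key order, so a layout derived from it does not depend on insertion order or on a random hash seed. The function name and the attribute sizes below are illustrative assumptions, not the code in `mesh.rs`.

```rust
use std::collections::BTreeMap;

/// Computes a (name, byte offset) list from attribute sizes.
/// Because `BTreeMap` iterates in sorted key order, the result is the same
/// no matter in which order the attributes were inserted.
fn layout_offsets(attribute_sizes: &BTreeMap<&'static str, u64>) -> Vec<(&'static str, u64)> {
    let mut offset = 0;
    let mut layout = Vec::new();
    for (name, size) in attribute_sizes {
        layout.push((*name, offset));
        offset += *size;
    }
    layout
}
```

Two meshes with the same attributes therefore produce equal layouts, which is what lets the pipeline compiler recognize an existing specialization.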

- prints glsl compile error message in multiple lines instead of `thread 'main' panicked at 'called Result::unwrap() on an Err value: Compilation("glslang_shader_parse:\nInfo log:\nERROR: 0:335: \'assign\' : l-value required \"anon@7\" (can\'t modify a uniform)\nERROR: 0:335: \'\' : compilation terminated \nERROR: 2 compilation errors. No code generated.\n\n\nDebug log:\n\n")', crates/bevy_render/src/pipeline/pipeline_compiler.rs:161:22`

- makes gltf error messages have more context

New error:

```

thread 'Compute Task Pool (5)' panicked at 'Shader compilation error:

glslang_shader_parse:

Info log:

ERROR: 0:12: 'assign' : l-value required "anon@1" (can't modify a uniform)

ERROR: 0:12: '' : compilation terminated

ERROR: 2 compilation errors. No code generated.

', crates/bevy_render/src/pipeline/pipeline_compiler.rs:364:5

```
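The difference between the old and new output comes down to `Debug` versus `Display` formatting: `unwrap()` prints the error with `Debug`, which escapes newlines inside strings. A minimal sketch with a hypothetical `ShaderError` type (not the actual Bevy error type):

```rust
use std::fmt;

/// Hypothetical error type carrying a multi-line compiler log.
#[derive(Debug)]
enum ShaderError {
    Compilation(String),
}

impl fmt::Display for ShaderError {
    fn fmt(&self, f: &mut fmt::Formatter<'_>) -> fmt::Result {
        match self {
            ShaderError::Compilation(log) => {
                write!(f, "Shader compilation error:\n{}", log)
            }
        }
    }
}

/// What `unwrap()` would show: `Debug` output, with `\n` escaped.
fn debug_message(err: &ShaderError) -> String {
    format!("{:?}", err)
}

/// `Display` output keeps the real line breaks.
fn display_message(err: &ShaderError) -> String {
    format!("{}", err)
}
```

Panicking with the `Display` form (for example via `panic!("{}", err)`) keeps the compiler log readable.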

These changes are a bit unrelated. I can open separate PRs if someone wants that.

After #1697 I looked at all other Iterators from Bevy and added overrides for `size_hint` where it wasn't done.

Also implemented `ExactSizeIterator` where applicable.
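As a sketch of the pattern (using a hypothetical `Countdown` iterator rather than one of Bevy's), overriding `size_hint` and implementing `ExactSizeIterator` looks like this:

```rust
/// Hypothetical iterator that knows exactly how many items remain.
struct Countdown {
    remaining: usize,
}

impl Iterator for Countdown {
    type Item = usize;

    fn next(&mut self) -> Option<usize> {
        if self.remaining == 0 {
            None
        } else {
            self.remaining -= 1;
            Some(self.remaining)
        }
    }

    // The default implementation returns `(0, None)`. Reporting the real
    // bounds lets consumers such as `collect` reserve capacity up front.
    fn size_hint(&self) -> (usize, Option<usize>) {
        (self.remaining, Some(self.remaining))
    }
}

// Only valid because `size_hint` above is exact; this provides `len()`.
impl ExactSizeIterator for Countdown {}
```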

In shaders, `vec3` should be avoided for `std140` layout, as they take the size of a `vec4` and won't support manual padding by adding an additional `float`.
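To make the size mismatch concrete from the CPU side, here is a small sketch with two hypothetical `#[repr(C)]` structs. Under `std140` a `vec3` is aligned to 16 bytes, so a tightly packed pair of `[f32; 3]` does not line up with a block declared with two `vec3` fields, while a pair of `[f32; 4]` matches a block declared with `vec4`.

```rust
/// Hypothetical CPU-side mirror of a uniform block that uses `vec4` fields.
/// Each field is 16 bytes, so the Rust layout and the std140 layout agree.
#[repr(C)]
struct LightVec4 {
    position: [f32; 4],
    color: [f32; 4],
}

/// Hypothetical tightly packed variant: 12 bytes per field, 24 bytes total.
/// A std140 block with two `vec3` fields would place `color` at offset 16 instead.
#[repr(C)]
struct LightVec3 {
    position: [f32; 3],
    color: [f32; 3],
}

/// Widens a three-component value to four components for upload.
fn pad_vec3(v: [f32; 3]) -> [f32; 4] {
    [v[0], v[1], v[2], 0.0]
}
```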

This change is needed for 3D to work in WebGL2. With it, I get PBR to render

<img width="1407" alt="Screenshot 2021-04-02 at 02 57 14" src="https://user-images.githubusercontent.com/8672791/113368551-5a3c2780-935f-11eb-8c8d-e9ba65b5ee98.png">

Without it, nothing renders... @cart Could this be considered for 0.5 release?

Also, I learned shaders 2 days ago, so don't hesitate to correct any issue or misunderstanding I may have

bevy_webgl2 PR in progress for Bevy 0.5 is here if you want to test: https://github.com/rparrett/bevy_webgl2/pull/1

I think [collection, thing_removed_from_collection] is a more natural order than [thing_removed_from_collection, collection]. Just a small tweak that I think we should include in 0.5.

This PR adds normal maps on top of PBR #1554. Once that PR lands, the changes should look simpler.

Edit: Turned out to be so little extra work, I added metallic/roughness texture too. And occlusion and emissive.

Co-authored-by: Carter Anderson <mcanders1@gmail.com>

This PR adds two systems to the sprite module that culls Sprites and AtlasSprites that are not within the camera's view.

This is achieved by removing / adding a new `Viewable` Component dynamically.

Some of the render queries now use a `With<Viewable>` filter to only process the sprites that are actually on screen, which improves performance drastically for scenes with a large number of off-screen sprites.
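The visibility test behind such culling can be sketched as a rectangle overlap check. This is a standalone illustration with hypothetical `Rect`, `overlaps`, and `visible_sprites` names; it does not use the ECS and is not the system code from this PR.

```rust
/// Axis-aligned rectangle given by its center and full size (hypothetical helper).
#[derive(Clone, Copy)]
struct Rect {
    center: (f32, f32),
    size: (f32, f32),
}

/// Two rectangles overlap when their centers are closer than the sum of
/// their half-extents on both axes.
fn overlaps(a: Rect, b: Rect) -> bool {
    let dx = (a.center.0 - b.center.0).abs();
    let dy = (a.center.1 - b.center.1).abs();
    dx <= (a.size.0 + b.size.0) / 2.0 && dy <= (a.size.1 + b.size.1) / 2.0
}

/// Returns the indices of the sprites that would keep a `Viewable`-style marker.
fn visible_sprites(view: Rect, sprites: &[Rect]) -> Vec<usize> {
    sprites
        .iter()
        .enumerate()
        .filter(|(_, sprite)| overlaps(view, **sprite))
        .map(|(index, _)| index)
        .collect()
}
```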

https://streamable.com/vvzh2u

This scene shows a map of 320x320 tiles, with a grid size of 64p.

This is exactly 102400 Sprites in the entire scene.

Without this PR, this scene runs with 1 to 4 FPS.

With this PR..

.. at 720p, there are around 600 visible sprites and runs at ~215 FPS

.. at 1440p there are around 2000 visible sprites and runs at ~135 FPS

The Systems this PR adds take around 1.2ms (with 100K+ sprites in the scene)

Note:

This is only implemented for Sprites and AtlasTextureSprites.

There is no culling for 3D in this PR.

Co-authored-by: Carter Anderson <mcanders1@gmail.com>

This is a rebase of StarArawn's PBR work from #261 with IngmarBitter's work from #1160 cherry-picked on top.

I had to make a few minor changes to make some intermediate commits compile and the end result is not yet 100% what I expected, so there's a bit more work to do.

Co-authored-by: John Mitchell <toasterthegamer@gmail.com>

Co-authored-by: Ingmar Bitter <ingmar.bitter@gmail.com>

Alternative to #1203 and #1611

Camera bindings have historically been "hacked in". They were _required_ in all shaders and only supported a single Mat4. PBR (#1554) requires the CameraView matrix, but adding this using the "hacked" method forced users to either include all possible camera data in a single binding (#1203) or include all possible bindings (#1611).

This approach instead assigns each "active camera" its own RenderResourceBindings, which are populated by CameraNode. The PassNode then retrieves (and initializes) the relevant bind groups for all render pipelines used by visible entities.

* Enables any number of camera bindings, including zero (with any set or binding number ... set 0 should still be used to avoid rebinds).

* Renames Camera binding to CameraViewProj

* Adds CameraView binding

# Problem Definition

The current change tracking (via flags for both components and resources) fails to detect changes when the detecting system is scheduled to run earlier in the frame than the system that makes them.

This issue is discussed at length in [#68](https://github.com/bevyengine/bevy/issues/68) and [#54](https://github.com/bevyengine/bevy/issues/54).

This is very much a draft PR, and contributions are welcome and needed.

# Criteria

1. Each change is detected at least once, no matter the ordering.

2. Each change is detected at most once, no matter the ordering.

3. Changes should be detected the same frame that they are made.

4. Competitive ergonomics. Ideally does not require opting-in.

5. Low CPU overhead of computation.

6. Memory efficient. This must not increase over time, except where the number of entities / resources does.

7. Changes should not be lost for systems that don't run.

8. A frame needs to act as a pure function. Given the same set of entities / components it needs to produce the same end state without side-effects.

**Exact** change-tracking proposals satisfy criteria 1 and 2.

**Conservative** change-tracking proposals satisfy criteria 1 but not 2.

**Flaky** change tracking proposals satisfy criteria 2 but not 1.
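The three categories map directly onto criteria 1 and 2, which can be written down as a tiny lookup. The `Neither` variant is an addition for completeness and is not a category named in this text.

```rust
/// Categories from the text, plus `Neither` (an assumption added so the match is total).
#[derive(Debug, PartialEq)]
enum Tracking {
    Exact,
    Conservative,
    Flaky,
    Neither,
}

/// `at_least_once` is criterion 1, `at_most_once` is criterion 2.
fn classify(at_least_once: bool, at_most_once: bool) -> Tracking {
    match (at_least_once, at_most_once) {
        (true, true) => Tracking::Exact,
        (true, false) => Tracking::Conservative,
        (false, true) => Tracking::Flaky,
        (false, false) => Tracking::Neither,
    }
}
```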

# Code Base Navigation

There are three types of flags:

- `Added`: A piece of data was added to an entity / `Resources`.

- `Mutated`: A piece of data was able to be modified, because its `DerefMut` was accessed

- `Changed`: The bitwise OR of `Added` and `Mutated`
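Reading `Changed` as the combination of `Added` and `Mutated`, the relationship can be sketched with plain bit constants. The concrete bit values and the helper are assumptions for illustration; the actual flag type in `bevy_ecs` may be defined differently.

```rust
// Hypothetical bit layout: one bit per flag.
const ADDED: u8 = 0b01;
const MUTATED: u8 = 0b10;
// `Changed` matches when either of the two bits is set.
const CHANGED: u8 = ADDED | MUTATED;

/// True if the component was added or mutated.
fn is_changed(flags: u8) -> bool {
    (flags & CHANGED) != 0
}
```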

The special behavior of `ChangedRes`, with respect to the scheduler is being removed in [#1313](https://github.com/bevyengine/bevy/pull/1313) and does not need to be reproduced.

`ChangedRes` and friends can be found in "bevy_ecs/core/resources/resource_query.rs".

The `Flags` trait for Components can be found in "bevy_ecs/core/query.rs".

`ComponentFlags` are stored in "bevy_ecs/core/archetypes.rs", defined on line 446.

# Proposals

**Proposal 5 was selected for implementation.**

## Proposal 0: No Change Detection

The baseline, where computations are performed on everything regardless of whether it changed.

**Type:** Conservative

**Pros:**

- already implemented

- will never miss events

- no overhead

**Cons:**

- tons of repeated work

- doesn't allow users to avoid repeating work (or monitoring for other changes)

## Proposal 1: Earlier-This-Tick Change Detection

The current approach as of Bevy 0.4. Flags are set, and then flushed at the end of each frame.

**Type:** Flaky

**Pros:**

- already implemented

- simple to understand

- low memory overhead (2 bits per component)

- low time overhead (clear every flag once per frame)

**Cons:**

- misses systems based on ordering

- systems that don't run every frame miss changes

- duplicates detection when looping

- can lead to unresolvable circular dependencies

## Proposal 2: Two-Tick Change Detection

Flags persist for two frames, using a double-buffer system identical to that used in events.

A change is observed if it is found in either the current frame's list of changes or the previous frame's.

**Type:** Conservative

**Pros:**

- easy to understand

- easy to implement

- low memory overhead (4 bits per component)

- low time overhead (bit mask and shift every flag once per frame)

**Cons:**

- can result in a great deal of duplicated work

- systems that don't run every frame miss changes

- duplicates detection when looping
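A minimal sketch of the two-tick idea for a single flag, assuming a hypothetical layout with one "current frame" bit and one "previous frame" bit (two such flags, `Added` and `Mutated`, would give the 4 bits per component mentioned above):

```rust
const CURRENT: u8 = 0b01; // changed during this frame
const PREVIOUS: u8 = 0b10; // changed during the previous frame

/// Hypothetical two-bit flag for one kind of change.
#[derive(Default, Clone, Copy)]
struct TwoTickFlag(u8);

impl TwoTickFlag {
    fn mark_changed(&mut self) {
        self.0 |= CURRENT;
    }

    /// Proposal 2: a change is observed if it is in either frame's list.
    fn observed(&self) -> bool {
        (self.0 & (CURRENT | PREVIOUS)) != 0
    }

    /// End of frame: shift "current" into "previous" and drop anything older.
    fn end_frame(&mut self) {
        self.0 = (self.0 << 1) & PREVIOUS;
    }
}
```

A change stays visible for the rest of its own frame and all of the next one, which is why this approach is conservative rather than exact.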

## Proposal 3: Last-Tick Change Detection

Flags persist for two frames, using a double-buffer system identical to that used in events.

A change is observed if it is found in the previous frame's list of changes.

**Type:** Exact

**Pros:**

- exact

- easy to understand

- easy to implement

- low memory overhead (4 bits per component)

- low time overhead (bit mask and shift every flag once per frame)

**Cons:**

- change detection is always delayed, possibly causing painful chained delays

- systems that don't run every frame miss changes

- duplicates detection when looping

## Proposal 4: Flag-Doubling Change Detection

Combine Proposal 2 and Proposal 3. Differentiate between `JustChanged` (current behavior) and `Changed` (Proposal 3).

Pack this data into the flags according to [this implementation proposal](https://github.com/bevyengine/bevy/issues/68#issuecomment-769174804).

**Type:** Flaky + Exact

**Pros:**

- allows users to acc

- easy to implement

- low memory overhead (4 bits per component)

- low time overhead (bit mask and shift every flag once per frame)

**Cons:**

- users must specify the type of change detection required

- still quite fragile to system ordering effects when using the flaky `JustChanged` form

- cannot get immediate + exact results

- systems that don't run every frame miss changes

- duplicates detection when looping

## [SELECTED] Proposal 5: Generation-Counter Change Detection

A global counter is increased after each system is run. Each component saves the time of last mutation, and each system saves the time of last execution. Mutation is detected when the component's counter is greater than the system's counter. Discussed [here](https://github.com/bevyengine/bevy/issues/68#issuecomment-769174804). How to handle addition detection is unsolved; the current proposal is to use the highest bit of the counter as in proposal 1.

**Type:** Exact (for mutations), flaky (for additions)

**Pros:**

- low time overhead (set component counter on access, set system counter after execution)

- robust to systems that don't run every frame

- robust to systems that loop

**Cons:**

- moderately complex implementation

- must be modified as systems are inserted dynamically

- medium memory overhead (4 bytes per component + system)

- unsolved addition detection
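The counter comparison in this proposal can be sketched as follows. All type and method names are hypothetical, addition detection is left out (it is unsolved above), and counter overflow is ignored.

```rust
/// Hypothetical world state holding the single global counter.
struct World {
    counter: u32,
}

/// A component value plus the counter value at its last mutation.
struct Component {
    value: i32,
    last_mutated: u32,
}

/// Per-system state: the counter value at the system's last execution.
struct SystemState {
    last_run: u32,
}

impl World {
    /// Records a mutation at the current counter value.
    fn mutate(&self, component: &mut Component, value: i32) {
        component.value = value;
        component.last_mutated = self.counter;
    }

    /// Called after each system has run: stamp the system, then advance.
    /// Overflow handling is deliberately omitted in this sketch.
    fn finish_system(&mut self, system: &mut SystemState) {
        system.last_run = self.counter;
        self.counter += 1;
    }
}

/// A mutation is detected when it happened after the system last ran.
fn is_mutated(component: &Component, system: &SystemState) -> bool {
    component.last_mutated > system.last_run
}
```

Because each system compares against its own last run, a system that runs before the mutating system still sees the change exactly once on its next run, and a system that skips frames does not lose it.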

## Proposal 6: System-Data Change Detection

For each system, track which system's changes it has seen. This approach is only worth fully designing and implementing if Proposal 5 fails in some way.

**Type:** Exact

**Pros:**

- exact

- conceptually simple

**Cons:**

- requires storing data on each system

- implementation is complex

- must be modified as systems are inserted dynamically

## Proposal 7: Total-Order Change Detection

Discussed [here](https://github.com/bevyengine/bevy/issues/68#issuecomment-754326523). This proposal is somewhat complicated by the new scheduler, but I believe it should still be conceptually feasible. This approach is only worth fully designing and implementing if Proposal 5 fails in some way.

**Type:** Exact

**Pros:**

- exact

- efficient data storage relative to other exact proposals

**Cons:**

- requires access to the scheduler

- complex implementation and difficulty grokking

- must be modified as systems are inserted dynamically

# Tests

- We will need to verify properties 1, 2, 3, 7 and 8. Priority: 1 > 2 = 3 > 8 > 7

- Ideally we can use identical user-facing syntax for all proposals, allowing us to re-use the same tests for each.

- When writing tests, we need to carefully specify order using explicit dependencies.

- These tests will need to be duplicated for both components and resources.

- We need to be sure to handle cases where ambiguous system orders exist.

`changing_system` is always the system that makes the changes, and `detecting_system` always detects the changes.

The component / resource changed will be simple boolean wrapper structs.

## Basic Added / Mutated / Changed

2 x 3 design:

- Resources vs. Components

- Added vs. Changed vs. Mutated

- `changing_system` runs before `detecting_system`

- verify at the end of tick 2

## At Least Once

2 x 3 design:

- Resources vs. Components

- Added vs. Changed vs. Mutated

- `changing_system` runs after `detecting_system`

- verify at the end of tick 2

## At Most Once

2 x 3 design:

- Resources vs. Components

- Added vs. Changed vs. Mutated

- `changing_system` runs once before `detecting_system`

- increment a counter based on the number of changes detected

- verify at the end of tick 2

## Fast Detection

2 x 3 design:

- Resources vs. Components

- Added vs. Changed vs. Mutated

- `changing_system` runs before `detecting_system`

- verify at the end of tick 1

## Ambiguous System Ordering Robustness

2 x 3 x 2 design:

- Resources vs. Components

- Added vs. Changed vs. Mutated

- `changing_system` runs [before/after] `detecting_system` in tick 1

- `changing_system` runs [after/before] `detecting_system` in tick 2

## System Pausing

2 x 3 design:

- Resources vs. Components

- Added vs. Changed vs. Mutated

- `changing_system` runs in tick 1, then is disabled by run criteria

- `detecting_system` is disabled by run criteria until it is run once during tick 3

- verify at the end of tick 3

## Addition Causes Mutation

2 design:

- Resources vs. Components

- `adding_system_1` adds a component / resource

- `adding_system_2` adds the same component / resource

- verify the `Mutated` flag at the end of the tick

- verify the `Added` flag at the end of the tick

First check tests for: https://github.com/bevyengine/bevy/issues/333

Second check tests for: https://github.com/bevyengine/bevy/issues/1443

## Changes Made By Commands

- `adding_system` runs in Update in tick 1, and sends a command to add a component

- `detecting_system` runs in Update in tick 1 and 2, after `adding_system`

- We can't detect the changes in tick 1, since they haven't been processed yet

- If we were to track these changes as being emitted by `adding_system`, we can't detect the changes in tick 2 either, since `detecting_system` has already run once after `adding_system` :(

# Benchmarks

See: [general advice](https://github.com/bevyengine/bevy/blob/master/docs/profiling.md), [Criterion crate](https://github.com/bheisler/criterion.rs)

There are several critical parameters to vary:

1. entity count (1 to 10^9)

2. fraction of entities that are changed (0% to 100%)

3. cost to perform work on changed entities, i.e. workload (1 ns to 1s)

1 and 2 should be varied between benchmark runs. 3 can be added on computationally.

We want to measure:

- memory cost

- run time

We should collect these measurements across several frames (100?) to reduce bootup effects and accurately measure the mean, variance and drift.

Entity-component change detection is much more important to benchmark than resource change detection, due to the orders of magnitude higher number of pieces of data.

No change detection at all should be included in benchmarks as a second control for cases where missing changes is unacceptable.

## Graphs

1. y: performance, x: log_10(entity count), color: proposal, facet: performance metric. Set cost to perform work to 0.

2. y: run time, x: cost to perform work, color: proposal, facet: fraction changed. Set number of entities to 10^6

3. y: memory, x: frames, color: proposal

# Conclusions

1. Is the theoretical categorization of the proposals correct according to our tests?

2. How does the performance of the proposals compare without any load?

3. How does the performance of the proposals compare with realistic loads?

4. At what workload does more exact change tracking become worth the (presumably) higher overhead?

5. When does adding change-detection to save on work become worthwhile?

6. Is there enough divergence in performance between the best solutions in each class to ship more than one change-tracking solution?

# Implementation Plan

1. Write a test suite.

2. Verify that tests fail for existing approach.

3. Write a benchmark suite.

4. Get performance numbers for existing approach.

5. Implement, test and benchmark various solutions using a Git branch per proposal.

6. Create a draft PR with all solutions and present results to team.

7. Select a solution and replace existing change detection.

Co-authored-by: Brice DAVIER <bricedavier@gmail.com>

Co-authored-by: Carter Anderson <mcanders1@gmail.com>

`Color` can now be from different color spaces or representations:

- sRGB

- linear RGB

- HSL

This fixes #1193 by allowing the creation of const colors of all types, and writing it to the linear RGB color space for rendering.
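A simplified sketch of the idea: an enum with one variant per representation, constructible in `const` context, and converted to linear RGB on demand. The variant and function names are assumptions and not necessarily those used in this PR; the conversions are the standard sRGB transfer function and the standard HSL-to-RGB formula.

```rust
/// Simplified, hypothetical multi-space color enum (alpha omitted).
#[derive(Clone, Copy, Debug, PartialEq)]
enum Color {
    Srgb { red: f32, green: f32, blue: f32 },
    LinearRgb { red: f32, green: f32, blue: f32 },
    Hsl { hue: f32, saturation: f32, lightness: f32 },
}

// Variants are plain data, so any representation can be a `const`.
const ORANGE_HSL: Color = Color::Hsl { hue: 30.0, saturation: 1.0, lightness: 0.5 };

/// Standard sRGB-to-linear transfer function for one channel in 0..=1.
fn srgb_to_linear(c: f32) -> f32 {
    if c <= 0.04045 {
        c / 12.92
    } else {
        ((c + 0.055) / 1.055).powf(2.4)
    }
}

/// Standard HSL to non-linear sRGB conversion; hue is in degrees.
fn hsl_to_srgb(hue: f32, saturation: f32, lightness: f32) -> [f32; 3] {
    let chroma = (1.0 - (2.0 * lightness - 1.0).abs()) * saturation;
    let sector = hue.rem_euclid(360.0) / 60.0;
    let x = chroma * (1.0 - (sector % 2.0 - 1.0).abs());
    let (r, g, b) = match sector as u32 {
        0 => (chroma, x, 0.0),
        1 => (x, chroma, 0.0),
        2 => (0.0, chroma, x),
        3 => (0.0, x, chroma),
        4 => (x, 0.0, chroma),
        _ => (chroma, 0.0, x),
    };
    let m = lightness - chroma / 2.0;
    [r + m, g + m, b + m]
}

impl Color {
    /// Converts whatever representation is stored into linear RGB.
    fn as_linear_rgb(self) -> [f32; 3] {
        match self {
            Color::LinearRgb { red, green, blue } => [red, green, blue],
            Color::Srgb { red, green, blue } => {
                [srgb_to_linear(red), srgb_to_linear(green), srgb_to_linear(blue)]
            }
            Color::Hsl { hue, saturation, lightness } => {
                let [r, g, b] = hsl_to_srgb(hue, saturation, lightness);
                [srgb_to_linear(r), srgb_to_linear(g), srgb_to_linear(b)]
            }
        }
    }
}
```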

I went with an enum after trying with two different types (`Color` and `LinearColor`) to be able to use the different variants in all places where a `Color` is expected.

I also added the HSL representation because:

- I like it

- it's useful for some cases, see example `contributors`: I can just change the saturation and lightness while keeping the hue of the color

- I think adding another variant not using `red`, `green`, `blue` makes it clearer there are differences

Co-authored-by: Carter Anderson <mcanders1@gmail.com>

This is a follow-up to #1550.

I think calling explicit methods/values instead of default makes the code easier to read: "what is `Quat::default()`" vs "Oh, it's `Quat::IDENTITY`"

`Transform::identity()` and `GlobalTransform::identity()` can also be consts and I replaced the calls to their `default()` impl with `identity()`
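As a rough illustration of the pattern, the sketch below uses simplified stand-in types (not the real `glam` or `bevy_transform` definitions) to show an explicit `IDENTITY` constant and a `const fn identity()` that `Default` simply forwards to.

```rust
/// Simplified stand-in for a quaternion type.
#[derive(Debug, Clone, Copy, PartialEq)]
pub struct Quat {
    pub x: f32,
    pub y: f32,
    pub z: f32,
    pub w: f32,
}

impl Quat {
    /// The explicit name says what the value is; `default()` does not.
    pub const IDENTITY: Quat = Quat { x: 0.0, y: 0.0, z: 0.0, w: 1.0 };
}

impl Default for Quat {
    fn default() -> Self {
        Quat::IDENTITY
    }
}

/// Simplified stand-in for a transform type.
#[derive(Debug, Clone, Copy, PartialEq)]
pub struct Transform {
    pub translation: [f32; 3],
    pub rotation: Quat,
    pub scale: [f32; 3],
}

impl Transform {
    /// Being a `const fn`, this can initialize constants and statics.
    pub const fn identity() -> Self {
        Transform {
            translation: [0.0; 3],
            rotation: Quat::IDENTITY,
            scale: [1.0; 3],
        }
    }
}

impl Default for Transform {
    fn default() -> Self {
        Self::identity()
    }
}

/// Usable in const context, unlike `Transform::default()`.
pub const ORIGIN: Transform = Transform::identity();
```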

Fixes all warnings from `cargo doc --all`.

Those related to code blocks were introduced in #1612, but re-formatting using the experimental features in `rustfmt.toml` doesn't seem to reintroduce them.

* Adds labels and orderings to systems that need them (uses the new many-to-many labels for InputSystem)

* Removes the Event, PreEvent, Scene, and Ui stages in favor of First, PreUpdate, and PostUpdate (there is more collapsing potential, such as the Asset stages and _maybe_ removing First, but those have more nuance so they should be handled separately)

* Ambiguity detection now prints component conflicts

* Removed broken change filters from flex calculation (which implicitly relied on the z-update system always modifying translation.z). This will require more work to make it behave as expected, so I just removed it (and it was already doing this work every frame).

This is an effort to provide the correct `#[reflect_value(...)]` attributes where they are needed.

Supersedes #1533 and resolves #1528.

---

I am working under the following assumptions (thanks to @bjorn3 and @Davier for advice here):

- Any `enum` that derives `Reflect` and one or more of { `Serialize`, `Deserialize`, `PartialEq`, `Hash` } needs a `#[reflect_value(...)]` attribute containing the same subset of { `Serialize`, `Deserialize`, `PartialEq`, `Hash` } that is present on the derive.

- Same as above for `struct` and `#[reflect(...)]`, respectively.

- If a `struct` is used as a component, it should also have `#[reflect(Component)]`

- All reflected types should be registered in their plugins

I treated the following as components (added `#[reflect(Component)]` if necessary):

- `bevy_render`

- `struct RenderLayers`

- `bevy_transform`

- `struct GlobalTransform`

- `struct Parent`

- `struct Transform`

- `bevy_ui`

- `struct Style`

Not treated as components:

- `bevy_math`

- `struct Size<T>`

- `struct Rect<T>`

- Note: The updates for `Size<T>` and `Rect<T>` in `bevy::math::geometry` required using @Davier's suggestion to add `+ PartialEq` to the trait bound. I then registered the specific types used over in `bevy_ui` such as `Size<Val>`, etc. in `bevy_ui`'s plugin, since `bevy::math` does not contain a plugin.

- `bevy_render`

- `struct Color`

- `struct PipelineSpecialization`

- `struct ShaderSpecialization`

- `enum PrimitiveTopology`

- `enum IndexFormat`

Not Addressed:

- I am not searching for components in Bevy that are _not_ reflected. So if there are components that are not reflected that should be reflected, that will need to be figured out in another PR.

- I only added `#[reflect(...)]` or `#[reflect_value(...)]` entries for the set of four traits { `Serialize`, `Deserialize`, `PartialEq`, `Hash` } _if they were derived via `#[derive(...)]`_. I did not look for manual trait implementations of the same set of four, nor did I consider any traits outside the four. Are those other possibilities something that needs to be looked into?

This adds a `EventWriter<T>` `SystemParam` that is just a thin wrapper around `ResMut<Events<T>>`. This is primarily to have API symmetry between the reader and writer, and has the added benefit of easily improving the API later with no breaking changes.
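The wrapper idea can be sketched without Bevy as follows. `Events<T>` here is a simplified stand-in with hypothetical fields, and a plain `&mut` reference plays the role that `ResMut<Events<T>>` plays in the real type; the method names are assumptions for illustration.

```rust
/// Simplified stand-in for the `Events<T>` resource.
pub struct Events<T> {
    buffer: Vec<T>,
}

impl<T> Events<T> {
    pub fn new() -> Self {
        Events { buffer: Vec::new() }
    }

    pub fn send(&mut self, event: T) {
        self.buffer.push(event);
    }

    pub fn len(&self) -> usize {
        self.buffer.len()
    }
}

/// Thin write-only wrapper. Systems only see `send`-style methods, so the
/// storage behind it can change later without breaking callers.
pub struct EventWriter<'a, T> {
    events: &'a mut Events<T>,
}

impl<'a, T> EventWriter<'a, T> {
    pub fn new(events: &'a mut Events<T>) -> Self {
        EventWriter { events }
    }

    /// Forwards a single event to the underlying collection.
    pub fn send(&mut self, event: T) {
        self.events.send(event);
    }

    /// Forwards a batch of events.
    pub fn send_batch(&mut self, events: impl Iterator<Item = T>) {
        for event in events {
            self.events.send(event);
        }
    }
}
```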

Super simple and straightforward. I need this for the tilemap because if I need to update all chunk indices, then I can calculate it once and clone it. Of course, for now I'm just returning the Vec itself and then wrapping it, but it would be nice if I didn't have to do that.

I was fiddling with creating a mesh importer today, and decided to write some more docs.

A lot of this is describing general renderer/GL stuff, so you'll probably find most of it self-explanatory anyway, but perhaps it will be useful for someone.

Fix staging buffer required size calculation (fixes #1056)

The `required_staging_buffer_size` is currently calculated differently in two places, each will be correct in different situations:

* `prepare_staging_buffers()` based on actual `buffer_byte_len()`

* `set_required_staging_buffer_size_to_max()` based on item_size

In the case of render assets, `prepare_staging_buffers()` would only operate over changed assets. If some of the assets didn't change, their size wouldn't be taken into account for the `required_staging_buffer_size`. In some cases, this meant the buffers wouldn't be resized when they should. Now `prepare_staging_buffers()` is called over all assets, which may hit performance but at least gets the size right.

Shortly after `prepare_staging_buffers()`, `set_required_staging_buffer_size_to_max()` would unconditionally overwrite the previously computed value, even if using `item_size` made no sense. Now it only overwrites the value if bigger.

This can be considered a short-term hack, but should prevent a few hard-to-debug panics.

# Bevy ECS V2

This is a rewrite of Bevy ECS (basically everything but the new executor/schedule, which are already awesome). The overall goal was to improve the performance and versatility of Bevy ECS. Here is a quick bulleted list of changes before we dive into the details:

* Complete World rewrite

* Multiple component storage types:

* Tables: fast cache friendly iteration, slower add/removes (previously called Archetypes)

* Sparse Sets: fast add/remove, slower iteration

* Stateful Queries (caches query results for faster iteration. fragmented iteration is _fast_ now)

* Stateful System Params (caches expensive operations. inspired by @DJMcNab's work in #1364)

* Configurable System Params (users can set configuration when they construct their systems. once again inspired by @DJMcNab's work)

* Archetypes are now "just metadata", component storage is separate

* Archetype Graph (for faster archetype changes)

* Component Metadata

* Configure component storage type

* Retrieve information about component size/type/name/layout/send-ness/etc

* Components are uniquely identified by a densely packed ComponentId

* TypeIds are now totally optional (which should make implementing scripting easier)

* Super fast "for_each" query iterators

* Merged Resources into World. Resources are now just a special type of component

* EntityRef/EntityMut builder apis (more efficient and more ergonomic)

* Fast bitset-backed `Access<T>` replaces old hashmap-based approach everywhere

* Query conflicts are determined by component access instead of archetype component access (to avoid random failures at runtime)

* With/Without are still taken into account for conflicts, so this should still be comfy to use

* Much simpler `IntoSystem` impl

* Significantly reduced the amount of hashing throughout the ecs in favor of Sparse Sets (indexed by densely packed ArchetypeId, ComponentId, BundleId, and TableId)

* Safety Improvements

* Entity reservation uses a normal world reference instead of unsafe transmute

* QuerySets no longer transmute lifetimes

* Made traits "unsafe" where relevant

* More thorough safety docs

* WorldCell

* Exposes safe mutable access to multiple resources at a time in a World

* Replaced "catch all" `System::update_archetypes(world: &World)` with `System::new_archetype(archetype: &Archetype)`

* Simpler Bundle implementation

* Replaced slow "remove_bundle_one_by_one" used as fallback for Commands::remove_bundle with fast "remove_bundle_intersection"

* Removed `Mut<T>` query impl. it is better to only support one way: `&mut T`

* Removed with() from `Flags<T>` in favor of `Option<Flags<T>>`, which allows querying for flags to be "filtered" by default

* Components now have is_send property (currently only resources support non-send)

* More granular module organization

* New `RemovedComponents<T>` SystemParam that replaces `query.removed::<T>()`

* `world.resource_scope()` for mutable access to resources and world at the same time

* WorldQuery and QueryFilter traits unified. FilterFetch trait added to enable "short circuit" filtering. Automatically implemented for cases that don't need it

* Significantly slimmed down SystemState in favor of individual SystemParam state

* System Commands changed from `commands: &mut Commands` back to `mut commands: Commands` (to allow Commands to have a World reference)

Fixes #1320

## `World` Rewrite

This is a from-scratch rewrite of `World` that fills the niche that `hecs` used to. Yes, this means Bevy ECS is no longer a "fork" of hecs. We're going out on our own!

(the only shared code between the projects is the entity id allocator, which is already basically ideal)

A huge shout out to @SanderMertens (author of [flecs](https://github.com/SanderMertens/flecs)) for sharing some great ideas with me (specifically hybrid ecs storage and archetype graphs). He also helped advise on a number of implementation details.

## Component Storage (The Problem)

Two ECS storage paradigms have gained a lot of traction over the years:

* **Archetypal ECS**:

* Stores components in "tables" with static schemas. Each "column" stores components of a given type. Each "row" is an entity.

* Each "archetype" has its own table. Adding/removing an entity's component changes the archetype.

* Enables super-fast Query iteration due to its cache-friendly data layout

* Comes at the cost of more expensive add/remove operations for an Entity's components, because all components need to be copied to the new archetype's "table"

* **Sparse Set ECS**:

* Stores components of the same type in densely packed arrays, which are sparsely indexed by densely packed unsigned integers (Entity ids)

* Query iteration is slower than Archetypal ECS because each entity's component could be at any position in the sparse set. This "random access" pattern isn't cache friendly. Additionally, there is an extra layer of indirection because you must first map the entity id to an index in the component array.

* Adding/removing components is a cheap, constant time operation
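The sparse set layout described above can be sketched in a few lines. This is a generic textbook version keyed by a plain `usize` entity index, not the implementation used by any of the libraries mentioned; all names are chosen for illustration.

```rust
/// Minimal sparse set keyed by entity index.
pub struct SparseSet<T> {
    /// Indexed by entity id; holds the position of that entity's value in `dense`.
    sparse: Vec<Option<usize>>,
    /// Densely packed component values.
    dense: Vec<T>,
    /// Entity id that owns each slot of `dense` (same order as `dense`).
    entities: Vec<usize>,
}

impl<T> SparseSet<T> {
    pub fn new() -> Self {
        SparseSet { sparse: Vec::new(), dense: Vec::new(), entities: Vec::new() }
    }

    /// Inserts or overwrites the value for `entity`.
    pub fn insert(&mut self, entity: usize, value: T) {
        if entity >= self.sparse.len() {
            self.sparse.resize(entity + 1, None);
        }
        match self.sparse[entity] {
            Some(index) => self.dense[index] = value,
            None => {
                self.sparse[entity] = Some(self.dense.len());
                self.dense.push(value);
                self.entities.push(entity);
            }
        }
    }

    /// Two lookups: entity id -> dense index -> value (the extra indirection).
    pub fn get(&self, entity: usize) -> Option<&T> {
        let index = (*self.sparse.get(entity)?)?;
        self.dense.get(index)
    }

    /// Removes in constant time by swapping the last dense element into the hole.
    pub fn remove(&mut self, entity: usize) -> Option<T> {
        let index = self.sparse.get_mut(entity)?.take()?;
        let value = self.dense.swap_remove(index);
        self.entities.swap_remove(index);
        // The former last element (if any) now lives at `index`; fix its sparse entry.
        if let Some(&moved) = self.entities.get(index) {
            self.sparse[moved] = Some(index);
        }
        Some(value)
    }

    pub fn len(&self) -> usize {
        self.dense.len()
    }
}
```

Note how `remove` never touches any other component type, which is why add/remove is cheap here, while iterating several component types together requires one `get`-style lookup per entity per type.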

Bevy ECS V1, hecs, legion, flecs, and Unity DOTS are all "archetypal ecs-es". I personally think "archetypal" storage is a good default for game engines. An entity's archetype doesn't need to change frequently in general, and it creates "fast by default" query iteration (which is a much more common operation). It is also "self optimizing". Users don't need to think about optimizing component layouts for iteration performance. It "just works" without any extra boilerplate.

Shipyard and EnTT are "sparse set ecs-es". They employ "packing" as a way to work around the "suboptimal by default" iteration performance for specific sets of components. This helps, but I didn't think this was a good choice for a general purpose engine like Bevy because:

1. "packs" conflict with each other. If bevy decides to internally pack the Transform and GlobalTransform components, users are then blocked if they want to pack some custom component with Transform.

2. users need to take manual action to optimize

Developers selecting an ECS framework are stuck with a hard choice. Select an "archetypal" framework with "fast iteration everywhere" but without the ability to cheaply add/remove components, or select a "sparse set" framework to cheaply add/remove components but with slower iteration performance.

## Hybrid Component Storage (The Solution)

In Bevy ECS V2, we get to have our cake and eat it too. It now has _both_ of the component storage types above (and more can be added later if needed):

* **Tables** (aka "archetypal" storage)

* The default storage. If you don't configure anything, this is what you get

* Fast iteration by default

* Slower add/remove operations

* **Sparse Sets**

* Opt-in

* Slower iteration

* Faster add/remove operations

These storage types complement each other perfectly. By default Query iteration is fast. If developers know that they want to add/remove a component at high frequencies, they can set the storage to "sparse set":

```rust
world.register_component(
    ComponentDescriptor::new::<MyComponent>(StorageType::SparseSet)
).unwrap();
```

## Archetypes

Archetypes are now "just metadata" ... they no longer store components directly. They do store:

* The `ComponentId`s of each of the Archetype's components (and that component's storage type)

* Archetypes are uniquely defined by their component layouts

* For example: entities with "table" components `[A, B, C]` _and_ "sparse set" components `[D, E]` will always be in the same archetype.

* The `TableId` associated with the archetype

* For now each archetype has exactly one table (which can have no components)

* There is a 1->Many relationship from Tables->Archetypes. A given table could have any number of archetype components stored in it:

* Ex: an entity with "table storage" components `[A, B, C]` and "sparse set" components `[D, E]` will share the same `[A, B, C]` table as an entity with `[A, B, C]` table component and `[F]` sparse set components.

* This 1->Many relationship is how we preserve fast "cache friendly" iteration performance when possible (more on this later)

* A list of entities that are in the archetype and the row id of the table they are in

* ArchetypeComponentIds

* unique densely packed identifiers for (ArchetypeId, ComponentId) pairs

* used by the schedule executor for cheap system access control

* "Archetype Graph Edges" (see the next section)

## The "Archetype Graph"

Archetype changes in Bevy (and a number of other archetypal ecs-es) have historically been expensive to compute. First, you need to allocate a new vector of the entity's current component ids, add or remove components based on the operation performed, sort it (to ensure it is order-independent), then hash it to find the archetype (if it exists). And that's all before we get to the _already_ expensive full copy of all components to the new table storage.

The solution is to build a "graph" of archetypes to cache these results. @SanderMertens first exposed me to the idea (and he got it from @gjroelofs, who came up with it). They propose adding directed edges between archetypes for add/remove component operations. If `ComponentId`s are densely packed, you can use sparse sets to cheaply jump between archetypes.

Bevy takes this one step further by using add/remove `Bundle` edges instead of `Component` edges. Bevy encourages the use of `Bundles` to group add/remove operations. This is largely for "clearer game logic" reasons, but it also helps cut down on the number of archetype changes required. `Bundles` now also have densely-packed `BundleId`s. This allows us to use a _single_ edge for each bundle operation (rather than needing to traverse N edges ... one for each component). Single component operations are also bundles, so this is strictly an improvement over a "component only" graph.

As a result, an operation that used to be _heavy_ (both for allocations and compute) is now two dirt-cheap array lookups and zero allocations.
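The edge cache described in this section can be sketched roughly as below. This is a simplified model, not the code in this PR: ids are plain `usize` values, only "add bundle" edges are modeled, and the `slow_lookups` counter exists purely to make the caching visible.

```rust
use std::collections::HashMap;

type ArchetypeId = usize;
type ComponentId = usize;
type BundleId = usize;

struct Archetype {
    /// Sorted, deduplicated component ids.
    components: Vec<ComponentId>,
    /// Cached "add bundle" edges, indexed by the densely packed `BundleId`.
    add_bundle_edges: Vec<Option<ArchetypeId>>,
}

pub struct Archetypes {
    archetypes: Vec<Archetype>,
    by_components: HashMap<Vec<ComponentId>, ArchetypeId>,
    /// Number of times the slow path ran (demonstration only).
    pub slow_lookups: usize,
}

impl Archetypes {
    pub fn new() -> Self {
        let mut archetypes = Archetypes {
            archetypes: Vec::new(),
            by_components: HashMap::new(),
            slow_lookups: 0,
        };
        // Archetype 0 is the empty archetype.
        archetypes.get_or_insert(Vec::new());
        archetypes
    }

    fn get_or_insert(&mut self, components: Vec<ComponentId>) -> ArchetypeId {
        if let Some(&id) = self.by_components.get(&components) {
            return id;
        }
        let id = self.archetypes.len();
        self.archetypes.push(Archetype {
            components: components.clone(),
            add_bundle_edges: Vec::new(),
        });
        self.by_components.insert(components, id);
        id
    }

    /// Returns the archetype reached from `from` after adding the bundle.
    pub fn add_bundle(
        &mut self,
        from: ArchetypeId,
        bundle_id: BundleId,
        bundle_components: &[ComponentId],
    ) -> ArchetypeId {
        // Fast path: one lookup for the archetype, one for the edge.
        if let Some(Some(target)) = self.archetypes[from].add_bundle_edges.get(bundle_id) {
            return *target;
        }
        // Slow path: allocate, merge, sort, dedup, hash.
        self.slow_lookups += 1;
        let mut components = self.archetypes[from].components.clone();
        components.extend_from_slice(bundle_components);
        components.sort_unstable();
        components.dedup();
        let target = self.get_or_insert(components);
        // Remember the result so the next identical operation takes the fast path.
        let edges = &mut self.archetypes[from].add_bundle_edges;
        if edges.len() <= bundle_id {
            edges.resize(bundle_id + 1, None);
        }
        edges[bundle_id] = Some(target);
        target
    }
}
```

After the first `add_bundle` call for a given (archetype, bundle) pair, repeated calls skip the allocation, sort, and hash entirely.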

## Stateful Queries

World queries are now stateful. This allows us to:

1. Cache archetype (and table) matches

* This resolves another issue with (naive) archetypal ECS: query performance getting worse as the number of archetypes goes up (and fragmentation occurs).

2. Cache Fetch and Filter state

* The expensive parts of fetch/filter operations (such as hashing the TypeId to find the ComponentId) now only happen once when the Query is first constructed

3. Incrementally build up state

* When new archetypes are added, we only process the new archetypes (no need to rebuild state for old archetypes)

As a result, the direct `World` query api now looks like this:

```rust
let mut query = world.query::<(&A, &mut B)>();
for (a, mut b) in query.iter_mut(&mut world) {
}
```

Requiring `World` to generate stateful queries (rather than letting the `QueryState` type be constructed separately) allows us to ensure that _all_ queries are properly initialized, along with the relevant world state (such as ComponentIds). This enables QueryState to remove branches from its operations that check for initialization status (and also enables query.iter() to take an immutable world reference because it doesn't need to initialize anything in world).

However in systems, this is a non-breaking change. State management is done internally by the relevant SystemParam.
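The incremental caching of archetype matches described in this section might look roughly like the following. It is a simplified model with hypothetical names: archetypes are reduced to a list of component ids, and the query only supports "must contain all of these components".

```rust
type ComponentId = usize;

pub struct Archetype {
    pub components: Vec<ComponentId>,
}

/// Simplified query state that remembers which archetypes match.
pub struct QueryState {
    required: Vec<ComponentId>,
    /// Number of archetypes that have already been examined.
    archetype_generation: usize,
    /// Indices of matching archetypes, reused on every iteration.
    pub matched_archetypes: Vec<usize>,
}

impl QueryState {
    pub fn new(required: Vec<ComponentId>) -> Self {
        QueryState { required, archetype_generation: 0, matched_archetypes: Vec::new() }
    }

    /// Examines only archetypes created since the previous call and
    /// returns how many were examined.
    pub fn update_archetypes(&mut self, archetypes: &[Archetype]) -> usize {
        let new_archetypes = &archetypes[self.archetype_generation..];
        for (offset, archetype) in new_archetypes.iter().enumerate() {
            let matches = self
                .required
                .iter()
                .all(|component| archetype.components.contains(component));
            if matches {
                self.matched_archetypes.push(self.archetype_generation + offset);
            }
        }
        self.archetype_generation = archetypes.len();
        new_archetypes.len()
    }
}
```

Iteration then walks `matched_archetypes` directly, so unmatched archetypes cost nothing after they have been examined once.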

## Stateful SystemParams

Like Queries, `SystemParams` now also cache state. For example, `Query` system params store the "stateful query" state mentioned above. Commands store their internal `CommandQueue`. This means you can now safely use as many separate `Commands` parameters in your system as you want. `Local<T>` system params store their `T` value in their state (instead of in Resources).

SystemParam state also enabled a significant slim-down of SystemState. It is much nicer to look at now.

Per-SystemParam state naturally insulates us from an "aliased mut" class of errors we have hit in the past (ex: using multiple `Commands` system params).

(credit goes to @DJMcNab for the initial idea and draft pr here #1364)

## Configurable SystemParams

@DJMcNab also had the great idea to make SystemParams configurable. This allows users to provide some initial configuration / values for system parameters (when possible). Most SystemParams have no config (the config type is `()`), but the `Local<T>` param now supports user-provided parameters:

```rust
fn foo(value: Local<usize>) {
}

app.add_system(foo.system().config(|c| c.0 = Some(10)));
```

## Uber Fast "for_each" Query Iterators

Developers now have the choice to use a fast "for_each" iterator, which yields ~1.5-3x iteration speed improvements for "fragmented iteration", and minor ~1.2x iteration speed improvements for unfragmented iteration.

```rust
// `mut` is required because both `for_each_mut` and `iter_mut` borrow the query mutably
fn system(mut query: Query<(&A, &mut B)>) {
    // you now have the option to do this for a speed boost
    query.for_each_mut(|(a, mut b)| {
    });

    // however normal iterators are still available
    for (a, mut b) in query.iter_mut() {
    }
}
```

I think in most cases we should continue to encourage "normal" iterators as they are more flexible and more "rust idiomatic". But when that extra "oomph" is needed, it makes sense to use `for_each`.

We should also consider using `for_each` for internal bevy systems to give our users a nice speed boost (but that should be a separate pr).

## Component Metadata

`World` now has a `Components` collection, which is accessible via `world.components()`. This stores mappings from `ComponentId` to `ComponentInfo`, as well as `TypeId` to `ComponentId` mappings (where relevant). `ComponentInfo` stores information about the component, such as ComponentId, TypeId, memory layout, send-ness (currently limited to resources), and storage type.

## Significantly Cheaper `Access<T>`

We used to use `TypeAccess<TypeId>` to manage read/write component/archetype-component access. This was expensive because TypeIds must be hashed and compared individually. The parallel executor got around this by "condensing" type ids into bitset-backed access types. This worked, but it had to be re-generated from the `TypeAccess<TypeId>` sources every time archetypes changed.

This pr removes TypeAccess in favor of faster bitset access everywhere. We can do this thanks to the move to densely packed `ComponentId`s and `ArchetypeComponentId`s.
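To show why densely packed ids make this cheap, here is a small sketch of a bitset-backed access set. It is hand-rolled on `Vec<u64>` for self-containment and is only an approximation of the idea; the struct and method names are assumptions, not the `Access<T>` API from this PR.

```rust
/// Bitset-backed read/write access over densely packed ids.
#[derive(Default, Clone)]
pub struct Access {
    reads: Vec<u64>,
    writes: Vec<u64>,
}

/// Sets bit `index`, growing the bitset as needed.
fn set_bit(bits: &mut Vec<u64>, index: usize) {
    let word = index / 64;
    if bits.len() <= word {
        bits.resize(word + 1, 0);
    }
    bits[word] |= 1u64 << (index % 64);
}

/// True if the two bitsets share at least one set bit.
fn intersects(a: &[u64], b: &[u64]) -> bool {
    a.iter().zip(b).any(|(x, y)| (x & y) != 0)
}

impl Access {
    pub fn add_read(&mut self, id: usize) {
        set_bit(&mut self.reads, id);
    }

    pub fn add_write(&mut self, id: usize) {
        set_bit(&mut self.writes, id);
    }

    /// Compatible means neither side writes something the other reads or writes.
    /// Each check is a handful of word-wide ANDs with no hashing involved.
    pub fn is_compatible(&self, other: &Access) -> bool {
        !intersects(&self.writes, &other.writes)
            && !intersects(&self.writes, &other.reads)
            && !intersects(&self.reads, &other.writes)
    }
}
```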

## Merged Resources into World

Resources had a lot of redundant functionality with Components. They stored typed data, they had access control, they had unique ids, they were queryable via SystemParams, etc. In fact the _only_ major difference between them was that they were unique (and didn't correlate to an entity).

Separate resources also had the downside of requiring a separate set of access controls, which meant the parallel executor needed to compare more bitsets per system and manage more state.

I initially got the "separate resources" idea from `legion`. I think that design was motivated by the fact that it made the direct world query/resource lifetime interactions more manageable. It certainly made our lives easier when using Resources alongside hecs/bevy_ecs. However we already have a construct for safely and ergonomically managing in-world lifetimes: systems (which use `Access<T>` internally).

This pr merges Resources into World:

```rust
world.insert_resource(1);
world.insert_resource(2.0);
let a = world.get_resource::<i32>().unwrap();
let mut b = world.get_resource_mut::<f64>().unwrap();
*b = 3.0;
```

Resources are now just a special kind of component. They have their own ComponentIds (and their own resource TypeId->ComponentId scope, so they don't conflict with components of the same type). They are stored in a special "resource archetype", which stores components inside the archetype using a new `unique_components` sparse set (note that this sparse set could later be used to implement Tags). This allows us to keep the code size small by reusing existing data structures (namely Column, Archetype, ComponentFlags, and ComponentInfo). This allows the executor to use a single `Access<ArchetypeComponentId>` per system. It should also make scripting language integration easier.

_But_ this merge did create problems for people directly interacting with `World`. What if you need mutable access to multiple resources at the same time? `world.get_resource_mut()` borrows World mutably!

## WorldCell

WorldCell applies the `Access<ArchetypeComponentId>` concept to direct world access:

```rust
let world_cell = world.cell();
let a = world_cell.get_resource_mut::<i32>().unwrap();
let b = world_cell.get_resource_mut::<f64>().unwrap();
```

This adds cheap runtime checks (a sparse set lookup of `ArchetypeComponentId` and a counter) to ensure that world accesses do not conflict with each other. Each operation returns a `WorldBorrow<'w, T>` or `WorldBorrowMut<'w, T>` wrapper type, which will release the relevant ArchetypeComponentId resources when dropped.

World caches the access sparse set (and only one cell can exist at a time), so `world.cell()` is a cheap operation.

WorldCell does _not_ use atomic operations. It is non-send, does a mutable borrow of world to prevent other accesses, and uses a simple `Rc<RefCell<ArchetypeComponentAccess>>` wrapper in each WorldBorrow pointer.

The api is currently limited to resource access, but it can and should be extended to queries / entity component access.
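The runtime check described above (a per-id counter behind an `Rc<RefCell<...>>`, released when the borrow wrapper is dropped) can be modeled in isolation. The sketch below tracks access only and carries no data; `AccessCell`, `BorrowGuard`, and the choice to report a conflict by returning `None` are all assumptions made for this illustration.

```rust
use std::cell::RefCell;
use std::rc::Rc;

/// Per-id borrow counters: 0 = free, n > 0 = n readers, -1 = one writer.
struct AccessCounters {
    counts: Vec<isize>,
}

/// Released on drop, like the borrow wrappers described above.
pub struct BorrowGuard {
    id: usize,
    write: bool,
    access: Rc<RefCell<AccessCounters>>,
}

impl Drop for BorrowGuard {
    fn drop(&mut self) {
        let mut access = self.access.borrow_mut();
        if self.write {
            access.counts[self.id] = 0;
        } else {
            access.counts[self.id] -= 1;
        }
    }
}

pub struct AccessCell {
    access: Rc<RefCell<AccessCounters>>,
}

impl AccessCell {
    pub fn new(id_count: usize) -> Self {
        AccessCell {
            access: Rc::new(RefCell::new(AccessCounters { counts: vec![0; id_count] })),
        }
    }

    /// Shared access succeeds unless a writer is active.
    pub fn read(&self, id: usize) -> Option<BorrowGuard> {
        let mut access = self.access.borrow_mut();
        if access.counts[id] < 0 {
            return None;
        }
        access.counts[id] += 1;
        Some(BorrowGuard { id, write: false, access: Rc::clone(&self.access) })
    }

    /// Exclusive access succeeds only if nobody else holds the id.
    pub fn write(&self, id: usize) -> Option<BorrowGuard> {
        let mut access = self.access.borrow_mut();
        if access.counts[id] != 0 {
            return None;
        }
        access.counts[id] = -1;
        Some(BorrowGuard { id, write: true, access: Rc::clone(&self.access) })
    }
}
```

Since `Rc` and `RefCell` are not thread-safe, a type built this way is automatically non-send and needs no atomic operations.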

## Resource Scopes

WorldCell does not yet support component queries, and even when it does there are sometimes legitimate reasons to want a mutable world ref _and_ a mutable resource ref (ex: bevy_render and bevy_scene both need this). In these cases we could always drop down to the unsafe `world.get_resource_unchecked_mut()`, but that is not ideal!

Instead developers can use a "resource scope"

```rust
world.resource_scope(|world: &mut World, a: &mut A| {
})
```

This temporarily removes the `A` resource from `World`, provides mutable pointers to both, and re-adds A to World when finished. Thanks to the move to ComponentIds/sparse sets, this is a cheap operation.

If multiple resources are required, scopes can be nested. We could also consider adding a "resource tuple" to the api if this pattern becomes common and the boilerplate gets nasty.
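The "remove, lend out, re-insert" mechanism can be demonstrated with a toy world. This sketch stores resources in a `HashMap<TypeId, Box<dyn Any>>`, which is an assumption made to keep it self-contained; the PR itself uses ComponentIds and sparse sets, and the real signature may differ.

```rust
use std::any::{Any, TypeId};
use std::collections::HashMap;

/// Toy world holding at most one value per type.
#[derive(Default)]
pub struct World {
    resources: HashMap<TypeId, Box<dyn Any>>,
}

impl World {
    pub fn insert_resource<T: Any>(&mut self, value: T) {
        self.resources.insert(TypeId::of::<T>(), Box::new(value));
    }

    pub fn get_resource<T: Any>(&self) -> Option<&T> {
        self.resources.get(&TypeId::of::<T>())?.downcast_ref::<T>()
    }

    pub fn get_resource_mut<T: Any>(&mut self) -> Option<&mut T> {
        self.resources.get_mut(&TypeId::of::<T>())?.downcast_mut::<T>()
    }

    /// Temporarily removes `T`, lends out `&mut World` and `&mut T`
    /// side by side, then puts `T` back.
    pub fn resource_scope<T: Any, R>(
        &mut self,
        f: impl FnOnce(&mut World, &mut T) -> R,
    ) -> R {
        let boxed = self
            .resources
            .remove(&TypeId::of::<T>())
            .expect("resource does not exist");
        // The key is `TypeId::of::<T>()`, so the downcast cannot fail.
        let mut value: Box<T> = boxed.downcast::<T>().unwrap_or_else(|_| unreachable!());
        let result = f(self, &mut *value);
        self.resources.insert(TypeId::of::<T>(), value);
        result
    }
}
```

While the closure runs, the scoped resource is absent from the world, which is what makes handing out both mutable references sound. Nesting two scopes gives mutable access to two resources plus the world.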

## Query Conflicts Use ComponentId Instead of ArchetypeComponentId

For safety reasons, systems cannot contain queries that conflict with each other without wrapping them in a QuerySet. On bevy `main`, we use ArchetypeComponentIds to determine conflicts. This is nice because it can take into account filters:

```rust
// these queries will never conflict due to their filters
fn filter_system(a: Query<&mut A, With<B>>, b: Query<&mut A, Without<B>>) {
}
```

But it also has a significant downside:

```rust
// these queries will not conflict _until_ an entity with A, B, and C is spawned
fn maybe_conflicts_system(a: Query<(&mut A, &C)>, b: Query<(&mut A, &B)>) {
}
```

The system above will panic at runtime if an entity with A, B, and C is spawned. This makes it hard to trust that your game logic will run without crashing.

In this pr, I switched to using `ComponentId` instead. This _is_ more constraining. `maybe_conflicts_system` will now always fail, but it will do it consistently at startup. Naively, it would also _disallow_ `filter_system`, which would be a significant downgrade in usability. Bevy has a number of internal systems that rely on disjoint queries and I expect it to be a common pattern in userspace.

To resolve this, I added a new `FilteredAccess<T>` type, which wraps `Access<T>` and adds with/without filters. If two `FilteredAccess` have with/without values that prove they are disjoint, they will no longer conflict.
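A rough model of that disjointness proof is sketched below. It uses `HashSet`s instead of bitsets for brevity, and it assumes that reading or writing a component also counts as a "with" constraint on it; both choices, and all names, are assumptions for illustration rather than the type added in this PR.

```rust
use std::collections::HashSet;

type ComponentId = usize;

/// Component access plus the with/without filters of a query.
#[derive(Default)]
pub struct FilteredAccess {
    reads: HashSet<ComponentId>,
    writes: HashSet<ComponentId>,
    with: HashSet<ComponentId>,
    without: HashSet<ComponentId>,
}

impl FilteredAccess {
    /// Reading a component implies the entity must have it.
    pub fn read(mut self, id: ComponentId) -> Self {
        self.reads.insert(id);
        self.with.insert(id);
        self
    }

    /// Writing a component implies the entity must have it.
    pub fn write(mut self, id: ComponentId) -> Self {
        self.writes.insert(id);
        self.with.insert(id);
        self
    }

    pub fn with(mut self, id: ComponentId) -> Self {
        self.with.insert(id);
        self
    }

    pub fn without(mut self, id: ComponentId) -> Self {
        self.without.insert(id);
        self
    }

    /// Plain read/write conflict check, ignoring filters.
    fn access_conflicts(&self, other: &FilteredAccess) -> bool {
        !self.writes.is_disjoint(&other.writes)
            || !self.writes.is_disjoint(&other.reads)
            || !self.reads.is_disjoint(&other.writes)
    }

    /// If one side requires a component the other excludes,
    /// no entity can ever match both queries.
    fn provably_disjoint(&self, other: &FilteredAccess) -> bool {
        !self.with.is_disjoint(&other.without) || !self.without.is_disjoint(&other.with)
    }

    pub fn is_compatible(&self, other: &FilteredAccess) -> bool {
        !self.access_conflicts(other) || self.provably_disjoint(other)
    }
}
```

With this model, two queries that both write `A` are accepted when one has `With<B>` and the other `Without<B>`, and rejected at startup when nothing proves them disjoint, regardless of which entities exist.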

## EntityRef / EntityMut

World entity operations on `main` require that the user passes in an `entity` id to each operation:

```rust
let entity = world.spawn((A, )); // create a new entity with A
world.get::<A>(entity);
world.insert(entity, (B, C));
world.insert_one(entity, D);
```

This means that each operation needs to look up the entity location / verify its validity. The initial spawn operation also requires a Bundle as input. This can be awkward when no components are required (or one component is required).

These operations have been replaced by `EntityRef` and `EntityMut`, which are "builder-style" wrappers around world that provide read and read/write operations on a single, pre-validated entity:

```rust
// spawn now takes no inputs and returns an EntityMut
let entity = world.spawn()
    .insert(A) // insert a single component into the entity
    .insert_bundle((B, C)) // insert a bundle of components into the entity
    .id(); // id returns the Entity id

// Returns EntityMut (or panics if the entity does not exist)
world.entity_mut(entity)
    .insert(D)
    .insert_bundle(SomeBundle::default());

{
    // returns EntityRef (or panics if the entity does not exist)
    let d = world.entity(entity)
        .get::<D>() // gets the D component
        .unwrap();
    // world.get still exists for ergonomics
    let d = world.get::<D>(entity).unwrap();
}

// These variants return Options if you want to check existence instead of panicking
world.get_entity_mut(entity)
    .unwrap()
    .insert(E);

if let Some(entity_ref) = world.get_entity(entity) {
    let d = entity_ref.get::<D>().unwrap();
}
```

This _does not_ affect the current Commands api or terminology. I think that should be a separate conversation as that is a much larger breaking change.

## Safety Improvements

* Entity reservation in Commands uses a normal world borrow instead of an unsafe transmute

* QuerySets no longer transmute lifetimes

* Made traits "unsafe" when implementing a trait incorrectly could cause unsafety

* More thorough safety docs

## RemovedComponents SystemParam

The old approach to querying removed components, `query.removed::<T>()`, was confusing because it had no connection to the query itself. I replaced it with the following, which is both clearer and allows us to cache the ComponentId mapping in the SystemParamState:

```rust
fn system(removed: RemovedComponents<T>) {
    for entity in removed.iter() {
    }
}
```

## Simpler Bundle implementation

Bundles are no longer responsible for sorting (or deduping) TypeInfo. They are just a simple ordered list of component types / data. This makes the implementation smaller and opens the door to an easy "nested bundle" implementation in the future (which I might even add in this pr). Duplicate detection is now done once per bundle type by World the first time a bundle is used.

## Unified WorldQuery and QueryFilter types

(don't worry they are still separate type _parameters_ in Queries .. this is a non-breaking change)

WorldQuery and QueryFilter were already basically identical apis. With the addition of `FetchState` and more storage-specific fetch methods, the overlap was even clearer (and the redundancy more painful).

QueryFilters are now just `F: WorldQuery where F::Fetch: FilterFetch`. FilterFetch requires `Fetch<Item = bool>` and adds new "short circuit" variants of fetch methods. This enables a filter tuple like `(With<A>, Without<B>, Changed<C>)` to stop evaluating the filter after the first mismatch is encountered. FilterFetch is automatically implemented for `Fetch` implementations that return bool.

This forces fetch implementations that return things like `(bool, bool, bool)` (such as the filter above) to manually implement FilterFetch and decide whether or not to short-circuit.

## More Granular Modules

World no longer globs all of the internal modules together. It now exports `core`, `system`, and `schedule` separately. I'm also considering exporting `core` submodules directly as that is still pretty "glob-ey" and unorganized (feedback welcome here).

## Remaining Draft Work (to be done in this pr)

* ~~panic on conflicting WorldQuery fetches (&A, &mut A)~~

* ~~bevy `main` and hecs both currently allow this, but we should protect against it if possible~~

* ~~batch_iter / par_iter (currently stubbed out)~~

* ~~ChangedRes~~

* ~~I skipped this while we sort out #1313. This pr should be adapted to account for whatever we land on there~~.

* ~~The `Archetypes` and `Tables` collections use hashes of sorted lists of component ids to uniquely identify each archetype/table. This hash is then used as the key in a HashMap to look up the relevant ArchetypeId or TableId. (which doesn't handle hash collisions properly)~~

* ~~It is currently unsafe to generate a Query from "World A", then use it on "World B" (despite the api claiming it is safe). We should probably close this gap. This could be done by adding a randomly generated WorldId to each world, then storing that id in each Query. They could then be compared to each other on each `query.do_thing(&world)` operation. This _does_ add an extra branch to each query operation, so I'm open to other suggestions if people have them.~~

* ~~Nested Bundles (if i find time)~~

## Potential Future Work

* Expand WorldCell to support queries.

* Consider not allocating in the empty archetype on `world.spawn()`

* ex: return something like EntityMutUninit, which turns into EntityMut after an `insert` or `insert_bundle` op

* this actually regressed performance last time I tried it, but in theory it should be faster

* Optimize SparseSet::insert (see `PERF` comment on insert)

* Replace SparseArray `Option<T>` with T::MAX to cut down on branching

* would enable cheaper get_unchecked() operations

* upstream fixedbitset optimizations

* fixedbitset could be allocation free for small block counts (store blocks in a SmallVec)

* fixedbitset could have a const constructor

* Consider implementing Tags (archetype-specific by-value data that affects archetype identity)

* ex: ArchetypeA could have `[A, B, C]` table components and `[D(1)]` "tag" component. ArchetypeB could have `[A, B, C]` table components and a `[D(2)]` tag component. The archetypes are different, despite both having D tags because the value inside D is different.

* this could potentially build on top of the `archetype.unique_components` added in this pr for resource storage.

* Consider reverting `all_tuples` proc macro in favor of the old `macro_rules` implementation

* all_tuples is more flexible and produces cleaner documentation (the macro_rules version produces weird type parameter orders due to parser constraints)

* but unfortunately all_tuples also appears to make Rust Analyzer sad/slow when working inside of `bevy_ecs` (does not affect user code)

* Consider "resource queries" and/or "mixed resource and entity component queries" as an alternative to WorldCell

* this is basically just "systems" so maybe it's not worth it

* Add more world ops

* `world.clear()`

* `world.reserve<T: Bundle>(count: usize)`

* Try using the old archetype allocation strategy (allocate new memory on resize and copy everything over). I expect this to improve batch insertion performance at the cost of unbatched performance. But that's just a guess. I'm not an allocation perf pro :)

* Adapt Commands apis for consistency with new World apis
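As an illustration of the "replace `Option<T>` with `T::MAX`" item above, here is a minimal sketch of a sparse array that reserves `u32::MAX` as its empty marker. `SentinelSparseArray` and `EMPTY` are hypothetical names, and the sketch only shows the representation; it is not the `SparseArray` from `bevy_ecs`.

```rust
/// Marker for "no value stored". `u32::MAX` is therefore not a valid entry.
const EMPTY: u32 = u32::MAX;

/// Maps a sparse index to a dense index without using `Option<u32>`.
/// Each slot is a plain `u32` (4 bytes) instead of an `Option<u32>` (8 bytes).
pub struct SentinelSparseArray {
    slots: Vec<u32>,
}

impl SentinelSparseArray {
    pub fn new() -> Self {
        Self { slots: Vec::new() }
    }

    /// Stores `dense` at `index`, growing the array with empty slots if needed.
    pub fn insert(&mut self, index: usize, dense: u32) {
        assert_ne!(dense, EMPTY, "u32::MAX is reserved as the empty marker");
        if index >= self.slots.len() {
            self.slots.resize(index + 1, EMPTY);
        }
        self.slots[index] = dense;
    }

    /// Returns the stored value, or `None` for empty or out-of-range slots.
    pub fn get(&self, index: usize) -> Option<u32> {
        match self.slots.get(index) {
            Some(&value) if value != EMPTY => Some(value),
            _ => None,
        }
    }

    /// Clears the slot and returns what was stored there, if anything.
    pub fn remove(&mut self, index: usize) -> Option<u32> {
        let slot = self.slots.get_mut(index)?;
        let old = std::mem::replace(slot, EMPTY);
        if old == EMPTY {
            None
        } else {
            Some(old)
        }
    }
}
```

The safe `get` here still branches when it converts back to `Option`; the saving suggested in the list would come from callers that already know a slot is occupied and can read the raw value directly.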

## Benchmarks

key:

* `bevy_old`: bevy `main` branch

* `bevy`: this branch

* `_foreach`: uses an optimized for_each iterator

* `_sparse`: uses sparse set storage (if unspecified assume table storage)

* `_system`: runs inside a system (if unspecified assume test happens via direct world ops)

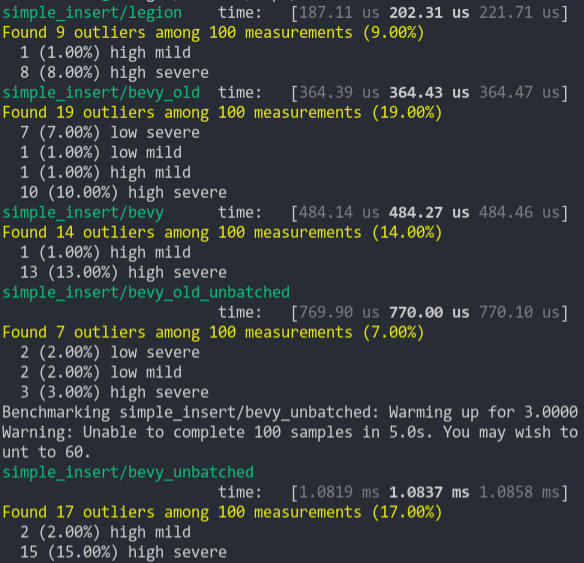

### Simple Insert (from ecs_bench_suite)

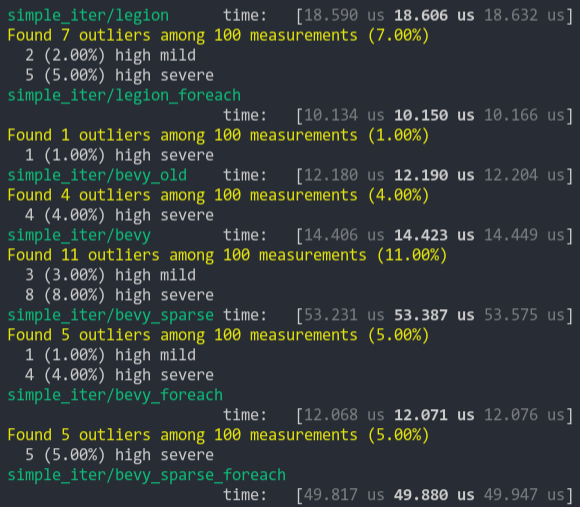

### Simple Iter (from ecs_bench_suite)

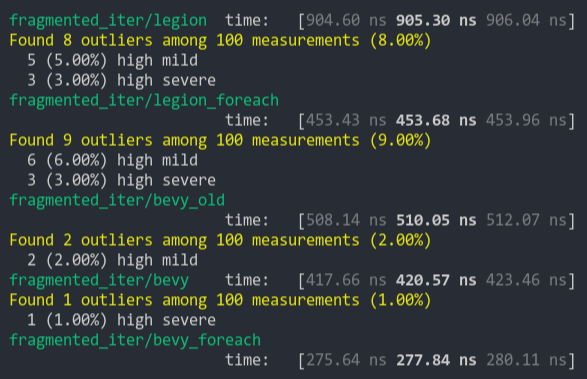

### Fragmented Iter (from ecs_bench_suite)

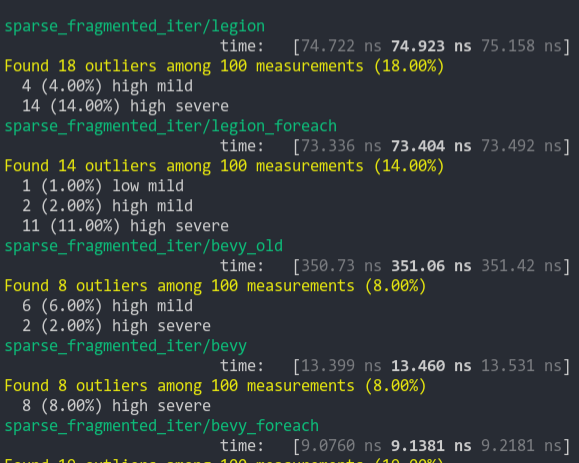

### Sparse Fragmented Iter

Iterate a query that matches 5 entities from a single matching archetype, but there are 100 unmatching archetypes

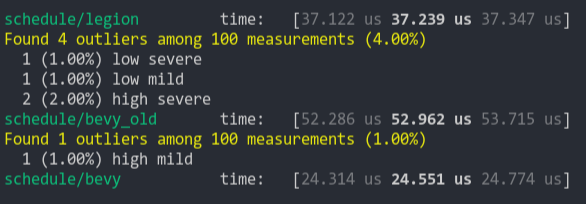

### Schedule (from ecs_bench_suite)

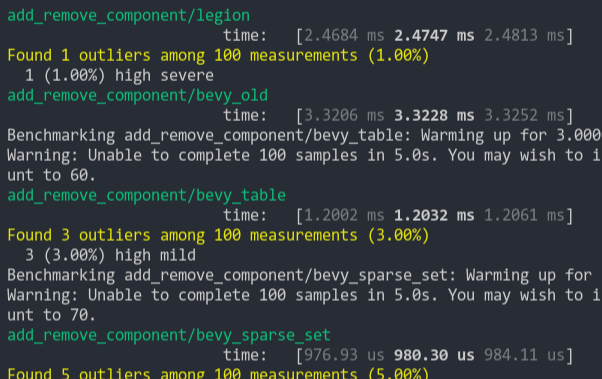

### Add Remove Component (from ecs_bench_suite)

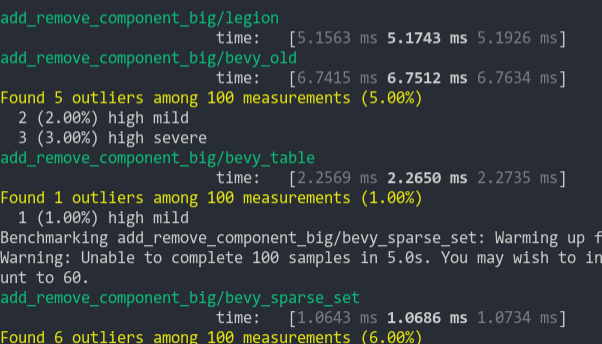

### Add Remove Component Big

Same as the test above, but each entity has 5 "large" matrix components and 1 "large" matrix component is added and removed

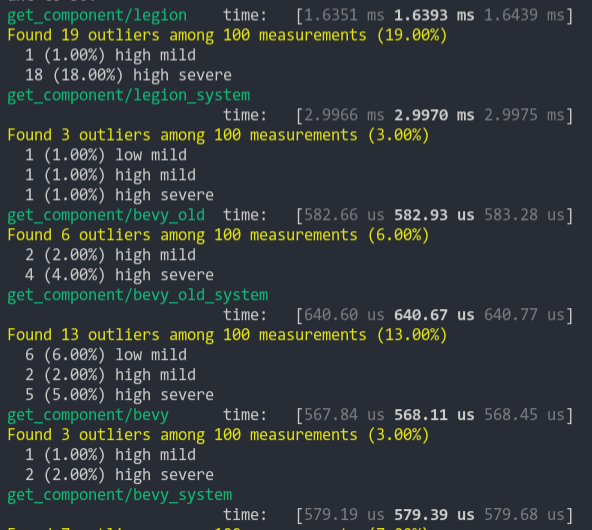

### Get Component

Looks up a single component value a large number of times

This PR implements wireframe rendering.

This is now ready as soon as #1401 gets merged.

Usage:

```rust
app
    .insert_resource(WgpuOptions {
        name: Some("3d_scene"),
        features: WgpuFeatures::NON_FILL_POLYGON_MODE,
        ..Default::default()
    }) // To enable the NON_FILL_POLYGON_MODE feature
    .add_plugin(WireframePlugin)
    .run();
```

Now we just need to add the `Wireframe` component to an entity, and it'll draw its wireframe.

We can also enable wireframe drawing globally by setting the `global` property in the `WireframeConfig` resource to `true`.

Co-authored-by: Zhixing Zhang <me@neoto.xin>

Adds the original type_name to `NodeState`, enabling plugins like [this](https://github.com/jakobhellermann/bevy_mod_debugdump).

This does increase the `NodeState` type by 16 bytes, but it is already 176 so it's not that big of an increase.

`RenderGraph` errors only give the `Uuid` of the node, so for my Graphviz dot-based visualization of the `RenderGraph` I really wanted to show the node's type name to the user. I think it makes sense to have it accessible, at least for debugging purposes.
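A minimal sketch of the idea, assuming the name is captured with `std::any::type_name` at the point where the concrete node type is still known. The `Node` trait, `PassNode`, and the reduced `NodeState` below are simplified stand-ins, not the actual `bevy_render` definitions.

```rust
use std::any::type_name;

/// Hypothetical stand-in for the render graph's node trait.
pub trait Node {}

/// Reduced version of a node's bookkeeping state.
pub struct NodeState {
    pub node: Box<dyn Node>,
    /// Name of the concrete node type, recorded before type erasure.
    pub type_name: &'static str,
}

impl NodeState {
    pub fn new<T: Node + 'static>(node: T) -> Self {
        NodeState {
            // `T` is still known here, so its name can be stored alongside
            // the boxed trait object.
            type_name: type_name::<T>(),
            node: Box::new(node),
        }
    }
}

/// Example node type used only for this sketch.
pub struct PassNode;
impl Node for PassNode {}
```

A `&'static str` is a pointer plus a length, which is where the 16 bytes on a 64-bit target come from.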

This PR is easiest to review commit by commit.

Followup on https://github.com/bevyengine/bevy/pull/1309#issuecomment-767310084

- [x] Switch from a bash script to an xtask rust workspace member.

- Results in ~30s longer CI due to compilation of the xtask itself

- Enables Bevy contributors on any platform to run `cargo ci` to run linting -- if the default available Rust is the same version as on CI, then the command should give an identical result.

- [x] Use the xtask from official CI so there's only one place to update.

- [x] Bonus: Run clippy on the _entire_ workspace (existing CI setup was missing the `--workspace` flag)

- [x] Clean up newly-exposed clippy errors

~#1388 builds on this to clean up newly discovered clippy errors -- I thought it might be nicer as a separate PR.~ Nope, merged it into this one so CI would pass.
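For readers unfamiliar with the xtask pattern, the sketch below shows the general shape of such a workspace member using only the standard library. The step list and the exact flags (other than `--workspace`, which is mentioned above) are assumptions for illustration, and `ci_steps` and `run_ci` are hypothetical names rather than the functions added in this PR.

```rust
use std::process::Command;

/// Lint steps expressed as argument lists for `cargo`.
/// The flags are illustrative; only `--workspace` comes from the PR text.
pub fn ci_steps() -> Vec<Vec<&'static str>> {
    vec![
        vec!["fmt", "--all", "--", "--check"],
        vec!["clippy", "--workspace", "--all-targets", "--", "-D", "warnings"],
    ]
}

/// Runs each step in order and stops at the first one that fails.
/// Returns `Ok(true)` only if every step exited successfully.
pub fn run_ci() -> std::io::Result<bool> {
    for args in ci_steps() {
        let status = Command::new("cargo").args(&args).status()?;
        if !status.success() {
            return Ok(false);
        }
    }
    Ok(true)
}
```

A binary in the xtask crate would call `run_ci()` from its `main` and exit non-zero on failure. Because the same binary runs locally and on CI, the lint configuration lives in one place.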

Co-authored-by: Carter Anderson <mcanders1@gmail.com>

In some cases, such as driving a full-screen fragment shader, it is convenient not to have to create and upload a mesh, because the necessary vertices are simple to synthesize in the vertex shader. Bevy's existing pipeline compiler assumes that there will always be a vertex buffer. This PR changes that so that a vertex buffer descriptor is only added to the pipeline layout if the shader has vertex attributes.
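The rule can be sketched with simplified types. `VertexAttribute`, `VertexBufferLayout`, `PipelineLayout`, and `build_layout` below are hypothetical stand-ins chosen for this example and do not mirror the real pipeline compiler's types.

```rust
/// Hypothetical, simplified description of one shader vertex input.
#[derive(Debug, Clone, PartialEq)]
pub struct VertexAttribute {
    pub name: String,
    pub size: u64, // size in bytes
}

/// Hypothetical description of a single vertex buffer.
#[derive(Debug, Clone, PartialEq)]
pub struct VertexBufferLayout {
    pub stride: u64,
    pub attributes: Vec<VertexAttribute>,
}

/// Hypothetical pipeline layout holding zero or more vertex buffers.
#[derive(Debug, Default, PartialEq)]
pub struct PipelineLayout {
    pub vertex_buffers: Vec<VertexBufferLayout>,
}

/// Adds a vertex buffer description only when the shader declares
/// at least one vertex attribute.
pub fn build_layout(shader_attributes: &[VertexAttribute]) -> PipelineLayout {
    let mut layout = PipelineLayout::default();
    if !shader_attributes.is_empty() {
        // Tightly packed attributes: the stride is the sum of their sizes.
        let stride = shader_attributes.iter().map(|a| a.size).sum();
        layout.vertex_buffers.push(VertexBufferLayout {
            stride,
            attributes: shader_attributes.to_vec(),
        });
    }
    layout
}
```

With an empty attribute list the layout has no vertex buffers, which matches the full-screen shader case where vertices are synthesized in the vertex shader.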

The `Texture::convert` function was previously compiled only when one of the image format features (`png`, `jpeg`, etc.) was enabled. The `bevy_sprite` crate needs this function, though, which led to compilation errors when using `cargo check --no-default-features --features render`.

Now the `convert` function is no longer feature-gated, and the `texture_to_image` and `image_to_texture` utility functions are in an unconditionally compiled module.

* use `length_squared` for visible entities

* ortho projection 2d/3d different depth calculation

* use ScalingMode::FixedVertical for 3d ortho

* new example: 3d orthographic

* add normalized orthographic projection

* custom scale for ScaledOrthographicProjection

* allow choosing base axis for ScaledOrthographicProjection

* cargo fmt

* add general (scaled) orthographic camera bundle

FIXME: does the same "far" trick from Camera2DBundle make any sense here?

* fixes

* camera bundles: rename and new ortho constructors

* unify orthographic projections

* give PerspectiveCameraBundle constructors like those of OrthographicCameraBundle

* update examples with new camera bundle syntax

* rename CameraUiBundle to UiCameraBundle

* update examples

* ScalingMode::None

* remove extra blank lines

* sane default bounds for orthographic projection

* fix alien_cake_addict example

* reorder ScalingMode enum variants

* ios example fix

* Remove AHashExt

`Hash*::new()` offers little benefit over `Hash*::default()`, yet it requires extra code that has to be duplicated for every `Hash*` type in bevy_utils. It may also slightly increase compile times.

* Add StableHash* to bevy_utils

* Use StableHashMap instead of HashMap + BTreeSet for diagnostics

This significantly reduces the release-mode compile time of `bevy_diagnostic`:

```
Benchmark #1: touch crates/bevy_diagnostic/src/lib.rs && cargo build --release -p bevy_diagnostic -j1
  Time (mean ± σ): 3.645 s ± 0.009 s [User: 3.551 s, System: 0.094 s]
  Range (min … max): 3.632 s … 3.658 s 20 runs
```

```
Benchmark #1: touch crates/bevy_diagnostic/src/lib.rs && cargo build --release -p bevy_diagnostic -j1
  Time (mean ± σ): 2.938 s ± 0.012 s [User: 2.850 s, System: 0.090 s]
  Range (min … max): 2.919 s … 2.969 s 20 runs
```
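To illustrate the `new()` versus `default()` point and the idea of a map with a fixed hasher state, here is a sketch built only on the standard library. The alias below is an assumption for this example: it uses `DefaultHasher` as a stand-in and is not the definition of `StableHashMap` in bevy_utils, and `sample` is a hypothetical helper.

```rust
use std::collections::hash_map::DefaultHasher;
use std::collections::HashMap;
use std::hash::BuildHasherDefault;

/// Stand-in alias: a `HashMap` whose hasher always starts from the same state,
/// unlike the randomly seeded `RandomState` that std uses by default.
pub type StableHashMap<K, V> = HashMap<K, V, BuildHasherDefault<DefaultHasher>>;

/// Builds a small map and returns its entries in iteration order.
pub fn sample() -> Vec<(&'static str, u32)> {
    // `HashMap::new()` is only defined for the default `RandomState` hasher,
    // so a map with any other hasher is created with `default()`.
    let mut map: StableHashMap<&'static str, u32> = StableHashMap::default();
    map.insert("fps", 60);
    map.insert("frame_time", 16);
    map.insert("entity_count", 1000);
    map.into_iter().collect()
}
```

Because the hasher state is fixed, two maps built with the same insertions iterate in the same order, so `sample()` returns the same sequence every time it is called. That is the property that lets a single map stand in for a `HashMap` plus a `BTreeSet` when a stable order is all that is needed.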