# Objective

- `apply_system_buffers` is an unhelpful name: it introduces a new internal-only concept

- this is particularly rough for beginners as reasoning about how commands work is a critical stumbling block

## Solution

- rename `apply_system_buffers` to the more descriptive `apply_deferred`

- rename related fields, arguments and methods in the internals of bevy_ecs for consistency

- update the docs

## Changelog

`apply_system_buffers` has been renamed to `apply_deferred`, to more

clearly communicate its intent and relation to `Deferred` system

parameters like `Commands`.

## Migration Guide

- `apply_system_buffers` has been renamed to `apply_deferred`

- the `apply_system_buffers` method on the `System` trait has been

renamed to `apply_deferred`

- the `is_apply_system_buffers` function has been replaced by

`is_apply_deferred`

- `Executor::set_apply_final_buffers` is now

`Executor::set_apply_final_deferred`

- `Schedule::apply_system_buffers` is now `Schedule::apply_deferred`
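To make the idea behind the rename concrete, here is a minimal std-only sketch of deferred application. It is a toy model and not Bevy's implementation: `ToyWorld`, `ToyCommands`, and the free function `apply_deferred` below are hypothetical stand-ins chosen for illustration.

```rust
/// Toy stand-in for a world: just a list of entity names.
#[derive(Default)]
struct ToyWorld {
    entities: Vec<String>,
}

/// Toy stand-in for a deferred system parameter such as `Commands`:
/// mutations are queued instead of being applied immediately.
#[derive(Default)]
struct ToyCommands {
    queue: Vec<Box<dyn FnOnce(&mut ToyWorld)>>,
}

impl ToyCommands {
    /// Queue a spawn; the world is not touched yet.
    fn spawn(&mut self, name: &str) {
        let name = name.to_owned();
        self.queue
            .push(Box::new(move |world: &mut ToyWorld| world.entities.push(name)));
    }
}

/// Hypothetical sync point: drain the queue and apply each mutation in order.
fn apply_deferred(commands: &mut ToyCommands, world: &mut ToyWorld) {
    for command in commands.queue.drain(..) {
        command(world);
    }
}
```

In this model, a spawn only becomes visible in `ToyWorld` once `apply_deferred` has run, which is the behavior the new name is meant to communicate.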

---------

Co-authored-by: JoJoJet <21144246+JoJoJet@users.noreply.github.com>

# Objective

- If I understand correctly, forward points in `direction`, so the

negative of `direction` should be back.

## Migration Guide

- `Transform::look_to` now falls back to `Vec3::NEG_Z` instead of `Vec3::Z` when `direction.try_normalize()` fails (for example, for a zero-length `direction`)
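The fallback can be sketched without any math crate. This is an illustrative model only: the `Vec3` alias and the helpers `try_normalize`, `forward_or_default`, and `back_axis` are hypothetical and merely mirror the names used in the text.

```rust
type Vec3 = [f32; 3];

const NEG_Z: Vec3 = [0.0, 0.0, -1.0];

/// Returns the unit vector for `v`, or `None` when its length is zero or not finite.
fn try_normalize(v: Vec3) -> Option<Vec3> {
    let len = (v[0] * v[0] + v[1] * v[1] + v[2] * v[2]).sqrt();
    if len.is_finite() && len > 0.0 {
        Some([v[0] / len, v[1] / len, v[2] / len])
    } else {
        None
    }
}

/// Forward direction, falling back to -Z when `direction` is degenerate.
fn forward_or_default(direction: Vec3) -> Vec3 {
    try_normalize(direction).unwrap_or(NEG_Z)
}

/// "Back" is the negative of forward, so the degenerate case yields +Z.
fn back_axis(direction: Vec3) -> Vec3 {
    let f = forward_or_default(direction);
    [-f[0], -f[1], -f[2]]
}
```

With this fallback, a degenerate `direction` leaves forward pointing along -Z, which matches the convention that forward is the negative of back.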

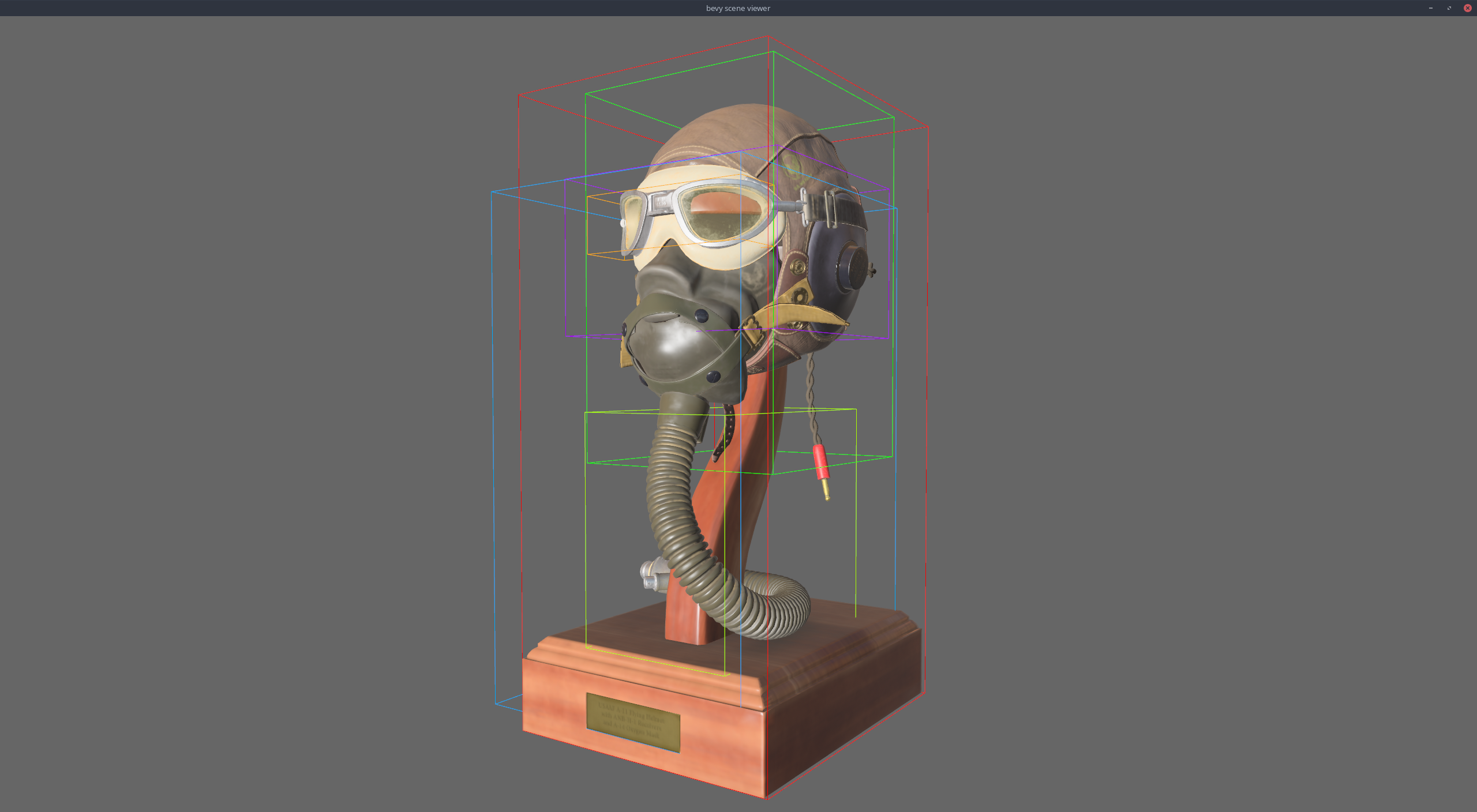

# Objective

Add a bounding box gizmo

## Changes

- Added the `AabbGizmo` component that will draw the `Aabb` component on

that entity.

- Added an option to draw all bounding boxes in a scene on the

`GizmoConfig` resource.

- Added `TransformPoint` trait to generalize over the point

transformation methods on various transform types (e.g `Transform` and

`GlobalTransform`).

- Changed the `Gizmos::cuboid` method to accept an `impl TransformPoint`

instead of separate translation, rotation, and scale.
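As a rough illustration of why a shared trait helps here, the sketch below generalizes point transformation over two toy transform types. Everything in it is hypothetical: the trait shape, `ToyTransform`, `ToyGlobalTransform`, and `cuboid_corner` are stand-ins, and rotation is omitted from the local transform for brevity.

```rust
type Vec3 = [f32; 3];

/// Hypothetical stand-in for a trait that abstracts over transform types.
trait TransformPoint {
    fn transform_point(&self, point: Vec3) -> Vec3;
}

/// Toy local transform: scale, then translate.
struct ToyTransform {
    translation: Vec3,
    scale: Vec3,
}

/// Toy global transform: a 3x3 matrix (stored as rows) plus a translation.
struct ToyGlobalTransform {
    matrix: [Vec3; 3],
    translation: Vec3,
}

impl TransformPoint for ToyTransform {
    fn transform_point(&self, p: Vec3) -> Vec3 {
        [
            p[0] * self.scale[0] + self.translation[0],
            p[1] * self.scale[1] + self.translation[1],
            p[2] * self.scale[2] + self.translation[2],
        ]
    }
}

impl TransformPoint for ToyGlobalTransform {
    fn transform_point(&self, p: Vec3) -> Vec3 {
        // Dot product of one matrix row with the point.
        let row = |r: Vec3| r[0] * p[0] + r[1] * p[1] + r[2] * p[2];
        [
            row(self.matrix[0]) + self.translation[0],
            row(self.matrix[1]) + self.translation[1],
            row(self.matrix[2]) + self.translation[2],
        ]
    }
}

/// Generic consumer: maps one corner of a unit cuboid into world space.
fn cuboid_corner(transform: &impl TransformPoint) -> Vec3 {
    transform.transform_point([0.5, 0.5, 0.5])
}
```

A caller can pass either type to `cuboid_corner` without first decomposing it into translation, rotation, and scale.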

Links in the api docs are nice. I noticed that there were several places

where structs / functions and other things were referenced in the docs,

but weren't linked. I added the links where possible / logical.

---------

Co-authored-by: Alice Cecile <alice.i.cecile@gmail.com>

Co-authored-by: François <mockersf@gmail.com>

# Objective

- Fix #7263

This has nothing to do with #7024. This is for the case where the

user opted to **not** keep the same global transform on update.

## Solution

- Add a `RemovedComponents<Parent>` to `propagate_transforms`

- Add a `RemovedComponents<Parent>` and `Local<Vec<Entity>>` to `sync_simple_transforms`

- Add test to make sure all of this works.

### Performance note

This should only incur a cost in cases where a parent is removed. The costs are: a minimal overhead (one lookup in the `removed_components` sparse set) per root entity without children whose transform didn't change; a `Vec` the size of the largest number of entities that had their `Parent` component removed in a single frame; and a binary search on that `Vec` per root entity.

It could slow down considerably in situations where a lot of entities are orphaned consistently during every frame, since `sync_simple_transforms` is not parallel. But in this situation, it is likely that the overhead of archetype updates overwhelms everything.
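The bookkeeping described in this note can be sketched as follows. This is a simplified model under stated assumptions: entities are plain `u32` ids, and `collect_orphans` and `needs_sync` are hypothetical helpers rather than the actual system code.

```rust
/// Collect the ids of entities orphaned this frame into `orphaned`, reusing
/// its allocation, and sort them so lookups can use binary search.
fn collect_orphans(removed_parents: impl IntoIterator<Item = u32>, orphaned: &mut Vec<u32>) {
    orphaned.clear();
    orphaned.extend(removed_parents);
    orphaned.sort_unstable();
}

/// A root entity needs its global transform resynced if its local transform
/// changed or if it lost its parent this frame.
fn needs_sync(entity: u32, transform_changed: bool, orphaned: &[u32]) -> bool {
    transform_changed || orphaned.binary_search(&entity).is_ok()
}
```

Because the buffer is cleared and reused, its capacity only grows to the largest number of orphans seen in a single frame.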

---

## Changelog

- Fix the `GlobalTransform` not getting updated when `Parent` is removed

## Migration Guide

- If you called `bevy_transform::systems::sync_simple_transforms` or `bevy_transform::systems::propagate_transforms` directly (they are not re-exported by bevy), you need to account for the additional `RemovedComponents<Parent>` parameter.

---------

Co-authored-by: vyb <vyb@users.noreply.github.com>

Co-authored-by: JoJoJet <21144246+JoJoJet@users.noreply.github.com>

# Objective

The clippy lint `type_complexity` is known not to play well with bevy.

It frequently triggers when writing complex queries, and taking the

lint's advice of using a type alias almost always just obfuscates the

code with no benefit. Because of this, this lint is currently ignored in

CI, but unfortunately it still shows up when viewing bevy code in an

IDE.

As someone who's made a fair amount of pull requests to this repo, I

will say that this issue has been a consistent thorn in my side. Since

bevy code is filled with spurious, ignorable warnings, it can be very

difficult to spot the *real* warnings that must be fixed -- most of the

time I just ignore all warnings, only to later find out that one of them

was real after I'm done when CI runs.

## Solution

Suppress this lint in all bevy crates. This was previously attempted in

#7050, but the review process ended up making it more complicated than

it needs to be and landed on a subpar solution.

The discussion in https://github.com/rust-lang/rust-clippy/pull/10571

explores some better long-term solutions to this problem. Since there is

no timeline on when these solutions may land, we should resolve this

issue in the meantime by locally suppressing these lints.

### Unresolved issues

Currently, these lints are not suppressed in our examples, since that

would require suppressing the lint in every single source file. They are

still ignored in CI.

# Objective

Documentation should no longer be using pre-stageless terminology to

avoid confusion.

## Solution

- update all docs referring to stages to instead refer to sets/schedules

where appropriate

- also mention `apply_system_buffers` for anything system-buffer-related

that previously referred to buffers being applied "at the end of a

stage"

Alternative to #7804

Allows other instances of the `sync_simple_transforms` and `propagate_transforms` systems to be added.

Co-authored-by: devil-ira <justthecooldude@gmail.com>

# Objective

Closes #7573

- Make `StartupSet` a base set

## Solution

- Add `#[system_set(base)]` to the enum declaration

- Replace `.in_set(StartupSet::...)` with `.in_base_set(StartupSet::...)`

**Note**: I don't really know what I'm doing and what exactly the difference between base and non-base sets are. I mostly opened this PR based on discussion in Discord. I also don't really know how to test that I didn't break everything. Your reviews are appreciated!

---

## Changelog

- `StartupSet` is now a base set

## Migration Guide

`StartupSet` is now a base set. This means that you have to use `.in_base_set` instead of `.in_set`:

### Before

```rs

app.add_system(foo.in_set(StartupSet::PreStartup))

```

### After

```rs

app.add_system(foo.in_base_set(StartupSet::PreStartup))

```

# Objective

We have a few old system labels that are now system sets but are still named or documented as labels. Documentation also generally mentioned system labels in some places.

## Solution

- Clean up naming and documentation regarding system sets

## Migration Guide

`PrepareAssetLabel` is now called `PrepareAssetSet`

# Objective

NOTE: This depends on #7267 and should not be merged until #7267 is merged. If you are reviewing this before that is merged, I highly recommend viewing the Base Sets commit instead of trying to find my changes amongst those from #7267.

"Default sets" as described by the [Stageless RFC](https://github.com/bevyengine/rfcs/pull/45) have some [unfortunate consequences](https://github.com/bevyengine/bevy/discussions/7365).

## Solution

This adds "base sets" as a variant of `SystemSet`:

A set is a "base set" if `SystemSet::is_base` returns `true`. Typically this will be opted-in to using the `SystemSet` derive:

```rust
#[derive(SystemSet, Clone, Hash, Debug, PartialEq, Eq)]
#[system_set(base)]
enum MyBaseSet {
    A,
    B,
}
```

**Base sets are exclusive**: a system can belong to at most one "base set". Adding a system to more than one will result in an error. When possible we fail immediately during system-config-time with a nice file + line number. For the more nested graph-ey cases, this will fail at the final schedule build.

**Base sets cannot belong to other sets**: this is where the word "base" comes from

Systems and Sets can only be added to base sets using `in_base_set`. Calling `in_set` with a base set will fail. As will calling `in_base_set` with a normal set.

```rust

app.add_system(foo.in_base_set(MyBaseSet::A))

// X must be a normal set ... base sets cannot be added to base sets

.configure_set(X.in_base_set(MyBaseSet::A))

```

Base sets can still be configured like normal sets:

```rust
app.configure_set(MyBaseSet::B.after(MyBaseSet::A))
```

The primary use case for base sets is enabling a "default base set":

```rust

schedule.set_default_base_set(CoreSet::Update)

// this will belong to CoreSet::Update by default

.add_system(foo)

// this will override the default base set with PostUpdate

.add_system(bar.in_base_set(CoreSet::PostUpdate))

```

This allows us to build apis that work by default in the standard Bevy style. This is a rough analog to the "default stage" model, but it uses the new "stageless sets" model instead, with all of the ordering flexibility (including exclusive systems) that it provides.
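The two rules above (at most one base set per system, and a default that applies only when none is given) can be modeled in a few lines. This is a hedged sketch, not the scheduler's code: `ToyBaseSet` and `resolve_base_set` are hypothetical names.

```rust
#[derive(Clone, Copy, Debug, PartialEq, Eq)]
enum ToyBaseSet {
    Update,
    PostUpdate,
}

/// Resolves the base set of a system from the base sets it was explicitly
/// added to and the schedule's optional default base set.
fn resolve_base_set(
    explicit: &[ToyBaseSet],
    default: Option<ToyBaseSet>,
) -> Result<Option<ToyBaseSet>, String> {
    match explicit {
        // No explicit base set: fall back to the default, if any.
        [] => Ok(default),
        // Exactly one explicit base set overrides the default.
        [only] => Ok(Some(*only)),
        // Base sets are exclusive, so more than one is an error.
        [first, second, ..] => Err(format!(
            "system belongs to more than one base set: {:?} and {:?}",
            first, second
        )),
    }
}
```

Returning an error for the multi-set case mirrors the text's statement that adding a system to more than one base set fails.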

---

## Changelog

- Added "base sets" and ported CoreSet to use them.

## Migration Guide

TODO

Huge thanks to @maniwani, @devil-ira, @hymm, @cart, @superdump and @jakobhellermann for the help with this PR.

# Objective

- Followup #6587.

- Minimal integration for the Stageless Scheduling RFC: https://github.com/bevyengine/rfcs/pull/45

## Solution

- [x] Remove old scheduling module

- [x] Migrate new methods to no longer use extension methods

- [x] Fix compiler errors

- [x] Fix benchmarks

- [x] Fix examples

- [x] Fix docs

- [x] Fix tests

## Changelog

### Added

- a large number of methods on `App` to work with schedules ergonomically

- the `CoreSchedule` enum

- `App::add_extract_system` via the `RenderingAppExtension` trait extension method

- the private `prepare_view_uniforms` system now has a public system set for scheduling purposes, called `ViewSet::PrepareUniforms`

### Removed

- stages, and all code that mentions stages

- states have been dramatically simplified, and no longer use a stack

- `RunCriteriaLabel`

- `AsSystemLabel` trait

- `on_hierarchy_reports_enabled` run criteria (now just uses an ad hoc resource checking run condition)

- systems in `RenderSet/Stage::Extract` no longer warn when they do not read data from the main world


- `transform_propagate_system_set`: this was a nonstandard pattern that didn't actually provide enough control. The systems are already `pub`: the docs have been updated to ensure that the third-party usage is clear.

### Changed

- `System::default_labels` is now `System::default_system_sets`.

- `App::add_default_labels` is now `App::add_default_sets`

- `CoreStage` and `StartupStage` enums are now `CoreSet` and `StartupSet`

- `App::add_system_set` was renamed to `App::add_systems`

- The `StartupSchedule` label is now defined as part of the `CoreSchedule` enum

- `.label(SystemLabel)` is now referred to as `.in_set(SystemSet)`

- `SystemLabel` trait was replaced by `SystemSet`

- `SystemTypeIdLabel<T>` was replaced by `SystemSetType<T>`

- The `ReportHierarchyIssue` resource now has a public constructor (`new`), and implements `PartialEq`

- Fixed time steps now use a schedule (`CoreSchedule::FixedTimeStep`) rather than a run criteria.

- Adding rendering extraction systems now panics rather than silently failing if no subapp with the `RenderApp` label is found.

- the `calculate_bounds` system, with the `CalculateBounds` label, is now in `CoreSet::Update`, rather than in `CoreSet::PostUpdate` before commands are applied.

- `SceneSpawnerSystem` now runs under `CoreSet::Update`, rather than `CoreStage::PreUpdate.at_end()`.

- `bevy_pbr::add_clusters` is no longer an exclusive system

- the top level `bevy_ecs::schedule` module was replaced with `bevy_ecs::scheduling`

- `tick_global_task_pools_on_main_thread` is no longer run as an exclusive system. Instead, it has been replaced by `tick_global_task_pools`, which uses a `NonSend` resource to force running on the main thread.

## Migration Guide

- Calls to `.label(MyLabel)` should be replaced with `.in_set(MySet)`

- Stages have been removed. Replace these with system sets, and then add command flushes using the `apply_system_buffers` exclusive system where needed.

- The `CoreStage`, `StartupStage`, `RenderStage` and `AssetStage` enums have been replaced with `CoreSet`, `StartupSet`, `RenderSet` and `AssetSet`. The same scheduling guarantees have been preserved.

- Systems are no longer added to `CoreSet::Update` by default. Add systems manually if this behavior is needed, although you should consider adding your game logic systems to `CoreSchedule::FixedTimestep` instead for more reliable framerate-independent behavior.

- Similarly, startup systems are no longer part of `StartupSet::Startup` by default. In most cases, this won't matter to you.

- For example, `add_system_to_stage(CoreStage::PostUpdate, my_system)` should be replaced with `add_system(my_system.in_set(CoreSet::PostUpdate))`

- When testing systems or otherwise running them in a headless fashion, simply construct and run a schedule using `Schedule::new()` and `World::run_schedule` rather than constructing stages

- Run criteria have been renamed to run conditions. These can now be combined with each other and with states.

- Looping run criteria and state stacks have been removed. Use an exclusive system that runs a schedule if you need this level of control over system control flow.

- For app-level control flow over which schedules get run when (such as for rollback networking), create your own schedule and insert it under the `CoreSchedule::Outer` label.

- Fixed timesteps are now evaluated in a schedule, rather than controlled via run criteria. The `run_fixed_timestep` system runs this schedule between `CoreSet::First` and `CoreSet::PreUpdate` by default.

- Command flush points introduced by `AssetStage` have been removed. If you were relying on these, add them back manually.

- Adding extract systems is now typically done directly on the main app. Make sure the `RenderingAppExtension` trait is in scope, then call `app.add_extract_system(my_system)`.

- the `calculate_bounds` system, with the `CalculateBounds` label, is now in `CoreSet::Update`, rather than in `CoreSet::PostUpdate` before commands are applied. You may need to order your movement systems to occur before this system in order to avoid system order ambiguities in culling behavior.

- the `RenderLabel` `AppLabel` was renamed to `RenderApp` for clarity

- `App::add_state` now takes 0 arguments: the starting state is set based on the `Default` impl.

- Instead of creating `SystemSet` containers for systems that run in stages, simply use `.on_enter::<State::Variant>()` or its `on_exit` or `on_update` siblings.

- `SystemLabel` derives should be replaced with `SystemSet`. You will also need to add the `Debug`, `PartialEq`, `Eq`, and `Hash` traits to satisfy the new trait bounds.

- `with_run_criteria` has been renamed to `run_if`. Run criteria have been renamed to run conditions for clarity, and should now simply return a bool.

- States have been dramatically simplified: there is no longer a "state stack". To queue a transition to the next state, call `NextState::set`
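To illustrate the simplified state model from the last bullet (no stack, a single queued transition, and a starting state taken from `Default`), here is a toy std-only sketch. `AppState`, `CurrentState`, and this `NextState` are hypothetical models written for this example; only the method name `set` is taken from the text.

```rust
#[derive(Clone, Copy, Debug, PartialEq, Eq)]
enum AppState {
    Menu,
    InGame,
}

impl Default for AppState {
    /// The starting state comes from the `Default` impl.
    fn default() -> Self {
        AppState::Menu
    }
}

/// Toy model of the currently active state.
struct CurrentState(AppState);

/// Toy model of a queued transition: at most one pending state, no stack.
#[derive(Default)]
struct NextState(Option<AppState>);

impl NextState {
    /// Queue a transition; a later call overwrites an earlier one.
    fn set(&mut self, state: AppState) {
        self.0 = Some(state);
    }
}

/// Applies the queued transition, if any, and reports `(exited, entered)`.
fn apply_state_transition(
    current: &mut CurrentState,
    next: &mut NextState,
) -> Option<(AppState, AppState)> {
    let entered = next.0.take()?;
    let exited = std::mem::replace(&mut current.0, entered);
    Some((exited, entered))
}
```

The returned pair is where a real scheduler would run its exit and enter logic; the sketch only records which states were involved.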

## TODO

- [x] remove dead methods on App and World

- [x] add `App::add_system_to_schedule` and `App::add_systems_to_schedule`

- [x] avoid adding the default system set at inappropriate times

- [x] remove any accidental cycles in the default plugins schedule

- [x] migrate benchmarks

- [x] expose explicit labels for the built-in command flush points

- [x] migrate engine code

- [x] remove all mentions of stages from the docs

- [x] verify docs for States

- [x] fix uses of exclusive systems that use .end / .at_start / .before_commands

- [x] migrate RenderStage and AssetStage

- [x] migrate examples

- [x] ensure that transform propagation is exported in a sufficiently public way (the systems are already pub)

- [x] ensure that on_enter schedules are run at least once before the main app

- [x] re-enable opt-in to execution order ambiguities

- [x] revert change to `update_bounds` to ensure it runs in `PostUpdate`

- [x] test all examples

- [x] unbreak directional lights

- [x] unbreak shadows (see 3d_scene, 3d_shape, lighting, transparency_3d examples)

- [x] game menu example shows loading screen and menu simultaneously

- [x] display settings menu is a blank screen

- [x] `without_winit` example panics

- [x] ensure all tests pass

- [x] SubApp doc test fails

- [x] runs_spawn_local tasks fails

- [x] [Fix panic_when_hierachy_cycle test hanging](https://github.com/alice-i-cecile/bevy/pull/120)

## Points of Difficulty and Controversy

**Reviewers, please give feedback on these and look closely**

1. Default sets, from the RFC, have been removed. These added a tremendous amount of implicit complexity and result in hard to debug scheduling errors. They're going to be tackled in the form of "base sets" by @cart in a followup.

2. The outer schedule controls which schedule is run when `App::update` is called.

3. I implemented `Label` for `Box<dyn Label>` for our label types. This enables us to store schedule labels in concrete form, and then later run them. I ran into the same set of problems when working with one-shot systems. We've previously investigated this pattern in depth, and it does not appear to lead to extra indirection with nested boxes.

4. `SubApp::update` simply runs the default schedule once. This sucks, but this whole API is incomplete and this was the minimal changeset.

5. `time_system` and `tick_global_task_pools_on_main_thread` no longer use exclusive systems to attempt to force scheduling order

6. Implementation strategy for fixed timesteps

7. `AssetStage` was migrated to `AssetSet` without reintroducing command flush points. These did not appear to be used, and it's nice to remove these bottlenecks.

8. Migration of `bevy_render/lib.rs` and pipelined rendering. The logic here is unusually tricky, as we have complex scheduling requirements.

## Future Work (ideally before 0.10)

- Rename schedule_v3 module to schedule or scheduling

- Add a derive macro to states, and likely a `EnumIter` trait of some form

- Figure out what exactly to do with the "systems added should basically work by default" problem

- Improve ergonomics for working with fixed timesteps and states

- Polish FixedTime API to match Time

- Rebase and merge #7415

- Resolve all internal ambiguities (blocked on better tools, especially #7442)

- Add "base sets" to replace the removed default sets.

# Objective

- Bevy should not have any "internal" execution order ambiguities. These clutter the output of user-facing error reporting, and can result in nasty, nondeterministic, very difficult to solve bugs.

- Verifying this currently involves repeated non-trivial manual work.

## Solution

- [x] add an example to quickly check this

- ~~[ ] ensure that this example panics if there are any unresolved ambiguities~~

- ~~[ ] run the example in CI 😈~~

There's one tricky ambiguity left, between UI and animation. I don't have the tools to fix this without system set configuration, so the remaining work is going to be left to #7267 or another PR after that.

```

2023-01-27T18:38:42.989405Z INFO bevy_ecs::schedule::ambiguity_detection: Execution order ambiguities detected, you might want to add an explicit dependency relation between some of these systems:

* Parallel systems:

-- "bevy_animation::animation_player" and "bevy_ui::flex::flex_node_system"

conflicts: ["bevy_transform::components::transform::Transform"]

```

## Changelog

Resolved internal execution order ambiguities for:

1. Transform propagation (ignored, we need smarter filter checking).

2. Gamepad processing (fixed).

3. bevy_winit's window handling (fixed).

4. Cascaded shadow maps and perspectives (fixed).

Also fixed a desynchronized state bug that could occur when the `Window` component is removed and then added to the same entity in a single frame.

# Objective

Fixes #3184. Fixes #6640. Fixes #4798. Using `Query::par_for_each(_mut)` currently requires a `batch_size` parameter, which affects how it chunks up large archetypes and tables into smaller chunks to run in parallel. Tuning this value is difficult, as the performance characteristics depend entirely on the state of the `World` it's being run on. Typically, users will just use a flat constant and tune it by hand until it performs well in some benchmarks. However, this is both error-prone and risks overfitting the tuning to that benchmark.

This PR proposes a naive automatic batch-size computation based on the current state of the `World`.

## Background

`Query::par_for_each(_mut)` schedules a new Task for every archetype or table that it matches. Archetypes/tables larger than the batch size are chunked into smaller tasks. Assuming every entity matched by the query has an identical workload, this makes the worst case scenario involve using a batch size equal to the size of the largest matched archetype or table. Conversely, a batch size of `max {archetype, table} size / thread count * COUNT_PER_THREAD` is likely the sweet spot where the overhead of scheduling tasks is minimized, at least without grouping small archetypes/tables together.

There is also likely a strict minimum batch size below which the overhead of scheduling these tasks is heavier than running the entire thing single-threaded.

## Solution

- [x] Remove the `batch_size` from `Query(State)::par_for_each` and friends.

- [x] Add a check to compute `batch_size = max {archetype/table} size / thread count * COUNT_PER_THREAD`

- [x] ~~Panic if thread count is 0.~~ Defer to `for_each` if the thread count is 1 or less.

- [x] Early return if there is no matched table/archetype.

- [x] Add override option for users who have queries that strongly violate the initial assumption that all iterated entities have an equal workload.
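The steps above can be sketched as a small pure function. Two assumptions are worth flagging: the formula is read here as dividing the largest size by `thread count × COUNT_PER_THREAD` (the text's operator precedence is ambiguous), and the value of `COUNT_PER_THREAD` is purely illustrative. `auto_batch_size` is a hypothetical helper, not the actual implementation.

```rust
/// Assumed tuning constant: the name comes from the text, the value is illustrative.
const COUNT_PER_THREAD: usize = 4;

/// Computes a batch size from the sizes of all matched archetypes/tables.
///
/// Returns `None` when batching should be skipped: either nothing matched,
/// or there is at most one thread and plain sequential iteration is better.
fn auto_batch_size(matched_sizes: &[usize], thread_count: usize) -> Option<usize> {
    // Early return if there is no matched table/archetype.
    let max_size = matched_sizes.iter().copied().max()?;
    // Defer to sequential iteration if the thread count is 1 or less.
    if thread_count <= 1 {
        return None;
    }
    let batches = thread_count * COUNT_PER_THREAD;
    // Ceiling division, clamped so a batch always holds at least one entity.
    Some(((max_size + batches - 1) / batches).max(1))
}
```

An override for uneven workloads would bypass this computation entirely and use the caller's value instead.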

---

## Changelog

Changed: `Query::par_for_each(_mut)` has been changed to `Query::par_iter(_mut)` and will now automatically try to produce a batch size for callers based on the current `World` state.

## Migration Guide

The `batch_size` parameter for `Query(State)::par_for_each(_mut)` has been removed. These calls will automatically compute a batch size for you. Remove these parameters from all calls to these functions.

Before:

```rust

fn parallel_system(query: Query<&MyComponent>) {

query.par_for_each(32, |comp| {

...

});

}

```

After:

```rust

fn parallel_system(query: Query<&MyComponent>) {

query.par_iter().for_each(|comp| {

...

});

}

```

Co-authored-by: Arnav Choubey <56453634+x-52@users.noreply.github.com>

Co-authored-by: Robert Swain <robert.swain@gmail.com>

Co-authored-by: François <mockersf@gmail.com>

Co-authored-by: Corey Farwell <coreyf@rwell.org>

Co-authored-by: Aevyrie <aevyrie@gmail.com>

# Objective

Fix #4647. If any child is changed, or even reordered, `Changed<Children>` is true, which causes transform propagation to propagate changes to all siblings of a changed child, even if they don't need to be.

## Solution

As `Parent` and `Children` are updated in tandem in hierarchy commands after #4800, `Changed<Parent>` is true on the child when `Changed<Children>` is true on the parent. However, unlike checking children, checking `Changed<Parent>` is only localized to the current entity and will not force propagation to the siblings.

Also took the opportunity to change propagation to use `Query::iter_many` instead of repeated `Query::get` calls. Should cut a bit of the overhead out of propagation. This means we won't panic when there isn't a `Parent` on the child, just skip over it.

The tests from #4608 still pass, so the change detection here still works just fine under this approach.

# Objective

It is often necessary to update an entity's parent

while keeping its GlobalTransform static. Currently

it is cumbersome and error-prone (two questions in

the discord `#help` channel in the past week)

- Part 2, resolves #5475

- Builds on: #7020.

## Solution

- Added the `BuildChildrenTransformExt` trait, it is part

of `bevy::prelude` and adds the following methods to `EntityCommands`:

- `set_parent_in_place`: Change the parent of an entity and

update its `Transform` in order to preserve its `GlobalTransform` after the parent change

- `remove_parent_in_place`: Remove an entity from a hierarchy,

while preserving its `GlobalTransform`.

---

## Changelog

- Added the `BuildChildrenTransformExt` trait, it is part

of `bevy::prelude` and adds the following methods to `EntityCommands`:

- `set_parent_in_place`: Change the parent of an entity and

update its `Transform` in order to preserve its `GlobalTransform` after the parent change

- `remove_parent_in_place`: Remove an entity from a hierarchy,

while preserving its `GlobalTransform`.

Co-authored-by: Nicola Papale <nicopap@users.noreply.github.com>

# Objective

It is possible to manually update `GlobalTransform`.

The engine actually assumes this is not possible.

For example, `propagate_transform` does not update children of an `Entity` whose **`GlobalTransform`** changed, leading to unexpected behaviors.

A `GlobalTransform` set by the user may also be blindly

overwritten by the propagation system.

## Solution

- Remove `translation_mut`

- Explain to users that they shouldn't manually update the `GlobalTransform`

- Remove `global_vs_local.rs` example, since it misleads users

in believing that it is a valid use-case to manually update the

`GlobalTransform`

---

## Changelog

- Remove `GlobalTransform::translation_mut`

## Migration Guide

`GlobalTransform::translation_mut` has been removed without an alternative. If you were relying on it, update the `Transform` instead. If the given entity had children or a parent, you may need to remove its parent to make its transform independent (in which case the new `EntityCommands::set_parent_in_place` and `EntityCommands::remove_parent_in_place` may be of interest).

Bevy may in the future add a way to toggle transform propagation on a per-entity basis.

# Objective

It is often necessary to update an entity's parent while keeping its GlobalTransform static. Currently it is cumbersome and error-prone (two questions in the discord `#help` channel in the past week)

- Part 1 of #5475

- Part 2: #7024.

## Solution

- Add a `reparented_to` method to `GlobalTransform`

---

## Changelog

- Add a `reparented_to` method to `GlobalTransform`
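The math behind keeping a global transform fixed across a parent change can be sketched with a deliberately reduced transform. This is an illustration under simplifying assumptions: `ToyTransform` holds only a translation and a uniform, non-zero scale (rotation is omitted), and its `mul` and `reparented_to` methods are hypothetical stand-ins for the real ones.

```rust
/// Toy transform: uniform scale followed by translation. Rotation is omitted.
#[derive(Clone, Copy, Debug, PartialEq)]
struct ToyTransform {
    translation: [f32; 3],
    scale: f32,
}

impl ToyTransform {
    /// Composes a parent's global transform (`self`) with a child's local one.
    fn mul(self, local: ToyTransform) -> ToyTransform {
        let t = |i: usize| self.translation[i] + self.scale * local.translation[i];
        ToyTransform {
            translation: [t(0), t(1), t(2)],
            scale: self.scale * local.scale,
        }
    }

    /// Returns the local transform that, placed under `parent`, reproduces
    /// `self` as the global transform. Assumes `parent.scale` is non-zero.
    fn reparented_to(self, parent: ToyTransform) -> ToyTransform {
        let t = |i: usize| (self.translation[i] - parent.translation[i]) / parent.scale;
        ToyTransform {
            translation: [t(0), t(1), t(2)],
            scale: self.scale / parent.scale,
        }
    }
}
```

The round trip `parent.mul(global.reparented_to(parent))` yields `global` again, which is the property an in-place parent change relies on.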

# Objective

Fixes #4697. Hierarchical propagation of properties, currently only Transform -> GlobalTransform, can be a very expensive operation. Transform propagation is a strict dependency for anything positioned in world-space. In large worlds, this can take quite a bit of time, so limiting it to a single thread can result in poor CPU utilization as it bottlenecks the rest of the frame's systems.

## Solution

- Move transforms without a parent or a child (free-floating (Global)Transform) entities into a separate parallel system.

- Chunk the hierarchy based on the root entities and process it in parallel with `Query::par_for_each_mut`.

- Utilize the hierarchy's specific properties introduced in #4717 to allow for safe use of `Query::get_unchecked` on multiple threads. Assuming each child is unique in the hierarchy, it is impossible to have an aliased `&mut GlobalTransform` so long as we verify that the parent for a child is the same one propagated from.
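The "one unit of work per root" idea can be sketched with scoped threads from the standard library. This is a simplified model, not the engine's approach: it spawns one thread per root instead of using a task pool, uses an owned tree so no unchecked access is needed, and reduces transforms to a single `f32` offset. `Node`, `propagate`, and `propagate_roots_in_parallel` are hypothetical names.

```rust
use std::thread;

/// Toy hierarchy node: a local offset plus owned children.
struct Node {
    local: f32,
    children: Vec<Node>,
}

/// Depth-first propagation: a node's global value is its parent's global
/// value plus its own local offset.
fn propagate(node: &Node, parent_global: f32, out: &mut Vec<f32>) {
    let global = parent_global + node.local;
    out.push(global);
    for child in &node.children {
        propagate(child, global, out);
    }
}

/// Processes every root's subtree on its own scoped thread. Subtrees are
/// disjoint, so the threads never touch the same node.
fn propagate_roots_in_parallel(roots: &[Node]) -> Vec<Vec<f32>> {
    thread::scope(|scope| {
        let handles: Vec<_> = roots
            .iter()
            .map(|root| {
                scope.spawn(move || {
                    let mut out = Vec::new();
                    propagate(root, 0.0, &mut out);
                    out
                })
            })
            .collect();
        handles
            .into_iter()
            .map(|handle| handle.join().expect("propagation thread panicked"))
            .collect()
    })
}
```

The disjointness of subtrees is what makes the parallel version safe; in the real system that guarantee comes from verifying that each child's parent is the entity it was reached from.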

---

## Changelog

Removed: `transform_propagate_system` is no longer `pub`.

Add a method to rotate a transform to point towards a direction.

Also updated the docs to link to `forward` and `up` instead of mentioning local negative `Z` and local `Y`.

Unfortunately, links to methods don't work in rust-analyzer :(

Co-authored-by: Devil Ira <justthecooldude@gmail.com>

# Objective

Fix #6453.

## Solution

Use the solution mentioned in the issue by catching the unwind and dropping the error. Wrap the `executor.try_tick` calls with `std::panic::catch_unwind`.

Ideally this would be moved outside of the hot loop, but the mut ref to the `spawned` future is not `UnwindSafe`.

This PR only addresses the bug, we can address the perf issues (should there be any) later.
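The pattern of catching the unwind inside the loop and dropping the error looks roughly like this. It is a sketch with plain boxed closures standing in for executor ticks; `run_all` is a hypothetical helper, and `AssertUnwindSafe` is used because the closure is not known to be `UnwindSafe`.

```rust
use std::panic::{catch_unwind, AssertUnwindSafe};

/// Runs every task, swallowing panics so that one failing task cannot stop
/// the loop. Returns how many tasks completed without panicking.
fn run_all(tasks: Vec<Box<dyn FnOnce()>>) -> usize {
    let mut completed = 0;
    for task in tasks {
        // The panic payload is dropped on purpose; only success is counted.
        if catch_unwind(AssertUnwindSafe(|| task())).is_ok() {
            completed += 1;
        }
    }
    completed
}
```

Note that the default panic hook still prints the panic message to stderr, so the failure is not silent even though the payload is discarded.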

# Objective

Right now, the `TaskPool` implementation allows panics to permanently kill worker threads upon panicking. This is currently non-recoverable without using a `std::panic::catch_unwind` in every scheduled task. This is poor ergonomics and even poorer developer experience. This is exacerbated by #2250 as these threads are global and cannot be replaced after initialization.

Removes the need for temporary fixes like #4998. Fixes #4996. Fixes #6081. Fixes #5285. Fixes #5054. Supersedes #2307.

## Solution

The current solution is to wrap `Executor::run` in `TaskPool` with a `catch_unwind`, and discard the potential panic. This was taken straight from [smol](404c7bcc0a/src/spawn.rs (L44))'s current implementation. ~~However, this is not entirely ideal as:~~

- ~~the signaled to the awaiting task. We would need to change `Task<T>` to use `async_task::FallibleTask` internally, and even then it doesn't signal *why* it panicked, just that it did.~~ (See below).

- ~~no error is logged of any kind~~ (See below)

- ~~it's unclear if it drops other tasks in the executor~~ (it does not)

- ~~This allows the ECS parallel executor to keep chugging even though a system's task has been dropped. This inevitably leads to deadlock in the executor.~~ Assuming we don't catch the unwind in ParallelExecutor, this will naturally kill the main thread.

### Alternatives

A final solution likely will incorporate elements of any or all of the following.

#### ~~Log and Ignore~~

~~Log the panic, drop the task, keep chugging. This only addresses the discoverability of the panic. The process will continue to run, probably deadlocking the executor. tokio's detached tasks operate in this fashion.~~

Panics already do this by default, even when caught by `catch_unwind`.

#### ~~`catch_unwind` in `ParallelExecutor`~~

~~Add another layer catching system-level panics into the `ParallelExecutor`. How the executor continues when a core dependency of many systems fails to run is up for debate.~~

`async_task::Task` bubbles up panics already, this will transitively push panics all the way to the main thread.

#### ~~Emulate/Copy `tokio::JoinHandle` with `Task<T>`~~

~~`tokio::JoinHandle<T>` bubbles up the panic from the underlying task when awaited. This can be transitively applied across other APIs that also use `Task<T>` like `Query::par_for_each` and `TaskPool::scope`, bubbling up the panic until it's either caught or it reaches the main thread.~~

`async_task::Task` bubbles up panics already, this will transitively push panics all the way to the main thread.

#### Abort on Panic

The nuclear option. Log the error, abort the entire process on any thread in the task pool panicking. Definitely avoids any additional infrastructure for passing the panic around, and might actually lead to more efficient code as any unwinding is optimized out. However gives the developer zero options for dealing with the issue, a seemingly poor choice for debuggability, and prevents graceful shutdown of the process. Potentially an option for handling very low-level task management (a la #4740). Roughly takes the shape of:

```rust
struct AbortOnPanic;

impl Drop for AbortOnPanic {
    fn drop(&mut self) {
        // Reached only if the task unwinds before the guard is forgotten.
        std::process::abort();
    }
}

let guard = AbortOnPanic;
// Run task
std::mem::forget(guard);
```

---

## Changelog

Changed: `bevy_tasks::TaskPool`'s threads will no longer terminate permanently when a task scheduled onto them panics.

Changed: `bevy_tasks::Task` and `bevy_tasks::Scope` will propagate panics in the spawned tasks/scopes to the parent thread.

This reverts commit 53d387f340.

# Objective

Reverts #6448. This didn't have the intended effect: we're now getting bevy::prelude shown in the docs again.

Co-authored-by: Alejandro Pascual <alejandro.pascual.pozo@gmail.com>

# Objective

- Right now re-exports are completely hidden in prelude docs.

- Fixes #6433

## Solution

- We could show the re-exports without inlining their documentation.

# Objective

Fixes #6378

`bevy_transform` is missing a feature corresponding to the `serialize` feature on the `bevy` crate.

## Solution

Adds a `serialize` feature to `bevy_transform`.

Derives `serde::Serialize` and `Deserialize` when feature is enabled.

Bevy's coordinate system is right-handed Y up, so +Z points towards my nose and I'm looking in the -Z direction. Therefore, `Transform::looking_at/look_at` must be pointing towards -Z. Or am I wrong here?

This is a holdover from back when `Transform` was backed by a private `Mat4` two years ago.

Not particularly useful anymore :)

## Migration Guide

`Transform::apply_non_uniform_scale` has been removed.

It can be replaced with the following snippet:

```rust
transform.scale *= scale_factor;
```

Co-authored-by: devil-ira <justthecooldude@gmail.com>

The docs ended up quite verbose :v

Also added a missing `#[inline]` to `GlobalTransform::mul_transform`.

I'd say this resolves #5500

# Migration Guide

`Transform::mul_vec3` has been renamed to `transform_point`.

Co-authored-by: devil-ira <justthecooldude@gmail.com>

# Objective

Make `GlobalTransform` constructible from scripts, in the same vein as #6187.

## Solution

- Use the derive macro to reflect default

---

## Changelog


- `GlobalTransform` now reflects the `Default` trait.

# Objective

- Fix #5285

## Solution

- Put the panicking system in a single threaded stage during the test

- This way only the main thread will panic, which is handled by `cargo test`

# Objective

Now that we can consolidate Bundles and Components under a single insert (thanks to #2975 and #6039), almost 100% of world spawns now look like `world.spawn().insert((Some, Tuple, Here))`. Spawning an entity without any components is an extremely uncommon pattern, so it makes sense to give spawn the "first class" ergonomic api. This consolidated api should be made consistent across all spawn apis (such as World and Commands).

## Solution

All `spawn` apis (`World::spawn`, `Commands::spawn`, `ChildBuilder::spawn`, and `WorldChildBuilder::spawn`) now accept a bundle as input:

```rust
// before:
commands
    .spawn()
    .insert((A, B, C));

world
    .spawn()
    .insert((A, B, C));

// after
commands.spawn((A, B, C));
world.spawn((A, B, C));
```

All existing instances of `spawn_bundle` have been deprecated in favor of the new `spawn` api. A new `spawn_empty` has been added, replacing the old `spawn` api.

By allowing `world.spawn(some_bundle)` to replace `world.spawn().insert(some_bundle)`, this opened the door to removing the initial entity allocation in the "empty" archetype / table done in `spawn()` (and subsequent move to the actual archetype in `.insert(some_bundle)`).

This improves spawn performance by over 10%.

To take this measurement, I added a new `world_spawn` benchmark.
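The saving described above (skipping the stop in the "empty" archetype) can be pictured with a toy model. `ToyWorld` below is a hypothetical stand-in that only counts how often an entity is placed into or moved between archetypes. It does not reflect Bevy's real storage code.

```rust
/// Toy stand-in for a world: counts archetype placements and moves.
#[derive(Default)]
struct ToyWorld {
    next_id: u32,
    placements: u32,
}

impl ToyWorld {
    fn allocate(&mut self) -> u32 {
        let id = self.next_id;
        self.next_id += 1;
        id
    }

    /// Old-style path, step 1: the entity lands in the "empty" archetype.
    fn spawn_empty(&mut self) -> u32 {
        self.placements += 1;
        self.allocate()
    }

    /// Old-style path, step 2: the entity moves to the bundle's archetype.
    fn insert(&mut self, _entity: u32, _bundle: (u8, u8, u8)) {
        self.placements += 1;
    }

    /// New-style path: the entity goes straight to the bundle's archetype.
    fn spawn(&mut self, _bundle: (u8, u8, u8)) -> u32 {
        self.placements += 1;
        self.allocate()
    }
}

fn main() {
    let mut old_style = ToyWorld::default();
    let entity = old_style.spawn_empty();
    old_style.insert(entity, (1, 2, 3));
    assert_eq!(old_style.placements, 2);

    let mut new_style = ToyWorld::default();
    new_style.spawn((1, 2, 3));
    assert_eq!(new_style.placements, 1);
}
```

The model says nothing about the actual size of the speed-up. It only shows which step the new `World::spawn` avoids.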

Unfortunately, optimizing `Commands::spawn` is slightly less trivial, as `Commands` exposes the `Entity` id of spawned entities prior to actually spawning. Doing the optimization would (naively) require assurances that the `spawn(some_bundle)` command is applied before all other commands involving the entity (which would not necessarily be true, if memory serves). Optimizing `Commands::spawn` this way does feel possible, but it will require careful thought (and maybe some additional checks), which deserves its own PR. For now, it has the same performance characteristics as the current `Commands::spawn_bundle` on main.

**Note that 99% of this PR is simple renames and refactors. The only code that needs careful scrutiny is the new `World::spawn()` impl, which is relatively straightforward, but it has some new unsafe code (which re-uses the battle-tested `BundleSpawner` code path).**

---

## Changelog

- All `spawn` apis (`World::spawn`, `Commands::spawn`, `ChildBuilder::spawn`, and `WorldChildBuilder::spawn`) now accept a bundle as input

- All instances of `spawn_bundle` have been deprecated in favor of the new `spawn` api

- World and Commands now have `spawn_empty()`, which is equivalent to the old `spawn()` behavior.

## Migration Guide

```rust
// Old (0.8):
commands
    .spawn()
    .insert_bundle((A, B, C));
// New (0.9)
commands.spawn((A, B, C));

// Old (0.8):
commands.spawn_bundle((A, B, C));
// New (0.9)
commands.spawn((A, B, C));

// Old (0.8):
let entity = commands.spawn().id();
// New (0.9)
let entity = commands.spawn_empty().id();

// Old (0.8)
let entity = world.spawn().id();
// New (0.9)
let entity = world.spawn_empty().id();
```

# Objective

Both components already derive `Reflect`, and it would be nice to have `FromReflect` in order to ser/de between those types without relying on `downcast`, since it can fail between different platforms, like WebAssembly.

## Solution

Derive `FromReflect` for `Transform` and `GlobalTransform`.

I wondered whether I should also derive `FromReflect` for `GlobalTransform`, since it's a computed component, but there may be some use cases where a `GlobalTransform` needs to be sent over the wire, so I decided to do it.

# Objective

Take advantage of the "impl Bundle for Component" changes in #2975 / add the follow up changes discussed there.

## Solution

- Change `insert` and `remove` to accept a Bundle instead of a Component (for both Commands and World)

- Deprecate `insert_bundle`, `remove_bundle`, and `remove_bundle_intersection`

- Add `remove_intersection`
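The idea behind "impl Bundle for Component" can be sketched with toy traits. The `Component` and `Bundle` traits below are simplified stand-ins written for this example, not Bevy's real definitions. They only show why one `insert` entry point can accept both a single component and a tuple.

```rust
/// Toy stand-in for a component: it only knows its own name.
trait Component {
    fn name(&self) -> &'static str;
}

/// Toy stand-in for a bundle: anything that can list the components it holds.
trait Bundle {
    fn component_names(&self, out: &mut Vec<&'static str>);
}

/// Every single component is also a one-element bundle.
impl<C: Component> Bundle for C {
    fn component_names(&self, out: &mut Vec<&'static str>) {
        out.push(self.name());
    }
}

/// Pairs of bundles are bundles; nesting gives arbitrarily large groups.
impl<A: Bundle, B: Bundle> Bundle for (A, B) {
    fn component_names(&self, out: &mut Vec<&'static str>) {
        self.0.component_names(out);
        self.1.component_names(out);
    }
}

struct Position;
struct Velocity;

impl Component for Position {
    fn name(&self) -> &'static str {
        "Position"
    }
}

impl Component for Velocity {
    fn name(&self) -> &'static str {
        "Velocity"
    }
}

/// A single `insert` entry point covers components and tuples alike.
fn insert(bundle: impl Bundle) -> Vec<&'static str> {
    let mut names = Vec::new();
    bundle.component_names(&mut names);
    names
}

fn main() {
    assert_eq!(insert(Position), vec!["Position"]);
    assert_eq!(insert((Position, Velocity)), vec!["Position", "Velocity"]);
}
```

With a blanket impl of this kind, a separate `insert_bundle` method has nothing left to distinguish, which is why it can be deprecated.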

---

## Changelog

- `insert` and `remove` now accept a Bundle instead of a Component (for both Commands and World)

- `insert_bundle` and `remove_bundle` are deprecated

## Migration Guide

Replace `insert_bundle` with `insert`:

```rust
// Old (0.8)
commands.spawn().insert_bundle(SomeBundle::default());
// New (0.9)
commands.spawn().insert(SomeBundle::default());
```

Replace `remove_bundle` with `remove`:

```rust
// Old (0.8)
commands.entity(some_entity).remove_bundle::<SomeBundle>();
// New (0.9)
commands.entity(some_entity).remove::<SomeBundle>();
```

Replace `remove_bundle_intersection` with `remove_intersection`:

```rust
// Old (0.8)
world.entity_mut(some_entity).remove_bundle_intersection::<SomeBundle>();
// New (0.9)
world.entity_mut(some_entity).remove_intersection::<SomeBundle>();
```

Consider consolidating as many operations as possible to improve ergonomics and cut down on archetype moves:

```rust
// Old (0.8)
commands.spawn()
    .insert_bundle(SomeBundle::default())
    .insert(SomeComponent);

// New (0.9) - Option 1
commands.spawn().insert((
    SomeBundle::default(),
    SomeComponent,
));

// New (0.9) - Option 2
commands.spawn_bundle((
    SomeBundle::default(),
    SomeComponent,
));
```

## Next Steps

Consider changing `spawn` to accept a bundle and deprecate `spawn_bundle`.

# Objective

Working on issue #1934, linking examples to the documentation. This PR covers the transform examples.

## Solution

Added links to the relevant examples in the `bevy_transform` documentation (`transform.rs` and `global_transform.rs`).

- [X] 3d_rotations.rs linked to rotate in Transform
- [X] global_vs_local_translation.rs linked to top of Transform and GlobalTransform documentation
- [X] scale.rs linked to scale Struct in Transform
- [X] transform.rs linked to top of Transform documentation
- [X] translation.rs linked to from_translation in Transform

Co-authored-by: bwhitt7 <103079612+bwhitt7@users.noreply.github.com>

# Objective

A common pitfall since 0.8 is the requirement on `ComputedVisibility` being present on all ancestors of an entity that itself has `ComputedVisibility`, without which, the entity becomes invisible.

I myself hit the issue and got very confused, and saw a few people hit it as well, so it makes sense to provide a hint of what to do when such a situation is encountered.

- Fixes #5849
- Closes #5616
- Closes #2277
- Closes #5081

## Solution

We now check that all entities with both a `Parent` and a `ComputedVisibility` component have parents that themselves have a `ComputedVisibility` component.

Note that the warning is only printed once.

We also add a similar warning to `GlobalTransform`.

This only emits a warning, because sometimes it could be intended behavior.
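The shape of the check can be sketched over a flattened toy hierarchy. `Node` and `find_broken_hierarchy` are hypothetical names for this illustration. The real check runs as an ECS system over `Parent` and `ComputedVisibility` and emits a warning rather than returning a list.

```rust
use std::collections::HashMap;

/// Hypothetical flattened view of one entity.
struct Node {
    parent: Option<u32>,
    has_computed_visibility: bool,
}

/// Returns the ids of entities that have the component and a parent,
/// but whose parent lacks the component. Sorted for stable output.
fn find_broken_hierarchy(nodes: &HashMap<u32, Node>) -> Vec<u32> {
    let mut broken: Vec<u32> = nodes
        .iter()
        .filter(|(_, node)| node.has_computed_visibility)
        .filter_map(|(id, node)| {
            // Entities without a parent, or with an unknown parent, are skipped.
            let parent = nodes.get(&node.parent?)?;
            (!parent.has_computed_visibility).then_some(*id)
        })
        .collect();
    broken.sort_unstable();
    broken
}

fn main() {
    let mut nodes = HashMap::new();
    // Root without the component.
    nodes.insert(1, Node { parent: None, has_computed_visibility: false });
    // Child with the component under a parent that lacks it: flagged.
    nodes.insert(2, Node { parent: Some(1), has_computed_visibility: true });
    // Grandchild whose direct parent has the component: fine.
    nodes.insert(3, Node { parent: Some(2), has_computed_visibility: true });

    assert_eq!(find_broken_hierarchy(&nodes), vec![2]);
}
```

Only the direct parent is inspected for each entity. Applied to every entity in the hierarchy, that is enough to surface a gap at any depth.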

Alternatives:

- Do nothing and keep repeating to newcomers how to avoid recurring pitfalls
- Make the transform and visibility propagation tolerant to missing components (#5616)
- Probably archetype invariants, though the current draft would not allow detecting that kind of errors

---

## Changelog

- Add a warning when encountering dubious component hierarchy structure

Co-authored-by: Nicola Papale <nicopap@users.noreply.github.com>