# Objective

- Add a [Deferred Renderer](https://en.wikipedia.org/wiki/Deferred_shading) to Bevy.

- This allows subsequent passes to access per pixel material information before/during shading.

- Accessing this per pixel material information is needed for some features, like GI. It also makes other features (ex. Decals) simpler to implement and/or improves their capability. There are multiple approaches to accomplishing this. The deferred shading approach works well given the limitations of WebGPU and WebGL2.

Motivation: [I'm working on a GI solution for Bevy](https://youtu.be/eH1AkL-mwhI)

# Solution

- The deferred renderer is implemented with a prepass and a deferred lighting pass.

- The prepass renders opaque objects into the Gbuffer attachment (`Rgba32Uint`). The PBR shader generates a `PbrInput` in mostly the same way as the forward implementation and then [packs it into the Gbuffer](ec1465559f/crates/bevy_pbr/src/render/pbr.wgsl (L168)).

- The deferred lighting pass unpacks the `PbrInput` and [feeds it into the pbr() function](ec1465559f/crates/bevy_pbr/src/deferred/deferred_lighting.wgsl (L65)), then outputs the shaded color data.

- There is now a resource [DefaultOpaqueRendererMethod](ec1465559f/crates/bevy_pbr/src/material.rs (L599)) that can be used to set the default render method for opaque materials. If materials return `None` from [opaque_render_method()](ec1465559f/crates/bevy_pbr/src/material.rs (L131)) the `DefaultOpaqueRendererMethod` will be used. Otherwise, custom materials can also explicitly choose to only support Deferred or Forward by returning the respective [OpaqueRendererMethod](ec1465559f/crates/bevy_pbr/src/material.rs (L603)).

- Deferred materials can be used seamlessly along with both opaque and transparent forward rendered materials in the same scene. The [deferred rendering example](https://github.com/DGriffin91/bevy/blob/deferred/examples/3d/deferred_rendering.rs) does this.

- The deferred renderer does not support MSAA. If any deferred materials are used, MSAA must be disabled. Both TAA and FXAA are supported.

- Deferred rendering supports WebGL2/WebGPU.
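The fallback rule for the render method can be sketched in plain Rust. The types below are hypothetical stand-ins that only mirror the names used above (they are not the real `bevy_pbr` definitions), and `resolve_render_method` and `msaa_allowed` are illustrative helpers, not Bevy APIs.

```rust
/// Hypothetical stand-in for the render method enum described above.
#[derive(Clone, Copy, Debug, PartialEq, Eq)]
pub enum OpaqueRendererMethod {
    Forward,
    Deferred,
}

/// Hypothetical stand-in for the `DefaultOpaqueRendererMethod` resource.
#[derive(Clone, Copy, Debug)]
pub struct DefaultOpaqueRendererMethod(pub OpaqueRendererMethod);

/// Resolve the method for one opaque material: an explicit choice wins,
/// `None` falls back to the app-wide default.
pub fn resolve_render_method(
    material_choice: Option<OpaqueRendererMethod>,
    default: DefaultOpaqueRendererMethod,
) -> OpaqueRendererMethod {
    material_choice.unwrap_or(default.0)
}

/// MSAA has to be off as soon as any material resolves to deferred.
pub fn msaa_allowed(resolved: &[OpaqueRendererMethod]) -> bool {
    !resolved.contains(&OpaqueRendererMethod::Deferred)
}
```

With a deferred default, a material returning `None` resolves to `Deferred`, while one returning `Some(Forward)` stays forward; a scene mixing both still has to disable MSAA.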

## Custom deferred materials

- Custom materials can support both deferred and forward at the same time. The [StandardMaterial](ec1465559f/crates/bevy_pbr/src/render/pbr.wgsl (L166)) does this. So does [this example](https://github.com/DGriffin91/bevy_glowy_orb_tutorial/blob/deferred/assets/shaders/glowy.wgsl#L56).

- Custom deferred materials that require PBR lighting can create a `PbrInput`, write it to the deferred GBuffer and let it be rendered by the `PBRDeferredLightingPlugin`.

- Custom deferred materials that require custom lighting have two options:

  1. Use the base_color channel of the `PbrInput` combined with the `STANDARD_MATERIAL_FLAGS_UNLIT_BIT` flag. [Example.](https://github.com/DGriffin91/bevy_glowy_orb_tutorial/blob/deferred/assets/shaders/glowy.wgsl#L56) (If the unlit bit is set, the base_color is stored as RGB9E5 for extra precision)

  2. A custom deferred lighting pass can be created, either overriding the default, or running in addition. A depth buffer is used to limit rendering to only the required fragments for each deferred lighting pass. Materials can set their respective depth id via the [deferred_lighting_pass_id](b79182d2a3/crates/bevy_pbr/src/prepass/prepass_io.wgsl (L95)) attachment. The custom deferred lighting pass plugin can then set [its corresponding depth](ec1465559f/crates/bevy_pbr/src/deferred/deferred_lighting.wgsl (L37)). Then with the lighting pass using [CompareFunction::Equal](ec1465559f/crates/bevy_pbr/src/deferred/mod.rs (L335)), only the fragments with a depth that equals the corresponding depth written in the material will be rendered.

Custom deferred lighting plugins can also be created to render the StandardMaterial. The default deferred lighting plugin can be bypassed with `DefaultPlugins.set(PBRDeferredLightingPlugin { bypass: true })`
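The depth-id selection in option 2 can be pictured with a small CPU-side emulation. This is only a sketch: `Texel`, the `u8` id type, and `shaded_by_pass` are assumptions made for illustration, whereas the real mechanism is a depth attachment compared with `CompareFunction::Equal` on the GPU.

```rust
/// One screen texel as seen by a lighting pass: the id the material wrote
/// into the `deferred_lighting_pass_id` attachment (assumed to fit in a u8).
#[derive(Clone, Copy, Debug)]
pub struct Texel {
    pub lighting_pass_id: u8,
}

/// Emulates a full-screen lighting pass drawn at `pass_depth_id` with an
/// `Equal` depth comparison: only texels whose stored id matches pass the
/// test. Returns the indices of the texels this pass would shade.
pub fn shaded_by_pass(texels: &[Texel], pass_depth_id: u8) -> Vec<usize> {
    texels
        .iter()
        .enumerate()
        .filter(|(_, texel)| texel.lighting_pass_id == pass_depth_id)
        .map(|(index, _)| index)
        .collect()
}
```

Each lighting pass therefore touches a disjoint set of fragments, which is what lets a custom pass run alongside the default one without shading the same pixel twice.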

---------

Co-authored-by: nickrart <nickolas.g.russell@gmail.com>

# Objective

- Significantly reduce the size of MeshUniform by only including necessary data.

## Solution

Local to world, model transforms are affine. This means they only need a 4x3 matrix to represent them.

`MeshUniform` stores the current, and previous model transforms, and the inverse transpose of the current model transform, all as 4x4 matrices. Instead we can store the current, and previous model transforms as 4x3 matrices, and we only need the upper-left 3x3 part of the inverse transpose of the current model transform. This change allows us to reduce the serialized MeshUniform size from 208 bytes to 144 bytes, which is over a 30% saving in data to serialize, and VRAM bandwidth and space.
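The 208 and 144 byte figures can be checked with two plain Rust structs. This is a sketch under assumptions: the field names, the trailing `flags` word, and the 16-byte struct alignment are chosen here so that the totals line up with the numbers quoted above; they are not copied from the actual `MeshUniform` definition.

```rust
use std::mem::size_of;

/// Assumed layout before the change: three 4x4 matrices plus a flags word.
/// 3 * 64 + 4 = 196 bytes, rounded up to the 16-byte alignment = 208.
#[repr(C, align(16))]
pub struct MeshUniformOld {
    pub model: [[f32; 4]; 4],
    pub previous_model: [[f32; 4]; 4],
    pub inverse_transpose_model: [[f32; 4]; 4],
    pub flags: u32,
}

/// Assumed layout after the change: two affine transforms stored as three
/// vec4 rows each, and a 3x3 stored as two vec4s plus one trailing f32.
/// 48 + 48 + 32 + 4 + 4 = 136 bytes, rounded up to 144.
#[repr(C, align(16))]
pub struct MeshUniformNew {
    pub model: [[f32; 4]; 3],
    pub previous_model: [[f32; 4]; 3],
    pub inverse_transpose_model_a: [[f32; 4]; 2],
    pub inverse_transpose_model_b: f32,
    pub flags: u32,
}

/// Relative size reduction in percent.
pub fn saving_percent() -> f64 {
    let old = size_of::<MeshUniformOld>() as f64;
    let new = size_of::<MeshUniformNew>() as f64;
    100.0 * (old - new) / old
}
```

Under these assumptions the saving works out to 64 bytes out of 208, which is a little under 31%.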

## Benchmarks

On an M1 Max, running `many_cubes -- sphere`, main is in yellow, this PR is in red:

<img width="1484" alt="Screenshot 2023-08-11 at 02 36 43" src="https://github.com/bevyengine/bevy/assets/302146/7d99c7b3-f2bb-4004-a8d0-4c00f755cb0d">

A reduction in frame time of ~14%.

---

## Changelog

- Changed: Redefined `MeshUniform` to improve performance by using 4x3 affine transforms and reconstructing 4x4 matrices in the shader. Helper functions were added to `bevy_pbr::mesh_functions` to unpack the data. `affine_to_square` converts the packed 4x3 in 3x4 matrix data to a 4x4 matrix. `mat2x4_f32_to_mat3x3` converts the 3x3 in mat2x4 + f32 matrix data back into a 3x3.

## Migration Guide

Shader code before:

```
var model = mesh[instance_index].model;
```

Shader code after:

```
#import bevy_pbr::mesh_functions affine_to_square

var model = affine_to_square(mesh[instance_index].model);
```
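For readers who want to see what the two unpacking helpers do, here is a CPU-side sketch in Rust. It assumes the affine transform is stored as three rows of four floats and that the nine 3x3 values are stored in order across two vec4s plus one float; the actual WGSL helpers in `bevy_pbr::mesh_functions` may order the data differently.

```rust
/// Column-major 4x4 matrix, indexed as `m[col][row]` like WGSL's `mat4x4<f32>`.
pub type Mat4 = [[f32; 4]; 4];
/// Column-major 3x3 matrix.
pub type Mat3 = [[f32; 3]; 3];

/// Rebuild a 4x4 matrix from an affine transform packed as three rows of
/// four floats. The implicit fourth row of an affine matrix is (0, 0, 0, 1).
pub fn affine_to_square(rows: [[f32; 4]; 3]) -> Mat4 {
    let mut m = [[0.0f32; 4]; 4];
    for col in 0..4 {
        for row in 0..3 {
            m[col][row] = rows[row][col];
        }
        m[col][3] = if col == 3 { 1.0 } else { 0.0 };
    }
    m
}

/// Rebuild a 3x3 matrix from nine floats stored as two vec4s plus one
/// trailing f32, read back in storage order (an assumed ordering).
pub fn mat2x4_f32_to_mat3x3(a: [[f32; 4]; 2], b: f32) -> Mat3 {
    let flat = [
        a[0][0], a[0][1], a[0][2], a[0][3],
        a[1][0], a[1][1], a[1][2], a[1][3],
        b,
    ];
    [
        [flat[0], flat[1], flat[2]],
        [flat[3], flat[4], flat[5]],
        [flat[6], flat[7], flat[8]],
    ]
}
```

An identity rotation with translation (5, 6, 7) packed this way comes back with the translation in the fourth column, which is the layout a `mat4x4<f32>` multiply expects.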

naga and wgpu should polyfill WGSL instance_index functionality where it is not available in GLSL. Until that is done, we can work around it in bevy using a push constant which is converted to a uniform by naga and wgpu.

# Objective

- Fixes #9375

## Solution

- Use a push constant to pass in the base instance to the shader on WebGL2 so that base instance + gl_InstanceID is used to correctly represent the instance index.
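The arithmetic behind the workaround is small enough to emulate on the CPU. In this sketch `draw_instance_indices` is a hypothetical helper that plays the role of one instanced draw; only `get_instance_index` corresponds to a name that appears in this PR, and its body here is an assumption about what the shader-side function computes.

```rust
use std::ops::Range;

/// What the workaround computes on WebGL2: `gl_InstanceID` restarts at 0
/// for every draw, so the base instance has to be added back.
pub fn get_instance_index(base_instance: u32, gl_instance_id: u32) -> u32 {
    base_instance + gl_instance_id
}

/// Emulates one instanced draw over `instances` and returns the indices
/// the shader would use to look up per-object data.
pub fn draw_instance_indices(instances: Range<u32>) -> Vec<u32> {
    let base_instance = instances.start; // supplied via the push constant
    let instance_count = instances.end - instances.start;
    (0..instance_count) // the gl_InstanceID values for this draw
        .map(|gl_instance_id| get_instance_index(base_instance, gl_instance_id))
        .collect()
}
```

A draw covering instances `10..13` would otherwise read slots 0, 1, 2; with the base added it reads 10, 11, 12.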

## TODO

- [ ] Benchmark vs per-object dynamic offset MeshUniform as this will now push a uniform value per-draw as well as update the dynamic offset per-batch.

- [x] Test on DX12 AMD/NVIDIA to check that this PR does not regress any problems that were observed there. (@Elabajaba @robtfm were testing that last time - help appreciated. <3 )

---

## Changelog

- Added: `bevy_render::instance_index` shader import which includes a workaround for the lack of a WGSL `instance_index` polyfill for WebGL2 in naga and wgpu for the time being. It uses a push_constant which gets converted to a plain uniform by naga and wgpu.

## Migration Guide

Shader code before:

```
struct Vertex {
    @builtin(instance_index) instance_index: u32,
    ...
}

@vertex
fn vertex(vertex_no_morph: Vertex) -> VertexOutput {
    ...
    var model = mesh[vertex_no_morph.instance_index].model;
```

After:

```
#import bevy_render::instance_index

struct Vertex {
    @builtin(instance_index) instance_index: u32,
    ...
}

@vertex
fn vertex(vertex_no_morph: Vertex) -> VertexOutput {
    ...
    var model = mesh[bevy_render::instance_index::get_instance_index(vertex_no_morph.instance_index)].model;
```

# Objective

- Reduce the number of rebindings to enable batching of draw commands

## Solution

- Use the new `GpuArrayBuffer` for `MeshUniform` data to store all `MeshUniform` data in arrays within fewer bindings

- Sort opaque/alpha mask prepass, opaque/alpha mask main, and shadow phases also by the batch per-object data binding dynamic offset to improve performance on WebGL2.
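To see why sorting by dynamic offset helps, consider a simplified model of batched uniform storage. Everything below is an illustrative assumption rather than the `GpuArrayBuffer` implementation: the helper names are hypothetical, and the binding size limit, item size, and offset alignment are parameters the caller must supply.

```rust
/// Where one item's per-object data lives: the dynamic offset (in bytes)
/// of its binding window and the array slot inside that window.
#[derive(Clone, Copy, Debug, PartialEq, Eq)]
pub struct ItemLocation {
    pub dynamic_offset: u32,
    pub index: u32,
}

/// How many items fit in one uniform binding window.
pub fn items_per_batch(max_binding_size: u32, item_size: u32) -> u32 {
    max_binding_size / item_size
}

/// Map a global item number to its window and slot. Each window starts at
/// a multiple of the required dynamic offset alignment.
pub fn locate(item: u32, max_binding_size: u32, item_size: u32, offset_alignment: u32) -> ItemLocation {
    let per_batch = items_per_batch(max_binding_size, item_size);
    let used = per_batch * item_size;
    let window = (used + offset_alignment - 1) / offset_alignment * offset_alignment;
    ItemLocation {
        dynamic_offset: (item / per_batch) * window,
        index: item % per_batch,
    }
}

/// Count how often the bind group must be rebound while drawing in order.
pub fn rebind_count(items: &[ItemLocation]) -> usize {
    let mut count = 0;
    let mut current = None;
    for item in items {
        if current != Some(item.dynamic_offset) {
            count += 1;
            current = Some(item.dynamic_offset);
        }
    }
    count
}

/// Sort draws so that items sharing a binding window are adjacent.
pub fn sort_by_offset(items: &mut [ItemLocation]) {
    items.sort_by_key(|location| (location.dynamic_offset, location.index));
}
```

With an assumed 16384-byte binding limit, 144-byte items, and 256-byte offset alignment, 113 items share a window; draws that alternate between two windows rebind on every draw until they are sorted, after which they rebind once per window.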

---

## Changelog

- Changed: Per-object `MeshUniform` data is now managed by `GpuArrayBuffer` as arrays in buffers that need to be indexed into.

## Migration Guide

Accessing the `model` member of an individual mesh object's shader `Mesh` struct the old way where each `MeshUniform` was stored at its own dynamic offset:

```wgsl
struct Vertex {
    @location(0) position: vec3<f32>,
};

fn vertex(vertex: Vertex) -> VertexOutput {
    var out: VertexOutput;
    out.clip_position = mesh_position_local_to_clip(
        mesh.model,
        vec4<f32>(vertex.position, 1.0)
    );
    return out;
}
```

The new way where one needs to index into the array of `Mesh`es for the batch:

```wgsl
struct Vertex {
    @builtin(instance_index) instance_index: u32,
    @location(0) position: vec3<f32>,
};

fn vertex(vertex: Vertex) -> VertexOutput {
    var out: VertexOutput;
    out.clip_position = mesh_position_local_to_clip(
        mesh[vertex.instance_index].model,
        vec4<f32>(vertex.position, 1.0)
    );
    return out;
}
```

Note that using the instance_index is the default way to pass the per-object index into the shader, but if you wish to do custom rendering approaches you can pass it in however you like.

---------

Co-authored-by: robtfm <50659922+robtfm@users.noreply.github.com>

Co-authored-by: Elabajaba <Elabajaba@users.noreply.github.com>

# Objective

Since 10f5c92, shadows have been broken for models with morph targets.

When #5703 was merged, the morph target code in `render/mesh.wgsl` was correctly updated to use the new import syntax. However, similar code exists in `prepass/prepass.wgsl`, but it was never updated. (The reason the code is duplicated is that the `Vertex` struct is different for both files.)

## Solution

Update the code, so that shadows render correctly with morph targets.

# Objective

operate on naga IR directly to improve handling of shader modules.

- give codespan reporting into imported modules

- allow glsl to be used from wgsl and vice-versa

the ultimate objective is to make it possible to

- provide user hooks for core shader functions (to modify light behaviour within the standard pbr pipeline, for example)

- make automatic binding slot allocation possible

but ... since this is already big, adds some value and (i think) is at feature parity with the existing code, i wanted to push this now.

## Solution

i made a crate called naga_oil (https://github.com/robtfm/naga_oil - unpublished for now, could be part of bevy) which manages modules by

- building each module independently to naga IR

- creating "header" files for each supported language, which are used to build dependent modules/shaders

- making final shaders by combining the shader IR with the IR for imported modules

then integrated this into bevy, replacing some of the existing shader processing stuff. also reworked examples to reflect this.

## Migration Guide

shaders that don't use `#import` directives should work without changes.

the most notable user-facing difference is that imported functions/variables/etc need to be qualified at point of use, and there's no "leakage" of visible stuff into your shader scope from the imports of your imports, so if you used things imported by your imports, you now need to import them directly and qualify them.

the current strategy of including/'spreading' `mesh_vertex_output` directly into a struct doesn't work any more, so these need to be modified as per the examples (e.g. color_material.wgsl, or many others).

mesh data is assumed to be in bindgroup 2 by default, if mesh data is bound into bindgroup 1 instead then the shader def `MESH_BINDGROUP_1` needs to be added to the pipeline shader_defs.

# Objective

- Add morph targets to `bevy_pbr` (closes #5756) & load them from glTF

- Supersedes #3722

- Fixes #6814

[Morph targets][1] (also known as shape interpolation, shape keys, or blend shapes) allow animating individual vertices with fine-grained controls. This is typically used for facial expressions. By specifying multiple poses as vertex offsets, and providing a weight for each pose, it is possible to define surprisingly realistic transitions between poses. Blending between multiple poses also allows composition. Morph targets are part of the [glTF standard][2] and are a feature of Unity, Unreal, and Babylon.js, so it is only natural to implement them in Bevy.

## Solution

This implementation of morph targets uses a 3d texture where each pixel is a component of an animated attribute. Each layer is a different target. We use a 2d texture for each target, because the number of attribute×components×animated vertices is expected to always exceed the maximum pixel row size limit of WebGL2. It copies fairly closely the way skinning is implemented on the CPU side, while on the GPU side, the shader morph target implementation is a relatively trivial detail.

We add an optional `morph_texture` to the `Mesh` struct. The `morph_texture` is built through a method that accepts an iterator over attribute buffers.

The user-accessible `MorphWeights` component controls the blend of poses used by mesh instances (so that multiple copies of the same mesh may have different weights). All the weights are uploaded to a uniform buffer of 256 `f32`. We limit to 16 poses per mesh, and a total of 256 poses.
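The blend that the weights control is a weighted sum of per-target vertex offsets added to the base attribute. The following Rust sketch shows that arithmetic for a single vertex attribute; `morph_vertex` and the `Vec3` alias are illustrative names, and the real work happens in the vertex shader by sampling the morph texture.

```rust
/// A three-component attribute such as a position, normal, or tangent.
pub type Vec3 = [f32; 3];

/// Blend one vertex attribute: `base + sum(weight_i * offset_i)`.
/// `offsets[i]` is this vertex's offset in morph target `i`, and
/// `weights[i]` is the matching entry of the weights buffer.
pub fn morph_vertex(base: Vec3, offsets: &[Vec3], weights: &[f32]) -> Vec3 {
    let mut out = base;
    for (offset, &weight) in offsets.iter().zip(weights) {
        if weight == 0.0 {
            continue; // zero-weighted poses contribute nothing
        }
        for component in 0..3 {
            out[component] += weight * offset[component];
        }
    }
    out
}
```

Because the sum is linear, several poses can be active at once, which is what makes composing expressions possible.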

More literature:

* Old Babylon.js implementation (vertex attribute-based): https://www.eternalcoding.com/dev-log-1-morph-targets/

* Babylon.js implementation (similar to ours): https://www.youtube.com/watch?v=LBPRmGgU0PE

* GPU gems 3: https://developer.nvidia.com/gpugems/gpugems3/part-i-geometry/chapter-3-directx-10-blend-shapes-breaking-limits

* Development discord thread https://discord.com/channels/691052431525675048/1083325980615114772

https://user-images.githubusercontent.com/26321040/231181046-3bca2ab2-d4d9-472e-8098-639f1871ce2e.mp4

https://github.com/bevyengine/bevy/assets/26321040/d2a0c544-0ef8-45cf-9f99-8c3792f5a258

## Acknowledgements

* Thanks to `storytold` for sponsoring the feature

* Thanks to `superdump` and `james7132` for guidance and help figuring out stuff

## Future work

- Handling of fewer and more attributes (eg: animated uv, animated arbitrary attributes)

- Dynamic pose allocation (so that zero-weighted poses aren't uploaded to GPU for example, enables many more total poses)

- Better animation API, see #8357

----

## Changelog

- Add morph targets to bevy meshes

- Support up to 64 poses per mesh of individually up to 116508 vertices, animation currently strictly limited to the position, normal and tangent attributes.

- Load a morph target using `Mesh::set_morph_targets`

- Add `VisitMorphTargets` and `VisitMorphAttributes` traits to `bevy_render`, this allows defining morph targets (a fairly complex and nested data structure) through iterators (ie: single copy instead of passing around buffers), see documentation of those traits for details

- Add `MorphWeights` component exported by `bevy_render`

- `MorphWeights` control mesh's morph target weights, blending between various poses defined as morph targets.

- `MorphWeights` are directly inherited by direct children (single level of hierarchy) of an entity. This allows controlling several mesh primitives through a unique entity _as per GLTF spec_.

- Add `MorphTargetNames` component, naming each index of loaded morph targets.

- Load morph targets weights and buffers in `bevy_gltf`

- Handle morph targets animations in `bevy_animation` (previously, it was a `warn!` log)

- Add the `MorphStressTest.gltf` asset for morph targets testing, taken from the glTF samples repo, CC0.

- Add morph target manipulation to `scene_viewer`

- Separate the animation code in `scene_viewer` from the rest of the code, reducing `#[cfg(feature)]` noise

- Add the `morph_targets.rs` example to show off how to manipulate morph targets, loading `MorphStressTest.gltf`

## Migration Guide

- (very specialized, unlikely to be touched by 3rd parties)

- `MeshPipeline` now has a single `mesh_layouts` field rather than separate `mesh_layout` and `skinned_mesh_layout` fields. You should handle all possible mesh bind group layouts in your implementation

- You should also properly handle the new `MORPH_TARGETS` shader def and mesh pipeline key. A new function is exposed to make this easier: `setup_morph_and_skinning_defs`

- The `MeshBindGroup` is now `MeshBindGroups`, cached bind groups are now accessed through the `get` method.

[1]: https://en.wikipedia.org/wiki/Morph_target_animation

[2]: https://registry.khronos.org/glTF/specs/2.0/glTF-2.0.html#morph-targets

---------

Co-authored-by: François <mockersf@gmail.com>

Co-authored-by: Carter Anderson <mcanders1@gmail.com>

# Objective

- Fixes #8645

## Solution

Cascaded shadow maps use a technique commonly called shadow pancaking to enhance shadow map resolution by restricting the orthographic projection used in creating the shadow maps to the frustum slice for the cascade. The implication of this restriction is that shadow casters can be closer than the near plane of the projection volume.

Prior to this PR, we clamped the depth of the prepass vertex output to ensure that these shadow casters do not get clipped, which would result in shadow loss. However, a flaw / bug of the prior approach is that the depth that gets written to the shadow map isn't quite correct - the depth was previously derived by interpolating the clamped clip position, resulting in depths that are further than they should be. This creates artifacts that are particularly noticeable when a very 'long' object intersects the near plane close to perpendicularly.

The fix in this PR is to propagate the unclamped depth to the prepass fragment shader and use that depth value directly.
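The difference between the two approaches shows up as soon as a primitive straddles the near plane. The sketch below emulates it along one edge in plain Rust. It assumes a reversed-Z convention (near plane at depth 1.0, larger values closer to the light); the struct and function names are illustrative and not taken from `prepass.wgsl`.

```rust
/// Assumed reversed-Z clip space: the near plane sits at depth 1.0 and
/// larger z means closer to the light.
pub const NEAR_PLANE_DEPTH: f32 = 1.0;

/// What the vertex stage hands to the rasterizer for one vertex.
#[derive(Clone, Copy, Debug)]
pub struct PrepassVertexOutput {
    /// Clamped so casters in front of the near plane are not clipped.
    pub clip_z: f32,
    /// Original depth, carried along as an extra interpolated value.
    pub unclamped_clip_depth: f32,
}

/// Vertex stage: clamp for clipping purposes, but keep the original depth.
pub fn prepass_vertex(clip_z: f32) -> PrepassVertexOutput {
    PrepassVertexOutput {
        clip_z: clip_z.min(NEAR_PLANE_DEPTH),
        unclamped_clip_depth: clip_z,
    }
}

fn lerp(a: f32, b: f32, t: f32) -> f32 {
    a + (b - a) * t
}

/// Depth written by the prior approach: interpolation of the clamped value.
pub fn old_fragment_depth(a: PrepassVertexOutput, b: PrepassVertexOutput, t: f32) -> f32 {
    lerp(a.clip_z, b.clip_z, t)
}

/// Depth written after the fix: interpolation of the unclamped value.
pub fn new_fragment_depth(a: PrepassVertexOutput, b: PrepassVertexOutput, t: f32) -> f32 {
    lerp(a.unclamped_clip_depth, b.unclamped_clip_depth, t)
}
```

For an edge running from depth 3.0 (in front of the near plane) to 0.0, the fragment three quarters of the way along gets 0.25 from the clamped interpolation but 0.75 from the unclamped one, so the old value is further from the light than the surface really is.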

A complementary solution would be to use [DEPTH_CLIP_CONTROL](https://docs.rs/wgpu/latest/wgpu/struct.Features.html#associatedconstant.DEPTH_CLIP_CONTROL) to request `unclipped_depth`. However due to the relatively low support of the feature on Vulkan (I believe it's ~38%), I went with this solution for now to get the broadest fix out first.

---

## Changelog

- Fixed: Shadows from directional lights were sometimes incorrectly omitted when the shadow caster was partially out of view.

---------

Co-authored-by: Carter Anderson <mcanders1@gmail.com>

# Objective

- Enabling AlphaMode::Opaque in the shader_prepass example crashes. The issue seems to be that enabling opaque also generates vertex_uvs

Fixes https://github.com/bevyengine/bevy/issues/8273

## Solution

- Use the vertex_uvs in the shader if they are present

# Objective

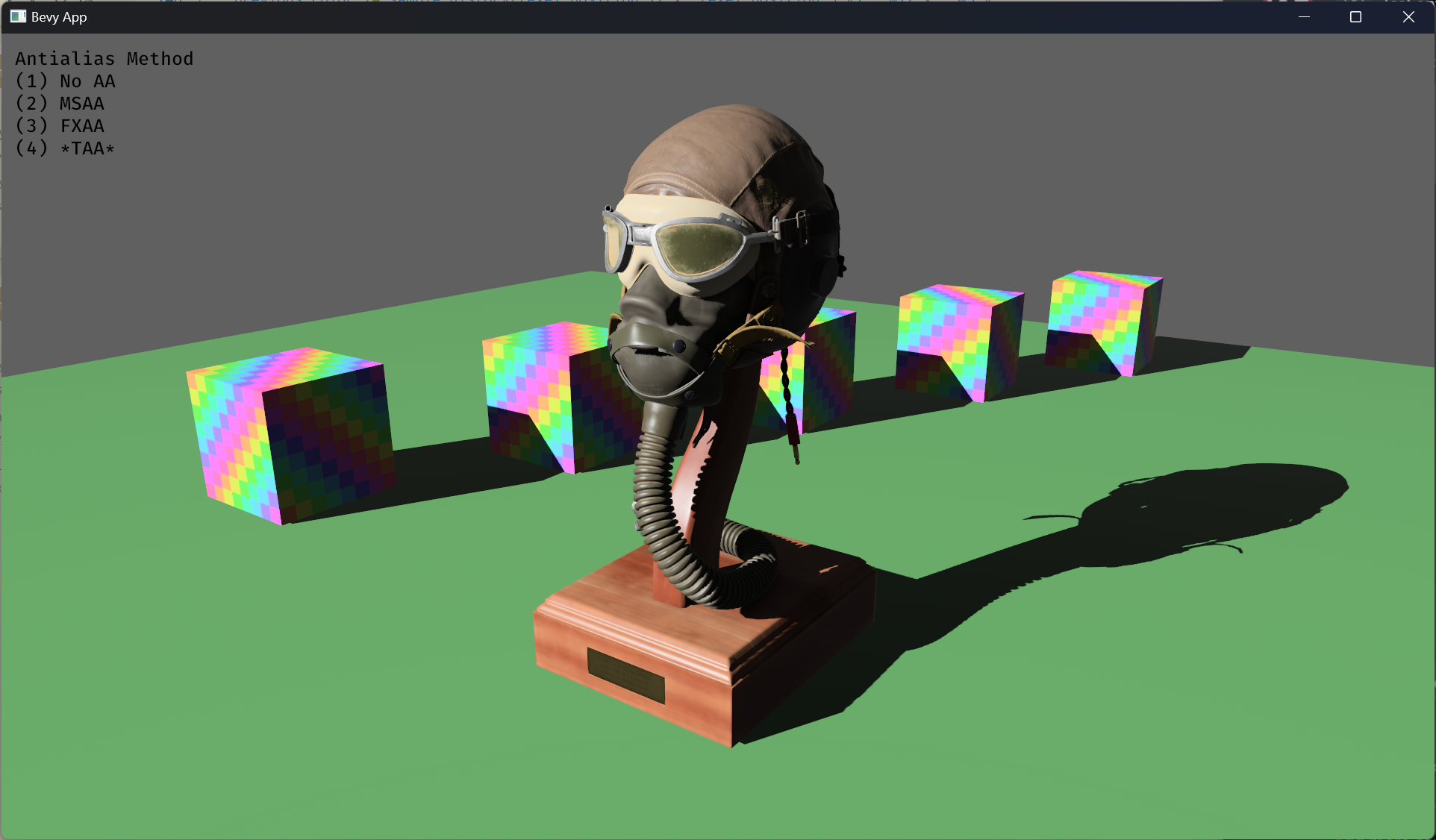

- Implement an alternative antialias technique

- TAA scales based off of view resolution, not geometry complexity

- TAA filters textures, firefly pixels, and other aliasing not covered by MSAA

- TAA additionally will reduce noise / increase quality in future stochastic rendering techniques

- Closes https://github.com/bevyengine/bevy/issues/3663

## Solution

- Add a temporal jitter component

- Add a motion vector prepass

- Add a TemporalAntialias component and plugin

- Combine existing MSAA and FXAA examples and add TAA
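As an illustration of what a temporal jitter component has to provide, here is a sketch of per-frame sub-pixel offsets in Rust. The PR text does not say which sequence is used; the Halton (2, 3) sequence below is a common choice for TAA and is an assumption here, as are the function names and the sample count parameter.

```rust
/// Radical inverse of `index` in the given `base` (one Halton dimension).
/// Returns a value in [0, 1).
pub fn halton(mut index: u32, base: u32) -> f32 {
    let mut fraction = 1.0f32;
    let mut result = 0.0f32;
    while index > 0 {
        fraction /= base as f32;
        result += fraction * (index % base) as f32;
        index /= base;
    }
    result
}

/// Sub-pixel jitter for a frame, centred on the pixel so each component
/// lies in [-0.5, 0.5). The sequence repeats every `sample_count` frames.
pub fn jitter_offset(frame: u32, sample_count: u32) -> [f32; 2] {
    // Start at index 1 because Halton index 0 is always 0 in every base.
    let index = frame % sample_count + 1;
    [halton(index, 2) - 0.5, halton(index, 3) - 0.5]
}
```

Shifting the projection by such an offset each frame makes successive frames sample different positions inside every pixel, and the motion vector prepass is what lets the history be reprojected so those samples can be accumulated.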

## Followup Work

- Prepass motion vector support for skinned meshes

- Move uniforms needed for motion vectors into a separate bind group, instead of using different bind group layouts

- Reuse previous frame's GPU view buffer for motion vectors, instead of recomputing

- Mip biasing for sharper textures, and/or unjitter texture UVs https://github.com/bevyengine/bevy/issues/7323

- Compute shader for better performance

- Investigate FSR techniques

- Historical depth based disocclusion tests, for geometry disocclusion

- Historical luminance/hue based tests, for shading disocclusion

- Pixel "locks" to reduce blending rate / revamp history confidence mechanism

- Orthographic camera support for TemporalJitter

- Figure out COD's 1-tap bicubic filter

---

## Changelog

- Added MotionVectorPrepass and TemporalJitter

- Added TemporalAntialiasPlugin, TemporalAntialiasBundle, and TemporalAntialiasSettings

---------

Co-authored-by: IceSentry <c.giguere42@gmail.com>

Co-authored-by: IceSentry <IceSentry@users.noreply.github.com>

Co-authored-by: Robert Swain <robert.swain@gmail.com>

Co-authored-by: Daniel Chia <danstryder@gmail.com>

Co-authored-by: robtfm <50659922+robtfm@users.noreply.github.com>

Co-authored-by: Brandon Dyer <brandondyer64@gmail.com>

Co-authored-by: Edgar Geier <geieredgar@gmail.com>

# Objective

- Fixes #4372.

## Solution

- Use the prepass shaders for the shadow passes.

- Move `DEPTH_CLAMP_ORTHO` from `ShadowPipelineKey` to `MeshPipelineKey` and the associated clamp operation from `depth.wgsl` to `prepass.wgsl`.

- Remove `depth.wgsl`.

- Replace `ShadowPipeline` with `ShadowSamplers`.

Instead of running the custom `ShadowPipeline` we run the `PrepassPipeline` with the `DEPTH_PREPASS` flag and additionally the `DEPTH_CLAMP_ORTHO` flag for directional lights as well as the `ALPHA_MASK` flag for materials that use `AlphaMode::Mask(_)`.
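The flag selection for a shadow pass can be written down as a small function. In this sketch only the flag names come from the text above; the bit values, the `LightKind` and `AlphaMode` enums, and `shadow_pass_key` are hypothetical stand-ins rather than the real `MeshPipelineKey` API.

```rust
// Hypothetical bit values; only the flag names come from the description.
pub const DEPTH_PREPASS: u32 = 1 << 0;
pub const DEPTH_CLAMP_ORTHO: u32 = 1 << 1;
pub const ALPHA_MASK: u32 = 1 << 2;

/// Stand-in for the kinds of shadow-casting lights.
pub enum LightKind {
    Directional,
    Point,
    Spot,
}

/// Stand-in for a material's alpha mode.
pub enum AlphaMode {
    Opaque,
    Mask(f32),
    Blend,
}

/// Build the prepass pipeline key used to render one shadow view.
pub fn shadow_pass_key(light: &LightKind, alpha: &AlphaMode) -> u32 {
    // Every shadow pass is a depth-only prepass.
    let mut key = DEPTH_PREPASS;
    // Directional lights use an orthographic projection and need the clamp.
    if matches!(light, LightKind::Directional) {
        key |= DEPTH_CLAMP_ORTHO;
    }
    // Alpha-masked materials must discard fragments below their cutoff.
    if matches!(alpha, AlphaMode::Mask(_)) {
        key |= ALPHA_MASK;
    }
    key
}
```

Because the key is just the prepass key with extra bits, shadow rendering reuses the prepass shader specializations instead of maintaining a separate depth shader.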

# Objective

- Add a configurable prepass

- A depth prepass is useful for various shader effects and to reduce overdraw. It can be expensive depending on the scene so it's important to be able to disable it if you don't need any effects that use it or don't suffer from excessive overdraw.

- The goal is to eventually use it for things like TAA, Ambient Occlusion, SSR and various other techniques that can benefit from having a prepass.

## Solution

The prepass node is inserted before the main pass. It runs for each `Camera3d` with a prepass component (`DepthPrepass`, `NormalPrepass`). The presence of one of those components is used to determine which textures are generated in the prepass. When any prepass is enabled, the depth buffer generated will be used by the main pass to reduce overdraw.

The prepass runs for each `Material` created with the `MaterialPlugin::prepass_enabled` option set to `true`. You can overload the shader used by the prepass by using `Material::prepass_vertex_shader()` and/or `Material::prepass_fragment_shader()`. It will also use the `Material::specialize()` for more advanced use cases. It is enabled by default on all materials.

The prepass works on opaque materials and materials using an alpha mask. Transparent materials are ignored.

The `StandardMaterial` overloads the prepass fragment shader to support alpha mask and normal maps.

---

## Changelog

- Add a new `PrepassNode` that runs before the main pass

- Add a `PrepassPlugin` to extract/prepare/queue the necessary data

- Add a `DepthPrepass` and `NormalPrepass` component to control which textures will be created by the prepass and available in later passes.

- Add a new `prepass_enabled` flag to the `MaterialPlugin` that will control if a material uses the prepass or not.

- Add a new `prepass_enabled` flag to the `PbrPlugin` to control if the StandardMaterial uses the prepass. Currently defaults to false.

- Add `Material::prepass_vertex_shader()` and `Material::prepass_fragment_shader()` to control the prepass from the `Material`

## Notes

In bevy's sample 3d scene, the performance is actually worse when enabling the prepass, but on more complex scenes the performance is generally better. I would like more testing on this, but @DGriffin91 has reported very noticeable improvements in some scenes.

The prepass is also used by @JMS55 for TAA and GTAO

discord thread: <https://discord.com/channels/691052431525675048/1011624228627419187>

This PR was built on top of the work of multiple people

Co-Authored-By: @superdump

Co-Authored-By: @robtfm

Co-Authored-By: @JMS55

Co-authored-by: Charles <IceSentry@users.noreply.github.com>

Co-authored-by: JMS55 <47158642+JMS55@users.noreply.github.com>